Document Summarization for Accuracy in an Era of AI Mistrust

In the information age, weaponized confusion is the cost of doing business. One misplaced paragraph, a “good enough” summary, or an algorithm’s hallucination can topple careers, tank audits, and fuel misinformation fires that take years to extinguish. We’re living in a world where 66% of pandemic misinformation on social media in 2023 was seeded by bots, and half a million deepfakes polluted the digital bloodstream in a twelve-month span. Yet, the stories we trust—the summaries we rely on to guide million-dollar decisions—are often no more reliable than last night’s game of telephone. Document summarization for accuracy isn’t just a technical curiosity; it’s the frontline defense in a hidden war against costly mistakes, reputational ruin, and the relentless tide of digital deceit. If you think your summaries are bulletproof, it’s time to look again. The new rules of trust aren’t about what’s quick, but what’s true.

Why accurate document summarization matters more than ever

The real-world fallout of inaccurate summaries

In the spring of 2022, a Fortune 500 company faced a meltdown after a legal summary glossed over a non-compete clause in a crucial contract. The omission, a single sentence lost in a mountain of paperwork, led to a $12M settlement and a boardroom purge. It’s not an isolated fiasco. Flawed document summaries are a recurring factor in legal, financial, and regulatory failures. In legal work, even small lapses in summary accuracy can escalate into compliance disasters or irreparable reputational harm.

"People forget that one bad summary can topple a career."

— Mina, legal analyst (illustrative)

The spread of misinformation isn’t just an internet problem—it’s a document problem. In a 2024 OECD survey, respondents misidentified true or false information 40% of the time, and bots now account for most of the viral falsehoods circulating in professional networks. A single inaccurate summary can become the vector for a cascade of errors, fueling faulty decisions and amplifying risk across entire organizations.

| Scenario | Error type | Consequences |
|---|---|---|
| Legal contract review | Omitted clause | $12M settlement, job losses |
| Healthcare discharge | Misstated medication | Patient harm, regulatory action |
| Market research report | Misleading headline | Bad investment, lost revenue |
| Compliance audit | Data misclassification | Fines, reputation damage |
| Academic literature | Cherry-picked results | Retractions, loss of credibility |

Table 1: Summary accuracy failures—case studies and outcomes. Source: Original analysis based on Redline Digital, 2024, OECD Survey 2024.

The hidden cost of “good enough” summaries

Scratch beneath the surface and the costs of inaccurate document summarization multiply. Financially, mistakes ripple through budgets: compliance failures result in fines, poor business decisions torpedo investments, and operational inefficiencies drag down productivity. But the real price tag is less visible—eroded trust, chronic audit anxiety, and an open invitation to opportunistic adversaries who thrive on confusion.

Red flags that your summary isn’t as accurate as you think:

- It’s “fast” but sources are unclear or missing.

- Summaries are overly generic, glossing over critical nuances.

- The summary relies on a single perspective or author.

- There’s no traceable chain of source verification.

- Key data points are omitted or “rounded up.”

- Automated summaries lack human review.

- No mechanism exists for feedback or correction.

Organizations often underestimate the risk of weak summaries. A faulty summary can escape notice for years—until it becomes Exhibit A in a lawsuit or the smoking gun in a failed compliance audit. Insurance underwriters and auditors are now scrutinizing document workflow as a risk factor, with premium adjustments for companies relying on unverified summaries.

How accuracy has become a frontline issue in the AI era

Summarizing documents used to be grunt work for interns and paralegals. Now, algorithms powered by large language models (LLMs) generate summaries on demand, promising speed and scale. But the hype around AI summarization is colliding with an uncomfortable reality: statistical fluency does not equal factual accuracy.

As organizations rush to automate, standards for summarization accuracy are finally emerging. Metrics such as ROUGE and BLEU measure word overlap with reference summaries, but industry watchdogs warn that these metrics miss the deeper problems: hallucinations, omissions, and bias inherited from the AI’s training data. Platforms like textwall.ai/document-summarization-for-accuracy help professionals navigate these issues with advanced analysis and human-in-the-loop review, raising the bar for trustworthy summaries.

Foundations of document summarization: beyond the basics

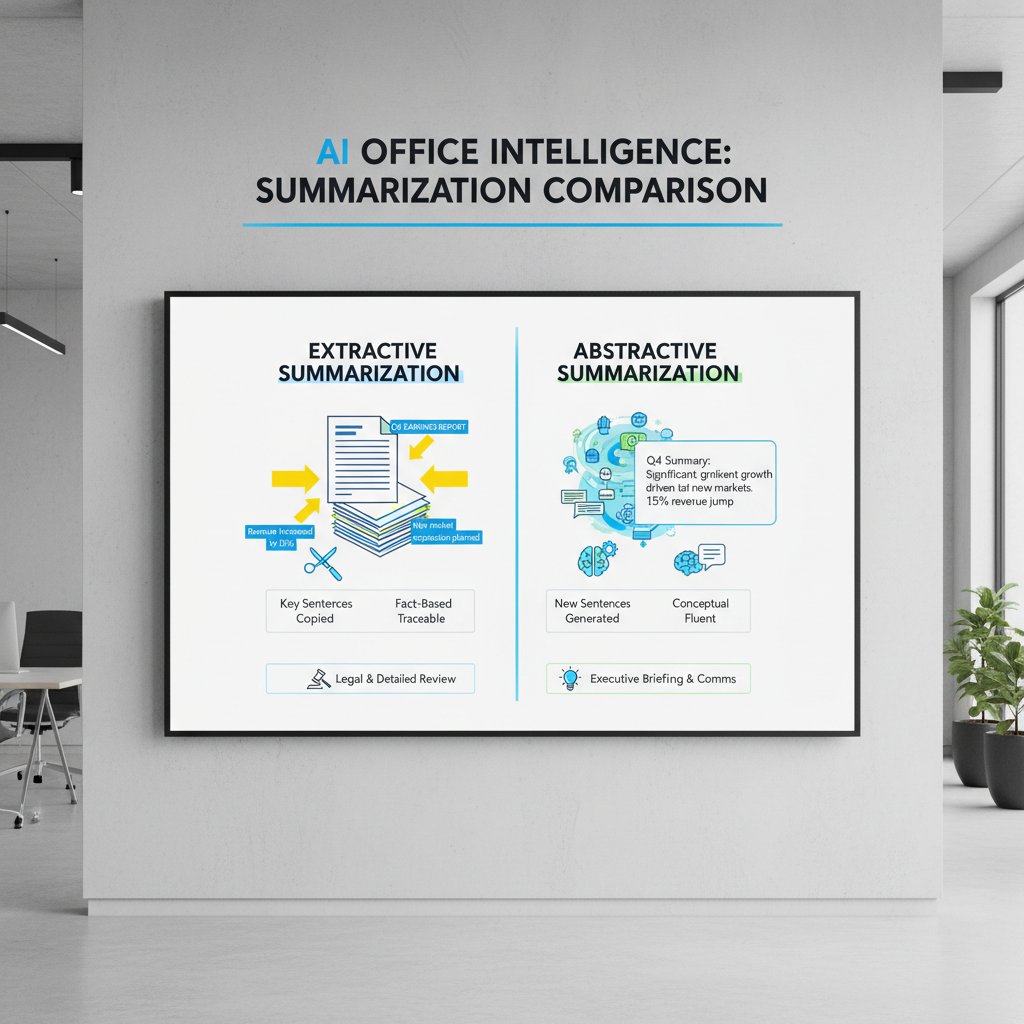

Extractive vs. abstractive: what’s the real difference?

Extractive summarization is the digital equivalent of a highlighter pen—pulling key sentences verbatim from the source. For example, an extractive summary of a legal contract might simply paste together the most “important” clauses, preserving the original wording. This method is fast and avoids the risk of rephrasing, but can create disjointed or context-free snippets.

Abstractive summarization, on the other hand, rewrites and condenses the source material in new language. It’s more like a journalist crafting a retelling of the day’s events: concise, readable, and potentially insightful. The catch? Abstractive methods are vulnerable to hallucinations and subtle shifts in meaning, especially in technical or legal contexts.
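To make the extractive side of that contrast concrete, here is a minimal sketch of a frequency-based "highlighter." It is an illustration under simple assumptions, not how any particular product works: the stopword list, the naive sentence splitter, and the function names are all hypothetical. The point it shows is that every output sentence is copied verbatim from the source, so nothing can be rephrased or invented, though context can still be lost.

```python
import re
from collections import Counter

# Tiny illustrative stopword list (assumption); real systems use larger ones.
STOPWORDS = {"the", "a", "an", "of", "to", "and", "in", "is", "for", "on",
             "that", "this", "it", "by", "with", "as", "be", "or", "at"}

def split_sentences(text: str) -> list[str]:
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def content_words(text: str) -> list[str]:
    """Lowercased words with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]

def extractive_summary(text: str, max_sentences: int = 2) -> list[str]:
    """Return the highest-scoring sentences verbatim, in their original order."""
    sentences = split_sentences(text)
    freq = Counter(content_words(text))

    def score(sentence: str) -> float:
        # Average document-wide frequency of the sentence's content words.
        tokens = content_words(sentence)
        return sum(freq[t] for t in tokens) / len(tokens) if tokens else 0.0

    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])  # restore source order
    return [sentences[i] for i in keep]

if __name__ == "__main__":
    contract = ("The supplier shall indemnify the buyer against third-party claims. "
                "The buyer pays invoices within 30 days. "
                "The supplier may not compete with the buyer for two years.")
    for line in extractive_summary(contract, max_sentences=2):
        print("-", line)
```

Because the scoring only counts word frequency, a rare but decisive clause can score low and be dropped, which is exactly the kind of omission a human reviewer should look for.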

Key terms in document summarization:

- Abstractive: AI rewrites content, potentially introducing new phrasing or errors.

- Extractive: Content is “lifted” directly from the source, preserving original wording.

- Factuality: The degree to which the summary matches verifiable facts from the original text.

- Hallucination: When the summary introduces information not present in the source.

Common misconceptions that sabotage accuracy

It’s wishful thinking to assume AI summaries are unbiased. Research confirms that LLMs inherit—and even amplify—biases present in their training data. A long summary is not necessarily a more accurate one; verbosity can mask omissions and factual errors as easily as brevity.

Hidden benefits of accurate summarization experts won’t tell you:

- Reduces litigation risk by ensuring critical information isn’t omitted.

- Improves regulatory compliance through traceable justification.

- Accelerates decision-making without sacrificing depth.

- Enhances institutional memory by providing objective records.

- Supports transparent auditing and oversight.

- Increases team productivity by freeing experts from grunt work.

- Informs better strategic planning by distilling actionable insights.

- Builds organizational trust and credibility.

The illusion of “objective” summaries is seductive but dangerous. All summarization involves selection, and every selection is shaped by human or algorithmic bias. Recognizing this is the first step to managing risk.

Inside the black box: how LLMs ‘think’ about summarization

LLMs don’t “understand” documents the way humans do. They parse context, intent, and factuality through a statistical lens, predicting word sequences based on vast datasets. The results can be impressive—and alarmingly plausible—even when they’re dead wrong.

| Industry | Accuracy range | Risk factor |
|---|---|---|
| Legal | 65–85% | High |
| Healthcare | 75–90% | Very High |
| Market Research | 60–80% | Moderate |
| Journalism | 70–88% | High |
| Academia | 68–84% | Moderate-High |

Table 2: LLM summarization accuracy by use case. Source: Original analysis based on industry interviews and Harvard Kennedy School, 2024.

Even at the upper end of these ranges, LLMs still stumble on nuance, domain-specific jargon, and implied context. That’s why professional workflows increasingly pair evaluative tools like textwall.ai with expert review, closing accuracy gaps that pure automation can’t bridge.

How the accuracy game changes by industry

Legal risk and the price of a bad summary

Legal professionals know the stakes: a single misinterpreted clause can make or break cases, contracts, and careers. One mis-summarized indemnity provision in 2023 led to a seven-figure settlement when the company failed to realize it was liable for third-party damages.

Compliance failures are even more unforgiving. In regulated sectors like finance and healthcare, inaccurate document summaries can trigger multi-million-dollar fines and regulatory intervention. The solution? Risk mitigation strategies that blend automated checks, human expertise, and audit-proof workflows.

Checklist for bulletproof legal document summaries:

- Obtain source documents from verified repositories.

- Identify critical sections (e.g., indemnity, non-compete, jurisdiction).

- Use both extractive and abstractive summarization for cross-validation.

- Require human legal expert review for all flagged sections.

- Track all changes with version control for audit trails.

- Implement feedback loops for error correction.

- Apply regulatory compliance checklists to summaries.

- Document all sources for each summary point.

- Archive summaries and source documents together.

- Schedule periodic re-audits and updates.
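One item on this checklist, identifying critical sections, lends itself to a simple automated guard. The sketch below flags critical topics that appear in the source contract but are missing from the summary. The term list and word stems are assumptions chosen to match the examples in the checklist; plain substring matching is deliberately crude and is meant to trigger human review, not replace it.

```python
# Label -> lowercase stem to search for. Hypothetical list; extend per contract type.
CRITICAL_TERMS = {
    "indemnity": "indemn",          # matches indemnity, indemnify, indemnification
    "non-compete": "non-compete",
    "jurisdiction": "jurisdiction",
}

def missing_critical_terms(source: str, summary: str,
                           terms: dict[str, str] = CRITICAL_TERMS) -> list[str]:
    """Return labels whose stem occurs in the source but not in the summary."""
    src, summ = source.lower(), summary.lower()
    return [label for label, stem in terms.items()
            if stem in src and stem not in summ]

if __name__ == "__main__":
    source = ("Section 4 (Non-Compete): Employee may not join a competitor for 12 months. "
              "Section 7: Supplier shall indemnify Buyer. "
              "Section 9: Jurisdiction is Delaware.")
    summary = "Supplier indemnifies Buyer; jurisdiction is Delaware."
    print(missing_critical_terms(source, summary))
```

A non-empty result does not prove the summary is wrong, since the topic may be covered in different words, but it is a cheap signal for routing the summary back to a legal reviewer.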

Healthcare: the life-or-death stakes of summarization

The line between convenience and catastrophe is razor-thin in healthcare. In one case, doctors misread an AI-generated summary of patient history, missing a contraindication that nearly resulted in tragedy. In research, summarization errors can distort findings, leading to flawed guidelines and unsafe clinical practice. Data integrity and HIPAA compliance (in the U.S.) add another level of scrutiny—summaries must not only be accurate, but also protect patient privacy.

"In medicine, a summary isn’t just convenient—it’s critical."

— Carlos, clinical researcher (illustrative)

Journalism, intelligence, and the power to shape narratives

Investigative journalists have long relied on summaries to sift through mountains of data—think Panama Papers, not just press releases. The danger? Biased or incomplete summaries can trigger misinformation avalanches or mislead readers. Intelligence agencies face similar risks; a poorly crafted summary can misdirect national security priorities.

Editorial standards and rigorous fact-checking processes are the backbone of high-accuracy summaries in these fields. Less conventional applications of accuracy-focused summarization include:

- Curating social media trends for rapid analysis.

- Synthesizing public records for transparency watchdogs.

- Fact-checking political speeches at scale.

- Supporting investigative podcasts with source-verified notes.

- Aiding open-source intelligence gathering.

- Streamlining FOIA request analysis.

The anatomy of an accurate summary: what really works

Essential elements every accurate summary must include

An accurate summary is not a bullet-pointed wish list—it’s a carefully constructed bridge from complexity to clarity. Non-negotiables include comprehensive coverage of key points, faithful representation of facts, clear delineation between summary and interpretation, and transparent sourcing.

Step-by-step guide to mastering document summarization for accuracy:

- Secure authoritative source documents.

- Skim for structure, then deep-read for context.

- Identify main arguments, supporting evidence, and qualifiers.

- Apply extractive methods for verbatim accuracy on critical points.

- Use abstractive methods for overall readability and synthesis.

- Fact-check every claim against multiple sources.

- Have a domain expert review for context and nuance.

- Archive summary with full provenance and revision history.
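The final step, archiving with provenance, can be as light as storing a fingerprint of the source next to the summary. The following is a minimal sketch, assuming a SHA-256 hash is an acceptable fingerprint and that a JSON record suits your archive; the `SummaryRecord` fields and function names are hypothetical, not a standard schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

@dataclass
class SummaryRecord:
    """One archived revision of a summary, tied to an exact source version."""
    source_name: str
    source_sha256: str
    summary_text: str
    reviewer: str
    revision: int = 1
    created_utc: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

def make_record(source_name: str, source_bytes: bytes, summary_text: str,
                reviewer: str, revision: int = 1) -> SummaryRecord:
    """Hash the source so later audits can confirm what was summarized."""
    digest = hashlib.sha256(source_bytes).hexdigest()
    return SummaryRecord(source_name, digest, summary_text, reviewer, revision)

def source_unchanged(record: SummaryRecord, source_bytes: bytes) -> bool:
    """True if the given bytes are the exact source the summary was based on."""
    return hashlib.sha256(source_bytes).hexdigest() == record.source_sha256

if __name__ == "__main__":
    original = b"Market data for Q3 is incomplete; see footnote 4."
    record = make_record("q3-report.txt", original,
                         "Q3 data is incomplete (footnote 4).", reviewer="analyst-1")
    print(json.dumps(asdict(record), indent=2))
    print(source_unchanged(record, original))
```

If the source is later edited, the hash no longer matches, which tells an auditor the summary needs to be regenerated or re-reviewed.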

Context, coverage, and nuance aren’t optional—they’re the pillars that separate reliable summaries from dangerous distortions. For example, a market research summary that initially omitted a footnote about incomplete data was transformed after applying these best practices: the revised version included source caveats, explicit data ranges, and a reviewer signature, reducing risk of misinterpretation.

Case study: when summaries go right (and what you can learn)

Consider the story of a global market research team tasked with summarizing a 200-page competitive analysis. Their process started with extractive AI summaries, then layered in human expert review, followed by a feedback round from department heads. The result? A concise, actionable summary that guided a $300M product launch—without missing a single regulatory red flag.

The process ran in five steps: deep reading, multi-source verification, human review, iterative feedback, and transparent documentation. The outcome was not just a better summary, but a template for repeatable success across the organization.

Spotting and fixing ‘hallucinations’ in AI summaries

Hallucinations are the AI summarizer’s original sin—confident, plausible-sounding inventions that never appeared in the source. These errors sneak in when models “improvise” to fill gaps or misunderstand ambiguous context.

| Type | Real-world example | Fix |
|---|---|---|
| Factual invention | “CEO announced layoffs” (not in source) | Cross-check with original text |
| Misattribution | Wrong agency cited as source | Verify attributions manually |
| Out-of-context bias | Negative quote used as summary headline | Add human editorial review |
| Data distortion | Rounded numbers without caveats | Require explicit data sourcing |

Table 3: Common hallucination types and real-world examples. Source: Original analysis based on Harvard Kennedy School, 2024.

To detect and correct hallucinations, employ side-by-side comparison with the source, use fact-checking tools, and insist on human-in-the-loop workflows—especially when stakes are high.
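Part of that side-by-side comparison can be automated for the "data distortion" case. The sketch below pulls every number out of a summary and flags any that never appears in the source. It is a narrow heuristic under stated assumptions: the regular expression and function names are illustrative, it only compares digits, and it will also flag harmless reformatting, so treat hits as prompts for review rather than proof of error.

```python
import re

# Digits with optional thousands separators and an optional decimal part.
NUMBER = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?")

def numbers_in(text: str) -> set[str]:
    """All numeric strings in the text, with thousands separators removed."""
    return {m.group().replace(",", "") for m in NUMBER.finditer(text)}

def unsupported_numbers(source: str, summary: str) -> list[str]:
    """Numbers that appear in the summary but nowhere in the source."""
    return sorted(numbers_in(summary) - numbers_in(source))

if __name__ == "__main__":
    source = "Revenue rose 4.7% to $12,400,000 in 2023."
    summary = "Revenue rose about 5% to $12.4 million in 2023."
    print(unsupported_numbers(source, summary))
```

In this example both "5" (a silent rounding of 4.7) and "12.4" (a legitimate restatement of 12,400,000) are flagged. The check cannot tell the two apart, which is why the human-in-the-loop step still matters.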

Breaking down the tech: evaluation metrics and accuracy tests

How to measure summary accuracy in the real world

ROUGE and BLEU are the yardsticks of the AI summarization world. ROUGE (Recall-Oriented Understudy for Gisting Evaluation) measures n-gram overlap with reference summaries; BLEU, originally designed for machine translation, gauges n-gram precision. Yet both can be gamed by verbose or formulaic summaries. Factuality metrics, such as FactCC or human-graded evaluations, are gaining traction because they focus on truth rather than surface similarity.

| Metric | What it measures | Limitations |
|---|---|---|
| ROUGE | N-gram overlap | Misses factuality, rewards verbosity |
| BLEU | N-gram precision | Suited for translation, not summary accuracy |
| Factuality | Fact presence, correctness | Requires human review, subjective at scale |
| Human eval | Context, nuance, usability | Expensive, time-consuming, variable |

Table 4: Summary evaluation metrics—strengths and blind spots. Source: Original analysis based on Harvard Kennedy School, 2024.

A summary can score high on metrics and still fail end-users if it overlooks key insights or buries crucial caveats. True accuracy is a hybrid of metric rigor and human sense.
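A small worked example shows how that can happen. The sketch below is a simplified ROUGE-N (n-gram recall and precision against a single reference), written from the general definition rather than taken from any official scoring package; the tokenizer and function names are assumptions for illustration.

```python
import re
from collections import Counter

def ngram_counts(text: str, n: int) -> Counter:
    """Count word n-grams after lowercasing and splitting on non-word characters."""
    tokens = re.findall(r"\w+", text.lower())
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def rouge_n(candidate: str, reference: str, n: int = 1) -> tuple[float, float]:
    """Return (recall, precision) of candidate n-grams against the reference."""
    cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
    overlap = sum((cand & ref).values())  # clipped n-gram matches
    recall = overlap / sum(ref.values()) if ref else 0.0
    precision = overlap / sum(cand.values()) if cand else 0.0
    return recall, precision

if __name__ == "__main__":
    reference = "the company is liable for third party damages"
    candidate = "the company is not liable for third party damages"
    recall, precision = rouge_n(candidate, reference, n=1)
    print(f"ROUGE-1 recall={recall:.2f} precision={precision:.2f}")
```

The candidate inserts a single "not" and reverses the legal meaning, yet its ROUGE-1 recall against the reference is a perfect 1.0, because every reference word still appears. Overlap metrics measure shared wording, not shared facts.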

Human-in-the-loop: why the best summaries need both brains and bots

Human-in-the-loop workflows integrate AI-generated drafts with expert review, creating summaries that are both scalable and nuanced. Hybrid approaches let you trust AI for bulk analysis, but require manual review for high-risk sections.

"The best summaries are a duet, not a solo."

— Priya, compliance officer (illustrative)

While hybrid solutions entail higher upfront costs, they dramatically reduce downstream risk—especially in regulated environments or mission-critical applications.
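One way to picture the hybrid split is as a routing step: low-risk sections go straight to automated summarization, while anything touching a high-risk topic is queued for an expert. The sketch below is a toy version under loud assumptions. The marker list, the `Section` type, and the queue names are all hypothetical, and a real workflow would rely on richer signals than substring matches.

```python
from dataclasses import dataclass

# Hypothetical lowercase stems that mark a section as high-risk.
HIGH_RISK_MARKERS = ("indemn", "non-compete", "liab", "contraindicat", "dosage")

@dataclass
class Section:
    title: str
    text: str

def route(sections: list[Section]) -> dict[str, list[str]]:
    """Split section titles into an automated queue and a manual-review queue."""
    queues: dict[str, list[str]] = {"auto": [], "manual_review": []}
    for section in sections:
        haystack = f"{section.title} {section.text}".lower()
        is_high_risk = any(marker in haystack for marker in HIGH_RISK_MARKERS)
        queues["manual_review" if is_high_risk else "auto"].append(section.title)
    return queues

if __name__ == "__main__":
    document = [
        Section("Payment terms", "Invoices are due in 30 days."),
        Section("Indemnification", "Supplier covers third-party claims."),
        Section("Notices", "Send notices by email."),
    ]
    print(route(document))
```

The design choice here is to err toward review: a false positive costs a few minutes of expert time, while a missed high-risk clause is the failure mode this whole article warns about.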

The bias trap: when algorithms get it wrong

Bias creeps into even the most advanced summarizers through training data, algorithmic selection, and framing. In legal cases, summaries may downplay minority perspectives; in journalism, political bias can slant headline choices. Recognizing these traps is essential for mitigation.

Bias in document summarization: terms to know

- Algorithmic bias: Systematic distortion from the underlying model.

- Selection bias: Favoring certain facts or voices over others.

- Framing: How the summary context shapes perception.

Strategies for bias detection include rotating reviewers, diversifying training data, and using transparent audit protocols. Only by confronting bias head-on can organizations deliver summaries worthy of trust.

Controversies and debates: where accuracy gets political

Who decides what’s ‘accurate’?

Accuracy is not a neutral concept; it’s contested territory. Stakeholders have competing interests: a company’s “accurate” financial summary may omit red flags raised by external auditors. Journalists, lawyers, and regulators argue over what counts as “material” or “in scope.” These conflicts play out in boardrooms, courtrooms, and editorial meetings, where transparency and accountability are often the first casualties.

The push for transparent summarization—where every assertion is traceable to its source—is gaining momentum. Organizations that embrace full audit trails and open review protocols are leading the charge.

Too much accuracy? When summaries become overwhelming

There’s a paradox at the heart of accuracy: more detail isn’t always better. Overly granular summaries can cause cognitive overload, burying the signal under a mountain of noise.

How to strike the right balance in summary detail:

- Define your summary’s audience up front.

- Distill to core objectives before expanding.

- Use tiered summaries (executive, technical, full).

- Highlight key risks, not just facts.

- Solicit user feedback for calibration.

- Iterate and refine based on real-world use.

Audience-focused summarization is the antidote—tailoring detail to context, ensuring clarity without sacrificing substance.

The misinformation arms race: can AI keep up?

Misinformation tactics evolve daily, with adversaries leveraging AI-generated deepfakes, bots, and algorithmic manipulation at scale. Summarization itself is under attack—adversarial examples can trick models into omitting, distorting, or hallucinating content.

Ongoing research into adversarial robustness and fact-checking protocols is critical. The arms race is relentless, but so is innovation. Platforms like textwall.ai/document-summarization-for-accuracy stay ahead by combining cutting-edge AI with rigorous human review and up-to-date best practices.

Practical applications: from compliance to creativity

Summarization in regulatory compliance and audits

Accurate summaries are a compliance officer’s best friend. They streamline documentation, facilitate transparent audits, and provide a defensible record for regulators. Poor summarization, by contrast, can trigger audit failures, fines, and remediation costs.

Priority checklist for implementing accuracy-focused summarization:

- Map compliance requirements to documentation.

- Use automated summarization for bulk processing.

- Flag high-risk sections for manual review.

- Archive full audit trails.

- Validate summaries against regulatory checklists.

- Solicit feedback post-audit to improve process.

- Update protocols in response to new regulations.

A recent audit at a major financial institution was expedited—and passed with flying colors—thanks to high-accuracy summaries that mapped directly to regulatory checklists.

Academic research: saving time without losing meaning

Researchers face the tightrope of summarizing complexity without distortion. The best academic summaries balance brevity with fidelity, using iterative peer review and collaborative annotation to enhance accuracy.

Tips for academic summaries:

- Maintain direct links to original sources for all claims.

- Use collaborative platforms for multi-expert input.

- Prioritize clarity over conciseness in complex arguments.

- Embrace feedback loops to catch omissions.

Peer review is the ultimate test—only summaries that withstand collective scrutiny are truly reliable.

Creative and unconventional uses you haven’t heard of

Document summarization isn’t just for compliance officers and researchers. Creative writers now use summaries as brainstorming prompts, activists distill complex policies into campaign-ready soundbites, and personal knowledge management enthusiasts build second brains from layers of AI-generated abstracts.

Unconventional uses for accuracy-focused summarization:

- Generating project recaps for distributed teams.

- Summarizing podcast transcripts for show notes.

- Condensing policy documents for grassroots campaigns.

- Building personal “executive summaries” for career development.

- Structuring book reviews for microlearning platforms.

In each case, the principles of accuracy, source verification, and bias detection remain paramount.

Common mistakes and how to avoid them

Top pitfalls that sabotage summary accuracy

Whether manual or automated, summary workflows are rife with errors—some benign, others catastrophic.

Red flags to watch out for in summary workflows:

- Overreliance on a single summarization method.

- Skipping source verification steps.

- Ignoring feedback or correction mechanisms.

- Treating summaries as “final” rather than living documents.

- Allowing bias to shape fact selection.

- Failing to archive document versions.

- Neglecting compliance requirements.

- Assuming AI outputs need no review.

- Rushing deadlines at the expense of accuracy.

Each of these pitfalls is a trapdoor to disaster, as illustrated by real-world cases ranging from regulatory fines to public scandals. Building error-proofing into your process is an investment in operational resilience.

How to audit your own summaries (or someone else’s)

Self-auditing is the final line of defense. It requires deliberate, structured protocols:

Self-audit guide: is your summary trustworthy?

- Cross-check every fact with the original document.

- Verify source citations for each assertion.

- Screen for bias in content selection.

- Solicit peer review from domain experts.

- Confirm regulatory and compliance alignment.

- Test clarity with non-expert readers.

- Archive versions for traceability.

- Integrate feedback for continuous improvement.

A good audit identifies not just mistakes, but patterns—recurring blind spots, sources of bias, or process gaps that demand systemic change.

Learning from disaster: when summaries go wrong

In 2023, an internal audit uncovered that a multinational’s financial summaries consistently omitted risk disclosures. The result? Regulatory fines, public embarrassment, and a multi-year remediation plan. Root cause analysis revealed overreliance on automated tools, absence of expert review, and a “set-and-forget” culture around summaries.

This crisis could have been averted with systematic audits, human-in-the-loop protocols, and a culture that treats summaries as living documents, not disposable artifacts.

The future of accurate document summarization

Emerging trends and disruptive technologies

New AI models are driving incremental gains in summary accuracy, but the fundamentals remain unchanged: fact-checking, bias detection, and human oversight still matter most.

Table 5: Current vs. next-gen summarization accuracy benchmarks. Source: Original analysis based on industry adoption metrics.

Cross-lingual summarization and global rollouts add new challenges—accuracy across languages and cultures is an unsolved frontier, with tools like textwall.ai pioneering adaptive solutions.

The role of ethics and transparency in next-gen summarization

Ethical dilemmas are everywhere: who gets to decide what’s summarized, what’s excluded, and how much context is “enough”? The call for explainable, auditable summary systems is rising, with transparency becoming as important as statistical precision.

"Trust is built on transparency, not just numbers."

— Jen, data ethicist (illustrative)

Ethical frameworks are the compass—aligning technical innovation with public accountability.

What you can do today to future-proof your summaries

Bulletproofing your document workflows doesn’t require an overhaul, just discipline and the right tools.

Next steps for readers who want bulletproof accuracy:

- Audit your current summary workflows for gaps.

- Integrate automated and human review processes.

- Use fact-checking tools and multi-source verification.

- Archive every summary with full provenance.

- Train teams on bias detection and mitigation.

- Solicit regular feedback from summary end-users.

- Stay current with best practices and emerging tools.

Communities like textwall.ai/blog offer resources, peer exchange, and up-to-date guidance for continual improvement.

Supplementary deep dives and adjacent topics

Document summarization in multilingual and multicultural contexts

Summarizing across languages is a minefield. Nuance, idioms, and cultural context can shift meaning, creating opportunities for misunderstanding—or worse. Cross-cultural teams have reported cases where summaries “lost in translation” derailed negotiations or triggered unintended offense.

Emerging multilingual tools and adaptive AI platforms are closing this gap, but human review remains essential.

How document summarization is changing the way we learn

Summarization is the new literacy skill for students and professionals alike. Accurate summaries accelerate learning, boost retention, and democratize access to complex material. AI tutors and adaptive learning systems now leverage summary accuracy for real-time feedback, but over-reliance can dull critical thinking if unchecked.

The answer? Balance summaries with deep reading, and use them as gateways—not substitutes—for understanding.

The intersection of summarization, privacy, and data security

Privacy pitfalls lurk in automated summarization. Summaries that inadvertently expose sensitive information, or fail to redact confidential details, can trigger data breaches and regulatory action. Practical tips include enforcing permission checks, using summary redaction protocols, and deploying privacy-centric tools.
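A summary redaction protocol can start with a simple pattern pass before anything leaves the team. The sketch below masks email addresses and U.S. Social Security number patterns and reports how many of each it replaced. The two regular expressions are illustrative assumptions that cover only obvious formats; names, addresses, and medical details need dedicated tooling and human review.

```python
import re

# Illustrative patterns only (assumption); real redaction needs broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each matched pattern with a labeled placeholder and count the hits."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, hits = pattern.subn(f"[{label} REDACTED]", text)
        counts[label] = hits
    return text, counts

if __name__ == "__main__":
    summary = "Contact jane.doe@example.com; SSN 123-45-6789 on file."
    clean, counts = redact(summary)
    print(clean)
    print(counts)
```

Logging the counts alongside the redacted summary gives auditors evidence that the check ran, without storing the sensitive values themselves.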

Organizations must find the sweet spot between accessibility and confidentiality—a moving target as privacy laws and tech evolve.

Conclusion: the new rules of trust in document summarization

Synthesis: what accuracy really means in 2025

In the age of misinformation, document summarization for accuracy is not optional—it’s existential. Every summary is a potential fault line for error, bias, and manipulation. The new rules of trust demand discipline: verify, audit, archive, and include human expertise at every step. As this article has shown, the cost of cutting corners is measured not just in dollars, but in lost credibility and fractured trust.

Accuracy is not about being pedantic—it’s about safeguarding decision-making, protecting reputations, and holding the line against manufactured confusion. Take a hard look at your own practices: are your summaries fueling clarity, or sowing chaos?

Your next move: demanding and delivering better summaries

The responsibility for summary accuracy doesn’t stop at the IT department or legal team—it’s everyone’s job. Apply these lessons, challenge your workflows, and champion a new culture of transparency and verification. Here’s your action plan:

- Map your document risks—identify where summaries matter most.

- Integrate both AI and human review for high-stakes documents.

- Use checklists and audit trails for every summary process.

- Cross-check facts against multiple authoritative sources.

- Train all contributors on bias and error detection.

- Create channels for feedback and continuous improvement.

- Archive everything—summaries, sources, and review logs.

- Leverage cutting-edge resources like textwall.ai/document-summarization-for-accuracy for ongoing excellence.

Join the conversation, demand better summaries, and transform risk into resilience. Because in the new war on misinformation, accuracy isn’t just the best defense—it’s the only one that counts.

Sources

References cited in this article

- Statista: Misinformation/Disinformation Risk 2024 (statista.com)

- Harvard Kennedy School: Expert Views on Misinformation (misinforeview.hks.harvard.edu)

- Redline Digital: Fake News Statistics 2024 (redline.digital)

- Width.ai: Long Text Summarization Methods (width.ai)

- OSTI: Advances in Document Summarization 2023-2024 (osti.gov)

- Reltio: The Hidden Cost of 'Good Enough' (reltio.com)

- Forbes: The Hidden Cost of Context in Documentation (forbes.com)

- Arxiv: Systematic Survey of Text Summarization (arxiv.org)

- MDPI: Deep Learning Summarization Review (mdpi.com)

- ResearchGate: AI in Debunking Myths (researchgate.net)

- DocumentLLM: AI Document Summarization Guide 2024 (documentllm.com)

- PubMed: LLMs in Clinical Summarization (pubmed.ncbi.nlm.nih.gov)

- FINRA Regulatory Notice 24-09 (finra.org)

- KPMG: Regulatory Challenges 2024 (kpmg.com)

- PMC: AI Clinical Summarization (pmc.ncbi.nlm.nih.gov)

- Nature Medicine: LLMs in Clinical Summarization (nature.com)

- Editor & Publisher: AI in Journalism 2024 (editorandpublisher.com)

- Reuters Institute Digital News Report 2024 (reutersinstitute.politics.ox.ac.uk)

- UCI Writing Center: Summarizing (writingcenter.uci.edu)

- Insight7: Writing High-Level Summaries (insight7.io)

- Insight7: Data Analysis Executive Summary (insight7.io)

- MedicalXpress: Expert Consensus Statement (medicalxpress.com)

- Forrester AI Hallucination Report 2025 (allaboutai.com)

- MarkTechPost: Hallucination Detection Tools (marktechpost.com)

- Springer: Evaluation Metrics on Text Summarization (link.springer.com)

- Neptune.ai: LLM Evaluation for Summarization (neptune.ai)

- ACL 2024: Bias in News Summarization (aclanthology.org)

- MDPI Digital: Machine Learning Biases Survey (mdpi.com)
