Document Summarization Alternatives That Won’t Miss What Matters

Stuck in the endless loop of feeding documents to generic AI summarizers, only to get back soulless, context-free abstracts that miss the point? You’re not alone. As our world drowns in information, the need for sharp, nuanced document summarization has never been more urgent, or more perilous. The promise was simple: dump your 100-page report into an app and, voilà, insight on demand. The reality? For anyone who values accuracy and depth, and who has to live with the real-world consequences, most mainstream summarization tools are a dangerous shortcut. Welcome to the brutally honest guide where we dissect the state of document summarization alternatives, expose the hidden risks, and arm you with strategies that go far beyond one-click summaries. If you’re ready to stop settling for bland, generic outputs and demand more from your tools (and yourself), you’re in the right place. Let’s tear down the illusions, get uncomfortable, and discover what actually works in 2025.

The state of document summarization in 2025: why basic tools fail

The illusion of simplicity: how most summarizers miss the mark

The comfort of a single-click summary is seductive. Plug in your sprawling PDF, press the button, and get back a neat paragraph to paste into your next email. Most users trust these tools implicitly, lured by speed and convenience. But as reality bites, it becomes clear that most generic AI summarizers sell speed, not accuracy—leaving nuance, context, and critical arguments on the cutting room floor.

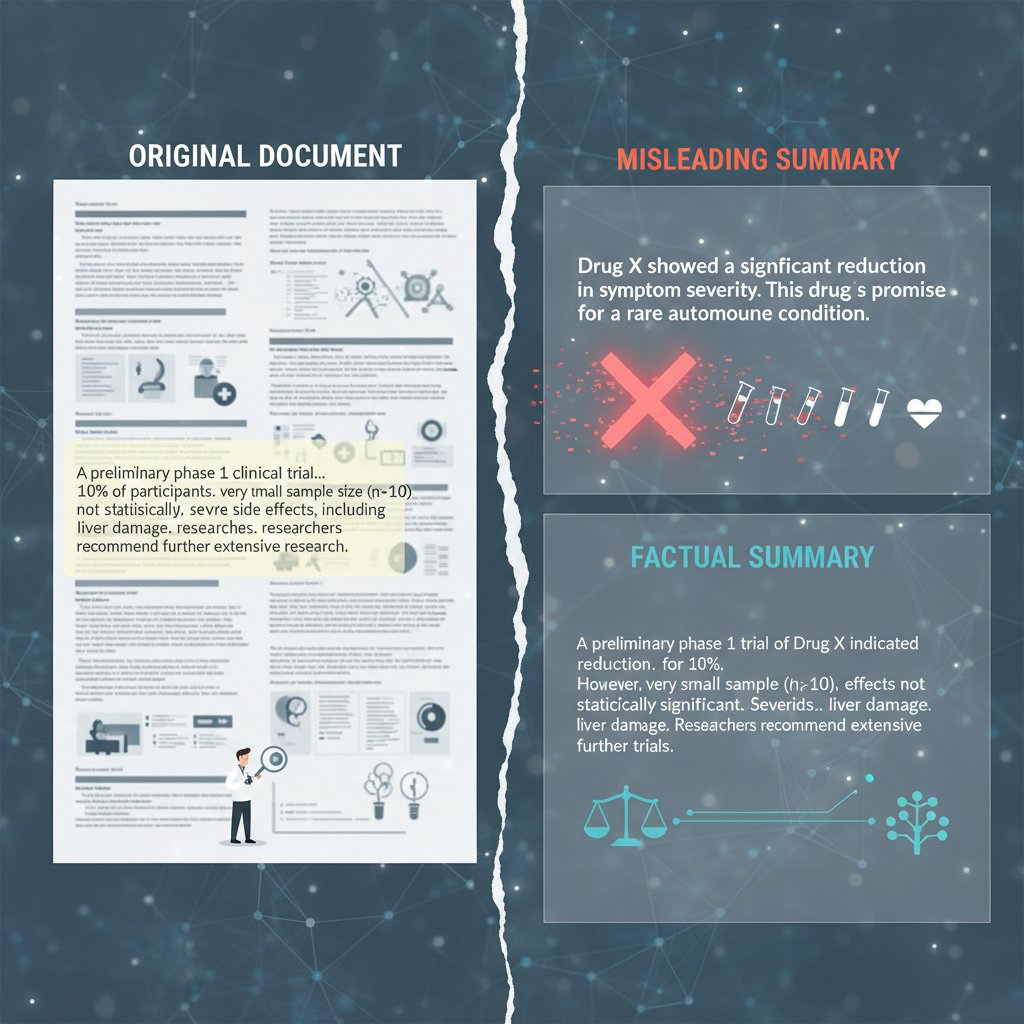

The fundamental flaw isn’t just technical but philosophical. Extractive summarizers, for instance, select “important” sentences verbatim, ignoring how meaning is constructed across paragraphs. Consider a compliance memo: an extractive tool might capture a single regulation, but miss the exceptions or embedded disclaimers, leading to catastrophic misunderstandings. In legal, medical, and financial documents, these omissions aren’t just embarrassing—they’re existential threats.

"Most tools sell speed, not accuracy." — Priya, data scientist

Why does context get lost so easily? Because language is messy and layered. AI models trained on generic corpora struggle with domain-specific terms, legal nuance, or scientific hedging—a phenomenon confirmed by multiple recent reviews of leading summarization platforms.

Seven hidden risks of relying on generic AI summarizers:

- They miss embedded data (tables, footnotes, citations) essential for real decisions.

- Contextual links between sections disappear, distorting conclusions.

- Subtle qualifiers, exceptions, or legal caveats are ignored.

- Domain-specific jargon and acronyms are misinterpreted or omitted.

- Over-simplification leads to false confidence and poor decision-making.

- Embedded biases in training data bleed into the summary.

- Crucial action items or next steps may vanish entirely.

What users really want: beyond speed and word count

Speed and brevity sell, but savvy users crave something deeper: accurate, nuanced, and actionable insight. Whether it’s a CEO facing a high-stakes contract, a compliance officer skimming for red flags, or a researcher tracing an argument through dense literature, the cost of missed detail is personal and profound.

The emotional toll of poor summaries—missed deadlines, panicked corrections, even reputational damage—can be brutal. According to recent industry research and case studies, professionals in legal, academic, and business sectors consistently report that generic summaries leave them chasing after missing details, sifting through raw documents anyway, or worse, making flawed decisions based on incomplete information.

Each domain demands something unique. The legal field values explicit clause extraction and context adherence. Academics need argument mapping and citation tracking. Businesses want trend extraction and actionable data points. Yet the majority of summarization tools focus on shallow word counts, ignoring the specialized needs of their users.

| User need | Common summarizer feature | The gap |
|---|---|---|
| Context preservation | Keyword extraction | Context is often lost |
| Actionable insights | Generic summaries | Lack nuance/next steps |
| Domain-specific language | Standard NLP models | Jargon misinterpreted |
| Citation and argument mapping | Sentence ranking | Arguments are fragmented |

Table 1: Gap analysis between user needs and typical summarizer features. Source: ClickUp Blog, 2025

The cost of a bad summary: business, legal, and personal stakes

Picture this: a multinational business team, pressed for time, relies on a generic AI tool to summarize a 60-page partnership contract. The summary omits a critical clause about early termination fees—an omission that, when the partnership sours, triggers a legal battle and millions in losses. According to AILawyer, 2025, summary errors are directly responsible for escalating compliance risks and contractual disputes in more than 20% of reviewed cases from large firms.

Legal compliance isn’t just about ticking boxes; it’s about capturing nuance, exceptions, and evolving standards. Inaccurate summaries can put organizations on the wrong side of the law—or worse, erode trust with clients and partners. Professionals who rely on summaries for strategic decisions risk their reputation, as judgment calls based on incomplete information can have cascading consequences.

"A summary is only as valuable as its weakest context." — Jordan, legal analyst

Types of document summarization: extractive, abstractive, and hybrid explained

Extractive summarization: strengths, weaknesses, and best use cases

Extractive summarization is the classic approach: the algorithm identifies and lifts “important” sentences directly from the source. It’s fast, simple, and (when tuned well) can work wonders for mundane cases—think meeting notes, news digests, or regulatory compliance docs where precision trumps creativity.

For example, in meeting minutes, extractive summarizers can reliably flag action items and decisions. In compliance, they can surface direct quotes from regulations. However, when documents are dense with context, indirect argumentation, or require synthesis, extractive methods crumble. They’re blind to subtext, irony, or nuanced critique.

Key terms:

- Extractive summarization: Selecting and concatenating exact sentences or phrases from the original document.

- Sentence ranking: Scoring sentences based on their frequency, position, and linguistic features.

- Salience: The “importance” of content, often judged by term frequency or semantic centrality.
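To make those three terms concrete, here is a minimal sketch of a frequency-based extractive summarizer. It is an illustration only, not how any particular product works: the tiny `STOPWORDS` set, the naive sentence splitter, and the function names are all assumptions made for this example.

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny stopword list (an assumption for this sketch).
STOPWORDS = {"the", "a", "an", "of", "to", "and", "in", "is", "are", "for", "on", "that", "it"}

def split_sentences(text):
    # Naive splitter: break after '.', '!' or '?' followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def tokenize(sentence):
    # Lowercase word tokens with stopwords removed.
    return [w for w in re.findall(r"[a-z0-9']+", sentence.lower()) if w not in STOPWORDS]

def extractive_summary(text, max_sentences=2):
    sentences = split_sentences(text)
    # Term frequency across the whole document stands in for salience.
    freq = Counter(w for s in sentences for w in tokenize(s))

    def salience(sentence):
        words = tokenize(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    # Sentence ranking: highest salience first, then restore document order.
    ranked = sorted(range(len(sentences)), key=lambda i: salience(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])
    # Sentences are returned verbatim: nothing is rewritten or fused.
    return [sentences[i] for i in keep]
```

Because the score is driven by word frequency alone, a sentence carrying a rare but crucial exception can easily rank below more repetitive sentences, which is exactly the weakness described above.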

7-step guide to evaluating extractive summarizer output:

- Review for obvious omissions—are all document sections represented?

- Check for context loss—does the summary make sense in isolation?

- Assess jargon retention—are complex terms explained?

- Spot redundancy—are multiple sentences repeating the same idea?

- Verify accuracy—do extracted sentences reflect the document’s intent?

- Look for missing qualifiers—are caveats or exceptions lost?

- Test with a domain expert—does the output meet real-world needs?
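The "missing qualifiers" step in the checklist above can be partly automated. The sketch below flags qualifier phrases that appear in the source but not in the summary. The `QUALIFIERS` tuple is a hypothetical starter list, not a complete legal vocabulary, and a clean result does not prove the caveat's meaning survived.

```python
import re

# Hypothetical starter list of qualifier phrases; extend it per domain.
QUALIFIERS = ("unless", "except", "provided that", "subject to", "notwithstanding", "only if")

def find_qualifiers(text):
    lowered = text.lower()
    # Word boundaries keep "except" from matching inside "exception".
    return {q for q in QUALIFIERS if re.search(r"\b" + re.escape(q) + r"\b", lowered)}

def missing_qualifiers(source, summary):
    # Qualifiers present in the source that the summary never mentions.
    return sorted(find_qualifiers(source) - find_qualifiers(summary))
```

Anything this returns is a prompt for a human to reread the relevant clause, not a verdict on the summary.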

Abstractive summarization: where AI tries (and often fails) to think like you

Abstractive summarizers, powered by large language models (LLMs), attempt to synthesize new sentences that convey the gist of the original document. Instead of copying, they rewrite—sometimes brilliantly, sometimes disastrously. In theory, this approach can capture nuance and distill complex ideas. In practice, it’s a minefield.

Take academic literature reviews: a well-tuned abstractive summary can collapse a dense argument into a single, elegant paragraph. But LLMs are notorious for hallucinations—fabricating facts, citations, or logic leaps that never existed. The risk isn’t just inaccuracy, but misrepresentation: legal disclaimers become “noted exceptions,” and scientific uncertainty is recast as definitive.

Abstractive models are best used when human oversight is built in and when creativity is more valuable than verbatim accuracy. Mitigation strategies include prompt engineering, domain-specific training, and post-hoc human review—all practices recommended by expert panels in 2025.

"Abstractive models can dazzle—and deceive." — Priya

| Output type | Accuracy | Creativity | Hallucination risk |
|---|---|---|---|
| Extractive summary | High | Low | Minimal |
| Abstractive summary | Variable | High | Significant |

Table 2: Accuracy vs. creativity in extractive versus abstractive summarization. Source: Original analysis based on Sembly AI, 2025; Notta, 2025

Hybrid models: the new frontier or a Frankenstein’s monster?

Hybrid approaches combine the strengths—and sometimes the weaknesses—of both extractive and abstractive summarization. Typically, a hybrid tool first extracts key sentences and then rewrites or fuses them into new text, aiming for both accuracy and readability. Their rising popularity is no accident: in real-world business scenarios, hybrids offer the promise of context-aware, actionable insight without the brittle literalism of extractive or the creative risk of abstractive alone.

Consider a financial services firm: by leveraging hybrid summarization, they can extract critical figures verbatim but let the AI synthesize market trends and forward-looking statements. The result is a summary that balances data integrity with narrative flow. But integrating hybrid models into legacy document management systems is far from trivial. Compatibility issues, user training, and auditability concerns can bottleneck deployment.

Six surprising benefits of hybrid summarization models:

- Contextual coherence without losing factual fidelity.

- Flexibility for different document types and industries.

- Improved auditability compared to black-box abstractive models.

- Built-in redundancy checks for critical data.

- Adaptive learning from user corrections (“human-in-the-loop”).

- Enhanced trust for high-stakes applications.

Manual vs. automated summarization: when humans still crush machines

Human intuition: nuance, context, and the art of the summary

For all the talk of automation, human judgment remains irreplaceable, especially in high-stakes fields like journalism, law, or medicine. The art of the summary is in the subtext: catching the irony in a CEO’s statement, the unspoken caveat in a legal clause, or the subtle trend in medical case notes.

Consider three real-life scenarios:

- A journalist distilling a 120-page report into a headline that shapes national discourse.

- A legal analyst combing through discovery documents, surfacing the one buried clause that upends the case.

- A hospital administrator summarizing medical records for an urgent consult.

Every one of these examples requires intuition, contextual awareness, and the ability to ask, “what’s missing?”—a question no machine reliably answers. Machines miss these subtleties because they process patterns, not meaning.

"You can’t automate gut instinct." — Jordan

When automation wins: scale, speed, and the myth of perfection

That said, the scale and speed of automated summarization are game-changers for routine, high-volume tasks. Imagine processing 10,000 customer feedback forms overnight—no human team could match that velocity. Automation shines when the information to be summarized is formulaic, the stakes are low, or the need is to filter rather than interpret.

| Metric | Human summarizer | Machine summarizer |
|---|---|---|
| Speed | 3-5 pages/hour | 3-5 seconds/document |
| Cost (per doc) | $10-50 | <$0.10 |
| Error rate | Variable (2-10%) | Variable (2-15%) |

Table 3: Human vs. machine summarization by cost, speed, and error rate. Source: Original analysis based on AILawyer, 2025; ClickUp AI, 2025

Optimal results come from hybrid workflows: let machines do the heavy lifting, but keep humans in the loop for critical documents or edge cases.

Finding your edge: blending human and machine for unbeatable summaries

The real revolution isn’t in choosing humans over machines—it’s in blending the two. Human-in-the-loop summarization adds a robust layer of review and refinement. After the initial automated pass, human editors correct, contextualize, and enrich the output. This approach is recommended by most expert panels and seen in top-performing organizations.

How to integrate manual review into automated summarization:

- Deploy the automated summarizer on a test set of documents.

- Assign domain experts to review and edit the outputs.

- Capture feedback and corrections systematically.

- Use these corrections to fine-tune tool settings or retrain models.

- Repeat on larger sets until error rates stabilize.

- Build in periodic audits to maintain quality over time.
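"Until error rates stabilize" needs a stopping rule, or the review rounds never end. One possible rule, sketched below, treats the process as stable once the last few rounds stay within a narrow band. The `window` and `tolerance` defaults are arbitrary assumptions to tune for your own documents.

```python
def has_stabilized(error_rates, window=3, tolerance=0.01):
    """Return True once the last `window` review rounds vary by at most `tolerance`.

    error_rates: one error rate per review round, oldest first (e.g. 0.05 for 5%).
    """
    if len(error_rates) < window:
        return False  # not enough rounds yet to judge
    recent = error_rates[-window:]
    return max(recent) - min(recent) <= tolerance
```

A stable rate is not necessarily a low one, so pair this check with an absolute ceiling you are willing to accept.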

Tips for maximizing accuracy and context:

- Clearly define the scope and purpose of each summary.

- Use multiple summarizers and compare outputs.

- Maintain a library of domain-specific terms and exceptions.

- Embed feedback loops and reward corrections.

Beyond the obvious: four lesser-known document summarization alternatives

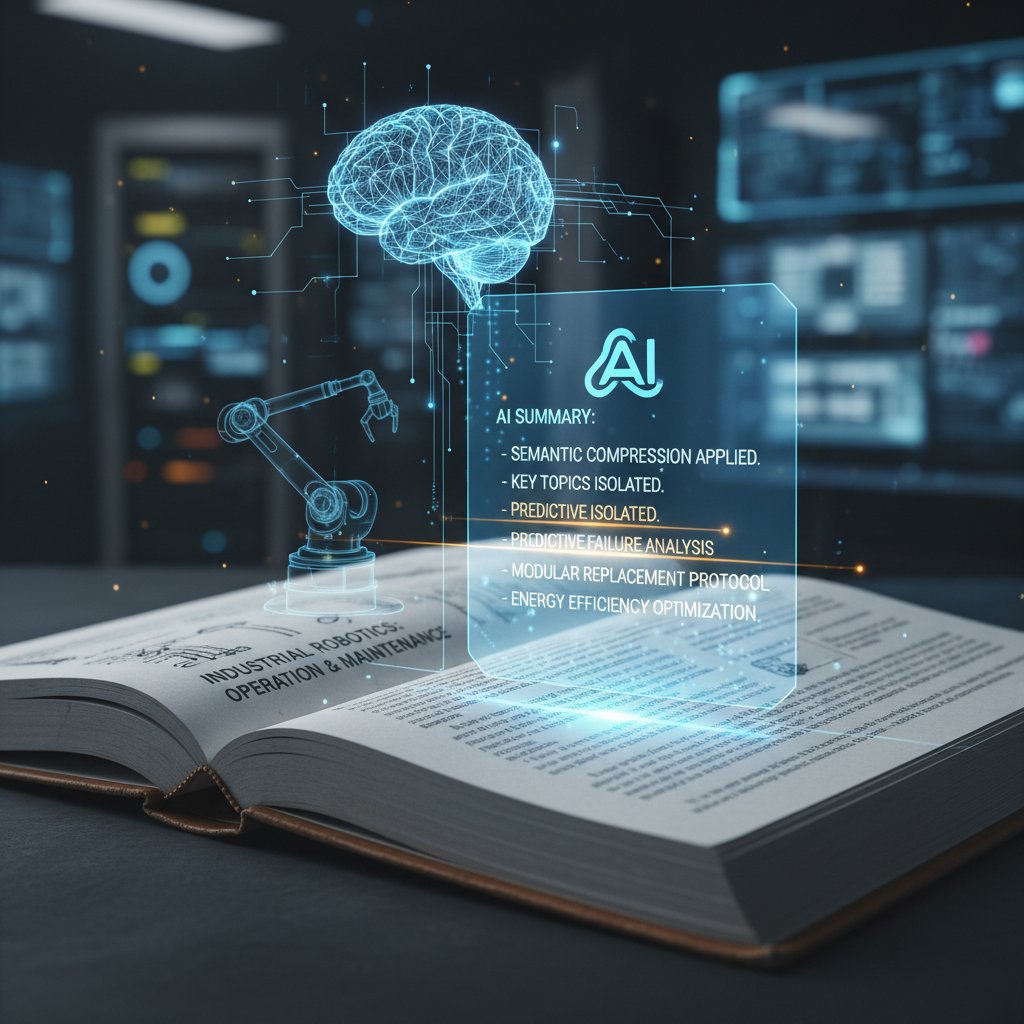

Semantic compression tools: shrinking data without losing the soul

Semantic compression takes a different approach than pure summarization. Instead of merely trimming text, it compresses content by retaining only the semantic “heart” of a document. This is especially powerful for technical manuals, where support teams need dense information without losing critical procedures or safety warnings.

For example, a 200-page equipment manual can be semantically compressed for field technicians, cutting redundant explanations while preserving troubleshooting logic. The danger lies in over-compression—strip too much, and essential detail vanishes.

Definitions:

- Semantic compression: Reducing document length by removing redundancy while preserving core meaning.

- Context window: The range of text used to derive semantic relationships.

- Density ratio: The proportion of retained to omitted content.
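As a rough illustration of these definitions, the sketch below removes sentences that repeat an earlier one and then computes the density ratio as retained words divided by omitted words. Note the big simplification: it only catches near-verbatim repeats. Real semantic compression would need a meaning-aware comparison, which this example does not attempt, and all function names here are hypothetical.

```python
import re

def split_sentences(text):
    # Naive splitter: break after '.', '!' or '?' followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def normalize(sentence):
    # Ignore case and punctuation when comparing sentences.
    return " ".join(re.findall(r"[a-z0-9]+", sentence.lower()))

def compress(text):
    """Keep the first occurrence of each sentence, drop later repeats."""
    seen, kept = set(), []
    for sentence in split_sentences(text):
        key = normalize(sentence)
        if key not in seen:
            seen.add(key)
            kept.append(sentence)
    return kept

def density_ratio(original_text, kept_sentences):
    """Retained words divided by omitted words, per the definition above."""
    retained = sum(len(s.split()) for s in kept_sentences)
    omitted = len(original_text.split()) - retained
    return float("inf") if omitted == 0 else retained / omitted
```

A very low ratio is a warning sign of the over-compression risk mentioned earlier: most of the manual has been thrown away.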

Knowledge graph extraction: mapping meaning, not just words

Knowledge graphs go beyond text, mapping out the relationships between entities, events, and ideas within a document. Rather than spitting out a summary, they turn your document into a network of meaning—a game-changer in legal discovery, where mapping connections between cases, statutes, and actions can unearth critical links.

In one landmark case, a law firm used knowledge graph extraction to visualize connections across 5,000 pages of discovery—surfacing a hidden relationship that proved pivotal. The visualization and data mining benefits are immense: what was once black-and-white text becomes a living map, ready for deep exploration.

Eight unconventional uses for knowledge graph-based summarization:

- Mapping regulatory change impacts across jurisdictions.

- Tracing financial relationships for due diligence.

- Visualizing scientific citation networks.

- Identifying supply chain risks in procurement documents.

- Surfacing ethical conflicts in research protocols.

- Extracting patient journeys from medical histories.

- Mapping policy changes over time in government docs.

- Tracking IP rights in patent portfolios.
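To show what "mapping meaning" looks like in data terms, here is a small sketch that stores subject-relation-object triples as a graph and searches for a chain linking two entities. The triples are invented for illustration, and the hard part (extracting triples from real documents) is assumed to have happened already.

```python
from collections import defaultdict, deque

# Hypothetical triples, as if an extraction step had already produced them.
TRIPLES = [
    ("Acme Corp", "signed", "Contract 17"),
    ("Contract 17", "references", "Statute 4.2"),
    ("Beta LLC", "party_to", "Contract 17"),
]

def build_graph(triples):
    graph = defaultdict(set)
    for subject, relation, obj in triples:
        graph[subject].add((relation, obj))
        # Store the reverse edge too so connections can be followed both ways.
        graph[obj].add((relation + "_inverse", subject))
    return graph

def find_path(graph, start, goal):
    """Breadth-first search: shortest chain of entities from start to goal, or None."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for _, neighbor in sorted(graph[path[-1]]):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None
```

With the sample triples, `find_path(build_graph(TRIPLES), "Acme Corp", "Beta LLC")` links the two companies through the contract they share, the kind of indirect connection a flat summary would not surface.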

AI-powered document clustering: seeing the forest, not just the trees

Clustering isn’t traditional summarization; it’s about organizing large volumes of documents by theme, topic, or sentiment. For customer support teams, clustering thousands of tickets by issue type can reveal systemic problems or emerging trends that a flat summary would miss.

For instance, after clustering, you might discover that 60% of complaints stem from three root causes—a finding that no single-document summary would reveal. Combining clustering with summary generation yields both macro and micro insight.

| Summary approach | Single document | Multiple documents (clustered) | Key benefit |
|---|---|---|---|
| Traditional summary | Yes | No | Depth on one document |
| Clustering | No | Yes | Theme/trend detection |
| Combined approach | Yes | Yes | Both depth and breadth |

Table 4: Comparison—single-document summary vs. multi-document clustering. Source: Original analysis based on Sembly AI, 2025
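For a feel of how ticket clustering works at its simplest, the sketch below groups texts greedily by word overlap (Jaccard similarity). This is a toy stand-in chosen for readability: production systems typically use embeddings and a proper clustering algorithm, and the `threshold` value here is an arbitrary assumption.

```python
import re

def tokens(text):
    # Set of lowercase words in the text.
    return set(re.findall(r"[a-z]+", text.lower()))

def jaccard(a, b):
    # Shared words divided by all words across both sets.
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cluster(docs, threshold=0.3):
    """Greedy clustering: each doc joins the first cluster whose first member is similar enough."""
    clusters = []  # lists of document indices; index 0 of each list is the representative
    for i, doc in enumerate(docs):
        for members in clusters:
            if jaccard(tokens(doc), tokens(docs[members[0]])) >= threshold:
                members.append(i)
                break
        else:
            clusters.append([i])  # nothing similar so far: start a new cluster
    return clusters
```

Cluster sizes divided by the total number of tickets give the kind of share-of-complaints figure discussed above, and each cluster can then be summarized on its own for the micro view.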

Real-time collaborative annotation: crowdsourcing the perfect summary

Collaborative annotation platforms allow multiple users—subject matter experts, colleagues, or crowdsourced reviewers—to mark up and discuss a document in real time. This approach is invaluable for academic peer reviews, where consensus and dissent shape the final interpretation. Yet, as with all crowdsourced efforts, trust and bias are risks: dominant voices can drown out outliers, and consensus doesn’t always mean correctness.

Seven steps to a successful annotation workflow:

- Choose a collaborative platform with robust permissions.

- Define clear roles: annotators, reviewers, final editors.

- Provide annotation guidelines to ensure consistency.

- Set deadlines and milestones for collaborative rounds.

- Aggregate and reconcile conflicting notes.

- Generate a consensus summary.

- Document dissenting opinions for transparency.
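The reconcile, consensus, and dissent steps above can be sketched as a simple majority vote per passage. Everything here is hypothetical: the data shape, the annotator names, and the labels. A real platform would also weigh reviewer roles and free-text comments.

```python
from collections import Counter

def reconcile(annotations):
    """annotations: {passage_id: {annotator: label}}, at least one vote per passage.

    Returns the majority label per passage plus every vote that disagrees with it.
    A tie yields no consensus and lists all votes as unresolved dissent.
    """
    result = {}
    for passage, votes in annotations.items():
        counts = Counter(votes.values())
        top_label, top_count = counts.most_common(1)[0]
        tied = sum(1 for c in counts.values() if c == top_count) > 1
        result[passage] = {
            "consensus": None if tied else top_label,
            "dissent": {who: label for who, label in votes.items()
                        if tied or label != top_label},
        }
    return result
```

Keeping the dissent alongside the consensus is the point: a majority vote alone would reproduce the "dominant voices drown out outliers" problem.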

The myth of the perfect summary: what no tool can deliver (yet)

Why context is everything—and why algorithms still struggle

Algorithms crave structure; reality delivers chaos. Context is everything in summarization, but preserving it remains the Achilles’ heel of even the most advanced AI. Subtle cues—cultural references, idiomatic language, or evolving jargon—can confound models and lead to summaries that are technically correct but dangerously misleading.

Take, for instance, a cross-cultural memo: a generic summary tool interprets “the project is on fire” as a crisis, missing that in the team’s context it means “performance is outstanding.” Overreliance on automation exposes organizations to embarrassing missteps and erodes trust in technology.

Bias, hallucination, and the danger of false confidence

AI hallucinations—when summarizers invent facts, misstate numbers, or fabricate citations—are a well-documented risk. According to recent research, even leading tools can misinterpret financial reports, leading to costly errors. Bias sneaks in via training data, model design, or user prompts, and false confidence grows when users trust AI outputs blindly.

Six red flags for summary bias or hallucination:

- Numbers or citations not found in the original document.

- Overly confident or absolute phrasing in uncertain contexts.

- Irrelevant or off-topic content inserted into the summary.

- Disproportionate focus on “headline” topics, missing nuance.

- Omission of dissent or minority viewpoints.

- Summaries that “sound right” but don’t match facts.
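The first red flag is the easiest to check mechanically. The sketch below lists numbers that appear in a summary but nowhere in the source. It compares number strings literally, so it is an assumption-laden first pass: "4.5 million" and "4,500,000" would not match, and a correct number attached to the wrong fact goes unnoticed.

```python
import re

# Digits with optional decimal/thousands separators and an optional percent sign.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")

def numbers_in(text):
    return set(NUMBER.findall(text))

def unsupported_numbers(source, summary):
    """Numbers stated in the summary that never appear in the source text."""
    return sorted(numbers_in(summary) - numbers_in(source))
```

An empty result does not clear the summary, but a non-empty one is a strong reason to go back to the original document before anyone acts on the figure.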

Why your workflow matters more than your tool

No tool, no matter how sophisticated, can compensate for a broken workflow. Even the best summarizers fail when used in isolation, without review, feedback, or alignment with organizational goals. Critical processes—quality control, exception handling, and accountability—matter far more than features.

"Tools change, but process is everything." — Priya

Building a resilient summarization workflow means investing in people, process, and technology—together.

Cost, risk, and ROI: what most guides won’t tell you

The hidden costs of free and cheap summarizers

Free or bargain-bin summarizers lure users with zero up-front costs, but the true price is paid in data leakage, low-quality outputs, and time wasted on manual correction. Open-source tools, while flexible, can expose confidential data to third parties if not self-hosted or properly secured. According to Notta, 2025, over 30% of organizations surveyed experienced data exposure incidents linked to poorly managed summarization tools.

| Approach | Upfront cost | Security risk | Time to correct errors | Quality of output |
|---|---|---|---|---|
| Free/cheap summarizers | $0-50 | High | High | Inconsistent |
| Premium AI platforms | $500-10,000 | Low | Low | Consistent |
| Human-based summarization | $100-5,000 | Low | Moderate | High (with oversight) |

Table 5: Cost-benefit analysis of popular summarization approaches. Source: Original analysis based on Notta, 2025; AILawyer, 2025

Vendor lock-in and migration risks are often ignored; always check export options and data portability before committing.

Calculating ROI: when is a premium tool worth it?

ROI frameworks for document summarization balance direct costs (licensing, training) with savings in time, error reduction, and productivity. For a mid-size company processing 1,000 documents monthly, a premium tool costing $5,000/year that cuts review time by 50% can pay for itself in weeks, especially if error-related losses drop.
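The payback claim is easy to sanity-check with your own numbers. In the sketch below, the 1,000 documents per month, the $5,000 annual cost, and the 50% time cut come from the scenario above. The 10 minutes of review per document and the $40 hourly cost are assumptions added purely for illustration, and error-related savings are left out.

```python
def payback_months(docs_per_month, minutes_per_doc, hourly_cost, time_cut, annual_tool_cost):
    """Months until review-time savings cover the annual tool cost."""
    hours_saved_per_month = docs_per_month * minutes_per_doc / 60 * time_cut
    monthly_saving = hours_saved_per_month * hourly_cost
    return annual_tool_cost / monthly_saving

# Scenario from the text, plus two assumed inputs (10 min/doc, $40/hour).
months = payback_months(
    docs_per_month=1000,
    minutes_per_doc=10,    # assumption
    hourly_cost=40.0,      # assumption
    time_cut=0.5,
    annual_tool_cost=5000,
)
```

Under these assumptions the tool pays for itself in about a month and a half. Halve the review time per document or the hourly cost and the payback period doubles, so plug in measured values before making the case internally.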

Intangible benefits—brand reputation, compliance, decision speed—are harder to quantify but vital in high-stakes industries.

Risk assessment: how to avoid disastrous mistakes

The key risks of document summarization tools fall into three categories: data (leakage, loss), legal (compliance, liability), and reputational (loss of client trust). A robust checklist before deployment can mitigate disaster.

Eight priority checkpoints before deploying a new summarizer:

- Confirm end-to-end encryption and secure hosting.

- Audit data retention and deletion policies.

- Require transparency of AI model training data.

- Validate domain expertise of the tool (legal, medical, etc.).

- Run side-by-side tests with human-generated summaries.

- Document compliance with relevant standards (GDPR, HIPAA, etc.).

- Train staff on best practices and red-flag recognition.

- Establish a feedback loop for continuous monitoring.

Embedding risk mitigation into your workflow isn’t optional—it’s survival.
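For the side-by-side test against human-written summaries, a crude but useful first number is how many of the human summary's words the machine summary also contains. The sketch below computes that unigram recall. It is a simplified overlap score in the spirit of ROUGE-1, not the official metric, and it says nothing about whether the shared words are used correctly.

```python
import re
from collections import Counter

def unigram_recall(reference, candidate):
    """Share of the reference summary's words that also appear in the candidate."""
    ref = Counter(re.findall(r"[a-z0-9]+", reference.lower()))
    cand = Counter(re.findall(r"[a-z0-9]+", candidate.lower()))
    if not ref:
        return 0.0
    # Each reference word counts at most as often as it occurs in the candidate.
    overlap = sum(min(count, cand[word]) for word, count in ref.items())
    return overlap / sum(ref.values())
```

Low recall on a batch of test documents suggests the tool is dropping content your experts consider essential. High recall still warrants a human read of a sample.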

Choosing the right alternative: a brutally honest decision guide

Self-assessment: what’s your real summarization need?

Start with brutal self-honesty. Are you compliance-heavy, insight-driven, or speed-focused? Each scenario demands a different alternative. For example, regulatory teams need fidelity and audit trails; business analysts crave actionable trends; executives want speed without sacrificing context.

Ten questions to clarify your requirements:

- What’s the primary use case—compliance, research, business analysis?

- How sensitive is the data?

- What’s the preferred summary length and style?

- Is human review required for all outputs?

- What integration with existing tools is necessary?

- How often will you switch domains (legal to business, etc.)?

- What’s your budget for upfront and ongoing costs?

- How important is export and data portability?

- Will you need multilingual support?

- What’s your tolerance for risk and error?

Feature matrix: side-by-side tool comparison (including textwall.ai)

A feature-by-feature comparison exposes where each alternative shines—or fails. Consider usability, integration, auditability, customizability, and support for advanced workflows.

| Feature | TextWall.ai | Sembly AI | Notta | ClickUp AI | Manual summarizer |
|---|---|---|---|---|---|
| Advanced NLP | Yes | Yes | Limited | Limited | N/A |
| Customizable analysis | Full | Partial | Partial | Limited | Full |
| Instant summaries | Yes | Yes | Yes | Yes | No |
| Integration/API | Full | Partial | No | Yes | No |
| Human-in-the-loop | Yes | No | No | No | Yes |
| Compliance features | Strong | Moderate | Limited | Limited | Strong |
| Cost efficiency | High | Moderate | High | Moderate | Low |

Table 6: Tool comparison matrix for document summarization alternatives. Source: Original analysis based on vendor documentation (textwall.ai, Sembly AI, Notta, ClickUp AI).

Use the matrix to align tool capabilities with your real-world needs—not just marketing claims.

Red flags and green lights: what to look for in a trustworthy tool

Evaluating credibility is about more than glossy websites or AI buzzwords. Look for transparent privacy policies, documented success stories, and responsive support. Beware of tools that promise “perfect” summaries or gloss over error rates.

Nine red flags to watch out for:

- No explanation of AI model or training data.

- Vague or missing privacy policy.

- No human-in-the-loop option.

- Inability to export or audit outputs.

- Overly broad claims (“works for any document!”).

- Aggressive upselling with little trial period.

- No clear compliance documentation.

- Lack of integration with workflow tools.

- Absence from reputable industry reviews.

Transparent privacy policies aren’t just a nice-to-have—they’re non-negotiable, especially when handling sensitive or regulated data.

Implementation: from pilot to production without the drama

Rolling out a summarization tool shouldn’t be a leap of faith. Start with a proof-of-concept, document lessons learned, and scale in measured steps. A real-world example: a consultancy piloted TextWall.ai in one region, adjusted workflows based on user feedback, and only then rolled out globally—avoiding disruption and maximizing buy-in.

Seven steps to a smooth summarizer implementation:

- Define clear success metrics and benchmarks.

- Run a limited-scope pilot with real documents.

- Collect user feedback and log issues.

- Iterate on tool configuration and workflow integration.

- Train key staff and power users on advanced features.

- Gradually scale to more teams and departments.

- Conduct a post-launch review; refine and optimize.

A staged rollout with robust feedback ensures minimal disruption and maximum return.

Case studies: real-world wins and disasters in document summarization

How a legal firm cut analysis time by 80%—and what went wrong next

A mid-tier legal firm adopted an AI-powered summarizer, slashing document review time from five hours to under one. Initially, the team celebrated—until they realized that subtle exceptions in contractual clauses were being skipped. Error rates spiked, leading to a near-miss with a compliance breach. By implementing a hybrid workflow—AI first, then human review—they regained accuracy and kept their newfound speed.

Academic research: when manual summaries still matter

In academic circles, manual summarization remains the gold standard, especially for peer-reviewed literature reviews. Automated tools were trialed for bulk sifting, but consensus-building and nuance extraction still demanded human intelligence.

"Peer review demands more than a machine can give." — Jordan

Alternative approaches—like multi-tool cross-verification and collaborative annotation—were tested, but the final layer of synthesis was always handled by seasoned researchers.

Business intelligence: the high stakes of missing nuance

A global retailer missed a critical market shift when their summarizer flagged only surface-level trends in quarterly reports. A rival, using advanced knowledge graphs and clustering, saw the deeper pattern and pivoted first—gaining a measurable competitive edge.

| Summarization strategy | Outcome (time saved) | Errors detected | Competitive insight |
|---|---|---|---|
| Generic AI summary | 40% | 12 | Low |
| Hybrid (AI + human) | 55% | 3 | Moderate |
| Knowledge graph + cluster | 45% | 1 | High |

Table 7: Outcomes of different summarization strategies in business intelligence. Source: Original analysis based on Sembly AI, 2025; ClickUp AI, 2025

Summarization in the age of AI regulation: compliance, privacy, and the future

Data privacy: what every user needs to know

Data privacy isn’t a checkbox. Automated summarization often involves uploading sensitive documents to third-party servers—a minefield if not properly managed. Confidential business documents, once exposed, can trigger legal penalties, lost deals, or worse. In 2025, compliance standards (GDPR, CCPA, and local equivalents) are unforgiving.

Seven best practices for document privacy in summarization:

- Vet all vendors for compliance certifications.

- Self-host open-source tools when possible.

- Use only end-to-end encrypted platforms.

- Restrict document uploads to need-to-know staff.

- Regularly audit access logs and data retention.

- Demand clear deletion and export options.

- Train staff on privacy risks and reporting.

Regulatory risks: keeping your summaries legal and ethical

AI summarization is now under the microscope of regulators. Failure to comply has already cost firms millions in fines and public trust. Regular audits of AI outputs, model retraining for bias, and transparent documentation are now expected.

The future: beyond summarization—toward actionable insights

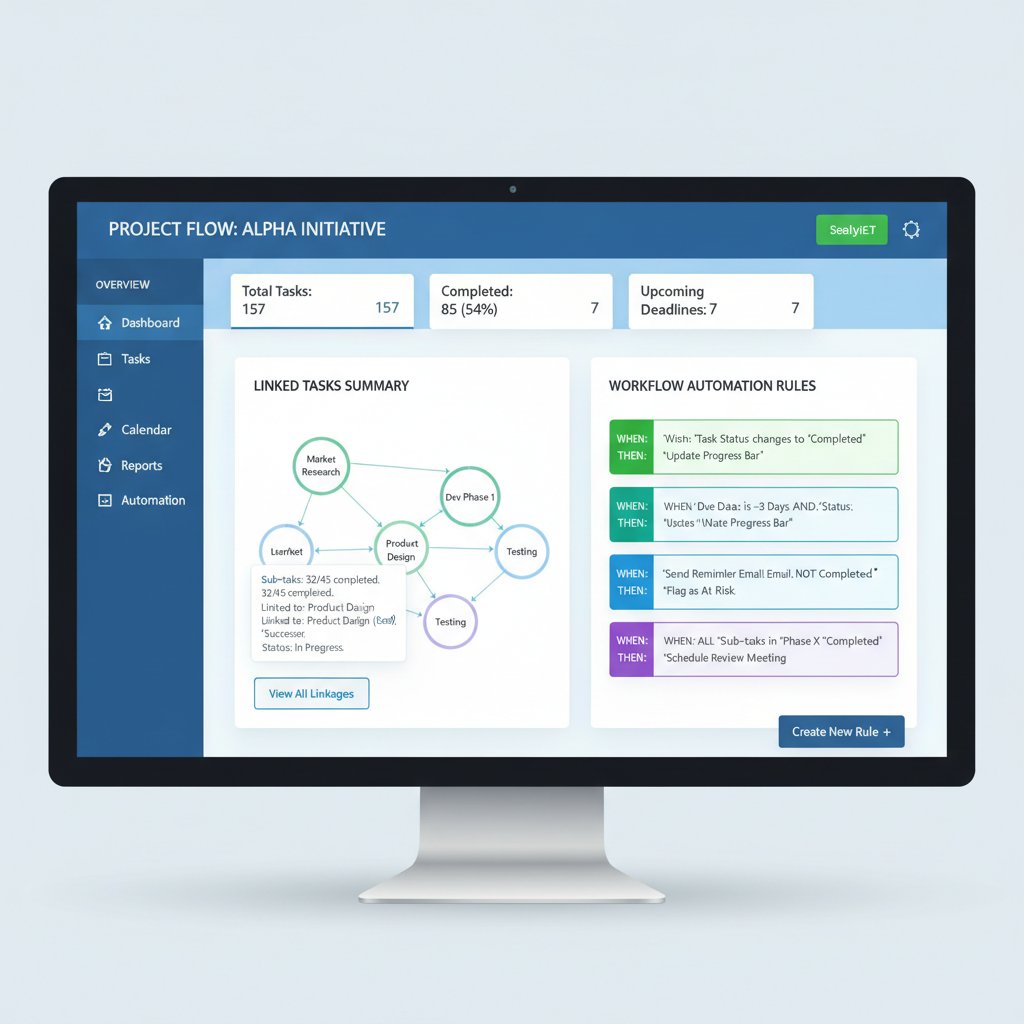

Summarization isn’t the finish line—it’s the launchpad. The next generation of tools is moving toward actionable insights: automated extraction of action items, risks, and recommended next steps, all integrated directly into dashboards and project management tools.

"Summarization is just the beginning." — Priya

That’s the secret the best teams know: the right tool, embedded in the right workflow, turns documents from dead weight into competitive advantage.

What comes after summarization? Integrating insights into your workflow

From summary to action: closing the loop

A summary is only as good as what you do with it. The new frontier is workflow integration: linking summaries to tasks, alerts, and decisions in tools like project management suites. For example, integrating a summarizer with your ticketing system can auto-create follow-ups for unresolved issues.
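As a sketch of what that integration might look like, the code below pulls follow-ups out of a summary so they can be handed to a ticketing system. It assumes the summarizer has been prompted to emit lines like `ACTION (owner): task`, which is a made-up convention for this example, and it stops short of calling any real ticketing API.

```python
import re
from dataclasses import dataclass

@dataclass
class FollowUp:
    owner: str
    task: str

# Matches lines such as "ACTION (Dana): confirm refund batch" or "TODO(Lee): ...".
# The marker format is a hypothetical convention, not a standard.
ACTION = re.compile(
    r"^\s*(?:ACTION|TODO)\s*\(([^)]+)\)\s*:\s*(.+)$",
    re.IGNORECASE | re.MULTILINE,
)

def extract_follow_ups(summary):
    """Return one FollowUp per action line found in the summary text."""
    return [FollowUp(owner.strip(), task.strip()) for owner, task in ACTION.findall(summary)]
```

Each `FollowUp` can then be mapped onto whatever fields your ticketing tool expects. Keeping extraction separate from ticket creation makes it easy to review the list before anything is filed automatically.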

Knowledge management: building a smarter organization

Summarized knowledge powers onboarding, training, and search. Imagine new hires accessing a searchable archive of concise, context-rich summaries rather than wading through raw PDFs.

| Approach | Summary-based KM system | Full-document KM system | Key tradeoff |
|---|---|---|---|
| Ease of use | High | Low | Summaries are accessible |
| Depth of content | Moderate | High | Full docs offer details |
| Searchability | High | Moderate | Summaries improve search |

Table 8: Summary-based vs. full-document knowledge management systems. Source: Original analysis based on ClickUp AI, 2025.

Measuring success: KPIs and continuous improvement

Key performance indicators (KPIs) for a summarization workflow aren’t optional; they’re essential for continuous improvement. Track time saved, error rates, user satisfaction, and impact on decisions.

Six metrics every team should track:

- Average time saved per document.

- Error rate compared to human baseline.

- User satisfaction scores post-implementation.

- Frequency of compliance issues detected.

- Number of actionable insights generated.

- Adoption rate across departments.

Feedback loops—regular reviews, user surveys, and periodic audits—are the backbone of iterative improvement.
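Three of these metrics can be computed from a simple log of processed documents. The sketch below assumes each log record carries hypothetical fields (`minutes_manual`, `minutes_with_tool`, `had_error`, `department`), so adapt the names to whatever your own tracking captures.

```python
from statistics import mean

def kpi_report(records, human_error_rate, departments_total):
    """Summarize a non-empty list of per-document log records.

    Each record is a dict with the hypothetical keys minutes_manual,
    minutes_with_tool, had_error (bool) and department.
    """
    error_rate = sum(r["had_error"] for r in records) / len(records)
    return {
        "avg_minutes_saved": mean(r["minutes_manual"] - r["minutes_with_tool"] for r in records),
        "error_rate": error_rate,
        # Positive means the tool makes more errors than the human baseline.
        "error_rate_vs_human": error_rate - human_error_rate,
        "adoption_rate": len({r["department"] for r in records}) / departments_total,
    }
```

Satisfaction scores, compliance findings, and insight counts need their own data sources, but the same pattern applies: log per document, aggregate on a schedule, and review the trend.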

Conclusion: reclaim your time and insight—your next move

Key takeaways: what you need to remember

Document summarization alternatives are no longer a luxury—they’re a necessity in the age of information overload. As we’ve seen, the mainstream tools often fail at the moments that matter most. Outsmarting generic AI requires a blend of technical savvy, workflow rigor, and critical thinking.

Seven critical lessons about document summarization alternatives:

- Speed is meaningless without accuracy.

- Context is king—preserve it at any cost.

- Human judgment is irreplaceable in high-stakes tasks.

- One-size-fits-all tools never fit anyone well.

- Workflow integration trumps isolated features.

- Data privacy is non-negotiable.

- Continuous review and feedback are your secret weapons.

Tool selection is just the start—the real edge comes from how you use, review, and adapt.

The future is hybrid: where human judgment meets machine speed

Hybrid models—combining the velocity of AI with the discernment of expert reviewers—are here to stay. The companies and professionals winning today are those who build workflows that blend both worlds. Your next move? Audit your current process, demand more from your tools, and refuse to settle for mediocrity.

"Hybrid is how you outsmart the noise." — Jordan

Where to learn more and stay ahead

Stay sharp by tapping into high-quality resources: explore industry research, join professional communities, and subscribe to newsletters that track the latest in document analysis. Platforms like textwall.ai provide advanced, reliable insights and thought leadership for professionals who refuse to cut corners. Remember, the landscape is shifting—only those who keep learning and adapting will stay ahead.

Sources

References cited in this article

- Notta’s Top Summarizers (notta.ai)

- Sembly AI: Best Summarizers 2025 (sembly.ai)

- ClickUp AI Summarizers (clickup.com)

- AILawyer: Summarizing Tools (ailawyer.pro)

- State of Docs 2025 Report (stateofdocs.com)

- Legal Summarization Survey (arxiv.org)

- Best AI Tools 2025 (medium.com)

- AISummarizers.com (aisummarizers.com)

- Illusion of Simplicity (alehhaiko.com)

- Beyond Intractability (beyondintractability.org)

- Elegant Themes: AI Summarization Tools (elegantthemes.com)

- Ideas on Stage: Hidden Cost of Bad Presentations (ideasonstage.com)

- ProjectWizards: Executive Summary Pitfalls (projectwizards.net)

- LinkedIn: NDA Breach Stakes (linkedin.com)

- PeerJ: Hybrid Summarization (peerj.com)

- ScienceDirect: Abstractive Summarization (sciencedirect.com)

- IEEE: Hybrid Approach (ieeexplore.ieee.org)

- AWS Blog: Summarization Capabilities (aws.amazon.com)

- Width.ai: BERT for Summarization (width.ai)

- ScienceDirect: Extractive Summarization (sciencedirect.com)

- SnapSight (snapsight.com)

- Speaker Deck: Manual vs AI Summarization (speakerdeck.com)

- ScienceDirect: Language Features (sciencedirect.com)

- Wikipedia: Automatic Summarization (en.wikipedia.org)

- IBM: Text Summarization (ibm.com)

- MITRE: Automated Summarization (mitre.org)

- Analytics Vidhya: Text Summarization Tools (analyticsvidhya.com)

- Undutchables: Best Summarizing Tools (undutchables.nl)

- Penfriend: Content Clustering Case Studies (penfriend.ai)

- Docupile: AI Document Clustering (docupile.com)

- Mindee: AI in Document Management (mindee.com)

- LawNext: LiquidText Collaboration (lawnext.com)

- ClickUp Blog: Annotation Software (clickup.com)

- ProofHub: Markup Tool (proofhub.com)

- Enago Read: AI Summarization Limitations (read.enago.com)

- ScienceDirect: Summarization Limitations (sciencedirect.com)

- AWS ML Blog: Summarization Techniques (aws.amazon.com)

- arXiv:2310.10570v3 (arxiv.org)

- Springer: Long-Context Models (link.springer.com)

- WorkflowAutomation.net: Workflow Integration (workflowautomation.net)

- Digital Project Manager: Workflow Integration (thedigitalprojectmanager.com)

- Budibase: Workflow Integration (budibase.com)
