Document Summarization API As Your Edge in the Data Flood

In an era when the volume of information is growing faster than we can blink, the promise of a document summarization API sounds almost suspiciously good. Imagine distilling a hundred-page report into a handful of sentences, slashing hours of tedium while—supposedly—retaining all the essentials. Yet, beneath that shimmering surface lurk hard truths and inconvenient realities. The world is hooked on speed, but the reality of automated document analysis is more nuanced, more thrilling, and—occasionally—more treacherous than most realize. This isn’t just tech hype or another vaporware promise. Document summarization APIs are reshaping knowledge work, disrupting age-old bottlenecks, and, when used right, can make the difference between drowning in data and riding the information wave with swagger. Here’s the untold story: the myths, the machine’s dark side, the hacks, and the secrets to actually winning with this technology—before your competition even knows what hit them.

Information overload: Why document summarization API matters now more than ever

The crisis of too much information

Modern professionals are engaged in a daily battle against an endless stream of reports, emails, articles, and whitepapers. According to ShareFile’s 2025 workplace study, workers now spend an average of 3.6 hours every day just searching for information—not actually using it (ShareFile, 2025). This isn’t just an inconvenience; it’s an outright productivity killer, slicing into focus, creativity, and decision-making. The deluge shows no sign of slowing, as the proliferation of digital content accelerates across sectors. Whether you’re a corporate analyst buried under quarterly reports or a lawyer wading through compliance updates, the cognitive overload is real, and the consequences echo in burnout, errors, and competitive drag.

This isn’t a trend confined to the boardroom. Researchers, journalists, and even students are buckling under the weight of information abundance. The irony is sharp: technology promised to make information easy to access, but instead, the glut has morphed into a new form of digital chaos. Enter the document summarization API—a tool that’s become a lifeline for knowledge workers desperate for clarity amid the noise.

API-powered summarization as a survival tool

The rise of document summarization APIs isn’t just a story of technical innovation—it’s a survival response to modern chaos. These APIs don’t merely automate reading; they weaponize it. By seamlessly extracting the most relevant sentences or phrases from sprawling documents, APIs transform hours of reading into seconds of insight. As Maya, a seasoned NLP engineer, puts it:

“If you’re not automating your reading, you’re already behind.”

— Maya, NLP engineer

APIs have moved from experimental curiosities to essential business infrastructure, embedded in tools for market research, legal review, and academic literature review. Their integration into modern pipelines reflects a deeper shift: from reactive data consumption to proactive information mastery. The savvy aren’t just using these tools—they’re building their edge on them.

What most people get wrong about document summarization

Despite the buzz, most people fundamentally misunderstand document summarization APIs. The biggest myth? That all APIs are interchangeable, delivering generic, reliable summaries regardless of context or input. This couldn’t be further from the truth. The reality is that the quality, methodology, and results vary wildly between providers and architectures. A hastily chosen API can just as easily bury key details as surface them.

Hidden benefits of document summarization API experts won't tell you:

- Precision targeting: Advanced APIs can filter out irrelevant sections—boilerplate, disclaimers, and noise—surfacing only actionable content.

- Multimodal processing: Some APIs now handle not just text, but also audio and image-based documents, broadening their application from meeting transcripts to scanned contracts.

- Customization: Domain-specific models can be fine-tuned to recognize jargon, regulatory language, or business KPIs, delivering summaries that actually make sense in context.

- Time to insight: When properly configured, APIs deliver results in seconds, not hours—shaving days off high-stakes workflows.

- Reduced bias: Unlike manual summarizers, the best APIs apply consistent logic, minimizing the risk of selective human filtering.

These strengths, however, are matched by pitfalls for the unwary—a theme to which we’ll return.

The evolution of document summarization: From manual slog to machine speed

A brief history of summarization technology

Summarization didn’t start with silicon. For centuries, it was the domain of overworked interns, junior lawyers, and graduate students, tasked with distilling dense material for their superiors. The first wave of automation arrived with simple extractive algorithms: frequency analysis, keyword spotting, and sentence ranking. But these rule-based systems were crude, often missing nuance and context.

The real inflection point came with neural networks and, most recently, with Large Language Models (LLMs) like GPT-4 and its competitors. These sophisticated models don’t just slice and dice—they “understand,” paraphrase, and even reinterpret content, making summarization not just faster but dramatically smarter.

| Year | Technology | Impact on Summarization |

|---|---|---|

| Pre-2000s | Manual summarization | Human-driven, context-rich but slow and inconsistent |

| 2000-2010 | Extractive algorithms (e.g., TF-IDF) | Fast, but lacked nuance and often missed context |

| 2010-2018 | Neural networks (Seq2Seq) | Improved sentence relevance, still error-prone |

| 2018-2021 | Transformer models (BERT, T5) | Enhanced context awareness and accuracy |

| 2021-present | LLM APIs (GPT-3/4, proprietary) | Abstractive, contextual, capable of domain adaptation |

Table 1: Timeline of document summarization API evolution. Source: Original analysis based on MeaningCloud, 2023, Width.ai, 2023, Azure AI, 2024

Extractive vs. abstractive: The battle for better summaries

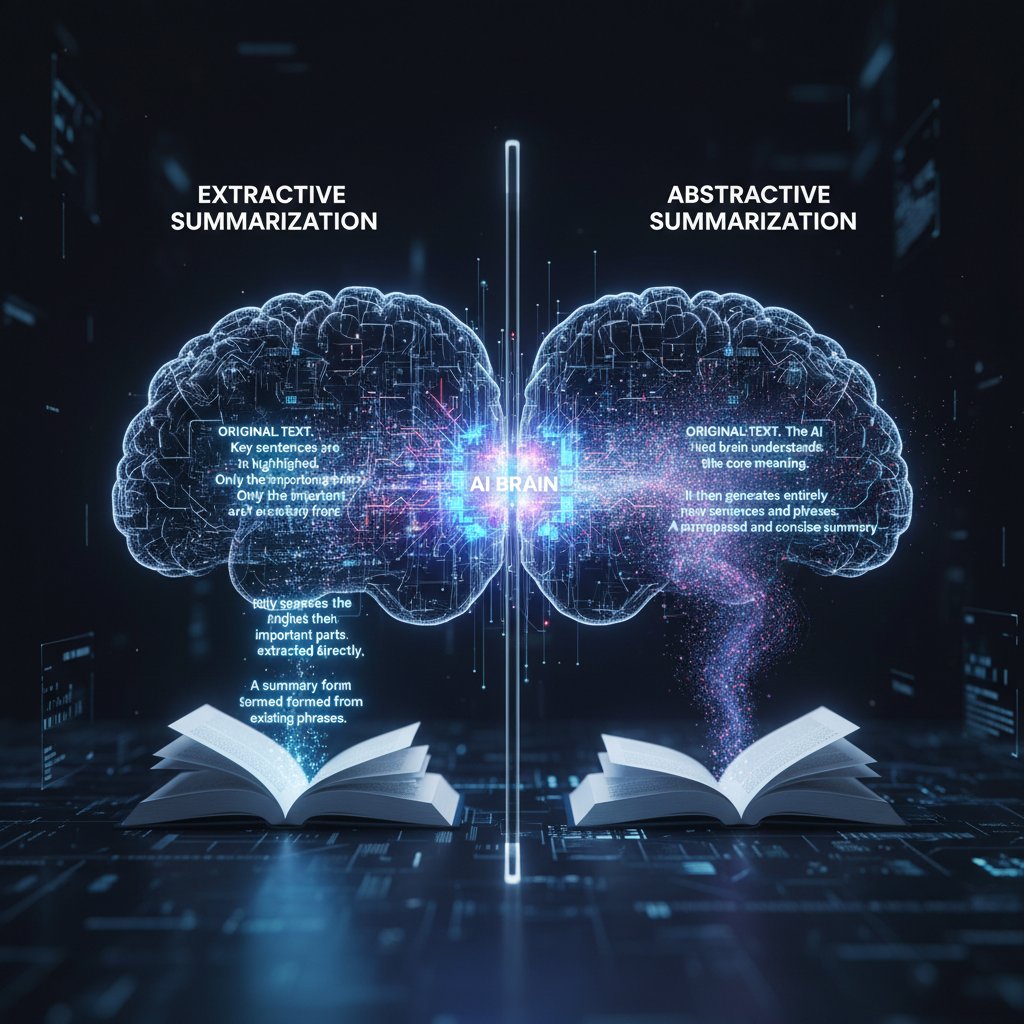

At the heart of every summarization API lies a philosophical—and technical—showdown: extractive versus abstractive summarization.

Extractive models identify and pull key sentences verbatim from the source text, ensuring that summaries remain “faithful” but sometimes robotic. Abstractive models, in contrast, generate entirely new sentences, paraphrasing the original meaning—offering the potential for greater clarity, but at the risk of factual drift or hallucination.

Both approaches have their place. Extractive summarization is brutally efficient for compliance reviews, legal audits, or any setting where verbatim accuracy is non-negotiable. Abstractive models shine in fields like journalism or executive briefings, where readability and narrative flow matter as much as fidelity.

The best APIs offer hybrid approaches, blending extraction for factual reliability with abstraction for accessibility. But merely choosing a method isn’t enough—input quality, domain complexity, and model tuning are all make-or-break factors.

Why LLMs changed the game

LLMs didn’t just speed up summarization—they fundamentally changed its character. By training on vast swathes of internet content, academic papers, and technical manuals, modern LLMs can “read between the lines,” identifying not only what’s said but what’s implied. The result? Summaries that capture tone, nuance, and context, not just keywords.

As Alex, an AI product lead, aptly notes:

“LLMs don’t just condense—they reinterpret.”

— Alex, AI product lead

For document-heavy verticals like law, academia, and market analysis, LLM-driven APIs like those from textwall.ai are delivering a quantum leap in actionable insight. But this newfound power comes with new responsibilities—chief among them, vetting for hallucinations, bias, and domain drift.

How document summarization APIs work: Under the hood of automated insight

Breaking down the API pipeline

The magic of a document summarization API isn’t magic at all—it’s a carefully orchestrated series of technical steps. When a user submits a document—be it text, a URL, or even an image—the system first ingests the input, cleans it, and runs it through a process called tokenization, chopping the content into machine-readable chunks.

Next, the core model (often a transformer-based LLM) analyzes these tokens, drawing on its training to infer meaning, structure, and salience. The model then generates the summary—either by selecting key sentences (extractive) or by drafting new ones (abstractive). The output passes through formatting and validation steps before being delivered via API response.

The pipeline’s efficiency and reliability depend on a host of factors: the underlying model, the preprocessing routines, and—crucially—the specificity of the summary prompt or configuration.

Accuracy benchmarks: Can you trust the output?

With so much at stake, users rightly ask: are API-generated summaries trustworthy? Industry benchmarks typically use metrics like ROUGE (Recall-Oriented Understudy for Gisting Evaluation) to compare summaries to human-generated gold standards. Yet, these scores only tell part of the story.

| API Provider | ROUGE-L Score | User Satisfaction (%) | Real-World Context Suitability |

|---|---|---|---|

| textwall.ai | 0.52 | 92 | High (multi-domain support) |

| Azure AI | 0.47 | 89 | High (business, legal) |

| SummarizeBot | 0.43 | 81 | Moderate (general use) |

| MeaningCloud | 0.41 | 78 | Moderate (news, web) |

Table 2: Current accuracy and satisfaction benchmarks for leading document summarization APIs. Source: Original analysis based on MeaningCloud, 2023, Azure AI, 2024

User satisfaction, as measured in recent surveys, tracks closely with the API’s ability to deliver contextually relevant and concise summaries. But no tool is infallible—edge cases, technical jargon, and poorly formatted inputs can all torpedo output quality.

Common limitations (and how to hack around them)

Even the best document summarization APIs grapple with core limitations: hallucinations (AI inventing details), context loss on very large documents, and struggles with highly specialized language.

Here’s how to hack around them:

- Pre-clean your input: Remove headers, footers, and irrelevant sections before submitting documents.

- Chunk long documents: Break massive files into logical sections for separate summarization.

- Specify your prompt: Use detailed, domain-specific instructions if the API allows.

- Review and edit: Treat the summary as a draft—spot-check against the original for critical tasks.

- Feedback loops: Where possible, use APIs that allow human corrections to retrain or fine-tune future results.

These workarounds won’t turn a bad API into a good one, but they’ll help squeeze the most out of any platform.

Use cases you didn't see coming: Real-world applications that break the mold

Beyond the obvious: Unconventional industries using APIs

The impact of document summarization APIs isn’t limited to tech giants and white-collar offices. In fact, some of the most creative use cases are cropping up in sectors nobody predicted.

- Legal due diligence: Law firms are deploying APIs for initial review of discovery documents and compliance reports, cutting review cycles in half.

- Investigative journalism: Newsrooms use summarization to sift through FOIA data dumps, surfacing leads that would otherwise remain buried.

- Intelligence analysis: National security teams leverage APIs to triage intercepted communications and open-source intelligence, sharpening their focus.

- Healthcare administration: Hospitals summarize patient records for rapid intake and billing—boosting efficiency and reducing error rates.

- Nonprofits and advocacy: NGOs parse grant proposals and impact reports, enabling faster, data-driven funding decisions.

Unconventional uses for document summarization API:

- Summarizing audio transcripts from recorded meetings in real-time.

- Analyzing and condensing technical manuals for field technicians.

- Processing large-scale survey responses for sentiment and trend extraction.

- Extracting highlights from social media monitoring reports.

The lesson? The more complex and sprawling your data, the bigger the payoff from a robust API.

Case study: How a newsroom killed the daily grind with AI

Picture this: A bustling newsroom, midnight deadline looming, dozens of field reports and wire stories landing every minute. Editors are burning out and mistakes creep in. Enter a document summarization API, seamlessly integrated into the editorial workflow. Field reports are instantly condensed into actionable briefs, freeing editors to focus on angles and fact-checking, not mindless reading.

The result? Productivity soars, burnout drops, and, most importantly, stories get sharper, faster. The API doesn’t replace editorial judgment—it amplifies it, letting humans do what they do best: ask questions, chase nuance, and craft narratives.

Unexpected wins (and ugly failures)

Of course, not every API story is a victory march. There are well-documented cases of disastrous rollouts—summaries that omitted critical disclaimers, misinterpreted legal terms, or hallucinated events that never happened.

| Outcome | Key Features Present | Key Features Missing | Result |

|---|---|---|---|

| Success | Domain adaptation, human-in-loop, pre-processed input | N/A | High-quality, actionable summaries, minimal errors |

| Failure | Basic extractive only, no domain tuning, raw input | Human review, context awareness | Key details missed, factual errors, mistrust |

Table 3: Feature matrix comparing successful and failed API implementations. Source: Original analysis based on user testimonials and industry case studies

The takeaway? APIs are powerful accelerators, but only when wielded with expertise and skepticism.

Cost, risk, and the data privacy minefield: What no one tells you

The real price tag (it's not just dollars)

Adopting a document summarization API isn’t just a line item in your IT budget. Hidden costs lurk everywhere: the time spent training models on your specific data, the expense of customizing workflows, and the risk of vendor lock-in if you choose a proprietary system. And let’s not ignore the human cost—misplaced trust in an API can mean missed insights or regulatory exposure.

| Cost Factor | Manual Summarization | Automated API Summarization |

|---|---|---|

| Direct labor | High | Low |

| Setup/training | Low | Moderate (fine-tuning, integration) |

| Ongoing maintenance | Moderate | Moderate |

| Customization | Manual (painful) | Easier (if API supports) |

| Error/misinterpretation | Human bias | Model hallucination |

| Scalability | Poor | Excellent |

Table 4: Cost-benefit analysis of manual vs. automated summarization. Source: Original analysis based on [ShareFile, 2025], Azure AI, 2024

Data privacy and security: Where trust is won or lost

Nothing will tank your reputation faster than a data leak. The best APIs encrypt data in transit and at rest, offer granular access controls, and commit to rigorous compliance (GDPR, HIPAA, etc.). But not all vendors play by these rules. One misstep, and sensitive material could end up on a public server—or worse, in a competitor’s hands.

“One leak is all it takes to destroy trust.”

— Chris, CTO

The only safe bet is ruthless due diligence. Demand clear documentation, transparent security practices, and explicit data retention policies before integrating any API into your workflow.

How to spot red flags in API providers

Not all APIs are created equal, and some vendors are more interested in your credit card than your security.

Red flags to watch out for when choosing a document summarization API:

- Opaque pricing: If you can’t get a straight answer about costs, run.

- Vague security claims: “Military-grade encryption” means nothing without specifics.

- No compliance certifications: Legit APIs proudly display GDPR, HIPAA, or SOC2 badges.

- No human support: If you hit a wall, will anyone answer?

- Black-box models: If the vendor can’t explain how summaries are generated, you’re risking errors you can’t audit.

Ask pointed questions and demand evidence before signing any contract.

Choosing the right document summarization API: Beyond the marketing hype

Critical features to demand (and what to ignore)

The API market is awash in buzzwords and feature lists that sound impressive—until you dig deeper. The must-haves:

- Customizable summaries: Can you tweak length, focus, or format?

- Multi-format support: Text, PDF, images, and more.

- Language coverage: Essential for global teams.

- Domain adaptation: Does it understand your industry’s jargon?

- Transparent metrics: ROUGE scores, user satisfaction data.

Ignore: Gimmicky dashboards, “AI-powered” labels without substance, and features you’ll never need.

Key terms and concepts in document summarization APIs:

- Tokenization: The process of breaking input text into smaller pieces for analysis.

- Extractive summarization: Selecting sentences directly from the original text.

- Abstractive summarization: Generating new sentences that paraphrase the core content.

- Model hallucination: When an AI invents facts not present in the source material.

- ROUGE score: A metric comparing generated summaries to human-written ones.

- Fine-tuning: Customizing a model on a specific dataset for higher accuracy.

Checklist: What to test before you commit

Practical due diligence can save you from months of headaches.

- Feed domain-specific documents: Does the API handle your jargon?

- Test edge cases: Throw in messy, poorly formatted files—what happens?

- Check multi-language support: If relevant, does it work reliably outside English?

- Measure speed and scalability: Can it handle your volume without breaking?

- Evaluate API documentation: Is it clear, thorough, and up-to-date?

- Review security disclosures: Are encryption, retention, and access policies explicit?

- Solicit user feedback: Ask real users about bugs and pain points.

Priority checklist for document summarization API implementation:

- Test with real, messy data.

- Evaluate summary accuracy and relevance.

- Stress-test for scalability.

- Vet security and compliance.

- Insist on transparent support channels.

When NOT to use an API (the uncomfortable truth)

Sometimes, the best decision is to skip automation altogether. If your documents demand 100% context preservation, involve subtle legal or ethical nuance, or are mission-critical for regulatory compliance, even the best API might fall short.

In such cases, human review isn’t a luxury—it’s a necessity. Use APIs as accelerators, not replacements, when stakes are high.

Integration and workflow: Making APIs work for your real-world mess

Plug-and-play vs. custom builds: What fits your stack?

The API world splits into two camps: plug-and-play solutions with slick dashboards and rapid onboarding, or custom integrations that demand engineering muscle but offer tailored results. Plug-and-play is king for small teams who need quick wins and low technical barriers. Custom builds, meanwhile, let enterprises weave summarization directly into their platforms, automate workflows, and build deep analytics.

Choosing between the two isn’t just about budget—it’s about culture, scale, and how much control you crave.

Hybrid human-AI workflows: The future of summarization

The smartest teams aren’t replacing humans—they’re augmenting them. Hybrid workflows let AI do the donkey work: scanning, condensing, and highlighting. Humans step in where nuance, judgment, and sensitivity are non-negotiable.

“The smartest teams let AI do the grunt work, then perfect the results.”

— Priya, product manager

This approach minimizes both cost and risk, creating a feedback loop where human corrections improve future AI output. It’s the cutting edge of knowledge work—fast, flexible, and resilient.

Common mistakes and how to avoid them

Integration is where theory meets reality—and where many projects stumble.

7 common mistakes when integrating document summarization APIs:

- Ignoring input quality: Garbage in, garbage out.

- Underestimating fine-tuning needs: Out-of-the-box models rarely excel in niche domains.

- Skipping human review: Trusting AI blindly is a rookie move.

- Neglecting security: API keys on public repositories? Game over.

- Poor error handling: What happens when the API fails?

- Lack of documentation: If no one knows how it works, no one can fix it.

- No feedback loop: Without user input, models stagnate.

Avoid these, and you’ll be ahead of most.

The big myths: What everyone gets wrong about document summarization APIs

Mythbusting: Separating fact from fiction

For all the hype, document summarization APIs remain thick with misconceptions.

Common myths about document summarization APIs:

Reality: Even top-tier models hallucinate or miss key points, especially in niche domains.

Reality: Domain adaptation is crucial. Generic APIs often fail with technical, legal, or scientific content.

Reality: The best results come from hybrid workflows that combine machine speed with human nuance.

Reality: Customization, integration, and testing are frequently mandatory for meaningful results.

Reality: Model training data can encode subtle, systemic biases.

Bias, hallucinations, and the limits of machine understanding

The dirty secret of AI summarization? It’s only as objective as its training data—and even then, it can make things up. Bias creeps in when models are trained on imperfect or unrepresentative data, while hallucinations emerge when AIs try to “fill in” gaps they don’t fully understand.

These failures aren’t just technical glitches—they have real-world impacts, from misinterpreted legalese to dangerous medical oversights. Combating them requires vigilance, feedback, and transparent evaluation.

Transparency and explainability: Why you should care

Demand for “explainable AI” isn’t just academic—it’s a regulatory and business imperative. Especially in regulated sectors, you need to know not just what the API spits out, but why.

Questions to ask about API transparency:

- What training data underpins the model?

- Can you trace which sentences informed the summary?

- Are errors and corrections logged for audit?

- How often is the model updated?

- Do user corrections feed back into model improvement?

If the vendor can’t answer, reconsider your choice.

The future of document analysis: Where do we go from here?

Trends shaping the next generation of summarization APIs

The current wave of APIs is powerful, but new currents are swirling. Multimodal summarization—combining text, audio, video, and images—is already a reality in some platforms. Real-time streaming summarization is reshaping meeting platforms and newsrooms. Domain-specific models are raising accuracy ceilings for law, science, and finance.

These advances aren’t just incremental—they’re redefining who can access, use, and benefit from the world’s information surplus.

How hybrid approaches are redefining what's possible

The future isn’t AI versus human—it’s AI plus human. Hybrid, human-in-the-loop systems are setting new standards for accuracy, reliability, and trust. Platforms like textwall.ai are pushing the field forward by blending machine speed with human oversight, supporting workflows as unique as the organizations using them.

Hybrid approaches allow for domain experts to correct AI errors, continually improving accuracy and tailoring summaries to evolving needs—a game-changer for compliance, research, and business intelligence.

Are we ready for autonomous document understanding?

Society is only beginning to reckon with the implications of fully autonomous document analysis. Automated summarization democratizes access to knowledge but raises questions about oversight, privacy, and systemic bias.

Societal impacts of widespread AI-driven document summarization:

- Democratization of expertise: Non-experts can glean insights from complex documents, narrowing knowledge gaps.

- Potential for misinformation: Hallucinated or biased summaries can propagate errors quickly.

- Efficiency versus oversight: Time saved may come at the cost of missed nuance or critical context.

- Changing roles: Knowledge workers must adapt—AI handles the “grunt work,” but human judgment is more valuable than ever.

The question isn’t whether we can automate understanding—but whether we’re prepared to manage the consequences.

Deep-dive: Adjacent technologies and what's next

Beyond summarization: Extraction, classification, and actionable insights

Today’s APIs don’t stop at summaries. Cutting-edge platforms offer structured information extraction—names, entities, relationships—and even make recommendations or classify content by topic, urgency, or sentiment.

| API Type | Summary Generation | Structured Extraction | Topic Classification |

|---|---|---|---|

| Summarization API | Yes | Limited | No |

| Extraction API | No | Yes | Partial |

| Classification API | No | No | Yes |

| TextWall.ai (Hybrid) | Yes | Yes | Yes |

Table 5: Feature comparison of document summarization, extraction, and classification APIs. Source: Original analysis based on SummarizeBot, 2024, textwall.ai

The synthesis of these tools is where real competitive advantage lives.

AI explainability and auditability: The new frontier

As regulation tightens, pressure is mounting for APIs to offer transparency, audit trails, and explainable outputs. The best systems now log every step, from input preprocessing to summary selection, letting users retrace the API’s logic and catch errors before they metastasize.

Platforms that can’t provide this level of transparency risk irrelevance—or worse, legal pitfalls.

What does the future hold for human expertise?

Despite the hype, AI hasn’t killed expertise—if anything, it’s made it scarcer and more precious. The human edge is contextual awareness, critical judgment, and creative intuition. As Jordan, an analyst, observes:

“The human edge is context. Machines still need us.”

— Jordan, analyst

The best teams won’t replace knowledge workers, but will empower them—using APIs to handle the slog, and reserving human firepower for what matters most.

Conclusion: Rethinking how we consume and act on information

Key takeaways for the next-gen knowledge worker

The document summarization API is neither a panacea nor a passing fad. It’s a force multiplier—when wielded with expertise, skepticism, and critical engagement. The winners aren’t those who adopt first, but those who adopt smart.

7 actionable takeaways for leveraging document summarization APIs effectively:

- Always vet API summaries against the original for critical use cases.

- Invest in domain adaptation for complex or specialized documents.

- Prioritize APIs with strong transparency and explainability features.

- Use human-in-the-loop workflows for high-stakes decisions.

- Regularly review and audit outputs for bias and hallucination.

- Protect sensitive data—security is non-negotiable.

- Stay informed about API advances and iterate your workflows accordingly.

These aren’t just technical points—they’re the new table stakes for knowledge work.

The challenge: Will you master the flood or drown in data?

The information flood isn’t receding. The question is: will you ride the wave, or get pulled under? The document summarization API is your surfboard—a powerful tool, but useless if you don’t learn to use it with skill, skepticism, and strategy. The next move is yours.

Sources

References cited in this article

- MeaningCloud(meaningcloud.com)

- SummarizeBot API(summarizebot.com)

- Azure AI Summarization(learn.microsoft.com)

- Width.ai(width.ai)

- Eden AI(edenai.co)

- Ecopier Solutions(ecopiersolutions.com)

- Azure AI(learn.microsoft.com)

- ShareFile(sharefile.com)

- arXiv Survey 2024(arxiv.org)

- SERP AI(serp.ai)

- Springer Review(link.springer.com)

- Summarize.do API(summarize.do)

- RedHatInsights/wordmill(github.com)

- ApyHub(apyhub.com)

- Azure AI Limitations(learn.microsoft.com)

- Codesphere(codesphere.com)

- Medium: Text Summarization APIs(medium.com)

- Summarize.do Blog(summarize.do)

- Epiq Global(epiqglobal.com)

- Medium: LangChain(medium.com)

- TechTarget(techtarget.com)

- Journalists.org: Djinn case study(journalists.org)

- Addepto: OpenAI Summarization(addepto.com)

- Addepto: Privacy(addepto.com)

- arXiv Privacy Risks(arxiv.org)

- AWS ML Blog(aws.amazon.com)

- Apidog API Testing Checklist(apidog.com)

- FrugalTesting(frugaltesting.com)

- RapidAPI(rapidapi.com)

- Hugging Face(huggingface.co)

- aiPDF.ai(blog.aipdf.ai)

- Sembly AI(sembly.ai)

- Summarize.do Tips(summarize.do)

- Rephrase.info(rephrase.info)

Ready to Master Your Documents?

Join professionals who've transformed document analysis with TextWall.ai

More Articles

Discover more topics from Advanced document analysis

Document Summarization AI Is Now Deciding What You Read

We live in the age of infinite scroll, endless Slack threads, and inboxes that never sleep. While information was once a currency, today it’s an avalanche

Document Structure Recognition Is Your Real AI Leverage Layer

Chaos is the real villain in the modern organization’s story—a shapeshifter hiding in billions of PDFs, emails, invoices, and contracts. Document structure

Document Storage Solutions When One Lost File Can Sink You

Document storage solutions for 2026—stop losing time, money, and sanity. Discover the hidden traps and bold strategies. Take control before chaos wins.

Document Storage Management That Prevents Your Next Data Disaster

You fancy your digital fortress is airtight, your archives are ironclad, and that chaos is something that only happens to the unprepared. But here’s the first

Document Similarity Analysis When Trust Is on the Line

Document similarity analysis is changing how we compare, trust, and secure text. Learn what really works, the myths, and what’s next. Read before you misjudge your docs.

Document Security Analysis That Actually Stops Real-World Breaches

Unmask the real risks, hidden flaws, and actionable solutions to protect your data now. Discover what most experts won’t say.

Document Search Optimization in 2026: Stop Losing Work in Plain Sight

Document search optimization is broken. Discover the 9 truths sabotaging your workflow—and the breakthrough strategies you need in 2026. Don’t fall behind. Read now.

Document Scanning Technology in 2026: Asset or Liability?

If you still think document scanning technology is just a “nice-to-have” for your business, you’re living in a paper-fueled fever dream. In 2025, the stakes

Document Scanning Solutions Comparison That Exposes 2026’s Real ROI

Discover 2026’s hard truths, hidden costs, and game-changing features. Get the real story before you make your next move.