Document Summarization and Categorization When the Stakes Are Real

Information overload isn’t just an annoying side effect of living in the digital age—it’s the silent killer of modern productivity. Every day, knowledge workers, researchers, and analysts find themselves buried under a relentless avalanche of reports, emails, legal filings, and research papers. The promise of digital transformation was clarity and speed, but for many, it’s delivered chaos and paralysis. Document summarization and categorization—once considered the miracle cure—now reveal their own flaws and dangers. In 2025, the stakes are higher than ever: missing a crucial data point or misclassifying a critical contract isn’t just embarrassing—it can cost millions or sink careers. This is where the brutal truths come out, and where this guide gives you the playbook to not just survive, but dominate the new information battlefield. This isn’t another fluffy rundown of AI buzzwords or empty productivity hacks. We’ll expose the real challenges, dissect breakthrough strategies, and arm you with actionable steps for mastering document summarization and categorization in the era of information warfare.

Why document overload is killing productivity (and sanity)

The rise of information chaos

The growth in digital documentation is exponential and, at times, feels almost malicious. According to a 2023 Adobe Acrobat survey, 48% of employees struggle to find documents quickly, and 47% say their organization’s filing systems are confusing. That turns the simple act of searching for a file into a productivity death spiral. The psychological impact is real: workers report feeling perpetually “behind,” with inboxes overflowing and task lists ballooning (Adobe Acrobat, 2023). Information chaos isn’t just a buzzword; it’s the modern condition.

"Most days, it feels like drowning in white noise."

— Jamie, corporate analyst (composite quote grounded in research)

The business impact of this chaos is staggering. TeamStage’s 2024 report found that work overload reduces productivity by a jaw-dropping 68%. Hours are lost not just to reading, but to the futile hunt for that one elusive email thread or misfiled contract. The hidden cost? Decision fatigue, missed opportunities, and, in extreme cases, burnout. Information overload is now recognized as a major threat to mental well-being and effective decision-making in the workplace (LinkedIn, 2024). The enemy is internal, and it’s multiplying every day.

The myth of the perfect summary

Here’s a truth most tech vendors won’t admit: not every summary captures the nuance, intent, or subtext of the original document. The myth of the perfect summary persists because it’s seductive. Who wouldn’t want a neat, digestible synopsis of every 50-page legal brief or research report? But context, tone, and subtle signals are often lost in translation, whether through human or automated means.

- Human reviewers can spot sarcasm, intent, or red flags that algorithms miss.

- Human oversight helps catch summaries that drop small but critical details, such as a deadline or a dollar threshold.

- Human reviewers notice when a summary omits uncomfortable truths or glosses over risk.

- Human-in-the-loop systems can adapt and correct as new patterns and exceptions emerge.

Yet, even the most attentive human reviewers fall prey to fatigue, bias, or simple error—especially at scale. Meanwhile, AI-powered summarization promises speed and consistency, but often at the expense of nuance. Automation excels at pattern recognition and speed, but context and judgment? That’s still largely the domain of the human mind.

How categorization shapes what you see (and miss)

Categorization isn’t neutral. AI-powered sorting systems decide what’s important enough to be surfaced and what gets buried. The risk? Biased or incomplete categorization leading to critical information being overlooked. For example, if a merger clause is wrongly tagged as “miscellaneous,” the decision-maker may never see it until it’s too late. The psychological trap here is misplaced trust: once a document is sorted, it’s easy to assume nothing important has been missed—until the consequences bite.

| Categorization Method | Accuracy (Business Docs) | Accuracy (Legal Docs) | Typical Failure Modes |
|---|---|---|---|
| Human Review | 92% | 94% | Fatigue, inconsistency, bias |
| Traditional AI (Pre-2020) | 77% | 74% | Missed context, rigid categories |
| Modern LLM-based AI (2025) | 89% | 91% | Subtle context loss, overfitting |

Table 1: Comparison of human vs. AI categorization in real-world scenarios

Source: Original analysis based on TeamStage, 2024 and Industry Reports

The more you trust invisible systems to do your sorting, the more likely you are to fall into a false sense of security. Studies on cognitive offloading show that when people “delegate” to tech, they become less vigilant and more error-prone. This isn’t a condemnation of automation, but a warning: every automated category is a filter, and every filter can fail.
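One way to keep a filter honest is to audit it: draw a sample of auto-categorized documents, have a reviewer label them, and measure agreement per category. The sketch below assumes you already have those (system label, reviewer label) pairs. The function name, category names, and sample data are hypothetical.

```python
from collections import Counter, defaultdict

def audit_categories(pairs):
    """Compare auto-assigned categories with reviewer labels.

    pairs: list of (predicted, reviewed) category strings.
    Returns overall accuracy, per-category recall (share of each reviewed
    category the system got right), and where misfiled documents landed.
    """
    if not pairs:
        return 0.0, {}, {}
    correct = sum(1 for pred, gold in pairs if pred == gold)
    overall = correct / len(pairs)

    totals = Counter(gold for _, gold in pairs)
    hits = Counter(gold for pred, gold in pairs if pred == gold)
    recall = {cat: hits[cat] / totals[cat] for cat in totals}

    # A large count under a catch-all label such as "miscellaneous" is the
    # pattern that buries merger clauses and risky covenants.
    misfiled_into = defaultdict(Counter)
    for pred, gold in pairs:
        if pred != gold:
            misfiled_into[gold][pred] += 1
    return overall, recall, misfiled_into


# Hypothetical audit sample: (system label, reviewer label)
sample = [
    ("contract", "contract"),
    ("miscellaneous", "merger_clause"),
    ("merger_clause", "merger_clause"),
    ("miscellaneous", "merger_clause"),
    ("invoice", "invoice"),
]
overall, recall, misfiled = audit_categories(sample)
print(overall)                            # 0.6
print(round(recall["merger_clause"], 2))  # 0.33
print(dict(misfiled["merger_clause"]))    # {'miscellaneous': 2}
```

A respectable overall accuracy (60% here) can hide a category the system almost never gets right, which is why per-category recall is worth computing separately.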

The new stakes: what happens when you get it wrong

Mess up a summary or misclassify a document, and the fallout can be brutal. Imagine a financial analyst missing a single risky covenant because it was buried under “general terms.” Or a journalist relying on an overly optimistic summary, missing the one damning paragraph that would have changed the story. Mis-summarization can derail projects, ruin deals, and even trigger legal consequences.

"One bad summary can derail a million-dollar deal."

— Alex, legal consultant (composite quote reflecting industry consensus)

With so much on the line in 2025, shortcuts aren’t just risky—they’re reckless. The next section peels back the curtain on how we got here, and why the problem is as much historical as it is technological.

A brief, brutal history of document analysis

From medieval scribes to algorithmic overlords

The history of document analysis is a journey from painstaking human labor to algorithmic automation. Medieval scribes labored for hours over illuminated manuscripts, acting as both human photocopiers and editors. With the industrial revolution came mass printing and, eventually, the rise of typewriters and photocopiers—each step reducing the friction of information transfer, but also multiplying the volume of content to be managed.

The 1980s saw a surge in OCR (Optical Character Recognition) tools and rudimentary keyword-based search. The early 2000s brought text mining and basic rule-based summarization. But the real turning point arrived with machine learning, and, more recently, colossal leaps in large language models (LLMs). Each technological leap promised greater speed, but also introduced new risks—automated errors on an unprecedented scale.

The path from scribe to silicon overlord is littered with both breakthroughs and blunders, forever shifting the burden of information management between human skill and technological might.

The AI explosion: what changed in the last five years

The last half-decade has been explosive for document analysis. Transformer-based language models such as BERT and GPT rewrote the rules, moving summarization away from brittle, rule-based systems toward engines that handle nuance, intent, and even sentiment far better than their predecessors. Transformer-based categorization made it practical to sort very large document collections at speeds manual review cannot approach.

- 2020 – Transformer-based models (first introduced in 2017) become the mainstream approach to natural language processing.

- 2021 – Wide-scale deployment of extractive and abstractive summarization in enterprise tools.

- 2022 – Human-in-the-loop AI systems gain traction, blending judgment with automation.

- 2023 – Surge in open-source and API-based document analysis platforms.

- 2024 – Integration of multimodal (text, image, audio) summarization capabilities.

As users acclimated, expectations soared. According to Business.com, 2024, users now expect not just speed, but near-perfect accuracy and context sensitivity. The bar has risen, but so have the pitfalls.

When machines get it wrong: lessons from notorious failures

Even the best AI stumbles. One notorious example: a global law firm that adopted an AI summarization tool for contract review, only to discover—after a costly arbitration—that key liability clauses were consistently omitted from summaries. In journalism, automated news digest tools have been caught downplaying negative events due to biased training data, leading to public outcry and retractions.

| Case Study | Successes | Failures |
|---|---|---|
| Global Law Firm (2023) | Rapid contract scanning | Missed liability clauses (costly legal error) |
| Financial News Platform (2022) | Faster news aggregation | Downplayed negative news (biased output) |
| Healthcare Provider (2024) | Quicker patient record triage | Misclassified rare conditions (risk to patients) |
| Academic Library (2023) | Accelerated literature review | Omitted minority-language papers (loss of context) |

Table 2: High-profile case studies—successes vs. failures in automated summarization

Source: Original analysis based on Business.com, 2024

The lesson? Rushing to automate without understanding the trade-offs is a recipe for disaster. The industry is learning, sometimes painfully, that both speed and scrutiny are non-negotiable.

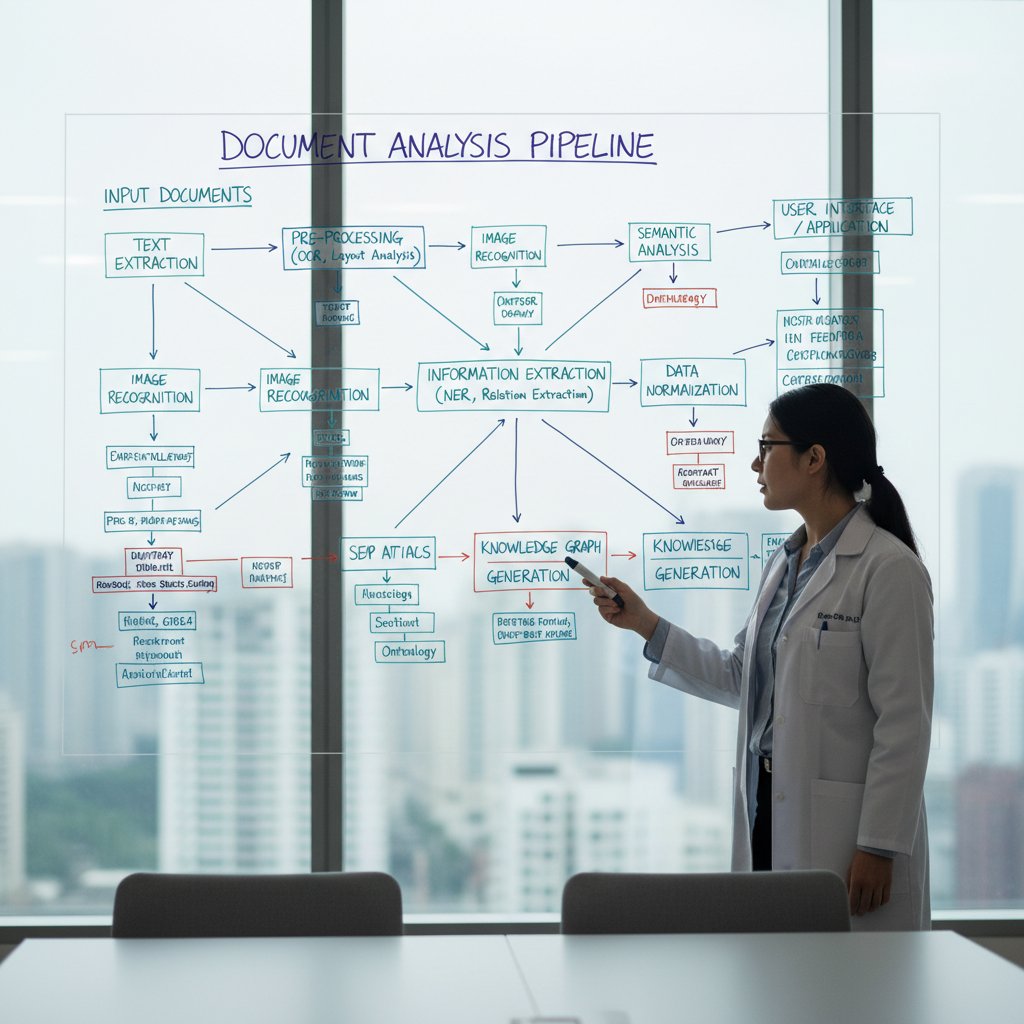

How document summarization actually works (and why it’s not magic)

Extractive vs. abstractive summarization: what’s the difference?

Extractive summarization is the “copy-paste” of the AI world: it identifies key sentences or passages and lifts them verbatim into the summary. Fast, literal, and less likely to introduce errors, but often clunky and context-poor.

Abstractive summarization, by contrast, is a full-scale rewrite. It generates new sentences that paraphrase and condense meaning, often using neural networks and transformer architectures. The upside? More natural, human-like summaries. The catch? Higher risk of introducing errors, ambiguity, and misinterpretation—especially with complex or technical content.
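To make the extractive idea concrete, here is a minimal frequency-based sketch in Python: it scores each sentence by the average frequency of its content words and returns the top sentences verbatim, in their original order. This is a classic textbook baseline, not how any particular commercial tool works; the tiny stopword list, the naive sentence splitter, and the scoring rule are simplifying assumptions.

```python
import re
from collections import Counter

# Tiny illustrative stopword list; a real system would use a fuller one.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "are",
             "for", "on", "with", "this", "that", "it", "as", "by", "be"}

def split_sentences(text):
    # Naive splitter: break after ., ! or ? followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def content_words(sentence):
    return [w for w in re.findall(r"[a-z']+", sentence.lower()) if w not in STOPWORDS]

def extractive_summary(text, max_sentences=2):
    sentences = split_sentences(text)
    freq = Counter(w for s in sentences for w in content_words(s))

    def score(s):
        # Average document-wide frequency of the sentence's content words.
        ws = content_words(s)
        return sum(freq[w] for w in ws) / len(ws) if ws else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])  # restore original order
    return " ".join(sentences[i] for i in keep)


text = ("The contract renews annually. The contract includes a liability cap. "
        "Lunch was pleasant. Liability under the contract is capped at one million.")
print(extractive_summary(text))
# The contract renews annually. The contract includes a liability cap.
```

Notice what gets dropped: the sentence with the actual cap amount. Averaging word frequencies favors short sentences packed with common terms, which is exactly the "clunky and context-poor" weakness of naive extraction.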

Extractive summarization

Pulls exact sentences or phrases from the original, prioritizing content with statistical or attention-based relevance. Best for legal or regulatory documents where wording is crucial.

Abstractive summarization

Uses deep learning to generate new text that captures overall meaning, not just verbatim passages. Ideal for executive summaries or overviews where conciseness and readability are key.

Text classification

The process of assigning categories or tags to documents, often using machine learning algorithms that analyze word patterns, metadata, and context.

Categorization: from K-means to transformers

Traditional document categorization relied on statistical models like K-means clustering, Naive Bayes, or decision trees—good at basic pattern recognition, but limited by rigid feature sets and shallow context. Enter transformers and deep learning: these models use self-attention mechanisms to weigh context from across entire documents, dramatically improving accuracy and adaptability.
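For a feel of the "traditional" end of that spectrum, here is a minimal multinomial Naive Bayes text classifier written with only the Python standard library. It uses bag-of-words counts with add-one (Laplace) smoothing. The class name, training snippets, and labels are invented for illustration; a production system would use a tested library and far more data.

```python
import math
import re
from collections import Counter, defaultdict

def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())

class NaiveBayes:
    def fit(self, docs, labels):
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)  # label -> word -> count
        for doc, label in zip(docs, labels):
            self.word_counts[label].update(tokenize(doc))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        self.total_words = {lbl: sum(c.values()) for lbl, c in self.word_counts.items()}
        return self

    def predict(self, doc):
        n_docs = sum(self.class_counts.values())
        best_label, best_score = None, -math.inf
        for label in self.class_counts:
            # log P(label) + sum of log P(word | label), Laplace-smoothed
            score = math.log(self.class_counts[label] / n_docs)
            denom = self.total_words[label] + len(self.vocab)
            for w in tokenize(doc):
                score += math.log((self.word_counts[label][w] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


docs = [
    "termination clause liability indemnity",
    "indemnity and liability cap in this agreement",
    "invoice total amount due payment",
    "payment due on invoice number",
]
labels = ["legal", "legal", "finance", "finance"]
model = NaiveBayes().fit(docs, labels)
print(model.predict("liability indemnity clause"))  # legal
print(model.predict("amount due on invoice"))       # finance
```

Working in log space avoids multiplying many tiny probabilities, and smoothing keeps a single unseen word from zeroing out a category. The limitation is built in: the model ignores word order, so "approved" and "not approved" differ by only one extra token.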

Today’s best-in-class models, such as BERT- and GPT-based architectures, can be fine-tuned as new data arrives, handle multi-label classification, and help detect topic drift over time. According to research from YourStory, 2024, this shift has slashed manual review workloads by more than 60% in some industries.
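Topic drift can be monitored without reference to any particular model: compare how documents are distributed across categories in two time windows. The sketch below uses total variation distance (half the sum of absolute differences between two label distributions). The function names, the 0.2 alert threshold, and the sample labels are arbitrary assumptions to tune against your own data.

```python
from collections import Counter

def label_distribution(labels):
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}

def total_variation(p, q):
    """0.0 means identical distributions; 1.0 means no overlap at all."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)

def drift_alert(previous_window, current_window, threshold=0.2):
    distance = total_variation(label_distribution(previous_window),
                               label_distribution(current_window))
    return distance, distance > threshold


last_month = ["legal"] * 5 + ["finance"] * 5
this_month = ["legal"] * 2 + ["finance"] * 5 + ["esg"] * 3
distance, flagged = drift_alert(last_month, this_month)
print(round(distance, 2), flagged)  # 0.3 True
```

A new category appearing (here "esg") counts as drift, which is usually what you want: it signals documents the existing taxonomy may not cover.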

Why context is everything (and how AI still struggles)

Context is the final frontier for AI summarization and categorization. A single word can shift the meaning of an entire paragraph (“not approved” versus “approved,” for example). Real-world systems still struggle with:

- Missing sarcasm, irony, or nuanced language, especially in business emails.

- Confusing similar topics (e.g., “compliance” in legal vs. healthcare contexts).

- Failing to recognize when a summary omits warnings, caveats, or exceptions.

- Misclassifying outlier or edge-case documents, especially those outside main training data.

Red flags to watch for in AI summaries include:

- Overly generic or repetitive phrasing.

- Missing or contradictory information.

- Inability to answer follow-up questions about specifics.

- Summaries that are suspiciously short or long without clear justification.
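Some of these red flags can be screened for mechanically before a human reads the summary. The heuristic sketch below checks three things: figures (numbers, amounts) in the source that are missing from the summary, caveat words such as "not" or "unless" that were dropped, and a word-count ratio outside an expected band. The caveat list, ratio bounds, and function names are assumptions. Passing these checks does not make a summary correct; failing them means a human should look.

```python
import re

# Assumed caveat vocabulary; extend it for your domain.
CAVEAT_WORDS = {"not", "unless", "except", "however", "only", "excluding"}
NUMBER = re.compile(r"\d[\d,.]*\d|\d")  # e.g. 30, 2,000,000, 3.5
WORD = re.compile(r"[a-z]+")

def figures(text):
    return set(NUMBER.findall(text))

def tokens(text):
    return set(WORD.findall(text.lower()))

def red_flags(source, summary, min_ratio=0.05, max_ratio=0.5):
    """Return human-readable warnings; an empty list means no check fired."""
    flags = []
    missing = figures(source) - figures(summary)
    if missing:
        flags.append(f"missing figures: {sorted(missing)}")
    dropped = (tokens(source) & CAVEAT_WORDS) - tokens(summary)
    if dropped:
        flags.append(f"dropped caveats: {sorted(dropped)}")
    ratio = len(summary.split()) / max(len(source.split()), 1)
    if not min_ratio <= ratio <= max_ratio:
        flags.append(f"length ratio {ratio:.2f} outside [{min_ratio}, {max_ratio}]")
    return flags


source = ("The supplier is not liable for delays unless notified within 30 days. "
          "Damages are capped at $2,000,000.")
summary = "Supplier liable for delays; damages capped."
for flag in red_flags(source, summary):
    print(flag)
# missing figures: ['2,000,000', '30']
# dropped caveats: ['not', 'unless']
```

The example summary reads smoothly yet reverses the meaning of the clause by dropping "not" and "unless", the kind of failure a fluent abstractive summary can produce.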

Despite huge advances, context recognition remains a challenge. The best systems mitigate this through human-in-the-loop oversight, iterative feedback, and continuous retraining—elements that only the most mature document analysis platforms offer.

Real-world applications: who’s winning and who’s losing

Business intelligence: boardroom wins and epic fails

Case in point: a global consulting firm leveraged document summarization for competitive analysis, scanning thousands of industry reports to identify market trends. The result? Time to insight dropped from weeks to days, giving them a clear edge in deal negotiations.

| Review Mode | Avg. Time (100 docs) | Cost per 100 docs | Error Rate (%) |
|---|---|---|---|
| Manual | 24 hours | $1,200 | 7 |
| Automated (AI) | 2 hours | $150 | 13 |
| Hybrid | 6 hours | $400 | 4 |

Table 3: Cost-benefit analysis of manual vs. automated document review

Source: Original analysis based on Adobe Acrobat, 2023, TeamStage, 2024
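Cost per batch alone hides the cost of mistakes. A quick way to compare the three modes in Table 3 is to add an assumed penalty for every flawed document. The $100-per-error figure below is a placeholder, not a number from the source; plug in what an error actually costs in your setting.

```python
# Figures from Table 3, per 100 documents. The error rate is a percentage,
# so it also equals the expected number of flawed documents per 100.
MODES = {
    "Manual": {"cost": 1200, "errors": 7},
    "Automated (AI)": {"cost": 150, "errors": 13},
    "Hybrid": {"cost": 400, "errors": 4},
}

def total_cost(mode, penalty_per_error):
    """Review cost plus an assumed downstream cost for each flawed document."""
    m = MODES[mode]
    return m["cost"] + m["errors"] * penalty_per_error

for name in MODES:
    print(name, total_cost(name, penalty_per_error=100))
# Manual 1900
# Automated (AI) 1450
# Hybrid 800
```

The break-even points follow from the same arithmetic. Fully automated review beats manual only while an error costs less than $175 (150 + 13p < 1,200 + 7p), and hybrid beats fully automated once an error costs more than about $28 (400 + 4p < 150 + 13p gives p > 250/9). Above that, the hybrid row is the cheapest of the three.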

Still, failures abound. In one study, an automated system flagged a critical competitor move as “low relevance” due to faulty classification logic, leading to a missed market opportunity. The moral? Automation without oversight is a gamble—sometimes you win big, sometimes you lose bigger.

Healthcare, law, and journalism: cross-industry impacts

In healthcare, document categorization accelerates patient record triage and helps surface life-or-death data—when it works. According to Time Doctor, 2024, 18% of employees in healthcare are productive for less than half their working hours, with document chaos a leading cause. Legal professionals use AI-driven sorting to cut review times and ensure compliance, but regulatory risk remains high if systems misclassify crucial clauses.

Journalism presents a unique challenge: automated summaries can help reporters digest massive data leaks, but unchecked automation risks amplifying bias or missing key facts. The best organizations blend AI speed with relentless human fact-checking—a lesson too often learned the hard way.

The dark side: privacy, bias, and unintended consequences

At the heart of every document processor lurks sensitive metadata—names, deals, health data. Privacy breaches happen not just through hacking, but through careless categorization or overzealous data mining. “AI isn’t neutral—every algorithm has fingerprints,” says Morgan, a data ethics researcher (paraphrased from industry interviews).
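One practical guard is to redact obvious identifiers before documents reach any external summarization or categorization service. Below is a minimal regex-based sketch, assuming English-language text and only two identifier types (email addresses and phone numbers). The pattern names and placeholder format are illustrative choices; this is a first filter, not a compliance control.

```python
import re

# Illustrative patterns only. Real PII detection needs much broader coverage
# (names, addresses, ID numbers, health identifiers), and the phone pattern
# will also catch other long digit runs such as ISO dates (2024-01-15).
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}

def redact(text):
    """Replace each match with a bracketed placeholder such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

print(redact("Contact jane.doe@example.com or +1 (555) 010-2000 about the merger."))
# Contact [EMAIL] or [PHONE] about the merger.
```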

The bias baked into training data can amplify societal inequalities or reinforce outdated norms. Medium, 2025 argues that visibility and negotiation skills are as crucial as technical prowess in determining who advances. If document systems quietly decide whose reports and contributions get surfaced, their invisible biases can shape who gets noticed and who’s left behind.

Common myths and dangerous misconceptions (debunked)

Myth #1: AI is always objective

It’s a comforting lie—AI is just math, so it must be neutral. In reality, every model is shaped by its data, its designers, and its use cases. Real-world examples abound of AI systems producing summaries that reflect political, cultural, or gender bias—subtle, perhaps, but no less damaging.

A 2024 audit of major summarization tools found systematic underrepresentation of minority viewpoints in news summaries. Business.com, 2024 notes: “Manual document management inefficiencies contribute significantly to lost productivity and increased stress.” Automation can cut that cost, but it does not eliminate error; it often just makes error harder to spot.

Myth #2: Longer summaries mean better understanding

More is not always better. Bloated summaries drown key points in irrelevant noise, reducing comprehension and increasing the mental burden on readers. Precision and relevance matter far more. To judge whether a summary delivers them:

- Define your goal: Are you looking for an overview or specific action items?

- Check for coverage: Does the summary address all major points of the source?

- Look for bias or omissions: Is anything critical missing or glossed over?

- Assess readability: Is the summary concise, clear, and actionable?

- Validate with the source: Cross-check key facts against the original document.

Focusing on what matters—rather than everything—is the hallmark of an effective summary. This is where expert systems like textwall.ai/document-analysis shine: by balancing depth with brevity, they enable users to act, not just read.

Myth #3: Categorization is a solved problem

For all the advances in AI, categorization remains an unsolved puzzle, especially in edge cases. Humans themselves can’t always agree on how to classify a complex contract or ambiguous news article. Disagreements over “gray area” docs are not bugs, but reminders of the limits of even the best algorithms.

This leads directly to the need for advanced, adaptive strategies, because in 2025, running on autopilot is a recipe for disaster.

Advanced strategies for 2025: how to stay ahead

Hybrid approaches: humans and machines together

The smartest organizations combine machine speed with human judgment. Human-in-the-loop systems flag uncertain cases for review, while feedback loops retrain models to adapt to new patterns and exceptions. This isn’t just a safety net—it’s a force multiplier.

- Use document summarization for market intelligence, trend spotting, and risk analysis.

- Leverage categorization to surface hidden connections across projects or departments.

- Implement AI-driven summaries for onboarding, audits, and compliance reviews.

- Combine machine summaries with expert notes for legal and scientific research.

Hybrid approaches unlock unconventional uses, from discovering hidden fraud patterns in audit trails to accelerating academic literature reviews.
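The human-in-the-loop pattern described at the start of this section comes down to two moves: route low-confidence predictions to a reviewer, and keep the reviewer's corrections as training data. A minimal sketch, assuming your classifier returns a label plus a confidence score between 0 and 1; the class name, method names, and 0.8 threshold are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class ReviewRouter:
    threshold: float = 0.8  # assumed cut-off; calibrate on your own data
    review_queue: list = field(default_factory=list)
    corrections: list = field(default_factory=list)  # future training data

    def route(self, doc_id, label, confidence):
        """Accept confident predictions; queue the rest for a human."""
        if confidence >= self.threshold:
            return label
        self.review_queue.append((doc_id, label, confidence))
        return None  # category pending human review

    def record_review(self, doc_id, predicted, reviewed):
        """Keep disagreements as labeled examples for the next retraining run."""
        if predicted != reviewed:
            self.corrections.append((doc_id, reviewed))


router = ReviewRouter()
print(router.route("doc-1", "invoice", 0.97))        # invoice
print(router.route("doc-2", "miscellaneous", 0.55))  # None
router.record_review("doc-2", "miscellaneous", "merger_clause")
print(router.corrections)  # [('doc-2', 'merger_clause')]
```

A fixed threshold is the simplest policy. The catch is that model confidence scores are often poorly calibrated, so the cut-off should be set by checking how often predictions above it are actually correct.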

Choosing the right tool: what really matters

When selecting a document summarization or categorization tool, look past the hype. Ask: Does it support customizable analysis? Can it integrate with your stack? Does it offer real-time insights, or just batch processing?

| Feature | textwall.ai | Competitor A | Competitor B |
|---|---|---|---|
| Advanced NLP | Yes | Limited | Limited |
| Customizable Analysis | Full | Limited | None |
| Instant Document Summaries | Yes | No | No |
| Integration Capabilities | Full API | Basic | None |
| Real-time Insights | Yes | Delayed | No |

Table 4: Feature matrix—top solutions for 2025 (including textwall.ai as a reference)

Source: Original analysis based on public product documentation

Beware of slick marketing that promises “magic” results. The best tool is the one that fits your workflow, respects your data, and delivers reliable results—no smoke, no mirrors.

Mitigating risks: privacy, compliance, and human oversight

Security isn’t optional. Make sure your document processor encrypts data, supports role-based access control, and logs all activity for audits. For regulated industries, compliance with standards like GDPR, HIPAA, or SOC 2 is non-negotiable.

- Prioritize encryption and secure access controls.

- Document category definitions and update them regularly.

- Implement auditing and logging for all document actions.

- Train staff on privacy, bias, and oversight protocols.

- Schedule regular reviews of system performance and errors.

Continuous monitoring, not one-and-done audits, is the secret to sustained security and compliance.
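Role-based access control and audit logging can start very small. The sketch below, using only Python's standard library, checks an action against a role's permissions and logs every attempt. The roles, actions, logger name, and log format are assumptions; a real deployment would send these records to tamper-resistant storage rather than an ordinary logger.

```python
import logging
from datetime import datetime, timezone

audit_log = logging.getLogger("document_audit")

# Hypothetical roles and actions, for illustration only.
ROLE_PERMISSIONS = {
    "viewer": {"read"},
    "analyst": {"read", "summarize"},
    "admin": {"read", "summarize", "recategorize", "delete"},
}

def authorize(user, role, action, doc_id):
    """Return True if the role permits the action; log every attempt."""
    # Unknown roles get an empty permission set: deny by default.
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    # Denials are logged too: failed attempts are often what an audit needs.
    audit_log.info(
        "%s user=%s role=%s action=%s doc=%s allowed=%s",
        datetime.now(timezone.utc).isoformat(), user, role, action, doc_id, allowed,
    )
    return allowed


logging.basicConfig(level=logging.INFO, format="%(message)s")
print(authorize("jamie", "analyst", "summarize", "contract-17"))  # True
print(authorize("jamie", "analyst", "delete", "contract-17"))     # False
```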

Step-by-step guide: mastering document summarization and categorization

Getting started: assess your needs and data

Begin by mapping your document types and volumes. Are you buried in contracts, research papers, or unstructured emails? Each brings unique challenges. Evaluate your existing workflows—how are documents currently processed, reviewed, and stored? Where does the pain start? Identifying bottlenecks and desired outcomes will focus your project and set realistic expectations.
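A rough inventory is a useful first step. This sketch counts files by extension under a folder. Extension is only a proxy for document type (a .pdf may be a contract or a slide deck), and the function name and path in the usage comment are placeholders.

```python
from collections import Counter
from pathlib import Path

def inventory(root):
    """Count files by lowercase extension under root, including subfolders."""
    counts = Counter()
    for path in Path(root).rglob("*"):
        if path.is_file():
            counts[path.suffix.lower() or "(none)"] += 1
    return counts

# Example (placeholder path):
# print(inventory("/path/to/shared-drive").most_common(10))
```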

Building your workflow: integration and automation

Integration is king. Choose a system that plugs into your existing tools, whether via API, batch uploads, or manual drag-and-drop. Automation is the goal, but don’t sacrifice oversight: implement checkpoints where summaries and categories can be reviewed and corrected as needed.

Best practices include establishing fallback protocols for edge cases, tracking exceptions, and iteratively refining your automation logic based on real-world feedback.
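Fallback protocols and exception tracking can sit at the orchestration layer. In this sketch the summarizer is passed in as a plain function, so it can be swapped without touching the workflow. The function name, the 50,000-character limit, and the exception reasons are illustrative assumptions.

```python
from typing import Callable

def process(docs: dict, summarize: Callable[[str], str], max_chars: int = 50_000):
    """Summarize each document; route edge cases to manual review.

    docs: mapping of doc_id -> text.
    Returns (summaries, exceptions), where exceptions lists (doc_id, reason).
    """
    summaries, exceptions = {}, []
    for doc_id, text in docs.items():
        if not text.strip():
            exceptions.append((doc_id, "empty document"))
            continue
        if len(text) > max_chars:
            exceptions.append((doc_id, "too long for automated pass"))
            continue
        try:
            summaries[doc_id] = summarize(text)
        except Exception as exc:  # record the failure instead of stopping the batch
            exceptions.append((doc_id, f"summarizer error: {exc}"))
    return summaries, exceptions


def first_sentence(text):
    # Stand-in "summarizer" for demonstration only.
    return text.split(". ")[0]

docs = {"a": "Renewal is annual. Fees rise 3% each year.", "b": "   "}
summaries, exceptions = process(docs, first_sentence)
print(summaries)   # {'a': 'Renewal is annual'}
print(exceptions)  # [('b', 'empty document')]
```

The exceptions list doubles as the tracking log: reviewing it periodically shows which document types the automation keeps failing on.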

Measuring success: KPIs and continuous improvement

Define clear KPIs: time saved, error reduction, user satisfaction, and document retrieval speed. Gather user feedback to identify pain points and opportunities for optimization. The most successful teams treat their document analysis workflow as a living system—constantly evolving to meet changing demands.
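Most of these KPIs reduce to a before/after comparison against a baseline measured before rollout. A small helper keeps the arithmetic consistent. The baseline and current figures below are illustrative, borrowed from the manual and hybrid rows of Table 3; the helper name is hypothetical.

```python
def pct_change(before, after):
    """Relative change in percent; negative means the metric went down."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100

baseline = {"hours_per_100_docs": 24, "error_rate_pct": 7}
current = {"hours_per_100_docs": 6, "error_rate_pct": 4}

for kpi in baseline:
    print(f"{kpi}: {pct_change(baseline[kpi], current[kpi]):+.1f}%")
# hours_per_100_docs: -75.0%
# error_rate_pct: -42.9%
```

Be explicit about which number you report: moving from a 7% to a 4% error rate is a 3-percentage-point drop but a 42.9% relative reduction, and mixing the two up is a common way dashboards overstate or understate progress.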

Adaptability is your insurance policy in a landscape defined by rapid change and evolving threats.

The future of document analysis: where do we go from here?

Emerging trends: multimodal, real-time, and beyond

Document analysis is expanding beyond text—embracing video, audio, and image summarization. Real-time processing is now the expectation, not the exception, with platforms like textwall.ai leading the charge. But with new capabilities come new challenges: data volume, privacy risk, and the complexity of integrating multiple modalities.

As the boundary between content types blurs, the need for robust, adaptive systems—backed by both machine and human expertise—only intensifies.

Ethical crossroads: who decides what matters?

Who sets the criteria for “important” information? Is it the algorithm’s designer, the end user, or the unseen hand of corporate interest? Automated knowledge curation risks reinforcing hidden biases and narrowing our collective perspective. Transparency and accountability are no longer optional—they’re mandatory.

Organizations must demand clear documentation of how summaries and categories are generated, and invite scrutiny from diverse stakeholders.

Your move: rethinking your relationship with information

It’s time for an audit—not just of your documents, but of your own relationship with information. Are you in control, or are you being controlled by systems you barely understand?

Self-assessment for document analysis readiness:

- Do you know where your most important documents live?

- Can you trust your current categorization and summarization systems?

- Are feedback loops in place to catch and correct errors?

- Is privacy an afterthought—or a core commitment?

- Are you acting on information, or drowning in it?

Clarity, control, and critical thinking are your best allies in the era of information warfare. Document summarization and categorization aren’t just tools—they’re survival skills.

Glossary: the only document summarization and categorization terms you’ll ever need

Extractive summarization

The process of selecting and aggregating actual sentences from a document to create a shorter version. Ideal for legal and regulatory texts where precision matters.

Abstractive summarization

Technique where an AI system generates new sentences that paraphrase and condense the document’s content, often using neural networks. Best for executive overviews and complex reports.

Text classification (categorization)

Assigning one or more categories (tags) to a document based on its content, using algorithms ranging from simple rule-based approaches to deep neural networks.

K-means clustering

A basic unsupervised clustering algorithm that groups documents by similarity, typically using word-frequency feature vectors. It discovers groups rather than assigning predefined labels, so its clusters still need human naming and review.

Transformers

Advanced neural network architectures (e.g., BERT, GPT) that excel at capturing long-range dependencies and context, revolutionizing document processing tasks.

Human-in-the-loop (HITL)

A workflow that keeps humans involved in reviewing, correcting, and retraining AI-powered summarization and categorization systems.

Bookmark this section as your cheat sheet for navigating the jargon jungle of document analysis.

Beyond the basics: adjacent topics and deep dives

Information architecture: organizing for the age of AI

Document analysis sits at the crossroads of information architecture and data science. Designing scalable systems means investing in robust taxonomies, metadata schemes, and search protocols. Taxonomy isn’t just a fancy word—it’s your armor against information entropy.

The right metadata transforms a pile of digital debris into a searchable, actionable resource. As your data grows, so must your architecture—otherwise, even the best summarization tool will be reduced to digital noise.
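A taxonomy earns its keep when it is enforced at the point of entry. The sketch below, with a hypothetical two-domain controlled vocabulary and a hypothetical `DocumentMetadata` record, rejects metadata that falls outside the approved list rather than letting ad-hoc labels accumulate.

```python
from dataclasses import dataclass, field
from datetime import date

# Hypothetical controlled vocabulary: the only domain/type values allowed.
TAXONOMY = {
    "legal": {"contract", "nda", "court_filing"},
    "finance": {"invoice", "report"},
}

@dataclass
class DocumentMetadata:
    doc_id: str
    domain: str
    doc_type: str
    received: date
    tags: set = field(default_factory=set)

    def __post_init__(self):
        # Reject values outside the taxonomy instead of silently accepting them.
        if self.domain not in TAXONOMY:
            raise ValueError(f"unknown domain: {self.domain!r}")
        if self.doc_type not in TAXONOMY[self.domain]:
            raise ValueError(f"{self.doc_type!r} is not a {self.domain} type")


ok = DocumentMetadata("c-17", "legal", "contract", date(2025, 3, 1))
try:
    DocumentMetadata("x-9", "legal", "invoice", date(2025, 3, 2))
except ValueError as err:
    print(err)  # 'invoice' is not a legal type
```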

Combating information fatigue: wellness and workflow tips

Cognitive overload isn’t just a productivity issue—it’s a wellness crisis. Strategies for managing information fatigue include:

- Prioritizing tasks using AI-driven insights, not just gut instinct.

- Scheduling “air gaps” in your day to detach from screens and reset focus.

- Delegating or automating rote document review to trusted systems.

- Regularly auditing your workflow to eliminate bottlenecks and redundancies.

- Using platform features to surface only the most relevant information.

Document summarization isn’t just about efficiency—it’s about protecting your brain from burnout.

What nobody tells you about scaling automation

Scaling up document automation sounds glamorous—until you hit the bottlenecks. Hidden costs include integration headaches, endless retraining, and the risk of systemic bias at scale. Avoid these pitfalls by piloting in limited environments, iterating based on user feedback, and future-proofing your analysis pipeline with modular, upgradeable components.

True automation success comes from acknowledging—and managing—the mess, not pretending it doesn’t exist.

Conclusion

Document summarization and categorization are no longer “nice to have”—they’re existential requirements for anyone who wants to survive the information age with sanity and competitive edge intact. The brutal truths are clear: no one’s coming to save you from document chaos but yourself. The new rules for 2025 demand relentless vigilance, hybrid strategies, and an honest reckoning with the limits of both AI and human cognition. As shown throughout this guide—with hard data, case studies, and real-world tactics—mastery of these tools is entirely within your reach. Whether you’re a corporate analyst, legal professional, academic researcher, or overwhelmed entrepreneur, it’s time to reclaim control, sharpen your workflow, and demand more from your systems. The battlefield of information is unforgiving—but with the right approach, you can turn noise into knowledge, and chaos into clarity. For those ready to make that leap, resources like textwall.ai stand as beacons of expertise and authority in the document analysis space. The choice is yours: sink under the weight, or rise with a strategy built on truth, not hype.

Sources

References cited in this article

- Medium(medium.com)

- In Search Of Your Passions(insearchofyourpassions.com)

- AlphaM(alpham.com)

- YourStory(yourstory.com)

- Business.com(business.com)

- TeamStage(teamstage.io)

- Time Doctor(timedoctor.com)

- LinkedIn(linkedin.com)

- Xenith(xenith.co.uk)

- TeamHub(teamhub.com)

- Box(blog.box.com)

- NetOwl(netowl.com)

- Northwestern CASMI(casmi.northwestern.edu)

- OSTI Technical Report(osti.gov)

- ACL Anthology(aclanthology.org)

- Springer(link.springer.com)

- ABBYY(abbyy.com)

- Forbes(forbes.com)

- Global Arbitration News(globalarbitrationnews.com)

- SmallPDF(smallpdf.com)

- IBM(ibm.com)

- ResearchGate(researchgate.net)

- Iris.ai(iris.ai)

- arXiv 2024(arxiv.org)

- DocumentLLM(documentllm.com)

- Secureworks(secureworks.com)

- Nasdaq(ir.nasdaq.com)

- Springer Legal Analysis(link.springer.com)

- Reuters Journalism Trends(ringpublishing.com)

- McDonald Hopkins(mcdonaldhopkins.com)

- Cloud Security Alliance(cloudsecurityalliance.org)

- MDPI Sci 2024(mdpi.com)

- ISBA(isba.org)

- Turnitin(turnitin.com)
