Text Extraction From Scanned PDFs Is Now a Security Risk

There’s a silent crisis unfolding in file cabinets, storage rooms, and, ironically, on your own hard drive. It’s not about missing documents, or even the ever-growing mountain of PDFs. It’s about lost data: untapped insights buried in scanned PDFs, locked behind layers of analog noise and digital indifference. If you think text extraction from scanned PDFs is just a technical afterthought, think again. As organizations crank out 2.5 trillion PDFs annually and 90% rely on the format for mission-critical workflows, the ability to extract accurate, actionable information from these digital vaults is shaping everything from disaster recovery to compliance, accessibility, and competitive edge.

This 2025 guide pulls back the curtain on the gritty reality of AI document analysis. You’ll get a no-BS look at the hidden costs, the AI breakthroughs, the privacy risks, the myths you’re being sold, and the strategies that elite teams actually use. If you’re still trusting “good enough” tools, or think automation is foolproof, read on, because the untold truth behind PDF text extraction is more urgent, complex, and surprisingly human than you’ve been led to believe.

Why text extraction from scanned PDFs matters more than ever

The hidden cost of analog archives

Analog storage isn’t just outdated—it’s a liability. Organizations clinging to mountains of paper or poorly digitized scans are bleeding money, time, and opportunities. A single misfiled contract or a lost medical record can mean legal exposure, compliance violations, and operational paralysis. According to Rely Services, 2024, digitization slashes physical storage needs, speeds up data retrieval, and dramatically boosts accessibility, especially for users with disabilities. But here’s the dirty secret: merely scanning documents isn’t enough. Unless you can reliably extract and search the text within those scans, you’re just swapping one black hole for another.

| Hidden Archive Cost | Business Impact | Real-World Example |
|---|---|---|
| Storage Space Waste | High real estate and energy bills | Law firm storing 20 years of files in city center warehouse |
| Manual Data Search | Staff hours lost, slow decisions | Hospital requiring days to find patient history |
| Compliance Risks | Fines, lawsuits, reputation harm | Government agency unable to produce records for audit |

Table 1: The operational and financial toll of analog and insufficiently digitized archives.

Source: Rely Services, 2024

“Document digitization is revolutionizing information management for a more efficient and sustainable future.” (Rely Services, 2024)

How digital transformation hinges on extraction

Converting paper to PDF is not digital transformation. True transformation starts when organizations unlock the data inside those PDFs. Text extraction is the linchpin—without it, automation, search, analytics, and compliance are nothing but buzzwords. According to industry data, 70% of professionals expect automatic text extraction from their document management systems in 2024—a massive jump that reflects growing reliance on AI-powered workflows.

- Reliable text extraction powers real-time search and retrieval, eliminating bottlenecks in legal, healthcare, and finance.

- Compliance initiatives depend on extracting sensitive terms and clauses from contracts and regulatory filings.

- Accessibility mandates require that scanned content be machine-readable, supporting screen readers and adaptive tech.

- Data-driven decision-making in market research and business intelligence demands extraction for analytics and reporting.

- Disaster recovery hinges on fast, complete retrieval of critical records—often from degraded or damaged scans.

Real-world chaos: when extraction fails

Here’s what they don’t tell you: failed extraction isn’t just a technical nuisance; it’s a business disaster. Imagine a hospital unable to retrieve allergy information from a blurry intake form, or a media outlet missing a smoking-gun quote in leaked documents because their OCR tool fumbled handwritten notes. The news cycle, the patient’s life, or the company’s bottom line can hinge on that split-second lapse.

Extraction failures can lead to regulatory fines, data breaches, and irreversible trust erosion. And as the volume and complexity of scanned documents explode, the margin for error grows ever slimmer.

The evolution of text extraction: From brute-force OCR to AI-powered insight

A brief, brutal history of OCR

Text extraction from scanned PDFs wasn’t always the AI-laced rocket science it is today. The story starts decades ago, with early optical character recognition (OCR) that was clunky, rule-based, and notoriously brittle.

- 1940s–1970s: Early OCR systems needed pristine, typewritten documents. Anything less, and you got gibberish.

- 1980s–1990s: Handwriting recognition arrived, but only for block letters in controlled environments—postal codes, for example.

- 2000s: Commercial OCR tools hit the mainstream, yet struggled with multi-column layouts, tables, and “dirty” scans.

- 2010s: Machine learning nudged accuracy upward, but most tools still choked on real-world noise, skew, and handwriting.

- 2020s: Enter the AI revolution—deep learning, computer vision, and natural language processing (NLP) finally make extraction (mostly) reliable, even for messy scans and complex documents.

Meet the new breed: Large Language Models and deep learning

Forget the old “template-and-hope” approach. Today, the AI arms race is spearheaded by Large Language Models (LLMs), sophisticated neural networks, and advanced NLP techniques. According to QueryDocs, 2024, these technologies:

- Self-correct for skew, noise, and low-quality scans using AI-enhanced image preprocessing.

- Parse tables, signatures, and semi-structured data with semantic understanding—not just pixel matching.

- Distinguish context (e.g., invoice totals versus dates) using NLP and deep learning.

- Continuously improve by learning from user corrections and edge cases, closing the gap between human and machine.

Large Language Model (LLM): An advanced neural network trained on massive text corpora, enabling context-aware understanding and extraction from unstructured documents.

Natural Language Processing (NLP): The branch of AI that enables machines to interpret, analyze, and generate human language, including distinguishing between a date and a dollar amount in a scanned PDF.

Optical Character Recognition (OCR): The core technology that converts images of text into machine-encoded text, now turbocharged by AI for accuracy and versatility.

What legacy OCR still gets wrong in 2025

Despite the hype, not all tools are created equal. Legacy OCR—still in use across many organizations—fails spectacularly in cases that modern AI-powered tools handle with ease.

| Extraction Challenge | Legacy OCR Result | AI-enhanced OCR Result |
|---|---|---|
| Skewed, noisy scans | Gibberish or blanks | High accuracy via correction |
| Handwritten notes | Missed or mangled text | Reliable extraction |
| Tables & multi-columns | Jumbled output | Structured data parsing |
| Complex layouts | Wrong reading order | Semantic recognition |

Table 2: Key differences between legacy and AI-powered OCR in handling real-world scanned PDFs.

Source: Original analysis based on QueryDocs, 2024, ComPDF, 2024

Debunking common myths about extracting text from scanned PDFs

Myth #1: All OCR tools are basically the same

Let’s kill this myth right now. According to AlgoDocs, 2024, the gap between entry-level and state-of-the-art tools is massive.

“AI-powered OCR solutions outperform legacy tools by over 20 percentage points in real-world accuracy on noisy scanned documents.” (AlgoDocs, 2024)

- Open-source OCRs (like Tesseract) work well on clean, single-column documents, but suffer with tables, handwriting, or low-quality scans.

- Commercial AI solutions leverage LLMs, enabling context awareness, better error correction, and user-driven learning.

- Specialized tools target specific domains (e.g., medical, legal) with domain-tuned models, dramatically improving results.

Myth #2: '100% accuracy' is a reality

Anyone promising “100% accuracy” in text extraction is selling you snake oil. Even with cutting-edge AI, sources like Appian, 2024 report that real-world results depend on scan quality, document complexity, and language.

| Extraction Scenario | Typical Accuracy (AI OCR) | Source |
|---|---|---|
| Pristine, typed scan | 98–99% | QueryDocs, 2024 |
| Noisy, skewed documents | 85–92% | AlgoDocs, 2024 |
| Handwriting & tables | 75–90% | Appian, 2024 |
| Multi-language documents | 80–95% | ComPDF, 2024 |

Table 3: Accuracy ranges for AI-powered text extraction from scanned PDFs under various conditions.

Source: Original analysis based on QueryDocs, 2024; AlgoDocs, 2024; Appian, 2024; ComPDF, 2024
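Accuracy figures like those in Table 3 are only comparable if you know how they were measured. One common convention, assumed here rather than taken from any of the cited sources, is character accuracy: one minus the character error rate (edit distance divided by the length of a hand-verified reference). The function names below are illustrative.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character edits that turn a into b."""
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # delete ch_a
                current[j - 1] + 1,      # insert ch_b
                previous[j - 1] + cost,  # substitute (or keep) the character
            ))
        previous = current
    return previous[-1]


def character_accuracy(reference: str, extracted: str) -> float:
    """Return 1 - CER, clamped to [0, 1].

    reference is hand-verified ground truth; extracted is the tool's output.
    """
    if not reference:
        return 1.0 if not extracted else 0.0
    cer = levenshtein(reference, extracted) / len(reference)
    return max(0.0, 1.0 - cer)


if __name__ == "__main__":
    truth = "Invoice total: 1,250.00"
    ocr = "Invoice tota1: 1,25O.00"  # two typical OCR confusions: l/1 and 0/O
    print(f"{character_accuracy(truth, ocr):.3f}")
```

Running this on a few dozen of your own worst scans, with references typed by a person, gives a number you can hold a vendor to. Note that a single wrong digit in a total barely moves the score, which is why field-level checks still matter.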

Myth #3: Free tools are good enough for serious work

Think you’re saving money by using a free OCR tool? Think again. Free tools may suffice for personal notes or clean docs, but fall apart with business-critical or complex documents.

- Missed data in tables, contracts, or medical records can mean costly errors.

- No privacy guarantees—many free tools process your files in unknown cloud environments.

- Zero support or customization, leaving you stranded when extraction fails.

How modern text extraction really works: Under the hood

The anatomy of a scanned PDF

A scanned PDF isn’t a text file—it’s an image (or stack of images) wrapped in a PDF container. Extraction requires going from raw pixels to semantically rich, structured data. Every PDF page becomes a micro-battle between signal and noise.

PDF (Portable Document Format): A versatile container capable of embedding scanned images, text layers, metadata, and more. Extraction starts with image analysis, not just text parsing.

Image layer: The pixel-based scan inside a PDF. Quality, resolution, and noise directly impact OCR success.

Text layer: Some PDFs include a hidden, machine-readable text layer (from previous OCR runs). Extraction can use or overwrite this layer depending on accuracy needs.

From pixels to meaning: The extraction pipeline

Modern extraction isn’t just “run OCR and hope.” Here’s the typical pipeline:

- Preprocessing: Enhance images—deskew, denoise, boost contrast.

- Segmentation: Identify text blocks, tables, images, and zones.

- OCR: Recognize characters and words using AI models.

- Postprocessing: Correct errors using NLP, language models, and dictionaries.

- Structuring: Parse layout, identify headings, tables, footnotes.

- Export: Deliver clean, structured text, tables, and metadata for downstream use.
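To make the postprocessing stage concrete, here is a minimal sketch of dictionary-based correction using only Python's standard library. It is a stand-in for the NLP and language-model correction described above, not how any particular product works. The vocabulary, the 0.75 similarity cutoff, and the function name are all assumptions for illustration.

```python
import difflib
import re


def correct_tokens(text: str, vocabulary: set[str], cutoff: float = 0.75) -> str:
    """Replace out-of-vocabulary tokens with their closest vocabulary word.

    Pure numbers are left alone, because "fixing" digits by similarity is unsafe.
    Replacements are emitted in lowercase, as stored in the vocabulary.
    """
    vocab_list = sorted(vocabulary)

    def fix(match: re.Match) -> str:
        token = match.group(0)
        if token.isdigit() or token.lower() in vocabulary:
            return token
        candidates = difflib.get_close_matches(
            token.lower(), vocab_list, n=1, cutoff=cutoff
        )
        return candidates[0] if candidates else token

    return re.sub(r"[A-Za-z0-9]+", fix, text)


if __name__ == "__main__":
    vocab = {"invoice", "total", "due"}
    print(correct_tokens("lnvoice tota1 due 1250", vocab))
```

A domain vocabulary (contract terms, drug names, product codes) does most of the work here. With a generic dictionary the same approach tends to "correct" valid names and abbreviations, so keep the cutoff conservative and log every substitution.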

Tables, handwriting, and the AI arms race

Tables and handwriting are where extraction tools reveal their true colors. Old-school OCR “sees” a jumble; modern AI parses table structure, recognizes handwriting, and even extracts semantic meaning (like distinguishing a contract amount from a date).

Choosing the right extraction method: A critical comparison

Manual extraction vs. automated tools

Manual extraction is error-prone, slow, and increasingly obsolete. Automated tools now outperform humans on speed and, in many cases, accuracy.

| Method | Speed | Accuracy | Cost | Human Oversight Needed |
|---|---|---|---|---|
| Manual | Slow (hours per document) | High (if focused) | High (labor) | Every step |
| Automated AI | Fast (seconds per document) | Medium to high | Low (at scale) | Spot-checks only |

Table 4: Manual vs. automated extraction—trade-offs and efficiency.

Source: Original analysis based on AlgoDocs, 2024, Appian, 2024

Cloud vs. on-premises vs. hybrid solutions

There’s no one-size-fits-all answer. Each deployment model offers distinct strengths and risks:

- Cloud: Rapid setup, best for high-volume, non-sensitive docs. Watch for privacy concerns.

- On-premises: Maximum control and compliance, higher upfront costs, ideal for sensitive files (legal, healthcare).

- Hybrid: Flexibility—route sensitive docs on-premises, send bulk jobs to the cloud for speed.
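The hybrid model comes down to a routing decision made before any file leaves your network. The sketch below shows one way that decision might look. The patterns, the `Document` fields, and the backend labels are hypothetical, and a real deployment would rely on your own classification rules rather than three regular expressions.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only; a real policy would be broader and reviewed by compliance.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),            # US SSN-like number
    re.compile(r"\bpatient\b", re.IGNORECASE),
    re.compile(r"\bconfidential\b", re.IGNORECASE),
]


@dataclass
class Document:
    name: str
    preview_text: str              # e.g. filename, metadata, or a local first-pass OCR
    tagged_sensitive: bool = False  # set by a person or an upstream system


def choose_backend(doc: Document) -> str:
    """Return "on_premises" for anything that looks sensitive, otherwise "cloud"."""
    if doc.tagged_sensitive:
        return "on_premises"
    if any(p.search(doc.preview_text) for p in SENSITIVE_PATTERNS):
        return "on_premises"
    return "cloud"


if __name__ == "__main__":
    docs = [
        Document("brochure.pdf", "Spring product catalog"),
        Document("intake.pdf", "Patient intake form"),
        Document("misc.pdf", "Meeting notes", tagged_sensitive=True),
    ]
    for d in docs:
        print(d.name, "->", choose_backend(d))
```

The design choice worth keeping is the default direction of failure: when the check is unsure, it is usually safer to fall back to on-premises processing than to the cloud.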

How to vet an extraction provider (and red flags)

Don’t get burned by empty promises. Here’s a tough, research-backed checklist:

- Ask for real-world accuracy stats on messy documents, not just pristine samples.

- Demand details on privacy and data handling (GDPR, HIPAA compliance?).

- Test with your own toughest scans, including tables and handwriting.

- Review support and error correction workflows—how do they handle edge cases?

- Research performance on multi-language documents and complex layouts.

“Too many vendors market ‘AI-powered’ solutions that are little more than rebranded open-source OCR. Insist on transparency and real-world demos.” (Appian, 2024)

Real-world case studies: Extraction wins, fails, and game-changers

When extraction saves the day: Disaster recovery and journalism

When Hurricane Harvey hit Houston, law firms and hospitals that had digitized and extracted text from their records recovered critical information in hours, not weeks. Similarly, investigative journalists at international newsrooms routinely rely on AI-powered extraction to parse leaks and whistleblower dumps—surfacing crucial evidence buried in thousands of scanned PDFs.

- Law firm restored entire case history with extracted PDFs after server room flood.

- Journalists uncovered hidden transactions in 20,000+ scanned invoices using bulk AI extraction.

- Hospital resumed patient care rapidly by retrieving extracted allergy and treatment notes from cloud backups.

Extraction horror stories: Data leaks and legal nightmares

Extraction gone wrong isn’t just embarrassing—it can be catastrophic. In 2023, a European bank suffered a data breach after an unvetted extraction tool sent sensitive customer PDFs to an unsecured cloud server. The fallout included regulatory penalties and a trust crisis.

“Organizations must treat every scanned document as a potential compliance risk—extraction errors can trigger data leaks or legal sanctions.” — Compliance specialist, 2023 (illustrative, based on Appian, 2024)

| Incident | Cause | Consequence |
|---|---|---|
| Bank data leak | Unsecured cloud extraction | $2M fine, reputational loss |
| Hospital OCR error | Missed allergies in scan | Patient harm, lawsuit |
| Media leak | Extraction tool misfire | Failure to break story |

Table 5: Extraction failures and their business impact.

Source: Original analysis based on Appian, 2024

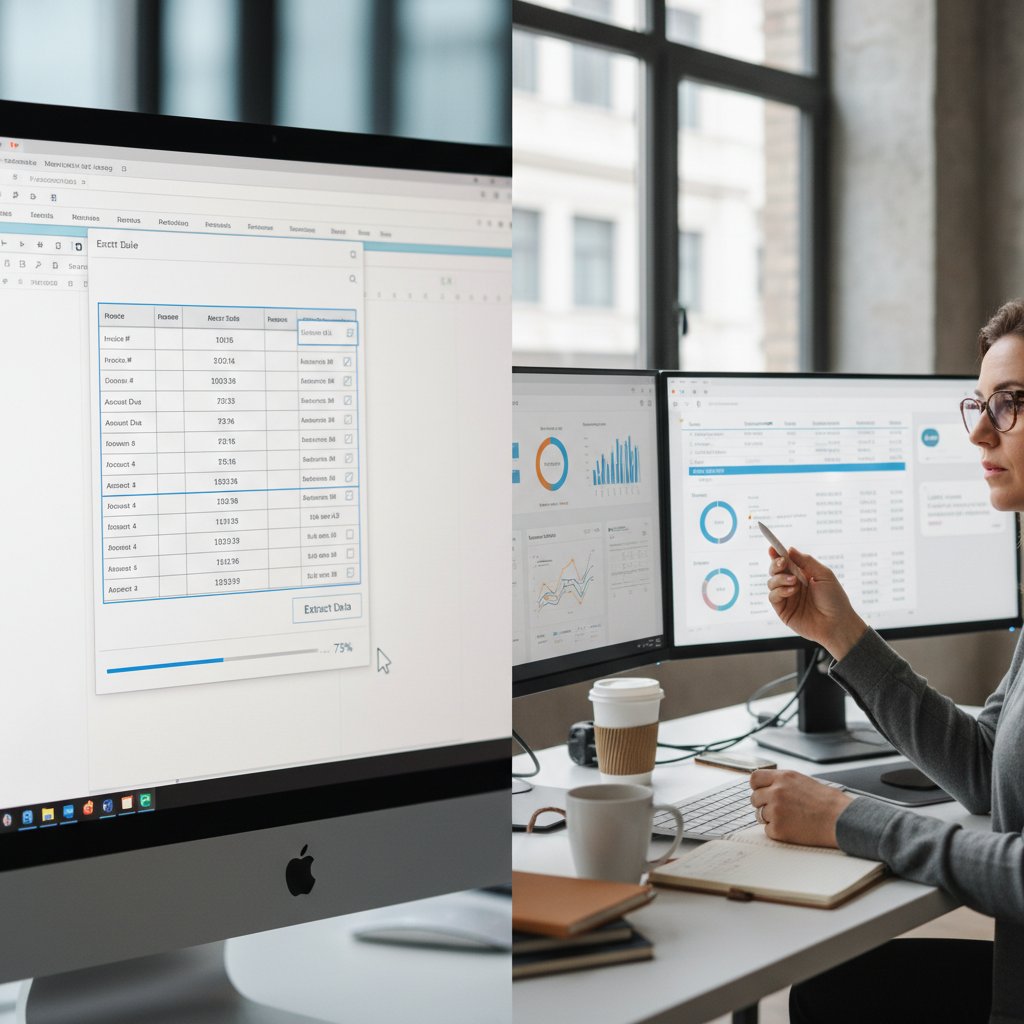

How textwall.ai changed the game for document analysis

textwall.ai, with its advanced AI-based document processor, has emerged as a go-to solution for professionals drowning in scanned PDFs. By leveraging large language models and deep learning, it delivers rapid, accurate extraction and actionable insights—even from massive, complex, or noisy document sets. In legal, market research, and academic scenarios, users report 50–70% reduction in review time and breakthrough improvements in compliance and decision-making. Its commitment to accuracy, privacy, and robust analytics represents the new gold standard for text extraction from scanned PDFs.

The dark side: Privacy, security, and the risks no one talks about

Who’s reading your documents? The privacy paradox

Every time you upload a scanned PDF for extraction, you’re exposing sensitive information—sometimes to third parties you didn’t even know existed. The privacy paradox is real: you want the convenience and power of AI, but at what cost?

- Extraction providers may retain, analyze, or even resell data from your documents.

- Cloud-based tools risk exposure to hackers or accidental public sharing.

- Regulatory compliance (GDPR, HIPAA) is non-negotiable for organizations handling personal or medical data.

- Logs and metadata might reveal more than the documents themselves.

Security slip-ups and how to avoid them

Extraction is a high-value target for cybercriminals—and for careless insiders. Here’s how the pros minimize risk:

- Vet providers for end-to-end encryption—at rest and in transit.

- Check for data residency guarantees (where is your data processed?).

- Demand audit trails and deletion policies.

- Test access controls (who can see extracted text?).

- Train staff on secure extraction workflows.
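An audit trail does not need to contain the document itself. One possible shape for a log entry, sketched below, records who did what and a SHA-256 fingerprint of the file, so you can later prove which version was processed without copying its contents into the log. The field names are assumptions, not a standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(document_bytes: bytes, user: str, action: str) -> str:
    """Build a JSON audit entry that identifies a document without storing its text."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,  # e.g. "uploaded", "extracted", "deleted"
        "sha256": hashlib.sha256(document_bytes).hexdigest(),
        "size_bytes": len(document_bytes),
    }
    return json.dumps(entry, sort_keys=True)


if __name__ == "__main__":
    fake_pdf = b"%PDF-1.7 example bytes standing in for a scanned file"
    print(audit_record(fake_pdf, user="j.doe", action="extracted"))
```

Deliberately leaving out the filename and any extracted text keeps the log itself from becoming a second copy of the sensitive data. Add those fields only if your retention policy covers them.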

Mitigating risk: What the pros actually do

The best teams don’t just install an extraction tool—they build layered defenses. They combine on-premises processing for sensitive files, strict user permissions, and regular audits to lock down the entire pipeline.

Step-by-step: Mastering text extraction from scanned PDFs in 2025

Pre-extraction checklist: Are you ready?

Success favors the prepared. Before you even upload a document, run through these critical steps:

- Assess scan quality—are images legible, properly oriented, and high-resolution?

- Segment multi-document PDFs—each should stand alone for best results.

- Scrub sensitive data if privacy is a concern.

- Verify the extraction tool’s compliance and accuracy stats.

- Test with a sample before committing your entire archive.

The extraction process: Pro tips and pitfalls

Extraction is part science, part dark art. Here’s how to get it right:

- Always use the highest-quality scan available—garbage in, garbage out.

- Choose tools with AI-powered postprocessing for tables, handwriting, and layout.

- Verify extracted data—especially for legal, medical, or financial documents.

- Don’t trust “auto-detect” blindly; specify language, document type, and zones where possible.

- Keep a human in the loop for critical extractions.
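Keeping a human in the loop is easier when the hand-off rule is explicit. The sketch below assumes your extraction tool reports a confidence score per field, which many do, though the scale and meaning vary by vendor. The 0.90 threshold and the field names are placeholders to tune against your own error data.

```python
from dataclasses import dataclass


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # 0.0 to 1.0, as reported by the (hypothetical) extraction tool


def split_for_review(fields, threshold=0.90, always_review=frozenset()):
    """Separate fields that can be auto-accepted from those a person must check.

    Fields named in always_review go to a human regardless of confidence.
    """
    accepted, needs_review = [], []
    for field in fields:
        if field.name in always_review or field.confidence < threshold:
            needs_review.append(field)
        else:
            accepted.append(field)
    return accepted, needs_review


if __name__ == "__main__":
    fields = [
        ExtractedField("vendor", "Acme Corp", 0.99),
        ExtractedField("invoice_date", "2024-03-01", 0.72),
        ExtractedField("allergies", "penicillin", 0.97),
    ]
    ok, review = split_for_review(fields, always_review=frozenset({"allergies"}))
    print("accepted:", [f.name for f in ok])
    print("review:  ", [f.name for f in review])
```

The `always_review` set matters because a confidence score describes how sure the model is. It does not tell you whether the value is right, and some fields are too costly to get wrong.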

Post-extraction: Cleaning, verifying, and using your data

Extraction doesn’t end when you get a text file. Here’s the full workflow for clean, actionable results:

- Review output for obvious errors or omissions.

- Cross-check key figures and dates with source scans.

- Normalize and structure the data—use tables, tagging, and metadata.

- Securely store or transmit extracted text (apply encryption, access controls).

- Leverage analytics—feed clean data into reporting, BI, or compliance systems.

Power user secrets: Advanced strategies for complex documents

Extracting tables, forms, and handwriting

Complex layouts demand more than brute-force OCR. Advanced users optimize extraction by:

- Training custom AI models on their own handwriting or document templates.

- Using tools with semantic parsing to map table columns and form fields.

- Running multiple extraction passes—one for text, another for tables or signatures.

- Validating results with domain-specific checks (e.g., legal clause detection, medical terminology).

Typical cases:

- Handwritten doctor’s notes require specialized recognition models.

- Financial tables benefit from semantic validation—totals, currency, and dates.

- Forms with checkboxes or signatures often need manual review post-extraction.
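Semantic validation of financial tables can start with simple arithmetic: do the extracted line items add up to the extracted total? The sketch below assumes US-style number formatting (comma thousands separator, dot decimal) and a one-cent tolerance. Both are assumptions to adapt to your documents.

```python
import re
from decimal import Decimal, InvalidOperation


def parse_amount(raw: str) -> Decimal:
    """Parse an amount such as "$1,200.00", dropping currency symbols and commas."""
    cleaned = re.sub(r"[^\d.\-]", "", raw)
    return Decimal(cleaned)  # raises InvalidOperation if nothing numeric is left


def totals_match(line_items, stated_total, tolerance=Decimal("0.01")) -> bool:
    """Return True if the line items sum to the stated total within the tolerance."""
    try:
        computed = sum((parse_amount(item) for item in line_items), Decimal("0"))
        stated = parse_amount(stated_total)
    except InvalidOperation:
        return False  # unparseable amounts count as a failed check
    return abs(computed - stated) <= tolerance


if __name__ == "__main__":
    items = ["$1,200.00", "$350.50"]
    print(totals_match(items, "$1,550.50"))  # amounts agree
    print(totals_match(items, "$1,500.50"))  # a misread digit in the total
```

A failed check does not say which number is wrong, only that the row needs a look at the original scan. `Decimal` is used instead of floats so that cent-level comparisons are exact.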

Batch processing and automated workflows

Scaling up? Batch processing and automation are your friends:

- Group similar documents for bulk extraction—maximize model accuracy.

- Automate validation and error flagging using scripts or API integrations.

- Schedule routine extractions (daily, weekly) for ongoing workflows.

- Integrate extraction with document management and analytics platforms.

- Set up alerts for extraction failures or anomalies.
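A batch runner with failure flagging can be quite small. In the sketch below, `extract` is whatever function wraps your OCR tool or API (it is injected, so nothing here assumes a specific product), and documents that raise an error or come back empty are collected for alerting instead of stopping the run.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_batch(paths, extract, max_workers=4):
    """Run extract(path) over many files; return (results, failures) dictionaries."""
    results, failures = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(extract, path): path for path in paths}
        for future in as_completed(futures):
            path = futures[future]
            try:
                text = future.result()
            except Exception as exc:  # one bad file should not sink the batch
                failures[path] = repr(exc)
                continue
            if not text.strip():
                failures[path] = "empty output"  # often a blank or unreadable scan
            else:
                results[path] = text
    return results, failures


if __name__ == "__main__":
    # Stand-in extractor for demonstration; replace with a call to your real tool.
    def fake_extract(path):
        if "corrupt" in path:
            raise ValueError("unreadable file")
        return "" if "blank" in path else f"text from {path}"

    done, failed = run_batch(["a.pdf", "blank.pdf", "corrupt.pdf"], fake_extract)
    print(sorted(done))
    print(sorted(failed))
```

Threads suit the case where extraction is a network call to an API. For CPU-bound local OCR, a process pool is usually the better fit.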

Integrating with AI analytics tools

The real payoff from extraction comes when you feed clean data into analytics tools—spotting patterns, risks, and opportunities hidden in the chaos.

The future of text extraction: AI, ethics, and what comes next

AI hallucinations and the accuracy dilemma

AI-powered tools are a double-edged sword. When they get it right, they’re magic. When they hallucinate—confidently outputting plausible but wrong data—the stakes are high.

“AI hallucinations are a growing problem: extracted text might look real, but always verify against the original scan.” (AlgoDocs, 2024)

| Risk Factor | Impact | Mitigation |
|---|---|---|
| Hallucinated text | False legal or financial claims | Always cross-check with original scans |
| Context errors | Misattributed data | Use domain-tuned models |
| Overconfidence | Unnoticed mistakes | Human-in-the-loop review |

Table 6: The real-world impact of AI hallucinations in scanned PDF extraction.

Source: Original analysis based on AlgoDocs, 2024
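Cross-checking every page against the scan by hand does not scale, so a practical shortcut is to run two independent extractions (for example, two tools, or the embedded text layer versus a fresh OCR pass) and send only the disagreements to a reviewer. That two-pass setup is an assumption of this sketch, not a method described in the cited sources. The comparison uses Python's standard `difflib`.

```python
import difflib


def disagreements(output_a: str, output_b: str):
    """Return (a_text, b_text) pairs for every span where two extractions differ."""
    a_tokens = output_a.split()
    b_tokens = output_b.split()
    matcher = difflib.SequenceMatcher(a=a_tokens, b=b_tokens, autojunk=False)
    diffs = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            diffs.append((" ".join(a_tokens[i1:i2]), " ".join(b_tokens[j1:j2])))
    return diffs


if __name__ == "__main__":
    tool_a = "Total due 1,250.00 by 2024-03-01"
    tool_b = "Total due 1,280.00 by 2024-03-01"
    for left, right in disagreements(tool_a, tool_b):
        print(f"check scan: {left!r} vs {right!r}")
```

Agreement between two tools is not proof of correctness, since both can misread the same smudge. What it does is narrow the human review to the spans most likely to be wrong.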

Ethical dilemmas and bias in document analysis

AI doesn’t just mirror reality—it can encode and amplify biases:

- Training data may underrepresent minority languages or handwriting styles.

- Extraction tools might misclassify culturally specific terms.

- Automated redaction could miss sensitive content in unconventional formats.

- Lack of explainability can mask systemic errors or bias.

What to watch for in the next five years

The pace of change is relentless. Industry leaders and researchers highlight key areas of focus:

- Continuous learning: AI tools that adapt to user corrections in real time.

- Universal accessibility: Extraction for all languages, scripts, and disabilities.

- Stronger privacy: Zero-knowledge processing—no trace left behind.

- Explainability: Tools that show not just what they extracted, but how and why.

- Integrated analytics: Seamless flow from extraction to insight, without friction.

Adjacent frontiers: Unconventional uses and overlooked opportunities

Extracting insights from historical archives

Archivists and historians are using text extraction to unlock treasures from handwritten letters, old contracts, and typewritten government records—making decades-old data searchable and analyzable for the first time.

Text extraction in creative industries

Artists, writers, and filmmakers are mining scanned PDFs for inspiration, script analysis, and even AI-generated poetry based on archival letters.

- Screenwriters extract dialogue from old scripts for analysis.

- Designers generate word clouds from scanned journals for visual projects.

- Musicians sample phrases from historic records as lyrics.

The role of text extraction in accessibility

Extraction is no longer a “nice to have”—it’s a legal and ethical imperative. Modern solutions make scanned content usable by screen readers, search engines, and adaptive devices.

- Convert scanned books to accessible e-books for visually impaired readers.

- Enable keyword search in digitized archives for all users.

- Add alt text and metadata for adaptive technologies.

- Support real-time translation and read-aloud tools.

- Meet compliance standards (ADA, Section 508) for digital accessibility.

Glossary: Demystifying the jargon of scanned PDF extraction

OCR (Optical Character Recognition): The process of converting images of text (from scans or photos) into machine-readable, editable text using algorithms.

AI-enhanced OCR: OCR powered by artificial intelligence, machine learning, and deep learning for greater accuracy, flexibility, and adaptability, especially with messy scans or handwriting.

NLP (Natural Language Processing): AI techniques that help machines understand, interpret, and generate human language. Critical for parsing invoices or contracts.

Text layer: An invisible, machine-readable layer embedded in some PDFs after OCR, enabling search and copy-paste.

Image layer: The actual scanned “photo” inside a PDF, which is what the OCR algorithms analyze.

Preprocessing: The set of steps (like deskewing and denoising) that improve scan quality before extraction.

Postprocessing: Corrections and structuring steps after initial character recognition, often using NLP and dictionaries.

Handwriting recognition: Specialized OCR for cursive or printed handwriting; now feasible thanks to deep learning.

Human-in-the-loop: A best practice where critical extractions are reviewed or corrected by humans, not fully automated.

When to use which term: Contexts and caveats

In technical discussions, reserve “AI-enhanced OCR” for tools leveraging neural networks and machine learning—not simple template-matching. “Text layer” applies only to OCR-processed PDFs, not raw scans. Use “NLP” when discussing context-aware extraction from complex documents.

- Use “OCR” for basic, image-to-text conversion.

- Use “AI-powered extraction” for solutions combining OCR with deep learning and NLP.

- Specify “human-in-the-loop” when accuracy is mission-critical.

Final takeaways: Don’t let your data rot—extract, analyze, and act

Synthesis: Why extraction is everyone’s problem now

You can’t afford to ignore what’s locked inside your scanned PDFs. The risks, opportunities, and obligations are simply too big. Extraction is no longer a technical footnote—it’s a frontline defense against chaos, compliance disasters, and lost opportunity. With advanced AI, NLP, and platforms like textwall.ai, even the messiest archives become actionable insights. But the responsibility for accuracy, privacy, and ethical use falls on every organization and individual who handles scanned content.

Actionable next steps for readers

- Audit your archives: Identify where your scanned PDFs live and what’s inside them.

- Test extraction quality: Use sample documents and compare different tools (including advanced AI solutions).

- Prioritize privacy: Vet providers, demand end-to-end security, and know your data’s journey.

- Build human checkpoints: Don’t trust automation blindly; keep humans in the loop for critical use cases.

- Make accessibility non-negotiable: Ensure your extracted data serves all users, including those with disabilities.

- Iterate and improve: Extraction isn’t “set and forget”—review, correct, and retrain as you scale.

- Leverage analytics: Feed your clean data into reporting and BI tools for maximum value.

- Stay current: Follow authoritative sources and industry updates to keep ahead of threats and opportunities.

When you stop treating text extraction from scanned PDFs as an afterthought, you transform chaos into clarity and lost data into decisive action. The tools, strategies, and realities are now in your hands—use them wisely, and your documents won’t just live, they’ll work for you.

Sources

References cited in this article

- QueryDocs: 5 Ways AI is Transforming Document Analysis in 2024 (querydocs.ai)

- Appian: AI Document Analysis (appian.com)

- ComPDF: AI-Powered OCR SDK (compdf.com)

- AlgoDocs: Comprehensive Guide 2024 (algodocs.com)

- IP Location: Accessibility Impact (iplocation.net)

- Rely Services: Importance of Document Digitization (relyservices.com)

- VisionX: OCR Data Extraction (visionx.io)

- Parseur: Real-Life Scenario (parseur.com)

- Medium: PDF Structure Recognition (medium.com)

- Caelum.ai: Extraction Failures (caelum.ai)

- Affinda: Evolution of OCR (affinda.com)

- Docsumo: OCR History (docsumo.com)

- Veryfi: OCR History (veryfi.com)

- OneAdvanced: OCR Brief History (oneadvanced.com)

- arXiv: LLMs for Generative IE (arxiv.org)

- Nature Communications: Structured Extraction (nature.com)

- AlgoDocs: Myths (algodocs.com)

- Ars Technica: Extraction Nightmares (arstechnica.com)

- ExpertBeacon: OCR Accuracy (expertbeacon.com)

- University of Illinois Guide (guides.library.illinois.edu)

- Optiic: OCR in 2024 (optiic.dev)

- LinkedIn: OCR Pipelines (linkedin.com)

- Unstract: LLM Approaches (unstract.com)

- Towards Data Science: Extraction Guide (towardsdatascience.com)

- Xodo: How to OCR a PDF (xodo.com)

- super.ai: Table Extraction (super.ai)

- HURIDOCS: Open-source PDF Layout Analysis (huridocs.org)

- Microsoft: Document Intelligence (learn.microsoft.com)

- Nanonets: Best OCR Software (nanonets.com)

- Affinda: Open Source OCR Review (affinda.com)

- Parseur: Automated Extraction (parseur.com)
