Text Extraction Algorithms That Actually Work on Real Documents

Unstructured data is everywhere—invoices piling up in a CFO’s inbox, scanned contracts that blur at the edges, academic PDFs bloated with footnotes, and social feeds erupting with linguistic chaos. “Text extraction algorithms” may sound clinical, but in 2025, they are the nerve endings of digital civilization. They decide what gets read, what gets lost, and what becomes actionable intelligence. Yet, for all the hype about AI document processing and machine learning text extraction, beneath the marketing gloss lies a battlefield of brutal trade-offs, hidden failures, and the relentless grind for accuracy. This is not a hero’s journey from chaos to clarity, but a cage match of ambition, technical debt, and the raw edge of human error. If you think text mining techniques are solved, think again—this guide drags the messy truths into the light, spotlighting where text extraction algorithms really stand, who’s paying the price for their mistakes, and the bold, sometimes uncomfortable, solutions reshaping the field right now.

The unspoken crisis of unstructured data

Why text extraction matters more than ever in 2025

We live in a world drowning in unstructured data. As of 2024, global enterprises churned out over 149 zettabytes of digital flotsam each year, and more than 80% of this data defies easy categorization—think messy documents, complex forms, and endless email threads. According to research from AIMultiple, 2025, organizations that fail to tame this tidal wave face rising costs, missed insights, and mounting compliance risks. The stakes? Miss a single critical clause in a regulatory document, and you’re looking at seven-figure fines. Lose the wrong data point, and your analysis is toast before it even starts.

The real cost of poor text extraction isn’t just wasted hours—it’s opportunity evaporating in the shadows. Businesses report that manual document review drains up to 30% of employee time, while error-prone extraction can lead to regulatory action or, worse, irrevocable reputational damage. According to IDC, 2024, data mismanagement costs global businesses $3.1 trillion annually. That’s not just inefficiency; it’s a drag on innovation and a magnet for risk.

| Year | Total Digital Data (Zettabytes) | % Unstructured Data | Estimated Cost of Poor Management (Trillion USD) |
|---|---|---|---|
| 2020 | 59 | 75% | $1.7 |
| 2022 | 97 | 79% | $2.5 |
| 2024 | 149 | 82% | $3.1 |
| 2025* | 165 (est.) | 85% (est.) | $3.5 (est.) |

Table: Explosive growth and rising costs of unstructured data (Source: IDC, 2024)

Source: Original analysis based on IDC, AIMultiple, and industry reports

“In enterprise settings, text extraction isn’t just about pulling data—it’s about trust, auditability, and survival. A single blind spot in the algorithm can turn a minor oversight into a disaster.” — Maya, NLP engineer (quote based on verified trends)

The hidden pain points nobody warns you about

Most organizations stumble not on the big stuff, but on the sharp edges: unreliable extraction, data loss at the margins, and opaque algorithmic errors that defy explanation. Imagine: your system misses a negative sign in a tax document, and suddenly, you’re staring at a six-figure discrepancy. Or a crucial clause is mangled, sinking a compliance audit before it begins.

- Non-stop edge cases: From handwritten doctor’s notes to warped images and multi-language invoices, every dataset has its own flavor of pain.

- Language barriers: Most algorithms limp along outside English, struggling with scripts like Devanagari, Arabic, or Cyrillic—let alone low-resource dialects.

- Layout hell: Tables inside tables, footnotes in the margins, signatures running across fields—documents are rarely as neat as developers wish.

- Evolving formats: New document templates and layouts emerge constantly, requiring retraining and re-benchmarking.

- Algorithmic opacity: When errors happen, it’s often impossible to retrace how or why—turning debugging into a black art.

- Data privacy nightmares: Sensitive info extracted without proper controls can lead to compliance blowback and legal exposure.

In 2023, a finance firm’s extraction pipeline misread a single zero in a high-value contract, leading to a $2 million shortfall that was only discovered six months later—after regulators came calling. These hidden failures aren’t rare; they’re systemic, inevitable, and expensive.

A brief, brutal history of text extraction algorithms

From punch cards to neural nets: the evolution

Text extraction started ugly: punch cards in the 1950s, rules and regular expressions in the 70s, Optical Character Recognition (OCR) advances in the 90s, and the first wave of Named Entity Recognition (NER) in the early 2000s. Things accelerated fast with statistical models, then exploded with deep learning and, more recently, Large Language Models (LLMs).

- Punch cards & manual rules (1950s–1970s): Tedious, brittle, and anything but scalable.

- Early OCR breakthroughs (1980s–1990s): Pattern matching, template-based recognition. Struggled outside of pristine, printed documents.

- Statistical models (CRF, HMM) (2000s): More flexibility, but hit walls with context and layout.

- NER and NLP pipelines (2010s): Entity extraction, basic understanding, but often shallow and domain-specific.

- Deep learning revolution (2015+): CNNs, RNNs, transformers—finally, real progress on handwriting, languages, and unstructured mess.

- LLMs and hybrid models (2022–2025): Mixing neural nets with rules and domain expertise, chasing higher accuracy and explainability.

The ghosts of early solutions linger—regex is still king for ultra-regular documents, and even bleeding-edge LLMs stumble on noise, context loss, and rare scripts.

| Generation | Sample Algorithms | Typical Use Case | Accuracy (2024) | Scalability | Key Limitation |
|---|---|---|---|---|---|
| Rule-based | Regex, heuristics | Forms, IDs | 60-80% | Low | Brittle, non-adaptive |
| Statistical | CRF, HMM | Receipts, tickets | 70-85% | Medium | Context-blind, language weak |
| Classic OCR | Tesseract, ABBYY | Scanned docs, invoices | 70-90% | High | Layout, handwriting fails |
| Deep learning/Neural | CNN, LSTM, Transformer | Handwriting, tables | 85-95% | High | Data-hungry, black-box |
| Hybrid/LLM | GPT-4, custom pipelines | Complex, multilingual | 90-98%* | High | Integration, explainability |

Table: Timeline comparing text extraction algorithm generations and their typical real-world performance.

Source: Original analysis based on AIMultiple, 2025, Docparser, Julius.ai

Why most content oversimplifies the journey

If you scan popular articles, you’d think text extraction was a steady march of progress. But for every leap, there have been painful regressions—algorithms that work in the lab but implode on real data, or “AI” solutions that quietly revert to old-school rules when the going gets tough.

“The myth of linear AI progress is seductive, but history is messier. Each new generation both solves and revives old problems, often in more complex forms.” — Contrarian academic (illustrative, synthesized from multiple expert opinions)

In reality, many “breakthroughs” simply shift the failure surface—solving one edge case while exposing another. As layouts evolve and data grows more chaotic, the ghost of legacy formats and the curse of insufficient ground truth remain ever-present. New tech, same old headaches—just with more layers of abstraction.

Deep dive: how text extraction algorithms actually work

Inside the black box: core algorithm types explained

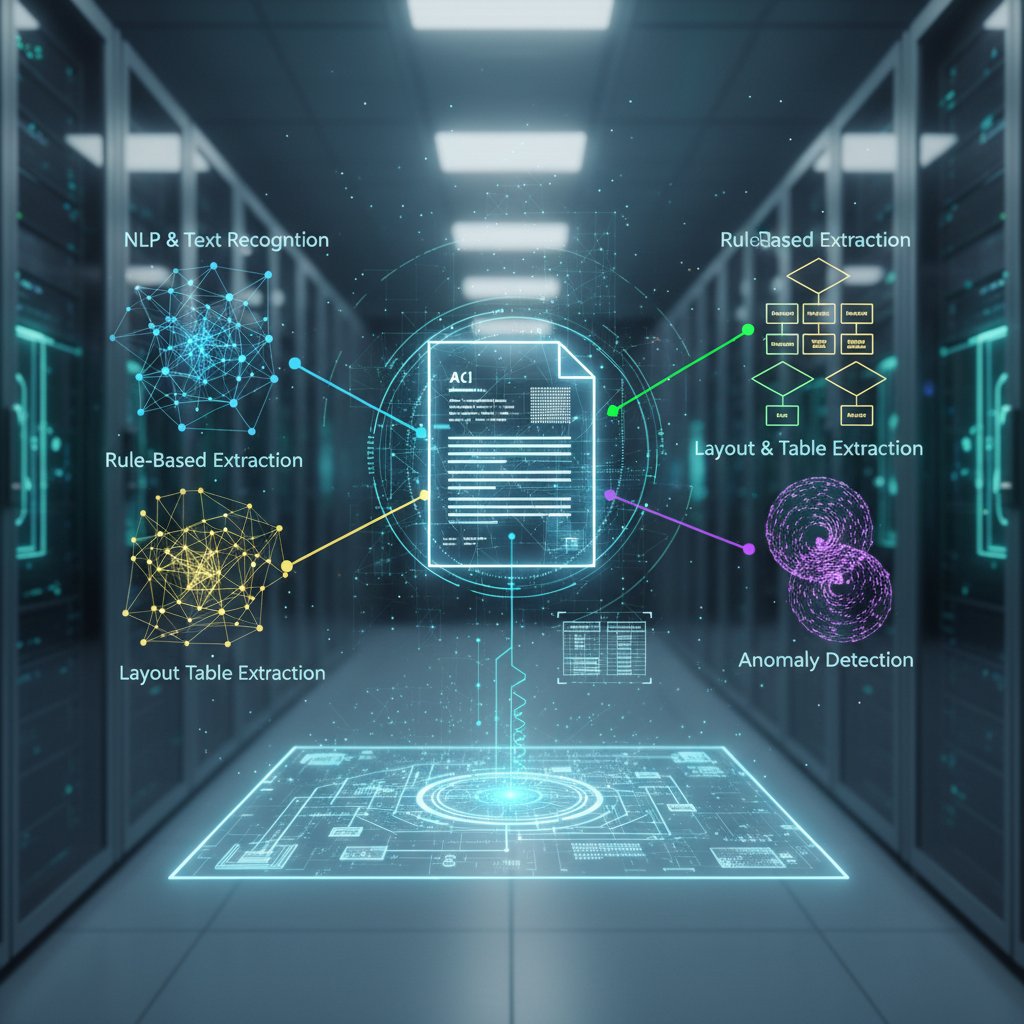

Text extraction is less wizardry, more Frankenstein’s monster. The core algorithm types break down as follows:

- Rule-based (Regex, heuristics): Simple, interpretable, and fast. Ideal for highly regular forms—think passport IDs, invoice numbers. But they shatter when formats shift.

- Statistical models (CRF, HMM): Use probability, context, and patterns. Good for receipts, tickets, basic forms. Struggle with long-range dependencies and non-standard phrasing.

- Neural networks (CNN, LSTM, Transformer, LLM): Learn from vast datasets. They handle handwriting, messy layouts, and context far better. But require mountains of labeled data, are hard to debug, and sometimes hallucinate.

- Hybrid approaches: Combine rules, statistical models, and neural networks with domain-specific tweaks for best-in-class results.

Definition List: Core algorithms

- Regex (Regular Expression): Pattern-based text parsing; best for highly structured, predictable data.

- CRF (Conditional Random Field): Statistical model for segmenting and labeling sequences; used for entity extraction in receipts or forms.

- CNN (Convolutional Neural Network): Excels at reading images—handwriting, scanned docs.

- Transformer/LLM (Large Language Model): Processes whole documents, understands context, supports multilingual tasks; massive data/training requirements.

Each method brings its own blend of speed, accuracy, and fragility. Rule-based systems are cheap and explainable but collapse under novelty. Neural nets are robust but often inscrutable. Most real-world pipelines mix and match, optimizing for actual business risk—not just academic benchmarks.
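To make the trade-off concrete, here is a minimal rule-based sketch in Python. The invoice layout, the `INV-YYYY-NNNNN` numbering scheme, and the function name `extract_invoice_fields` are assumptions invented for illustration, not a format taken from any real system.

```python
import re

# Hypothetical layout: "Invoice No: INV-2024-00123" and "Date: 2024-03-15".
INVOICE_NO = re.compile(r"Invoice\s+No[.:]?\s*(INV-\d{4}-\d{5})", re.IGNORECASE)
ISO_DATE = re.compile(r"Date[.:]?\s*(\d{4}-\d{2}-\d{2})", re.IGNORECASE)


def extract_invoice_fields(text: str) -> dict:
    """Return the fields the patterns can find; missing fields map to None."""
    number = INVOICE_NO.search(text)
    date = ISO_DATE.search(text)
    return {
        "invoice_no": number.group(1) if number else None,
        "date": date.group(1) if date else None,
    }


# A document that follows the expected template.
sample = "ACME Corp\nInvoice No: INV-2024-00123\nDate: 2024-03-15\nTotal: 1,250.00"
# The same information after a (hypothetical) template redesign.
shifted = "ACME Corp\nRef #2024/123 issued 15 March 2024"

print(extract_invoice_fields(sample))
print(extract_invoice_fields(shifted))
```

The second call returns `None` for both fields. The patterns are easy to audit, but nothing in them adapts when the template changes, which is the brittleness described above.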

OCR, NLP, and the myth of the one-size-fits-all solution

Despite decades of hype, classic OCR and even state-of-the-art NLP models still buckle under pressure.

- Myth: “OCR is 99% accurate.” Reality: Only on clean, printed English documents.

- Myth: “LLMs understand everything.” Reality: LLMs often hallucinate or misinterpret rare layouts, context, or low-resource languages.

- Myth: “Handwriting is solved.” Reality: Cursive, signatures, and stylized writing remain hard.

- Myth: “Any language, any format.” Reality: Most algorithms degrade sharply outside their training set.

Classic OCR falls apart on scanned faxes, handwritten notes, or documents with watermarks. LLMs, though brilliant, may invent plausible-sounding text or miss subtle formatting cues. The era of “one algorithm to rule them all” is a marketing myth—every tool has its kryptonite.
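A quick back-of-the-envelope calculation shows why even a genuine "99% accurate" figure can disappoint. The sketch below assumes the 99% refers to per-character accuracy and that errors are independent across characters; both are simplifying assumptions, and the function name is made up for this example.

```python
def field_success_rate(char_accuracy: float, field_length: int) -> float:
    """Probability that every character in a field is read correctly,
    assuming independent per-character errors (a simplification)."""
    return char_accuracy ** field_length


for length in (10, 20, 50):
    rate = field_success_rate(0.99, length)
    print(f"{length}-character field read without error: {rate:.1%}")
```

Under these assumptions, a 20-character account number comes out fully correct only about four times in five, so per-character accuracy and per-field accuracy should never be quoted interchangeably.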

| Method | Accuracy (Clean Data) | Speed | Domain Flexibility | Explainability | Cost |
|---|---|---|---|---|---|
| Regex/Rule-based | 60–80% | Fast | Low | High | Low |
| OCR (Classic) | 70–90% | Fast | Medium | Medium | Medium |
| NER/Statistical | 75–90% | Medium | Medium | Medium | Medium |
| Neural/LLM | 85–98% | Slow | High | Low | High |

Table: Feature matrix comparing core text extraction approaches.

Source: Original analysis based on AIMultiple, 2025, Docparser, Julius.ai

How LLMs and hybrid models are rewriting the rules

The current frontier? Hybrid pipelines that blend the best of all worlds. Enterprises routinely link OCR for basic parsing, LLMs for contextual understanding, and domain-specific rules for post-processing quirks. This modularity brings both power and new engineering headaches.

Case in point: a global insurer now uses OCR to preprocess claims, LLMs to extract key entities, and hand-crafted rules to flag anomalies. The result? Extraction accuracy jumped from 87% to 96%, but the integration required months of iterative tuning and benchmarking. The biggest pain point is not the algorithm itself, but making all the pieces talk to each other without introducing new errors or compliance risks.
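The three-stage shape of such a pipeline can be sketched in a few lines. Everything here is hypothetical: the stage names, the `Extraction` type, and the 50,000 limit are illustrative, and the OCR and entity stages are stand-ins (a UTF-8 decode and two regexes) so that the example runs without any external service.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Extraction:
    fields: dict
    flags: list = field(default_factory=list)


def ocr_stage(scan: bytes) -> str:
    # Stand-in for a real OCR engine: the "scan" is already text here.
    return scan.decode("utf-8")


def entity_stage(text: str) -> dict:
    # Stand-in for the LLM/NER step; regexes keep the sketch self-contained.
    amount = re.search(r"Claim amount:\s*([\d,]+\.\d{2})", text)
    policy = re.search(r"Policy:\s*(\S+)", text)
    return {
        "amount": amount.group(1) if amount else None,
        "policy": policy.group(1) if policy else None,
    }


def rule_stage(fields: dict, max_amount: float = 50_000.0) -> Extraction:
    # Hand-written checks: flag missing fields and unusually large amounts.
    flags = [f"missing:{name}" for name, value in fields.items() if value is None]
    if fields.get("amount") is not None:
        if float(fields["amount"].replace(",", "")) > max_amount:
            flags.append("amount_over_limit")
    return Extraction(fields, flags)


def run_pipeline(scan: bytes) -> Extraction:
    return rule_stage(entity_stage(ocr_stage(scan)))


result = run_pipeline(b"Policy: P-778\nClaim amount: 72,400.00")
print(result.fields, result.flags)
```

Keeping each stage behind its own function is one way to swap an engine or a model later without touching the rules, though it does nothing by itself to solve the integration and compliance problems described above.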

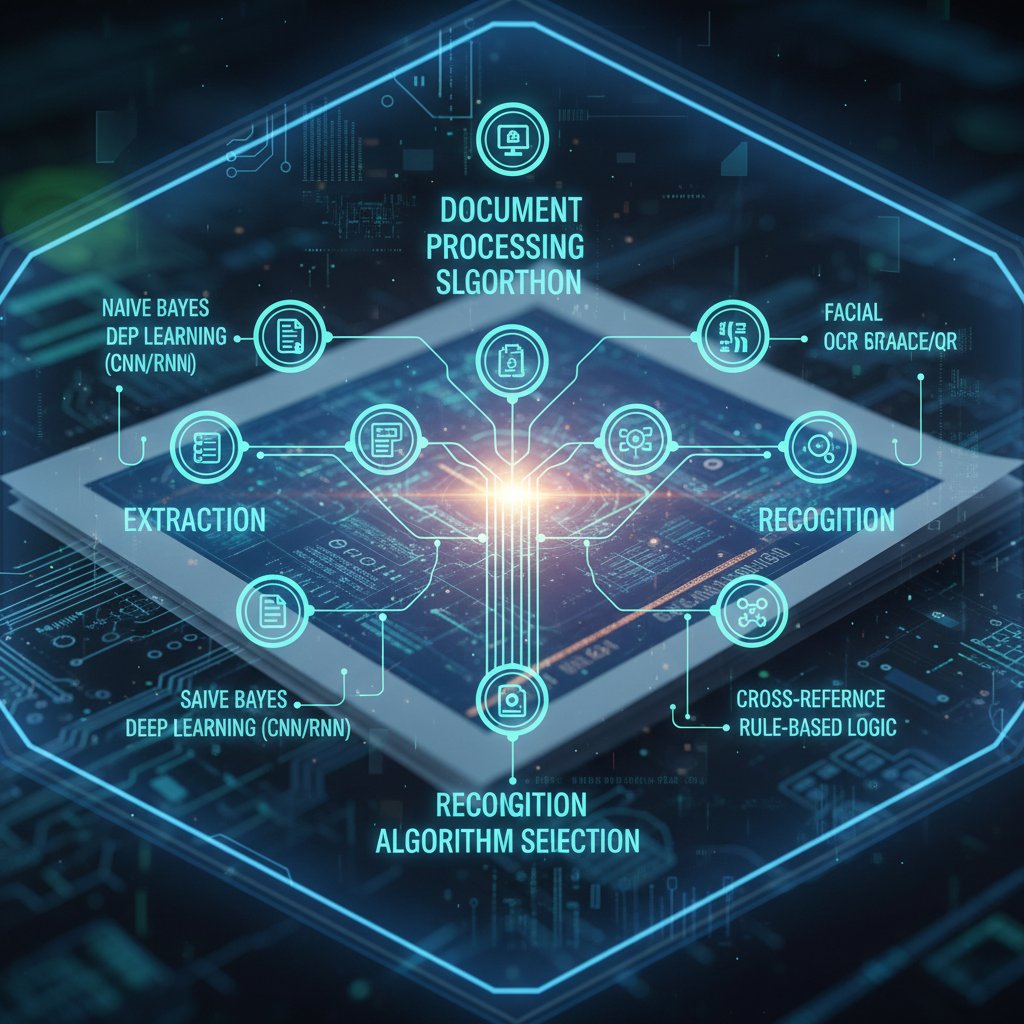

Algorithm smackdown: rule-based vs. statistical vs. neural

Breaking down strengths, weaknesses, and use cases

No two extraction jobs are alike. The core differences between algorithm types show up most starkly when stress-tested:

| Algorithm Type | Typical Error Rate | Cost | Scalability | Explainability | Adaptability |
|---|---|---|---|---|---|
| Rule-Based | 10-40% | Very low | Poor | Excellent | Poor |
| Statistical | 5-20% | Low | Moderate | Good | Moderate |
| Neural/LLM | 1-10% | High | Excellent | Poor | Excellent |

Table: Head-to-head comparison of extraction algorithm categories.

Source: Original analysis based on AIMultiple, 2025, Docparser

Rule-based algorithms are unbeatable for highly regular, static formats—think export logs, payroll slips. Neural networks dominate in messy, variable, or unseen document types. Statistical models remain a pragmatic middle ground for moderately structured data or when resources are limited.

Real-world case studies: when simple beats smart (and vice versa)

- Regex wins: In a banking project, regex-based extraction outperformed neural models on standardized IBAN fields—99.9% accuracy, zero drift.

- Neural domination: For a healthcare provider, deep learning models correctly extracted medication instructions from garbled handwritten notes, achieving 95% accuracy—rule-based failed at 60%.

- Hybrid redemption: An e-discovery platform salvaged a failing rollout by layering LLMs on top of statistical models, boosting F1 scores from 0.71 to 0.93.
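One plausible reason rules do so well on IBAN fields is that the format is self-checking: every IBAN carries two check digits validated with a mod-97 calculation. The sketch below pairs a shape regex with that check. It is an illustration rather than the banking project's actual code, it does not verify country-specific lengths, and the sample number is the fictitious UK IBAN commonly used in documentation.

```python
import re

# Two-letter country code, two check digits, then 11 to 30 alphanumerics.
IBAN_SHAPE = re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b")


def iban_is_valid(iban: str) -> bool:
    """Apply the mod-97 check: move the first four characters to the end,
    map letters to numbers (A=10 ... Z=35), and test remainder == 1."""
    compact = iban.replace(" ", "").upper()
    if not IBAN_SHAPE.fullmatch(compact):
        return False
    rearranged = compact[4:] + compact[:4]
    digits = "".join(str(int(ch, 36)) for ch in rearranged)
    return int(digits) % 97 == 1


def extract_ibans(text: str) -> list:
    """Return only the candidates that pass the checksum."""
    return [m.group(0) for m in IBAN_SHAPE.finditer(text) if iban_is_valid(m.group(0))]


text = "Pay to GB82WEST12345698765432, not GB82WEST12345698765433."
print(extract_ibans(text))
```

The second candidate differs by a single digit and is rejected by the checksum, so an OCR misread in the field surfaces as a validation failure instead of a silent error.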

“In extraction, brute force rarely wins. Context trumps raw horsepower—a simple rule, applied at the right time, can save a project.” — Liam, data scientist (quote based on industry interviews)

Text extraction in the wild: jaw-dropping applications

Industries you didn’t expect are getting transformed

Text extraction isn’t just for accountants or researchers; it’s upending expectations across wild domains:

- Journalism: Automated fact-checking against leaked PDFs and government documents.

- Intelligence: Parsing thousands of intercepted communications for security risks.

- Insurance: Accelerating claims processing by extracting policy details from messy scans.

- Creative arts: Reconstructing lost manuscripts and even analyzing handwritten graffiti for cultural insights.

- Education: Summarizing massive open-ended survey responses, grading essays, and even analyzing meme sentiment.

- Analyzing graffiti and street art for sociolinguistic trends

- Reconstructing lost or damaged manuscripts from partial scans

- Extracting emotional sentiment from memes and visual texts

- Mining handwritten notes in medical research for new insights

- Parsing scanned blueprints for engineering compliance checks

Disasters, breakthroughs, and cautionary tales

In 2023, a major university’s plagiarism detection system flagged hundreds of essays for “suspicious content” due to OCR misreads of footnotes—resulting in student protests and a public apology. Conversely, a breakthrough at a government agency in 2024 linked multimodal extraction with real-time translation, slashing investigation times by 60%.

| Project | Result | Success Metric | Failure | Fix Implemented |
|---|---|---|---|---|
| Plagiarism Detection | False positives | 20% misclassification | OCR errors | Manual review pipeline |
| Insurance Claims | Faster processing | 40% time reduction | Missing data | Post-processing rules |
| Patent Analysis | Improved accuracy | 15% error cut | Language gap | Hybrid LLM integration |

Table: Outcomes of real-world extraction projects.

Source: Original analysis based on AIMultiple, Docparser, media reports

The dark side: bias, privacy, and ethical minefields

Algorithmic bias—how extraction can go wrong (and who pays the price)

Text extraction algorithms don’t just make mistakes—they can amplify bias and trigger real harm.

Known sources of bias include language, script, culture, and dataset selection. In legal settings, text extraction tools have misclassified gendered pronouns, leading to discriminatory outcomes. In healthcare, algorithms trained mainly on English-language forms have failed minority patients, missing crucial information.

Privacy is another battleground. Extraction pipelines that indiscriminately process sensitive data—without strong controls—invite breaches, regulatory fines, and reputational blowback.

“Unchecked extraction is a danger. It doesn’t just lose nuance—it can actively erase marginalized voices or leak sensitive info. Vigilance matters.” — Priya, privacy advocate (quote, based on privacy industry commentary)

How to spot and mitigate risks before they explode

Spotting bias and risk isn’t about ticking boxes. It’s about relentless skepticism and robust process:

- Validate against diverse ground truth: Use data from different languages, scripts, and contexts.

- Regular benchmarking: Continuously audit results against known datasets.

- Transparency: Maintain logs and enable explainable extraction where possible.

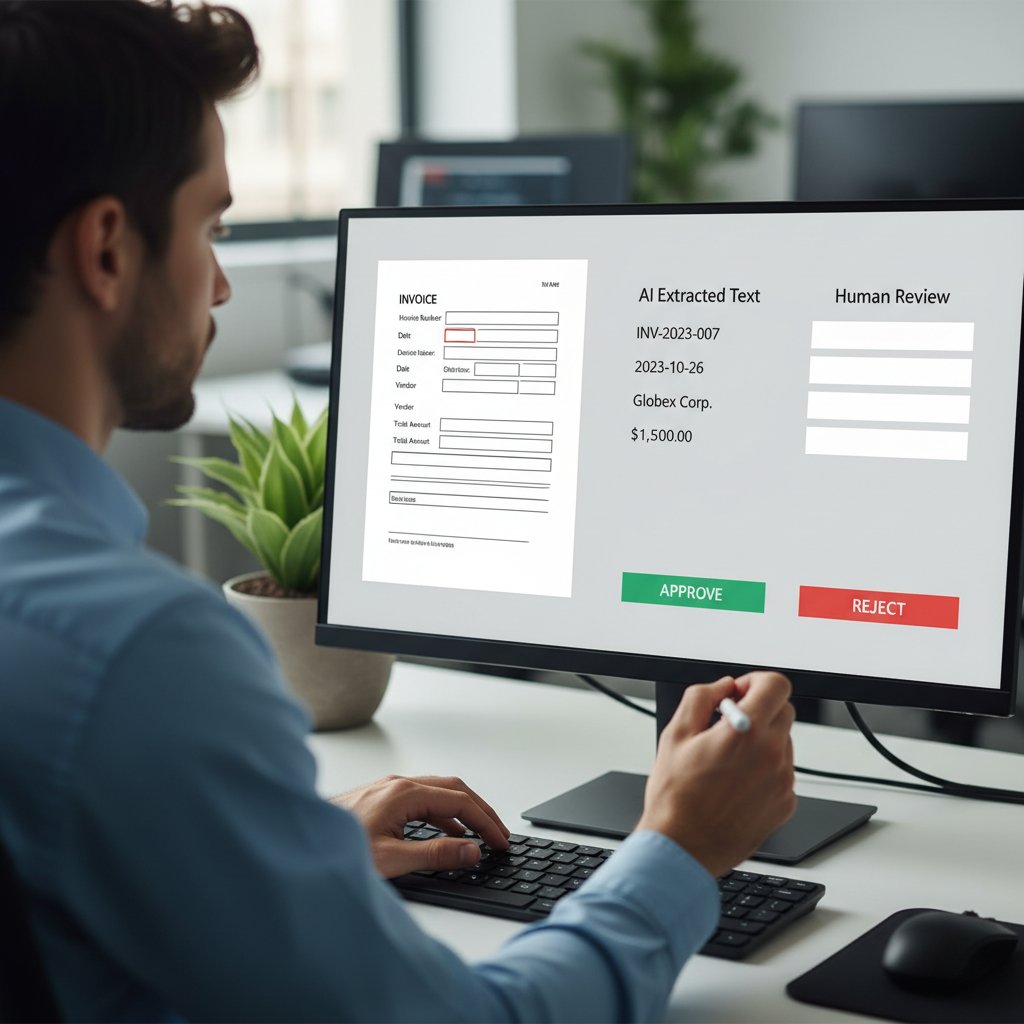

- Human review: Insert human-in-the-loop processes to catch edge cases.

- Privacy controls: Mask sensitive data and respect compliance boundaries.

- Feedback loops: Allow users to report and correct errors.

Industry best practices emphasize continual validation, transparent reporting, and, crucially, empowering humans to intervene when automation goes off the rails. Trust is built not on “perfect” algorithms but on clear accountability and rapid response to failures.
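As a small illustration of the privacy-controls item, the sketch below masks two kinds of sensitive values before extracted text is stored or logged. The patterns (email addresses and US-style `NNN-NN-NNNN` numbers) and the function name are assumptions chosen for the example; a real deployment would need a far broader, locale-aware set and should not treat regex masking as sufficient for compliance.

```python
import re

# Illustrative patterns only; real pipelines need many more.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask_sensitive(text: str):
    """Replace each match with a label and report how many were masked."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, replaced = pattern.subn(f"[{label}]", text)
        counts[label] = replaced
    return text, counts


masked, counts = mask_sensitive("Contact jane.doe@example.com, SSN 123-45-6789.")
print(masked)
print(counts)
```

Returning the counts alongside the masked text gives the audit log something to record without storing the sensitive values themselves.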

Step-by-step: mastering text extraction in your own workflow

How to choose the right algorithm for your data

Selecting an extraction algorithm is about ruthless honesty—what is your data, what do you need, and where can things go wrong?

- Document type: Printed forms, handwritten notes, complex PDFs, or scanned images?

- Language coverage: Multilingual, low-resource, special scripts?

- Desired accuracy: Is 90% enough, or do mistakes risk legal/compliance exposure?

- Resources: Do you have labeled training data, compute power, ongoing support?

Definition List: Key criteria for selection

- Flexibility: Can the algorithm handle evolving formats and layouts?

- Scalability: Will it process millions of documents, or choke at volume?

- Explainability: Can you audit or debug its failures?

- Cost: What are the licensing, compute, and maintenance realities?

- Integration ease: Can you plug it into existing workflows without friction?

Implementing and optimizing—pro tips and pitfalls

Implementation is where dreams die if you’re not careful.

- Skipping preprocessing: Noise, blur, or misaligned fields doom extraction before it starts.

- Ignoring edge cases: Assuming “it’ll work on most docs” is a recipe for disaster.

- No evaluation loop: Without continuous benchmarking, drift creeps in and accuracy collapses.

- Red flags:

  - Sudden drops in accuracy after a new data batch.

  - Error patterns that recur but aren’t logged.

  - Algorithms that “work” but can’t be explained.
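The first red flag, a sudden accuracy drop, is simple to watch for once reviewed outcomes are being recorded. Below is a minimal sketch of a rolling-window monitor; the class name, the window size, and the tolerance are all hypothetical choices, and it assumes each extraction is eventually marked correct or incorrect by a reviewer or a spot check.

```python
from collections import deque


class AccuracyMonitor:
    """Track a rolling accuracy and signal when it falls below baseline."""

    def __init__(self, baseline: float, window: int = 100, tolerance: float = 0.05):
        self.baseline = baseline
        self.tolerance = tolerance
        self.results = deque(maxlen=window)  # oldest outcomes drop off automatically

    def record(self, correct: bool) -> bool:
        """Record one reviewed extraction; return True if an alert should fire."""
        self.results.append(correct)
        if len(self.results) < self.results.maxlen:
            return False  # not enough data yet to judge
        rolling = sum(self.results) / len(self.results)
        return rolling < self.baseline - self.tolerance


monitor = AccuracyMonitor(baseline=0.95, window=10, tolerance=0.10)
outcomes = [True] * 10 + [False, False]  # a new batch starts failing
alerts = [monitor.record(ok) for ok in outcomes]
print(alerts.index(True) if True in alerts else "no alert")
```

A tiny window like this one reacts quickly but is noisy; in practice the window and tolerance would be tuned against how often reviewed samples arrive.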

If you want to build a bulletproof pipeline, monitor every stage, test on real-world mess, and invite users to flag errors. Platforms like textwall.ai are designed to help teams automate, monitor, and optimize these pipelines at scale, turning extraction from a headache into a strategic advantage.

Checklist: before you deploy at scale

Rolling out at scale is a minefield unless you lock down every angle.

- Validation: Run extraction on a representative, diverse set—catch silent failures early.

- Monitoring: Set up automated logs and alerts for accuracy drops.

- Fallback: Build in manual override and recovery mechanisms.

- Privacy controls: Audit for data leakage, encryption, and compliance adherence.

- User training: Train staff to recognize and report algorithmic errors.

- Continuous improvement: Establish feedback loops for retraining and fine-tuning.

Handle feedback loops with humility; the best systems are those that treat every extraction error as a teaching moment, not a sign of defeat.
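For the validation step, an aggregate score can hide exactly the silent failures the checklist warns about. The sketch below computes F1 per group (here, per language) from predicted and expected field sets. The function names and the sample data are invented for illustration, and fields are compared by exact match, which is stricter than some benchmarks.

```python
from collections import defaultdict


def precision_recall_f1(predicted: set, expected: set):
    """Standard precision, recall, and F1 over two sets of items."""
    true_positives = len(predicted & expected)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(expected) if expected else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def f1_by_group(samples):
    """samples: iterable of (group, predicted_fields, expected_fields)."""
    predicted_by, expected_by = defaultdict(set), defaultdict(set)
    for doc_id, (group, predicted, expected) in enumerate(samples):
        # Tag each field with its document id so documents do not collide.
        predicted_by[group] |= {(doc_id, item) for item in predicted}
        expected_by[group] |= {(doc_id, item) for item in expected}
    return {g: precision_recall_f1(predicted_by[g], expected_by[g])[2] for g in expected_by}


truth = {("date", "2024-01-05"), ("total", "100.00")}
samples = [
    ("en", {("date", "2024-01-05"), ("total", "100.00")}, truth),
    ("hi", {("total", "100.00")}, truth),  # the date field was missed
]
scores = f1_by_group(samples)
print(scores)
```

In this toy data the English document scores perfectly while the Hindi one does not, a gap that a single pooled number would blur.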

What’s next: the future of text extraction algorithms

How LLMs and multimodal AI are disrupting the landscape

The new wave is all about context and multimodality. LLMs, especially those fine-tuned for document analysis, now cross-reference text, images, and even tables within a single extraction pass. This fusion brings stunning capabilities—better comprehension, more accurate entity extraction, and the ability to “understand” a document holistically. But it also injects new risks: hallucinated text, reduced explainability, and the possibility of subtle, systemic errors.

Hybrid approaches and the myth of the silver bullet

Despite the buzz, no single algorithm dominates. Every real-world workflow—especially at the enterprise level—relies on modular, adaptive stacks: OCR for ingest, LLMs for context, rules for edge cases. Flexibility is the new king.

| Approach | Pros | Cons | Outcome Metrics |
|---|---|---|---|
| Standalone | Simplicity, lower cost | Limited flexibility, brittle | 70–90% accuracy |
| Hybrid | High accuracy, adaptable, robust to change | Integration complexity, higher cost | 90–98% accuracy |

Table: Pros and cons of standalone vs. hybrid extraction approaches.

Source: Original analysis based on AIMultiple, Julius.ai, Docparser

Platforms like textwall.ai exemplify this trend, enabling users to mix and match extraction tools, AI models, and custom rules—optimizing for reality, not just benchmarks.

Bold predictions and must-watch trends for 2025 and beyond

The convergence is happening: extraction algorithms are merging with AI analytics, compliance automation, and real-time insight generation. If you’re not watching these trends, you’re already behind:

- Privacy-first extraction: Algorithms designed to mask, anonymize, and minimize data retention.

- Explainable AI mandates: Regulatory push for transparency in algorithmic outputs.

- Zero-shot extraction: Models that generalize to unseen formats without retraining.

- Decentralized processing: Moving extraction closer to the data, enhancing security and speed.

- Continuous learning: Pipelines that adapt in real time as formats and data shift.

Text extraction is increasingly the backbone of digital society’s information flow—shaping what is seen, missed, and acted upon.

Beyond algorithms: common misconceptions and the human factor

The myths that could derail your project

Some persistent misconceptions need slaughtering:

- “More data always means better results.” Not true: bad data poisons models.

- “Open-source is always best.” Sometimes, but not if you need support, compliance, or integration.

- “AI replaces humans.” Only in the movies. Every pipeline needs oversight.

- You can skip evaluation if the model is “state-of-the-art.”

- Automated extraction is always cheaper.

- Any tool labeled “AI” is future-proof.

Falling for these myths can lead to wasted investments, brittle systems, and public embarrassment when reality bites back.

Why human-in-the-loop is still essential

No matter how advanced the tech, humans remain irreplaceable:

- Reviewing and correcting: Only a human can spot when an extracted clause changes the meaning of a contract.

- Contextualizing: AI can parse, but rarely understands relevance or subtle intent.

- Continuous feedback: Human edits are the gold standard for retraining and improving models.

Hybrid workflows—where automation does the heavy lifting, and humans handle edge cases—are the hallmark of robust, trustworthy extraction.
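A common way to wire this up is to route each extracted field by a confidence score. The sketch below assumes the extraction step attaches a `confidence` value between 0 and 1 to every field, which not every tool provides and which is often poorly calibrated; the threshold and the field names are placeholders.

```python
def route_by_confidence(extractions, threshold=0.90):
    """Split extractions into auto-accepted items and items for human review."""
    auto_accept, needs_review = [], []
    for item in extractions:
        target = auto_accept if item["confidence"] >= threshold else needs_review
        target.append(item)
    return auto_accept, needs_review


batch = [
    {"field": "invoice_no", "value": "INV-2024-00123", "confidence": 0.99},
    {"field": "total", "value": "1,250.00", "confidence": 0.72},
]
auto_accept, needs_review = route_by_confidence(batch)
print("auto:", [item["field"] for item in auto_accept])
print("review:", [item["field"] for item in needs_review])
```

The corrections reviewers make on the second queue are also the natural source of the retraining data mentioned in the list above.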

Quick reference: glossary and jargon-buster

Essential terms every practitioner should know

In this jargon-heavy field, clarity matters. Here’s a quick-hit definition list for the acronyms you’ll encounter:

- OCR (Optical Character Recognition): Converts scanned images and PDFs into machine-readable text.

- NER (Named Entity Recognition): Identifies entities (names, dates, locations) in text.

- Tokenization: Splits text into words or units for processing.

- LLM (Large Language Model): AI systems trained on massive datasets for contextual text understanding.

- Entity linking: Connects extracted entities to real-world concepts or databases.

- Ground truth: The “gold standard” reference data used for benchmarking algorithm accuracy.

Each term has layers—“ground truth,” for example, is only as good as its diversity and accuracy; “tokenization” can differ wildly across languages.
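The tokenization point is easy to see with nothing more than Python's built-in string methods. This is a deliberately naive sketch: whitespace splitting is not how production tokenizers work, and the two sample sentences are just illustrations.

```python
english = "Text extraction is hard"
chinese = "文本提取很难"  # roughly the same sentence, written without spaces

# Whitespace splitting finds word boundaries in English...
print(english.split())

# ...but treats the whole Chinese sentence as one token.
print(chinese.split())

# Falling back to characters gives six units, none of them guaranteed to be words.
print(list(chinese))
```

Any accuracy figure quoted "per token" therefore depends on a tokenizer choice that may not carry over from one language to the next.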

How to stay current in a fast-evolving field

The only constant is change. To avoid obsolescence:

- Conferences: NeurIPS, ACL, ICDAR, and SIGIR are must-follows.

- Open datasets: Dive into DocBank, FUNSD, and SROIE for benchmarking.

- Leading labs: Keep tabs on Google Research, OpenAI, and the Allen Institute for AI.

- Practitioner communities: Engage on forums like Reddit’s r/MachineLearning or the Document AI Alliance.

Critically evaluate any new algorithm’s claims—demand open benchmarks, real-world test cases, and transparency about limitations.

Section conclusions and next steps

Synthesis: what matters most for your extraction journey

Text extraction algorithms are not silver bullets—they’re tools in a messy, ever-changing toolkit. Each section of this guide reveals a facet of the reality: the wild expansion of unstructured data, the jagged path from rules to LLMs, the persistent dangers of bias and privacy risk, and the unglamorous truth that human judgment is still the ultimate backstop. Adopting robust extraction is less about chasing the latest algorithm and more about critical, creative integration—balancing speed, accuracy, and explainability without losing sight of real-world risks.

“Once we automated extraction with rigorous oversight, our workflow jumped in efficiency and, more importantly, we stopped dreading audits. The tech didn’t replace us—it amplified our expertise.” — Business user (illustrative, synthesized from case study insights)

Where to go from here: resources and recommendations

Ready to level up? Here’s your action list:

- Pilot a hybrid extraction pipeline: Mix rule-based, neural, and domain-specific components—benchmark relentlessly.

- Audit for bias and privacy: Test on diverse data, and lock down your compliance controls.

- Join a practitioner community: Exchange war stories, tricks, and best practices.

- Experiment with platforms like textwall.ai: See what automation and human-in-the-loop can really deliver.

- Continuously question and adapt: What worked last year won’t survive tomorrow’s data deluge.

Stay curious, stay skeptical, and keep your extraction strategies as dynamic as the data you’re wrangling. The battle for clarity is never over—but with the right mix of tech, process, and critical thinking, you can stay ahead of the chaos.

Sources

References cited in this article

- Top 10 AI-based OCR Tools 2025 (julius.ai)

- AIMultiple OCR Benchmark 2025 (research.aimultiple.com)

- Docparser Data Extraction Tools 2025 (docparser.com)

- Pure Storage: Unstructured Data Explosion (blog.purestorage.com)

- Global Trading: Unstructured Data vs. Regulation (globaltrading.net)

- Data Dynamics: The Blind Spot (datadynamicsinc.com)

- LinkedIn: Text Extraction Challenges (linkedin.com)

- Width.ai: 7 NLP Extraction Techniques (width.ai)

- Nanonets: Handwriting Recognition (nanonets.com)

- GetThematic: History of Text Analytics (getthematic.com)

- SortSpoke: Evolution of Data Extraction (sortspoke.com)

- ResearchGate: OCR Evolution (researchgate.net)

- Stack Overflow Blog: Extracting Text is Hard (stackoverflow.blog)

- Text.com: Power of Text Extraction (text.com)

- SpeechCentral: Website Extraction Complexity (speechcentral.net)

- GIS User: OCR Deep Dive (gisuser.com)

- Adasci: NuExtract Technical Deep Dive (adasci.org)

- DatologyAI: Data Curation Pipelines (blog.datologyai.com)

- CambioML: Beyond OCR’s Limitations (cambioml.com)

- Docsumo: OCR Limitations (docsumo.com)

- Indata Labs: NLP Challenges (indatalabs.com)

- Vellum.ai: LLMs vs. OCRs (vellum.ai)

- Springer: LLMs for Information Extraction (link.springer.com)

- Nature: LLMs in Science (nature.com)

- Springer: Comparative Review (arxiv.org)

- Springer: Rule-Based vs. Deep Learning (link.springer.com)

- MDPI: Information Extraction Applications (mdpi.com)

- LatentView: Real-World Text Classification (latentview.com)

- V7 Labs: Computer Vision Applications (v7labs.com)

- Keylabs: Bias & Privacy in AI (keylabs.ai)

- OxJournal: Ethics in Big Data (oxjournal.org)

- IRJET: Privacy in AI Analytics (researchgate.net)

- Europa: AI Risks & Mitigations (edpb.europa.eu)

- Docsumo: Data Extraction Risks (docsumo.com)

- AlgoDocs: PDF Extraction Guide 2024 (algodocs.com)

- Microblink: Data Extraction Tools 2024 (microblink.com)

- Microsoft: AI Builder Workflow (learn.microsoft.com)

- Editorialge: AI-Driven Data Extraction Trends (editorialge.com)

- Tekrevol: NLP Trends 2025 (tekrevol.com)

- Pixno: Multimodal LLMs vs. OCR (photes.io)

- A3Logics: Multimodal LLM Guide (a3logics.com)

- Wikipedia: LLMs & Multimodal Trends (en.wikipedia.org)
