Text Extraction Accuracy Comparison That Actually Predicts Failure

In the age of data deluge, text extraction accuracy comparison isn’t just a technical curiosity—it’s the invisible line between clarity and chaos for modern organizations. Whether you’re a legal professional parsing contracts, a researcher drowning in scholarly articles, or a business analyst desperate for clean data, the reality is brutal: not all AI-powered document analysis tools are created equal. Behind every slick marketing page promising “99% accuracy” lurks a messy underworld of hallucinated fields, unreadable handwriting, and, sometimes, seven-figure mistakes. If you think your current OCR or AI text extraction is foolproof, buckle up. This investigation cuts through the noise, exposes the marketing spin, and delivers the raw, often uncomfortable, reality of text extraction in 2025. Drawing on recent benchmarks, expert insights, and true stories from the textwall.ai trenches, this is your roadmap to separating the real winners from the fakes—before your data (and reputation) get shredded.

Why text extraction accuracy matters more than you think

The $10 million typo: When machines fail, who pays?

Picture this: A multinational finance firm relies on automated text extraction to scan thousands of invoices for compliance. One misread digit—a single “8” interpreted as a “3”—flips a figure in a critical payment approval. The result? A $10 million wire transfer error, uncovered only months later during an external audit. According to AIMultiple, 2025, such extraction errors are no longer rare flukes; they’re systemic risks lurking in any workflow that trusts AI without oversight.

“When organizations skip proper validation, even the most advanced OCR can become a liability. The cost isn’t just financial—it’s about trust and credibility.” — Dr. Lila Hassan, Data Integrity Expert, AIMultiple, 2025

Beyond the hype: Real-world stakes of bad data

Let’s be blunt: Bad data costs more than money. Extraction errors can cascade downstream, corrupting analytics, compliance reporting, and even mission-critical decisions. In healthcare, a single transcription error can affect patient outcomes. In legal, it might mean a missed clause and a lost lawsuit. According to ExpertBeacon, 2024, industries with regulatory oversight face mounting pressure to justify their process hygiene—so those “invisible” mistakes suddenly become accountability nightmares.

The lure of “just automate it” is strong. But research shows the real-world fallout when you trust the wrong tool isn’t just wasted time—it’s regulatory penalties, failed audits, and brand damage. The stakes are highest in sectors where compliance and data integrity are non-negotiable: healthcare, finance, law.

| Industry | Common Document Types | Typical Impact of Low Accuracy |
|---|---|---|
| Healthcare | Patient records, forms | Compliance violations, patient harm |
| Finance | Invoices, contracts | Financial loss, regulatory fines |
| Legal | Contracts, filings | Missed clauses, court case losses |
| Market Research | Surveys, reports | Misleading trends, wasted resources |
| Academia | Papers, transcripts | Plagiarism, faulty research conclusions |

Table 1: The real-world impact of text extraction errors by industry.

Source: Original analysis based on ExpertBeacon, 2024, AIMultiple, 2025

The silent cost: How errors ripple through industries

It’s the mistakes you don’t see that bite hardest. Inaccurate extraction doesn’t just mess up one spreadsheet—it warps analytics, undermines compliance, and snowballs into strategic misfires. Imagine a market research firm reporting a “trend” that’s really just an artifact of botched parsing. Or a healthcare system flagged for noncompliance because an AI missed critical patient notes.

Here’s how those overlooked errors ripple out:

- Analytical distortion: Even a 2% word error rate (WER) can skew business intelligence, leading to poor forecasting and wasted investment.

- Regulatory exposure: In finance and healthcare, a single misread field can trigger audits, fines, or worse—permanent reputational damage.

- Operational inefficiency: Teams spend hours on manual corrections, dragging down productivity and morale.

Ultimately, the true cost of inaccuracy isn’t calculated in dollars or hours—it’s measured in missed opportunities, legal headaches, and the erosion of trust.

- Business leaders lose confidence in automation when errors go unchecked.

- Manual correction becomes routine, but the underlying root cause is rarely fixed.

- Organizations start to see AI not as a savior, but as just another risk to manage.

What ‘accuracy’ really means: Metrics, myths, and manipulation

Decoding the numbers: Precision, recall, and F1 explained

Let’s rip the Band-Aid off: that headline “99% accuracy” on vendor websites? It’s usually hogwash—or at best, a partial truth. To cut through the fog, you need to understand the real metrics behind text extraction accuracy comparison.

- Precision: The percentage of extracted data that is actually correct. High precision means few false positives, which is crucial in legal documents where every word matters.

- Recall: The percentage of all correct data in the document that the tool successfully extracts. High recall ensures nothing critical is missed, a must in regulatory fields.

- F1 score: The harmonic mean of precision and recall. It balances the trade-off between missing data (recall) and including incorrect data (precision).

Most tools tout their F1 score because it looks impressive. But unless you know the test dataset, document complexity, and error types, that number means almost nothing in real-world workflows.
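To make the three metrics concrete, here is a minimal sketch of field-level scoring. It assumes extraction output and ground truth are both available as simple name-to-value dictionaries and that a field only counts as correct on an exact string match; the field names and values are invented for illustration.

```python
from typing import Dict, Tuple


def field_scores(extracted: Dict[str, str], truth: Dict[str, str]) -> Tuple[float, float, float]:
    """Return (precision, recall, f1) for exact-match field extraction."""
    # A true positive is a field the tool returned with exactly the right value.
    true_pos = sum(1 for name, value in extracted.items() if truth.get(name) == value)
    precision = true_pos / len(extracted) if extracted else 0.0
    recall = true_pos / len(truth) if truth else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical invoice: one misread digit in "total", one missed field
# ("vendor"), and one field the tool made up ("po_number").
truth = {"invoice_no": "INV-1042", "total": "8,450.00", "due_date": "2025-03-01", "vendor": "Acme"}
extracted = {"invoice_no": "INV-1042", "total": "3,450.00", "due_date": "2025-03-01", "po_number": "77"}

precision, recall, f1 = field_scores(extracted, truth)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Exact matching is the strictest possible choice. A real benchmark may want to normalize whitespace, dates, or currency formats first, and that decision alone can move the headline number.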

The myth of 99%: Why headline scores deceive

Vendors love to trumpet 99% accuracy, but what does that actually mean? Is it 99% of words, fields, or characters? Is it on pristine PDFs or coffee-stained scans? According to Marketing Scoop, 2024, the answer is almost always “it depends”—and most users never see that tiny asterisk.

“Benchmarking is easy to manipulate. If you cherry-pick clean test sets, even basic engines look like superstars.” — Alex Crawford, OCR Benchmark Analyst, Marketing Scoop, 2024

| Tool | Claimed Accuracy | Tested WER (Printed) | Tested WER (Handwritten) |
|---|---|---|---|
| ABBYY FineReader | 99.8% | 1.1% | 7.2% |
| Google Cloud Vision | 99% | 2.3% | 12.5% |
| Tesseract | 98% | 3.5% | 20.1% |
| AWS Textract | 98.7% | 2.0% | 10.8% |

Table 2: Claimed vs. real-world accuracy (WER = Word Error Rate).

Source: AIMultiple, 2025
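For readers who want to reproduce a WER figure on their own documents, the sketch below uses the standard definition: word-level edit distance (substitutions, deletions, insertions) divided by the number of words in the reference. It assumes plain whitespace tokenization with no case or punctuation normalization, which is a simplification; the sample sentences are invented.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference text must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from the output
            insertion = curr[j - 1] + 1  # extra word in the output
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


reference = "pay 8 million to vendor by friday"
hypothesis = "pay 3 million to vendor friday"  # one misread digit, one dropped word
print(f"WER = {word_error_rate(reference, hypothesis):.1%}")
```

Note that WER can exceed 100% when the output contains many inserted words, and that it treats a misread amount exactly like a misread "the". That is one reason word-level scores alone say little about field-level risk.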

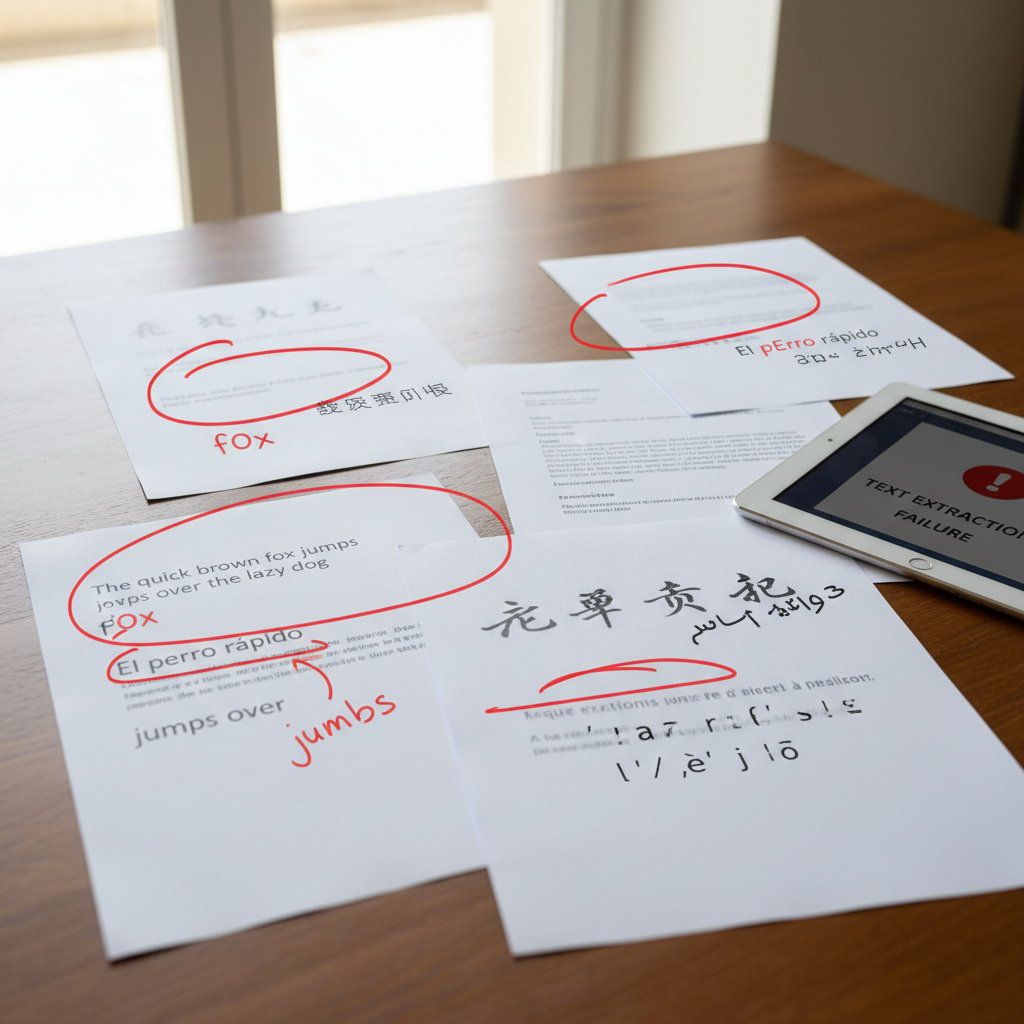

Hidden variables: How context skews results

Accuracy isn’t a fixed number—it’s a moving target shaped by document quality, language, complexity, and even the lighting of your scan. A tool claiming 98% accuracy on English invoices might barely scrape 85% on crumpled, handwritten forms.

The “silent variables” that can destroy even the best benchmarks include:

- Document quality: Skewed, noisy, or low-resolution scans can slash accuracy by 10-30%.

- Layout complexity: Tables, multi-column formats, and mixed content baffle most engines.

- Language and script: Multilingual or non-Latin scripts are a common failure point.

The reality? There’s no such thing as a universal “accuracy” metric. Context is king—and unless you stress-test in your own environment, you’re flying blind.
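One way to see how context hides inside a single number is to break results down by document condition. The sketch below assumes you already have a per-document WER and a hand-assigned segment label; the labels and figures are made up purely to show the effect.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Tuple


def wer_by_segment(results: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Average per-document WER within each document segment."""
    buckets = defaultdict(list)
    for segment, wer in results:
        buckets[segment].append(wer)
    return {segment: mean(values) for segment, values in buckets.items()}


# Illustrative (segment, WER) pairs, not measured data.
results = [
    ("clean_print", 0.01), ("clean_print", 0.02),
    ("clean_print", 0.01), ("clean_print", 0.02),
    ("handwritten", 0.18),
    ("low_res_scan", 0.12),
]

overall = mean(wer for _, wer in results)
print(f"overall WER: {overall:.1%}")
for segment, wer in sorted(wer_by_segment(results).items()):
    print(f"  {segment}: {wer:.1%}")
```

With a test set dominated by clean print, the overall figure looks respectable while the handwritten and low-resolution segments are many times worse. If your production mix leans toward those segments, the blended number is the wrong one to quote.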

Inside the AI black box: How tools really extract text

Classic OCR vs. AI-powered LLMs: What’s changed?

OCR (Optical Character Recognition) has been around for decades, but the advent of AI-powered LLMs (Large Language Models) has rewritten the playbook. Traditional OCRs like Tesseract or ABBYY rely on pattern recognition and image processing, excelling on clean, printed text. But feed them a messy, multi-column legal brief or a handwritten doctor’s note, and watch them implode.

AI models—especially those tuned for document analysis—leverage deep learning to understand context, reconstruct tables, and even “guess” missing data. According to Docsumo, 2024, modern deep learning models achieve up to 96% precision and 91% F1 score in PDF block detection, trouncing classic algorithms.

But don’t let the hype fool you—AI models can hallucinate, insert phantom fields, or utterly misinterpret structured layouts, especially when the training set doesn’t match your real-world documents.
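A quick sanity check is possible on figures like the ones quoted above: precision and F1 together pin down recall, so anyone who quotes two of the three has implicitly told you the third. As a worked example, assuming the standard F1 definition and that both numbers come from the same test run (which the source does not confirm here):

$$
F_1 = \frac{2PR}{P + R}
\;\Longrightarrow\;
R = \frac{F_1 \cdot P}{2P - F_1}
= \frac{0.91 \times 0.96}{2(0.96) - 0.91}
= \frac{0.8736}{1.01}
\approx 0.865
$$

Under those assumptions, 96% precision with a 91% F1 corresponds to a recall of roughly 86.5%, meaning about 13 or 14 of every 100 true blocks go undetected.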

| Engine/Model | Strengths | Weaknesses |
|---|---|---|
| Tesseract | Open source, fast on printed text | Poor on handwriting, layout-sensitive docs |
| ABBYY FineReader | High accuracy on clean text | Expensive, struggles with complex layouts |
| Google Cloud Vision | Scalable, multi-language support | Hallucinates on structured forms |
| GPT-4 Vision | Contextual understanding, LLM reasoning | Can hallucinate, slow on large docs |
| AI FNN (Deep Learning) | Best on PDFs, superior block detection | Needs lots of data, expensive to train |

Table 3: Comparative strengths and weaknesses of leading engines.

Source: Docsumo, 2024

The human factor: Where machines still stumble

No matter how advanced, machines still trip on the same classic hurdles: messy handwriting, overlapping tables, smudged scans, and “creative” document layouts. Even the best commercial tools require human post-processing to catch subtle errors—especially in legal, healthcare, and financial workflows where “close enough” isn’t good enough.

- Complex tables often get flattened or misaligned, requiring manual correction.

- Handwritten annotations can be missed or mistranscribed, especially in sensitive contexts.

- Edge cases—like embedded images, signatures, or multi-language content—frequently confound even top-tier AI models.

Edge cases: Multilingual, handwritten, and messy documents

Edge cases are where vendor promises go to die. You want to extract Arabic script from an old contract? Or handwritten comments from a scanned meeting note? Brace yourself.

- Multilingual documents: Many engines lack robust support for non-Latin scripts such as Chinese, Arabic, or Devanagari, leading to error rates 2-3x higher than on English.

- Handwriting: WER for handwritten scans can reach 15-20% or more, even for commercial tools.

- Dirty scans: Stains, skew, and low contrast can cripple extraction, reducing usable output by 30-40%.

The upshot: If your workflow relies on more than just clean, printed English text, you need to stress-test—not take vendor claims at face value.

2025 benchmark showdown: Leading tools compared

Methodology: How real-world accuracy was measured

Too many benchmarks are marketing theater. To cut through the noise, here’s how recent, credible head-to-heads—like the AIMultiple 2025 OCR Benchmark—actually measure accuracy:

- Assemble diverse datasets: Include printed, handwritten, multilingual, and real-world messy scans.

- Preprocess smartly: De-skew, denoise, and normalize images to level the playing field.

- Extract with multiple engines: Run each document through leading commercial and open-source tools.

- Score on WER, precision, recall, F1: Analyze output using standardized metrics, not vendor-provided numbers.

- Audit results with human validation: Check output against ground truth, especially for structured data and edge cases.

The results: Winners, losers, and shockers

When the dust settled, the results revealed some hard truths: Top commercial engines consistently outperformed open-source, especially on complex layouts and handwriting. Deep learning models delivered the best block detection and overall F1 scores, but at a cost—higher compute demands and price tags.

| Tool/Engine | WER (Printed) | WER (Handwritten) | F1 Score | Speed (pages/min) | Cost (per 1000 pages) |
|---|---|---|---|---|---|
| ABBYY FineReader | 1.1% | 7.2% | 93% | 25 | $16 |
| Google Cloud Vision | 2.3% | 12.5% | 89% | 40 | $8 |
| AWS Textract | 2.0% | 10.8% | 90% | 35 | $10 |
| Tesseract | 3.5% | 20.1% | 81% | 22 | Free |
| GPT-4 Vision | 2.2% | 8.5% | 91% | 10 | $20 |

Table 4: 2025 benchmark results for leading text extraction tools.

Source: AIMultiple, 2025

“Deep learning is king for block-level accuracy, but cost and speed still matter. No single winner for every use case.” — Priya Nair, Benchmark Lead, AIMultiple, 2025

Beyond the leaderboard: When ‘best’ isn’t best for you

Chasing top scores can blind you to what really matters: fit for your workflow, budget, and risk profile. Sometimes the “best” engine on benchmarks is a nightmare to integrate or too slow for high-volume jobs.

- Integration: APIs and SDKs vary wildly; some “plug and play,” others need bespoke engineering.

- Cost: The highest-accuracy models often carry premium pricing, which can be prohibitive at scale.

- Speed: For real-time or high-throughput scenarios, raw extraction speed can outweigh marginal accuracy gains.

- Support for niche document types: Some tools excel at invoices but fail miserably on academic or legal texts.

- Always align tool choice with your actual document mix; don’t get blinded by headline scores.

- Think total cost of ownership, not just per-page price.

- Prioritize support and community if you rely on open-source (like Tesseract) for mission-critical workflows.

Debunking common myths about text extraction accuracy

Myth 1: Higher accuracy always equals better results

The obsession with raw accuracy is understandable but misleading. A tool with 99% word-level accuracy (a 1% WER) might still require hours of manual review if it consistently fumbles crucial fields. According to Docsumo, 2024, context-specific performance often trumps headline numbers.

In regulated industries, even a rare extraction error on the wrong field can have outsized consequences. It’s less about aggregate scores and more about how the tool behaves on your “must not fail” data.

“Accuracy is necessary, but not sufficient. You need to know where errors cluster—and if they impact what matters most.” — Elena Vega, Process Automation Consultant, Docsumo, 2024
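To find out where errors cluster, it helps to score each field separately rather than pooling everything. The following is a rough sketch, assuming each document yields an extracted dictionary and a ground-truth dictionary and that a missing field counts as an error; the invoices are invented.

```python
from collections import Counter
from typing import Dict, List, Tuple

Doc = Dict[str, str]


def field_error_rates(pairs: List[Tuple[Doc, Doc]]) -> Dict[str, float]:
    """Share of documents in which each ground-truth field was wrong or missing."""
    errors, totals = Counter(), Counter()
    for extracted, truth in pairs:
        for field, expected in truth.items():
            totals[field] += 1
            if extracted.get(field) != expected:
                errors[field] += 1
    return {field: errors[field] / totals[field] for field in totals}


# Three hypothetical invoices as (extracted, ground truth) pairs.
pairs = [
    ({"vendor": "Acme", "date": "2025-01-03", "total": "8,450.00"},
     {"vendor": "Acme", "date": "2025-01-03", "total": "8,450.00"}),
    ({"vendor": "Birch", "date": "2025-01-09", "total": "3,120.00"},
     {"vendor": "Birch", "date": "2025-01-09", "total": "8,120.00"}),
    ({"vendor": "Cole", "date": "2025-02-11"},
     {"vendor": "Cole", "date": "2025-02-11", "total": "990.00"}),
]

rates = field_error_rates(pairs)
for field, rate in sorted(rates.items(), key=lambda item: item[1], reverse=True):
    print(f"{field}: {rate:.0%} of documents wrong")
```

In this toy set, seven of nine fields are correct overall, yet the one field that drives payments fails in two of three documents. That is the kind of pattern an aggregate score will never surface.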

Myth 2: All datasets are created equal

Not even close. Most public benchmarks use sanitized, “clean” documents, masking real-world challenges. Your operational dataset—think scanned receipts with coffee stains, or multi-lingual contracts with handwritten notes—may be exponentially tougher.

- Ground truth: The fully verified, human-annotated version of a document used as the gold standard for testing extraction accuracy.

- Test set: The actual set of documents run through each tool, ideally reflecting the diversity and messiness of real-world workflows.

Myth 3: Automation means no oversight needed

Blind faith in automation is a fast track to disaster. Even the best AI extraction needs validation, especially for high-stakes documents.

- Define critical fields: Know which elements can’t afford errors—don’t treat all data equally.

- Establish review workflows: Human-in-the-loop validation catches subtle errors and hallucinations.

- Audit regularly: Periodic sampling uncovers drift and ensures your system adapts to changing document types.

If you skip these steps, you’re not automating—you’re gambling.
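The three steps above can be sketched in a few lines. This assumes the engine reports a per-field confidence between 0 and 1 (many do, but check yours), and the field names, thresholds, and sample size are placeholders to tune against your own risk tolerance.

```python
import random
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # assumed to be reported by the engine, 0.0 to 1.0


# Hypothetical "must not fail" fields with a stricter bar.
CRITICAL_FIELDS = {"total", "iban"}


def needs_review(field: ExtractedField, threshold: float = 0.90,
                 critical_threshold: float = 0.99) -> bool:
    """Flag a field for human review when confidence is below its bar."""
    limit = critical_threshold if field.name in CRITICAL_FIELDS else threshold
    return field.confidence < limit


def review_queue(fields: List[ExtractedField]) -> List[str]:
    """Names of the fields a human should check."""
    return [f.name for f in fields if needs_review(f)]


def audit_sample(doc_ids: Sequence[str], k: int, seed: int = 0) -> List[str]:
    """Random sample of processed documents for a periodic audit."""
    return random.Random(seed).sample(list(doc_ids), min(k, len(doc_ids)))


fields = [
    ExtractedField("vendor", "Acme", 0.93),
    ExtractedField("total", "8,450.00", 0.97),
    ExtractedField("due_date", "2025-03-01", 0.85),
]
print(review_queue(fields))
```

Confidence scores are not calibrated guarantees, and a hallucinated value can come with high confidence. That is why the random audit sample matters even for documents where nothing was flagged.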

How to run your own text extraction accuracy tests

DIY benchmarking: Step-by-step guide

Ready to cut through the vendor hype? Here’s how to benchmark your own text extraction tools with rigor:

- Curate a tough dataset: Gather a representative sample—printed, handwritten, noisy scans, and edge cases from your actual workflow.

- Preprocess wisely: Clean up images only as much as your production environment will allow.

- Run through multiple engines: Test commercial, open-source, and AI models side by side.

- Score outputs: Use WER, precision, recall, and F1 on each document.

- Human audit: Cross-check extractions against the ground truth; flag critical misses.
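A bare-bones harness for the steps above might look like this. Everything here is a stand-in: the engines are stub functions where calls to real OCR tools or APIs would go, the file names are invented, and the score is a simple word-sequence similarity from Python's `difflib` rather than WER or F1, chosen only to keep the sketch short.

```python
from difflib import SequenceMatcher
from statistics import mean
from typing import Callable, Dict, List, Tuple

Engine = Callable[[str], str]  # takes a document path, returns extracted text


def similarity(truth: str, extracted: str) -> float:
    """Word-sequence similarity in [0, 1]; 1.0 means identical word sequences."""
    return SequenceMatcher(None, truth.split(), extracted.split()).ratio()


def run_benchmark(engines: Dict[str, Engine],
                  dataset: List[Tuple[str, str]]) -> Dict[str, float]:
    """Mean similarity per engine over (document_path, ground_truth) pairs."""
    return {
        name: mean(similarity(truth, extract(path)) for path, truth in dataset)
        for name, extract in engines.items()
    }


# Hypothetical dataset: paths paired with human-verified ground truth.
dataset = [
    ("scan_001.png", "total due 8,450.00"),
    ("scan_002.png", "net 30 payment terms"),
]
ground_truth = dict(dataset)

# Stub engines standing in for real tools.
engines: Dict[str, Engine] = {
    "perfect_stub": lambda path: ground_truth[path],
    "lossy_stub": lambda path: " ".join(ground_truth[path].split()[:-1]),
    "empty_stub": lambda path: "",
}

scores = run_benchmark(engines, dataset)
for name, score in sorted(scores.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: {score:.2f}")
```

Keeping the scoring function separate from the engine calls makes it easy to swap in WER or field-level F1 later and to rerun the same dataset whenever a tool or document mix changes.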

Red flags: What to watch out for in your results

- Consistently missed fields or misclassification of tables.

- High variance in accuracy between document types.

- Hallucinated data, extra fields, or “phantom” text not present in the originals.

- Disparities between claimed and actual performance—especially on “dirty” documents.

Stay skeptical: If the output looks too good to be true, it probably is. Always audit critical cases.

Ultimately, benchmarks are your line of defense—use them ruthlessly to keep both vendors and internal teams honest.
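Phantom data is one red flag that can be partly screened for automatically. The sketch below assumes you have both the structured fields a tool returned and a raw text layer for the same page, and it simply checks whether each value appears in that text after light normalization. It is a coarse filter, not a proof: reformatted dates or amounts will trigger false alarms.

```python
import re
from typing import Dict, List


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so layout differences are ignored."""
    return re.sub(r"\s+", " ", text).strip().lower()


def unsupported_fields(fields: Dict[str, str], source_text: str) -> List[str]:
    """Names of fields whose values cannot be found in the source text."""
    source = normalize(source_text)
    return [name for name, value in fields.items() if normalize(value) not in source]


# Hypothetical page text and extraction result.
source_text = "Invoice from ACME   Corp\nTotal due: 8,450.00"
fields = {"vendor": "Acme Corp", "total": "8,450.00", "po_number": "PO-7731"}

print(unsupported_fields(fields, source_text))
```

Anything this check flags deserves a human look. Anything it passes has only been shown to exist somewhere on the page, not to be attached to the right label.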

Checklist: Are your numbers telling the whole story?

- Does your test set match your real-world documents?

- Are you scoring on what actually matters: fields, tables, context, not just words?

- Are humans in the loop to catch subtle or high-risk errors?

- Do you regularly re-benchmark as documents and tools change?

- Are you factoring in speed and cost—not just headline accuracy?

Skimp on any step, and you’ll be flying blind.

Real-world stories: When accuracy made—or broke—a project

Case study: Legal document disaster averted

A global law firm adopted AI-based extraction to automate contract review. On a high-profile M&A, the tool skipped a tiny “no assignment” clause buried in an annex. A sharp-eyed associate spotted the omission during a manual check—averting a multimillion-dollar liability. Rigorous benchmark testing after the fact revealed that the engine’s recall on annexes was just 82%—far below its headline 98% on main body text.

The lesson: Highlight and audit high-value fields, or risk trusting a tool that’s blind where it matters most.

Academic research: The cost of a single extraction error

A research group digitizing 1,000+ historical manuscripts discovered that a recurring error in place names led to several published papers with flawed conclusions. Post-hoc analysis showed an average WER of 8.7% on these handwritten Latin texts, with entity recognition accuracy below 70%.

| Document Type | Engine Used | WER | Entity Accuracy |
|---|---|---|---|
| Printed Latin papers | Tesseract | 3.2% | 88% |
| Handwritten manuscripts | ABBYY FineReader | 7.5% | 74% |
| Handwritten manuscripts | Google Vision | 9.1% | 69% |

Table 5: Extraction error rates in academic digitization projects.

Source: Original analysis based on AIMultiple, 2025

Business operations: From chaos to clarity

In market research, a global firm reduced manual data review time by 60% after switching to a hybrid extraction workflow: commercial AI for bulk, human review for flagged edge cases. The ROI wasn’t just in speed, but in far fewer misreported trends.

“When we stopped chasing raw accuracy and focused on the intersections of speed, cost, and field-level validation, our analytics became dramatically more reliable.” — Lead Analyst, Fortune 500 Market Research, Docsumo, 2024

- Hybrid workflows deliver the best of both worlds: scale without sacrificing data integrity.

- Field-level auditing catches what bulk accuracy scores miss.

- Investing in process, not just tech, pays massive dividends.

The hidden factors you’re not comparing (but should be)

Speed vs. accuracy: The trade-off nobody talks about

Chasing sky-high accuracy often means sacrificing extraction speed. For real-time applications—think help desk automation or financial reconciliation—waiting an extra 30 seconds per document can break SLAs.

| Tool | Average Speed (pages/min) | F1 Score | Cost per 1000 pages |
|---|---|---|---|
| ABBYY FineReader | 25 | 93% | $16 |
| Google Vision | 40 | 89% | $8 |
| GPT-4 Vision | 10 | 91% | $20 |

Table 6: Speed vs. accuracy for leading extraction tools.

Source: Original analysis based on AIMultiple, 2025

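In Table 6, moving from Google Vision to ABBYY FineReader buys 4 percentage points of F1 at twice the per-page cost and about 1.6 times the processing time (25 versus 40 pages per minute). Decide what matters most for your workflow.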
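To turn the trade-off into numbers for a specific job, a small calculation is enough. The sketch below uses the speed, F1, and price figures from Table 6; the job size of 100,000 pages is an arbitrary example, and it assumes throughput and pricing scale linearly, which real deployments may not.

```python
# Figures taken from Table 6.
TOOLS = {
    "ABBYY FineReader": {"pages_per_min": 25, "f1": 0.93, "usd_per_1000": 16},
    "Google Vision": {"pages_per_min": 40, "f1": 0.89, "usd_per_1000": 8},
    "GPT-4 Vision": {"pages_per_min": 10, "f1": 0.91, "usd_per_1000": 20},
}


def job_estimate(tool: str, pages: int) -> tuple:
    """Return (minutes, cost_usd) for a job, assuming linear scaling."""
    spec = TOOLS[tool]
    minutes = pages / spec["pages_per_min"]
    cost_usd = pages / 1000 * spec["usd_per_1000"]
    return minutes, cost_usd


pages = 100_000  # arbitrary example job size
for tool, spec in TOOLS.items():
    minutes, cost_usd = job_estimate(tool, pages)
    print(f"{tool}: F1 {spec['f1']:.0%}, {minutes / 60:.1f} hours, ${cost_usd:,.0f}")
```

Running the same function with your own monthly volume and SLA makes it obvious whether a few points of F1 are worth the extra hours and spend, or whether the cheaper engine plus targeted human review is the better deal.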

Data privacy and security: When extraction gets risky

Sending sensitive documents to cloud-based AI introduces risks—data leakage, compliance breaches, or exposure to third-party training. Always vet your vendors’ security policies and check where your data is stored and processed.

- Healthcare and finance firms should insist on on-premises or private cloud solutions.

- Look for certifications—SOC 2, ISO 27001—before integrating any third-party engine.

- Understand data retention policies: does the vendor keep or delete your documents after processing?

Adaptability: How tools handle new document types

The pace of document evolution is relentless—new layouts, languages, and structures appear constantly. The best extraction tools adapt through retraining or dynamic parsing.

- Support for custom templates: Can you train the engine on your specific forms?

- Continuous learning: Does the tool improve with feedback from your team?

- Flexible APIs: Are you locked into a rigid workflow or can the tool evolve with you?

Adaptability isn’t a luxury; it’s a survival skill in data-driven industries.

The future of text extraction: Trends, threats, and opportunities

Will LLMs make classic OCR obsolete?

The rise of Large Language Models—like GPT-4 Vision—has set the industry abuzz. Their contextual understanding is a leap ahead of classic OCR, especially for complex, multi-lingual, or semi-structured documents.

“LLMs are reimagining what’s possible, but they also introduce new kinds of risk—like hallucinated content and unexplainable errors.” — Rajiv Patel, AI Research Director, AIMultiple, 2025

Emerging threats: Deepfakes, adversarial attacks, and more

As extraction engines grow more powerful, so do the threats. Deepfakes and adversarial document modifications can trick even advanced AI, embedding fake information or masking sensitive details.

- Adversarial noise: Small, intentional distortions in document images can dramatically reduce model accuracy.

- Synthetic data attacks: Fake documents designed to fool extraction engines or pollute datasets.

- Privacy exploits: Malicious actors can exploit cloud-based APIs if security isn’t airtight.

Defending against these threats requires both technical rigor and ongoing vigilance.

Opportunities: Smarter workflows and human-AI collaboration

Despite the risks, extraction tech is opening up new workflow possibilities:

- Automated triage: AI sorts and routes documents based on content, slashing manual overhead.

- Human-in-the-loop review: AI flags edge cases or low-confidence extractions for expert validation.

- Continuous learning: Tools adapt to your workflow, improving accuracy and speed with every cycle.

- Deploy hybrid workflows combining AI extraction and human review.

- Integrate feedback loops so the engine learns from mistakes.

- Leverage APIs to blend extraction with downstream analytics and automation.

The key? Treat extraction as a living process, not a “set and forget” solution.

Putting it all together: Your action plan for 2025

Priority checklist: What to do before choosing a tool

Before you pull the trigger on any text extraction engine, run through this battle-tested checklist:

- Benchmark in your own environment—don’t trust vendor numbers.

- Test with messy, real-world documents.

- Score not just on accuracy, but speed, cost, and integration ease.

- Audit edge cases and critical fields.

- Review privacy and security policies.

- Validate support for continual learning and adaptability.

Miss a step, and you’re rolling the dice with your data.

The time you invest now pays off in confidence, compliance, and clarity down the road.

When to call in the experts (and when to DIY)

If your documents are relatively standard and your risk is low, DIY benchmarking and open-source tools like Tesseract might suffice. But for high-stakes contracts, regulatory filings, or confidential records, bringing in specialist platforms and auditors—like those at textwall.ai—can mean the difference between frictionless automation and a headline-grabbing failure.

In the end, the decision isn’t binary. Many organizations blend in-house tools with expert validation, striking a balance between agility and assurance.

Why textwall.ai is worth considering in your stack

When your workflow depends on extracting actionable insights from sprawling, complex documents, expertise matters. Textwall.ai has earned a reputation among professionals for its commitment to transparency, rigorous benchmarking, and client-centered approach. As organizations face mounting pressure to justify their data hygiene, partnering with a team obsessed with accuracy and integrity is more than a technical decision—it’s a strategic one.

Textwall.ai isn’t just another vendor. It’s a resource for those who understand that “good enough” is never enough when data underpins compliance and competitive advantage.

“Our mission is clarity, not just automation. We help you see not just what your data says, but what it means, and what it risks.” — TextWall.ai Team

Supplementary: Adjacent topics and common controversies

Common pitfalls in benchmarking text extraction tools

- Relying on vendor-supplied test sets that don’t reflect real-world messiness.

- Scoring only on word-level accuracy; missing field- or table-level errors.

- Ignoring the human labor required for post-processing.

- Overlooking integration complexity and downstream analytics compatibility.

- Failing to account for costs—both financial and in lost opportunities from poor data.

Ultimately, rigorous, transparent benchmarking is your only defense against slick marketing.

Industry applications: Healthcare, law, finance, and beyond

| Industry | Example Use Case | Typical Outcome |
|---|---|---|
| Healthcare | Extracting patient case notes | Reduced admin time, higher compliance |
| Law | Reviewing contracts for missing clauses | Fewer missed risks, legal certainty |
| Finance | Reconciling invoices against statements | Fewer errors, faster audits |
| Academia | Digitizing research archives | More reliable data, faster review |
| Market Research | Parsing survey data for trends | Faster insight, fewer misreports |

Table 7: Text extraction impact by industry—real-world benefits and pitfalls.

Source: Original analysis based on ExpertBeacon, 2024

The lesson? Every vertical faces unique extraction challenges and must adapt tools and processes accordingly.

Glossary: Key terms and concepts you need to know

- Text extraction accuracy: The measure of how closely machine-extracted text matches the ground truth, considering both precision and recall.

- Word error rate (WER): The percentage of words incorrectly recognized, missed, or wrongly inserted by the extraction engine, measured against the ground truth.

- Precision: The proportion of correct extractions among all extracted fields or words.

- Recall: The proportion of all correct fields in the original document that were actually extracted.

- F1 score: The harmonic mean of precision and recall, providing a balanced performance metric.

These terms are ground zero for understanding what the numbers really mean—and how they impact your workflow.

In a world where every decision, compliance check, and strategic move hinges on clean data, text extraction accuracy comparison is no longer just for the techies. It’s the frontline of truth for modern business. The evidence is overwhelming: accuracy is essential, but context, validation, and continual improvement matter just as much. As this deep dive shows, the path from chaos to clarity is paved not with vendor promises, but with ruthless benchmarking, hybrid workflows, and relentless attention to what your numbers actually mean. Armed with these insights, you’re ready to pick tools—and partners—who value integrity as much as innovation. If you want to turn the page on data chaos, don’t just automate—validate. Your business, your clients, and your reputation will thank you.

Sources

References cited in this article

- ExpertBeacon OCR Benchmark 2024 (expertbeacon.com)

- Marketing Scoop OCR Accuracy 2024 (marketingscoop.com)

- AIMultiple OCR Benchmark 2025 (research.aimultiple.com)

- Docsumo Software Comparison (docsumo.com)

- ISPOR Poster: Achieving 100% Accuracy (ispor.org)

- Atlan Data Quality Metrics (atlan.com)

- ResearchGate Precision/Recall/F1 (researchgate.net)

- Microsoft Learn: Evaluation Metrics (learn.microsoft.com)

- TechXplore: AI Interpretability (techxplore.com)

- Anthropic: Black Box Research (aiwire.net)

- Toolify: Black Box Extension (toolify.ai)

- Sage Journals: Human Factors in Extraction (journals.sagepub.com)

- TEKLIA Multilingual OCR (teklia.com)

- ICDAR 2024 Competitions (icdar2024.net)

- Affinda Open-Source OCR Review (affinda.com)

- Parsio Top Tools 2025 (parsio.io)

- Procycons PDF Benchmark (procycons.com)

- IEEE Xplore: Weakly Annotated Images (ieeexplore.ieee.org)

- ICDAR 2023 Competition (link.springer.com)

- Emerging Tech Brew: AI Detector Reliability (emergingtechbrew.com)

- Evolution.ai: Myths Debunked (evolution.ai)

- AlgoDocs Guide (algodocs.com)

- Docsumo Accuracy Blog (docsumo.com)

- Arxiv: MINEA Metric (arxiv.org)

- GLYNT AI WattzOn Case Study (glynt.ai)

- NEJM AI: EHR Extraction (ai.nejm.org)
