Automated Summary Generation: When You Should (and Shouldn’t) Trust It

In a world where attention spans are shrinking and the avalanche of information grows heavier by the day, automated summary generation stands out as both a hero and a villain. On one hand, machine-generated summaries promise to save us from data overload, offering instant clarity in a society addicted to speed. On the other, they threaten to flatten nuance, introduce bias, and lull us into trusting algorithms with the power to shape our understanding. Welcome to the reality of AI text summarization—a realm where extractive vs. abstractive methods, document analysis automation, and LLM summarization best practices aren’t just technical terms but frontline defenses (or weapons) in the battle for cognitive survival. This deep dive exposes the real impact, hidden pitfalls, and strategic uses of automated summary generation. Get ready to see through the hype, challenge your assumptions, and learn how to harness this technology without letting it decide what you know.

The overload paradox: Why we crave automated summaries

Drowning in data: The new normal

We’re not just living in the information age—we’re drowning in it. The modern professional faces a relentless flood of reports, emails, market analyses, and legal documents. In 2023, the amount of digital content created daily hit record highs, with the average knowledge worker spending nearly 30% of their workweek simply reading and processing information (Deloitte, 2024). The rise of remote work and digital transformation initiatives only amplified the deluge.

According to a 2024 McKinsey report, 71% of organizations now deploy generative AI in at least one business function, up from 65% earlier in the year. This climb isn’t just about chasing the next big thing; it’s a survival tactic. Organizations realize that traditional document review doesn’t scale. The result? Automated summary tools have become essential, not optional.

But there’s a catch. The more summaries we churn out, the greater our hunger for even faster, even more distilled information—a dopamine-driven cycle that, ironically, leaves us craving clarity while eroding our patience for complexity. This is the overload paradox at work, where instant summaries promise salvation but risk deepening our addiction to cognitive shortcuts.

Information fatigue and cognitive burnout

The effects of information overload aren’t just theoretical—they’re painfully real. Forbes reported in late 2023 that the relentless quest for more data often backfires, increasing our confidence in decisions while actually reducing their quality (Forbes, 2023). The culprit? Decision fatigue, cognitive burnout, and an incessant craving for the next notification or summary.

Research from the Ponemon Institute (2023) exposed a dark side to this obsession: 27% of organizations admitted to missing critical security events because of alert overload—too many automated notifications, not enough context or prioritization. The human brain, wired for pattern recognition and nuance, simply can’t keep up.

Automated summaries are designed to ease the burden, but they risk dulling our analytical edge if we let them replace, rather than augment, our judgment. As data scientist Dr. Emily Chang bluntly put it: “Summaries are only as smart as the person interpreting them. You can’t outsource your critical thinking to an algorithm, no matter how sophisticated.”

The promise and peril of AI shortcuts

AI-driven summaries have become a “shortcut to clarity,” as the phrase goes in tech circles. Yet, beneath the streamlined surface lurk hidden dangers—over-simplification, loss of nuance, and algorithmic bias. For every time a legal professional slashes contract review time by 70% with automated tools (Forrester, 2023), there’s a risk of missing subtle provisions that could alter a deal.

"AI summaries rapidly distill complex information, but they still demand human oversight for context and accuracy." — McKinsey State of AI, 2024 (McKinsey, 2024)

The promise is seductive: instant insight, less grunt work, and fewer late-night reading marathons. But the peril is insidious: a creeping dependence on “machine wisdom” that can turn even the sharpest professional dull, especially when algorithms inherit or amplify the biases baked into their training data. The challenge, then, is not whether to use AI summaries—but how to use them without losing our grip on reality.

From punchcards to GPT: The wild history of summary tech

The birth of machine summarization

Automated summary generation isn’t some overnight phenomenon—it’s the culmination of decades of experimentation, failure, and incremental victories. The earliest attempts date back to the 1950s, when researchers punched stacks of cards into primitive computers, hoping to extract “main ideas” from news stories.

| Era | Technology | Key Milestone |
|---|---|---|
| 1950s-1970s | Rule-based systems | First attempts at keyword extraction |
| 1980s-1990s | Statistical models | Introduction of TF-IDF, sentence ranking |
| 2000s | Machine learning (ML) | Bayesian and decision tree-based summaries |
| 2010s | Deep learning | Neural networks and word embeddings |
| 2020s | LLMs (e.g., GPT, BERT) | Abstractive, human-like summaries |

Table 1: Milestones in automated summary generation technology.

Source: Original analysis based on multiple sources (McKinsey, 2024; AIPRM, 2024).

Early systems were laughably basic. They often grabbed the first few sentences of a document and called it a “summary.” Yet, even these crude tools hinted at a future where machines could help us keep up with the world’s ever-expanding textual sprawl.

Key milestones and revolutions

- TF-IDF and sentence ranking (1980s): Statistical approaches allowed computers to assess sentence importance.

- Bayesian and decision tree models (2000s): Early ML enabled more context-aware summaries, albeit still extractive.

- Neural networks and embeddings (2010s): Word-embedding models like word2vec and GloVe, and the deep networks built on them, ushered in a more nuanced understanding of context.

- Transformer architectures (2017+): Encoder models like BERT sharpened extractive ranking, while generative models like GPT made abstractive summarization practical: machines could now “write” summaries, not just copy sentences.

- Specialized LLMs (2020s): Domain-specific models for law, academia, and business began to outperform generic tools.

With each leap, the gap between “machine summary” and “human abstract” narrowed. But progress was anything but straightforward.

The journey from rule-based scripts to today’s LLMs wasn’t linear. Funding droughts, technical dead-ends, and overhyped promises derailed entire research fields. Yet, the goal never changed: automate the grunt work, free the human mind for higher judgment, and try not to lose anything essential in translation.

Why progress has been anything but linear

The path to modern summarization tools is littered with abandoned projects and dashed hopes. Some breakthroughs happened by accident—like the realization that neural nets trained for translation could also excel at summarizing. Others required brute computational force that only recently became affordable.

Even today, every “advance” comes with trade-offs. Increased sophistication often means less transparency; the more powerful the model, the harder it is to explain why it made a particular summary choice. The result: users gain speed and scale but risk losing the ability to challenge or even understand the summaries they’re given.

Ultimately, the wild history of summary tech is a lesson in humility. For every AI-generated silver bullet, there’s a hidden flaw—or a reminder that nuance can’t be compressed without consequence.

How automated summary generation actually works (beyond the buzzwords)

Extractive vs. abstractive: The two tribes

Extractive summarization: This approach selects and stitches together the most “important” sentences from the source text, based on statistical weight or relevance. Think of it as a highlighter wielded by an algorithm.

Abstractive summarization: Here, the machine generates entirely new phrases and sentences, rephrasing or even rewriting key points in its own words, much like a human would. This method relies on advanced neural networks and LLMs.

The extractive vs. abstractive divide isn’t just technical—it’s philosophical. Extractive methods preserve the original wording but may miss nuance or flow. Abstractive models promise more natural summaries but can “hallucinate” facts or introduce errors. According to Hatchworks (2024), extractive methods dominate in regulated industries (like law and finance), while abstractive methods are gaining ground in creative and academic contexts.

In practice, most commercial tools blend the two, using extractive logic to identify key points and abstractive rewriting for clarity and conciseness. This hybrid approach reflects the messy reality of real-world documents—where what matters is rarely obvious, and how you say it can matter as much as what you say.
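To make the "highlighter" idea concrete, here is a minimal sketch of an extractive summarizer that scores sentences by average word frequency and keeps the top scorers in their original order. It is only an illustration under simple assumptions (a tiny stopword list, a naive sentence splitter, and hypothetical function names), not how any particular commercial tool works.

```python
import re
from collections import Counter

# Small illustrative stopword list (an assumption); real systems use fuller ones.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "are",
             "it", "that", "this", "for", "on", "with", "as", "by", "be"}


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tokenize(sentence: str) -> list[str]:
    """Lowercase words with stopwords removed."""
    words = re.findall(r"[a-z0-9']+", sentence.lower())
    return [w for w in words if w not in STOPWORDS]


def extractive_summary(text: str, max_sentences: int = 2) -> list[str]:
    """Return the highest-scoring sentences, verbatim, in document order."""
    sentences = split_sentences(text)
    freq = Counter(w for s in sentences for w in tokenize(s))

    def score(sentence: str) -> float:
        words = tokenize(sentence)
        # Average frequency, so long sentences are not favored automatically.
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])  # restore original ordering
    return [sentences[i] for i in keep]
```

Because every output sentence is copied from the source, this kind of summarizer cannot invent facts, but it can still drop the one sentence that mattered.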

Under the hood: Algorithms, LLMs, and black boxes

At its core, automated summary generation is a dance between pattern recognition and linguistic synthesis. Legacy systems relied on formulas—count the keywords, rank the sentences, spit out the top three. But modern LLMs (Large Language Models) like GPT-4, BERT, or custom-trained models used by platforms such as textwall.ai operate on an entirely different plane.

These models are trained on vast corpora: billions of sentences, articles, and books. They don’t just “read” text; they learn statistical patterns of meaning, context, and intent. When tasked with a summary, an LLM generates it one token (a word or word fragment) at a time, choosing each token from a probability distribution conditioned on the source and on what it has written so far. This is why abstractive summaries can sound uncannily human, and also why they can invent (“hallucinate”) information.
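A deliberately tiny stand-in can show why probabilistic generation produces fluent text that the source never stated. The sketch below is a toy bigram model, not an LLM: real models use neural networks over tokens, and the function names here are hypothetical. The mechanism it illustrates is the same in spirit: pick a plausible next word given the previous one.

```python
import random
from collections import defaultdict


def train_bigrams(corpus: list[str]) -> dict[str, list[str]]:
    """Record, for each word, every word that followed it in the corpus."""
    follows = defaultdict(list)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current].append(nxt)
    return dict(follows)


def generate(follows: dict[str, list[str]], start: str,
             max_words: int = 8, seed: int = 0) -> list[str]:
    """Sample a word sequence; each step depends only on the previous word."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    words = [start]
    while len(words) < max_words and words[-1] in follows:
        words.append(rng.choice(follows[words[-1]]))
    return words


corpus = [
    "the clause limits liability",
    "the clause extends the warranty",
    "the warranty limits claims",
]
model = train_bigrams(corpus)
print(" ".join(generate(model, "the")))
```

Every step is locally plausible, yet the chain can produce a phrase such as "the warranty limits liability", which appears in none of the three source sentences. That is a miniature version of a hallucination.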

Transparency is a major issue. While statistical methods could be audited line-by-line, LLMs are notorious black boxes. According to McKinsey, 2024, over 44% of organizations piloting generative AI struggle to validate outputs, especially when the stakes are high. This makes oversight—not blind trust—an essential part of any summary workflow.

What makes a ‘good’ summary? Metrics that matter

| Metric | Description | Typical Use Case |
|---|---|---|
| ROUGE Score | Measures n-gram overlap between machine and human reference summaries (recall-oriented) | Academic, benchmarking |
| BLEU Score | Measures n-gram precision against reference text; borrowed from machine translation | Comparative evaluation |
| Factual Consistency | Verifies that the summary stays truthful to the source | Compliance, legal, research |
| Readability Index | Rates how easily a summary can be understood | Public-facing communication |
| Human Evaluation | Direct feedback on relevance, tone, and accuracy | High-stakes, sensitive documents |

Table 2: Key metrics for assessing automated summaries.

Source: Original analysis based on AIPRM, 2024 and McKinsey, 2024.

No single metric captures “quality” in all contexts. ROUGE and BLEU scores matter in research, but in the real world, factual consistency and readability can make or break trust. The best systems, like those powering textwall.ai, layer automated checks with human review—especially when decisions, not just speed, are on the line.
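As a rough illustration of what an overlap metric measures, the sketch below computes ROUGE-1 (unigram overlap) by hand. It is a simplified version under stated assumptions: lowercase alphanumeric tokens, no stemming, and a single reference summary. Published ROUGE implementations add more options, and the function names here are hypothetical.

```python
import re
from collections import Counter


def unigrams(text: str) -> Counter:
    """Count lowercase alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def rouge_1(candidate: str, reference: str) -> dict[str, float]:
    """Unigram precision, recall and F1 of a candidate against one reference."""
    cand, ref = unigrams(candidate), unigrams(reference)
    overlap = sum((cand & ref).values())  # clipped count of shared tokens
    precision = overlap / sum(cand.values()) if cand else 0.0
    recall = overlap / sum(ref.values()) if ref else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


scores = rouge_1("the contract renews annually",
                 "the contract renews each year")
print(scores)  # precision 0.75, recall 0.6
```

The limitation is easy to see: a candidate that reads "the contract never renews each year" contains every reference word and so scores full recall, despite saying the opposite. That is why overlap scores need to be paired with factual-consistency checks and human review.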

Debunking the myths: What AI summaries can’t (and shouldn’t) do

The neutrality illusion: Bias, context, and blind spots

It’s tempting to believe that machine-generated summaries are objective—immune to the messiness of human prejudice. This is a dangerous myth. The algorithms reflect their training data, and that data is riddled with bias, omissions, and context gaps.

- Subtle framing: AI can unintentionally highlight certain perspectives or facts, while burying dissenting views—especially in polarizing topics.

- Language bias: Most LLMs have a language or regional skew (e.g., Western-centric English), leading to blind spots in international or multicultural documents.

- Source selection: If the input text is biased, the summary will be too—unless explicitly counterbalanced by human oversight.

- Data drift: Models trained on old data may miss rapidly evolving topics or slang, making summaries less relevant or even misleading.

According to Forrester, 2023, up to 78% of legal job functions are already influenced by generative AI—raising the stakes for bias and context errors in contract summaries or legal analysis.

Speed vs. accuracy: The trade-off nobody admits

AI can summarize a ten-page document in seconds, but speed isn’t always a virtue. Rushed summaries often miss nuance or misrepresent the underlying message. Here’s how the trade-off plays out:

| Factor | Fast Summary | Accurate Summary |
|---|---|---|
| Turnaround Time | Seconds to minutes | Minutes to hours |
| Risk of Error | High | Lower |
| Context Depth | Surface-level | In-depth |
| Use Case | Quick decisions, triage | Legal, compliance, research |

Table 3: Trade-offs between speed and accuracy in automated summary generation.

Source: Original analysis based on Hatchworks, 2024.

In critical settings—think healthcare, law, or finance—accuracy trumps speed. But for inbox triage or news aggregation, rapid-fire summaries are often “good enough.” The danger is forgetting which context you’re in.

Why manual still matters (sometimes)

Despite the hype, human judgment isn’t obsolete. Manual review remains crucial for documents where nuance, tone, or legal liability is at stake.

"Strategic oversight in automation is essential; quality beats quantity in data handling every time." — Deloitte, 2024 (Deloitte, 2024)

The best organizations blend the precision of machines with the skepticism of seasoned professionals. They use automated summaries to winnow the haystack but still pick through the needles themselves—especially when lives, reputations, or dollars are on the line.

Manual review is not about distrust—it’s about context. Machines can surface the obvious, but only humans can sense the subtext, the politics, the hidden landmines embedded in language.

Inside the machine: Real-world failures and spectacular surprises

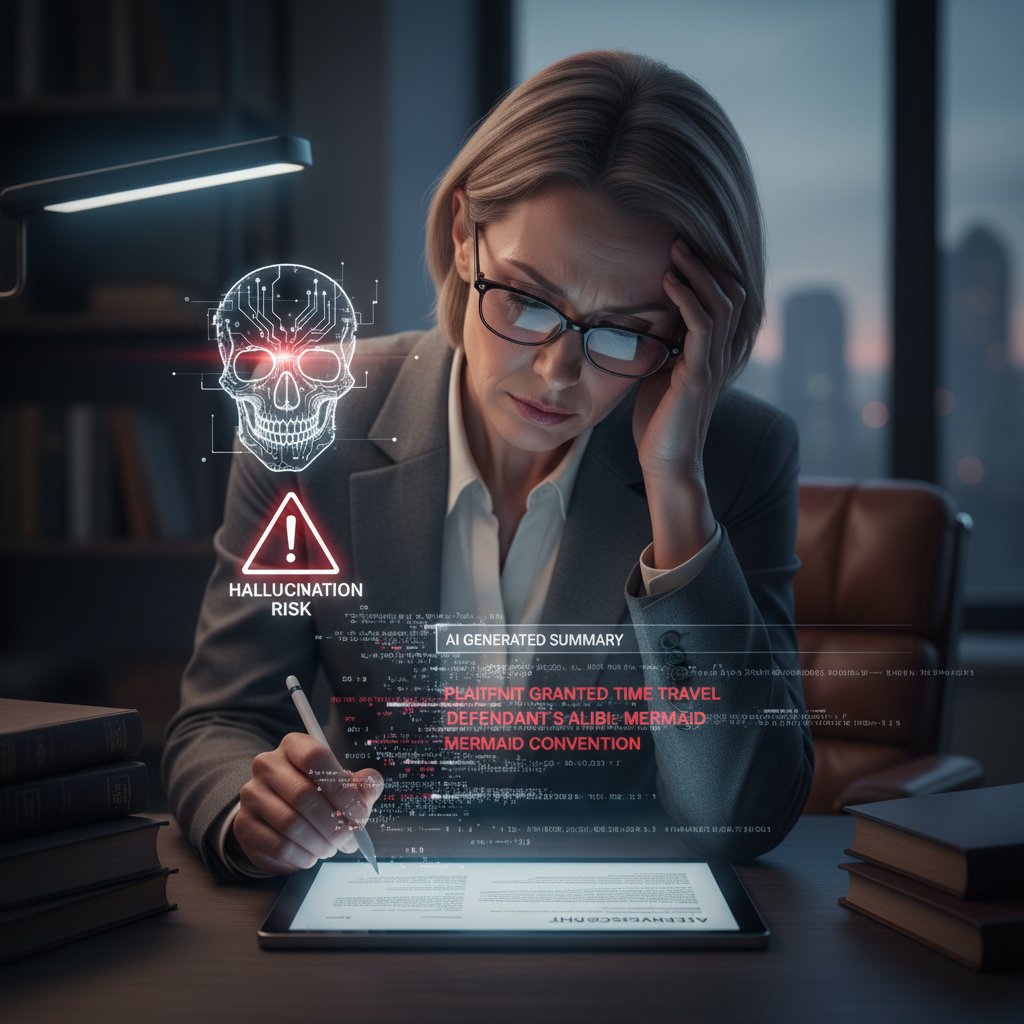

Hallucinations, omissions, and the edge cases

One of the dirty secrets of LLM-based summarization is the phenomenon of “hallucination”—when the AI invents details that weren’t in the source. For every summary that saves hours, there’s a nightmare story of a phantom clause added to a contract or a mischaracterized research finding.

Edge cases abound. Summaries of scientific papers may omit crucial caveats or express conclusions not supported by the data. In legal settings, even a single misinterpreted term can lead to disaster. This is why, according to Deloitte, 2024, 75% of organizations have boosted their data lifecycle investments to improve AI output quality.

Yet, not all surprises are bad. There are tales of AI uncovering overlooked trends in market research or clarifying technical manuals for non-experts—unexpected wins that would’ve been prohibitively expensive to achieve manually.

The lesson: treat summaries as hypotheses, not gospel. Verify before you trust. And know that the machine’s greatest strength, pattern detection at scale, is also its Achilles heel when nuance matters most.
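One cheap way to "verify before you trust" is to check that every figure in a summary actually appears in the source. The sketch below is a heuristic under simple assumptions: it compares number strings literally, so it will not catch reworded quantities ("thirty" vs. "30") or invented names. The function name is hypothetical.

```python
import re

# Matches integers, decimals and thousands separators, with an optional "%".
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def unsupported_numbers(summary: str, source: str) -> list[str]:
    """Return numbers that appear in the summary but nowhere in the source."""
    source_numbers = set(NUMBER.findall(source))
    return [n for n in NUMBER.findall(summary) if n not in source_numbers]


source = ("The supplier must deliver within 30 days. "
          "Late fees are 1.5% per month.")
summary = "Delivery is due within 60 days, with late fees of 1.5% per month."

print(unsupported_numbers(summary, source))  # ['60']
```

An empty result does not prove the summary is faithful; a non-empty one is a strong signal that a human should open the source.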

Case studies: When summaries saved (or ruined) the day

Consider a global consulting firm that used AI-powered summarization to comb through thousands of regulatory filings. The result: compliance teams identified critical changes in half the time, avoiding hefty fines. Conversely, a financial institution suffered a reputational hit when an AI summary mischaracterized a key risk clause, leading to poor investment decisions and client backlash.

In academia, automated summaries have sped up literature reviews by 40%, enabling researchers to focus on novel insights rather than rote reading (AIPRM, 2024). But there have also been instances where subtle methodological flaws were glossed over, leading to flawed secondary analyses.

The bottom line? Automated summary generation is a power tool, not a panacea. Its value depends on the quality of oversight, the clarity of input, and the willingness to question outputs—no matter how “smart” they seem.

Learning from mistakes: How to vet AI outputs

- Cross-reference summaries with source documents, especially for high-stakes content.

- Look for omitted qualifiers, caveats, or dissenting opinions—these are common weak spots.

- Use multiple tools or models to triangulate on complex documents—diversity reduces risk.

- Solicit human review for summaries flagged as “low confidence” or unusually terse.

- Document errors or hallucinations to improve future workflows and model selection.

A robust vetting process transforms AI from a liability into a force multiplier. It’s not about slowing down—it’s about knowing when to hit pause before the shortcut becomes a dead end.
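Parts of the checklist above can be automated as a first pass. The sketch below flags summaries that drop hedging words present in the source or that are unusually terse relative to it. The qualifier list, the 5% length threshold, and the function names are all illustrative assumptions, and plain word matching will produce false positives (for example, "May" as a month).

```python
import re

# Illustrative hedging terms (an assumption); tune the list for your domain.
QUALIFIERS = ("may", "might", "could", "unless", "except", "however",
              "approximately", "preliminary", "subject to", "provided that")


def _contains(text: str, phrase: str) -> bool:
    """Case-insensitive whole-word (or whole-phrase) match."""
    pattern = r"\b" + re.escape(phrase) + r"\b"
    return re.search(pattern, text.lower()) is not None


def dropped_qualifiers(source: str, summary: str) -> list[str]:
    """Qualifiers found in the source that are missing from the summary."""
    return [q for q in QUALIFIERS
            if _contains(source, q) and not _contains(summary, q)]


def needs_human_review(source: str, summary: str,
                       min_ratio: float = 0.05) -> bool:
    """Flag summaries that lose qualifiers or are very short for the source."""
    ratio = len(summary.split()) / max(len(source.split()), 1)
    return bool(dropped_qualifiers(source, summary)) or ratio < min_ratio


source = ("The drug may reduce symptoms, unless the patient is over 65. "
          "Results are preliminary.")
summary = "The drug reduces symptoms."

print(dropped_qualifiers(source, summary))  # ['may', 'unless', 'preliminary']
print(needs_human_review(source, summary))  # True
```

A flag here is a prompt to reread the source, not a verdict that the summary is wrong.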

Choosing your weapon: Comparing automated summary tools

What’s on the market: A landscape snapshot

The market for automated summary generators is as crowded as it is confusing. From off-the-shelf cloud APIs to hyper-specialized platforms like textwall.ai and legacy enterprise solutions, the choices can be overwhelming.

| Tool/Platform | Summarization Type | Integrations | Ideal Use Case | Notable Limitation |
|---|---|---|---|---|
| textwall.ai | Hybrid (LLMs) | API, workflow | Legal, research, business | Requires strong input data |
| OpenAI GPT-4 | Abstractive | API | Creative, academic | Prone to hallucination |
| Google Cloud NLP | Extractive | Cloud ecosystem | News, social media | Limited on nuance |
| Amazon Comprehend | Extractive | AWS integration | Enterprise, compliance | May need heavy tuning |
| Specialized legal | Extractive | Vertical-specific | Law firms | Narrow domain, high cost |

Table 4: Comparison of leading automated summary generation tools.

Source: Original analysis based on AIPRM, 2024.

Savvy users don’t just ask “what’s fastest” but “what’s safest for my context.” Integration, data privacy, and domain expertise are as important as model horsepower.

Deciding factors: Accuracy, speed, and trust

- Accuracy: Does the tool handle your document type? How often does it miss nuance or misrepresent facts?

- Speed: Is instant turnaround necessary, or can you wait for a more thoughtful pass?

- Trust: Does the platform explain its process, log errors, and support human review?

- Integration: Will it play nicely with your existing tools (e.g., document management, workflow platforms)?

- Data privacy: Are summaries processed securely, on-premises, or in the cloud?

Choosing a tool isn’t just about ticking boxes; it’s about aligning technology with your risk tolerance and workflow. In regulated industries, accuracy and auditability trump convenience—hence the rise of platforms like textwall.ai that blend AI with human-in-the-loop safeguards.

The role of textwall.ai and other disruptors

Textwall.ai is emblematic of a new breed of document analysis tools—intuitive, customizable, and built for complexity. By leveraging advanced LLMs and offering granular control over input, output, and review, platforms like textwall.ai are closing the gap between “good enough” and “mission critical.”

Disruptors in this space are also making inroads on integration and privacy—two Achilles heels of early solutions. The new standard is not just speed or price, but trust: can you verify, audit, and control the summaries your team relies on?

The result is a shift from “AI as magic” to “AI as collaboration”—where the technology empowers but never replaces the human analyst.

Mastering automated summary generation: A practical deep-dive

Step-by-step: From raw text to distilled insight

- Import or upload your document into the summary tool of choice.

- Select your summarization preference: extractive, abstractive, or hybrid.

- Set context-specific parameters (length, domain, sensitivity).

- Run the initial summary and review key points for completeness and tone.

- Cross-verify with source text, flagging any potential omissions or hallucinations.

- Refine using model settings or manual edits as needed.

- Finalize and share, documenting any human interventions or flagged issues.

Automation thrives on clarity. The more explicit the input and the more rigorously you review the output, the less likely you are to be caught off-guard by errors or oversights.
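The steps above can be sketched as a small pipeline that records what was generated, what was flagged, and who edited the result. Everything here is hypothetical scaffolding: `SummaryJob`, `run_job`, and `record_edit` are illustrative names, and the summarizer and check functions are trivial stand-ins for whatever tool and checks you actually use.

```python
from dataclasses import dataclass, field
from typing import Callable

MODES = {"extractive", "abstractive", "hybrid"}

Summarizer = Callable[[str, str, int], str]   # (document, mode, max_words)
Check = Callable[[str, str], list[str]]       # (document, summary) -> issues


@dataclass
class SummaryJob:
    document: str
    mode: str = "hybrid"
    max_words: int = 50
    summary: str = ""
    audit_log: list[str] = field(default_factory=list)


def run_job(job: SummaryJob, summarize: Summarizer,
            checks: list[Check]) -> SummaryJob:
    """Generate a summary, run every check, and log the outcome."""
    if job.mode not in MODES:
        raise ValueError(f"unknown mode: {job.mode}")
    job.summary = summarize(job.document, job.mode, job.max_words)
    job.audit_log.append(
        f"generated: mode={job.mode}, max_words={job.max_words}")
    for check in checks:
        for issue in check(job.document, job.summary):
            job.audit_log.append(f"flagged: {issue}")
    return job


def record_edit(job: SummaryJob, revised: str, reviewer: str) -> None:
    """Replace the summary and document the human intervention."""
    job.audit_log.append(f"edited by {reviewer}")
    job.summary = revised


# Stand-ins for a real summarizer and a real check (assumptions).
def first_words(document: str, mode: str, max_words: int) -> str:
    return " ".join(document.split()[:max_words])


def empty_check(document: str, summary: str) -> list[str]:
    return [] if summary.strip() else ["summary is empty"]
```

Keeping the audit log on the job itself means the final summary always travels with a record of how it was produced, which is the part most ad hoc workflows lose.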

Avoiding common mistakes

- Blind trust: Never assume even the best summaries are infallible—always cross-check high-stakes outputs.

- Ignoring context: Set summarizer parameters to match the domain, tone, and audience—generic settings breed generic results.

- Overloading the model: Feeding massive, unstructured documents can overwhelm even the best LLMs—pre-process or segment your inputs.

- Failing to document errors: Track and report hallucinations or omissions to improve tool selection and workflow.

- Neglecting data privacy: Always ensure sensitive content is processed in compliance with your organization’s privacy rules.

Cutting corners on these fundamentals is an open invitation to disaster. Mastery lies in discipline, not just convenience.
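For the "overloading the model" mistake, a common remedy is to split long documents on paragraph boundaries before summarizing each piece. The sketch below is one simple way to do that, assuming paragraphs are separated by blank lines and using a word budget as a rough proxy for model limits. The function name and the default budget are illustrative.

```python
def segment(text: str, max_words: int = 200) -> list[str]:
    """Group consecutive paragraphs into chunks of at most max_words words.

    A single paragraph longer than max_words is kept whole as its own chunk
    rather than being cut mid-sentence.
    """
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for paragraph in (p.strip() for p in text.split("\n\n")):
        if not paragraph:
            continue
        n = len(paragraph.split())
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))  # close the current chunk
            current, count = [], 0
        current.append(paragraph)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks


doc = "\n\n".join(["one two three", "four five", "six seven eight nine"])
print(segment(doc, max_words=5))
```

Splitting on paragraphs keeps related sentences together, but cross-references between chunks are still lost, so summaries of individual segments deserve a final pass against the whole document.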

Checklist: Are you using AI summaries wisely?

- Have you defined the context and purpose of your summary?

- Did you select the right summarization model and settings for your document type?

- Have you reviewed outputs for factual consistency and tone?

- Are flagged summaries routed for human oversight?

- Is your workflow compliant with data privacy and audit requirements?

A little vigilance goes a long way. The best professionals treat automated summaries as a first draft, not a final verdict.

The dark side: Risks, ethics, and the future of knowledge

Data privacy and intellectual property hazards

Automated summary generation tools often require uploading sensitive or proprietary content to cloud servers. This introduces real risks: data breaches, exposure of intellectual property, and compliance violations. According to Deloitte, 2024, 75% of organizations increased investment in data lifecycle management specifically to address AI-related privacy threats.

AI vendors have responded with on-premises deployment options, encrypted workflows, and contractually guaranteed data deletion. But the burden is on the user to ensure that automated summaries don’t leak what you wanted kept secret. Always read the privacy policy—and when in doubt, choose tools with verifiable compliance credentials.
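One precaution users can take on their own side is to mask obvious identifiers before a document leaves their machine. The sketch below is a minimal example, assuming only two patterns (email addresses and phone-like numbers) written for illustration. It is not a compliance tool, and real redaction needs far broader coverage, such as names, account numbers, and addresses.

```python
import re

# Illustrative patterns only (assumptions); they will miss many real formats.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count replacements."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


clean, counts = redact(
    "Contact jane.doe@example.com or +1 (555) 010-9999 about invoice 42.")
print(clean)   # Contact [EMAIL] or [PHONE] about invoice 42.
print(counts)  # {'EMAIL': 1, 'PHONE': 1}
```

Returning the counts alongside the text gives a quick sanity check: a long contract with zero redactions probably means the patterns did not fit, not that the document was clean.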

Data privacy isn’t an abstract concern; it’s a frontline defense in a world where knowledge is power, and power can be stolen at the speed of an API call.

Critical thinking in the age of instant answers

The speed of automated summaries tempts us to accept answers at face value. But convenience is a poor substitute for understanding. As philosopher Daniel Dennett observed, “AI can be a prosthetic for thought, but not a replacement for thinking itself.”

"Information overload increases confidence but often reduces decision quality; dopamine-driven craving for information makes it hard to disengage." — Forbes, 2023 (Forbes, 2023)

Critical thinking is more essential than ever—but it’s also at risk. The more we delegate to machines, the more skills we let atrophy. Automated summaries must be a tool, not a crutch.

Accepting AI outputs uncritically is a recipe for mediocrity. The best defense? Stay curious, skeptical, and willing to chase the full story—no matter how convenient the shortcut.

Who controls the narrative?

- Algorithm designers: Decide what’s important, setting thresholds for “relevance” or “salience.”

- Data suppliers: Shape the training set, filtering which voices are heard and which are ignored.

- Platform owners: Enforce content moderation, compliance, and usage policies, sometimes in ways that stifle dissent or nuance.

- End users: Choose how to interpret, challenge, or override summaries—but only if they’re aware of the limitations.

Narrative control isn’t just about who writes the headline—it’s about who decides what’s left on the cutting room floor. Automated summary generation can democratize access to knowledge, but it can also centralize power in the hands of a few. The future of information is as much a social issue as a technical one.

Beyond the hype: The future of automated summary generation

Smart assistants or lazy crutches?

Automated summaries are already reshaping workflows from Wall Street to academia. The debate isn’t whether they work—they do—but whether they make us smarter or just lazier. For every story of productivity gains, there’s an undercurrent of concern about the erosion of deep reading and critical analysis.

Some professionals use summaries as a springboard—diving deeper into sources flagged as important. Others treat them as gospel, replacing judgment with convenience. The line between smart assistant and lazy crutch is determined not by the technology, but by the habits of its users.

The challenge is to preserve curiosity and skepticism in a world that rewards speed above all else.

What’s next? Predictions and provocations

Advanced AI will continue to raise the bar for summary quality, delivering near-human clarity for complex documents. But without strategic oversight, the risk of subtle errors, bias, or privacy lapses grows. As organizations double down on data investments (75% did in 2023-24, per Deloitte, 2024), the dividing line will be not between those who automate and those who don’t—but between those who automate wisely, and those who pay the price for shortcuts.

Platforms like textwall.ai and their competitors will remain essential players, not just for their raw power but for their ability to foster trust through transparency, oversight, and flexibility.

The future may be summarized, but it will never be simple.

How to stay ahead in the summarized world

- Cultivate layered reading habits: Use summaries to guide, not replace, your deep dives.

- Demand transparency: Favor tools that explain their logic and flag uncertainty.

- Prioritize data privacy: Treat every document as if it could be exposed—because it might.

- Invest in human oversight: Build workflows where AI is the assistant, not the arbiter.

- Track and learn from failures: Every hallucination is a chance to refine your processes.

Staying ahead in a world of automated summaries means refusing to surrender your agency. The shortcut to clarity is only as safe as the vigilance of those who walk it.

Supplement: Automated summaries in education, enterprise, and society

Classrooms reimagined: Learning with AI summaries

In education, automated summaries are reshaping how students and teachers engage with dense content. Complex papers, textbooks, and even video lectures can be distilled into digestible briefs—freeing up time for critical discussion, project work, or creative synthesis.

But there’s a flip side. Over-reliance on machine summaries can short-circuit learning, replacing the hard work of analysis with passive consumption. The best classrooms use summaries as scaffolding, not a ceiling—helping students build context and synthesize, not just regurgitate.

The challenge for educators is to balance efficiency with depth—encouraging students to question, critique, and seek out the full story behind every summary.

Corporate chaos or clarity?

Automated summary generation is a double-edged sword in the enterprise. On one hand, it streamlines compliance, accelerates market analysis, and reduces the grunt work of document review. On the other, it risks introducing new errors and blind spots—especially when used as a cover for shrinking headcounts or avoiding tough decisions.

| Scenario | Outcome (Best Case) | Outcome (Worst Case) |
|---|---|---|
| Legal contract review | 70% time reduction, improved compliance | Missed clauses, increased risk |
| Market research analysis | 60% faster insight extraction | Trend misinterpretation, lost nuance |
| Healthcare record processing | 50% less admin workload | Critical data omission, liability |
| Academic literature review | 40% less reading time | Overlooked flaws, shallow citations |

Table 5: Enterprise outcomes of automated summary generation.

Source: Original analysis based on AIPRM, 2024.

The difference between chaos and clarity is not the tool, but the governance around it.

Societal shifts: Misinformation, bias, and access

The societal impact of automated summaries is profound. On one hand, they democratize access, making knowledge available to those who might otherwise be excluded by jargon or volume. On the other, they risk amplifying misinformation, bias, and the subtle erosion of nuance.

If unchecked, automated summaries could create new forms of information inequality—where those with the best tools get clarity, and everyone else gets confusion. The antidote is transparency, accountability, and a relentless commitment to truth.

The future of knowledge hinges not on how fast we can summarize, but on how wisely we choose to do so.

Glossary: Decoding the jargon of automated summary generation

Extractive summarization: An approach that selects the most relevant sentences or phrases from a document without altering the original wording. Useful for fact-heavy or regulated domains.

Abstractive summarization: A method where the summarizer generates new phrases or sentences, paraphrasing the source material to capture the essence in fewer words, much like a human would.

Large language models (LLMs): AI systems trained on vast amounts of text data, capable of understanding and generating human-like language. Examples include GPT-4, BERT, and domain-specific variants.

ROUGE and BLEU: Evaluation metrics that assess the n-gram overlap between machine and human summaries (ROUGE) or n-gram precision against reference text, a measure borrowed from machine translation (BLEU).

Hallucination: When an AI model generates information not present in the source, potentially introducing errors or inaccuracies.

Understanding this jargon isn’t just for techies—it’s essential for anyone who wants to use automated summary generation without falling for the magic trick.

Conclusion: Rethinking our relationship with information

The power and the peril of the shortcut

Automated summary generation sits at the crossroads of convenience and critical thinking. Used wisely, it’s a superpower—enabling rapid insight, transforming workflows, and leveling the information playing field. Used recklessly, it’s a recipe for error, bias, and intellectual complacency.

"Summaries are only as good as the questions we ask and the judgment we bring. Trust the shortcut, but never cede control." — Industry maxim, reflecting best practices in document analysis

Every innovation is a test of our discernment. The future doesn’t belong to those who summarize the fastest, but to those who read between the lines—questioning, challenging, and refusing to let algorithms decide what they know.

Final checklist: Are you ready to trust the summary?

- Have you verified the factual consistency of your AI-generated summary?

- Is the summary free of hallucinations, omissions, or subtle bias?

- Have you cross-checked against the original text or multiple tools?

- Is human oversight built into your workflow for high-stakes documents?

- Do you understand the privacy and compliance implications of your tool?

Automated summary generation is here to stay. Whether it makes us smarter or just faster is, in the end, up to us.

Sources

References cited in this article

- AIPRM Generative AI Statistics 2024 (aiprm.com)

- McKinsey State of AI 2024 (mckinsey.com)

- Hatchworks Gen AI Statistics (hatchworks.com)

- Forbes: Information Overload (forbes.com)

- Deloitte Tech Trends 2025 (rdworldonline.com)

- Doctopus: Why Summarization Matters (doctopus.io)

- Siemens: From Punched Cards to ChatGPT (blogs.sw.siemens.com)

- TechnicalPort: Punch Cards (technicalport.com)

- Medium: Computing Evolution (medium.com)

- Wikipedia: Automatic Summarization (en.wikipedia.org)

- ScienceDirect: ATS Survey (sciencedirect.com)

- Forbes: AI History (forbes.com)

- Wiley: Topic Modeling Bibliometric Analysis (wires.onlinelibrary.wiley.com)

- ResearchGate: ATS Survey (researchgate.net)

- Springer: Business Process Models (link.springer.com)

- Nature: Clustering Algorithm Summarization (nature.com)

- arXiv: LLM-Based ATS Survey (arxiv.org)

- ScienceDirect: Abstractive Summarization (sciencedirect.com)

- Iris.ai: Tech Deep Dive (iris.ai)

- Radai: RAG and Summarization (radai.com)

- ICLR 2024 Papers (confviews.com)

- MIT News: LLM Mechanisms (news.mit.edu)

- ACM: Survey for ATS (dl.acm.org)

- OSTI: Advances in Summarization (osti.gov)

- OpenAI Cookbook: Evaluation (cookbook.openai.com)

- Forbes: AI Myths (forbes.com)

- Sofigate: AI Myths Busted (sofigate.com)

- UCCS: AI Myths vs. Reality (libguides.uccs.edu)

- Codesphere: AI Summarization Trade-offs (codesphere.com)

- PubMed: Speed-Accuracy Trade-offs (pubmed.ncbi.nlm.nih.gov)

- dev.to: Speed vs. Accuracy in RAG (dev.to)

- Northwestern: AI Summarization Dilemma (casmi.northwestern.edu)

- Nature: AI for Lay Summaries (nature.com)

- ICMA: Unintended Consequences (icma.org)

- Factspan Case Study (factspan.com)

- Aligned Automation Case Study (alignedautomation.com)

- Springer Chapter (link.springer.com)

- Palos Publishing (palospublishing.com)

- Enago: AI Summarization Tools Comparison (enago.com)

- Wiley: Automation Tools in Scoping Reviews (onlinelibrary.wiley.com)

- Springer: Automation in Systematic Reviews (link.springer.com)

- Grand View Research: Generative AI Market (grandviewresearch.com)

- Analytics Insight: Market Trends (analyticsinsight.net)

- eWeek: Generative AI Landscape (eweek.com)
