Text Classification Software in 2026: Wins, Traps, and Tradeoffs

There’s a war raging in the heart of every modern organization: a war against chaos, misinformation, and digital exhaustion. Every day, inboxes overflow, contracts multiply, and reports pile up. It’s not just data overload; it’s an existential crisis in the era of unstructured information. Enter text classification software, the silent sentry that turns endless text streams into clarity, insight, and action. Today it isn’t just a nice-to-have but a survival tool for anyone who wants to cut through the noise and make smarter decisions. Before you put all your faith (and budget) in the latest AI miracle, though, you need to know the brutal truths, the hidden pitfalls, and the bold wins that define today’s text classification landscape. This is your no-nonsense, all-access pass to the reality behind the hype, complete with hard data, expert quotes, real-world stories, and a checklist for surviving the software arms race.

Welcome to the machine: why text classification software matters now

The information overload crisis

Organizations are drowning in unstructured text data. Some recent analyses estimate that the volume of business-generated text, including emails, reports, legal documents, and social media, has grown by more than 500% in the last five years. As businesses shift to hybrid and remote models, this mountain only grows steeper. A 2024 survey by IDC found that over 80% of enterprise data is now unstructured, with text dominating the field.

Psychologically, the consequences are just as brutal. Information fatigue syndrome is now a recognized workplace phenomenon, with employees reporting higher stress and decision paralysis when forced to process excessive, unorganized data. As organizations scramble for clarity, an entire industry has arisen—armed with algorithms promising to transform chaos into insight.

"Every day, we make choices our brains aren’t built for." — Maya

Yet, as anyone who’s tried to manually classify a week’s worth of customer feedback will tell you, this isn’t just a tech problem—it’s a human one. The crisis isn’t just about scale; it’s about cognitive limits.

What is text classification software, really?

Forget the jargon. Text classification software is, at its core, a set of algorithms that automatically assign categories or tags to chunks of text—emails, documents, chats, tweets, or anything else you throw at it. Think of it as the digital equivalent of a ruthless librarian, sorting the world’s chaos into neat, searchable boxes.

Key terms you need to know:

- Supervised learning: This is the machine learning approach where models are trained on labeled examples (e.g., emails marked as “spam” or “not spam”). It’s how most industrial-strength text classification gets done.

- NLP (Natural Language Processing): The field of AI focused on allowing computers to “understand” human language. Text classification is a flagship task in NLP.

- Labeling: The grunt work of assigning tags to sample data for the machine to learn from. It’s boring, essential, and, as you’ll see, full of hidden landmines.

These systems have quickly become the gatekeepers of digital information. Every time your inbox auto-sorts, your social feed filters, or your document management system “magically” tags files, text classification software is pulling the strings. And with the stakes getting higher—think legal compliance, fraud detection, and crisis management—getting those strings right is absolutely critical.

How does text classification software actually work?

Under the hood: algorithms and models explained

The magic of text classification isn’t magic at all—it’s machine learning, powered by mountains of data and relentless iteration. At the core, there are two primary families:

- Supervised models (think logistic regression, SVMs, neural networks): Trained on labeled data, these models “learn” what counts as, say, a complaint vs. a compliment.

- Unsupervised models (think clustering, topic modeling): These try to group or label text without explicit guidance—useful, but rarely as precise for classification.
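To make the supervised idea concrete, here is a minimal sketch of a classifier that learns “complaint” vs. “compliment” from a handful of labeled examples. It uses multinomial Naive Bayes, a classic supervised model not named above, chosen only because it fits in a few lines of standard-library Python; the training sentences and label names are invented for illustration.

```python
import math
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    """Lowercase, split on whitespace, and strip simple punctuation."""
    words = (w.strip(".,!?").lower() for w in text.split())
    return [w for w in words if w]


class NaiveBayesClassifier:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts: list[str], labels: list[str]) -> "NaiveBayesClassifier":
        self.class_counts = Counter(labels)       # documents per label (priors)
        self.word_counts = defaultdict(Counter)   # label -> word frequencies
        self.vocab = set()
        for text, label in zip(texts, labels):
            tokens = tokenize(text)
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def predict(self, text: str) -> str:
        total_docs = sum(self.class_counts.values())
        best_label, best_score = None, -math.inf
        for label, doc_count in self.class_counts.items():
            # Log prior plus smoothed log likelihood of each known word.
            score = math.log(doc_count / total_docs)
            label_total = sum(self.word_counts[label].values())
            for token in tokenize(text):
                if token not in self.vocab:
                    continue  # words never seen in training carry no evidence
                count = self.word_counts[label][token]
                score += math.log((count + 1) / (label_total + len(self.vocab)))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Invented training data: three examples per class.
train_texts = [
    "The delivery was late and the box was damaged",
    "I want a refund, this product is broken",
    "Terrible support, nobody answered my call",
    "Great service, the team was fast and friendly",
    "I love this product, it works perfectly",
    "Thank you for the quick and helpful reply",
]
train_labels = ["complaint"] * 3 + ["compliment"] * 3

model = NaiveBayesClassifier().fit(train_texts, train_labels)
print(model.predict("The product arrived broken and late"))  # complaint
print(model.predict("Fast, friendly and helpful team"))      # compliment
```

Six examples are far too few for real use; the point is the shape of supervised learning: labeled examples go in, a reusable decision rule comes out.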

Here’s how it breaks down from messy input to actionable output:

- Data ingestion: Raw text is collected from emails, chats, PDFs, or cloud storage.

- Preprocessing: The mess gets cleaned: think lowercasing, removing stopwords, and splitting text into tokens.

- Feature extraction: Algorithms turn words into numbers. Classic methods use TF-IDF; modern approaches use word embeddings (Word2Vec), contextual embeddings from transformer models such as BERT, or graph representations.

- Model training: The system learns from labeled examples—good data = better results.

- Prediction: New, unseen texts are fed in, and the model returns a category—instantly.

- Feedback loop: Human review or corrections are fed back to improve model performance over time.
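The preprocessing and feature-extraction steps can be sketched in standard-library Python. This is a minimal illustration assuming a tiny, hypothetical stopword list and the plain `tf * log(N / df)` weighting; production libraries such as scikit-learn’s `TfidfVectorizer` use smoothed variants and richer tokenizers, so their numbers will differ.

```python
import math
import re
from collections import Counter

# Hypothetical, deliberately tiny stopword list for illustration only.
STOPWORDS = {"the", "a", "an", "is", "was", "and", "to", "of", "my"}


def preprocess(text: str) -> list[str]:
    """Lowercase, tokenize on runs of letters/digits, and drop stopwords."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return [t for t in tokens if t not in STOPWORDS]


def tfidf(docs: list[list[str]]) -> list[dict[str, float]]:
    """Turn token lists into sparse TF-IDF vectors (term -> weight)."""
    n_docs = len(docs)
    # Document frequency: in how many documents each term appears.
    df = Counter(term for doc in docs for term in set(doc))
    vectors = []
    for doc in docs:
        tf = Counter(doc)
        vectors.append({
            term: (count / len(doc)) * math.log(n_docs / df[term])
            for term, count in tf.items()
        })
    return vectors


raw = [
    "My invoice was charged twice!",
    "The invoice is attached.",
    "Password reset link is broken.",
]
docs = [preprocess(t) for t in raw]
vectors = tfidf(docs)
print(docs[0])  # ['invoice', 'charged', 'twice']
```

Note how `invoice`, which appears in two of the three messages, ends up with a lower weight in the first vector than `charged`, which appears in only one: rarer terms carry more signal about what makes a document distinctive.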

With hybrid models (for example, BERT combined with graph neural networks), reported accuracy on standard benchmarks has beaten previous state-of-the-art results. Still, the gap between benchmark datasets and messy real-world data remains a major battleground, as documented in the latest comparative reviews (arXiv, 2025).

The invisible labor behind the magic

Every “intelligent” AI model stands on the shoulders of thousands of human annotators. These are the people who decide—one click at a time—what’s urgent, what’s offensive, what’s actionable. Their work is invisible, underpaid, and absolutely mission-critical.

"The real intelligence is in the hands that label the data." — Alex

But the process is fraught with risk. Poorly labeled datasets introduce bias, misclassifications, and even legal headaches. According to a 2025 Bank of England working paper, mislabeling at scale can create systemic errors that ripple through entire compliance or risk management systems (Bank of England, 2025).

Hidden challenges in building classification datasets:

- Annotator fatigue leading to inconsistent labels.

- Ambiguous definitions of categories (“Is this complaint or feedback?”).

- Cultural and linguistic bias—what’s “urgent” in one context is mundane in another.

- Lack of real-world complexity in benchmark datasets, causing poor generalization.

The invisible labor behind every “AI breakthrough” is the unsung backbone of the entire industry. If you want results that stand up in the real world, you need to invest in the human side as much as the algorithm.
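One common way to catch annotator fatigue and ambiguous category definitions before they reach a model is to have two people label the same sample and measure how often they agree. The sketch below computes Cohen’s kappa, which corrects raw agreement for the agreement expected by chance; the labels are invented, and choosing kappa (rather than, say, Krippendorff’s alpha) is an assumption, not something the sources above prescribe.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["complaint", "complaint", "feedback", "feedback", "complaint", "feedback"]
annotator_2 = ["complaint", "feedback", "feedback", "feedback", "complaint", "complaint"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # 0.333
```

Here the annotators agree on four of six items, but half that agreement would be expected by chance, so kappa is only about 0.33 ("fair" on the widely used Landis and Koch scale). A result like that on a “complaint or feedback?” split usually means the category definitions need tightening before more data is labeled.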

From email triage to legal discovery: real-world text classification unleashed

Business game-changers

Text classification doesn’t just keep your inbox tidy; it’s quietly transforming every corner of business. In customer support, smart ticket routing slashes response times. In compliance, systems flag risky communications before regulators even blink. Marketers use classification to monitor brand sentiment in real time, while HR departments screen thousands of resumes with surgical precision.

| Industry | Application | Outcome | Example Software |
|---|---|---|---|
| Finance | Fraud detection | Early risk identification, lower losses | Alteryx, RapidMiner |
| Law | Legal discovery | 70% less contract review time | TextWall.ai, Relativity |
| Marketing | Sentiment analysis | Real-time brand monitoring | MonkeyLearn, Lexalytics |
| Healthcare | Clinical documentation | Improved data management, faster triage | IBM Watson, TextWall.ai |

Table 1: Applications of text classification software across major industries

Source: Original analysis based on arXiv, 2025, Bank of England, 2025

Consider these real-world examples:

- Legal discovery: One multinational law firm reported a 70% reduction in contract review time after automating text classification with hybrid NLP models (Bank of England, 2025). This translated to millions in annual savings and a leap in compliance.

- Market research: Media agencies use real-time classification to filter and analyze thousands of social posts per minute, surfacing trends and crisis signals before they make headlines.

- Healthcare records: Hospitals equipped with advanced text categorization tools have cut administrative workloads by 50%, freeing up resources for patient care (arXiv, 2025).

- Customer support: Startups like TextWall.ai help companies instantly route incoming complaints, questions, and feedback to the right teams, improving both speed and customer satisfaction.

Cross-industry tales: stories you never hear

Beyond mainstream business, text classification is making waves in places most people never see. Social justice organizations deploy it to flag hate speech and misinformation in real time, often preventing crises before they escalate. Newsrooms use classification to monitor sentiment shifts during elections or breaking news.

In high-stakes scenarios—disaster response, pandemic monitoring, regulatory sweeps—automated classification is the difference between chaos and order.

Timeline of significant text classification breakthroughs:

- 2016: Market worth $136 million; early SaaS tools focus on keyword filtering.

- 2018: Pretrained transformer models such as BERT raise classification accuracy dramatically, building on earlier deep learning methods.

- 2021: Hybrid models combine neural networks and graph analytics for nuanced context handling.

- 2023: AutoML democratizes access, allowing non-experts to build robust models.

- 2025: Market explodes to $5.4 billion; real-time, multilingual, and cross-domain support become industry standards (arXiv, 2025).

The revolution isn’t just technical—it’s cultural. As organizations discover what’s possible, the expectations (and the stakes) keep climbing.

Myths, hype, and harsh realities: what vendors won’t tell you

Debunking the 'AI knows all' myth

Despite the glowing marketing promises, no text classification software has reached omniscience. Algorithms are not oracles. They’re pattern-matching engines—powerful, but profoundly limited.

"No algorithm can read between the lines like a human." — Sam

Nuance gets lost. Sarcasm, cultural references, or subtle context can turn a perfect model into a liability overnight. Automated systems often miss the “why” behind the text, focusing only on the “what.”
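A toy illustration of how nuance gets lost: the keyword-based sentiment scorer below (a hypothetical function with made-up word lists, similar in spirit to the keyword filtering early tools relied on) reads a sarcastic complaint as praise, because it counts words and has no notion of tone.

```python
# Hypothetical keyword lists; real lexicons are far larger but share the blind spot.
POSITIVE = {"great", "love", "perfect", "thanks"}
NEGATIVE = {"broken", "late", "terrible", "refund"}


def keyword_sentiment(text: str) -> str:
    """Score text by counting positive minus negative keywords."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(keyword_sentiment("The app is broken again."))                         # negative
print(keyword_sentiment("Oh great, another outage. Love waiting on hold."))  # positive (wrong)
```

Statistical models trained on labeled data do better than fixed word lists, but sarcasm remains a known weak spot for them too, which is why human review still matters for high-stakes categories.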

Common misconceptions about text classification accuracy:

- Accuracy metrics on benchmark datasets always translate to real-world success (false).

- Pre-trained models don’t need domain-specific tuning (false).

- Adding more data automatically improves results (it can add noise or bias).

- All errors are “fixable” with more annotations (sometimes, the label definitions are broken).

The result? Many organizations are lulled into a false sense of security, only to discover misclassifications when it matters most—during audits, lawsuits, or public crises.

Hidden costs and risk factors

Vendors love to talk ROI. Few mention the true costs—financial, operational, and ethical—that stack up after the contract is signed.

| Cost Area | Description | Example Impact |
|---|---|---|
| Maintenance | Ongoing tuning, updating models | Hidden labor costs, technical debt |
| Training | Preparing staff, labeling new data | Time drain, onboarding gaps |
| Bias mitigation | Auditing and correcting for model bias | Legal risk, compliance exposure |
| Data privacy | Securing data in cloud or SaaS environments | Regulatory fines, data breach exposure |

Table 2: Hidden costs of text classification software adoption

Source: Towards Data Science, 2024

Here’s how these costs sneak up:

- Maintenance: Models degrade over time—especially as language and business priorities shift. Without regular retraining, accuracy plummets.

- Bias risk: Models trained on improper data can propagate bias at scale, opening you up to legal headaches and reputational harm.

- Data privacy: Cloud-based classifiers, especially SaaS, raise the specter of data leaks or regulatory non-compliance. Edge deployments help, but add complexity.

- Annotation: New business lines or regulatory demands require fresh labeled data—often on tight deadlines.
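The maintenance problem is easiest to act on if a small, human-reviewed sample of live predictions is scored continuously. The sketch below assumes such a reviewed stream exists and flags retraining when rolling accuracy falls a set margin below the accuracy measured at deployment; the class name, window size, and 5-point tolerance are illustrative assumptions, not recommendations from the sources.

```python
from collections import deque
from typing import Optional


class DriftMonitor:
    """Flags retraining when rolling accuracy on human-reviewed predictions drops."""

    def __init__(self, baseline_accuracy: float, window: int = 200, tolerance: float = 0.05):
        self.baseline = baseline_accuracy   # accuracy measured when the model shipped
        self.tolerance = tolerance          # allowed drop before raising a flag
        self.recent = deque(maxlen=window)  # 1 = prediction confirmed, 0 = corrected

    def record(self, predicted: str, reviewed_label: str) -> None:
        self.recent.append(int(predicted == reviewed_label))

    def rolling_accuracy(self) -> Optional[float]:
        if not self.recent:
            return None
        return sum(self.recent) / len(self.recent)

    def needs_retraining(self) -> bool:
        # Wait for a full window so a handful of early errors cannot trigger an alert.
        if len(self.recent) < self.recent.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


monitor = DriftMonitor(baseline_accuracy=0.92, window=100)
# Simulated review results: every fifth prediction turns out to be wrong.
for i in range(100):
    monitor.record("billing", "billing" if i % 5 else "fraud")
print(monitor.rolling_accuracy(), monitor.needs_retraining())  # 0.8 True
```

A fixed threshold is the simplest possible trigger; teams often add checks on shifts in the input text or predicted-label mix, since reviewed labels arrive slowly.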

Some industry analyses suggest that firms underestimate post-purchase costs by up to 30%, a blind spot with consequences no spreadsheet can hide.

The great face-off: open-source vs. commercial text classification tools

Showdown: what you really get for your money

The debate is fierce: Open-source or commercial solution? Each has its champions and its skeletons.

| Feature | Open-Source Tools | Commercial Solutions | Winner (Context) |
|---|---|---|---|
| Model flexibility | High | Moderate | Open-source (custom needs) |
| Support and SLAs | Community only | Dedicated support | Commercial (mission-critical) |
| Cost | Free (with caveats) | License/subscription | Tie (depends on scale & staff) |
| Customization | Unlimited | API-based | Open-source (if you have experts) |
| Ongoing maintenance | DIY | Vendor-provided | Commercial (if you lack resources) |
| Security & compliance | Varies | Certified options | Commercial (regulated industries) |

Table 3: Feature matrix comparing open-source and commercial text classification solutions

Source: Original analysis based on arXiv, 2025, vendor documentation

If you have in-house machine learning firepower, open-source libraries (scikit-learn, spaCy, fastText) can be molded to fit almost any workflow. But if uptime, regulatory audits, and 24/7 support matter, commercial offerings like textwall.ai or IBM Watson Analytics often win out, especially at enterprise scale.

Case studies: surprising outcomes from unexpected choices

Consider these real-world pivots:

- Fintech startup: After investing months customizing open-source NLP, a fintech startup switched to a commercial SaaS tool when compliance audits overwhelmed their tiny team. Result: 2x faster response to regulatory changes, lower total cost.

- Academic research lab: Stuck with off-the-shelf APIs that couldn’t handle domain-specific jargon, one university lab rebuilt its pipeline with open-source transformers—gaining 20% accuracy but facing longer rollout times.

- Legal consultancy: A boutique law firm chose textwall.ai for its document analysis, citing seamless integration and rapid turnaround. The catch? Higher subscription fees, but a 70% reduction in manual review time.

These stories reveal what market stats often hide: The “right” tool is the one that fits your team, not the one with the flashiest pitch.

How to choose text classification software that won’t ruin your month

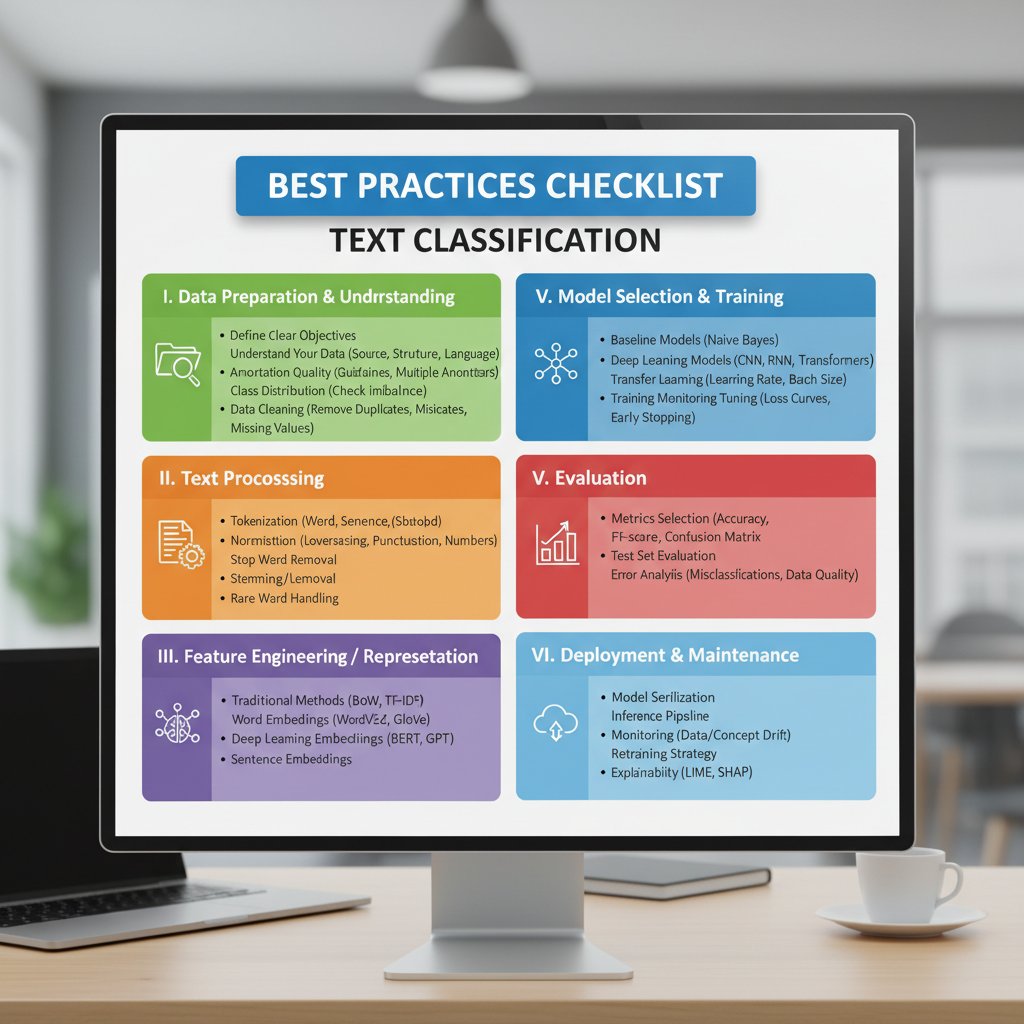

Priority checklist: what really matters

Choosing text classification software isn’t about ticking boxes—it’s about knowing your workflow, your risk tolerance, and your appetite for complexity.

- Define your use case: Are you triaging emails, auditing contracts, or mining social sentiment?

- Assess your data: How much, how messy, how multilingual?

- Evaluate integration: Can it talk to your CRM, DMS, or cloud storage?

- Calculate total cost: Include maintenance, annotation, and retraining.

- Prioritize explainability: How will you audit misclassifications?

- Check compliance: Does it meet your industry’s privacy and security mandates?

- Test at scale: Benchmark on your real data, not just vendor demos.

- Plan for growth: Will it scale as your business or workload explodes?

- Vet support/SLA: What happens when something breaks at 2am?

- Read the fine print: Who owns your data and your models?

Spotting red flags early—like opaque pricing, lack of on-premise options, or poor documentation—can save you months of headaches and thousands in sunk costs.
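The “calculate total cost” item is easier to act on with a rough model in hand. The sketch below is a back-of-the-envelope total-cost-of-ownership calculation; every figure in it is a hypothetical placeholder to be replaced with your own quotes and staffing estimates, and the cost categories simply mirror the hidden costs discussed earlier.

```python
from dataclasses import dataclass


@dataclass
class AnnualCosts:
    """Hypothetical yearly cost inputs, all in one currency."""
    license: float          # subscription or support contract; 0 for self-hosted open source
    infrastructure: float   # hosting, GPUs, storage
    annotation: float       # labeling new or refreshed training data
    maintenance: float      # engineer time for tuning, retraining, monitoring
    compliance: float       # bias audits, privacy reviews, legal sign-off


def total_cost_of_ownership(costs: AnnualCosts, years: int, setup: float = 0.0) -> float:
    """One-off setup cost plus recurring costs over the evaluation horizon."""
    recurring = (costs.license + costs.infrastructure + costs.annotation
                 + costs.maintenance + costs.compliance)
    return setup + recurring * years


# Placeholder numbers, not benchmarks.
commercial = AnnualCosts(license=60_000, infrastructure=0, annotation=15_000,
                         maintenance=10_000, compliance=10_000)
open_source = AnnualCosts(license=0, infrastructure=20_000, annotation=15_000,
                          maintenance=60_000, compliance=15_000)

print(total_cost_of_ownership(commercial, years=3, setup=20_000))
print(total_cost_of_ownership(open_source, years=3, setup=50_000))
```

With these made-up inputs the three-year totals come to 305,000 and 380,000. The point is not the totals but the shape: a zero license fee can be outweighed by maintenance staff time, which is why cost is a tie in Table 3.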

Hidden benefits experts won’t tell you

Beyond the obvious, advanced text classification software unlocks a cascade of secondary advantages:

- Workflow automation that removes bottlenecks and human error.

- Compliance boosts through real-time monitoring and audit trails.

- Intelligent prioritization—letting teams focus on high-value tasks.

- Cross-channel analytics—integrating insights from email, chat, and social.

- Continuous learning—models that adapt to your business as it evolves.

- Enhanced security—automatic redaction or flagging of sensitive data.

- Data-driven culture shift—putting real insights in the hands of more employees.

Unlocking these benefits means going beyond the demo—demanding transparency, robust customization, and the ability to iterate fast. Tools like textwall.ai are designed with these layers in mind, but no magic button replaces informed deployment.
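As a sketch of the “automatic redaction or flagging of sensitive data” idea, the function below masks email addresses and US-style phone numbers with regular expressions before text is sent to a classifier or a cloud service. The patterns are simplified assumptions; real PII detection usually combines patterns like these with trained entity recognizers, and still needs review.

```python
import re

# Simplified, illustrative patterns; they will miss many real-world formats.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Replace matches with placeholders and report which types were found."""
    found = []
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            found.append(label)
    return text, found


clean, flags = redact("Call 555-123-4567 or email jane.doe@example.com today.")
print(clean)  # Call [PHONE] or email [EMAIL] today.
print(flags)  # ['EMAIL', 'PHONE']
```

Returning the list of detected types alongside the cleaned text lets the same step serve both purposes: redact before processing, and flag the document for stricter handling.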

Beyond the buzzwords: technical deep-dives (for humans, not robots)

Understanding model bias and explainability

Model bias isn’t just a technical bug—it’s a business risk and an ethical minefield. Bias creeps in when models are trained on unrepresentative data or when categories are ambiguously defined, leading to systematic misclassifications.

Definitions you can use:

- Explainable AI: Methods that make it possible to understand and audit why a model made a specific decision. Crucial for trust and regulatory compliance.

- Training data bias: Systematic errors introduced when the data used to train a model isn’t representative of the real-world distribution.

- Black box models: Complex algorithms (often deep learning-based) whose internal workings are opaque—even to their creators. Great for accuracy, terrible for explainability.
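For simple linear models, explainability can be as direct as listing each word’s contribution to the score. The sketch below assumes a hypothetical, hand-set weight table for an “urgent” vs. “routine” classifier (in practice the weights would come from training, for example a logistic regression); black-box models need approximation techniques such as LIME or SHAP to produce comparable explanations.

```python
import math

# Hypothetical learned weights; positive pushes toward "urgent", negative toward "routine".
WEIGHTS = {"outage": 2.1, "down": 1.6, "asap": 1.2, "newsletter": -1.8, "thanks": -0.7}
BIAS = -0.5


def explain(text: str) -> tuple[float, list[tuple[str, float]]]:
    """Return P(urgent) and each known word's contribution, largest first."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    contributions = [(w, WEIGHTS[w]) for w in words if w in WEIGHTS]
    logit = BIAS + sum(weight for _, weight in contributions)
    probability = 1 / (1 + math.exp(-logit))  # logistic (sigmoid) link
    contributions.sort(key=lambda item: abs(item[1]), reverse=True)
    return probability, contributions


prob, reasons = explain("Checkout is down, possible outage. Thanks!")
print(round(prob, 3))  # 0.924
print(reasons)         # [('outage', 2.1), ('down', 1.6), ('thanks', -0.7)]
```

An auditor can read the output directly: “outage” and “down” drove the urgent call, while “thanks” pulled slightly the other way.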

Practical steps to mitigate bias:

- Regularly audit model outputs for fairness across demographics or categories.

- Use human-in-the-loop pipelines, especially for high-stakes classifications.

- Demand transparency from vendors about training data sources and annotation guidelines.

- Maintain a feedback channel for users to flag questionable outputs.
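The first step, auditing outputs across groups, can start as simply as comparing error rates per segment. The sketch assumes you have a reviewed sample in which each record carries a group attribute (here a hypothetical `language` field), the model’s prediction, and the correct label; the 1.5x disparity threshold is an arbitrary illustrative choice, not a regulatory standard.

```python
from collections import defaultdict


def error_rates_by_group(records: list[dict], group_key: str) -> dict[str, float]:
    """Share of misclassified records within each group."""
    totals, errors = defaultdict(int), defaultdict(int)
    for r in records:
        g = r[group_key]
        totals[g] += 1
        errors[g] += r["predicted"] != r["actual"]
    return {g: errors[g] / totals[g] for g in totals}


def flag_disparities(rates: dict[str, float], max_ratio: float = 1.5) -> list[str]:
    """Groups whose error rate exceeds the best group's rate by more than max_ratio.

    If the best group has zero errors, any group with errors is flagged.
    """
    best = min(rates.values())
    return [g for g, rate in rates.items() if rate > best * max_ratio]


# Invented review sample: 20 English and 20 Spanish messages.
sample = (
    [{"language": "en", "predicted": "urgent", "actual": "urgent"}] * 18
    + [{"language": "en", "predicted": "routine", "actual": "urgent"}] * 2
    + [{"language": "es", "predicted": "urgent", "actual": "urgent"}] * 14
    + [{"language": "es", "predicted": "routine", "actual": "urgent"}] * 6
)
rates = error_rates_by_group(sample, "language")
print(rates)                    # {'en': 0.1, 'es': 0.3}
print(flag_disparities(rates))  # ['es']
```

Small samples make per-group rates noisy, so a flag like this is a prompt for human investigation rather than a verdict.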

Performance metrics that actually matter

In the arms race for “AI supremacy,” performance metrics are weaponized. But not all numbers tell the same story.

- Accuracy: Simple and seductive, but can be misleading when classes are imbalanced.

- Precision and recall: Precision measures how many predicted positives are correct; recall measures how many actual positives are caught.

- F1 score: The harmonic mean of precision and recall—widely accepted as a better measure for uneven datasets.

| Metric | Use Case | Typical Reported Range | Interpretation |
|---|---|---|---|
| Accuracy | Balanced classes | 90-98% | Good, but check for class imbalance |
| Precision | Spam detection | 95-99% | Higher = fewer false alerts |
| Recall | Compliance alerts | 90-95% | Higher = fewer misses |
| F1 Score | Legal triage | 93-97% | Best for overall balance |

Table 4: Example performance metrics from recent benchmarking studies

Source: Original analysis based on arXiv, 2025, vendor documentation

Beware: Vendors touting sky-high accuracy often test on sanitized datasets. Always demand benchmarks on your real data and read the fine print on what those numbers actually mean.
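A worked example shows why accuracy misleads on imbalanced data. The counts below are invented: 1,000 messages, 50 of them genuinely fraudulent, scored by a lazy model that flags nothing and by a model that actually finds most of the fraud.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Accuracy, precision, recall and F1 from binary confusion-matrix counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if tp + fp else 0.0  # of flagged items, share that were right
    recall = tp / (tp + fn) if tp + fn else 0.0     # of real positives, share that were caught
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Lazy model flags nothing: 950 true negatives, all 50 fraud cases missed.
lazy = classification_metrics(tp=0, fp=0, fn=50, tn=950)
# Useful model flags 60 messages, 40 of which are fraud.
useful = classification_metrics(tp=40, fp=20, fn=10, tn=930)

for name, m in [("lazy", lazy), ("useful", useful)]:
    print(f"{name}: accuracy={m['accuracy']:.2f} recall={m['recall']:.2f} f1={m['f1']:.2f}")
```

Accuracy barely separates the two models (0.95 vs. 0.97), while recall (0.00 vs. 0.80) and F1 (0.00 vs. about 0.73) show that the lazy model is useless for catching fraud.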

The future of text classification: what’s next, what could go wrong?

Emerging trends and wild predictions

The buzzwords are everywhere—zero-shot classification, transformer models, multilingual NLP. But beneath the noise, several trends are already reshaping the landscape:

- Zero-shot learning: Models that can classify text into new categories using no labeled examples for those categories (few-shot variants need only a handful).

- Transformer architectures: BERT, GPT, and their variants deliver context-aware analysis that looked like sci-fi a decade ago.

- Multilingual support: Today’s tools handle dozens of languages out of the box, making global rollouts possible.

- Cloud/edge hybrid deployments: Organizations split data and workloads between cloud and local servers for speed and privacy.

Several shifts that sound like five-year predictions are already happening:

- Enterprise demand for explainability and auditability is driving a new arms race among vendors.

- Open-source collaborations are closing the gap with commercial tools.

- Real-time classification on massive text streams is finally scalable (sort of).

- Automated workflow integration—AI that triggers actions, not just labels.

Steps organizations can take now to future-proof text analysis:

- Invest in tools with robust, transparent model documentation.

- Build internal expertise—don’t outsource your “AI brain.”

- Insist on continuous training and data refresh cycles.

- Integrate feedback loops at every stage.

- Evaluate on real-world data from your own workflows, not just public benchmark datasets.

Ethics, regulation, and the dark side of automation

Text classification is powerful, but power cuts both ways. As organizations grow more reliant on automated decision-making, questions of privacy, surveillance, and algorithmic discrimination move from the margins to the front page.

Controversies and open questions:

- Who owns the data—and the models trained on it?

- How do you audit for bias across global, multilingual deployments?

- What’s the liability when automation goes wrong—missed alerts, wrongful denials, regulatory breaches?

- Where’s the line between helpful automation and invasive surveillance?

- How do you balance performance with explainability—especially when regulations demand both?

Companies like textwall.ai are tackling these challenges head-on, prioritizing transparency and user control at every layer. But the hard truth remains: No system is immune to the dark side of automation. Responsible deployment demands vigilance, humility, and a willingness to adapt as new risks emerge.

Supplement: adjacent frontiers and common misconceptions

Adjacent fields: topic modeling, sentiment analysis, and more

Text classification isn’t the only game in town. It’s part of an ecosystem of NLP techniques, each with its strengths and blind spots.

Definitions you can use:

- Topic modeling: Identifies the main themes or subjects in a collection of texts. Great for clustering but not for precise categorization.

- Sentiment analysis: Measures emotional tone—positive, negative, neutral. Essential for brand monitoring but easy to fool with sarcasm or irony.

- Entity recognition: Extracts names, locations, dates, and more. Vital for structured data extraction but doesn’t classify whole documents.

When to use each technique? Use text classification for clear-cut categories (spam/not spam), topic modeling for exploratory analysis, and sentiment/entity analysis for targeted insights or data enrichment. Combining these tools—ideally within platforms like textwall.ai—gives you a full-spectrum view of your textual universe.

Common pitfalls and how to dodge them

The biggest mistakes teams make aren’t technical—they’re strategic.

Red flags and warning signs:

- Deploying models trained on irrelevant or outdated data.

- Using default settings with no customization or domain tuning.

- Ignoring edge cases—sarcasm, slang, or multilingual input.

- Relying solely on vendor demos without real-world testing.

- Overlooking post-deployment monitoring and retraining.

- Skimping on annotation quality to save time or money.

- Failing to audit for bias or compliance.

Actionable advice: Always start with a pilot on a subset of your real data. Involve end users in reviewing outputs. Demand transparency from vendors and audit regularly. Most of all, treat text classification as an ongoing process, not a set-and-forget solution.
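One way to put “involve end users in reviewing outputs” into practice during a pilot is confidence-based routing: predictions above a threshold are applied automatically, the rest go to a person, and every human decision is kept as fresh labeled data for the next retraining cycle. The sketch assumes the classifier returns a label with a confidence score; the 0.85 threshold and all names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewRouter:
    threshold: float = 0.85                            # hypothetical cut-off; tune on pilot data
    review_queue: list = field(default_factory=list)   # (text, predicted label) awaiting review
    corrections: list = field(default_factory=list)    # (text, human label) pairs for retraining

    def route(self, text: str, label: str, confidence: float) -> str:
        """Auto-apply confident predictions; queue the rest for human review."""
        if confidence >= self.threshold:
            return label
        self.review_queue.append((text, label))
        return "needs_review"

    def resolve(self, text: str, human_label: str) -> None:
        """Record a reviewer's decision and keep it as new training data."""
        self.review_queue = [item for item in self.review_queue if item[0] != text]
        self.corrections.append((text, human_label))


router = ReviewRouter()
print(router.route("Card charged twice", "billing", 0.97))  # billing
print(router.route("Is this legit??", "fraud", 0.55))       # needs_review
router.resolve("Is this legit??", "phishing")
print(router.corrections)  # [('Is this legit??', 'phishing')]
```

Lowering the threshold sends fewer items to reviewers but lets more mistakes through unchecked; a pilot on real data is the place to find an acceptable balance.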

Conclusion: beyond the hype—your next move in the text classification arms race

Synthesizing everything above, the message is clear: Text classification software is not a magic bullet, but it is a game-changer when used with eyes open and expectations grounded in reality. The biggest wins come to those who understand both the capabilities and the traps—who pair powerful algorithms with a relentless focus on data quality, user feedback, and ethical deployment. As digital complexity explodes, these tools are no longer optional—they’re essential for survival.

The future of text classification is being written right now, not in some distant tomorrow. Its impact is already reshaping how we work, communicate, and decide. If you want to stay ahead, you need to master both the software and the strategic mindset that sets winners apart from the merely automated.

Your next move? Arm yourself with knowledge, demand transparency from your vendors, and never lose sight of the human element beneath every algorithm. The war on chaos can be won—but only if you choose your weapons wisely.

Quick reference: cheat sheet for surviving the chaos

- Define your use case and data sources before shopping for tools.

- Pilot on real (not vendor-provided) data sets.

- Demand transparency—know how your models are trained and updated.

- Audit for bias and compliance from day one.

- Prioritize explainability, especially for critical workflows.

- Integrate user feedback and corrections into your pipelines.

- Benchmark using multiple metrics, not just accuracy.

- Monitor and retrain models regularly—language changes, so must you.

- Don’t overlook costs: annotation, maintenance, compliance.

- Leverage expert platforms like textwall.ai for knowledge, guidance, and advanced tools.

Ready to cut through the noise? Explore advanced document analysis and text classification resources at textwall.ai—and start turning chaos into insight.

Sources

References cited in this article

- Towards Data Science: Brutal truths that NLP data scientists will not tell you (towardsdatascience.com)

- arXiv: Comparative Review of Text Classification (2025) (arxiv.org)

- Bank of England: Improving Text Classification (2025) (bankofengland.co.uk)

- Nyckel: What is text classification? (nyckel.com)

- LatentView: Real-world applications (latentview.com)

- ScienceDaily: Information overload as pollution (sciencedaily.com)

- Lausanne Movement: Information Overload (lausanne.org)

- Harvard Business Review: Reducing Information Overload (hbr.org)

- MDPI: Survey 2025 (mdpi.com)

- AI Multiple: NLP Use Cases (research.aimultiple.com)

- Label Your Data: Types of LLMs (labelyourdata.com)

- LibHunt: Top open-source projects (libhunt.com)

- ZonkaFeedback: Best text analysis tools (zonkafeedback.com)

- OpenLogic: Community vs. Commercial OSS (openlogic.com)

- Datamation: Data classification software (datamation.com)

- Kapiche: Best text mining software (kapiche.com)

- Convin: Guide to text analytics software (convin.ai)

- ResearchGate: NLP trends survey 2025 (researchgate.net)

- ITMediaLaw: Ethics and liability in automated decisions (itmedialaw.com)

- Dentons: AI regulation trends 2025 (dentons.com)

- ResearchGate: Core-set active learning (researchgate.net)

- Frontiers: LLMs for psychological text classification (frontiersin.org)
