Reduce Errors in Document Analysis Before They Quietly Explode

What if every “small” document error were a ticking bomb under your credibility, your compliance shield, or your bottom line? In the age of AI-powered everything, we love to believe that document analysis is finally bulletproof, that accuracy is just a matter of plugging in the latest tool. The raw truth: errors in document analysis are more insidious, persistent, and costly than most organizations want to admit. The quest to reduce them has never been more urgent, more complex, or more misunderstood. This article is your no-BS survival guide: ruthless truths, unflinching risks, and the new rules for accuracy in 2025. Whether you’re a data hawk, a legal eagle, or just someone tired of cleaning up other people’s messes, you’re about to get the clarity, and the edge, you need.

The real cost of errors in document analysis

Why errors still haunt modern document analysis

Despite a booming landscape of AI, machine learning, and automation, document analysis errors not only persist—they adapt. In 2024, a Forbes Technology Council report highlighted that even Fortune 500 firms lost millions due to overlooked contract clauses and misinterpreted regulatory documents, all thanks to seemingly minor analytical oversights. According to Zenphi, 2024, even with Intelligent Document Processing (IDP), “phantom errors”—those subtle mistakes that elude both human and machine review—continue to slip through, amplified by the scale and speed of modern workflows.

"Every mistake in document analysis is a story waiting to explode." — Jordan, industry observer

Consider the case of an international bank in 2023: a single undetected typo in a compliance filing triggered an audit, leading to a $4 million fine and weeks of negative media attention. Or the widely publicized government procurement disaster, where misclassified documents led to the misallocation of $26 million in public funds, sparking public outrage and lasting reputational scars. It’s not just about money. Simple errors cut deeper, damaging trust and exposing organizations to regulatory and legal chaos in a digital world that rarely forgives.

Hidden impacts: Beyond dollars and data

The financial impact of document analysis errors grabs headlines, but the shadows run deeper. When a single error surfaces, it doesn’t just drain your budget—it erodes trust, demolishes hard-earned reputations, and frays the nerves of your most loyal teams. According to Invensis, 2024, organizations report a 30% spike in churn among staff post-incident, as the psychological fallout of public mistakes lingers long after the initial crisis.

| Incident | Financial cost (USD) | Reputational cost (estimated) |
|---|---|---|
| International Bank Audit | $4 million | Major trust erosion, negative media |
| Government Procurement | $26 million | Parliamentary inquiry, stakeholder backlash |
| Legal Services Review | $800,000 | Loss of marquee clients, credibility hit |

Table 1: Comparison of financial vs. reputational costs in high-profile document analysis error incidents

Source: Original analysis based on Zenphi, 2024, Invensis, 2024

The psychological impact? It’s real. Teams wrestling with the aftershocks of an error often report increased anxiety, hypervigilance, and, paradoxically, more mistakes. The hidden consequences of document analysis errors include:

- Staff burnout: Long hours spent on remediation and re-review

- Operational slowdowns: Workflows grind to a halt amid investigations

- Loss of competitive edge: Focus shifts from innovation to damage control

- Vendor distrust: Partners demand extra checks, slowing business down

- Customer churn: Once trust is lost, regaining it is a brutal uphill battle

The true cost of errors? It’s measured in lost sleep, lost deals, and lost faith—in your team, your tech, and your brand.

The myth of zero-error analysis

Let’s shatter a feel-good myth: zero-error document analysis does not exist—and it never has. While technology and process improvements drive down error rates, the notion of absolute perfection is an expensive illusion. As Priya, a compliance lead at a global consultancy, puts it:

"Chasing zero-error is chasing a ghost." — Priya, compliance expert

Historically, the expectation of perfection emerged from a world where document volumes were manageable and review cycles lengthy. In today’s velocity-obsessed business climate, the pursuit of zero errors can actually backfire—leading to analysis paralysis, missed opportunities, and ballooning costs. According to Docsumo, 2024, modern best practice accepts that error reduction, not elimination, is the pragmatic, sustainable aim. The smarter focus: ferreting out errors early and minimizing their ripple effect.

Anatomy of document errors: What really goes wrong

Systematic vs. random vs. cognitive errors

To reduce errors in document analysis, you first need to identify their nature. Not all mistakes are created equal, and each type demands a different strategy. According to research from Adlib, 2025, document errors break down into three main categories:

- Systematic errors: Repeated, predictable mistakes rooted in flawed processes or templates. Example: Every contract template omits a key clause due to a bad master copy.

- Random errors: Unpredictable, isolated slip-ups, like a typo or missed digit. Example: A single data entry error in a batch of 10,000 invoices.

- Cognitive errors: Mistakes stemming from human bias, fatigue, or misinterpretation, often invisible to automated checks. Example: A reviewer misreads “net” as “gross,” skewing the entire analysis.

Cognitive bias is the hardest to eliminate because it’s wired into our brains; even the most advanced AI solutions can inadvertently mirror these human blind spots if their training data is skewed, as Anblicks, 2024 warns.
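One practical way to tell systematic errors from random ones is to look at how failures cluster. The Python sketch below assumes you already log each failed check as a (document ID, field) pair; the 20% cut-off and the field names are hypothetical and would need tuning for your own document mix. A field that fails across a large share of documents points to a template or process flaw, while scattered one-off failures look random.

```python
from collections import defaultdict


def classify_failures(failures, total_docs, systematic_share=0.2):
    """Label each failing field as 'systematic' or 'random'.

    failures: iterable of (doc_id, field) pairs, one per failed check.
    total_docs: number of documents in the batch that was checked.
    systematic_share: hypothetical cut-off; a field failing in at least
    this fraction of documents is treated as a process/template problem.
    """
    docs_per_field = defaultdict(set)
    for doc_id, field in failures:
        docs_per_field[field].add(doc_id)  # count each document once per field

    labels = {}
    for field, docs in docs_per_field.items():
        share = len(docs) / total_docs
        labels[field] = "systematic" if share >= systematic_share else "random"
    return labels


# Example: one clause missing from half the contracts, plus a single typo.
failures = [(i, "termination_clause") for i in range(50)] + [(7, "invoice_total")]
print(classify_failures(failures, total_docs=100))
# {'termination_clause': 'systematic', 'invoice_total': 'random'}
```

Cognitive errors rarely show up in a count like this, because the same misreading can look perfectly consistent to an automated check; that is where human review and bias audits come in.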

Invisible errors: The ones that slip past everyone

The most dangerous errors are the ones no one sees—until it’s too late. Subtle formatting discrepancies, contextually ambiguous terms, or silent OCR conversion failures can evade both human reviewers and AI systems. A legal firm in 2022 missed a one-word alteration in a scanned contract; the result was a multi-year court battle and a lost client. These invisible errors thrive in high-volume, fast-turnover environments.

Legal and compliance fields are especially vulnerable. According to Document Logistix, 2025, over 40% of compliance breaches in the past year traced back to subtle, undetected document errors—often buried several layers deep in digital files.
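Silent OCR failures often show up as letters sitting where only digits belong. The sketch below flags such numeric fields for a reviewer instead of trusting the conversion; the confusion map is a hypothetical starting point, not a list taken from any particular OCR engine, and the suggested correction is meant for a human to confirm, never to be written back automatically.

```python
import re

# Hypothetical map of letter-for-digit swaps seen in OCR output.
# Extend or trim it based on the errors your own OCR engine actually makes.
OCR_CONFUSABLES = {"O": "0", "o": "0", "l": "1", "I": "1", "S": "5", "B": "8"}

# Anything other than digits, separators, whitespace, or a minus sign is unexpected.
UNEXPECTED = re.compile(r"[^\d.,\s-]")


def check_numeric_field(value):
    """Flag a numeric field that contains non-numeric characters.

    Returns (suspicious, suggestion). The suggestion is for a human
    reviewer to confirm; it is not applied automatically.
    """
    suspicious = bool(UNEXPECTED.search(value))
    suggestion = "".join(OCR_CONFUSABLES.get(ch, ch) for ch in value)
    return suspicious, suggestion


print(check_numeric_field("1O,5OO"))  # (True, '10,500')
print(check_numeric_field("10,500"))  # (False, '10,500')
```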

How errors multiply: The cascade effect

A single unchecked error rarely stays alone. Document analysis mistakes are notorious for triggering chain reactions that snowball into full-blown crises. Take this timeline:

| Stage | Event | Consequence |
|---|---|---|
| Initial review | Minor data entry error in contract | Clause misinterpreted |
| Secondary check | Error passed, template reused | All future contracts inherit error |
| Final analysis | AI system “learns” from faulty data | Compounds future errors |
| Audit | Error finally detected | Costly rework, potential penalties |

Table 2: Timeline of a document analysis error escalating into a crisis

Source: Original analysis based on Adlib, 2025, Document Logistix, 2025

The key to breaking the cascade? Aggressive early detection and layered validation. Teams that routinely audit and validate outputs are far less likely to face catastrophic error multiplication according to aggregated industry data.
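The arithmetic behind layering is simple if you assume each check catches errors independently: an error survives only if every layer misses it, so the escape probability is the product of the individual miss rates. The catch rates below are invented for illustration. Independence is the optimistic case; as the dual-review failures described later in this article show, reviewers who share the same flawed assumption miss the same errors, and the real escape rate is higher.

```python
from math import prod


def escape_probability(catch_rates):
    """Chance that a single error slips past every layer.

    Assumes each layer catches errors independently of the others,
    which is the best case; correlated layers do worse.
    """
    for rate in catch_rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"catch rate out of range: {rate}")
    return prod(1.0 - rate for rate in catch_rates)


# Hypothetical catch rates: automated scan, template check, human spot-check.
print(round(escape_probability([0.7, 0.5, 0.6]), 3))  # 0.06
```

Three mediocre layers that each miss 30% to 50% of errors still let only about 6% through, which is why stacking independent checks beats perfecting any single one.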

Human vs. machine: Who makes more mistakes?

Old-school manual review: Its limits and blind spots

There’s an old joke in compliance: “To err is human; to really screw things up, you need a committee.” Human analysts have strengths (contextual judgment, intuition, adaptability), but manual document review is loaded with pitfalls. According to Invensis, 2024, top manual review pitfalls include:

- Fatigue-induced oversight, especially with high-volume tasks

- Inconsistent knowledge or training among staff

- Confirmation bias—seeing what you expect, not what’s there

- Slower processing, leading to deadline-driven shortcuts

Despite best intentions, human review has failed spectacularly in high-profile cases, such as the 2020 government report in which a data inconsistency went unnoticed for months, triggering a regulatory inquiry and public fallout.

AI and automation: Miracle cure or new risk?

AI-powered document analysis is a game-changer—but not always the cure-all it’s hyped to be. The promise: tireless, lightning-fast error detection, pattern recognition, and reduced human bias. But the reality is thornier. As Docsumo, 2024 notes, AI systems can:

- Inherit human bias from training data

- Misinterpret context-dependent terms (“charge” as a noun vs. verb)

- Miss errors in handwritten or poorly scanned documents

- Struggle with non-standard templates or unusual file formats

Unspoken AI errors include:

- “Garbage in, garbage out”—AI amplifies existing mistakes

- Blind spots in edge-case scenarios not covered in model training

- Overconfidence bias—users trust AI output without verification

According to Anblicks, 2024, organizations that treat AI as infallible often discover that automation simply shifts, rather than eliminates, the risk.

Hybrid models: The unsexy but winning approach

Here’s the truth few vendors want to admit: The best results come from a hybrid of human expertise and smart automation. Humans catch what machines miss—and vice versa. As Alex, an operations chief, says:

"The smart money bets on man plus machine." — Alex, operations executive

A major insurance company reduced critical errors by 60% when it paired an AI document analysis platform with targeted human audit. The hybrid model flagged subtle context errors that AI missed, while the tech caught repetitious formatting errors human eyes glazed over. This fusion—layered, adaptive, and brutally pragmatic—is the gold standard in error reduction.
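A common way to implement the human-plus-machine split is confidence-based routing: the model handles what it is sure about, people handle the rest, and a small random audit keeps the model honest. This is a sketch, not a description of the insurer's system; the `Extraction` record, the 0.9 threshold, and the 5% audit rate are hypothetical, and it assumes your extraction tool reports a per-field confidence score.

```python
import random
from dataclasses import dataclass


@dataclass
class Extraction:
    doc_id: str
    field: str
    value: str
    confidence: float  # model-reported score in [0, 1]; assumed to be available


def route(extractions, threshold=0.9, audit_rate=0.05, seed=None):
    """Split extractions into (human_queue, auto_accepted).

    Low-confidence items always go to a person. A random slice of
    high-confidence items is audited too, so over-confident model
    errors still have a chance of being seen.
    """
    rng = random.Random(seed)
    human_queue, auto_accepted = [], []
    for ex in extractions:
        if ex.confidence < threshold or rng.random() < audit_rate:
            human_queue.append(ex)
        else:
            auto_accepted.append(ex)
    return human_queue, auto_accepted


batch = [
    Extraction("C-101", "total", "12,400", 0.97),
    Extraction("C-102", "total", "1?,4O0", 0.41),
]
to_review, accepted = route(batch, audit_rate=0.0)
print([ex.doc_id for ex in to_review])  # ['C-102']
```

The random audit matters because confidence scores are themselves model output: without it, errors the model is confidently wrong about would never reach a human.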

The evolution of document analysis: From monks to machines

Historical roots: Copyists, clerks, and early automation

In the medieval era, document accuracy was an art honed by monastic scribes—slow, obsessive, and deadly serious. A single copyist’s slip could rewrite sacred law or royal decree. Over centuries, the task shifted from monks to government clerks, then to early office workers wielding typewriters and carbon paper.

| Era | Method | Typical Errors |
|---|---|---|
| Middle Ages | Handwritten copying | Scribe fatigue, misreading faded text |
| 19th Century | Manual clerical review | Data transcription mistakes |
| 20th Century | Typewriters, early OCR | Conversion errors, overlooked typos |
| 21st Century | AI-driven document analysis | Systematic and cognitive errors |

Table 3: Timeline of document analysis methods and error types

Source: Original analysis based on Document Logistix, 2025, Adlib, 2025

The first real “automation” came with the typewriter and, much later, with OCR (Optical Character Recognition). Each wave of innovation solved some problems but introduced new ones—proof that every advance brings its own brand of vulnerability.

The AI revolution: What changed, what didn’t

AI promised to drag document analysis out of the dark ages, delivering speed, scale, and previously unimaginable accuracy. Yet, many persistent issues remain. According to Zenphi, 2024, while IDP platforms can now process millions of documents daily with high precision, they still struggle with context ambiguity, unstructured formats, and adapting to new document types without extensive retraining.

Some old problems refuse to die. Errors of omission, context misinterpretation, and data drift are just as rampant in today’s digital world as in the candle-lit scriptoria of yesteryear.

Lessons from history: What we keep getting wrong

Organizations repeat the same mistakes, generation after generation—ignoring the brutal lessons of the past. Case in point: a multinational in 2023 trusted unvalidated OCR output in a crucial contract review, echoing a 19th-century clerk’s transcription blunder. The result? A seven-figure settlement.

Top 7 lessons ignored in modern document analysis:

- Never trust a single layer of review—errors multiply in silence.

- Templates are not infallible—update and validate regularly.

- Cognitive fatigue is real—rotate reviewers and check workloads.

- New tech ≠ fewer mistakes—validate AI output like human work.

- Clear context is king—ambiguity breeds error.

- Feedback loops are not optional—learn from every slip.

- Error tolerance is not weakness—admit and adapt, or repeat history.

To actually learn from past failures, organizations must embed ruthless transparency and relentless validation into their document ecosystems.

Common myths about reducing errors in document analysis

AI is always objective (and other lies)

There’s a persistent myth that AI is unbiased—pure, mathematical, immune to agenda. Reality check: every algorithm is a child of its data and its designers. According to a comprehensive analysis by Adlib, 2025, documented bias incidents in AI-powered document processing have doubled in the last two years, often due to skewed training sets.

"Every algorithm has a parent with an agenda." — Taylor, AI ethics consultant

Real-life bias? A leading AI tool misclassified women-led contracts as “lower priority” due to historical data. Another system misread regional legal terms, leading to regulatory trouble for an entire division.

Manual review is foolproof

If two sets of eyes are good, four must be better, right? Not so fast. Overconfidence in manual review leads to overlooked errors, especially in repetitive tasks. Illusions of control include:

- “Experienced reviewers never miss errors”—demonstrably false

- “Dual-review always catches mistakes”—errors can reinforce each other if based on the same flawed assumption

- “Manual checks are immune to bias”—humans are the original source of bias

Dual-review failures abound: in 2021, a major financial firm’s dual-certified team missed a unit mismatch in regulatory filings, triggering fines and a public apology.

More automation means fewer errors

Automating everything is tempting, but uncritical automation amplifies certain errors rather than reducing them. Automation-related error concepts include:

- Error propagation: One automated process passes undetected errors downstream, multiplying the impact.

- Context blindness: Automated systems miss meaning because they lack situational awareness.

- Training-data bias: AI trained on flawed or biased data produces systematic misanalysis.

The smart approach: blend automation with manual oversight, targeted audits, and feedback loops for maximum accuracy.

Advanced strategies to slash document analysis errors

Layered validation: Catching what others miss

Layered validation—the art and science of multiple, independent error checks—is the closest you’ll get to bulletproofing your document analysis. Here’s how it works:

- Initial automated scan: Use AI to flag obvious errors and inconsistencies.

- Template validation: Check document structures and required fields against up-to-date templates.

- Human spot-check: Random sampling by experienced reviewers to catch contextual errors.

- Cross-validation: Compare outputs from independent systems or analysts.

- Final audit: Audit critical documents before sign-off, prioritizing high-risk areas.
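Most of these layers can be expressed as independent check functions that each return a list of findings. The sketch below is one hypothetical way to wire up the automated scan, template validation, and cross-validation layers in Python; the field names, the required-field template, and the second extraction pass (`total_second_pass`) are assumptions for illustration. The human spot-check and final audit are left out because they are done by people rather than functions.

```python
# Hypothetical template: fields every contract must carry.
REQUIRED_FIELDS = {"party_a", "party_b", "effective_date", "total"}


def consistency_check(doc):
    """Automated scan: line items must add up to the stated total."""
    items, total = doc.get("line_items", []), doc.get("total")
    if items and total is not None and abs(sum(items) - total) > 0.005:
        return [f"line items sum to {sum(items):.2f}, total says {total:.2f}"]
    return []


def template_check(doc):
    """Template validation: required fields are present and non-empty."""
    return [f"missing field: {f}" for f in sorted(REQUIRED_FIELDS)
            if doc.get(f) in (None, "")]


def cross_validate(doc):
    """Cross-validation: compare the total from two independent extractors."""
    first, second = doc.get("total"), doc.get("total_second_pass")
    if first is not None and second is not None and first != second:
        return [f"extractors disagree on total: {first} vs {second}"]
    return []


LAYERS = [
    ("automated scan", consistency_check),
    ("template", template_check),
    ("cross-validation", cross_validate),
]


def run_layers(doc, layers=LAYERS):
    """Run every layer, even after a failure, and tag findings by layer."""
    findings = []
    for name, check in layers:
        findings.extend((name, issue) for issue in check(doc))
    return findings


contract = {
    "party_a": "Acme Ltd", "party_b": "Globex", "total": 160.0,
    "line_items": [100.0, 50.0], "total_second_pass": 150.0,
}
for layer, issue in run_layers(contract):
    print(f"[{layer}] {issue}")
# [automated scan] line items sum to 150.00, total says 160.00
# [template] missing field: effective_date
# [cross-validation] extractors disagree on total: 160.0 vs 150.0
```

Running every layer, instead of stopping at the first failure, is the design choice that matters here: each layer is meant to catch what the others miss, so a document should collect findings from all of them before anyone decides what to fix.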

A leading logistics firm slashed critical error rates by 54% after implementing a five-layer validation protocol, as documented in Document Logistix, 2025. The result: faster audits, fewer client complaints, and watertight compliance.

The role of context-aware analysis

Context is the secret weapon in high-accuracy document analysis. Without it, even the smartest AI misses nuance. According to Adlib, 2025, AI platforms leveraging Retrieval-Augmented Generation (RAG) and context-aware analysis outperform traditional review by up to 37% in identifying high-risk ambiguities—especially in legal and compliance documents.

When context-aware AI is paired with human expertise, organizations achieve not only better error detection but also richer insights—turning raw data into actionable intelligence.
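A full retrieval-augmented pipeline is beyond a short example, but the core idea (never judge an ambiguous term without its surroundings) can be shown with a much simpler, swapped-in technique: keyword-in-context extraction. This is not RAG. It is a hypothetical pre-review step that pulls every occurrence of a watch-listed term, such as “net” versus “gross,” together with its neighboring text, so a reviewer or a downstream model sees the context before deciding. The watch list and window width are assumptions.

```python
import re

# Hypothetical watch list: terms whose meaning depends on surrounding text.
AMBIGUOUS_TERMS = {"net", "gross", "charge", "material"}


def keyword_in_context(text, terms=AMBIGUOUS_TERMS, width=30):
    """Return (term, snippet) pairs so a reviewer sees each term in context."""
    alternation = "|".join(re.escape(t) for t in sorted(terms))
    # \b word boundaries keep "net" from matching inside "network".
    pattern = re.compile(rf"\b({alternation})\b", re.IGNORECASE)
    hits = []
    for match in pattern.finditer(text):
        start = max(0, match.start() - width)
        end = min(len(text), match.end() + width)
        hits.append((match.group(1).lower(), text[start:end]))
    return hits


clause = "Fees are payable net of tax. A late charge applies after 30 days."
for term, snippet in keyword_in_context(clause, width=15):
    print(f"{term!r}: ...{snippet}...")
```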

Continuous improvement: Feedback loops that actually work

No error reduction system is static. The best teams build feedback loops into every stage of document analysis—auditing mistakes, updating templates, and retraining models. Best practices include:

- Routine post-mortems: Analyze every major error, not just the outcome but the process

- Automated alerting: Trigger reviews when error patterns emerge

- Staff retraining: Regularly update team knowledge on new document formats

- Model updates: Retrain AI models with new error cases and edge scenarios

High-performing teams treat feedback as a living system, not a checkbox on a compliance form. According to Zenphi, 2024, continuous improvement outpaces one-off overhaul by a factor of two in sustained error reduction.
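The “automated alerting” practice can be as small as a rolling error rate with a threshold. In the sketch below, the window size, threshold, and minimum sample count are hypothetical values; the class simply records whether each reviewed document contained an error and reports when the recent rate crosses the line.

```python
from collections import deque


class ErrorRateAlert:
    """Signal when the recent error rate crosses a threshold.

    window: how many recent documents to consider.
    threshold: error rate that should trigger a review (hypothetical value).
    min_samples: avoid alerting on too little data.
    """

    def __init__(self, window=200, threshold=0.02, min_samples=50):
        self.outcomes = deque(maxlen=window)  # True = error found in that document
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, had_error):
        """Log one reviewed document; return True if a review should be triggered."""
        self.outcomes.append(bool(had_error))
        if len(self.outcomes) < self.min_samples:
            return False
        rate = sum(self.outcomes) / len(self.outcomes)
        return rate > self.threshold


monitor = ErrorRateAlert(window=100, threshold=0.05, min_samples=20)
for _ in range(20):
    monitor.record(False)
print(monitor.record(True))  # False: 1 error in 21 documents is about 4.8%
print(monitor.record(True))  # True: 2 errors in 22 documents is about 9.1%
```

The bounded `deque` makes old outcomes fall out of the window automatically, so the alert reflects recent behavior rather than a lifetime average that would hide a new problem.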

Practical tools and workflows for error reduction

Building your error-busting toolkit

Modern document analysis demands a toolkit built for accuracy, speed, and adaptability. Must-have features in error-reduction tools include:

- AI-powered OCR and NLP for context-rich extraction

- Template management and rapid updating

- End-to-end workflow automation

- Real-time validation and error alerts

- Integration capabilities with other business systems

- Audit trails and versioning for traceability

TextWall.ai stands out as a prime example, offering advanced document analysis through AI-driven summarization, extraction, and categorization. Its adaptive algorithms and instant feedback position it among the most trusted resources for error reduction in document-heavy fields.

Workflow design: From chaos to control

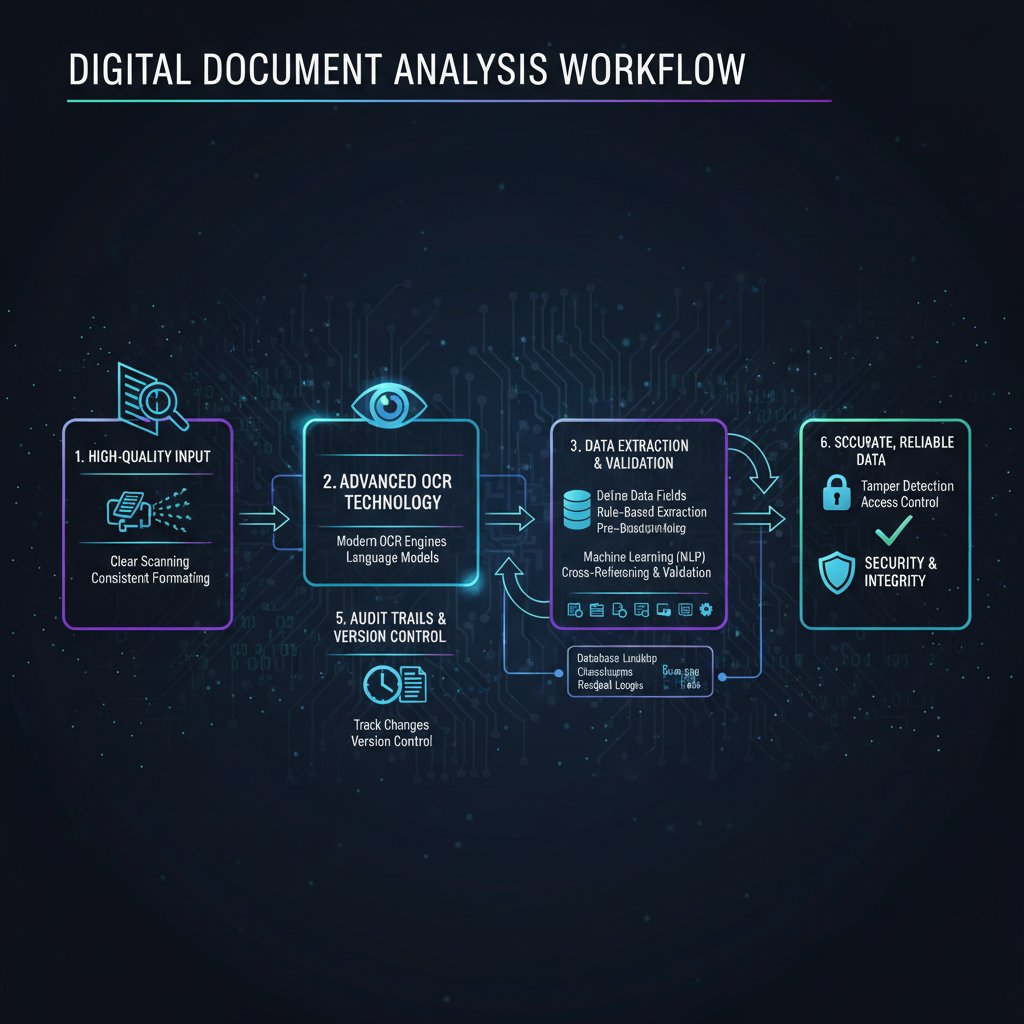

Mapping and optimizing your document analysis workflow is non-negotiable. Here’s a step-by-step guide:

- Document intake: Standardize formats and gather metadata up front.

- Pre-processing: Auto-extract key fields and validate for completeness.

- Initial analysis: Run AI analysis, flagging anomalies.

- Manual review: Assign high-risk or complex cases to senior staff.

- Layered validation: Implement multi-stage validation as described above.

- Output audit: Review final outputs and record the results in the audit trail.

- Feedback integration: Feed errors and exceptions back into system improvements.
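The first steps of this workflow map naturally onto a chain of stages that each leave a record behind, which is what makes a later output audit possible. The sketch below covers intake through routing to manual review; every stage function and field name is a hypothetical placeholder for your own tooling, and the per-stage SHA-256 fingerprint is one assumed way to keep an audit trail, not a required standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def intake(doc):
    """Standardize metadata up front (hypothetical 'source' field)."""
    return {**doc, "source": str(doc.get("source", "unknown")).strip().lower()}


def preprocess(doc):
    """Mark whether the fields later stages depend on are present."""
    return {**doc, "complete": all(doc.get(k) for k in ("doc_id", "text"))}


def analyze(doc):
    """Stand-in for the AI analysis step: flag anomalies."""
    anomalies = [] if doc["complete"] else ["incomplete document"]
    return {**doc, "anomalies": anomalies}


def assign_review(doc):
    """Send anything flagged to senior staff."""
    return {**doc, "needs_senior_review": bool(doc["anomalies"])}


STAGES = [("intake", intake), ("pre-processing", preprocess),
          ("initial analysis", analyze), ("manual review routing", assign_review)]


def run_workflow(doc, stages=STAGES):
    """Run each stage in order and keep one audit-trail entry per stage.

    Each entry stores a SHA-256 fingerprint of the document state after
    the stage, so a later change to that state shows up on re-hashing.
    """
    trail = []
    for name, stage in stages:
        doc = stage(doc)
        state = json.dumps(doc, sort_keys=True).encode("utf-8")
        trail.append({
            "stage": name,
            "at": datetime.now(timezone.utc).isoformat(),
            "sha256": hashlib.sha256(state).hexdigest(),
        })
    return doc, trail


result, trail = run_workflow({"doc_id": "C-7", "text": "", "source": " Email "})
print(result["needs_senior_review"], [entry["stage"] for entry in trail])
# True ['intake', 'pre-processing', 'initial analysis', 'manual review routing']
```

Each stage returns a new dictionary instead of mutating the input, so the state recorded for one stage cannot be silently altered by the next.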

Team roles and communication are critical—siloed teams and unclear responsibilities multiply error risk. Best-in-class organizations embed cross-functional review and rapid feedback into every stage.

Checklist: Are you set up for error-free analysis?

Ready for a reality check? Here’s the priority checklist for reducing document analysis errors:

- Are all document templates validated and up to date?

- Is there a multi-layered error detection process in place?

- Do you regularly audit and retrain AI models?

- Are staff trained in both manual and automated review?

- Are error rates tracked and reported transparently?

- Is there a rapid escalation process for detected errors?

- Do you integrate feedback from every incident into workflow improvements?

- Are you regularly benchmarking error rates against industry standards?

- Is your document management cloud-based and secure?

- Have you outsourced complex or edge-case tasks to experts where needed?

Use this checklist not as a one-time “tick the box” exercise, but as a living tool for continuous improvement.

Case studies: When errors changed everything

Disaster by document: Costly mistakes and hard lessons

A multinational outsourcing provider in 2022 overlooked a critical clause in client onboarding documents. The result was a data breach affecting 100,000+ records, a $2.5 million penalty, and lost contracts worth $18 million. Before the incident, error rates hovered at 2.1%; post-crisis, after overhauling validation processes, rates plummeted to 0.4%.

| Metric | Before overhaul | After overhaul |
|---|---|---|
| Error rate (%) | 2.1 | 0.4 |
| Lost contracts ($M) | 18 | 0 |
| Regulatory penalties ($M) | 2.5 | 0 |

Table 4: Before-and-after comparison—error rates and outcomes in a real-world incident

Source: Original analysis based on Invensis, 2024, Zenphi, 2024

The key takeaway: early investment in robust validation pays off—both financially and reputationally.
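To put the before-and-after error rates in perspective, the relative reduction works out as follows. The 10,000-document batch in the second line is a hypothetical volume, used only to make the percentages concrete.

```latex
\text{relative reduction} = \frac{2.1\% - 0.4\%}{2.1\%} = \frac{1.7}{2.1} \approx 0.81

\text{flawed documents per } 10{,}000:\quad 10{,}000 \times 0.021 = 210
  \;\longrightarrow\; 10{,}000 \times 0.004 = 40
```

In other words, roughly four out of five errors that used to reach the output no longer did after the overhaul.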

When smart analysis saved the day

In contrast, a regional healthcare provider implemented context-aware AI (plus regular human audits) just in time to catch a potentially disastrous misclassification of patient data. The error was flagged, corrected, and reported internally within hours: no breach, no fines, no public fallout. The team celebrated not just their “save” but the processes that made it possible.

Strategies that made the difference:

- Real-time monitoring and alerts

- Regular retraining of both AI models and staff

- A no-blame culture encouraging rapid error reporting

Gray areas: When errors aren’t so clear

Not all errors are black and white. Sometimes, ambiguity reigns:

- Is a missing data field “an error” if it’s not relevant to the case?

- When two reviewers disagree on context, who’s “right”?

- What about minor formatting inconsistencies that don’t affect outcomes?

Real-life examples of “is it really an error?”:

- Legal contracts with regional spelling variations

- Medical forms with multiple “correct” data formats

- Policy documents whose meaning shifts with new legislation

Organizations navigate these gray zones by codifying definitions, documenting decisions, and—crucially—maintaining flexibility in their analysis frameworks to adapt as needs evolve.
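Codifying definitions can be as plain as a lookup table that records each gray-area decision, plus parsers that accept every format your organization has agreed is correct. Everything in this sketch (the document types, issue names, severities, and accepted date layouts) is a hypothetical example. The point is that the decision lives in one reviewed place instead of in each reviewer's head, and anything not yet decided defaults to human review.

```python
from datetime import date, datetime

# Hypothetical, organization-specific decisions about gray areas.
# Each entry records how an issue type is treated for a document type.
POLICY = {
    ("contract", "regional_spelling"): "ignore",
    ("contract", "missing_required_field"): "error",
    ("medical_form", "date_format_variant"): "ignore",
    ("medical_form", "missing_required_field"): "error",
    ("policy", "superseded_legal_reference"): "review",
}

# Date layouts this (hypothetical) organization accepts as equally correct.
# Day-first only: mixing day-first and month-first layouts would make
# dates such as 05/03/2024 ambiguous. Note that %b depends on the locale.
ACCEPTED_DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d %b %Y")


def severity(doc_type, issue, default="review"):
    """Look up the agreed treatment; undecided cases go to a human."""
    return POLICY.get((doc_type, issue), default)


def parse_date(text):
    """Accept any agreed layout; return None if none of them match."""
    for fmt in ACCEPTED_DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date()
        except ValueError:
            continue
    return None


print(severity("contract", "regional_spelling"))  # ignore
print(severity("invoice", "regional_spelling"))   # review
print(parse_date("2024-03-05") == parse_date("05/03/2024") == date(2024, 3, 5))  # True
```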

The future of reducing errors in document analysis

Emerging tech: Hype vs. reality

Next-gen AI, advanced NLP, and cloud-native platforms are reshaping the document analysis landscape, bringing real-time error alerts and conversational analytics into everyday workspaces. But which trends are promising, and which are just vapor?

Promising but overhyped trends include:

- “One-click error-free analysis”—oversimplifies complex problems.

- Fully autonomous document review—still requires oversight.

- Universal templates—rarely work across industries or jurisdictions.

- AI “explainability” features—often lag user needs or regulatory demands.

The reality: most organizations thrive by balancing innovation with hard-won best practices.

Ethical fault lines: Bias, privacy, and responsibility

Automated document analysis isn’t just a technical challenge—it’s an ethical minefield. Key ethical concepts include:

- Algorithmic bias: The risk that AI systems amplify human prejudices embedded in data.

- Data privacy: Ensuring sensitive information remains confidential throughout automated processing.

- Accountability: Making sure someone, human or machine, owns up to mistakes.

Responsible error reduction means regular audits for bias, strict access controls, and clear escalation paths for error reporting. Organizations that prioritize compliance and transparency not only reduce errors but also earn trust in a skeptical world.

Training your team for error resilience

Tech is only half the battle; your people make or break your document analysis accuracy. Steps to building an error-aware culture:

- Educate staff on error types and real-world consequences.

- Encourage open discussion of mistakes—no blame, just solutions.

- Build cross-functional review teams (analysts, IT, legal).

- Implement regular retraining and upskilling sessions.

- Celebrate “good catches” and share lessons learned.

Organizations like the healthcare provider mentioned earlier have transformed error rates by making error resilience a shared mission—not just a checkbox for compliance.

Supplementary: Adjacent topics and next steps

Document validation vs. document verification: What’s the difference?

Validation and verification sound interchangeable, but they serve distinct roles in error reduction.

- Validation: Confirms that a document meets required structure and content specifications; for example, all fields are filled and formats are correct.

- Verification: Confirms that the content is accurate and authentic; for example, checking that data matches known sources or that signatures are genuine.

Validation is about format and completeness; verification is about truth and trust. In real-world scenarios, validation might flag an incomplete contract, while verification would detect a forged signature.
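In code, the difference shows up as two separate functions: validation needs only the document itself, while verification needs an independent source of truth to compare against. The ID format, field names, and reference ledger below are hypothetical; signature checks and other authenticity tests would also belong in `verify()` but are out of scope for a short sketch.

```python
import re

# Hypothetical invoice ID layout used for the structural check.
INVOICE_ID = re.compile(r"INV-\d{6}")
REQUIRED = ("invoice_id", "vendor", "amount")


def validate(doc):
    """Validation: is the document complete and correctly formatted?"""
    problems = [f"missing {f}" for f in REQUIRED if doc.get(f) in (None, "")]
    invoice_id = doc.get("invoice_id")
    if invoice_id and not INVOICE_ID.fullmatch(invoice_id):
        problems.append(f"invoice_id {invoice_id!r} does not match INV-nnnnnn")
    return problems


def verify(doc, reference):
    """Verification: does the content agree with a trusted source?

    reference: records keyed by invoice_id, e.g. an export from an
    accounting system (hypothetical).
    """
    record = reference.get(doc.get("invoice_id"))
    if record is None:
        return ["no matching record in the reference source"]
    return [f"{key} mismatch: document says {doc.get(key)!r}, record says {value!r}"
            for key, value in record.items() if doc.get(key) != value]


invoice = {"invoice_id": "INV-000123", "vendor": "Acme", "amount": 1200.0}
ledger = {"INV-000123": {"vendor": "Acme", "amount": 1250.0}}
print(validate(invoice))        # []
print(verify(invoice, ledger))  # ['amount mismatch: document says 1200.0, record says 1250.0']
```

The invoice passes validation cleanly and still fails verification, which is exactly the gap between “well-formed” and “true” described above.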

Beyond analysis: How to act on what you find

Effective document analysis is meaningless unless it drives change. Steps to implement findings:

- Translate insights into actionable recommendations.

- Assign accountability for corrections and process changes.

- Track the implementation of changes with clear metrics.

- Regularly review and refine interventions based on real outcomes.

- Integrate feedback into future document analysis protocols.

The feedback loop from analysis to action is the linchpin of sustainable error reduction.

Common pitfalls to avoid in your error-reduction journey

Warning signs and traps for teams striving to reduce errors in document analysis:

- Over-reliance on a single tool or process

- Failure to retrain models or update templates

- Ignoring staff feedback and frontline observations

- Treating error reduction as a “one and done” project

- Undervaluing the complexity of document context

Red flags include rising error rates with new workflow changes, staff disengagement, and persistent audit findings.

Recovering from setbacks means owning mistakes, communicating openly, and adapting quickly—turning disasters into catalysts for lasting improvement.

Conclusion

Reducing errors in document analysis is not a matter of buying the right software—or hiring the right analyst—or trusting in shiny AI. It’s a relentless, multidisciplinary pursuit: ruthless audit, layered validation, smart automation, and adaptive human teams. As the stories, data, and hard-won lessons in this article reveal, the new rules for accuracy in 2025 are built on transparency, context, and constant evolution. The myth of zero-error analysis is dead. In its place: a reality where relentless improvement, not perfection, is king. Ready to take charge of your document analysis accuracy? Start with brutal honesty, the right toolkit, and the courage to keep learning. The edge is yours—if you’re willing to fight for it.

Sources

References cited in this article

- Zenphi (zenphi.com)

- Invensis (invensis.net)

- Docsumo (docsumo.com)

- Adlib (adlibsoftware.com)

- Document Logistix (document-logistix.com)

- Anblicks (anblicks.com)

- Secure Data Recovery (securedatarecovery.com)

- Intalio (intalio.com)

- Ripcord (blog.ripcord.com)

- Vendavo (vendavo.com)

- Ocrolus (ocrolus.com)

- MIT Press (direct.mit.edu)

- ScienceDirect (sciencedirect.com)

- Polestar LLP (polestarllp.com)

- Intelligent Document Processing News (intelligentdocumentprocessing.com)

- ICDAR (icdar2024.net)

- Harvard Business Review (documentllm.com)

- Carstens (carstens.com)

- PharmacistPK (pharmacistpk.com)

- Ideagen (ideagen.com)

- ScienceDirect (sciencedirect.com)

- PubMed (pubmed.ncbi.nlm.nih.gov)

- NIST Forensic Guidelines (expertinstitute.com)

- Springer DAS 2024 (link.springer.com)

- Wiley Engineering Reports 2024 (onlinelibrary.wiley.com)

- PubMed Cascade Analysis (pubmed.ncbi.nlm.nih.gov)

- Wikipedia (en.wikipedia.org)

- Pharmuni (pharmuni.com)

- Frontiers (frontiersin.org)

- Uni Roma 2024 (iris.uniroma1.it)

- Taylor & Francis 2025 (tandfonline.com)

- Lighthouse Global (lighthouseglobal.com)

- EDRM (edrm.net)

- GetThematic (getthematic.com)

- Addepto (addepto.com)

- SpringerOpen (journalofbigdata.springeropen.com)

- Document Crunch (documentcrunch.com)

- Forbes (forbes.com)

- BuildPrompt (buildprompt.ai)

- Consepsys (consepsys.com)

- Rossum.ai (rossum.ai)

- ComplexDiscovery (complexdiscovery.com)

- LinkedIn Case Study (linkedin.com)

- HaystackID (haystackid.com)
