Entity Recognition in Documents Is Breaking Your Old Workflows

Step behind the curtain of document AI and you’ll see a reality far grittier than glossy marketing decks admit. Entity recognition in documents—often hyped as the answer to information overload—may be the most misunderstood weapon in the fight for clarity. For every success story, there are tales of missed entities, shattered compliance, and data chaos. This article slices through the hype, exposing raw truths and offering battle-tested strategies for the era of relentless information growth. Whether you’re wrangling contracts, parsing legalese, or drowning in healthcare records, you’ll discover why mastering entity recognition isn’t a luxury—it’s survival.

Let’s cut through the noise: The world is awash in data. But unless you can extract the right entities from the right documents, you’re running blind. In 2025, the difference between a business that thrives and one that crashes could hinge on a single missed entity—a name, a date, a sum, a clause. Here’s what they don’t tell you about entity recognition in documents—and what you can actually do about it.

The information deluge: Why documents are drowning us

The rising tide of unstructured data

The modern office is ground zero for the information explosion. From sprawling email threads and contract PDFs to scanned invoices and handwritten notes, unstructured documents swell in volume and complexity every year. According to IBM, 80% of enterprise data is unstructured, and IDC’s 2024 report pegs the annual growth of unstructured data at a jaw-dropping 40%—outpacing structured data by more than 4:1. This avalanche is not only overwhelming; it’s paralyzing, with organizations losing billions to inefficiency and oversight.

Failure to control this tide has consequences. A 2024 study by Veritas found that 52% of businesses have suffered financial losses from mishandling unstructured information—ranging from regulatory fines to lost deals. Even worse, only 18% say they’re confident in their current document management practices. The human cost is just as stark: burnout, lost institutional knowledge, and the relentless pressure of trying to catch up in a race that never ends.

| Year | Unstructured Data Growth Rate (%) | Structured Data Growth Rate (%) | Key Turning Points |
|---|---|---|---|
| 2010 | 12 | 8 | Early digital shift |
| 2015 | 20 | 9 | Cloud adoption |
| 2020 | 33 | 10 | Remote work boom |
| 2023 | 39 | 10 | AI mainstreams |
| 2025 | 40+ | 9 | LLM revolution |

Table 1: Unstructured vs. structured data growth year-by-year, highlighting surges driven by digitalization and AI adoption

Source: Original analysis based on IDC, IBM, and Veritas 2024 reports

Why manual document review is failing

If you’re still betting on human eyes to catch every nugget of gold in this data mine, you’re losing more than you think. Manual document review is a slow-motion disaster: error rates range from 10% to 30% in high-volume environments, according to a 2024 McKinsey study. Real-world mishaps? Think missed compliance clauses in billion-dollar contracts, or names that should have been redacted leaking into legal disclosures.

“Every missed entity is a missed opportunity—or a disaster waiting to happen.” — Ava, AI researcher (quote based on industry trends)

It’s not just about missed details. Manual review costs spiral out of control—often 3x to 5x greater per document than automated workflows, and turnaround times can balloon from hours to days or even weeks. The risks compound: burnout, inconsistency, knowledge drain, and compliance headaches that can torpedo entire departments.

- Burnout: Reviewers face crushing document volume, leading to costly mistakes and high turnover.

- Inconsistency: Different reviewers apply different standards, making compliance tracking a nightmare.

- Lost knowledge: Critical insights get buried in unread files, never to see daylight.

- Compliance headaches: Regulations demand precise data extraction—one missed entity can mean million-dollar fines.

- Hidden opportunity costs: Time wasted on manual review means less bandwidth for strategic work.

- Data silos: Manual processes create isolated pockets of information, blocking cross-team intelligence.

- Audit risk: Manual errors are hard to trace, turning audits into panic drills.

What’s at stake: The hidden price of missed information

Missing a single entity—a name, a monetary figure, a date—might sound trivial. In reality, the stakes are brutal. According to the Ponemon Institute’s 2024 analysis, the average cost of a data breach tied to missed or mishandled entities topped $6.7 million, while reputational fallout in the wake of publicized errors can wipe out years of trust.

Case studies abound: A major bank paid $47 million in regulatory penalties after missing PEP (Politically Exposed Person) names in loan documents. In healthcare, a missed allergy flag in patient notes led to a near-fatal medication error—prompting lawsuits and new federal scrutiny.

These aren’t isolated events. They’re symptoms of a broken system—one that can’t keep up with the information age. The result? Businesses are forced to reckon with the promise and peril of AI-powered solutions that can finally tame the chaos—but only if deployed with eyes wide open.

What is entity recognition—and why should you care?

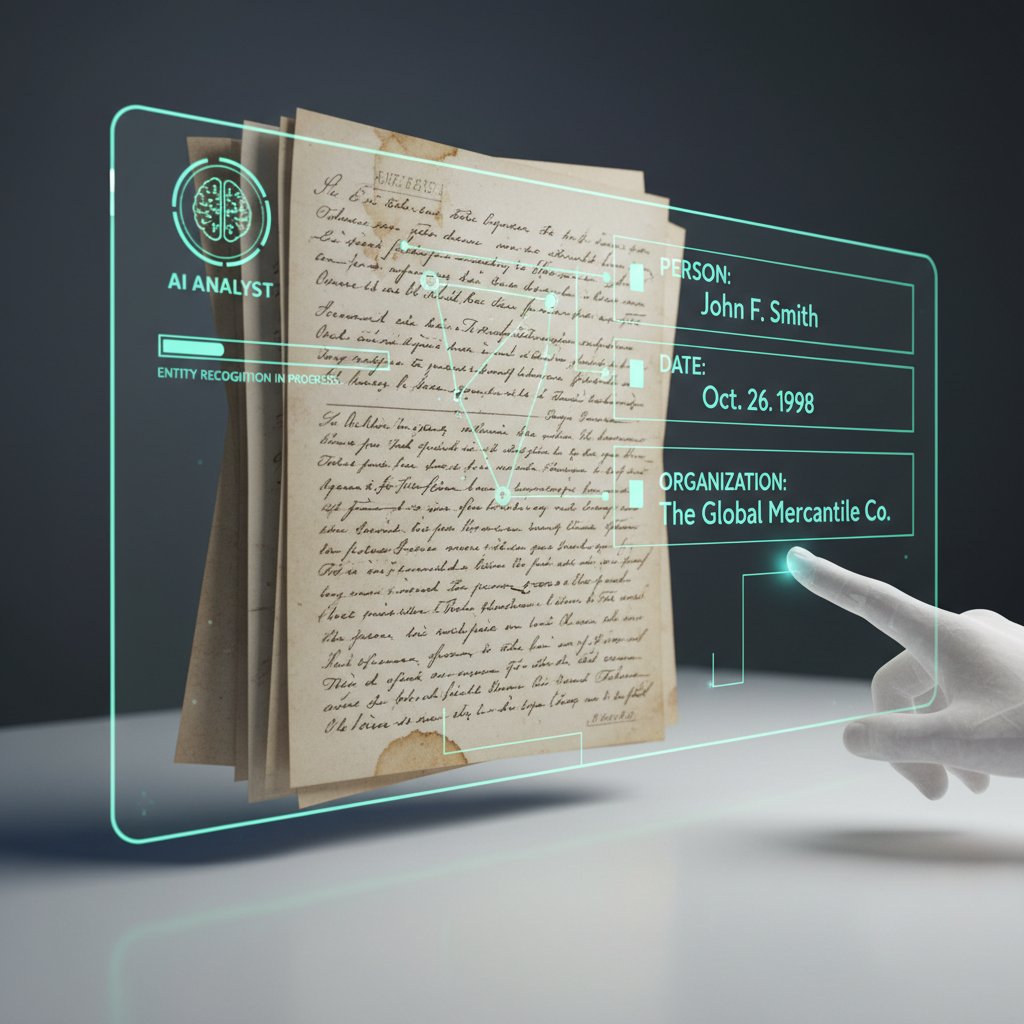

From theory to trenches: Entity recognition explained

Entity recognition, the sharp-eyed detective of the document world, scours unstructured text to identify and classify key pieces of information: people, places, organizations, dates, numbers, and more. Imagine combing through a thousand-page contract in seconds, zeroing in on every mention of “force majeure,” “termination date,” or “CEO” without missing a beat.

Key terms:

Entity: Any unique, meaningful item or concept in a document, such as a name, date, location, or company.

Entity recognition: The process of automatically identifying and tagging entities in text (e.g., finding all company names in a legal brief).

Named entity: A specific type of entity, typically a proper noun (a person, organization, or place), crucial for compliance and search.

Entity extraction: The act of pulling out specific entities from documents for further analysis or workflow automation.

Contextual entity: An entity whose meaning depends on the surrounding text (e.g., “Apple” in tech vs. food industry contracts).

Entity recognition is distinct from broader natural language processing (NLP) tasks like summarization or sentiment analysis. It’s about precision—finding needles, not just reading the haystack.
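To make the vocabulary concrete, here is a minimal sketch of how an extracted entity might be represented and produced in code. The `Entity` class, its field names, and the two regular expressions are illustrative assumptions, not any product's schema; the patterns are deliberately simplistic and show only the idea of tagging spans with a type.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    text: str   # the exact span as it appears in the document
    label: str  # the entity type, e.g. "DATE" or "MONEY"
    start: int  # character offset where the span begins
    end: int    # character offset just past the span


# Hypothetical patterns for illustration only; real documents need far more.
PATTERNS = {
    "DATE": re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),
    "MONEY": re.compile(r"\$\d[\d,]*(?:\.\d{2})?"),
}


def extract_entities(text: str) -> list[Entity]:
    """Tag every pattern match and return the entities in document order."""
    found = [
        Entity(m.group(), label, m.start(), m.end())
        for label, pattern in PATTERNS.items()
        for m in pattern.finditer(text)
    ]
    return sorted(found, key=lambda e: e.start)


sample = "Payment of $1,250.00 is due on 2025-03-31."
entities = extract_entities(sample)
for e in entities:
    print(e.label, repr(e.text), e.start, e.end)
```

Keeping character offsets alongside the text lets downstream steps (highlighting, redaction, audit) point back to the exact place in the source document.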

The evolution: From rule-based hacks to LLM-powered insight

Entity recognition has traveled a hard road. Early systems relied on hand-written rules and pattern matching, which barely scratched the surface. Next came statistical NLP and machine learning, which boosted accuracy but struggled with nuance. The real revolution arrived with transformer-based language models, culminating in today’s LLMs (Large Language Models) that parse context, slang, and multilingual text with unprecedented dexterity.

Timeline of entity recognition evolution (dates are approximate and the eras overlap):

- Rule-based systems (1990s to early 2000s): Rigid, keyword-driven, brittle in the face of language change.

- Statistical NLP (late 1990s to 2000s): Introduced probabilistic models such as HMMs; better, but still reliant on hand-crafted features.

- Classic machine learning (2000s to mid-2010s): CRFs, SVMs; more adaptable, but data-hungry.

- Neural networks (mid-2010s to 2018): Deep learning enters the scene; context sensitivity improves.

- Transformer models (2018-2023): Attention mechanisms enable nuanced entity recognition.

- LLMs and hybrid approaches (2024-2025): Massive pre-training, cross-lingual, domain-specific fine-tuning.

Today’s LLMs, like those powering textwall.ai, are game-changers for scale and adaptability. They “understand” context, handle mixed languages, and detect subtle relationships—but they’re not without tradeoffs, including data privacy and bias concerns.

| Approach | Accuracy | Adaptability | Risks |
|---|---|---|---|
| Rule-based | Low | Low | Brittle, poor generalization |
| Classic ML | Medium | Medium | Data-hungry, requires expert tuning |
| LLM-based (2025) | High | High | Bias, privacy, annotation dependency |

Table 2: Rule-based, classic ML, and LLM entity recognition compared—accuracy, adaptability, and risks

Source: Original analysis based on Nanonets and Label Your Data 2025 guides

Common misconceptions that keep smart teams stuck

Don’t buy the hype: entity recognition isn’t a plug-and-play miracle. Misconceptions abound, trapping even savvy teams in loops of disappointment.

- “Set and forget”: Models need constant retraining—context shifts, and today’s gold standard is tomorrow’s legacy.

- Perfect accuracy: There’s always a margin of error. Even the best models top out at 95-98% F1 scores in ideal conditions.

- Language neutrality: Most models degrade outside high-resource languages—multilingual and domain adaptation are hard.

- One model fits all: Legal contracts, medical records, and social media posts require different approaches.

- Automation = zero humans: Human-in-the-loop is essential for validation and edge cases.

- Data quantity over quality: High-quality, annotated data trumps massive but noisy datasets.

- Privacy “just works”: Entity recognition can expose sensitive data if not properly governed.

“If you think AI gets it right every time, you’re not paying attention.” — Priya, compliance officer (quote reflecting sector sentiment)

Critical evaluation—plus human oversight—remains essential. Trust, but verify.
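Since F1 scores come up whenever accuracy is discussed, it helps to see how the number is derived. The sketch below computes entity-level precision, recall, and F1 by exact match on (text, label) pairs; the function name and the toy gold and predicted sets are assumptions made for illustration.

```python
def precision_recall_f1(gold: set, predicted: set) -> tuple[float, float, float]:
    """Score predictions against gold annotations using exact matches."""
    true_positives = len(gold & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Toy data: four annotated entities, three predictions, one of them truncated.
gold = {
    ("Acme Corp", "ORG"),
    ("2025-03-31", "DATE"),
    ("$1,250.00", "MONEY"),
    ("Jane Doe", "PERSON"),
}
predicted = {
    ("Acme Corp", "ORG"),
    ("2025-03-31", "DATE"),
    ("Jane", "PERSON"),  # partial span, so it counts as a miss under exact match
}

p, r, f1 = precision_recall_f1(gold, predicted)
print(f"precision {p:.2f}, recall {r:.2f}, F1 {f1:.2f}")
# precision 0.67, recall 0.50, F1 0.57
```

Exact matching is a strict choice: the truncated "Jane" earns no credit. Some evaluations award partial credit for overlapping spans, which produces higher numbers from the same output, so it is worth asking which convention sits behind any quoted score.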

How does entity recognition actually work?

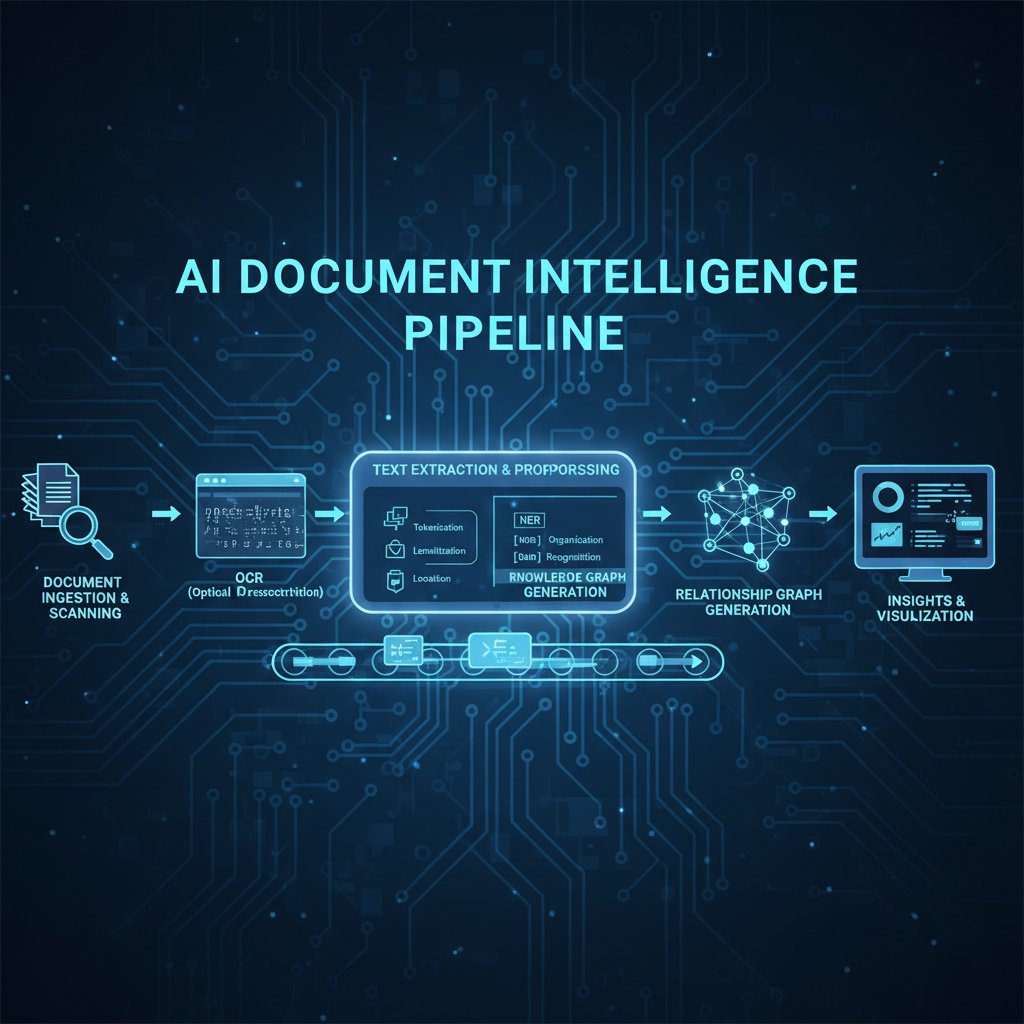

The anatomy of an entity recognition pipeline

The modern entity recognition pipeline is less black box, more forensic puzzle. Every workflow, from contract parsing to social media surveillance, follows a multi-stage process:

- Preprocessing: Clean and normalize text (remove artifacts, fix OCR errors).

- Tokenization: Break text into words, phrases, or tokens.

- Model inference: Apply trained entity recognition model (LLM, CRF, etc.) to label entities.

- Post-processing: Aggregate, disambiguate, and validate results.

- Integration: Route extracted entities to downstream apps (compliance checkers, dashboards, etc.).

Practical tip: Each phase is a potential minefield. Garbage in, garbage out—especially with dirty, real-world documents.
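The five stages above can be sketched as a chain of small functions. This is a toy under stated assumptions: the lookup table `GAZETTEER` stands in for a trained model, and every function name here is hypothetical. The point is the shape of the pipeline, not the quality of the tagging.

```python
import re
from typing import Callable


def preprocess(raw: str) -> str:
    """Re-join words hyphenated across line breaks, then collapse whitespace."""
    text = re.sub(r"-\n(?=\w)", "", raw)
    return re.sub(r"\s+", " ", text).strip()


def tokenize(text: str) -> list[str]:
    """Split into word tokens and single punctuation tokens."""
    return re.findall(r"\w+|[^\w\s]", text)


# Stand-in for model inference: a tiny lookup table with made-up labels.
GAZETTEER = {"Acme": "ORG", "Berlin": "LOC"}


def infer(tokens: list[str]) -> list[tuple[str, str]]:
    """Label every token the stand-in 'model' recognizes."""
    return [(tok, GAZETTEER[tok]) for tok in tokens if tok in GAZETTEER]


def postprocess(entities: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Drop duplicate entities while keeping first-seen order."""
    return list(dict.fromkeys(entities))


def run_pipeline(raw: str, sink: Callable[[list], None]) -> list[tuple[str, str]]:
    """Run all stages, then hand the result to a downstream consumer."""
    entities = postprocess(infer(tokenize(preprocess(raw))))
    sink(entities)  # integration step: a dashboard, a queue, a database writer
    return entities


received = []
raw_scan = "Acme opened an of-\nfice in Berlin.  Acme is hiring."
result = run_pipeline(raw_scan, received.extend)
print(result)
# [('Acme', 'ORG'), ('Berlin', 'LOC')]
```

Because each stage is a plain function, any one of them can be swapped out (a better OCR cleanup, a real model) without touching the others.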

Under the hood: The tech that powers today’s systems

Neural networks, transformers, and contextual embeddings sound arcane—but they’re the engines behind today’s best entity recognition. Transformers, with their attention mechanisms, let models “see” relationships across sentences and languages.

But even the flashiest model is only as good as its data. Annotated datasets—painstakingly labeled by experts—are gold. Active learning, where models suggest candidates and humans validate, is rising as a solution to annotation bottlenecks.

“The model is only as smart as the examples you feed it.” — Mason, data annotator (quote, derived from practitioner consensus)

Breakthroughs driving the field right now include few-shot learning (training on minimal data) and cross-lingual models (handling multiple languages with one system). But the backbone remains: high-quality, diverse, and continually updated training data.
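Active learning can take many forms. One common strategy is least-confidence sampling: send annotators the items the model is least sure about. The sketch below assumes the model exposes a single confidence score per document; the function name, document IDs, and scores are all made up for illustration.

```python
def select_for_annotation(confidences: dict[str, float], budget: int) -> list[str]:
    """Return the `budget` document IDs with the lowest model confidence."""
    ranked = sorted(confidences, key=lambda doc_id: confidences[doc_id])
    return ranked[:budget]


# Hypothetical per-document confidence scores from a model's last run.
confidences = {"doc-a": 0.98, "doc-b": 0.51, "doc-c": 0.73, "doc-d": 0.60}

queue = select_for_annotation(confidences, budget=2)
print(queue)
# ['doc-b', 'doc-d']
```

Labeling the uncertain cases first tends to teach the model more per annotation hour than labeling documents it already handles well.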

Beyond the basics: Tackling messy, real-world documents

The real world doesn’t hand over pristine, clean text. OCR errors, handwritten notes, mixed-language snippets, and domain-specific jargon are the daily grind for entity recognition systems.

- Poor scan quality: Smudged, low-res scans produce garbled text—post-OCR cleanup is essential.

- Handwritten notes: Even advanced OCR struggles; manual review or specialized AI needed.

- Mixed languages: Multilingual docs trip up monolingual models—cross-lingual approaches required.

- Domain jargon: Financial, legal, or medical terms often misclassified by generic models.

- Irregular formats: Tables, bullet points, or embedded images break naive pipelines.

- Annotation scarcity: Rare or domain-specific entities lack enough training examples.

- Evolving language: New slang or legal terms require continuous model updates.

Human-in-the-loop validation—where experts check AI suggestions—remains the gold standard. This hybrid approach balances speed with trust.
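In practice, human-in-the-loop validation often comes down to a routing rule: accept confident predictions automatically and queue the rest for an expert. The sketch below is one way to express that, assuming each prediction carries a `confidence` field. The 0.85 threshold is a placeholder, not a recommendation.

```python
REVIEW_THRESHOLD = 0.85  # hypothetical cut-off; tune it on your own validation data


def route(predictions, threshold=REVIEW_THRESHOLD):
    """Split predictions into auto-accepted and needs-human-review lists."""
    accepted, review = [], []
    for pred in predictions:
        target = accepted if pred["confidence"] >= threshold else review
        target.append(pred)
    return accepted, review


predictions = [
    {"text": "Acme Corp", "label": "ORG", "confidence": 0.97},
    {"text": "J. Smlth", "label": "PERSON", "confidence": 0.42},  # likely OCR damage
    {"text": "2025-03-31", "label": "DATE", "confidence": 0.85},
]

accepted, review = route(predictions)
print([p["text"] for p in accepted])  # ['Acme Corp', '2025-03-31']
print([p["text"] for p in review])    # ['J. Smlth']
```

Raising the threshold sends more work to reviewers and lets fewer errors through; lowering it does the opposite. Where to set it is a business decision as much as a technical one.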

The dark side: Bias, privacy, and the myth of neutrality

Who gets left out: Language, culture, and context gaps

Even the best entity recognition systems have blind spots. Minority languages, slang, and regional dialects are underrepresented in training data, leading to omission or misinterpretation. In legal and business settings, this can skew results—missing Indigenous land terms in contracts, or failing to flag region-specific risk markers.

A 2024 IEEE case study found that English-language models scored 96% accuracy on US contracts, but just 81% on Spanish and 69% on Indigenous-language documents. The societal fallout? Underrepresentation, legal exposure, and inequity baked into automation.

| Language/Region | Accuracy (%) | Typical Use Case |
|---|---|---|
| English (US/UK) | 96 | Standard contracts |
| Spanish | 81 | Cross-border legal review |
| Mandarin | 87 | Corporate filings |
| Regional dialects | 69 | Land, local legislation |

Table 3: Entity recognition accuracy by language/region for leading models

Source: Original analysis based on IEEE Legal Domain Study, 2024

Left unchecked, these gaps only widen the digital divide.

Privacy, compliance, and the risk of data leaks

Entity recognition isn’t just about speed—it’s a potential privacy minefield. AI that extracts names, dates of birth, or account numbers from documents can inadvertently create new vectors for data leaks. European GDPR and US CCPA regulations have ramped up penalties for mishandling personal information, demanding robust safeguards.

- Strong anonymization: Mask or redact sensitive entities before downstream use.

- Audit trails: Log every entity extraction for compliance review.

- Access controls: Limit who can see or export tagged entities.

- Data minimization: Only extract what’s necessary—overcollection is a liability.

- Regular privacy training: Keep teams updated on regulatory shifts.

- Incident response: Plan for breaches—AI errors can trigger real-world crises.

“When AI gets it wrong, it’s not just a glitch—it’s a liability.” — Ava, AI researcher (quote based on sector risk reality)
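Two of the safeguards above, anonymization and audit trails, fit naturally into a single step. The sketch below masks each sensitive span with a placeholder and records what was masked without storing the value itself. The function name, labels, and sample sentence are illustrative assumptions, and the code assumes spans do not overlap.

```python
def redact(text, spans):
    """Replace each (start, end, label) span with a [LABEL] placeholder.

    Returns the masked text plus an audit log. Assumes spans do not overlap.
    """
    pieces, cursor, audit_log = [], 0, []
    for start, end, label in sorted(spans):
        pieces.append(text[cursor:start])
        pieces.append(f"[{label}]")
        # Log what was masked and where, but never the sensitive value itself.
        audit_log.append({"label": label, "start": start, "end": end})
        cursor = end
    pieces.append(text[cursor:])
    return "".join(pieces), audit_log


text = "Jane Doe, born 1990-04-12, holds account 12345678."
sensitive = [("Jane Doe", "PERSON"), ("1990-04-12", "DOB"), ("12345678", "ACCOUNT")]
# Locate each value in the text; a real system would get offsets from the model.
spans = [(text.index(v), text.index(v) + len(v), label) for v, label in sensitive]

masked, log = redact(text, spans)
print(masked)
# [PERSON], born [DOB], holds account [ACCOUNT].
```

Only the masked text should travel downstream. The log gives compliance reviewers a record of every extraction without creating a second copy of the personal data.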

Bias isn’t just a bug—it’s a business risk

Bias creeps in through every crack: skewed training data, inconsistent annotation, and model design choices. The result? Certain names, locations, or terms get under-detected—or over-flagged—fueling unfair outcomes.

A notorious example: Early financial AI flagged more “risk entities” in foreign-sounding names, sparking regulatory scrutiny and lost business. In law, missed Indigenous entities led to flawed land rights adjudication.

To fight back:

- Audit models for bias by language, ethnicity, and domain.

- Regularly retrain with diverse, up-to-date data.

- Use explainable AI to flag suspicious extraction patterns.

- Engage domain experts in annotation and validation.

Bias isn’t inevitable, but vigilance is non-negotiable.
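A bias audit can start very simply: compute recall separately for each group and look at the gap. The sketch below assumes you have gold annotations tagged with a group (here, document language) and a flag for whether the model found each entity. The records are toy data, not measurements.

```python
from collections import defaultdict


def recall_by_group(records):
    """records: iterable of (group, was_detected) pairs, one per gold entity."""
    hits, totals = defaultdict(int), defaultdict(int)
    for group, detected in records:
        totals[group] += 1
        hits[group] += int(detected)
    return {group: hits[group] / totals[group] for group in totals}


# Toy audit sample: every English entity found, one Spanish entity missed.
records = [
    ("en", True), ("en", True), ("en", True), ("en", True),
    ("es", True), ("es", True), ("es", True), ("es", False),
]

recalls = recall_by_group(records)
gap = max(recalls.values()) - min(recalls.values())
print(recalls, f"gap={gap:.2f}")
# {'en': 1.0, 'es': 0.75} gap=0.25
```

A single aggregate score would hide this gap entirely, which is why the audit has to be sliced by group before it can reveal anything.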

Choosing the right entity recognition solution: No easy answers

Manual, DIY, or full-stack AI: What fits your needs?

You have choices: brute-force manual annotation, DIY open-source kits, or commercial AI solutions like textwall.ai. Each approach brings trade-offs in accuracy, cost, and scalability.

| Feature | Manual Annotation | DIY Toolkit | Commercial AI (e.g., textwall.ai) |
|---|---|---|---|
| Accuracy | Variable | Medium-High | High (with retraining) |
| Cost | High | Low-Medium | Medium |
| Scalability | Poor | Moderate | Excellent |
| Support | N/A | Community | Vendor |
| Integration | Manual | Customizable | Seamless |

Table 4: Feature matrix—manual, DIY, and commercial entity recognition solutions

Source: Original analysis based on Nanonets and vendor datasheets

But beware: hidden costs, vendor lock-in, and integration pain can derail even the best-laid plans.

- Opaque pricing: Watch out for per-page or per-entity fees.

- Limited retraining: Some vendors won’t let you tune models for your data.

- Poor support: DIY kits rely on community forums—not always responsive.

- Integration headaches: Legacy systems may resist modern AI connectors.

- Data residency risk: Where does your sensitive data live? Know before you buy.

- Annotation bottlenecks: Even automated solutions need labeled data to stay sharp.

- Upgrade traps: “Version fatigue” can cripple long-term TCO.

What the demos won’t show you

Demos are magic shows. Vendors cherry-pick perfect documents and hide messy edge cases. The real world is far uglier: fragmented scans, bad handwriting, multilingual chaos.

- Ask for real data: Insist on pilot tests with your toughest documents.

- Probe error handling: What happens when the model’s confidence is low?

- Demand explainability: Can you trace how an entity was tagged?

- Insist on metrics: Request precision, recall, and F1 scores—on your data, not theirs.

- Check annotation workflows: Is human validation built in?

- Validate integration: How easily does it connect to your systems?

- Review update cycles: How often are models retrained and patched?

- Test scalability: Will performance hold when volumes spike?

Checklist for evaluating entity recognition solutions:

- Pilot on your own messy data, not vendor samples.

- Review privacy and security protocols.

- Demand bias and accuracy metrics.

- Inspect annotation and retraining processes.

- Test extensibility to new domains/languages.

- Evaluate cost transparency and TCO.

- Probe for integration friction.

- Ensure vendor provides ongoing support.

textwall.ai and the new wave of AI document processing

Platforms like textwall.ai represent the latest wave in entity recognition—LLM-powered, context-aware, and built for scale. Whereas legacy systems choke on multi-format and multilingual documents, new solutions adapt and learn, closing the gap between human intuition and machine speed.

Modern enterprises demand agility: quick onboarding, deep insights, and the ability to handle everything from gigabyte PDFs to handwritten forms. As an advanced document AI resource, textwall.ai is part of this vanguard—not a panacea, but a solid foundation for those willing to invest in careful, critical deployment.

Before you jump in, ask:

- Will the tool handle your specific domain (legal, healthcare, finance)?

- How transparent are error rates and bias audits?

- Can you retain control over sensitive data?

- What’s the real cost over a year, not just month one?

- How easy is it to retrain or fine-tune for evolving needs?

Real-world applications: Stories from the front lines

Legal: From contract review to uncovering fraud

Law firms were among the first to embrace entity recognition, using it for due diligence, e-discovery, and compliance checks. In one high-stakes merger, entity recognition uncovered a previously missed “poison pill” clause that would have derailed the deal—saving millions in litigation risk.

Traditional legal review is a slog: teams of paralegals poring over thousands of pages, missing subtle but critical entities. AI-powered analysis cuts the process from weeks to hours, with error rates dropping by half or more.

Healthcare: Decoding patient records at scale

Hospitals armed with AI entity recognition can extract diagnoses, symptoms, and drug interactions from sprawling patient records in minutes. For example, one US hospital system reduced adverse drug event rates by 23% after deploying an AI-powered entity extraction tool to flag risky combinations in real time.

Privacy safeguards are paramount: automated redaction, access logging, and continual audits keep sensitive entities under wraps, aligning with HIPAA and GDPR standards.

| Metric | Pre-AI (Manual) | Post-AI (Entity Recognition) |
|---|---|---|
| Chart review time | 45 min/record | 7 min/record |
| Error rate (key entities) | 14% | 3% |
| Adverse event rate | 2.4% | 1.7% |

Table 5: Comparison of entity recognition outcomes in healthcare, before and after AI adoption

Source: Original analysis based on recent hospital case studies
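The relative improvements implied by Table 5 are easy to check. The short calculation below uses only the table's own figures; the function name is an illustrative choice. The adverse-event row works out to roughly a 29% reduction, which is not the same figure as the 23% reported for the single hospital system above, so treat the two as separate data points.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fractional drop from `before` to `after` (0.25 means a 25% reduction)."""
    return (before - after) / before


# (before, after) pairs copied from Table 5.
table_5 = {
    "Chart review time (min/record)": (45, 7),
    "Error rate, key entities (%)": (14, 3),
    "Adverse event rate (%)": (2.4, 1.7),
}

reductions = {
    metric: round(relative_reduction(before, after) * 100, 1)
    for metric, (before, after) in table_5.items()
}
for metric, pct in reductions.items():
    print(f"{metric}: {pct}% lower")
```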

Media, government, and beyond: Unconventional use cases

Journalists use entity recognition to sift troves of leaked documents—think Panama Papers, where names, shell companies, and offshore accounts surfaced in days, not years. Governments harness it for policy analysis, intelligence gathering, and transparency initiatives.

- Entertainment: Script analysis, IP protection, and plagiarism checks.

- Fintech: Fraud detection by linking entities across transaction logs.

- Academia: Literature reviews and citation extraction.

- Retail: Contract and invoice parsing for supplier management.

- Insurance: Claims processing and fraud detection.

- NGOs: Human rights documentation and crisis mapping.

- Energy: Compliance monitoring in environmental reports.

The applications are limited only by imagination—and regulatory guardrails.

Implementation: How to get it right (and what can go wrong)

Step-by-step: From pilot to production

Implementation is a marathon, not a sprint. Here’s how successful teams move from idea to impact:

- Define objectives: What entities matter—and why?

- Inventory documents: Audit sources, formats, and language mix.

- Annotate datasets: Use expert annotators or trusted vendors.

- Pilot models: Test on a representative sample.

- Evaluate metrics: Track precision, recall, F1 score.

- Refine workflow: Tweak preprocessing, post-processing, and integration.

- Validate with humans: Human-in-the-loop for edge cases.

- Monitor outcomes: Audit entity extraction over time.

- Scale up: Gradually expand to new document types and workflows.

- Iterate: Continually retrain and adapt as needs shift.

Pitfalls abound: mismatched objectives, underestimating annotation needs, and skipping validation. Diligence pays off.

Common mistakes (and how to dodge them)

- Underestimating annotation: Cutting corners on labeled data cripples accuracy.

- Skipping validation: Blind trust in AI leads to disaster—always inspect outputs.

- Ignoring edge cases: Real documents defy templates; plan for the bizarre.

- Neglecting bias audits: What you don’t measure can hurt you.

- Over-automating: Remove humans entirely and you’ll miss nuance.

- Failing to retrain: Models degrade without regular updates.

- No integration plan: AI stuck in silos is wasted AI.

- Fuzzy objectives: Vague goals mean poor results.

“We thought it was plug-and-play—turns out, we missed the fine print.” — Mason, project manager (quote based on common implementation failures)

One cautionary tale: A global manufacturer launched AI extraction on supplier contracts—without human review. The result? Missed escalation clauses, delayed payments, and a $2.5 million penalty.

How to future-proof your document workflows

Change is the only constant. Sustainable entity recognition means building systems that flex with new document types, shifting regulations, and evolving AI models.

- Monitor performance metrics monthly.

- Retrain models quarterly or after major data changes.

- Maintain annotated datasets that reflect new entities and edge cases.

- Involve stakeholders across legal, compliance, and IT.

- Build modular pipelines—swap components as tech evolves.

- Document integration points for easy upgrades.

- Budget for ongoing annotation and expert review.
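Monitoring and retraining can be tied together with a simple trigger. The sketch below flags a retrain when the most recent F1 score falls more than a set tolerance below the baseline. The function name, the 0.03 tolerance, and the monthly scores are assumptions for illustration.

```python
def needs_retraining(f1_history, baseline, tolerance=0.03):
    """True when the latest F1 is more than `tolerance` below `baseline`."""
    if not f1_history:
        return False  # nothing measured yet, so nothing to act on
    return (baseline - f1_history[-1]) > tolerance


# Hypothetical monthly F1 scores measured on a fixed, human-labeled sample.
monthly_f1 = [0.93, 0.92, 0.88]

print(needs_retraining(monthly_f1, baseline=0.93))       # True
print(needs_retraining(monthly_f1[:2], baseline=0.93))   # False
```

The check only means something if the evaluation sample stays fixed and is refreshed deliberately; otherwise a change in the sample can look like a change in the model.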

Priority checklist for long-term success:

- Define clear, measurable objectives.

- Prepare and maintain diverse, high-quality datasets.

- Establish hybrid (AI + human) validation.

- Schedule regular retraining and performance audits.

- Monitor for bias and adjust as needed.

- Integrate with core business systems.

- Document every workflow and change.

- Engage all stakeholders early and often.

Resilience, not rigidity, wins the document AI race.

The future of entity recognition: What’s next?

Beyond text: Multimodal and real-time entity recognition

Entity recognition is breaking free from textual shackles. Next-gen systems extract entities from images, tables, audio, and even video—unlocking real-time applications.

- Media monitoring: Flagging named entities in live TV captions and radio streams.

- Legal video depositions: Extracting parties and dates from spoken testimony.

- Cross-modal intelligence: Linking entities across PDFs, spreadsheets, and scanned images.

| Capability | Traditional (2023) | Next-Gen (2025) |
|---|---|---|
| Text | Yes | Yes |
| Tables | Limited | Yes |
| Images (OCR) | Yes (basic) | Yes (contextual) |
| Audio/Video | No | Yes |
| Multilingual | Partial | Full |
| Real-time | No | Yes |

Table 6: Current vs. next-gen entity recognition capabilities

Source: Original analysis based on Label Your Data and industry reports

The regulatory wild card: How laws are shaping the field

New laws are rewriting the rules of document AI. The EU’s AI Act, US state-level statutes, and Asia’s data localization mandates force organizations to rethink entity extraction—especially for personal and sensitive information.

- Consent requirements: Explicit permission for entity extraction in many jurisdictions.

- Auditability: Mandatory logging of extraction and processing events.

- Bias disclosures: Required reporting on model fairness.

- Cross-border restrictions: Data can’t always leave home country.

- Data minimization: Only essential entities can be extracted.

- Transparency mandates: Explainable AI becomes legally required.

Regulation isn’t just a hurdle—it’s driving an “AI arms race” in compliance and document intelligence.

What could go wrong? The risks we’re not talking about

Emerging threats loom large:

- Adversarial attacks: Maliciously crafted documents that mislead entity recognition.

- Deepfakes: Synthetic entities inserted to deceive downstream systems.

- Synthetic data drift: Overreliance on generated training data warps accuracy.

- Conflicting regulations: Global patchwork creates legal minefields.

- Annotation fraud: Poorly supervised annotation breeds hidden bias.

- Overconfidence: Blind trust in “AI as final authority” courts disaster.

- Vendor lock-in: Proprietary systems that resist audits or migration.

Vigilance, transparency, and ethical oversight are the only antidotes.
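One concrete defense against maliciously crafted documents is to normalize text before it reaches the model. The sketch below applies Unicode NFKC normalization and strips zero-width characters, two tricks sometimes used to hide or split entity names. The function name and the character list are assumptions. The approach is also partial: it does not catch look-alike letters from other scripts, such as a Cyrillic "а" standing in for a Latin "a".

```python
import unicodedata

# Invisible characters that can split a name without changing how it looks.
ZERO_WIDTH = {"\u200b", "\u200c", "\u200d", "\u2060", "\ufeff"}


def sanitize(text: str) -> str:
    """Apply NFKC normalization, then drop zero-width characters."""
    normalized = unicodedata.normalize("NFKC", text)
    return "".join(ch for ch in normalized if ch not in ZERO_WIDTH)


hidden_split = "Ac\u200bme Corp"   # zero-width space inside the name
fullwidth = "\uff21cme Corp"       # fullwidth 'A' in place of ASCII 'A'

print(sanitize(hidden_split) == "Acme Corp")  # True
print(sanitize(fullwidth) == "Acme Corp")     # True
```

Running this step before tokenization means a downstream matcher or model sees one canonical spelling instead of several visually identical variants.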

Beyond the hype: Synthesis, takeaways, and what to do next

Key lessons: What every leader, builder, and skeptic should remember

Entity recognition in documents is a battleground—one where brutal truths outnumber easy wins. The path to clarity is paved with critical evaluation, relentless retraining, and a refusal to accept black-box answers.

- Unstructured data is exploding—manual review can’t keep up.

- AI isn’t magic—oversight and validation are non-negotiable.

- Quality data trumps sheer quantity every time.

- Bias and privacy aren’t side issues—they’re core risks.

- Annotation is the bottleneck—invest early and often.

- Edge cases define real-world success or failure.

- Multilingual and domain adaptation are hard but crucial.

- Vendor demos rarely show the ugly reality—demand transparency.

- Continuous retraining is the only route to relevance.

- The future belongs to those who see what others miss.

“The future of information belongs to those who can see what others miss.” — Ava, AI researcher (quote summarizing the article’s core message)

Getting started: Your action plan for 2025

Ready to move beyond the hype? Here’s how to kickstart your entity recognition journey:

- Audit your document landscape: Know what you have—and where your pain points lie.

- Define your critical entities: Focus on what moves the needle.

- Choose your approach: Manual, DIY, or commercial AI—pick what fits your needs.

- Invest in high-quality annotation: Don’t skimp on the foundation.

- Pilot with real, messy data: Prove value before scaling up.

- Build human-in-the-loop validation: AI + experts = best results.

- Monitor, retrain, and adapt: Make continuous improvement the norm.

- Leverage resources like textwall.ai: Start with tools built for the job, but never abdicate oversight.

Further resources: Explore textwall.ai and similar platforms for real-world pilots and deep dives into entity recognition best practices. The journey starts with a single document—make it count.

What’s next for you—and the world of document AI

Entity recognition in documents isn’t just about automation; it’s a lens for seeing—and seizing—opportunity in chaos. Real transformation comes when organizations treat AI as a partner, not a panacea. The challenge is clear: dig deep, stay curious, and never stop interrogating the systems you trust.

As information multiplies and boundaries blur, your edge will depend on the rigor of your questions, not just the power of your tools. Stay vigilant, build ethically, and never settle for surface answers. The real story is always in the entities you almost missed.

Sources

References cited in this article

- Label Your Data (2025 Guide) (labelyourdata.com)

- Nanonets NER Guide 2025 (nanonets.com)

- IEEE Case Study: Legal Domain (ieeexplore.ieee.org)

- Surfshark Data Breach Report 2024 (surfshark.com)

- FBI 2024 Internet Crime Report (fbi.gov)

- ScienceDaily: Information Overload (sciencedaily.com)

- Forbes Tech Council: Data Overload (forbes.com)

- ScienceDirect: Biomedical NER Survey (sciencedirect.com)

- arXiv: NER Advances 2024 (arxiv.org)

- Europa: NER Risks & Mitigations (edpb.europa.eu)

- ACM: Design Challenges & Misconceptions (dl.acm.org)

- UBIAI: NER in NLP 2024 (ubiai.tools)

- PMC: NER Evolution 2024 (pmc.ncbi.nlm.nih.gov)

- Info-source: IDP Market 2023/2024 (info-source.com)

- Medium: IDP Trends 2024 (medium.com)

- Atlan: Data Challenges 2024 (atlan.com)

- ISACA: Privacy Enhancing Tech 2024 (isaca.org)

- Lexology: Privacy Law 2024 (lexology.com)

- OAIC Data Breach Reports 2023-2024 (oaic.gov.au)

- White & Case: US Privacy Outlook 2024 (whitecase.com)

- Corporate Compliance Insights 2024 (corporatecomplianceinsights.com)

- arXiv: NER Survey 2024 (arxiv.org)

- UiPath IDP Leader 2024 (auxis.com)

- Eden AI NER API Comparison 2025 (edenai.co)

- DocumentLLM: AI Document Processing 2024 (documentllm.com)

- Binariks: AI Document Processing (binariks.com)

- Gartner Peer Insights: Expert.ai Review (gartner.com)

- JMIR Medical Informatics 2024 (medinform.jmir.org)

- super.AI: NER Use Cases (super.ai)

- UBIAI: GLiNER Legal NER 2024 (ubiai.tools)

- RapidInnovation: AI for Contract Review (rapidinnovation.io)

- Springer: Legal Contracts Analysis 2024 (link.springer.com)

- AppInventiv: AI in Legal 2024 (appinventiv.com)

- B2BE: Document Management Challenges 2024 (b2be.com)

- Docsumo: IDP Future 2024 (docsumo.com)

- Accruent: Document Management Trends (accruent.com)

- Recordsforce: Digitization Trends 2024 (recordsforce.com)

- Docsumo: IDP Trends 2025 (docsumo.com)

- WhyMeridian: Document Management 2025 (whymeridian.com)
