Document Text Recognition in 2026: Accuracy, Risk and AI Reality

If you think document text recognition is a solved problem, think again. The world is drowning in data, but the real battle rages where analog meets digital—at the intersection of paper, pixel, and AI. Businesses crave instant insight, but the path from physical document to actionable intelligence is littered with failure, hype, and a dizzying array of technical landmines. In 2025, document text recognition isn’t just about digitizing; it’s about survival, compliance, and competitive edge. From OCR’s broken promises to AI’s seductive power and dangerous opacity, we’re peeling back the layers to reveal 11 brutal truths, hidden breakthroughs, and risks nobody’s talking about. Here’s what business leaders, technologists, and anyone who touches documents need to know—before your next workflow explodes or your most sensitive data leaks.

How did we get here? The messy evolution of document text recognition

The analog graveyard: when paper ruled everything

Picture the average corporate office in the late 20th century: the hum of fluorescent lights, the low buzz of printers, and desks lost beneath avalanches of contracts, memos, and invoices. Paper was power—and a liability. For decades, paper reigned supreme in business, law, healthcare, and government. Every process—approval, compliance, even innovation—ran through the slow grind of manual reading, copying, filing, and archiving. The risk was colossal: documents lost, misfiled, or destroyed meant legal exposure and missed opportunities.

Early attempts at digitization—think microfilm, early scanners, and clunky data entry terminals—offered a glimmer of hope but mostly delivered more pain. Repetitive strain injuries became an occupational hazard, and “going paperless” was a punchline more than a promise. According to industry historian Alex, “Back then, every document was a liability—and a secret weapon.” The analog era was a paradox: information was everywhere, but finding and using it was agony. Manual processes meant errors and omissions were the norm, not the exception, and stacks of paperwork often became graveyards for critical knowledge.

Rise and fall of legacy OCR: promises vs. reality

OCR (Optical Character Recognition) stormed onto the scene with grand ambitions. Suddenly, the dream of converting endless paper to searchable text seemed within reach. Big-budget initiatives sold visions of automated back offices, liberated knowledge, and “paperless” utopia. The reality? Far messier.

| Year | Milestone | Failure / Comeback |
|---|---|---|
| 1920s | Emanuel Goldberg's “Statistical Machine” | Only basic codes, limited adoption |
| 1974 | Ray Kurzweil’s omni-font OCR | High error rates on real-world docs |
| 1990s | Enterprise OCR software boom | Poor results on skewed or noisy images |
| 2000s | PDF and document management systems | Integration nightmares, data loss |
| 2010s | First AI-based OCR integrations | Exposed cracks: bias, low explainability |
| 2020s | Hybrid AI + rule-based models emerge | Legacy platforms struggle with complexity |

Table 1: Timeline of document text recognition evolution, showing milestones, key failures, and surprising comebacks. Source: Original analysis based on AIMultiple, 2025; Futran Solutions, 2025; verified technology histories.

Legacy OCR’s dirty secrets? In the real world, it fell apart on anything non-standard: skewed scans, poor handwriting, or exotic alphabets. The true cost was hidden in endless hours of correction, manual data validation, and expensive consultant “tuning” that rarely delivered as promised.

Hidden costs of legacy OCR nobody warned you about:

- Endless manual verification—sometimes doubling the total effort

- Frequent data loss or misinterpretation, especially with forms and tables

- Massive integration costs with outdated ERPs and document management systems

- Security gaps: unencrypted outputs and shadow IT workarounds

- Compliance nightmares from missed or corrupted records

- Long-term maintenance contracts locking you into obsolete tech

The first AI integrations in the 2010s held promise but exposed new cracks: bias in training data, black-box outputs, and the cold reality that no system could handle every edge case. As enterprises pushed for more automation, the limits of old-school OCR became painfully clear.

The AI takeover: why 2025 is different

Something fundamental shifted when large language models (LLMs) and advanced neural networks entered the game. No longer limited to pixel-level pattern matching, AI began to understand context, semantics, and structure, making sense of messy, real-world documents that would have shredded earlier systems. According to Base64.ai, multimodal AI now integrates text, images, and tables for seamless processing—a leap that changed the landscape.

One pivotal breakthrough: generative AI can now be trained on custom document types in minutes, not weeks, driving 35% compound annual growth in the intelligent document processing market (Futran Solutions, 2025). Suddenly, document text recognition wasn’t just about finding text—it was about extracting meaning, context, and actionable insight at scale. But with new power came new risks: bias, privacy, and explainability concerns became more urgent than ever, as organizations wrestled with the complexity and unpredictability of AI-driven decisions.

Document text recognition explained: beyond the buzzwords

What is document text recognition, really?

Strip away the hype, and document text recognition boils down to extracting useful, structured information from the unstructured chaos of real-world documents. It’s more than just “reading” text—it’s about translating images, forms, tables, and handwriting into data that can fuel automation, analytics, and compliance.

Key terms and what they really mean:

- OCR (Optical Character Recognition): Converts scanned documents or images into machine-encoded text. The classic tech behind digitizing receipts or contracts, but it struggles with complex layouts.

- ICR (Intelligent Character Recognition): OCR’s smarter cousin, designed for handwriting and variable print styles. Still far from perfect, especially on messy or cursive scripts.

- LLM (Large Language Model): Advanced AI trained on massive text datasets, capable of understanding context, semantics, and intent. A game-changer for deep document analysis.

- NLP (Natural Language Processing): AI discipline focused on understanding and generating human language. Powers tasks like summarization, classification, and sentiment analysis.

- Data annotation: The (often human) act of tagging or categorizing parts of documents so AI can learn. The unsung hero of any successful AI project.

- Semantic analysis: Goes beyond surface text to identify meaning, relationships, and intent within documents.

In the broader AI landscape, document text recognition lives at the intersection of computer vision, NLP, and workflow automation. It promises to bridge the analog-digital divide in business, but the gap between marketing claims and reality remains yawning. According to AIMultiple, even the best OCR solutions still struggle with non-oriented or complex scripts, and real-world performance often falls short of glossy sales decks.

How AI document analysis works (step-by-step)

Step-by-step guide to modern document text recognition:

- Image capture: Scan, photograph, or ingest a digital document in any format.

- Preprocessing: Clean up noise, correct skew, enhance contrast, and prepare for analysis.

- Text detection: Use computer vision to find regions of interest—blocks, lines, words.

- Character recognition: Apply OCR/ICR or AI models to decode text or handwriting.

- Layout analysis: Map tables, forms, headers, and footers for structure and context.

- Data extraction: Pull out key fields (names, dates, totals), often using LLM or hybrid AI.

- Semantic analysis: Understand relationships, intent, and compliance triggers.

- Insight extraction: Summarize, categorize, and feed outputs to downstream workflows.

Each step is a minefield: poor scans sabotage even the best AI, handwriting recognition is still hit-or-miss, and tables can confound basic models. Edge cases—like multilingual documents, rotated scans, or heavily annotated PDFs—often require alternative workflows such as human-in-the-loop review or custom model tuning.
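The steps above can be read as a chain of functions, each taking a document record and returning an enriched one. The following is a minimal Python sketch under that assumption. The `Document` class, the stage names, and the stub logic are all hypothetical placeholders, not any vendor's API; real stages would call an imaging library, an OCR engine, or a model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Document:
    """Hypothetical record passed between pipeline stages."""
    raw: str                                          # stand-in for image bytes
    text: str = ""
    fields: Dict[str, str] = field(default_factory=dict)
    trace: List[str] = field(default_factory=list)    # stages that ran, in order


Stage = Callable[[Document], Document]


def preprocess(doc: Document) -> Document:
    # Placeholder for denoising and deskewing: here we only trim whitespace.
    doc.raw = doc.raw.strip()
    return doc


def recognize(doc: Document) -> Document:
    # Placeholder for OCR/ICR: the "image" is already text in this sketch.
    doc.text = doc.raw
    return doc


def extract_fields(doc: Document) -> Document:
    # Placeholder for data extraction: split "key: value" lines into fields.
    for line in doc.text.splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            doc.fields[key.strip().lower()] = value.strip()
    return doc


def run_pipeline(doc: Document, stages: List[Stage]) -> Document:
    for stage in stages:
        doc = stage(doc)
        doc.trace.append(stage.__name__)  # record each stage for later auditing
    return doc


result = run_pipeline(
    Document(raw="  Name: Ada Lovelace\nTotal: 120.50  "),
    [preprocess, recognize, extract_fields],
)
print(result.fields)
print(result.trace)
```

Keeping each stage as a separate function makes it easier to swap one out (for example, a different recognition engine) or to insert a human review step without touching the rest of the chain.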

Why most organizations still get it wrong

The disconnect between vendor promises and real-world outcomes is brutal. Too many organizations treat document text recognition as “set and forget,” only to discover that automation amplifies mistakes just as easily as it multiplies speed. As compliance officer Morgan bluntly puts it: “If you think 99% accuracy is good enough, you’ve never seen a lawsuit.”

Common misconceptions abound: automation alone won’t fix broken processes; even state-of-the-art AI needs regular retraining and validation. The hidden challenge? Maintenance. Models degrade over time as business needs, document formats, and regulations evolve. The cost of “fixing it later” is always higher than getting it right from the start.
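One way to make "models degrade over time" measurable is to compare accuracy on recently human-reviewed samples against the accuracy recorded at deployment. The sketch below assumes you keep such reviewed samples; the function name `needs_retraining` and both default thresholds are illustrative assumptions, not recommended values.

```python
from statistics import mean
from typing import Sequence


def needs_retraining(
    baseline_accuracy: float,
    recent_correct: Sequence[bool],
    max_drop: float = 0.02,   # assumed tolerance; tune to your risk appetite
    min_samples: int = 50,    # assumed minimum before trusting the estimate
) -> bool:
    """Return True when recent reviewed accuracy falls too far below baseline."""
    if len(recent_correct) < min_samples:
        return False  # not enough human-reviewed samples to judge
    recent_accuracy = mean(1.0 if ok else 0.0 for ok in recent_correct)
    return (baseline_accuracy - recent_accuracy) > max_drop


# 100 reviewed fields, 90 of them correct, against a 0.95 baseline
recent = [True] * 90 + [False] * 10
print(needs_retraining(0.95, recent))
```

A check like this only works if human review continues after rollout; without fresh labeled samples there is nothing to compare against.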

The brutal truths: where document text recognition fails (and why)

Garbage in, garbage out: the myth of perfect accuracy

No matter how advanced the algorithm, document text recognition is only as good as its inputs. Even the best AI models falter with poor scans, skewed documents, or obscure layouts. According to GoTranscript, as of 2025, AI transcription achieves over 95% accuracy—under ideal conditions. But industry data paints a harsher picture in the wild:

| Industry | Avg. Accuracy (2024-2025) | Most Common Pitfall |
|---|---|---|
| Banking | 94% | Handwritten forms, stamps |
| Healthcare | 91% | Physician scrawl, abbreviations |
| Government | 89% | Multilingual, old records |
| Retail | 96% | Faded receipts, logos |

Table 2: Real-world document text recognition accuracy rates by industry. Source: Original analysis based on GoTranscript, 2025; Base64.ai, 2025; sector reports.

Take banking: a single digit misread on a wire transfer can cost millions. In healthcare, a misinterpreted prescription could mean the difference between life and death. Government archives? One mistranslated phrase can trigger legal chaos or privacy breaches. The brutal truth: tiny errors often have outsized consequences, and “good enough” is rarely good enough.
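Part of the reason small errors bite so hard is that headline accuracy is usually measured per character, while the business cares about whole fields. A rough Python sketch of both views follows: character error rate via Levenshtein edit distance, and the chance that an entire field is correct. The independence assumption in `field_accuracy` is a simplification, and the sample strings are made up.

```python
def edit_distance(reference: str, hypothesis: str) -> int:
    """Levenshtein distance: minimum insertions, deletions, substitutions."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            cost = 0 if ref_char == hyp_char else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution or match
            ))
        previous = current
    return previous[-1]


def character_error_rate(reference: str, hypothesis: str) -> float:
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(reference, hypothesis) / len(reference)


def field_accuracy(char_accuracy: float, field_length: int) -> float:
    # Assumes character errors are independent, which is a simplification.
    return char_accuracy ** field_length


# One misread digit in a 10-character amount
print(character_error_rate("1000000.00", "1000008.00"))  # 0.1
# 99% per-character accuracy on a 20-character field
print(round(field_accuracy(0.99, 20), 3))                # 0.818
```

Under these assumptions, a system that is 99% accurate per character gets a 20-character field entirely right only about 82% of the time, which is why field-level and document-level metrics matter more than the headline number.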

The black box problem: when you can’t trust the output

As AI takes over, explainability becomes a battleground. Black-box models spit out results with no clear audit trail, making it impossible to know why one document passed and another failed. The risks are existential: regulatory penalties, wrongful decisions, and destroyed trust.

"Sometimes you need to know why, not just what." — Sam, AI specialist (illustrative, based on industry sentiment and compliance reports)

Relying on opaque AI erodes confidence and exposes organizations to new kinds of risk. Auditing outputs—using human review, model documentation, and performance dashboards—is no longer optional. Trustworthy AI in document analysis means being able to retrace every decision, flag anomalies, and prove compliance under scrutiny.
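Retracing a decision requires that something was written down at the time it was made. As a hedged illustration, the sketch below builds one audit record per extracted field using only the Python standard library. The record layout, the `audit_record` name, and the example values are assumptions; a real system would also need tamper-evident storage and retention rules.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(document_bytes: bytes, model_version: str, field: str,
                 value: str, confidence: float,
                 reviewer: Optional[str] = None) -> dict:
    """Build one audit entry tying an output to its input and model version."""
    return {
        # Hash of the input so the exact source document can be matched later.
        "document_sha256": hashlib.sha256(document_bytes).hexdigest(),
        "model_version": model_version,
        "field": field,
        "value": value,
        "confidence": confidence,
        "reviewer": reviewer,  # None means no human has checked this value
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


record = audit_record(b"example scanned invoice bytes", "extractor-v3",
                      "invoice_total", "120.50", 0.97)
print(json.dumps(record, indent=2))
```

Storing the model version alongside each output is what lets you answer "which outputs were produced by the model we later found to be faulty?" during an audit.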

Bias, privacy, and other hidden landmines

Bias creeps in wherever training data is incomplete or unrepresentative—which, in the world of documents, is almost everywhere. Systems trained mostly on Western scripts routinely fail on non-Latin alphabets or regional forms. Privacy? The stakes are even higher. According to Papermark, 60% of enterprises are replacing VPNs with zero-trust access for secure document workflows, and biometric authentication is surging by 185%. Yet breaches happen, and compliance is a moving target.

Red flags to watch out for in document text recognition projects:

- Lack of transparency in model decision-making

- Insufficient bias testing on multilingual or diverse document sets

- Overreliance on automation without human oversight

- Weak encryption of document data at rest and in transit

- No clear protocols for auditing or correcting errors

- Poor documentation of training data sources and model updates

- Failure to align with evolving privacy regulations

The bottom line: document text recognition isn’t just a technical challenge—it’s a minefield of ethical, legal, and operational risks.
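Bias testing on diverse document sets can start very simply: break accuracy down by group instead of reporting one overall number. The sketch below assumes each evaluated document carries a group tag (here, script) and a correct/incorrect flag from human review. The function names, the 5-point gap threshold, and the sample counts are all illustrative.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def accuracy_by_group(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute accuracy separately for each group tag."""
    totals: Dict[str, int] = defaultdict(int)
    correct: Dict[str, int] = defaultdict(int)
    for group, is_correct in results:
        totals[group] += 1
        correct[group] += int(is_correct)
    return {group: correct[group] / totals[group] for group in totals}


def flag_gaps(per_group: Dict[str, float], max_gap: float = 0.05) -> List[str]:
    """List groups trailing the best-performing group by more than max_gap."""
    best = max(per_group.values())
    return sorted(g for g, acc in per_group.items() if best - acc > max_gap)


# Made-up evaluation results: (script, was the extraction correct?)
sample = (
    [("latin", True)] * 19 + [("latin", False)] * 1
    + [("arabic", True)] * 8 + [("arabic", False)] * 2
    + [("cyrillic", True)] * 19 + [("cyrillic", False)] * 1
)
per_group = accuracy_by_group(sample)
print(per_group)
print(flag_gaps(per_group))
```

An overall accuracy figure for this sample would look healthy while hiding the weaker group, which is the pattern the red-flag list above warns about.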

Breakthroughs and bold moves: what’s actually working in 2025

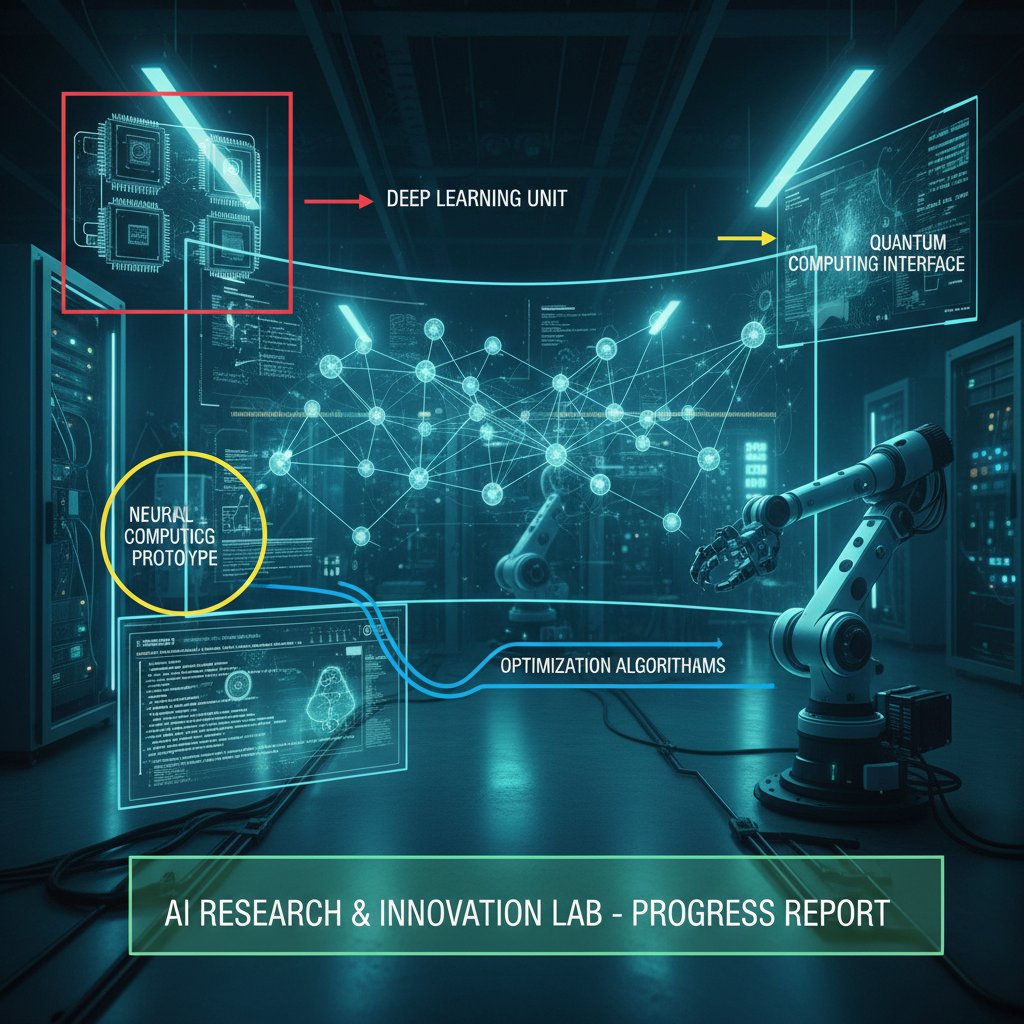

LLMs and hybrid approaches: the new state of the art

Large language models (LLMs) have rewritten the rules for document text recognition. By combining deep contextual understanding with robust pattern matching, LLMs can handle everything from legalese to medical shorthand. Hybrid approaches—mixing rule-based validation with AI-driven extraction—now deliver the best results in complex settings.
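To make the hybrid idea concrete, here is a minimal sketch of the rule-based half: deterministic checks applied to fields that a model has already extracted. The field names, the `INV-` number pattern, and the `validate_invoice` function are hypothetical, chosen only to illustrate the pattern; the extraction step itself is assumed to happen elsewhere.

```python
import re
from datetime import datetime
from decimal import Decimal, InvalidOperation
from typing import Dict, List


def validate_invoice(fields: Dict[str, str]) -> List[str]:
    """Return rule violations for model-extracted fields (empty list = pass)."""
    problems: List[str] = []

    # Rule 1: the date must be a real calendar date in ISO format.
    try:
        datetime.strptime(fields.get("date", ""), "%Y-%m-%d")
    except ValueError:
        problems.append("date is not a valid YYYY-MM-DD date")

    # Rule 2: the invoice number follows an assumed house pattern.
    if not re.fullmatch(r"INV-\d{6}", fields.get("invoice_number", "")):
        problems.append("invoice_number does not match INV-######")

    # Rule 3: amounts must be numeric and internally consistent.
    try:
        subtotal = Decimal(fields["subtotal"])
        tax = Decimal(fields["tax"])
        total = Decimal(fields["total"])
        if subtotal + tax != total:
            problems.append("subtotal + tax does not equal total")
    except (KeyError, InvalidOperation):
        problems.append("amount fields missing or not numeric")

    return problems


# Made-up model output with an impossible date and a misread total
extracted = {
    "date": "2025-02-30",
    "invoice_number": "INV-004217",
    "subtotal": "100.00",
    "tax": "20.00",
    "total": "128.00",
}
print(validate_invoice(extracted))
```

Rules like these are cheap, fully explainable, and catch exactly the kind of single-character misread that a model may report with high confidence.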

In the insurance sector, hybrid models flagged anomalies in claims forms that pure OCR missed, reducing fraud by 30%. Logistics companies now use multimodal AI to process invoices in dozens of languages, slashing turnaround times by 50%. In education, automated analysis of handwritten exam papers hit 93% accuracy—up from 78% just three years ago.

| System Type | Pros | Cons | Best Use Cases | Outcomes |
|---|---|---|---|---|
| Legacy OCR | Fast on clean, simple docs | Fails on noise, handwriting | Standard forms | Low maintenance |
| Pure AI | Handles complex, noisy inputs | Black-box, high compute cost | Multilingual, messy docs | Needs regular retraining |
| Hybrid AI + Rules | Balance of accuracy + explainability | Integration complexity | Compliance-heavy sectors | Best-in-class results |

Table 3: Feature matrix comparing legacy OCR, pure AI, and hybrid systems for document text recognition. Source: Original analysis based on industry case studies and Base64.ai, 2025.

Case studies: real-world wins (and the ugly lessons behind them)

A global bank spent millions on legacy OCR—only to see error rates spike when new document formats appeared. After switching to a hybrid AI approach, post-processing errors dropped by 70%, but only after a bruising six months of manual corrections and system tuning.

A major healthcare provider tackled handwritten forms with deep learning APIs, achieving 92% accuracy on over 10 million documents. The ugly truth? Initial models misread 15% of critical fields, requiring a “human-in-the-loop” approach before deployment.

In the public sector, a government agency digitizing archives for accessibility finally cracked the code with LLMs trained on historical scripts. The breakthrough: context-aware AI that could decipher faded, archaic handwriting, enabling full-text search for the first time.

Each win came at a price: persistent challenges in data annotation, the need for rigorous auditing, and the painful lesson that no system is ever “done.”

TextWall.ai and the new wave of advanced processors

TextWall.ai stands as a prime example of where the field is headed. By leveraging cutting-edge AI document analysis, platforms like textwall.ai transform mountains of text into clear, actionable insight—in seconds, not hours. Unlike previous generations, these tools don’t just “read” documents; they summarize, categorize, and distill meaning, making massive, messy information manageable.

The difference? Modern platforms focus on actionable outcomes, not just raw accuracy. They integrate seamlessly into workflows, support complex, multilingual documents, and adapt as new challenges emerge. To evaluate any advanced document analysis solution, ask: Does it handle your real documents—not just ideal samples? Is the output explainable? Can you audit decisions? And, most critically, how fast and safely can you extract insight at scale?

How to actually win: practical implementation for 2025 and beyond

Readiness checklist: is your organization set up for success?

10-point self-assessment for document text recognition readiness:

- Have you audited the types and formats of documents in your workflow?

- Is your existing infrastructure compatible with modern AI/LLM tools?

- Do you have a robust data privacy and compliance framework?

- Are domain experts involved in model training and validation?

- Have you budgeted for ongoing model maintenance and retraining?

- Can you identify and remediate bias in your datasets?

- Is there a plan for integrating human review with automation?

- Are performance metrics aligned with real business goals?

- Do you have protocols for auditing and explaining AI outputs?

- Is continuous improvement built into your workflow?

Scoring: 8–10 “yes” answers mean you’re well-prepared; 5–7 suggests moderate risk; under 5, you’re likely heading for trouble. Often-overlooked prep: mapping document diversity, planning for edge cases, and ensuring technical capacity matches ambition. Aligning business and technical stakeholders from the start is everything.
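For teams that want to track the self-assessment over time, the scoring bands can be encoded directly. This is a trivial sketch; the function name `readiness_band` and the band labels are paraphrases of the scoring text above, not an established scale.

```python
from typing import Sequence


def readiness_band(answers: Sequence[bool]) -> str:
    """Map the ten yes/no checklist answers to a readiness band."""
    if len(answers) != 10:
        raise ValueError("expected exactly 10 answers")
    yes_count = sum(answers)
    if yes_count >= 8:
        return "well-prepared"
    if yes_count >= 5:
        return "moderate risk"
    return "likely heading for trouble"


print(readiness_band([True] * 6 + [False] * 4))
```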

Step-by-step: deploying document text recognition the right way

Priority checklist for rolling out document text recognition:

- Audit and categorize all relevant document types.

- Define measurable business outcomes and risk tolerances.

- Select candidate vendors or build tools, focusing on explainability.

- Pilot with a controlled dataset, including edge cases.

- Integrate human-in-the-loop validation for initial outputs.

- Measure accuracy, speed, and ROI against real business needs.

- Plan for regular retraining and performance monitoring.

- Roll out incrementally, scaling based on early success.

- Document every decision, update, and correction for auditability.

- Build a feedback loop for continuous learning and improvement.
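Human-in-the-loop validation is often implemented as confidence-based routing: values the model is sure about pass through, everything else goes to a reviewer. The sketch below assumes the model reports a confidence score per field and that the score is reasonably calibrated. The thresholds, the `CRITICAL_FIELDS` set, and the class and function names are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # model-reported score in [0, 1]; assumed calibrated


# Fields where an error is costly get a stricter threshold (assumed list).
CRITICAL_FIELDS = {"amount", "account_number"}


def route(fields: List[ExtractedField], threshold: float = 0.90,
          critical_threshold: float = 0.98
          ) -> Tuple[List[ExtractedField], List[ExtractedField]]:
    """Split fields into (auto-accepted, needs human review)."""
    auto: List[ExtractedField] = []
    review: List[ExtractedField] = []
    for f in fields:
        limit = critical_threshold if f.name in CRITICAL_FIELDS else threshold
        (auto if f.confidence >= limit else review).append(f)
    return auto, review


auto, review = route([
    ExtractedField("vendor", "Acme Ltd", 0.93),
    ExtractedField("amount", "120.50", 0.95),   # critical, below 0.98
    ExtractedField("date", "2025-03-01", 0.80),
])
print([f.name for f in auto])
print([f.name for f in review])
```

During a pilot, the thresholds can start strict and be relaxed as measured accuracy on reviewed samples justifies it.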

Three approaches to rollout:

- Small pilot: Start with a single department or document type; optimize before scaling.

- Rapid scale: Go enterprise-wide fast, but risk bigger, costlier mistakes.

- Hybrid: Combine both, starting small but architecting for growth.

Training staff and building feedback into every stage is non-negotiable. The best teams foster cross-disciplinary collaboration between IT, compliance, and “front lines.”

Avoiding disaster: common mistakes (and how to dodge them)

Five costly mistakes organizations keep making:

- Underestimating the diversity and messiness of real-world documents.

- Over-automating without robust human review.

- Ignoring ongoing model drift and retraining needs.

- Failing to document processes for audits and compliance.

- Treating AI outputs as “truth” without validation.

Setting realistic expectations is crucial: document text recognition is about reducing manual pain, not achieving perfection.

Unconventional uses for document text recognition:

- Automated extraction of regulatory updates from government bulletins

- Detecting plagiarism in contract clauses across versions

- Mining medical research articles for clinical trial recruitment

- Parsing handwritten feedback from customer surveys

- Digitizing and translating historical manuscripts for research

- Real-time monitoring of supply chain records for compliance triggers

The secret? Continuous improvement, relentless feedback, and knowing when to pivot strategy as workflows—and risks—change.

Industry deep dives: document text recognition in the wild

Banking and finance: where every error costs a fortune

Banks face an unforgiving environment: compliance demands are sky-high, and accuracy isn’t just a nice-to-have—it’s existential. Regulatory fines, fraud detection, and customer trust all hinge on the reliability of document text recognition. A recent deployment in a major bank cut manual verification costs by 65%, but only after an initial spike in false positives. According to sector data, AI-driven recognition flagged 20% more fraud attempts than manual review—yet also surfaced new risks around data privacy.

Manual verification isn’t dead, but it’s become the last line of defense. The hidden costs? Time, morale, and the risk of burnout. AI is solving old problems—and, inevitably, creating new ones.

Healthcare: the war on handwritten chaos

Few sectors are as document-heavy and error-prone as healthcare. Vast volumes of handwritten records persist, creating a formidable barrier to digital transformation. A 2024 hospital digitization push processed over 5 million forms—reducing administrative workload by 50%, but with a sobering 9% error rate on illegible handwriting.

Three approaches have emerged:

- Deep learning APIs: High accuracy, slow retraining cycles.

- Hybrid human-AI review: Costly but safest for critical fields.

- Standardized e-forms: Most reliable, but hard to enforce.

Each comes with trade-offs. Patient privacy and ethical dilemmas loom large; even the best systems must prioritize consent, data minimization, and transparency.

Government and public sector: accessibility, transparency, and trust

Government digitization lags for a reason: legacy archives, multilingual records, and political pressure for transparency. Recent breakthroughs—context-aware AI for historical documents—are opening up access for researchers and citizens alike. But the tension between transparency and privacy is real; public records must balance usability with rigorous redaction and security.

Controversies and open debates: what the industry won’t tell you

Is perfect accuracy a myth (and does it even matter)?

The debate rages: is 100% accuracy possible—or even necessary? Some experts argue that “good enough” accuracy delivers massive ROI if paired with strong human review. Others point out that in compliance-heavy sectors, even a single misread digit can be catastrophic.

Expert opinions:

- Dr. Smith, AI researcher: “Chasing 100% is a fool’s errand; the real value is in smart exception handling.”

- Jamie Lee, compliance auditor: “For regulated industries, 99% isn’t close to enough.”

- River Chen, tech lead: “Speed, cost, and accuracy are a three-way trade—you get two, never all three.”

| Sector | Typical Accuracy | Business Outcome |
|---|---|---|
| Banking | 94–99% | Cost savings, but compliance risk |
| Healthcare | 90–96% | Faster intake, but human verification needed |
| Government | 88–95% | Improved access, but privacy hurdles |
| Retail | 95–98% | Inventory accuracy, low risk |

Table 4: Accuracy benchmarks versus business outcomes in document text recognition, 2025. Source: Original analysis based on GoTranscript, 2025; Base64.ai.

The hidden environmental cost of AI-powered document analysis

AI’s power doesn’t come free. Large-scale document text recognition chews through massive compute and cloud resources, raising real concerns over carbon footprint. According to industry studies, running LLM-based document analysis at scale can draw as much energy as a small data center, pushing organizations to weigh on-premise versus cloud-based solutions carefully.

On-premise offers more control but brings higher up-front costs and maintenance headaches. Cloud-based solutions scale more easily but come with less transparency over data location and energy use. Green AI is gaining traction, with vendors pushing for renewable-powered operations and more efficient model architectures, but the gap remains.

Who owns your data? The privacy battle behind the curtain

Ownership of digitized document data is a legal gray zone. In recent years, disputes have erupted over academic archives, healthcare records, and even government databases.

Three real-world disputes:

- Academic researchers contesting publisher claims over digitized dissertations.

- Hospitals and SaaS vendors fighting over access to anonymized patient data.

- Government agencies clashing with contractors on proprietary metadata.

Best practices demand explicit agreements, robust encryption, and regular audits. The privacy landscape is shifting underfoot—in two to three years, regulations and public attitudes could upend what’s now considered “standard practice.”

Adjacent frontiers: what else you need to know about document intelligence

Data labeling and annotation: the unsung heroes

Quality data labeling is the backbone of any effective document text recognition system. Poor annotation sabotages even the most sophisticated models. Manual, semi-automatic, and crowdsourced strategies each have their place—but require careful oversight.

Annotation errors can propagate through entire pipelines, leading to systemic bias or recurring extraction failures. The best teams invest in rigorous training, clear guidelines, and ongoing quality control.
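One common quality-control signal for annotation is inter-annotator agreement: have two people label the same documents and measure how often they agree beyond chance. The sketch below computes Cohen's kappa in plain Python. The label names and sample data are invented, and what counts as an acceptable score depends on the task.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Agreement between two annotators, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and equal length")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if each annotator labeled at random with their own label rates.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_a = ["total"] * 4 + ["date"] * 3 + ["name"] * 3
annotator_b = ["total"] * 3 + ["date"] * 3 + ["name"] * 4
print(round(cohens_kappa(annotator_a, annotator_b), 3))
```

Low agreement usually points to ambiguous guidelines rather than careless annotators, so it is worth checking before any model training begins.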

Accessibility revolution: making documents readable for all

Document recognition technology is quietly powering an accessibility revolution. For people with visual impairments, advanced recognition tools enable access to government forms, contracts, and books that were once off-limits. Three technologies driving this shift:

- Real-time screen readers leveraging AI-generated summaries

- Automated captioning for scanned images and PDFs

- Multilingual transcription for global accessibility

Social and legal pressures—from activists to new regulations—are accelerating inclusive digitization. The business payoff? Broader market reach, improved brand reputation, and risk mitigation.

The future of document text recognition: predictions and wildcards

Expert forecasts suggest document intelligence will further converge with related AI fields, from voice transcription to video analysis. But wildcards remain: breakthrough tech, regulatory upheaval, or “AI fatigue” that could disrupt adoption. The only certainty is that organizations must continue to audit, iterate, and future-proof their document workflows—or risk being left behind.

Myths, mistakes, and what nobody tells you about document text recognition

Top 7 myths debunked: what the sales decks won’t say

7 persistent myths about document text recognition:

- “OCR is solved” — In reality, complexity keeps rising.

- “AI replaces humans” — The best systems are hybrid, not pure automation.

- “You can set and forget” — Models degrade; maintenance is constant.

- “100% accuracy is possible” — Not with real-world data.

- “Any document format works out of the box” — Edge cases abound.

- “Cheaper means better ROI” — False savings breed hidden costs.

- “Compliance is automatic” — Only with active oversight and auditability.

These myths persist because they’re convenient, not because they’re true. As industry analyst Taylor puts it: “The truth is always messier—and more interesting.”

The only way to separate fact from fiction: demand transparency, auditability, and real-world proof in every vendor claim.

Mistakes even the pros keep making

Even expert teams stumble—often by skipping fundamentals or failing to plan for drift. Common advanced mistakes:

- Overfitting models to pilot datasets, missing real document diversity

- Ignoring evolving compliance rules as systems age

- Under-resourcing human review, especially during rollout

- Confusing “accuracy” with “impact” on business outcomes

Timeline of a document text recognition project gone wrong:

- Overpromise on capabilities (should have scoped document types)

- Rush to integrate (should have piloted first)

- Skip human review (should have built-in validation)

- Ignore retraining (should have scheduled updates)

- Fail to document changes (should have maintained audit logs)

- Miss compliance changes (should have dedicated compliance checks)

- Crisis response after system failure (should have planned contingency)

Lessons learned? Rigorous prep, continuous monitoring, and humility beat hype every time.

Conclusion: the next move is yours

The most important lesson: document text recognition is not a panacea—it’s a constantly evolving discipline, riddled with both peril and potential. The cultural and technical shift underway is profound; success no longer hinges on picking the “right” technology, but on building resilient, auditable, and adaptive workflows that combine AI power with human judgment.

For leaders, technologists, and end users, the roadmap is clear: audit your inputs, demand transparency, invest in quality data labeling, and never treat automation as “set and forget.” The difference between thriving and failing in 2025’s document landscape will come down to rigor, not rhetoric.

So, after learning these brutal truths, what will you do differently in your next document project?

Sources

References cited in this article

- AIMultiple: OCR Technology (research.aimultiple.com)

- Papermark: Document Security (papermark.com)

- Base64.ai: Breakthroughs (base64.ai)

- GoTranscript: AI Transcription (gotranscript.com)

- PairSoft: History of OCR (pairsoft.com)

- Docsumo: OCR Evolution (docsumo.com)

- UC Merced: Text Mining Terms (libguides.ucmerced.edu)

- Send AI: OCR Explained (send.ai)

- GDPicture: What is Document Recognition (gdpicture.com)

- ArtsylTech: Text Recognition Applications (artsyltech.com)

- AskDocs: How AI Document Analysis Works (askdocs.com)

- Appian: AI Document Analysis (appian.com)

- DocDigitizer: OCR Challenges (docdigitizer.com)

- Conexiom: OCR Problems (conexiom.com)

- DocuClipper: OCR Limitations (docuclipper.com)

- Adobe: OCR Not Recognizing Text (adobe.com)

- Rossum: OCR Accuracy Myths (rossum.ai)

- DocuClipper: OCR Accuracy (docuclipper.com)

- Marketing Scoop: Handwriting Recognition (marketingscoop.com)

- Pixno: LLMs & OCR in 2025 (photes.io)

- Cradl.ai: LLM-OCR Hybrid (cradl.ai)

- Vellum.ai: LLMs vs OCRs (vellum.ai)

- Papers with Code: HTR Benchmarks (paperswithcode.com)

- ICDAR 2024/2025 Proceedings (icdar2024.net)

- AIMultiple: OCR Research (research.aimultiple.com)

- Medium: OCR Best Practices 2025 (medium.com)

- Whisperit: Document Management Best Practices (whisperit.ai)

- Mindee: OCR API Guide (mindee.com)

- ScaleHub: IDP Guide 2025 (scalehub.com)

- InData Labs: NLP & OCR Challenges (indatalabs.com)

- Docsumo: IDP Market Report 2025 (docsumo.com)

- Google Cloud: Industry Use Cases (cloud.google.com)

- Thoughtful: Industries Benefiting from OCR (thoughtful.ai)
