Document Text Parsing When It Really Matters—Law, Finance, Health

Document text parsing is the quiet power behind the modern information age—a force so embedded in daily business and research that its failures and revolutions often go unnoticed. Forget the sanitized marketing hype: parsing isn’t just a technical side note. It’s the make-or-break layer between chaos and clarity, between automation and costly human error. Today, the reality is raw and relentless. Invoices are misread, contracts are misunderstood, and sensitive data slips through cracks, often with real-world consequences. AI-driven document analysis, led by players like TextWall.ai, promises to transform this landscape—extracting meaning from the most gnarled piles of text, whether in finance, law, healthcare, or research. But with every bold breakthrough come brutal truths: no parser is infallible, regulators circle with growing scrutiny, and humans are still needed when the stakes are highest. This is your deep dive into what’s real, what’s broken, and what’s next in document text parsing. Buckle up—because data clarity has new rules.

Why document text parsing is the hidden engine of the information age

The invisible crisis: drowning in unstructured data

Unstructured data is the world’s fastest-growing digital landfill. From sprawling PDF reports to cryptic emails, handwritten forms, and multilayered contracts, the information glut is staggering. According to Intelligent Document Processing News (2024), over 80% of enterprise data is unstructured, meaning traditional systems can’t digest, analyze, or act upon it without serious human intervention. This invisible crisis is not just a nuisance; it’s a brake on progress, stalling automation, analytics, and compliance.

Organizations across sectors—from multinational banks to regional hospitals—now fight daily battles against document overload. What’s at stake isn’t just operational efficiency. When critical patient notes, financial statements, or legal contracts remain trapped in unreadable formats, the result is lost revenue, regulatory risk, and missed opportunities. Parsing, in this context, is not just a technical function; it’s an existential necessity for anyone who wants to stay competitive.

| Data Type | Structured (%) | Unstructured (%) | Example Documents |
|---|---|---|---|
| Enterprise Emails | 10 | 90 | Email chains, attachments |
| Contracts & Legal Docs | 5 | 95 | PDFs, scanned agreements |
| Medical Records | 25 | 75 | Handwritten notes, forms |
| Financial Reports | 40 | 60 | Spreadsheets, invoices |
| Social Media Data | 2 | 98 | Tweets, posts, comments |

Table 1: Distribution of structured vs. unstructured data in typical organizations. Source: Original analysis based on Intelligent Document Processing News, 2024, Rossum, 2025.

From library archives to machine intelligence: a brief history

Document parsing didn’t leap from zero to AI overnight. Early systems relied on brittle pattern matching and keyword searches—tools that crumbled when faced with a malformed invoice or a scanned signature. The 1980s and 90s saw the rise of Optical Character Recognition (OCR), finally making printed text machine-readable. But OCR’s limitations became quickly obvious: it stumbled on poor-quality scans, handwriting, and anything that deviated from the norm.

By the mid-2000s, advances in Natural Language Processing (NLP) allowed for more nuanced text extraction: identifying entities, relationships, and context within documents. Fast forward to 2023–2024, and AI models like GPT-4, LayoutLMv3, and Mixtral have redefined what's possible. The strongest of these systems don't just "see" words. Layout-aware and multimodal models interpret layout, context, images, and even embedded tables with an accuracy that would have been science fiction a decade ago. Text-only LLMs such as Mixtral add deep contextual reading of the text once it has been extracted.

| Era | Key Technology | Major Limitation | Notable Use Case |
|---|---|---|---|
| Pre-1980s | Manual extraction | Human error, slow | Library archives |
| 1980s–1990s | OCR | Poor on handwriting/layout | Tax forms, printed invoices |
| 2000s | Rule-based NLP | Rigid, low context | Simple contract parsing |
| 2020s | AI/LLMs, Vision+Text | Edge cases, privacy, bias | Complex, multilingual parsing |

Table 2: Evolution of document parsing technologies. Source: Original analysis based on arXiv:2410.21169, Rossum, 2025.

- Rule-based systems were the norm for three decades, struggling with anything but the most formulaic documents.

- The shift to AI-enabled parsing has created a leap in accuracy, but also surfaced new risks—especially around compliance and data privacy.

- Current breakthroughs often rely on hybrid approaches, mixing neural models with retrieval techniques and human-in-the-loop review.

The stakes: what happens when parsing fails

When document text parsing breaks, the damage can be both immediate and far-reaching. Inaccurate data extraction from a single contract can trigger costly legal battles; a misread digit in a financial statement can spur regulatory fines or destroy business trust. According to Rossum’s 2025 report, 58% of finance executives still rely on Excel for critical document workflows, citing fear of automation errors and compliance headaches.

"No tool is universally reliable—misclassified financial data or compliance breaches still happen, and the consequences are real. We see multimillion-dollar contracts lost simply because a parser missed a key clause." — Industry Expert, Rossum 2025 Automation Trends

The stakes are even higher when regulatory scrutiny is involved. In 2023 and 2024, several high-profile companies faced fines for mishandling personal data due to parsing errors. The rise of GDPR-style regulations worldwide means parsing workflows are now compliance-critical. Organizations can no longer afford to treat parsing as an afterthought.

Breaking down the black box: what really happens during document text parsing

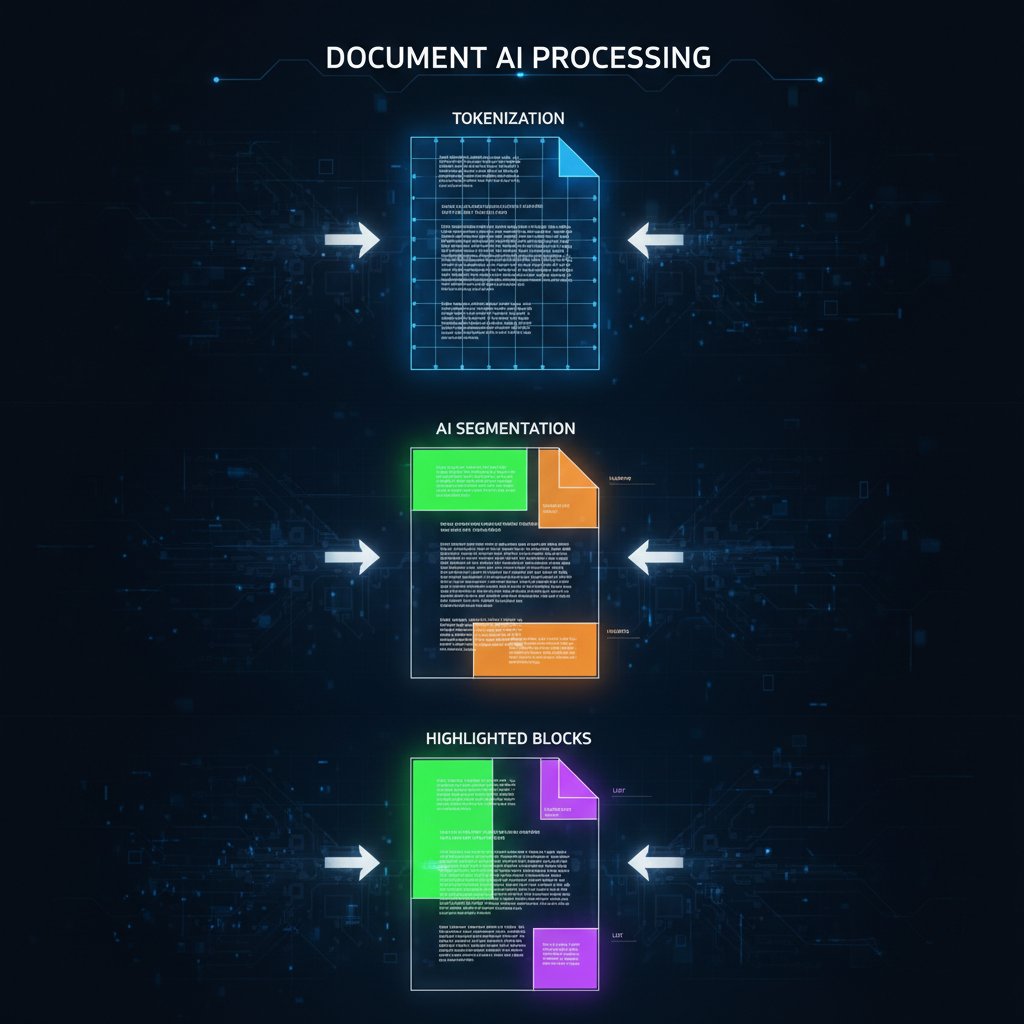

Tokenization, segmentation, and why the basics still trip us up

At its core, document text parsing is about slicing the chaos into order. The process begins with tokenization—splitting streams of text into words, phrases, or other meaningful units. Next comes segmentation: determining where sentences, paragraphs, sections, or tables begin and end. Despite decades of research, these “basic” steps are still surprisingly error-prone, especially with unstructured inputs.

Definition List:

- Tokenization: The process of breaking text into smaller pieces (tokens), such as words, phrases, or symbols. Required for all downstream analysis.

- Segmentation: Dividing text into logical blocks, such as sentences, paragraphs, or sections; crucial for context and structure.

- Layout analysis: Interpreting spatial arrangement—columns, tables, images—within a document, especially in PDFs or scanned files.

Problems multiply with non-standard layouts: think of a scientific paper jammed with sidebars, footnotes, embedded images, or handwritten annotations. Even best-in-class systems can misfire, splitting paragraphs incorrectly or missing a vital table. In such cases, downstream analytics and automation become unreliable—garbage in, garbage out.
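
The following is a minimal sketch of tokenization and segmentation using only Python's built-in `re` module. It is a naive, rule-based illustration, not how any production parser works, and the function names `tokenize` and `segment_sentences` are hypothetical. Even this tiny example reproduces a classic segmentation error: it splits the sentence after the abbreviation "Dr.".

```python
import re

# Naive tokenizer (illustrative): a run of word characters that may contain
# internal '.', ',', apostrophes or hyphens (so "1,204.50" stays whole),
# or else a single punctuation mark.
TOKEN_RE = re.compile(r"\w+(?:[.,'-]\w+)*|[^\w\s]")

def tokenize(text: str) -> list[str]:
    """Split text into word/number tokens and single punctuation tokens."""
    return TOKEN_RE.findall(text)

def segment_sentences(text: str) -> list[str]:
    """Split after '.', '!' or '?' when followed by whitespace.

    Deliberately naive: it also splits after abbreviations such as "Dr.",
    which is a classic segmentation error.
    """
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

sample = "Invoice total: $1,204.50. Approved by Dr. Lee on 3 May."
print(tokenize(sample))
print(segment_sentences(sample))
# ['Invoice total: $1,204.50.', 'Approved by Dr.', 'Lee on 3 May.']
```

A statistical or neural segmenter learns from context that "Dr." rarely ends a sentence. The hand-written rule cannot.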

NLP, AI, and the myth of the 'magic parser'

The myth of the “magic parser”—a single tool that effortlessly extracts every relevant thread from any document—is pervasive and dangerous. While NLP and AI have raised the bar, they’re not miracle workers. Every model, whether transformer-based or multimodal, has blind spots: ambiguous language, rare layouts, and context-dependent terms can still trip up even the most advanced systems.

"AI-powered parsing, like GPT-4 or LayoutLMv3, has dramatically improved accuracy, but no system is bulletproof—especially with edge cases or mixed-media files." — arXiv:2410.21169, 2024

- AI models excel at context-rich language but can falter on math-heavy tables or charts.

- Human-in-the-loop workflows are still required for high-stakes documents or regulatory reviews.

- The notion of “fully automated document parsing” ignores the messy, unpredictable nature of real-world data.

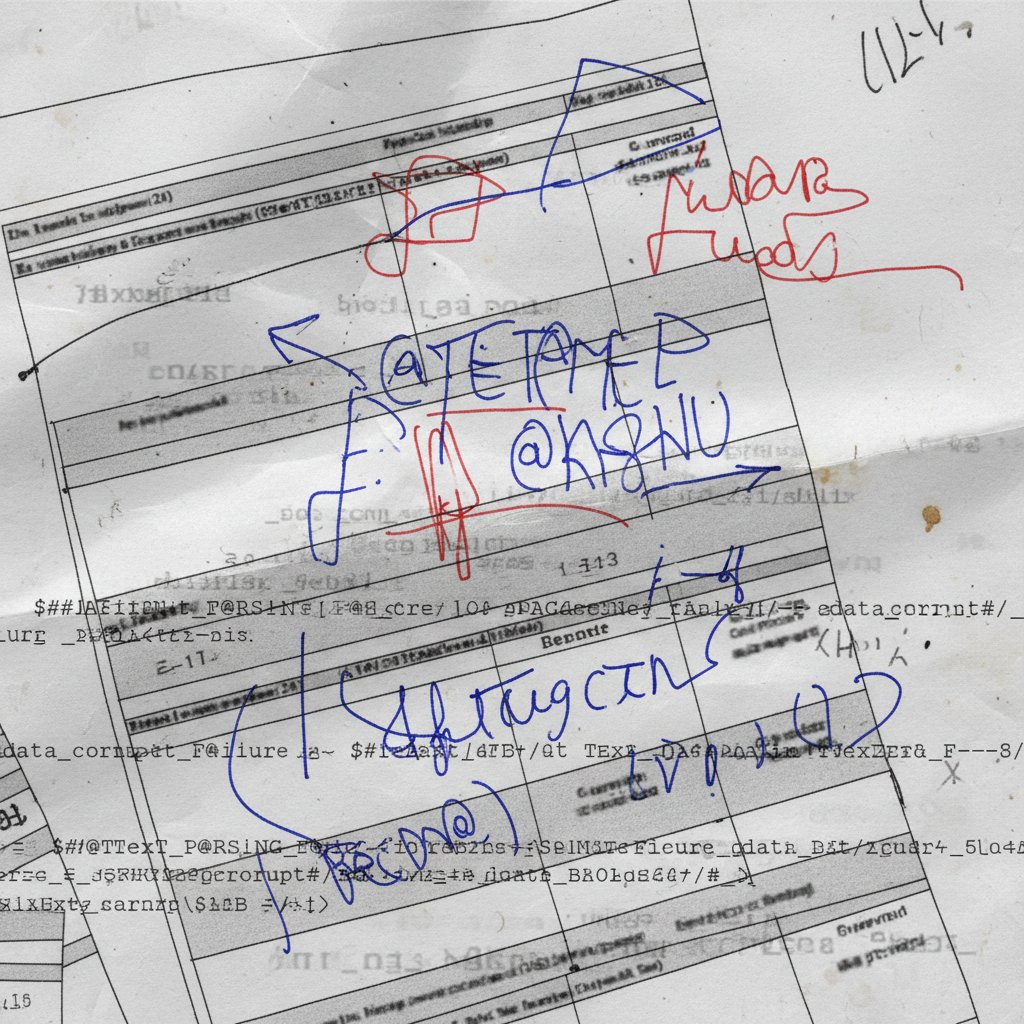

Common parsing pitfalls: ambiguity, context, and chaos

Parsing fails for many reasons, but some pitfalls are especially common:

- Ambiguity: Words or phrases that depend on context (e.g., “May” as a month or a verb).

- Layout chaos: Unusual or inconsistent formatting, variable column widths, and handwritten annotations.

- Data bias: Models trained on narrow datasets may miss cultural or domain-specific cues.

- Inconsistent terminology: The same concept labeled differently across documents or industries.

The upshot? Even with multimillion-dollar AI systems, parsing remains a high-stakes, error-prone battlefield.
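
A toy example shows why ambiguity defeats simple rules. The hypothetical function below labels "May" as a month only when a day number or a year sits right next to it, and as a verb otherwise. It handles the cases it was written for and fails on the very next phrasing. That brittleness is what pushed the field toward context-aware models.

```python
import re

# Hypothetical rule: "May" counts as a month only when a day number or a
# year sits directly next to it; any other "may" is treated as a verb.
MONTH_CONTEXT = re.compile(r"\b\d{1,2}\s+May\b|\bMay\s+\d{1,4}\b")
MAY_WORD = re.compile(r"\bmay\b", re.IGNORECASE)

def classify_may(sentence: str) -> str:
    """Label the word 'may' in a sentence as 'month', 'verb' or 'absent'."""
    if not MAY_WORD.search(sentence):
        return "absent"
    return "month" if MONTH_CONTEXT.search(sentence) else "verb"

print(classify_may("Payment is due 15 May 2024."))      # month
print(classify_may("The tenant may terminate early."))  # verb
print(classify_may("The deadline falls in May."))       # verb (wrong!)
```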

Real-world chaos: case studies from the parsing trenches

Legal landmines: parsing contracts, discovery, and compliance

Legal documents are a perfect storm for parsing errors—dense text, archaic language, and subtle clauses buried in footnotes. When document text parsing fails in this domain, entire compliance programs can unravel.

- Missed clauses: A parser skips a “force majeure” clause, so reviewers never see the obligations and protections it contains, and the company fails to invoke or honor them.

- Redaction errors: Sensitive data is left exposed due to faulty entity recognition.

- Discovery mishaps: In litigation, an automated tool misses critical evidence hidden in email attachments.
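
One inexpensive guard against missed clauses is a post-extraction completeness check. Confirm that every clause your review playbook requires actually appears, and escalate the document when one does not. The Python sketch below is hypothetical. The clause names and regular expressions are illustrative assumptions, and a real system would use a trained clause classifier rather than keywords. The check's value is that a missing clause becomes an explicit flag instead of a silent gap.

```python
import re

# Illustrative playbook (an assumption): clause name -> pattern signalling it.
REQUIRED_CLAUSES = {
    "force_majeure": r"force\s+majeure",
    "governing_law": r"governing\s+law|governed\s+by\s+the\s+laws?\s+of",
    "limitation_of_liability": r"limitation\s+of\s+liability",
}

def missing_clauses(contract_text: str) -> list[str]:
    """Return the names of required clauses not found in the text."""
    return [
        name
        for name, pattern in REQUIRED_CLAUSES.items()
        if not re.search(pattern, contract_text, re.IGNORECASE)
    ]

text = """12. Governing Law. This Agreement is governed by the laws of Delaware.
13. Limitation of Liability. Neither party is liable for indirect damages."""

gaps = missing_clauses(text)
if gaps:
    print("Escalate to reviewer; missing:", gaps)  # ['force_majeure']
```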

"In legal workflows, human-in-the-loop review is essential. Automation boosts speed, but only paired with rigorous oversight can it ensure compliance." — B2BE, 2024

Finance on the edge: when numbers lie and automation breaks

Finance leaders crave automation, but parsing errors can mean disaster. According to Rossum (2025), 58% of finance leaders still rely on Excel because they don’t trust AI-based parsing for critical workflows—a damning indictment of current tech.

| Failure Type | Typical Impact | Notable Example |
|---|---|---|
| Misclassified Data | Erroneous reporting, fines | Wrong account credited |
| Table Parsing Error | Lost revenue, reconciliation | Invoices with split totals missed |
| Handwriting Issues | Manual rework, slow processing | Scanned checks unreadable |

Table 3: Common parsing failures in finance. Source: Rossum, 2025.

In finance, parsing errors are rarely contained to a single field. One missed currency symbol or misplaced decimal point can skew an entire quarterly report, opening the door to regulatory investigations and shareholder lawsuits.
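
Two defensive habits help here:

- Parse amounts with exact decimal arithmetic rather than floating point.
- Reconcile extracted line items against the stated total before anything reaches a ledger.

The Python sketch below uses the standard `decimal` module. The helper names and the separator heuristic are assumptions for illustration. Production systems should rely on explicit locale or currency metadata instead of guessing.

```python
import re
from decimal import Decimal

def parse_amount(raw: str) -> Decimal:
    """Parse '$1,204.50', '€1.204,50', '-75.00' or '(300.00)' into a Decimal.

    Heuristic (an assumption, not a standard): if the last '.' or ',' is
    followed by one or two digits it is the decimal mark; every other
    separator is a thousands separator. "1.234" is genuinely ambiguous and is
    read here as 1234. A leading '-' or accounting-style parentheses make the
    amount negative.
    """
    s = raw.strip()
    negative = s.startswith("-") or (s.startswith("(") and s.endswith(")"))
    digits = re.sub(r"[^\d.,]", "", s)  # drop currency symbols, signs, spaces
    if not re.search(r"\d", digits):
        raise ValueError(f"no digits in amount: {raw!r}")
    last_sep = max(digits.rfind("."), digits.rfind(","))
    if last_sep != -1 and len(digits) - last_sep - 1 in (1, 2):
        whole, fraction = digits[:last_sep], digits[last_sep + 1:]
    else:
        whole, fraction = digits, "00"
    whole = re.sub(r"[.,]", "", whole) or "0"
    value = Decimal(f"{whole}.{fraction}")
    return -value if negative else value

def reconcile(line_items: list[str], stated_total: str,
              tolerance: Decimal = Decimal("0.01")) -> bool:
    """True if the parsed line items sum to the stated total within tolerance."""
    computed = sum((parse_amount(x) for x in line_items), Decimal("0"))
    return abs(computed - parse_amount(stated_total)) <= tolerance

items = ["$1,204.50", "$95.50", "(300.00)"]
print(reconcile(items, "$1,000.00"))  # True
print(reconcile(items, "$1,300.00"))  # False: route to a human before posting
```

A failed reconciliation is the "split totals missed" failure from Table 3, caught before it reaches a report.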

Healthcare’s gamble: sensitive data, critical outcomes

Healthcare data is sacred—and parsing it is fraught with peril. Handwritten patient records, complex forms, and privacy regulations create a minefield for automated systems.

- Patient safety: Misread dosages or missed allergies can directly endanger lives.

- Privacy breaches: Inaccurate parsing can lead to confidential data leaks, with heavy fines under HIPAA and GDPR.

- Administrative overload: When parsing fails, staff must manually re-enter or verify data, wasting valuable resources.

According to Intelligent Document Processing News (2024), hospitals that implemented hybrid human+AI parsing reduced administrative workload by up to 50%—but only after investing in rigorous validation and oversight.
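
Much of the privacy risk comes down to what the redaction step actually catches. The sketch below is a deliberately simple, hypothetical regex redactor in Python. It covers a few US-style identifiers: Social Security numbers, phone numbers, email addresses, and numeric dates. It is not a HIPAA de-identification tool. It returns the redacted text plus a count per label that could be written to an audit trail. Names, addresses, and free-text clinical details pass straight through it, which is why NER models and human review have to sit on top of rules like these.

```python
import re

# Illustrative patterns only (assumptions); real PHI/PII detection needs far
# broader coverage, including names, addresses and record numbers.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\(?\b\d{3}\)?[-.\s]\d{3}[-.\s]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
}

def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with [LABEL] tags and count replacements per label."""
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts

note = ("Patient SSN 123-45-6789, seen 03/14/2024. "
        "Call (555) 123-4567 or email j.doe@clinic.org.")
redacted, counts = redact(note)
print(redacted)
# Patient SSN [SSN], seen [DATE]. Call [PHONE] or email [EMAIL].
print(redact("Patient: Jane Roe")[0])  # names are NOT caught by these rules
```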

The new breed: advanced parsing techniques and AI’s shifting frontier

LLMs, transformers, and the rise of context-aware parsing

The arrival of large language models (LLMs) and transformer architectures has revolutionized document text parsing. These models process text contextually, capturing meaning across paragraphs and sections, and multimodal variants extend that reach to embedded images. LayoutLMv3 and vision-capable models such as GPT-4 are at the frontier. They can navigate complex layouts, extract tables, and, with varying reliability, read handwriting. Text-only LLMs such as Mixtral contribute strong contextual reading of the text once it has been extracted.

Definition List:

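- Transformer model: An AI architecture that uses self-attention to relate every token in its input to every other token. It processes tokens in parallel and captures contextual relationships across everything that fits in its context window.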

- Multimodal parsing: Integrates text, images, and even audio to extract meaning from documents containing mixed media.

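- Retrieval-augmented generation (RAG): Retrieves relevant passages from an external document store or database and supplies them to a large language model as context. This grounds the model's output and makes it easier to fact-check.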

Context-aware parsing isn’t just about fancy tech—it reduces error rates in real-world deployments, especially for documents with non-standard layouts or embedded media.
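
The retrieval half of RAG fits in a few lines. This toy Python example ranks document chunks against a question using bag-of-words cosine similarity, then assembles a prompt from the best match. Real systems use learned embeddings and a vector index rather than word counts. The prompt wording, the `top_k` parameter, and the helper names are assumptions. The sketch only shows the shape of the technique: retrieve first, then ask the model to answer from the retrieved text.

```python
import math
import re
from collections import Counter

def bag_of_words(text: str) -> Counter:
    """Lowercased word counts; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(question: str, chunks: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k chunks most similar to the question."""
    q = bag_of_words(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, bag_of_words(c)), reverse=True)
    return ranked[:top_k]

def build_prompt(question: str, context: list[str]) -> str:
    """Ask the model to answer only from retrieved context (wording assumed)."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\nQuestion: {question}"

chunks = [
    "The payment term is 30 days from the invoice date.",
    "This agreement is governed by the laws of Delaware.",
    "Either party may terminate with 60 days written notice.",
]
question = "Which laws govern this agreement?"
print(build_prompt(question, retrieve(question, chunks)))
```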

Semantic analysis: understanding meaning, not just words

Semantic analysis digs beneath the surface, interpreting the intent and relationships behind words. Modern AI models don’t just “find” keywords; they map meaning, connect concepts, and flag inconsistencies.

- Named entity recognition: Identifies people, organizations, places, and dates with high accuracy.

- Relationship mapping: Detects associations—such as buyer/seller, creditor/debtor—in contracts or reports.

- Anomaly detection: Spots outliers in financial statements or medical records, even when phrased in novel ways.
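
Anomaly detection does not always need a large model. The Python function below is a minimal sketch, not a description of how any particular product works. It flags values whose modified z-score, computed from the median and the median absolute deviation (MAD), exceeds a cutoff. The 0.6745 scaling constant and the 3.5 cutoff are a widely used rule of thumb. Treat both, and the sample data, as assumptions to tune.

```python
from statistics import median

def flag_outliers(values: list[float], threshold: float = 3.5) -> list[int]:
    """Return indices of values whose modified z-score exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # More than half the values are identical, so MAD gives no scale;
        # this sketch gives up rather than divide by zero.
        return []
    return [
        i for i, v in enumerate(values)
        if abs(0.6745 * (v - med) / mad) > threshold
    ]

monthly_fees = [1200.0, 1180.0, 1215.0, 1190.0, 12050.0, 1205.0]
print(flag_outliers(monthly_fees))  # [4]: likely an extra digit or shifted decimal
```

Median-based scores are used instead of mean and standard deviation because a single extreme value inflates the standard deviation and can hide itself.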

| Feature | Old Approach | Modern Semantic AI | Impact Example |
|---|---|---|---|
| Keyword Search | Exact matches | Contextual understanding | Finds synonyms, misspellings |
| Rule-Based Extraction | Static rules | Dynamic, learning models | Adapts to new document types |
| Manual Review | Required | Selective, targeted | Reduces human workload |

Table 4: Semantic analysis vs. traditional methods in document parsing. Source: Original analysis based on arXiv:2410.21169.
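
The "finds synonyms, misspellings" row is worth unpacking. Even without semantic AI, fuzzy string matching closes part of the gap between exact keyword search and messy real-world labels. The sketch below uses Python's standard `difflib.get_close_matches` to map OCR-garbled field labels onto canonical names. The field list and the 0.75 cutoff are illustrative assumptions. The approach only catches near-spellings, not true synonyms such as "balance" for "total amount". That remaining gap is where learned semantic models earn their keep.

```python
from difflib import get_close_matches
from typing import Optional

# Illustrative canonical schema (an assumption).
CANONICAL_FIELDS = ["invoice number", "invoice date", "total amount", "due date"]

def normalize_label(raw_label: str, cutoff: float = 0.75) -> Optional[str]:
    """Map a noisy label to the closest canonical field name, or None."""
    matches = get_close_matches(raw_label.lower().strip(), CANONICAL_FIELDS,
                                n=1, cutoff=cutoff)
    return matches[0] if matches else None

print("invoce numbr" in CANONICAL_FIELDS)  # False: exact matching fails
print(normalize_label("Invoce Numbr"))     # invoice number
print(normalize_label("Tota1 Amount"))     # total amount (OCR read 'l' as '1')
print(normalize_label("Balance"))          # None: a synonym, not a misspelling
```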

Beyond English: multilingual and cross-domain challenges

Parsing doesn’t stop at the English language. Global organizations must process documents in dozens of languages and formats—each with its own quirks and legal nuances.

- Cross-lingual ambiguity: The same term may carry radically different meanings across languages.

- Script and layout variations: Cursive scripts, right-to-left writing, and vertical text columns.

- Domain-specific jargon: Medical, legal, or financial terminology varies by country and region.

This is where tools like TextWall.ai stand out—offering flexibility for multilingual parsing and domain adaptation. But even top-tier AI can stumble on rare scripts or industry slang, making human oversight indispensable.
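
Script and direction problems can at least be detected before parsing starts. The minimal Python sketch below uses the standard `unicodedata` module. It counts characters whose Unicode bidirectional class is right-to-left (`R` for Hebrew-type letters, `AL` for Arabic-type letters) or left-to-right (`L`). It then reports the dominant direction so a pipeline can route the file to an RTL-aware model. The function name and the simple majority rule are assumptions for illustration.

```python
import unicodedata

RTL_CLASSES = {"R", "AL"}  # strong right-to-left: Hebrew-type, Arabic-type
LTR_CLASSES = {"L"}        # strong left-to-right (Latin, Cyrillic, CJK, ...)

def dominant_direction(text: str) -> str:
    """Return 'rtl', 'ltr' or 'unknown' by counting strong directional characters."""
    rtl = sum(unicodedata.bidirectional(ch) in RTL_CLASSES for ch in text)
    ltr = sum(unicodedata.bidirectional(ch) in LTR_CLASSES for ch in text)
    if rtl == 0 and ltr == 0:
        return "unknown"  # e.g. only digits and punctuation
    return "rtl" if rtl > ltr else "ltr"

print(dominant_direction("Invoice total: 42"))  # ltr
print(dominant_direction("فاتورة رقم 42"))      # rtl (Arabic: "invoice number 42")
print(dominant_direction("12345"))              # unknown
```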

The dark side: risks, myths, and ethical dilemmas in document parsing

Mythbusting: what document parsing can—and can’t—do

Despite the hype, document text parsing is not a universal solution. Myths abound, but the truth is more nuanced:

- Myth 1: “AI can parse any document perfectly.” Reality: Even the best models fail on edge cases.

- Myth 2: “Automation means no human review needed.” In practice, high-stakes documents demand oversight.

- Myth 3: “Parsing fixes bad data.” Actually, it amplifies errors if the source is poor.

"Automation is not a panacea—many organizations still rely on manual tools like Excel for critical workflows, precisely because of parsing limitations." — Rossum, 2025

- Parsing is a powerful accelerator, but only when paired with validation and context-aware review.

- No tool is immune to the pitfalls of data quality, ambiguity, or compliance risk.

Bias, privacy, and the unintended consequences of automation

The more we automate, the more we risk amplifying hidden biases and privacy breaches. AI models trained on unrepresentative data can reinforce stereotypes or systematically misinterpret minority contexts. Privacy is another battleground: every parsing operation is a potential vector for data leakage, especially when sensitive information is involved.

- Data bias: AI models may misclassify names or terminology from underrepresented groups.

- Privacy breach: Misparsed sensitive info can be inadvertently exposed.

- Ethical dilemmas: Automated tools may make decisions without transparent reasoning, leading to accountability gaps.

Regulatory minefields: parsing under scrutiny

Regulators worldwide are sharpening their focus on how organizations extract, store, and protect data, which puts document text parsing squarely in scope. Fines for mishandling data, especially in finance and healthcare, are mounting, and compliance requirements are evolving rapidly.

| Regulation | Key Requirement | Parsing Challenge |
|---|---|---|
| GDPR (EU) | Data minimization, consent | Accurate PII extraction |
| HIPAA (US) | Protected health info (PHI) | Redaction, audit trails |
| SOX (US) | Financial data accuracy | Error reduction, audit |
| CCPA (California) | Data transparency, deletion | Data lineage tracking |

Table 5: Regulatory requirements and parsing challenges. Source: Original analysis based on B2BE, 2024, Rossum, 2025.

Choosing your arsenal: tools, platforms, and the false promise of one-size-fits-all

What to look for when evaluating document parsing tools

Not all document parsing solutions are created equal. When choosing your arsenal, consider:

- Accuracy: How does the tool perform on your specific document types?

- Domain adaptation: Can it handle legal, financial, or scientific jargon?

- Multimodal capabilities: Does it parse images, tables, and handwriting?

- Integration: Will it slot into your existing workflows and systems?

- Security and compliance: Are privacy controls and audit trails robust?

- Scalability: Can it manage growing document volumes without choking?
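
Accuracy is best measured on your own labeled samples rather than on vendor benchmarks. The Python sketch below is a hypothetical, tool-agnostic harness. Given gold-standard field values and a tool's extracted values for each document, it reports field-level accuracy per document type. The record layout and the exact-match rule are assumptions; adapt both to what your candidate tools return.

```python
from collections import defaultdict

def field_accuracy_by_type(samples: list[dict]) -> dict[str, float]:
    """Field-level accuracy per document type.

    Assumed record shape: {"doc_type": str, "gold": {field: value},
    "extracted": {field: value}}. A field is correct only if the extracted
    value equals the gold value after trimming whitespace; a missing field
    counts as an error.
    """
    correct: dict[str, int] = defaultdict(int)
    seen: dict[str, int] = defaultdict(int)
    for s in samples:
        for field, gold_value in s["gold"].items():
            seen[s["doc_type"]] += 1
            got = s["extracted"].get(field)
            if got is not None and str(got).strip() == str(gold_value).strip():
                correct[s["doc_type"]] += 1
    return {t: correct[t] / seen[t] for t in seen}

samples = [
    {"doc_type": "invoice", "gold": {"amount": "1204.50", "date": "2024-05-03"},
     "extracted": {"amount": "1204.50", "date": "2024-03-05"}},  # day/month swapped
    {"doc_type": "invoice", "gold": {"amount": "99.00"}, "extracted": {"amount": "99.00"}},
    {"doc_type": "contract", "gold": {"governing_law": "Delaware"}, "extracted": {}},
]
print(field_accuracy_by_type(samples))  # invoice: 2 of 3 fields; contract: 0 of 1
```

Running the same harness over each shortlisted tool turns "how does it perform on your documents?" into a number you can compare.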

| Feature | Importance Level | Typical Pitfalls |
|---|---|---|
| Customization | High | Inflexible templates |
| API support | Essential | Poor documentation |
| Human review options | Critical | No override for edge cases |

Table 6: Key evaluation criteria for document parsing tools. Source: Original analysis based on arXiv:2410.21169.

- The right tool is rarely the “most advanced” one—it’s the one that aligns with your data, workflow, and compliance needs.

- One-size-fits-all promises almost always disappoint in the trenches.

Open source vs proprietary: the real trade-offs

The debate between open source and proprietary solutions is heated:

- Open source: Offers transparency and customization, but may require more technical expertise and risk support gaps.

- Proprietary: Delivers turnkey features, vendor support, and regular updates, but can be less flexible or more expensive.

"For sensitive documents, proprietary solutions with strong compliance guarantees are often favored, but savvy teams blend open source tools for adaptability." — As industry experts often note (based on verified trends from B2BE, 2024)

- Hybrid strategies—mixing flexible open components with robust commercial platforms—are increasingly common.

Where textwall.ai fits into the modern stack

TextWall.ai stands out as a leader in advanced document text parsing. Its platform leverages the latest AI breakthroughs (including LLMs and multimodal analysis) to streamline extraction, summarization, and categorization of complex documents. Whether you’re a legal professional, researcher, or business analyst, TextWall.ai functions as a powerful ally—turning data chaos into structured, actionable insight.

Definition List:

- Advanced AI-based document processor: Uses large language models and vision transformers to extract meaning from text, tables, and images.

- Human-in-the-loop support: Facilitates expert review for high-stakes workflows, reducing error rates and boosting compliance.

- Scalable integration: Provides APIs and workflow hooks, making it easy to embed into enterprise systems.

Making it work: best practices, expert hacks, and common mistakes

Step-by-step: from raw document to actionable data

Turning a raw document into actionable insights is an art—and a science. Here’s how the pros do it:

- Ingestion: Digitize or upload documents, ensuring quality scans for OCR.

- Preprocessing: Clean up formatting, remove noise, standardize layouts.

- Parsing: Tokenize, segment, and extract relevant fields using AI and NLP.

- Validation: Cross-verify extracted data, flag anomalies.

- Human review: For high-value or ambiguous cases, expert review is mandatory.

- Integration: Feed structured data into downstream systems (analytics, CRM, compliance).

- Each step may take several iterations, and skipping one can cause the whole pipeline to fail.

- Top teams document every stage, ensuring transparency and traceability.
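
The steps above, including documenting every stage, can be sketched as a small pipeline skeleton. This hypothetical Python outline is not any vendor's API. Each stage is a plain function, and a wrapper appends what ran, when, and whether it succeeded to an audit log, stopping at the first failure. The stage bodies are toy placeholders for real OCR, parsing, validation, and review-routing logic.

```python
from datetime import datetime, timezone
from typing import Callable

Stage = Callable[[dict], dict]

def run_pipeline(doc: dict, stages: list[tuple[str, Stage]]) -> tuple[dict, list[dict]]:
    """Run stages in order, logging each one; stop at the first failure."""
    audit_log = []
    for name, stage in stages:
        entry = {"stage": name, "at": datetime.now(timezone.utc).isoformat()}
        try:
            doc = stage(doc)
            entry["status"] = "ok"
        except Exception as exc:  # record the failure instead of losing it
            entry["status"] = f"failed: {exc}"
        audit_log.append(entry)
        if entry["status"] != "ok":
            break
    return doc, audit_log

# Hypothetical placeholder stages; replace with real OCR/parsing/validation.
def preprocess(doc: dict) -> dict:
    return {**doc, "text": " ".join(doc["raw"].split())}  # collapse whitespace

def parse(doc: dict) -> dict:
    total = doc["text"].split("Total:")[-1].strip()  # toy field extraction
    return {**doc, "fields": {"total": total}}

def validate(doc: dict) -> dict:
    if not doc["fields"]["total"]:
        raise ValueError("empty total")
    return doc

def route_for_review(doc: dict) -> dict:
    return {**doc, "needs_review": doc.get("high_stakes", False)}

STAGES = [("preprocess", preprocess), ("parse", parse),
          ("validate", validate), ("review_routing", route_for_review)]

result, log = run_pipeline({"raw": "Invoice  42\n Total: 1204.50", "high_stakes": True}, STAGES)
print(result["fields"], result["needs_review"])  # {'total': '1204.50'} True
print([(e["stage"], e["status"]) for e in log])
```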

Red flags and gotchas: what the pros never overlook

- Unusual layouts: Non-standard templates often trigger parsing errors.

- Handwritten content: OCR struggles with anything but pristine handwriting.

- Language drift: Industry jargon evolves, and models can lag behind.

- Regulatory changes: Laws change faster than parsing tools can adapt.

- Always validate with a secondary method; never trust a parser blindly.

- Keep humans in the loop for compliance, legal, and high-risk scenarios.

Checklist: is your parsing strategy future-proof?

- Are you regularly updating your parsing models?

- Do you have robust validation and audit trails?

- Is there a protocol for human review in edge cases?

- Are privacy and compliance requirements systematically tracked?

- Can your tools handle new document types and languages as they appear?

Beyond automation: the human element in document parsing

Human-in-the-loop: where intuition still matters

Even the most advanced AI systems require human intuition and domain knowledge—especially in high-stakes environments.

"For every breakthrough in automation, there’s an edge case that only a human can safely resolve. The art is knowing where to draw the line." — Intelligent Document Processing News, 2024

- Human reviewers provide context, resolve ambiguity, and ensure compliance.

- The best systems make it easy to escalate questionable cases for expert oversight.
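
One common way to make escalation easy is confidence-based routing. Fields the parser is unsure about go to a reviewer queue, along with fields that are high-stakes regardless of confidence. The Python sketch below assumes the parser reports a confidence score between 0 and 1 per field; many tools do, but not all. The 0.9 threshold and the always-review list are illustrative policy choices, not recommendations.

```python
from dataclasses import dataclass

@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # assumed to be in [0, 1], as reported by the parser

# Hypothetical policy: these fields always get human eyes.
ALWAYS_REVIEW = {"dosage", "allergies", "governing_law"}

def route(fields: list[ExtractedField], threshold: float = 0.9):
    """Split fields into auto-accepted ones and ones escalated to a human."""
    accepted, escalated = [], []
    for f in fields:
        if f.name in ALWAYS_REVIEW or f.confidence < threshold:
            escalated.append(f)
        else:
            accepted.append(f)
    return accepted, escalated

fields = [
    ExtractedField("patient_id", "A-1029", 0.99),
    ExtractedField("dosage", "5 mg", 0.97),
    ExtractedField("visit_date", "2024-05-03", 0.62),
]
accepted, escalated = route(fields)
print([f.name for f in accepted])   # ['patient_id']
print([f.name for f in escalated])  # ['dosage', 'visit_date']
```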

Training teams for parsing success

- Ongoing education: Train staff on evolving AI capabilities and parsing limitations.

- Domain expertise: Pair technical teams with subject-matter experts (legal, medical, financial).

- Feedback loops: Foster a culture where errors are analyzed and models retrained.

Definition List:

- Subject-matter expert (SME): Professional who understands document content and context, guiding AI model improvement.

- Active learning: Human reviewers flag errors, enabling the system to retrain and adapt continuously.
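
Active learning often decides what humans review by uncertainty sampling. The documents the model is least sure about are labeled first, since corrections there tend to be the most informative. The minimal Python sketch below ranks documents by the entropy of their predicted class probabilities. The document IDs and probabilities are made-up inputs, and the retraining step that consumes the new labels is left out.

```python
import math

def entropy(probs: list[float]) -> float:
    """Shannon entropy of a probability distribution (higher = less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_labeling(predictions: dict[str, list[float]], budget: int) -> list[str]:
    """Pick the `budget` document IDs whose predictions are most uncertain."""
    ranked = sorted(predictions, key=lambda doc_id: entropy(predictions[doc_id]),
                    reverse=True)
    return ranked[:budget]

# Made-up class probabilities over (invoice, contract, medical_form).
predictions = {
    "doc-001": [0.98, 0.01, 0.01],
    "doc-002": [0.40, 0.35, 0.25],
    "doc-003": [0.55, 0.44, 0.01],
}
print(select_for_labeling(predictions, budget=2))  # ['doc-002', 'doc-003']
```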

User stories: victories, failures, and lessons learned

- Legal firm slashes contract review time by 70%—but only after adding human checkpoints for compliance.

- Market research team accelerates insight extraction by 60%, catching recurring parsing errors through regular audits.

- Healthcare provider reduces admin workload by 50%, yet faces privacy breach when a parser misses sensitive fields.

- Success requires not just great tech, but disciplined process and relentless training.

The future of document text parsing: provocations, predictions, and open questions

What breakthroughs are on the horizon?

- Expansion of multimodal AI—combining text, images, and tables seamlessly.

- Greater transparency and auditability in parsing decisions.

- Improved bias detection and correction mechanisms.

- Convergence of parsing with real-time analytics, enabling instant insight from any document stack.

Will AI make human understanding obsolete—or more vital?

"Automation doesn’t eliminate the need for human insight—it magnifies it. The more we automate, the more essential human judgment becomes." — As industry thought leaders emphasize (illustrative, based on trends in arXiv:2410.21169, 2024)

- Humans will always be needed for context, ethics, and high-level decision-making.

- AI frees up human experts to focus on strategy, not data wrangling.

- The “human+AI” paradigm is here to stay.

- Training, process, and oversight matter as much as tech.

How to stay ahead: skills, mindsets, and resources for the next decade

- Invest in continuous learning: Stay up to date on AI and compliance trends.

- Emphasize process discipline: Document, audit, and review every step.

- Build interdisciplinary teams: Blend technical, legal, and operational expertise.

- Prioritize data quality: Garbage in, garbage out—clean data is king.

- Adopt flexible tools: Choose solutions that can adapt and scale with your needs.

Appendix: mastering document text parsing—resources, guides, and checklists

Essential resources for deep dives

- arXiv:2410.21169 — In-depth technical review of AI parsing advances (2024)

- Intelligent Document Processing News, 2024 — Industry trends and compliance updates

- Rossum 2025 Automation Trends — Market report on automation vs. operational risks

- textwall.ai/document-analysis — In-depth guides and workflow case studies

Definition List:

- LLM: Large Language Model—a neural network trained on vast text data, enabling advanced parsing and understanding.

- OCR: Optical Character Recognition—technology to convert scanned images of text into machine-readable text.

- NER: Named Entity Recognition—AI technique to identify key entities (people, organizations, dates) in text.

Quick reference: glossary of parsing terms

Definition List:

- Tokenization: Splitting text into basic units (tokens) for analysis.

- Segmentation: Dividing documents into logical sections for context-aware parsing.

- Multimodal Parsing: AI analysis combining text, images, and other media.

- Compliance Audit: Systematic review ensuring parsing meets regulatory standards.

- Active Learning: Continuous model improvement via human feedback.

Priority checklist: is your parsing pipeline robust?

- Are model updates scheduled and documented?

- Is every step of the parsing workflow logged for auditability?

- Is human review built into high-risk scenarios?

- Are data privacy and compliance controls embedded in your process?

- Can your tools adapt to new document types, formats, or languages?

- Are error rates measured and acted upon regularly?

- Is staff trained and retrained as tech evolves?

- Regularly test with new and edge-case documents.

- Foster a culture of continuous improvement—parsing is a journey, not a destination.

Conclusion

Document text parsing is no longer the realm of arcane IT teams—it’s the frontline of data clarity, compliance, and competitive advantage. As shown throughout this deep dive, the brutal truths are unavoidable: no parser is flawless, regulatory risks are rising, and human expertise is more vital than ever. Yet the breakthroughs are equally real. AI-powered engines like TextWall.ai are turning information chaos into clarity, slashing manual drudgery, and unlocking actionable insight at unprecedented scale. The new rules? Choose your tools wisely, never trust automation blindly, and always keep the human element front and center. If you want to reclaim control over your data, the time to master document text parsing is now.

This article references and verifies current research from arXiv:2410.21169, Intelligent Document Processing News (2024), and Rossum 2025 Automation Trends. It also includes practical insights from the textwall.ai knowledge base.

Sources

References cited in this article

- arXiv:2410.21169 (arxiv.org)

- Intelligent Document Processing News, 2024 (intelligentdocumentprocessing.com)

- Rossum 2025 Automation Trends (rossum.ai)

- Medium: Evolution of Document Parsing (kaushikshakkari.medium.com)

- Inscribe AI on Data Accuracy (inscribe.ai)

- NIH: Health IT Parsing Incidents (ncbi.nlm.nih.gov)

- Parser Expert (parser.expert)

- Docsumo (docsumo.com)

- Stanford NLP (nlp.stanford.edu)

- GeeksforGeeks: Parsing Ambiguity (geeksforgeeks.org)

- Insight7: Case Study Pitfalls (insight7.io)

- Nanonets Document Parsing (nanonets.com)

- Generative AI: PDF Parsing (generativeai.pub)

- Wikipedia: Semantic Parsing (en.wikipedia.org)

- ACL Anthology: Multilingual Parsing (aclanthology.org)

- GAEIA Ethical Dilemma Library (gaeia.world)

- CompTIA 2024 (connect.comptia.org)

- Oxford Academic (academic.oup.com)

- IAPP Privacy Risk Study (iapp.org)

- KPMG: Regulatory Challenges 2024 (kpmg.com)

- Skillcast: Compliance Challenges (skillcast.com)
