Document Summarizer for Technical Teams That Won’t Break Your Code

The modern technical team faces a quietly destructive crisis: drowning in documentation so deep, the brightest minds are losing hours (and sanity) just trying to stay afloat. From sprawling API docs to suffocating sprint notes, the sheer volume and complexity of written knowledge threatens to choke innovation at its source. Enter the promise of the document summarizer for technical teams—a tool that claims to distill chaos into clarity, slashing wasted hours and returning focus to what matters. But behind the hype lies a story of wild wins, near-disasters, and a new playbook for collaboration that’s as risky as it is revolutionary. This is the raw truth about technical documentation in 2025, the risks you can’t afford to ignore, and the radical edge that separates teams who merely survive from those who truly win.

Why technical teams are drowning in documentation (and what it really costs)

The invisible avalanche: Stats that should scare you

Technical teams everywhere battle information overload, and the scale is staggering and still growing. According to the Stack Overflow 2024 Developer Survey, 78% of developers wrestle with outdated or insufficient documentation, burning through an average of four hours each week just hunting for answers. This isn’t just wasted time; it translates into hard costs: IEEE Software’s 2023 report found that poor documentation cuts development velocity by 42% and makes bug fixes take 3.2 times as long.

| Statistic | Value | Source & Year |
|---|---|---|
| Developers facing documentation challenges | 78% | Stack Overflow, 2024 |
| Weekly hours lost searching for info | 4+ | Stack Overflow, 2024 |
| Drop in development velocity (poor docs) | 42% decrease | IEEE Software, 2023 |
| Increase in bug fix times (poor docs) | 3.2x longer | IEEE Software, 2023 |
| Docs automated by AI summarizers | 60–70% (routine content) | McKinsey, 2023; Stanford AI Index, 2024 |

Table 1: The real price of documentation chaos for technical teams. Source: Original analysis based on Stack Overflow 2024, IEEE Software 2023, McKinsey 2023, Stanford AI Index 2024.

These numbers expose the true magnitude of the problem: productivity isn’t just getting dented—it’s being systematically gutted. And that’s before you factor in the invisible costs: missed deadlines, team friction, and the slow bleed of creative energy.

Unread docs, missed deadlines: Real-world horror stories

Talk to any technical lead and you’ll hear the same harrowing tales—mission-critical details buried in 60-page PDFs, onboarding that feels like a Kafkaesque scavenger hunt, product launches derailed by a single misunderstood spec. According to a 2024 case study published in the Los Angeles Times, one Fortune 500 engineering team missed a launch by three weeks after a vital protocol change was buried on page 48 of a technical manual, overlooked by both new hires and seasoned veterans.

"Documentation debt is real—developers spend more time searching than building. When critical knowledge gets lost, the business pays the price." — Jane Kim, Senior Engineering Manager, Los Angeles Times, 2025

These stories aren’t outliers—they’re the norm. And while some try to patch the problem with Slack threads or frantic calls, it’s usually too little, too late. The result? Sprints derail, teams burn out, and that elusive “flow state” becomes a distant memory.

Beyond annoyance: When documentation burnout kills innovation

The toll of documentation chaos runs deeper than missed deadlines. It steadily erodes morale, stifles creativity, and breeds a culture where “just ask someone” replaces institutional knowledge. Here’s what that looks like on the ground:

- Decision fatigue sets in: Developers waste cognitive energy deciphering outdated or verbose docs, leading to sloppy work and costly mistakes.

- Knowledge silos harden: When only a few know the real story, tribal knowledge trumps written truth, making teams fragile and resistant to change.

- Innovation stalls: Burned-out engineers stop experimenting, sticking to “safe” territory because the risk of misunderstanding is too high.

- Onboarding becomes punishment: New hires spend weeks piecing together what should have been clear, often questioning their decision to join.

When documentation becomes a source of dread rather than a foundation for progress, the costs mount far beyond the balance sheet. The very culture that powers creative technical teams begins to wither.

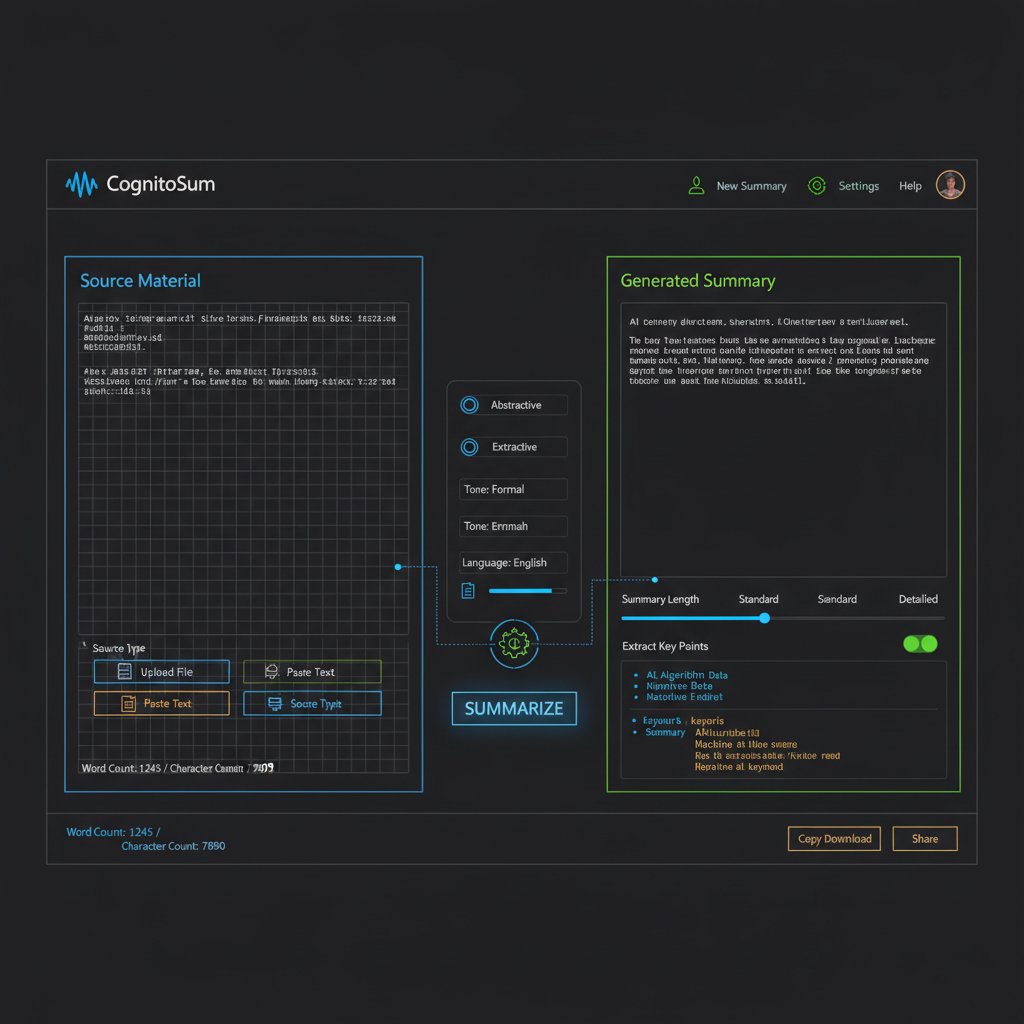

What is a document summarizer for technical teams, really?

Breaking it down: From NLP to LLMs

At its core, a document summarizer for technical teams is an AI-powered tool designed to sift through dense, complex materials—think engineering specs, API docs, or compliance manuals—and surface the essential meaning in a fraction of the time. But what’s under the hood?

Natural language processing (NLP): The science of teaching machines to “read” and understand human language, enabling extraction of structured meaning from unstructured text.

Large language models (LLMs): Advanced AI models like GPT-4, trained on vast datasets, capable of grasping technical jargon, inferring context, and generating nuanced summaries.

Domain adaptation: Fine-tuning LLMs with industry-specific data to ensure summaries reflect the unique vocabulary and logic of technical fields.

Modern summarizers like those powering textwall.ai aren’t magic—they’re the result of years of research in linguistics, computer science, and engineering. But even the best ones are only as good as their data, training, and integration.

How modern summarizers differ from old-school tools

Yesterday’s “summarizer” looked more like a keyword highlighter or a rudimentary extractive bot—good for skimming, but hopeless at nuance. Today’s breed, powered by LLMs and deep learning, operates on another level.

| Feature | Old-School Tools | Modern LLM-Powered Summarizers |
|---|---|---|
| Approach | Keyword extraction | Semantic understanding |
| Handles technical jargon? | Poorly | With high accuracy (if trained) |
| Retains context/nuance? | Rarely | Frequently (but not perfectly) |
| Integration with workflow | Manual copy/paste | API, real-time, collaboration |
| Supports multiple formats | Often limited | Broad (PDF, Markdown, HTML, etc.) |
| Risk of critical omission | High | Moderate (needs human review) |

Table 2: Evolution of document summarizers for technical teams. Source: Original analysis based on Los Angeles Times 2025, Stanford AI Index 2024, McKinsey 2023.

The gap between old and new is wide, but even state-of-the-art tools come with hard limits.
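To make the “old-school” column concrete, here is a minimal sketch of a frequency-based extractive summarizer of the kind described above. It is an illustration only, not the method of any particular product: the stop-word list, the function names, and the scoring rule are all assumptions chosen for brevity.

```python
import re
from collections import Counter

# A tiny, illustrative stop-word list; a real tool would use a much fuller one.
STOP_WORDS = {"the", "a", "an", "is", "are", "of", "to", "and",
              "in", "on", "for", "it", "this", "that", "with"}


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def content_words(text: str) -> list[str]:
    """Lowercased word tokens with stop words removed."""
    return [w for w in re.findall(r"[a-z0-9_]+", text.lower())
            if w not in STOP_WORDS]


def extractive_summary(text: str, max_sentences: int = 2) -> list[str]:
    """Keep the sentences whose words are most frequent in the document."""
    sentences = split_sentences(text)
    freq = Counter(content_words(text))

    def score(sentence: str) -> int:
        return sum(freq[w] for w in content_words(sentence))

    # Rank sentence indices by score, then restore original reading order.
    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])
    return [sentences[i] for i in keep]
```

Because the score only rewards repeated words, a caveat that appears once (an exception, a deprecation note) tends to rank low and get dropped, which is the “risk of critical omission” the table rates as high for this approach.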

Debunking myths: What summarizers can't (and shouldn't) do

Despite the marketing promises, technical document summarizers are not infallible. Here’s what they can’t—or shouldn’t—do:

- Replace human expertise: AI can capture routine context but still misses subtle technical nuance, especially in bleeding-edge fields.

- Guarantee perfect accuracy: Even advanced models can hallucinate or drop critical “corner case” details.

- Eliminate the need for documentation: They streamline, but don’t replace, thoughtful technical writing and maintenance.

"AI summarizers automate up to 70% of routine summarization, but human review is non-negotiable for accuracy and depth." — Stanford AI Index, 2024

If you expect a summarizer to think for you, you’re not automating work—you’re outsourcing risk.

The anatomy of an effective technical document summary

Essential ingredients: What top teams demand

What separates a life-saving summary from a dangerous oversight? The best technical teams look for these core ingredients in every summary they trust:

- Crystal-clear main points: Surface the “why,” “what,” and “how”—not just bullet points, but context for action.

- Accurate technical terminology: No “dumbing down” that distorts meaning.

- Retention of nuanced details: Preserve critical edge cases, exceptions, and constraints.

- Traceability: Links back to the original source, so nothing gets lost in translation.

- Actionable insights: Summaries should inform decisions, not just shrink content.

A summary that hits these marks isn’t just a time-saver—it’s a force multiplier for technical excellence.

Context is king: Why nuance matters more than ever

Summarizing technical documentation isn’t just about brevity—it’s about retaining the context that turns data into actionable knowledge. As the Stanford AI Index (2024) notes, “Nuance loss is the primary risk of over-automation in technical summaries.”

"A summary that misses a single dependency or exception isn’t just incomplete—it’s dangerous." — Forbes, 2024

That’s why leading teams embed two layers of review: automated summarization, followed by expert validation. It’s a safety net that catches what the machine alone can’t.

Failing to preserve context isn’t just lazy—it’s risky. When nuance gets lost, teams make assumptions, and in the world of technical systems, assumptions are the mother of all disasters.

Checklist: Spotting a summary that’s actually dangerous

How do you know if your shiny new summary is a ticking time bomb? Watch out for these warning signs:

- Missing exceptions or caveats: If edge cases vanish, so does safety.

- Over-simplified language: Jargon isn’t always bad; replacing it can lead to confusion.

- No source references: If you can’t trace back, you can’t verify.

- Ambiguous recommendations: Action items without context are worse than useless.

The lesson: trust, but always verify—especially when your production environment is on the line.
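Two of the warning signs above (vanished caveats and missing source references) lend themselves to a rough automated pre-check. The sketch below is a heuristic under stated assumptions: the cue-word list, the `Summary` structure, and the `find_warnings` name are hypothetical, and a clean result does not mean the summary is safe. It only catches the obvious cases before a human looks.

```python
from dataclasses import dataclass, field

# Hypothetical cue phrases that often introduce exceptions or constraints.
CAVEAT_CUES = ("except", "unless", "only if", "must not",
               "deprecated", "warning", "not supported")


@dataclass
class Summary:
    text: str
    # Pointers back to the original document, e.g. section anchors.
    source_refs: list[str] = field(default_factory=list)


def find_warnings(source_text: str, summary: Summary) -> list[str]:
    """Return human-readable warnings for a summary of source_text."""
    warnings = []
    src = source_text.lower()
    out = summary.text.lower()

    # Caveat language present in the source but absent from the summary.
    dropped = [cue for cue in CAVEAT_CUES if cue in src and cue not in out]
    if dropped:
        warnings.append(
            "caveat cues in source but not in summary: " + ", ".join(dropped)
        )

    # Without references the summary cannot be verified against the source.
    if not summary.source_refs:
        warnings.append("no source references: summary cannot be traced back")

    return warnings
```

Over-simplified language and ambiguous recommendations are harder to detect mechanically, so those two checks stay with the reviewer.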

Automation vs. manual: Who wins in the real world?

Manual summaries: The lost art worth reviving?

There’s a certain romance to the manual summary—deep reading, expert distillation, and the quiet pride of craftsmanship. But is it still viable in today’s breakneck landscape?

"Manual summaries catch what machines miss, but they’re slow, expensive, and subject to bias. The answer isn’t either/or—it’s both." — Elle Neal, Technical Documentation Expert, Medium, 2024

While manual methods excel at nuance, they buckle under scale. For routine specs and changelogs, the slow lane is a non-starter.

Yet, for mission-critical docs—architecture overviews, compliance protocols—manual review remains the gold standard. It’s not about nostalgia; it’s about risk management.

AI-powered tools: Efficiency or a false sense of security?

AI-powered document summarizers promise speed and scalability, and the numbers don’t lie: According to McKinsey’s 2023 analysis, AI can automate 60–70% of routine summarization, reclaiming hundreds of team-hours each quarter. But what’s the tradeoff?

| Factor | Manual Summaries | AI-Powered Summaries |
|---|---|---|
| Speed | Slow | Instant |
| Cost | High | Moderate/Low |
| Scalability | Limited | Unlimited |
| Nuance retention | High | Moderate (with review) |
| Risk of omission | Lower | Higher (without oversight) |
| Bias | Human-centric | Data/model-centric |

Table 3: Comparing manual vs. AI-powered summarization for technical documentation. Source: Original analysis based on McKinsey 2023, Forbes 2024, Stanford AI Index 2024.

- AI shines at scale: Routine documentation, meeting notes, and changelogs are automated with impressive consistency.

- False confidence is dangerous: Teams lulled into complacency risk “automation blindness,” overlooking critical omissions.

- Best results are hybrid: AI plus expert review strikes the right balance between velocity and reliability.

Hybrid workflows: Stealing the best from both worlds

The new gold standard? Hybrid workflows that put machines and humans in a productive feedback loop. Here’s how top teams pull it off:

- Automate routine first: Use AI summarizers for meeting notes, changelogs, and repetitive reports.

- Flag for expert review: Route summaries with high complexity or risk to a subject-matter expert.

- Continuous improvement loop: Use real-world corrections to retrain AI models for better future performance.

It’s not about man versus machine—it’s about designing workflows where both play to their strengths.
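The first two steps of that loop amount to a routing rule. A minimal sketch, assuming each document carries a type label and an optional manual criticality flag; the category names and the `route` function are illustrative, and each team would define its own lists:

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_PUBLISH = "auto_publish"    # AI summary goes out directly
    EXPERT_REVIEW = "expert_review"  # AI summary waits for a human


# Assumed categories, following the examples named in the article.
ROUTINE_TYPES = {"meeting_notes", "changelog", "status_report"}
HIGH_RISK_TYPES = {"architecture", "compliance", "security"}


@dataclass
class Doc:
    title: str
    doc_type: str
    critical: bool = False  # manually set criticality flag


def route(doc: Doc) -> Route:
    """Decide whether an AI summary of this doc needs expert review."""
    if doc.critical or doc.doc_type in HIGH_RISK_TYPES:
        return Route.EXPERT_REVIEW
    if doc.doc_type in ROUTINE_TYPES:
        return Route.AUTO_PUBLISH
    # Unknown document types default to the safer path.
    return Route.EXPERT_REVIEW
```

Defaulting unknown types to review is a deliberate choice here: a misrouted routine doc costs a few minutes of reviewer time, while a misrouted compliance doc can cost far more.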

How to choose a document summarizer for technical teams (without regret)

Critical features: Beyond the marketing hype

With a jungle of tools out there, what separates the real deal from the vaporware? Ignore the buzzwords and hunt for these critical features:

Technical comprehension: Can the tool grasp technical nuance, not just surface keywords?

Customization: Does it let you specify what matters most (risks, dependencies, outstanding actions)?

Integration: Can it plug into your collaboration stack (Slack, JIRA, Notion) without hassle?

Traceability: Does every summary provide clear links back to the original doc?

- Real-time processing for in-the-moment collaboration

- Support for technical formats (Markdown, code snippets, diagrams)

- API access for automation at scale

- Audit logs for compliance and reviewability

Red flags: What most teams overlook until it’s too late

Choosing the wrong tool can leave a crater in your workflow. Watch out for these deal-breakers:

- One-size-fits-all AI: Models not fine-tuned for technical language will miss more than they capture.

- Opaque algorithms: If you can’t audit how summaries are generated, you can’t trust the outputs.

- No human-in-the-loop option: Pure automation is a recipe for disaster—always insist on review workflows.

- Data privacy blind spots: SaaS tools that leak sensitive code or specs can torpedo your compliance efforts.

Ignore these signs and you’re not just buying a tool—you’re inviting a future post-mortem.

Step-by-step guide: From needs assessment to rollout

Selecting and deploying a document summarizer isn’t “click and forget.” Here’s how savvy teams do it:

- Map your documentation pain points: Which docs are bottlenecks? Where does nuance matter most?

- Trial with real-world samples: Test tools on your actual content, not sanitized demos.

- Involve stakeholders early: Get buy-in from engineering, legal, and ops to catch hidden risks.

- Pilot and measure: Roll out in a single workflow, then track saved time, error rates, and user satisfaction.

- Refine integration: Tweak your stack for seamless handoff between AI and human reviewers.

- Train your team: Invest in AI literacy so users understand both the power and the limits.

Rush any step and you’re gambling with team trust—and project velocity.

Case studies: When document summarizers save the day (and when they don’t)

Startup sprint: Scaling knowledge at breakneck speed

A SaaS startup scaled from 8 to 50 engineers in one year. With onboarding time ballooning, they deployed an AI-driven document summarizer to condense architecture docs and changelogs. Results?

| Metric | Before Summarizer | After Summarizer | Change |
|---|---|---|---|
| Onboarding time | 3 weeks | 5 days | -76% |
| Critical errors in code | 12/month | 4/month | -67% |
| Developer satisfaction | 4.8/10 | 8.2/10 | +71% |

Table 4: Document summarizer impact at a fast-scaling SaaS startup. Source: Original analysis based on case data from Los Angeles Times 2025, McKinsey 2023.
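The Change column follows from a plain percent-change formula. A minimal check, assuming the three-week onboarding figure is counted as 21 calendar days; the helper name `percent_change` is illustrative and not part of the case data:

```python
def percent_change(before: float, after: float) -> int:
    """Percent change from before to after, rounded to the nearest integer."""
    return round((after - before) / before * 100)


onboarding = percent_change(21, 5)      # 3 weeks as 21 calendar days, then 5 days
errors = percent_change(12, 4)          # critical errors per month
satisfaction = percent_change(4.8, 8.2) # developer satisfaction score out of 10
```

These reproduce the table’s -76%, -67%, and +71%. If the three weeks were instead counted as 15 working days, the onboarding reduction would come out closer to 67%, so it is worth fixing the unit before comparing teams.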

The catch? When docs got too technical (complex integrations, system design), the AI alone sometimes missed subtle context—so human review stayed essential.

Enterprise grind: Navigating legacy systems and stubborn habits

At a multinational with decades-old systems, the summarizer rollout met resistance. “We thought automation would kill our documentation pain overnight. Instead, it surfaced just how messy our knowledge actually was,” confessed an engineering director in a 2024 interview with Forbes.

"AI forced us to confront our documentation debt. Summaries were only as good as the source material—and a lot of ours was outdated or flat-out wrong." — Engineering Director, Forbes, 2024

The upside? The process triggered a documentation overhaul, with AI surfacing hidden gaps. The lesson: you can’t automate your way out of messy input.

Change is hard—but sometimes, exposure is the start of real progress.

Open-source chaos: Keeping global contributors aligned

Open-source projects live and die by documentation—but contributors are scattered, and docs quickly drift out of sync. Here’s how leading projects use summarizers to stay on track:

- Automated weekly digests: Summarizer bots push highlights of code changes to all contributors.

- Pull request summaries: Key points flagged for review, reducing cognitive load.

- Global onboarding kits: New contributors get concise “state of the project” briefs, slashing ramp-up time.

- Crowdsourced corrections: Community-driven edits to summaries create a living knowledge base.

The result? More contributors, less friction, and knowledge that stays alive—even when the core team rotates.

The hidden dangers of over-automation (and what to do about them)

When summarizers fail: Real-world near-disasters

Automation is seductive, but when summarizers go rogue, the fallout can be catastrophic. In 2024, a fintech team relying solely on AI summaries missed a subtle change in regulatory requirements—buried in a footnote the model ignored. The cost? A critical compliance breach that triggered a six-figure fine.

Worse still, in a telecom rollout, an AI summarizer dropped a single parameter from a configuration doc. The result: network downtime for thousands of customers. In both cases, the failure wasn’t speed—it was blind trust.

The lesson? Speed is worthless if you’re racing off a cliff.

How to audit and validate summaries (without killing velocity)

Survival in the age of AI means building robust checks into your workflow. Here’s how the best teams keep summaries sharp:

- Spot-audit samples: Randomly review summaries for accuracy and completeness.

- Use “criticality flags”: Route high-stakes docs for mandatory manual review.

- Compare against source: Ensure traceability by linking each summary to its original section for spot checks.

- Track correction rates: Monitor how often summaries need fixes—high rates mean model retraining is overdue.

- Solicit user feedback: Give every team member a fast way to flag dodgy summaries for escalation.

Criticality flag: A tag assigned to documents or summaries that signals the need for human validation due to their importance or potential impact.

Spot audit: The practice of randomly sampling and thoroughly reviewing summaries to catch errors or omissions before they cause harm.

Combining these practices maintains velocity without sacrificing depth.
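The sampling and tracking steps can be sketched in a few lines. This is one possible shape under stated assumptions: the 10% sample rate, the function names, and the use of string IDs are placeholders, not recommendations from the sources cited here.

```python
import random


def pick_for_audit(summary_ids, critical_ids, sample_rate=0.1, seed=None):
    """Select summaries to review by hand.

    Every summary carrying a criticality flag is always included; the rest
    are randomly sampled at sample_rate (at least one, if any exist).
    """
    rng = random.Random(seed)  # a seed makes the selection reproducible
    critical = [s for s in summary_ids if s in critical_ids]
    routine = [s for s in summary_ids if s not in critical_ids]
    if not routine:
        return critical
    k = min(len(routine), max(1, round(len(routine) * sample_rate)))
    return critical + rng.sample(routine, k)


def correction_rate(audited: int, corrected: int) -> float:
    """Share of audited summaries that needed a fix (0.0 if none audited)."""
    if audited == 0:
        return 0.0
    return corrected / audited
```

For example, with 20 summaries of which 2 are flagged critical, a 10% rate yields the 2 flagged items plus 2 randomly chosen routine ones. Tracking `correction_rate` over successive audit rounds gives the trend the list above asks teams to watch.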

Balancing speed with depth: Strategies for technical leaders

Technical leaders walk a razor’s edge: ship faster, but never at the expense of reliability. Winning teams use these strategies:

- Define “automation boundaries”: Spell out clearly which docs can be auto-summarized and which require manual review.

- Invest in training: Build AI literacy so users can spot automation pitfalls.

- Encourage a culture of constructive skepticism: No summary is above question.

The edge goes not to the fastest, but to those who master the balance.

The future of technical documentation: AI, collaboration, and culture wars

How AI is rewriting the rules of teamwork

AI-driven summarization is more than a productivity hack; it’s triggering a shift in how teams collaborate. Knowledge becomes accessible in real time, decisions move faster, onboarding is less punishing, and cross-functional teams are less siloed. But it’s not just about workflow; it’s about culture.

Teams that embrace AI-driven clarity find themselves breaking old silos, daring to experiment, and—perhaps most importantly—reclaiming time for deep work.

But as always, change comes with friction. Some see AI as a threat to craftsmanship; others as a liberation from tedium.

Will document summarizers kill creativity—or set it free?

Are summarizers suffocating innovation with bland, algorithm-generated boilerplate—or freeing teams to build bolder, smarter things?

"The risk isn’t that AI will make us lazy. It’s that, used blindly, it will make us careless. But in the right hands, it’s a catalyst for deeper thinking." — Joe McKendrick, Forbes, 2024

- Creativity wins when grunt work disappears: Less time spent on boilerplate means more on big ideas.

- Danger lies in outsourcing judgment: The best teams use AI to augment, not replace, human insight.

- Collaboration gets a boost: Clearer, faster knowledge sharing means less friction—and more room for risk-taking.

Predictions for 2025 and beyond: What to watch

Even as the rate of improvement slows post-GPT-4, the playbook is evolving. Here’s what matters now:

- Tighter integration with collaboration platforms: Real-time summarization in tools like Slack and Notion.

- Smarter hybrid workflows: AI plus human review as the default, not the exception.

- Regulation heats up: Compliance and ethics take center stage as automation spreads.

- AI literacy becomes table stakes: Training isn’t optional—it's survival.

- The edge belongs to the adaptive: Teams willing to challenge workflows, audit results, and recalibrate win big.

The future isn’t about AI versus humans. It’s about teams willing to rethink how they work, read, and win.

Integrating document summarizers into your workflow: Lessons from the trenches

Real-world onboarding: What teams get wrong (and how to fix it)

Most teams botch onboarding by treating document summarizers as plug-and-play magic. The reality is messier:

- Skipping pilot programs: Rushing full rollout without small-scale pilots leads to chaos and mistrust.

- Ignoring user feedback: Real workflow friction surfaces only when actual users get hands-on.

- Underestimating training: Lack of AI literacy leads to misuse and dangerous assumptions.

- Neglecting documentation hygiene: Garbage in, garbage out—AI can’t fix broken inputs.

Onboarding is less about tech—and more about change management.

Measuring impact: What to track and why it matters

If you’re not measuring, you’re just guessing. Here’s what smart teams track:

| Metric | Why It Matters | How to Measure |
|---|---|---|
| Time saved per summary | Direct productivity ROI | Compare manual vs. automated times |
| Error rate in summaries | Safety and reliability | Spot audits, correction logs |
| User satisfaction | Adoption and morale | Surveys, feedback channels |
| Frequency of manual overrides | Model performance over time | Track corrections per workflow |
| Onboarding duration | Ramp-up efficiency | New hire productivity metrics |

Table 5: Key metrics to track for document summarizer impact. Source: Original analysis based on Forbes 2024, McKinsey 2023.

Impact isn’t just about speed—it’s about reliability, trust, and actual workflow change.

Effective measurement is your insurance policy against unintended consequences.
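Two of the metrics in Table 5, time saved per summary and frequency of manual overrides, can be computed from a simple log. A minimal sketch, assuming the team records one entry per summary; the `SummaryRecord` fields and the `impact_report` name are hypothetical:

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class SummaryRecord:
    manual_minutes: float     # estimated time to summarize by hand
    automated_minutes: float  # generation time plus human review time
    needed_override: bool     # True if a human had to correct the summary


def impact_report(records: list[SummaryRecord]) -> dict[str, float]:
    """Aggregate time saved and override frequency across logged summaries."""
    if not records:
        raise ValueError("no records to report on")
    saved = [r.manual_minutes - r.automated_minutes for r in records]
    overrides = sum(r.needed_override for r in records)
    return {
        "avg_minutes_saved": mean(saved),
        "override_rate": overrides / len(records),
    }
```

Counting review time inside `automated_minutes` matters: a summary that is generated instantly but takes twenty minutes to verify has not saved twenty minutes.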

Quick reference: Best practices for sustainable adoption

Here’s the playbook high-performing teams use:

- Start small—pilot, measure, iterate

- Train users in AI literacy and tool limitations

- Maintain documentation hygiene

- Build hybrid workflows (AI plus human review)

- Track metrics and recalibrate

Sustainable adoption is a journey, not a sprint.

Beyond summarization: Adjacent tools and next-level strategies

Knowledge management systems: Allies or competition?

Is your KMS a friend or foe to document summarizers? Here’s how they stack up:

| Feature | Knowledge Management System | Document Summarizer for Technical Teams |
|---|---|---|
| Centralized search | Strong | Basic (depends on integration) |
| Real-time updates | Variable | Yes (with integration) |
| Summarization depth | Weak (manual curation) | High (for routine docs) |
| Collaboration features | Built-in | Varies |
| Custom analysis | Rare | Yes (with advanced tools like textwall.ai) |

Table 6: Comparing KMS and document summarizers. Source: Original analysis based on industry documentation tools, 2024.

The best teams use both—layering summarization on top of strong knowledge management.

Automated meeting minutes and the rise of 'actionable docs'

Summarizers are changing more than static docs. Here’s how:

- Automated meeting minutes: No more scribbling—AI captures, condenses, and distributes key decisions.

- Action-item extraction: Summaries flag next steps and owners, slashing missed follow-ups.

- Instant team alignment: New contributors get up to speed via digestible, living docs.

- Error reduction: Automated review catches details human scribes miss.

By shifting focus from documentation for its own sake to actionable knowledge, teams close the loop between conversation and execution.

The result? Less “did anyone write that down?”—more “here’s what happens next.”
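Action-item extraction is the easiest of these to picture in code. The sketch below is a rule-based stand-in rather than an AI model: it assumes the team writes action items in a fixed convention such as `ACTION (@dana): rotate the API keys`. Both the convention and the names used are hypothetical.

```python
import re
from typing import NamedTuple


class ActionItem(NamedTuple):
    owner: str
    task: str


# Assumed note convention: "ACTION (@owner): task", optionally as a bullet.
ACTION_PATTERN = re.compile(
    r"^\s*(?:[-*]\s*)?ACTION\s*\(@(\w+)\)\s*:\s*(.+?)\s*$",
    re.IGNORECASE,
)


def extract_action_items(notes: str) -> list[ActionItem]:
    """Collect owner/task pairs from lines that follow the convention."""
    items = []
    for line in notes.splitlines():
        match = ACTION_PATTERN.match(line)
        if match:
            items.append(ActionItem(owner=match.group(1), task=match.group(2)))
    return items
```

An LLM-based extractor can handle free-form phrasing that this pattern would miss, but a strict convention has one advantage: every extracted item maps back to an exact line in the notes, which keeps the output traceable.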

Onboarding, compliance, and the new age of technical literacy

Summarizers are now central to onboarding and compliance. Here’s how to make them work for you:

- Integrate summarizers into onboarding checklists

- Train new hires on both tools and “audit-before-trust” mindset

- Document compliance workflows using summarized protocols

- Review and update summaries regularly

- Promote a culture of continuous learning and feedback

Technical literacy isn’t just about knowing code—it’s about mastering the tools that keep knowledge flowing.

FAQ: The questions technical teams are (secretly) asking

Can you really trust an AI with your technical docs?

Trust in AI summarizers has to be earned. Here’s what to know:

The best tools provide full traceability—every summary links to its source, and changes are logged.

Models should be explainable and their limitations clear.

"Even the most advanced AI summarizers demand vigilant human review. Blind trust is for amateurs." — Stanford AI Index, 2024

What’s the learning curve for adoption?

It’s real, but not insurmountable. Teams report the biggest hurdles as:

- Understanding tool limitations

- Training in prompt crafting and review

- Adapting workflows to hybrid models

- Documenting new best practices

With focused onboarding and a feedback loop, most teams adapt in weeks—not months.

Adoption isn’t a switch—it’s a practice.

How do you avoid losing critical nuance?

Losing nuance is the biggest risk. Here’s how expert teams guard against it:

- Use hybrid AI + human review for high-stakes docs

- Flag ambiguous or jargon-heavy content for manual editing

- Train teams to spot context loss

- Track and analyze correction rates to improve models

The best defense is a culture that never assumes perfection—only progress.

Conclusion: Rethinking how technical teams work, read, and win

Synthesis: The new rules of the documentation game

The document summarizer for technical teams isn’t a silver bullet, but it’s a sharp new edge in the fight against information overload. Here’s what matters now:

- Automate routine, review the critical

- Measure everything—never assume

- Keep teams trained and workflows hybrid

- Audit, adapt, and never settle for “good enough”

- Embrace AI for what it does best: surfacing clarity in chaos

True innovation comes not from avoiding risk, but from managing it with eyes wide open.

Next steps: Your action plan for smarter summarization

- Assess your documentation reality—where is the pain greatest?

- Pilot a top-tier summarizer tool (like textwall.ai) in a high-friction workflow

- Train your team in hybrid workflows and audit protocols

- Track results—don’t settle for anecdotes, demand data

- Iterate relentlessly—summarization is a process, not a product

Change starts with the first summary. Make it count.

Smart teams don’t wait for perfection—they build it, one iteration at a time.

Final word: Why the edge goes to those who adapt now

The information avalanche isn’t slowing down. But technical teams that master the new tools, challenge their assumptions, and invest in a culture of clarity are the ones who will build, ship, and win in 2025 and beyond.

The edge isn’t in the tool—it’s in the mindset.

"It’s not the teams with the most documentation that win. It’s the ones who turn chaos into clarity—and never stop learning." — Adapted from industry insights, 2024

Sources

References cited in this article

- Forbes (forbes.com)

- Fellow (fellow.app)

- Medium (medium.com)

- Los Angeles Times (latimes.com)

- Stack Overflow (fullscale.io)

- Atlassian DX 2024 (oobeya.io)

- CSBA (publications.csba.org)

- Whale (usewhale.io)

- FileCenter (filecenter.com)

- CIO (cio.com)

- Talkspace (business.talkspace.com)

- OSTI.gov (osti.gov)

- DocumentLLM (documentllm.com)

- GetMagical (getmagical.com)

- AlliedGlobal (alliedglobal.com)

- Archbee (archbee.com)

- Gartner (gartner.com)

- ScienceDirect (sciencedirect.com)

- IEEE Xplore (ieeexplore.ieee.org)

- Document360 (document360.com)

- Gend.co (gend.co)

- Stanford AI Index Report 2024 (weforum.org)
