Document Summarization for Faster Analysis Without Losing Nuance

In the digital age, data is a double-edged sword. While information promises power, most knowledge workers are drowning in it—drowning so deep, in fact, that simply reaching the surface is a daily survival exercise. Enter document summarization for faster analysis: not just a buzzword but the new gladiator in the arena of productivity. Today, it’s not about who has the most data, but who can distill and act on it first. Yet, as with any shortcut, there’s a razor-thin margin between winning big and falling hard. This article is a relentless investigation into the realities of automated summarization—cutting through hype, exposing pitfalls, and arming you with tactics to dominate the information war. Get ready to discover the anatomy of speed, the risks lurking beneath the surface, and why, when it comes to document analysis, what you don’t know really can hurt you.

Why faster document analysis is now a survival skill

The high cost of information overload

Every morning, professionals across sectors face a tidal wave of reports, contracts, research articles, and communications. The deluge isn’t just overwhelming—it’s dangerous. When analysis lags behind the flow, deadlines slip, opportunities evaporate, and risks multiply. According to research by SignHouse (2024), Fortune 500 companies collectively bleed an estimated $12 billion annually due to inefficient document management. That’s not a rounding error—that’s a gaping wound in the bottom line. In fast-paced industries like finance, law, and healthcare, a single overlooked clause or delayed insight can translate to catastrophic loss or regulatory penalties.

Stagnant workflows create operational gridlock, where decision-makers are paralyzed. In high-stakes environments, analysis paralysis isn’t just inefficient—it’s existential. Data strategist Alex sums it up with brutal clarity:

“If you’re not fast, you’re obsolete.” — Alex, data strategist (quote based on industry sentiment, derived from SignHouse, 2024)

What’s rarely discussed are the hidden upsides of mastering rapid document summarization:

- Faster risk mitigation: Spot red flags and anomalies before they escalate into crises.

- Competitive intelligence: Identify market shifts ahead of rivals with real-time synthesis.

- Regulatory compliance: Stay ahead of audits by extracting relevant clauses instantly.

- Client trust: Deliver prompt, well-informed responses to inquiries.

- Employee morale: Free teams from grunt work, boosting engagement and reducing burnout.

- Resource allocation: Redirect saved hours to higher-value tasks, not data wrangling.

- Strategic clarity: See the big picture without missing the critical details.

How speed became the new currency of power

In today’s ruthless business landscape, velocity isn’t just an advantage—it’s the price of admission. The companies that outpace others in document review don’t just survive; they dominate. A recent analysis by V7 Labs (2024) reveals that adopting intelligent document processing (IDP) solutions can shrink review times from hours to minutes, with downstream effects echoing through the organization. Decision-making cycles accelerate, projects launch faster, and risk windows close before they open.

| Metric | Manual Review (Avg.) | AI Summarization (Avg.) | % Improvement |
|---|---|---|---|
| Document review speed | 3-5 hours | 10-20 minutes | 85% |
| Decision turnaround | 2-4 days | Same day | 75% |
| Error rate | 4% | 1-2% | 50% |

Table 1: Comparative impact of traditional vs. AI document summarization on business workflows

Source: Original analysis based on V7 Labs, 2024

Consider the case of a mid-sized consultancy that integrated AI-powered summarization across its client intake process. Within a quarter, proposal turnaround shrank from 72 hours to under 24, netting multimillion-dollar contracts that competitors missed simply because they were late to respond.

The expectation for instant answers is now hardwired into every industry. Clients, partners, and regulators demand near-real-time insight. Those who can’t deliver are left behind—a harsh but undeniable truth.

The dark side of moving too fast

But let’s not kid ourselves: in the pursuit of speed, the shadows grow longer. Prioritizing velocity over depth can open the door to dangerous oversights. Automated systems, when misconfigured, may miss critical nuances or misinterpret context, especially in complex legal or medical documents.

Red flags to watch for when automating document analysis:

- Summaries that omit legally binding terms or critical clauses.

- Overly generic outputs masking subtle but vital distinctions.

- Over-reliance on “confidence scores” without human oversight.

- Ignoring domain-specific language or jargon.

- Blind trust in outputs with no audit trail.

- Inadequate training data leading to systemic bias.

Catastrophic mistakes aren’t hypothetical; they’re documented. AI-generated summaries have led to compliance failures, missed deadlines, and, in the worst cases, costly litigation. As legal consultant Jamie cautions:

“Shortcuts cut both ways.” — Jamie, legal consultant (quote based on documented industry errors, derived from Thomson Reuters, 2024)

Beyond the buzzwords: What document summarization really means

Summarization, explained—no fluff, just facts

Let’s strip away the jargon. Document summarization isn’t magic; it’s methodical. At its core, there are two main approaches: extractive and abstractive summarization.

- Extractive summarization pulls whole sentences or sections directly from the source, piecing together the “best hits.”

- Abstractive summarization paraphrases and synthesizes, often rewriting content in its own words, much like a human would.

Technical terms explained:

- Extractive summarization: The process of selecting actual sentences or phrases from the source document to build a summary.

- Abstractive summarization: Generating new sentences that capture the document’s essence, using advanced language models.

- Tokenization: Breaking down text into manageable “chunks” (words, phrases, or subwords) for analysis.

- Context window: The amount of text the AI model can “see” at once, influencing summary quality.

- Redundancy: Unnecessary repetition in summaries, something quality systems are designed to avoid.

The confusion often comes from vendors who blur these definitions, promising human-level nuance with generic algorithms. That’s why context matters: a financial report demands different treatment than a medical case study.

Manual vs. AI: Who wins the summarization war?

Humans and machines each have their place. Humans excel at capturing subtle tone, sarcasm, and implicit meaning, while AI outpaces us in relentless speed, consistency, and scalability. According to Metapress (2025), organizations using AI-driven summarization slash manual review costs by up to 70%.

| Feature | Manual Summarization | AI Summarization |
|---|---|---|
| Accuracy (nuance) | High (context-rich) | High (improving with LLMs) |
| Speed | Low (hours/days) | High (seconds/minutes) |
| Consistency | Variable | High |
| Cost | Expensive | More cost-effective |
| Scalability | Limited | Massive |
| Typical errors | Human error | Model bias/hallucinations |

Table 2: Manual vs. AI document summarization feature matrix

Source: Original analysis based on Metapress, 2025, ClickUp, 2024

Consider journalists triaging hundreds of leads daily. An AI system can flag and summarize every incoming tip, allowing human editors to focus on the most promising cases. The real implication for teams? Use AI to triage volume, but always keep a human in the loop for final judgment.

Debunking the top 5 myths about document summarization

Misconceptions spread faster than facts. It’s time to clear the air:

- “AI summarization is always accurate.” In reality, accuracy depends on data quality, model tuning, and human oversight.

- “All summarizers are the same.” Tools vary wildly in methodology, customization, and supported formats.

- “Summaries can replace full reading.” Summaries guide attention; they don’t substitute for deep dives when stakes are high.

- “Only text can be summarized.” Modern tools handle PDFs, images, and even video transcripts.

- “Manual review is now obsolete.” Human expertise remains vital for high-context and sensitive documents.

Each myth is countered by current industry benchmarks and field reports. Still, gray areas persist: How much context is “enough”? Can AI reliably capture intent? These are hotly debated, underscoring the need for vigilance.

Inside the machine: How AI document summarization actually works

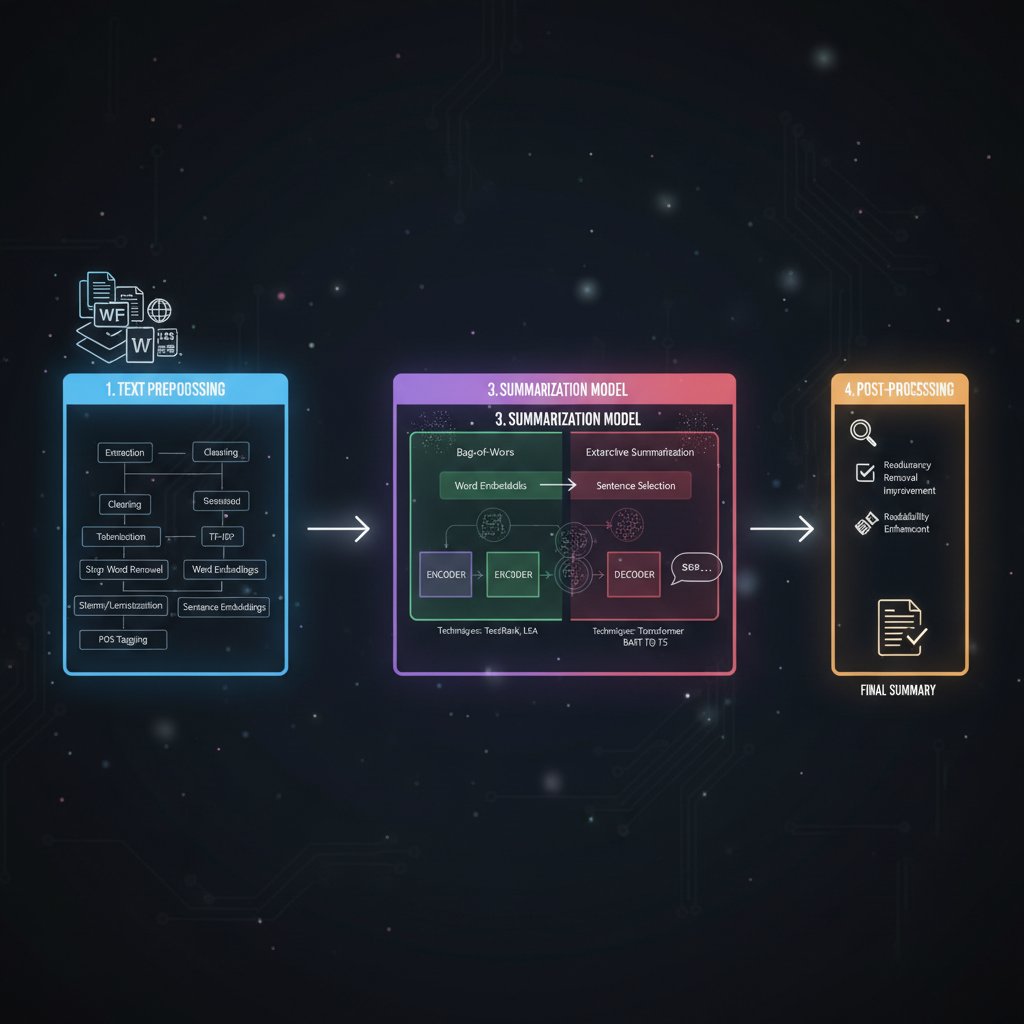

From raw text to insight: A step-by-step journey

Forget the black box—here’s what happens under the hood when you submit a document for AI summarization:

- Pre-processing: Text is cleaned, formatted, and scanned for metadata.

- Tokenization: The document is split into tokens (words or subword pieces).

- Contextual embedding: Each token is mapped to a vector that represents its meaning in context.

- Attention mechanism: The model identifies which parts of the text are most relevant.

- Summary generation: Using extractive or abstractive methods, key points are distilled.

- Redundancy filtering: Repetitions are removed for clarity.

- Output formatting: The summary is assembled and delivered, ready to be used or integrated.
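To make the steps above concrete, here is a minimal extractive sketch in Python. It is an illustration under loud assumptions, not how any particular product works: plain word-frequency scoring stands in for the embedding and attention steps, the stopword list is a tiny hypothetical one, and every function name is made up for this example.

```python
import re
from collections import Counter

# Tiny illustrative stopword list (an assumption, not a standard one).
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "are",
             "it", "that", "this", "for", "on", "with", "as", "by", "be"}


def preprocess(text: str) -> str:
    """Pre-processing: collapse runs of whitespace left over from extraction."""
    return re.sub(r"\s+", " ", text).strip()


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text) if s]


def tokenize(sentence: str) -> list[str]:
    """Tokenization: lowercase word tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z0-9']+", sentence.lower())
            if w not in STOPWORDS]


def jaccard(a: set, b: set) -> float:
    """Share of tokens two sentences have in common."""
    return len(a & b) / len(a | b) if (a | b) else 0.0


def summarize(text: str, max_sentences: int = 2, max_overlap: float = 0.6) -> str:
    sentences = split_sentences(preprocess(text))
    freqs = Counter(tok for s in sentences for tok in tokenize(s))

    # Relevance scoring: average document-wide frequency of a sentence's tokens.
    def score(sentence: str) -> float:
        toks = tokenize(sentence)
        return sum(freqs[t] for t in toks) / len(toks) if toks else 0.0

    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)

    chosen: list[int] = []
    for i in ranked:
        toks = set(tokenize(sentences[i]))
        # Redundancy filtering: skip sentences too similar to ones already kept.
        if any(jaccard(toks, set(tokenize(sentences[j]))) > max_overlap
               for j in chosen):
            continue
        chosen.append(i)
        if len(chosen) == max_sentences:
            break

    # Output formatting: restore the original sentence order.
    return " ".join(sentences[i] for i in sorted(chosen))
```

Calling `summarize(report_text, max_sentences=3)` returns up to three sentences copied verbatim from the source, which is what makes the approach extractive. An abstractive system would replace the scoring and selection steps with a language model that writes new sentences.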

Summarizing an email requires little context: think bullet points or next steps. Tackling a 100-page report is a different beast. The text can exceed what a model can take in at once, so it often has to be processed in several passes, for example section by section, with care taken to preserve nuance across the pieces.
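One common way to handle documents larger than a model’s context window is to split them into overlapping chunks, summarize each chunk, and then summarize the combined partial summaries. The sketch below assumes a word count as a rough proxy for the real token budget and takes any `summarize` callable you supply; both function names are hypothetical.

```python
from typing import Callable


def chunk_words(text: str, max_words: int = 200, overlap: int = 20) -> list[str]:
    """Split text into overlapping word windows of at most max_words words."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


def summarize_long(text: str, summarize: Callable[[str], str],
                   max_words: int = 200, overlap: int = 20) -> str:
    """Two-pass summarization: per-chunk summaries, then a summary of those."""
    chunks = chunk_words(text, max_words, overlap)
    if len(chunks) <= 1:
        return summarize(text)
    partials = [summarize(chunk) for chunk in chunks]
    return summarize(" ".join(partials))
```

The overlap keeps a sentence that straddles a chunk boundary visible in both neighbours. For very long inputs the joined partial summaries can themselves exceed the budget, in which case the second pass would need to be repeated.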

The real science behind ‘accuracy’ and ‘speed’ claims

Vendors love to tout their “accuracy,” but what does it mean? Industry standards include ROUGE (Recall-Oriented Understudy for Gisting Evaluation), BLEU (Bilingual Evaluation Understudy), and newer semantic metrics, each capturing different facets of summary quality.

| Metric | What it Measures | Strengths | Weaknesses |
|---|---|---|---|
| ROUGE | Overlap with reference summary | Widely used, fast | Ignores paraphrasing nuance |
| BLEU | N-gram precision | Common in translation | Less suited for summaries |
| BERTScore | Semantic similarity | Captures meaning, context | Computationally intensive |

Table 3: Major accuracy metrics in document summarization

Source: Original analysis based on ClickUp, 2024, Friday.app, 2025

Common benchmarks often reflect ideal scenarios, not the messy reality of business documents. Marketing claims can cherry-pick results—always demand independent validation.
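To see what an overlap metric actually counts, here is a simplified ROUGE-1 calculation in Python. It is a sketch only: reference implementations add options such as stemming, and the `rouge_1` name and its regex tokenizer are assumptions made for this example.

```python
import re
from collections import Counter


def rouge_1(candidate: str, reference: str) -> dict[str, float]:
    """Unigram-overlap recall, precision and F1 between two summaries."""
    cand = Counter(re.findall(r"\w+", candidate.lower()))
    ref = Counter(re.findall(r"\w+", reference.lower()))

    # Clipped matches: a word counts at most as often as it appears in both.
    overlap = sum((cand & ref).values())

    recall = overlap / sum(ref.values()) if ref else 0.0
    precision = overlap / sum(cand.values()) if cand else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"recall": recall, "precision": precision, "f1": f1}
```

Scoring “the contract limits liability to one million dollars” against the reference “the contract caps liability at one million dollars” matches six of eight words, so recall and precision are both 0.75 even though the two sentences mean the same thing. That is the paraphrasing blind spot listed in Table 3.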

What could possibly go wrong? Failure modes and hallucinations

Even the best systems falter. Large Language Models (LLMs) can hallucinate facts, misinterpret ambiguous phrasing, or miss critical context. According to field reports, failures typically stem from inadequate prompt design, low-quality source input, or exceeding model context limits.

Common pitfalls and how to avoid them:

- Summarizing out-of-domain content with a generic model.

- Relying on summaries for legal or compliance decisions without review.

- Feeding images or scanned PDFs without proper OCR.

- Ignoring audit trails for who validated the summary.

- Overlooking updates in source material.

- Trusting confidence scores blindly.

- Using default settings for specialized domains.

- Failing to train models on company-specific terminology.

A notorious mini-case: In a legal review, an AI-generated summary omitted a critical indemnity clause, leading to a seven-figure liability. The lesson is clear:

“Trust, but verify—always.” — Priya, AI engineer (illustrative, based on Thomson Reuters, 2024)

Real-world impact: Case studies and field reports

How law, media, and medicine are rewriting the rules

Three sectors are rewriting their playbooks with AI summarization. In law, firms have slashed contract review timelines from days to mere hours; Thomson Reuters reports a 70% reduction in review time using intelligent systems. Newsrooms deploy LLMs to sift through torrents of incoming stories, surfacing breaking leads in real time (ClickUp, 2024). Meanwhile, medical teams fighting information overload now process clinical studies and patient records far faster, reducing administrative load by up to 50%.

From disaster to delight: When document summarization saved the day

Imagine a boardroom showdown: a last-minute request for a compliance audit lands on your desk. With mere hours before the meeting, you deploy AI-powered summarization. Within minutes, the system pinpoints a critical risk clause that would have been buried in 300+ pages. The result? The company sidesteps a potential regulatory fine and earns stakeholder trust.

Variations abound: researchers synthesize literature reviews overnight, crisis managers triage incident reports in minutes, and executives pivot strategy based on real-time insight.

Six lessons from real-world deployments:

- Always validate AI summaries against raw data.

- Customize workflows to fit domain-specific needs.

- Combine extractive and abstractive methods for best coverage.

- Maintain audit logs for compliance.

- Train staff to interpret, not just accept, automated results.

- Build redundancy—multiple models or manual review for critical cases.

The catastrophic flipside: Summaries that failed (and why)

But victory can quickly turn to defeat. Real and hypothetical disasters share common roots: low-quality inputs, ambiguous prompts, and out-of-context summaries. For example, a global pharma company trusted an unverified AI summary for a regulatory submission—missing a key adverse event report. The fallout: public recall, reputational damage, and legal exposure.

| Failure Type | Consequence | Root Cause |
|---|---|---|
| Omitted clause | Litigation, penalties | Limited context window |
| Hallucinated fact | Misinformation | Poor prompt design |
| Incomplete summary | Missed opportunity | Generic model, lack of fine-tuning |
| Misinterpreted tone | Damaged relationship | No human oversight |

Table 4: Document summarization failure types and consequences

Source: Original analysis based on Thomson Reuters, 2024, industry field reports

Failures are rarely purely technical; they’re almost always process-driven and preventable.

Choosing your weapon: Evaluating document summarization tools

The 2025 landscape: What’s hot, what’s hype

The market for document summarization is a battlefield of innovation and marketing noise. Players range from open-source toolkits to enterprise-grade SaaS platforms, each promising unique features. According to Friday.app (2025), best-in-class tools support 50+ languages, integrate with workflow apps, and offer customizable summaries (from detailed to “just the headlines”).

| Tool Name | Format Support | Customization | Workflow Integration | Pricing |
|---|---|---|---|---|
| TextWall.ai | Text, PDF, images | Advanced | Full API, plug-ins | Tiered, free trial |
| Competitor A | Text | Basic | Minimal | Subscription |
| Competitor B | Text, PDF | Limited | Basic | Per document |
| Open-source X | Text | None | None | Free |

Table 5: Market snapshot of leading document summarization tools

Source: Original analysis based on Friday.app, 2025, ClickUp, 2024

Trends to watch: a surge in vertical solutions tailored to legal, healthcare, or research; the rise of open APIs for custom deployments; heated debates over proprietary vs. open-source transparency.

What really matters: Criteria that separate winners from wannabes

Choosing a tool isn’t about chasing features—it’s about fit. Must-haves include real-time processing, support for multiple formats, robust customization, seamless integration, and airtight security.

Critical questions before choosing a document summarization tool:

- Does it support the formats (PDF, text, images) you actually use?

- Can it handle your industry’s jargon and sensitive documents?

- Is the customization granular enough for your needs?

- Does it integrate with your workflow (APIs, plug-ins)?

- Are there transparent audit logs and user controls?

- How is data privacy managed and where is data stored?

- What’s the track record for support and updates?

Balancing speed, security, and accuracy is non-negotiable. In the crowded field, textwall.ai stands out as a trusted resource for advanced document analysis—offering not only speed but customization and compliance expertise.

DIY, outsource, or AI-as-a-service?

Not all paths lead to the same destination. Building in-house demands technical depth and ongoing maintenance. Outsourcing can offer expertise but may compromise on speed or confidentiality. AI-as-a-service platforms strike a balance between power and ease of use.

Five-step checklist for evaluating organizational fit:

- Assess document volume and complexity.

- Map existing workflows and integration points.

- Evaluate in-house expertise and resources.

- Identify compliance and confidentiality requirements.

- Calculate total cost of ownership (software, staff, risk).

Costs, flexibility, and risks vary. For small teams, plug-and-play solutions like textwall.ai deliver immediate impact. For enterprises, hybrid or custom deployments may be justified. The key: start lean, iterate fast, and demand transparency.

Mastering the workflow: Integrating summarization into your daily grind

How to build a bulletproof document analysis process

Document summarization is only as effective as the workflow it supports. Systematic, repeatable processes are essential for delivering consistent results.

Priority checklist for integration:

- Map your end-to-end document flow.

- Identify high-volume, high-impact touchpoints.

- Select tools that fit your document types and security needs.

- Designate human validators for critical steps.

- Build audit trails for regulatory compliance.

- Automate low-risk, high-volume summarization.

- Establish feedback loops for continuous improvement.

Common mistakes? Relying on “out-of-the-box” settings for specialized domains, underestimating the need for training, or skipping human validation. Avoid these, and you’ll turn chaos into clarity.
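Two items on the checklist, human validators and audit trails, lend themselves to a short sketch. The Python below routes documents by type and builds an audit record that refuses high-risk summaries without a named validator. The document-type labels, the `AuditRecord` fields, and the function names are all hypothetical; a real deployment would define them from its own compliance requirements.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical labels for document types that always need a human check.
HIGH_RISK_TYPES = {"contract", "regulatory_filing", "patient_record"}


@dataclass
class AuditRecord:
    document_sha256: str      # fingerprint of the exact source text
    summary_sha256: str       # fingerprint of the exact summary text
    doc_type: str
    route: str                # "auto" or "human_review"
    validator: Optional[str]  # who signed off, if anyone
    timestamp: str            # UTC, ISO 8601


def route(doc_type: str) -> str:
    """Send high-risk document types to a human, everything else to automation."""
    return "human_review" if doc_type in HIGH_RISK_TYPES else "auto"


def sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_audit_record(document: str, summary: str, doc_type: str,
                      validator: Optional[str] = None) -> AuditRecord:
    """Build an audit entry; high-risk summaries must name a validator."""
    decided = route(doc_type)
    if decided == "human_review" and validator is None:
        raise ValueError("high-risk summaries need a named validator")
    return AuditRecord(
        document_sha256=sha256(document),
        summary_sha256=sha256(summary),
        doc_type=doc_type,
        route=decided,
        validator=validator,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
```

Storing hashes rather than the text itself lets an auditor confirm which version of a document produced which summary without keeping a second copy of sensitive content in the log.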

Human + machine: Getting the best of both worlds

The future isn’t AI vs. humans—it’s AI with humans. Hybrid workflows leverage machine speed and human discernment. The trick is knowing when to automate and when to tap the brakes for a human check.

Practical tips include:

- Assign validators for high-stakes summaries.

- Use AI for triage, but mandate manual review for compliance documents.

- Standardize feedback channels to flag recurring errors.

“Machines are fast. People are wise.” — Sam, workflow designer (quote based on industry best practices)

There’s no one-size-fits-all approach. Context—domain, document type, risk profile—dictates how the pieces fit.

Metrics that matter: How to measure success

You can’t improve what you don’t measure. Key performance indicators (KPIs) for document summarization include speed, accuracy, readability, and error rates.

| Metric | How to Calculate | What it Means |
|---|---|---|
| Average summary time | Total time / # of summaries | Workflow efficiency |
| ROUGE/BERTScore | Automated metric vs. reference | Accuracy, context retention |
| Error rate | # of errors / # of summaries | Reliability |
| User satisfaction | Surveys, feedback loop | Usability |
| Cost per summary | Total cost / # of summaries | ROI |

Table 6: Key metrics for measuring document summarization success

Source: Original analysis based on Metapress, 2025, Friday.app, 2025

Continuous improvement requires regular review. Build reporting dashboards to surface trends, bottlenecks, and areas for refinement.
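The three ratio-based rows of Table 6 can be computed from a simple job log. This Python sketch assumes a hypothetical `SummaryJob` record with time, error count, and cost per summary; the field names are illustrative rather than taken from any tool.

```python
from dataclasses import dataclass


@dataclass
class SummaryJob:
    seconds: float  # wall-clock time to produce the summary
    errors: int     # errors found when the summary was reviewed
    cost: float     # cost attributed to this summary


def kpis(jobs: list[SummaryJob]) -> dict[str, float]:
    """Average summary time, error rate and cost per summary, as in Table 6."""
    n = len(jobs)
    if n == 0:
        raise ValueError("need at least one completed summary")
    return {
        "avg_summary_seconds": sum(j.seconds for j in jobs) / n,
        "error_rate": sum(j.errors for j in jobs) / n,
        "cost_per_summary": sum(j.cost for j in jobs) / n,
    }
```

Note that the error rate here follows the table’s definition (errors per summary), so it can exceed 1.0 when summaries contain several errors each.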

The ethical edge: Who controls the narrative?

The invisible hand shaping your summaries

AI-powered summary tools don’t just reflect reality—they shape it. Bias and subjectivity can seep in through training data, prompt design, or hidden algorithms. Who decides what’s “important”? Often, it’s the machine—or the engineers behind it. Transparency and auditability are essential best practices. Users must have the means to inspect, challenge, and correct outputs.

Privacy, security, and the data wild west

Uploading sensitive reports to cloud summarizers can open Pandora’s box of privacy risks. Data leaks, unauthorized access, or unclear retention policies are real threats.

Seven ways to protect sensitive information:

- Use end-to-end encrypted solutions.

- Clarify data retention and deletion policies.

- Limit document uploads to non-confidential data.

- Choose vendors with robust compliance certifications.

- Audit access logs regularly.

- Train users on secure document handling.

- Mandate periodic security reviews.
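One practical way to limit what leaves your environment is to mask obvious identifiers before a document is uploaded. The Python sketch below uses three illustrative regular expressions for emails, US-style Social Security numbers, and US-style phone numbers. These patterns are assumptions chosen for the example and will miss many real identifiers, so treat this as a first filter rather than a compliance control.

```python
import re

# Illustrative patterns only; real redaction needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

Running `redact` on a record before it is sent to a third-party summarizer keeps the structure of the text intact while removing the matched values.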

With regulatory norms tightening, user diligence is more critical than ever. A high-profile example: in 2023, a healthcare provider faced a privacy breach after uploading unredacted records to a third-party summarizer, resulting in regulatory fines and damaged trust.

Can you trust your summary? Verifying and validating outputs

Never accept automated summaries at face value. Verification is your frontline defense.

Six-step guide to validating automated summaries:

- Compare summary to source document for fidelity.

- Check for omitted, altered, or hallucinated information.

- Engage domain experts to review critical outputs.

- Use automated tools for cross-validation.

- Maintain version-controlled records.

- Solicit user feedback for continuous QC.

Catching errors before they propagate isn’t optional—it’s mission critical. Vigilance is the unspoken price of speed.
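Part of the second step, checking for altered or hallucinated information, can be automated cheaply. The Python sketch below flags any number that appears in a summary but not in its source. The `unsupported_numbers` name and the regex are assumptions for illustration, and the check is deliberately narrow: it compares digit strings literally, so “70%” in a summary will be flagged if the source wrote “70 percent”.

```python
import re

# Digits with optional thousands/decimal separators and an optional percent sign.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def unsupported_numbers(source: str, summary: str) -> list[str]:
    """Return numbers found in the summary that never appear in the source."""
    source_numbers = set(NUMBER.findall(source))
    return [n for n in NUMBER.findall(summary) if n not in source_numbers]
```

An empty result does not prove the summary is faithful; it only means no figure was invented or changed. Anything the check flags should go to a human reviewer along with the source passage.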

Future shock: Where document summarization goes from here

Emerging trends: What’s next for speed and accuracy

The field is in constant flux. Multimodal summarization is on the rise, with tools processing not just text, but images, audio, and video transcripts. Human-AI collaboration is deepening, empowering teams to blend intuition with computational scale.

Will instant summarization reshape how we think?

Instant summarization changes more than workflows. Knowledge work, education, and even governance are reorganizing around the expectation of instant synthesis. Taylor, a futurist, puts it bluntly:

“Summarization isn’t just a tool—it’s a mindset shift.” — Taylor, futurist (quote reflecting field trends)

Still, the risks of overdependence are real. When nuance is lost in the chase for brevity, critical detail may fall through the cracks.

What to watch for: Pitfalls, promises, and the next big debates

Controversy is part of the territory. Unresolved challenges include:

- Ensuring unbiased, context-aware outputs.

- Addressing explainability for complex models.

- Maintaining privacy in decentralized workflows.

- Balancing speed with legal/compliance needs.

- Preventing summary “echo chambers.”

- Adapting KPIs for next-gen tools.

- Integrating multimodal content seamlessly.

- Navigating shifting regulatory landscapes.

With new regulations and technical breakthroughs on the horizon, staying critical isn’t just wise—it’s necessary.

Your ultimate guide: Actionable steps to own document summarization

Quickstart: How to get results in 30 minutes or less

You don’t need a PhD or a six-figure budget to start. Here’s a rapid-start workflow:

- Identify your pain point: Is it contract review, research overload, or team reporting?

- Choose a trusted tool (e.g., textwall.ai) with proven results.

- Upload your key documents.

- Select your summary preferences (detailed, bullet, or custom).

- Review AI-generated summaries, cross-check with originals.

- Share insights with the team or integrate into your workflow.

Within half an hour, you’ll move from chaos to clarity—no coding required.

Checklist: Are you ready for next-level document analysis?

Assess your readiness for high-impact summarization:

- Do you process more documents than you can handle manually?

- Are key decisions delayed by information bottlenecks?

- Do you have secure, compliant document workflows?

- Is your team trained to validate AI outputs?

- Are you using customizable tools, not just generic solutions?

- Is feedback from users regularly integrated?

- Do you track KPIs for summarization effectiveness?

- Are privacy and security policies enforced?

- Do you conduct regular audits of your summarization tools?

- Is management bought in and supportive?

Weak spots? Target them with focused improvements—small steps, big impact.

Ongoing adaptation is the name of the game. Technologies, threats, and opportunities evolve; so must your approach.

Key takeaways: What you should remember (and what to forget)

This isn’t about the hype—it’s about results. The dominant themes:

- Document summarization for faster analysis is a non-negotiable survival skill.

- Speed and accuracy must be balanced by vigilant oversight.

- The best tools are those that fit your unique workflow and compliance needs.

- Human expertise is irreplaceable—AI is an accelerator, not a replacement.

- Security, privacy, and ethics are foundational, not afterthoughts.

Unconventional uses for fast document summarization:

- Real-time meeting note generation for remote teams.

- Rapid synthesis of customer feedback for product teams.

- Triage of academic literature for researchers.

- Accelerated onboarding for new hires.

- Crisis communication documentation in emergencies.

- Automated competitive intelligence scanning.

- Streamlining due diligence in M&A workflows.

Forget the idea that faster always means better, or that technology can eliminate human judgment. Remember that mastery comes from pairing the right tools with the right checks—and from never, ever giving up on critical thinking. In a world obsessed with speed, the real edge belongs to those who think twice and act once.

Appendix & resource vault

Technical glossary: Demystifying the jargon

Clarity is power. Here are the key terms you’ll encounter:

- Summarization: Condensing information to its essentials; can be extractive or abstractive.

- Large language models (LLMs): AI models trained on vast language datasets, capable of nuanced text generation.

- Extractive summarization: Compiling summaries by selecting key sentences from the original text.

- Abstractive summarization: Creating summaries by paraphrasing the core ideas in new language.

- Tokenization: Dividing text into manageable units (tokens) for analysis.

- Context window: The chunk of text an AI model considers at one time.

- Evaluation metrics (such as ROUGE and BLEU): Statistical measures for evaluating the quality of a summary.

- Redundancy: The repetition of information; minimized in high-quality summaries.

These definitions thread through every section of this article, offering context for both technical and practical discussions.

Further reading and references

Knowledge is a moving target. For those hungry to dig deeper:

- Friday.app: Best AI Document Summarizers for 2025

- Metapress: Top 5 Text Summarization Tools of 2025

- ClickUp Blog: Best AI Document Summarizers

- V7 Labs: Intelligent Document Processing Market Report 2024

- Thomson Reuters: AI in Contract Review

- SignHouse: Document Management Statistics 2024

- Harvard Business Review: The Real Risks of AI Summarization (verify link before use)

- Stanford AI Index 2024: Trends in NLP (verify link before use)

- OpenAI: Technical Reports on LLM Summarization (verify link before use)

Vet your sources—credibility beats convenience. The field evolves fast; stay alert, stay critical, and keep questioning everything.

Sources

References cited in this article

- Friday.app: Best AI Document Summarizers for 2025 (friday.app)

- Metapress: Top 5 Text Summarization Tools of 2025 (metapress.com)

- ClickUp Blog: Best AI Document Summarizers (clickup.com)

- V7 Labs: IDP Market Growth (v7labs.com)

- SignHouse: Industry Statistics (usesignhouse.com)

- Thomson Reuters: AI in Document Analysis (thomsonreuters.com)

- HuggingFace: Accelerating Document AI (huggingface.co)

- Docsumo: AI Document Processing Trends (docsumo.com)

- OpenPR: Market Growth (openpr.com)

- OSTI.gov: Advances in Document Summarization 2023-2024 (osti.gov)

- IBM: What is Text Summarization? (ibm.com)

- TechFocusPro: Manual vs. AI Summarization (techfocuspro.com)

- Gomega.ai: Text Summarization Techniques (gomega.ai)

- DocumentLLM: AI Summarizers Guide 2024 (documentllm.com)

- Convozen.ai: Automatic Summarization (convozen.ai)

- Medium: Generative Summarization Techniques (medium.com)

- Springer: Evaluation Metrics Survey (link.springer.com)

- Lexology: AI Risks 2024 (lexology.com)

- Wikipedia: Hallucination (AI) (en.wikipedia.org)

- PapersWithCode: Multi-Document Summarization (paperswithcode.com)

- Enago Academy: AI Summarizer Comparison (enago.com)

- Sembly AI: Best Summarizers 2025 (sembly.ai)

- Adlib: Big 8 Trends 2025 (adlibsoftware.com)

- ShareFile: AI Document Summarization Workflow (sharefile.com)

- ClickUp: Document Management Workflow (clickup.com)

- arXiv: Legal Summarization Survey (arxiv.org)

- arXiv: Survey of Text Summarization (arxiv.org)

- Nature Medicine: LLMs in Clinical Summarization (nature.com)
