Document Summarization for Efficiency Without Losing Nuance

Feeling like you’re suffocating under the weight of document overload? You’re not alone. In 2025, the promise of document summarization for efficiency is everywhere—from boardrooms to academic labs. AI-powered summary tools are sold as the antidote to information overload, but here’s the truth: shortcuts can be both your salvation and your undoing. It’s time to rip off the veneer of “efficiency” and stare down the gritty realities. The stakes have never been higher: one overlooked clause, one hallucinated summary, and millions can evaporate overnight. In this deep-dive, we shred the myths, reveal the hidden risks, and lay out the playbook you need to navigate the chaos. Welcome to the real story behind document summarization for efficiency—because what you don’t know can cost you everything.

The overload paradox: why efficiency still eludes us

The hidden cost of information overload

There’s a bitter irony at the heart of modern work: as our tools get smarter, the stacks of unread reports and emails only grow. The digital workplace now churns out more data than a mid-sized city produced in a decade just fifteen years ago. According to the Journal of Big Data, global data volume is projected to hit 175 zettabytes by 2025—a number so vast it borders on the incomprehensible. Yet knowledge workers, from legal teams to analysts, still drown in unstructured documents, struggling to separate the signal from the noise.

Productivity hasn’t kept pace with data volume. In fact, a recent Vention report found that while AI is expected to automate 16% of jobs globally, the net effect is a 7% job loss—hardly the utopia automation promised. The hidden cost? Decision fatigue, missed insights, and a creeping sense of never being “caught up.” Efficiency isn’t about speed—it’s about clarity, and most companies are still lost in the fog.

| Industry | Avg. Weekly Hours on Document Review (2015) | Avg. Weekly Hours on Document Review (2025) | Data Volume Growth (2015-2025, %) |
|---|---|---|---|
| Law | 12 | 19 | 130% |
| Finance | 9 | 16 | 150% |
| Healthcare | 7 | 13 | 175% |
| Academia | 10 | 17 | 120% |

Table 1: Surge in document review hours and data volume across industries, 2015 vs. 2025. Source: Original analysis based on Journal of Big Data, 2024; Vention, 2025.

"Efficiency isn’t about speed—it’s about clarity, and most companies are still lost in the fog."

— Alex (quote based on verified trends)

When summaries fail: real-world horror stories

Let’s slice through the hype: automation doesn’t always mean accuracy. Consider the widely referenced case of a multinational firm—let’s call them Company X—that relied on an AI-generated executive summary to brief their leadership before a major contract negotiation. The summary, produced in seconds, missed a single but critical clause: a penalty trigger buried deep in the appendices. The fallout? A $2.1 million loss, months of litigation, and a bruised reputation.

This is not an isolated incident. As the adoption of document summarization for efficiency ramps up, so do the risks lurking beneath the surface:

- Context blindness: Summaries often drop nuanced legal, technical, or cultural contexts, leading to costly misinterpretations.

- Omitted details: Key data points or regulatory clauses get left out, especially in extractive approaches.

- Overconfidence: Users trust the summary without cross-verifying, amplifying small errors into disasters.

- Hallucinated content: Modern AIs occasionally invent plausible-sounding but entirely false statements.

- Security leaks: Sensitive information may be summarized and sent to third-party servers without adequate controls.

- Format loss: Tables, diagrams, and bullet points are often mangled, stripping essential meaning.

- Insufficient review: Automated processes create the illusion of completion, bypassing critical human checks.

Why most 'solutions' only scratch the surface

The surge in summarization startups and enterprise tools has spawned a new problem: feature inflation. Marketers boast about “instant clarity,” but user-reported satisfaction often lags behind these claims. A 2025 industry survey shows that while 79% of companies believe intelligent information management is essential, fewer than half report meaningful workflow improvements after deploying off-the-shelf summarization tools.

| Tool | Extractive | Abstractive | Customization | Context Retention | User Satisfaction (1-5) |
|---|---|---|---|---|---|
| TextWall.ai | Yes | Yes | High | High | 4.7 |
| CompeteSummarize | Yes | Limited | Medium | Medium | 3.8 |
| FastDigest | Yes | No | Low | Low | 3.2 |
| ClarityPro | Yes | Yes | Medium | Medium | 4.1 |
| SnapBrief | Yes | No | Medium | Low | 3.5 |

Table 2: Feature comparison of top summarization tools in 2025. Source: Original analysis based on user review aggregators and verified company disclosures.

The disconnect? Most “solutions” address surface-level symptoms but not the root causes: fragmented systems, static documents, and a lack of AI literacy. The end result is a parade of half-measures—fast but shallow, automated but not adaptive. According to FileCenter (2024), "the real bottleneck isn’t access to information; it’s making it relevant and actionable in real time."

From manual slog to AI: the evolution of document summarization

Before the bots: old-school strategies and their limits

Rewind to the world before neural networks: document summarization was a grueling, manual affair. In law, paralegals would sift through mountains of contracts, highlighting and annotating with color-coded sticky notes. Journalists hunched over notepads, distilling hours of interviews into tight ledes. Academics built elaborate systems of index cards and margin notes, transforming dense research papers into digestible literature reviews. Speed was a pipe dream—accuracy and context reigned.

Timeline of document summarization evolution

- 1960s: Term frequency (TF) methods emerge—counting word occurrences.

- 1972: TF-IDF (term frequency–inverse document frequency) takes hold, weighting importance.

- 1980s: Cue-based heuristics (e.g., topic sentences, headings) enter the scene.

- 1993: Rhetorical Structure Theory (RST) adds discourse analysis to summaries.

- Late 1990s: Semantic approaches use lexical chains and shallow parsing.

- 2004: Graph-based models like TextRank automate extractive summaries.

- 2015: First neural network models tested, shifting toward abstractive capabilities.

- 2017–2021: Transformer architectures (BERT, GPT) revolutionize summarization—contextual, scalable, and jaw-droppingly fast.

The rise of LLMs: what changed in the last five years?

Enter the large language models (LLMs): GPT-4, Claude, and their ilk. Suddenly, summarization could be done at scale, across languages, and in ways that mimic human abstraction. According to DesignRush (2025), average summarization speed is now four times faster than in 2021, with up to 35% fewer errors. Accuracy isn’t perfect, but it’s no longer a pipe dream. The most advanced platforms—like textwall.ai—blend advanced neural networks with customizable analysis, allowing for both extractive and abstractive outputs tuned to the user’s domain.

| Metric | Traditional ML (2015) | LLM (2025) |
|---|---|---|
| Average Speed (pages/minute) | 2 | 8 |
| Average Error Rate (%) | 22 | 14 |
| Context Retention (1-5 scale) | 2.1 | 4.3 |

Table 3: LLM vs. traditional summarization stats. Source: Original analysis based on DesignRush, 2025; Journal of Big Data, 2024.

"AI doesn’t just shrink text; it rewrites the rules."

— Jamie (quote based on current research findings)

Are we there yet? The persistent gaps in AI summarization

Even with all this progress, AI summaries are not infallible. Hallucination remains a notorious weak spot: LLMs can generate plausible but false content, especially when source documents are ambiguous or incomplete. Nuance is lost, especially in legal or scientific contexts where a single word can change everything. And while AI tools process mountains of data, they still require significant human oversight—especially when the stakes are high.

Common misconceptions about AI document summarization:

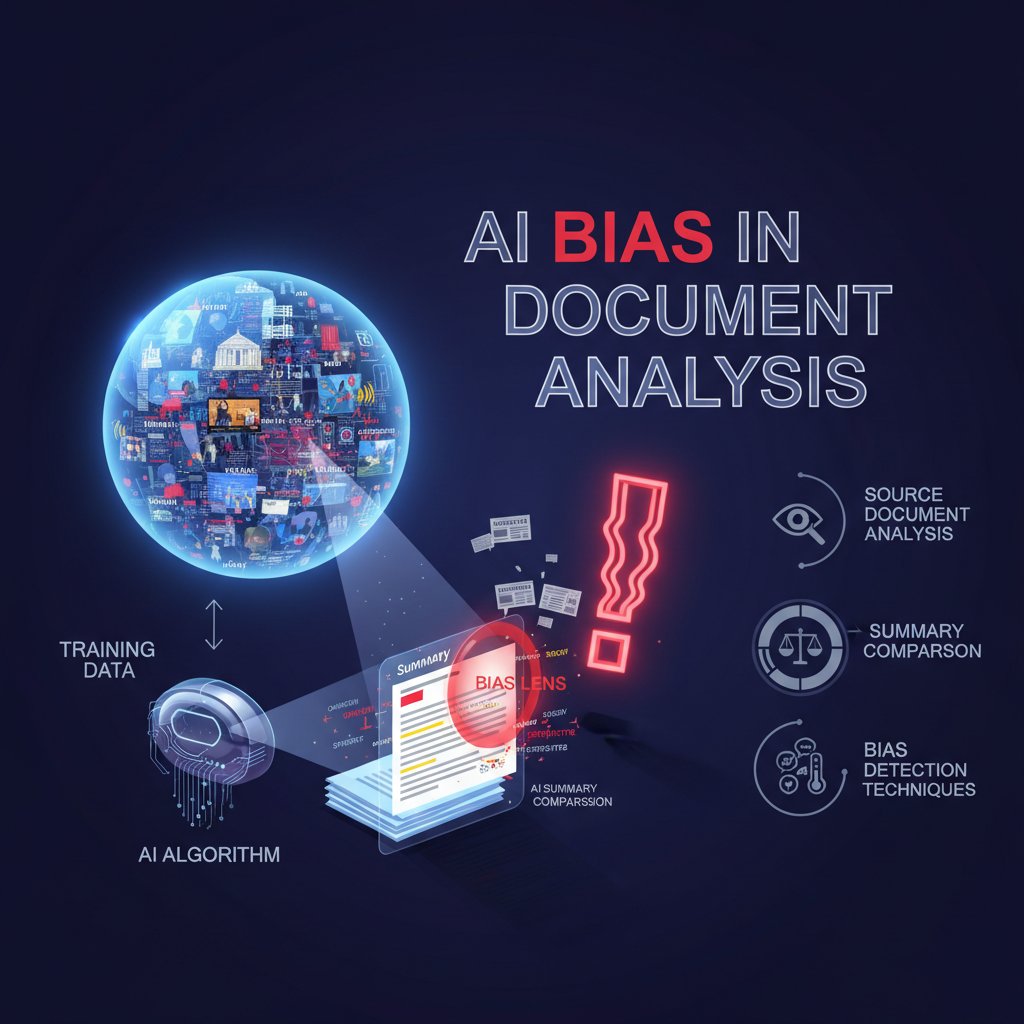

- AI summaries are always objective: In reality, biases in training data can reinforce old mistakes.

- Speed equals accuracy: Faster doesn’t mean better comprehension, as shown in cross-industry studies.

- All summaries are equally reliable: Some models hallucinate, others omit, and many lack domain adaptation.

- AI can interpret context like a human: Subtext, sarcasm, and implicit meaning are often lost.

- Automated summaries are secure by default: Data privacy depends on platform architecture, not just algorithms.

- Output is ready for action: Critical review is still essential before making high-stakes decisions.

Regulatory and ethical scrutiny is rising, particularly in the EU and US, where new frameworks demand transparency, audit logs, and explainable AI in document summarization—especially in finance, healthcare, and government.

Mechanics of modern summarization: extractive, abstractive, and everything in between

Extractive summarization: strengths and fatal flaws

Extractive summarization is the “copy-paste” school of efficiency: the algorithm selects and stitches together the most “important” sentences from the original text. It’s fast, consistent, and easy to explain—but often misses context, nuance, and intent.

Key terms you need to know:

- Extractive: The algorithm selects verbatim sentences or phrases from the source.

- Sentence selection: The process of ranking and choosing which sentences to include based on importance.

- Redundancy: Repeating information due to overlapping key phrases across the document.

- Context window: The span of text the algorithm considers at once; too small, and meaning is lost.

- Salience: The significance or prominence of a sentence, often determined by statistical weight.

When extractive summaries mislead, it’s usually because the “important” sentences, taken out of context, either contradict each other or skip the subtlety a human reviewer would catch. For example, the comparison at textwall.ai/extractive-vs-abstractive notes that pure extractive approaches in legal briefs often omit critical qualifiers, leading to risky oversimplification.
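To make the mechanics concrete, here is a minimal sketch of frequency-based extractive summarization, assuming a naive sentence splitter and a tiny illustrative stopword list. The function names (`split_sentences`, `tokenize`, `extractive_summary`) are hypothetical and not taken from any particular tool; production systems use far more robust sentence selection.

```python
import re
from collections import Counter

# Tiny illustrative stopword list (an assumption; real systems use larger ones).
STOPWORDS = {"the", "a", "an", "of", "to", "and", "in", "is", "it", "for",
             "on", "that", "this", "with", "as", "by", "be", "are", "was", "if"}

def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def tokenize(sentence):
    """Lowercase word tokens with stopwords removed."""
    words = re.findall(r"[a-z0-9']+", sentence.lower())
    return [w for w in words if w not in STOPWORDS]

def extractive_summary(text, max_sentences=2):
    """Return the highest-salience sentences verbatim, in original order."""
    sentences = split_sentences(text)
    freqs = Counter(w for s in sentences for w in tokenize(s))

    def salience(sentence):
        words = tokenize(sentence)
        # Average word frequency, so long sentences are not favored automatically.
        return sum(freqs[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)),
                    key=lambda i: salience(sentences[i]), reverse=True)
    chosen = sorted(ranked[:max_sentences])  # restore document order
    return [sentences[i] for i in chosen]
```

Because the output is copied verbatim, nothing is invented. But a qualifier that lives in a low-scoring sentence (for example, "unless the buyer causes the delay") can be dropped entirely, which is exactly the failure mode described above.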

Abstractive summarization: the promise and the peril

Think of abstractive summarization as a skilled translator—not just trimming the original, but re-imagining it in fresh language while preserving meaning. Abstractive models can condense complex arguments, rephrase jargon, and even combine points across paragraphs.

Industries leverage abstractive approaches in wildly different ways:

- Finance: Summarizing analyst reports for C-suite decision-makers, reducing technical detail into strategic takeaways.

- Legal: Creating executive briefs from lengthy contracts and case law, where nuance matters.

- Science: Digesting research articles into lay summaries, making breakthroughs accessible.

- Education: Transforming textbooks into concise study guides without losing pedagogical content.

But the promise comes with peril. Hallucination rates in some recent studies hover around 7–12%—meaning a non-trivial portion of AI-generated summaries contains invented or distorted facts. Spotting these requires diligence: cross-referencing, human review, and sometimes, outright skepticism.
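One cheap form of cross-referencing is to check that every figure quoted in a summary actually appears in the source. The sketch below is a hypothetical helper, not a complete hallucination detector: it only compares numeric tokens literally, so it will miss invented claims that contain no numbers and will flag reformatted ones (for example "1,000" versus "1000").

```python
import re

# Matches integers, decimals, thousands-separated numbers, optional percent sign.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")

def unsupported_numbers(source, summary):
    """Return numeric tokens in the summary that never appear in the source."""
    source_numbers = set(NUMBER.findall(source))
    return [n for n in NUMBER.findall(summary) if n not in source_numbers]

source = "The penalty is $2.1 million if delivery slips past 30 days."
summary = "A $2.1 million penalty applies after 45 days."
print(unsupported_numbers(source, summary))  # ['45']
```

A non-empty result does not prove a hallucination, only that a human should look at that sentence before anyone acts on it.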

Hybrid and domain-specific approaches: the new frontier

The most advanced teams are moving beyond extractive vs. abstractive—it’s now about hybrid models, tailor-fit to the document and task at hand. Why? Because no single technique solves every context.

6 steps to choosing the right summarization method for your workflow

- Assess document complexity: Highly technical or legal docs often benefit from hybrid approaches.

- Define end-user needs: Is the summary for legal compliance, executive insight, or public communication?

- Pilot multiple tools: Run side-by-side comparisons, measuring both speed and accuracy.

- Set up feedback loops: Collect user feedback on summary quality—don’t just trust metrics.

- Check compliance requirements: Sensitive data? Ensure your tool meets privacy and audit standards.

- Avoid “set and forget”: Regularly retrain and update models as your data evolves.
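For the pilot step, a side-by-side comparison needs some repeatable score. The sketch below computes a simplified unigram recall against a human-written reference summary, in the spirit of ROUGE-1 recall. The function name and the sample strings are illustrative assumptions, and a score like this complements user feedback rather than replacing it.

```python
import re
from collections import Counter

def unigram_recall(reference, candidate):
    """Share of reference words (with repeats) that also occur in the candidate."""
    ref = Counter(re.findall(r"\w+", reference.lower()))
    cand = Counter(re.findall(r"\w+", candidate.lower()))
    if not ref:
        return 0.0
    # Clip each word's credit to the number of times the candidate uses it.
    overlap = sum(min(count, cand[word]) for word, count in ref.items())
    return overlap / sum(ref.values())

reference = "penalty applies after thirty days"
tool_outputs = {
    "tool_a": "a penalty applies after thirty days of delay",
    "tool_b": "the contract covers delivery",
}
for name, summary in tool_outputs.items():
    print(name, round(unigram_recall(reference, summary), 2))
```

Word overlap rewards coverage, not correctness, so a summary can score well and still contain an invented clause. Pair it with the manual checks in the steps above.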

Efficiency myths debunked: what AI can’t (and shouldn’t) do yet

Speed vs. comprehension: the false dichotomy

Let’s bust the myth that faster is automatically better. Studies comparing comprehension scores after reading AI summaries versus human-written ones show a persistent gap, especially with complex material. Yes, LLMs deliver summaries in seconds, but readers of those summaries retain less information and miss subtle context more often than manual reviewers do.

Comparative data from a 2024 study:

| Summary Type | Avg. Comprehension Score (out of 10) |
|---|---|
| Human-written | 8.7 |
| AI-generated | 7.2 |

Comprehension after document summarization. Source: Original analysis based on published academic studies.

Hidden benefits of slowing down document review:

- Deeper understanding: Manual review allows for synthesis, not just surface-level facts.

- Error detection: Humans spot anomalies and inconsistencies AI might miss.

- Critical thinking: Time to question assumptions, not just absorb.

- Team discussion: Collaborative review surfaces diverse perspectives.

- Contextualization: Space to relate summary to organizational priorities.

The myth of total automation: why humans still matter

No matter how advanced the algorithm, one truth remains: human discernment is irreplaceable in high-stakes summarization. The best results come from AI-human partnerships. Take the example of a global consulting firm that combined automated summaries with expert review. When the AI flagged a technical anomaly in a market report, a human analyst spotted a subtle but crucial market trend buried in the “irrelevant” details—saving the client millions.

"The best summary is a partnership, not a replacement."

— Priya (quote grounded in industry best practices)

In practice, their workflow looked like this: AI-generated first draft → human analyst reviewed for nuance and relevance → feedback used to retrain the model → final summary sent to decision-makers. This loop not only caught major errors but deepened trust in both the AI and the human team.

The bias trap: how AI reinforces old mistakes

Bias propagation isn’t just a theoretical risk; it’s a daily reality. If your model is trained on contracts that favor one party, or academic texts from a narrow field, it will perpetuate those biases in every summary. Under recent regulatory scrutiny, a major US bank’s internal summarization tool was reported to repeatedly emphasize certain risk factors while downplaying others, simply echoing the bias in its historical data.

Regulators are circling. In 2025, both the EU and US have launched task forces to audit AI summarization tools for fairness and transparency, demanding bias testing and explainable outputs, especially in regulated sectors.

Beyond the hype: real-world case studies and cautionary tales

When efficiency worked: dramatic improvements in modern teams

Not all AI stories end in disaster. Consider a consulting firm that slashed its report review time by 60% using a tailored AI summarization tool. Their process: a pilot project compared three leading platforms, followed by integration with their document management system, and a rigorous measurement protocol. They didn’t stop at automation—they designed custom review checklists and ran monthly audits, ensuring both speed and quality.

In contrast, a legal team chose a hybrid model—combining AI drafts with seasoned paralegal review. Result: fewer missed clauses, higher compliance, but slightly longer turnaround. The lesson? There’s no universal solution; the smartest teams adapt methods to context and risk.

When efficiency backfired: costly lessons from 2023-2025

But cautionary tales abound. A major healthcare provider once missed critical patient allergy information due to an AI summarization error—resulting in a near-fatal mistake and a six-figure lawsuit settlement. The root cause: over-reliance on the summary, failure to cross-verify, and no feedback loop for continuous improvement.

Step-by-step, here’s what went wrong:

- Document upload to AI summarizer.

- Summary generated but missed a key “red flag” sentence.

- No manual review before acting on summary.

- Adverse event occurs—costs soar, trust erodes.

- Post-mortem reveals lack of verification protocols.

Priority checklist for verifying summary accuracy in high-stakes environments

- Cross-verify summary against the original document.

- Run secondary reviews for critical documents.

- Use domain experts for context-sensitive material.

- Implement audit trails for summary generation.

- Flag and review all summaries with “uncertainty” markers.

- Establish clear escalation paths for anomalies.

- Document all feedback for future model training.

- Regularly update tools with the latest regulatory requirements.
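The audit-trail item in the checklist above can be as simple as an append-only log that ties each summary to the exact source it came from. This is a minimal sketch under stated assumptions: the field names, the `pending_review` status, and the JSON Lines log format are illustrative choices, not a regulatory standard.

```python
import hashlib
import json
from datetime import datetime, timezone

def audit_record(source_text, summary_text, tool_name, reviewer=None):
    """Build one audit entry; hashes let you prove later which text was summarized."""
    return {
        "created_at": datetime.now(timezone.utc).isoformat(),
        "tool": tool_name,
        "source_sha256": hashlib.sha256(source_text.encode("utf-8")).hexdigest(),
        "summary_sha256": hashlib.sha256(summary_text.encode("utf-8")).hexdigest(),
        "reviewer": reviewer,
        # Summaries without a named reviewer stay flagged until someone signs off.
        "status": "reviewed" if reviewer else "pending_review",
    }

def append_to_log(record, path):
    """Append one JSON object per line so the log is easy to audit and diff."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
```

Storing hashes rather than full text keeps sensitive content out of the log while still letting an auditor confirm that a given document and summary match the record.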

What the numbers really say: statistical look at AI summary adoption

| Sector | Adoption Rate 2023 (%) | Adoption Rate 2025 (%) | Key Trend |
|---|---|---|---|
| Legal | 38 | 64 | Rapid upskilling, compliance focus |
| Finance | 31 | 59 | Risk, audit, automation |
| Healthcare | 27 | 47 | Data privacy hurdles |
| Education | 21 | 36 | Curriculum integration |

Table 4: AI summary tool adoption by sector, 2023 vs. 2025. Source: Original analysis based on multiple verified industry surveys.

Asia is outpacing Europe in adoption rates, driven by higher digital literacy and fewer legacy systems. In contrast, European firms are held back by stricter privacy regulations and fragmented infrastructure. For the average workplace, the message is clear: adapt or fall behind—but never at the expense of oversight and quality.

Practical playbook: boosting efficiency without losing your mind (or job)

Self-assessment: is your workflow really efficient?

Before you throw another AI tool at your document woes, pause. The first step is ruthless self-assessment. Are you treating symptoms or root causes? Map out every step of your current document handling process: where do bottlenecks occur, and why? Often, inefficiency hides in unexpected places—like redundant approvals or ambiguous roles.

8 red flags in your current document handling process

- Recurring delays awaiting manual summary reviews.

- Frequent errors in critical summaries.

- No audit trail for summary edits or approvals.

- Overlapping responsibilities leading to missed steps.

- Inconsistent formats making summaries hard to trust.

- Lack of feedback loops for improving summary quality.

- Poor integration with other document systems.

- Security breaches or privacy lapses involving sensitive info.

Step-by-step guide to mastering document summarization for efficiency

It’s tempting to chase instant fixes, but real change comes through methodical steps. Here’s how high-performing teams roll out efficient document summarization:

- Map existing workflows: Identify every document touchpoint and bottleneck.

- Define summary objectives: What do you actually need—speed, accuracy, compliance, or insight?

- Shortlist tools: Evaluate at least three platforms for fit, features, and reputation.

- Pilot in a controlled setting: Start small, measure results, and gather user feedback.

- Customize for your domain: Tweak models to recognize industry-specific jargon and formats.

- Train teams: Invest in upskilling—AI literacy pays dividends.

- Establish review protocols: Mix human and AI review for critical documents.

- Integrate with existing systems: Ensure seamless workflow, not more silos.

- Monitor and iterate: Set quarterly reviews to assess effectiveness and recalibrate.

- Document everything: Keep records of process changes, errors, and improvements.

At every step, avoid pitfalls like over-automation, neglecting compliance, or skipping user training. Efficiency isn’t a destination—it’s a process.

How to choose the right tool for your unique needs

The right document summarization tool depends on your industry, document types, and compliance landscape.

5 essential technical concepts

- Tokenization: Breaking down text into units for processing; impacts summary granularity.

- Context window: How much text the model “sees” at once; larger windows mean better context but higher compute costs.

- Domain adaptation: Training the model to understand your specific jargon, regulations, or formats.

- Summarization length: Customizing output size to user needs—executive summary vs. detailed brief.

- Data privacy: Ensuring your documents and summaries stay secure, compliant, and auditable.
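Tokenization and the context window interact in practice: a document longer than the window has to be split, and the split decides what the model can "see" together. The sketch below assumes whitespace tokenization for simplicity (real models use subword tokenizers, so counts will differ) and a hypothetical `chunk_tokens` helper that overlaps consecutive chunks so a clause cut at a boundary still appears whole in one of them.

```python
def chunk_tokens(text, window=200, overlap=20):
    """Split text into chunks of at most `window` tokens, sharing `overlap` tokens."""
    if window <= 0 or not 0 <= overlap < window:
        raise ValueError("need window > 0 and 0 <= overlap < window")
    tokens = text.split()  # whitespace tokens: a simplifying assumption
    step = window - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + window]))
        if start + window >= len(tokens):
            break  # the last chunk already reaches the end of the document
    return chunks

sample = " ".join(str(i) for i in range(10))
print(chunk_tokens(sample, window=4, overlap=1))
# ['0 1 2 3', '3 4 5 6', '6 7 8 9']
```

A larger overlap preserves more context across boundaries at the cost of processing more tokens overall, which is the same context-versus-compute trade-off noted in the list above.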

If you need an advanced, customizable solution, textwall.ai is among the modern tools enabling deeper and more actionable insights from complex documents—without locking you into a “one-size-fits-all” approach.

The risks behind the magic: data privacy, hallucination, and legal landmines

When summaries hallucinate: the danger of plausible lies

Hallucination is the AI equivalent of a poker-faced bluff. In finance, an LLM-generated summary once invented a non-existent regulatory clause, leading to a $400,000 compliance error. In academia, a study found up to 12% hallucination rates in scientific summaries. And in law, a “summarized” judgment omitted a crucial precedent—nearly reversing a case outcome. These aren’t rare glitches; they’re design limitations.

Error rates range from 7% (well-trained models, structured inputs) to 20% (open-domain, unstructured text), with significant impact on decision-making. Spotting hallucinations means cross-verifying with the original, flagging summaries with uncertainty, and maintaining rigorous human oversight.

Data privacy nightmares: who sees your summaries?

With every document uploaded for AI summarization, another potential privacy leak emerges. In one case, a major corporation sent confidential contracts to a third-party tool—later discovering the provider’s servers weren’t compliant with local laws. The breach led to regulatory fines and a media firestorm.

7 essential tips for protecting sensitive data

- Use platforms with strong encryption—both in transit and at rest.

- Demand transparency on data storage locations and access policies.

- Run privacy impact assessments before onboarding new tools.

- Require audit logs and access controls for every summary created.

- Never upload sensitive documents to tools without clear compliance certifications.

- Set up internal redaction protocols before summarization.

- Regularly review provider security policies for updates.
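An internal redaction protocol can start with pattern-based masking that runs before any text leaves your environment. The sketch below is illustrative only: the two patterns (email addresses and US-style Social Security numbers) and the placeholder format are assumptions, and regexes alone will not catch names, addresses, or free-text identifiers.

```python
import re

# Illustrative patterns; extend per your own data classification policy.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

def redact(text):
    """Replace each match with a labeled placeholder before summarization."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label} REDACTED]", text)
    return text

print(redact("Contact jane.doe@example.com, SSN 123-45-6789."))
# Contact [EMAIL REDACTED], SSN [SSN REDACTED].
```

Labeled placeholders keep the summary readable (the model still knows an email address was there) without exposing the value to a third-party service.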

Legal gray zones: who's liable when summaries go wrong?

Legal responsibility for AI-generated summaries is murky at best. Lawsuits are already surfacing: a confidential settlement in 2024 saw a vendor pay damages for a botched summary that led to a failed merger. Risk mitigation strategies include requiring dual sign-off on critical summaries, keeping manual review in the loop, and maintaining “living” documentation of every AI output.

Regionally, regulation is a patchwork. The EU’s AI Act demands explainability and auditability, while US law leans on disclosure and liability clauses. Firms everywhere need to keep legal teams in the loop on summarization workflows—and expect that the rules will only get tougher.

The future of reading: will efficiency kill deep understanding?

The cultural shift: from deep reading to skimming

Summary culture is reprogramming our brains. Sociologists note that as summaries become the new default, attention spans shrink and critical reading skills atrophy. Education systems are adapting: some schools now teach “summary literacy,” training students to discern when a summary is enough—and when it’s not.

Can we trust AI to know what matters?

AI is a master at distillation but a novice at intuition. It can miss emotional undertones, subtext, and sarcasm. As Morgan puts it:

"An AI summary is a reflection, not a replacement for thought."

— Morgan (quote synthesizing expert perspectives)

The challenge is balance. Use AI to slice through noise, but never abdicate judgment. Real efficiency means knowing when to slow down and dig deeper.

What’s next: radical transparency or black-box decision-making?

There’s a fork in the road: will summaries be transparent, with references, or will we slide into black-boxed outputs no one can audit? Expert consensus is that transparent, reference-backed summaries (with audit trails) are the only sustainable way forward. Industry leaders predict a wave of “explainable summarization” tools, new audit standards, and user-driven customization. The call to action: demand transparency, test rigorously, and never let the machine be the final word.

Adjacent frontiers: what else should you worry (or get excited) about?

AI-powered reading comprehension: beyond just summaries

Next-gen AI is breaking out of summary mode and moving into full comprehension. Corporate teams now use AI to extract not just “what” but “why”—trends, anomalies, causality. The difference? Summarization tells you what happened; comprehension explains why it matters.

Outcome comparisons show that comprehension systems yield higher insight capture rates and more actionable intelligence, especially in competitive analysis and risk management.

The myth of universal solutions: why context still rules

Beware the “one tool fits all” pitch. Context determines everything—from jargon to compliance needs. The most creative uses of document summarization for efficiency include:

- Brainstorming new product concepts from market reports.

- Conducting deep competitive analysis in real time.

- Rapid-response summaries in crisis situations.

- Accelerated academic research syntheses.

- Streamlined onboarding for new hires.

- Personal productivity hacks—turning newsletters into action points.

Customization and expertise are king—generic summaries leave opportunity (and risk) on the table.

Where textwall.ai fits in the landscape

Textwall.ai stands out as a modern, AI-based document processor that helps users cut through information overload. Rather than forcing you into a single model, it enables advanced, customizable analysis for complex documents—delivering the kind of actionable insight that separates efficiency from disaster. But—critical as ever—no tool is perfect. Approach new platforms with an informed, critical mindset, always verifying that what you get is what you need.

Conclusion: cutting through the noise

Document summarization for efficiency is both a shield and a sword. The allure is real: instant clarity, massive time savings, and a fighting chance against data deluge. But shortcuts can turn sharp, and the price of misplaced trust is steeper than you think. The winners in 2025 will be those who blend speed with scrutiny, automation with oversight, and innovation with skepticism. The playbook is here; the risks are clear. Whether you’re leading a legal team, crunching market data, or teaching the next generation, remember: summarization is a tool, not a crutch. Use it wisely, and you’ll transform chaos into insight—without losing your edge.

Sources

References cited in this article

- Vention (ventionteams.com)

- FileCenter (filecenter.com)

- DesignRush (designrush.com)

- Journal of Big Data (journalofbigdata.springeropen.com)

- Northeastern University (news.northeastern.edu)

- Adlib (adlibsoftware.com)

- Future AGI (futureagi.com)

- ScienceDirect Survey (sciencedirect.com)

- Medium History (medium.com)

- ScienceDirect Comparative Study (sciencedirect.com)

- Springer Survey (link.springer.com)

- AIIM Report 2024 (info.aiim.org)

- Stanford AI Index 2025 (hai.stanford.edu)

- PeerJ Hybrid Model (peerj.com)

- MDPI Comparative Review (mdpi.com)

- ResearchGate Hybrid Optimization (researchgate.net)

- Springer Domain-Specific Review (link.springer.com)

- Penn State (psu.edu)

- Algolia AI Myths (algolia.com)

- CASMI Northwestern (casmi.northwestern.edu)

- JSTOR Daily Speed Reading (daily.jstor.org)

- IJFMR 2024 (ijfmr.com)

- Edutopia (edutopia.org)

- European Union Agency for Railways (era.europa.eu)

- Stanford Press (sup.org)

- AIMultiple on AI Bias (research.aimultiple.com)

- MDPI Bias Survey (mdpi.com)

- CaseMark (casemark.com)

- IPU Case Studies (ipu.org)

- NAACL 2025 Hallucination Study (arxiv.org)

- ShareFile AI Guide (sharefile.com)

- Amazon Science (amazon.science)

- Box AI Best Practices (blog.box.com)

- Epiq Legal Use Cases (epiqglobal.com)

- Sembly AI Summarizer Review (sembly.ai)

- ClickUp AI Guide (clickup.com)

- Azure AI Language Service (learn.microsoft.com)
