Document Image Analysis in 2026: Power, Pitfalls, and Payoffs

Document image analysis isn’t just another tech buzzword—it’s the backbone of digital transformation in enterprise, research, and daily business. But forget the glossy brochures for a minute. Behind every promise of “automated workflows” and “AI-powered insight” lies a reality that’s more complicated, less reliable, and far messier than most vendors will admit. In 2025, the stakes are higher than ever: organizations are betting millions on systems that touch everything from legal contracts to medical records, and slip-ups aren’t just embarrassing—they’re catastrophic. This article rips the plastic off document image analysis, exposing its brutal truths, the wild failures nobody likes to confess, and the real-world tactics that separate survivors from victims in the digital document arms race. Prepare for an unfiltered look at the risks, rewards, and unspoken realities of AI-driven document workflows—plus the actionable insights pros actually use to win.

The rise and reinvention of document image analysis

From dusty archives to digital chaos: a brief history

There’s something almost cinematic about the origins of document analysis—a procession of archivists, sleeves rolled up, hunched over endless rivers of paper. Before digital tools, the process was raw muscle: sorting, cataloging, typing out handwritten forms, and hoping nothing got lost in translation. Human error wasn’t a risk; it was a guarantee. According to historical accounts, entire careers were built on the backbreaking labor of moving paper, and the phrase “lost in the files” was more curse than cliché.

When digitization first arrived, it was less a revolution than a stopgap. Early optical character recognition (OCR) systems promised to free workers from the tyranny of paper, but let’s be honest: those first attempts were clunky, error-prone, and deeply limited by the technology of their time. OCR struggled with anything but pristine, typewritten pages, and even then, accuracy rates hovered precariously low. The idea was right—the execution, not so much.

Yet necessity breeds invention. The leap to modern, AI-driven analysis was triggered by several converging forces: a tidal wave of digital documentation, massive leaps in compute power, and breakthroughs in machine learning. Suddenly, “document image analysis” wasn’t just about reading words—it was about understanding context, extracting relationships, and doing it all at scale. Enterprises realized their data was useless if locked in static images. The transition from static OCR to dynamic, learning systems wasn’t clean or easy, but it was inevitable.

How AI flipped the script

Fast forward to the present, and rule-based document systems have become relics—replaced by the ruthless efficiency of machine learning. Where old software demanded infinite lists of “if-then” rules, modern document image analysis uses deep learning to recognize patterns and adapt to novel layouts, fonts, and languages. The shift wasn’t just technical—it was philosophical. Now, algorithms could “see” a receipt, a handwritten note, or a stamped invoice and make sense of it with uncanny accuracy.

But don’t mistake progress for perfection. According to Recordsforce, 2024, today’s AI models outperform their ancestors by a country mile, especially in accuracy and speed. Yet, the very complexity that gives AI its edge also introduces new hurdles: data bias (where models misinterpret certain styles or languages), opaque decision-making (“black box” outputs that can’t be easily explained), and constant retraining needs. In other words, we’re no longer limited by what the machine can read, but by what it understands—and by how much trust we’re willing to place in algorithmic judgment.

The overlooked costs of going digital

Here’s the gut punch: digital doesn’t always mean cheaper or easier. The “automation” behind document image analysis is propped up by armies of human annotators and quality control specialists—often working in the shadows to feed and police AI models. Annotation costs, data cleaning, and manual review can devour budgets faster than legacy paper handling ever did. Furthermore, as the volume of digital documents explodes (scan volumes are projected to rise 6–8% in 2024, according to Recordsforce, 2024), the environmental toll of data centers and e-waste comes into sharper focus.

Another uncomfortable truth: every digital document is a potential privacy risk. With data breaches and regulatory scrutiny on the rise, security and compliance have never mattered more. Skimping on encryption or access controls is a shortcut straight to legal disaster.

| Year | Major Milestone | Context/Impact |

|---|---|---|

| 1980 | Early OCR systems | Limited to typewritten, clean documents; error-prone and slow |

| 1995 | Introduction of desktop scanning & OCR software | Brought basic digitization to offices, still limited accuracy |

| 2010 | Deep learning enters document analysis | Dramatic improvement in recognizing diverse formats |

| 2020 | AI-powered Intelligent Document Processing (IDP) | Context-aware extraction, support for handwriting, images |

| 2023 | Multimodal analysis (text, image, metadata fusion) | Real-time insights, support for unstructured data |

| 2025 | Edge AI & privacy-preserving analysis | Secure processing close to data sources, focus on compliance |

Table 1: Timeline of document image analysis milestones.

Source: Original analysis based on Recordsforce, 2024 and ResearchGate, 2017

Behind every cloud-based system lies a stack of servers and a trail of carbon emissions. The ethics of large-scale digitization—especially when offshored labor and sustainability are at play—demand hard questions, not easy answers.

How document image analysis really works (beyond the buzzwords)

Decoding the technical layers: OCR, vision, and NLP

At its core, document image analysis is a symphony of technologies. Optical Character Recognition (OCR) converts scanned text to digital characters. Computer vision interprets layout, structure, and imagery. Natural Language Processing (NLP) extracts meaning, sentiment, or even intent from the decoded content. When these layers work in harmony, documents are transformed from static images into actionable data.

Key technical terms you need to know:

The process of converting images of text into machine-encoded text. Essential for digitizing printed documents and forms. Modern OCR handles multi-language and handwritten content, but struggles with poor scans.

Dividing a document image into regions (e.g., text blocks, tables, images) for targeted analysis. Vital for accurate extraction from complex layouts, like invoices or academic articles.

Breaking text into manageable units (words, sentences) for analysis. Critical for NLP tasks such as sentiment analysis or entity recognition.

Combining different data types (text, images, metadata) for richer analysis. Key for extracting context from receipts with both scanned text and embedded logos.

But here’s the deal: preprocessing is the unsung hero. Skewed scans, noise, and inconsistent lighting can tank accuracy before machine learning even gets a chance. Experts agree—get your preprocessing wrong, and even the most advanced model will crumble.

Why most systems struggle with real-world documents

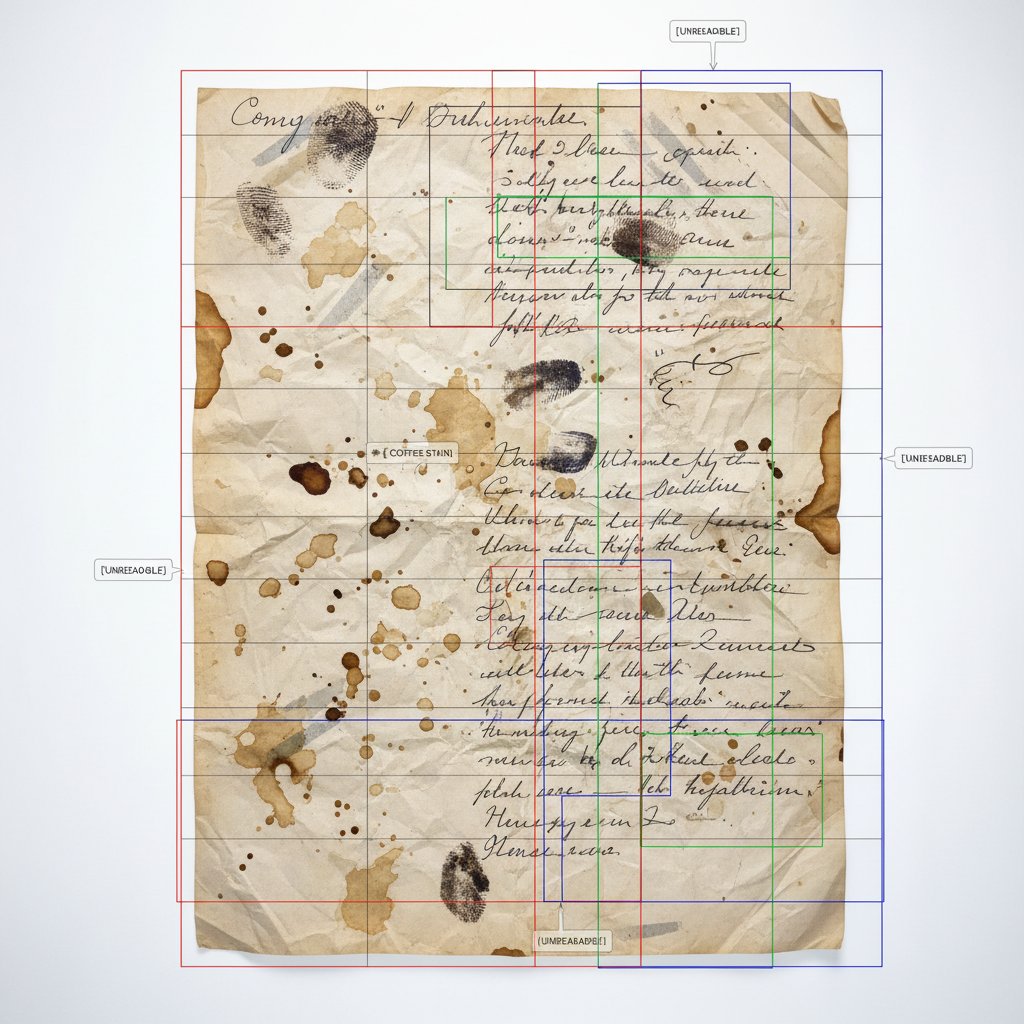

The marketing copy loves to tout “99% accuracy,” but reality bites. Documents in the wild are a mess: torn, coffee-stained, written in six languages, stamped to oblivion, or printed in fonts that only a 90s zine would love. According to Document Logistix, 2025, the biggest challenge isn’t the model—it’s the data. Real-world edge cases push even the best systems to their breaking point.

State-of-the-art models, even with massive training sets, hit their limits when the input defies expectation. Handwritten notes, low-quality faxes, or heavily redacted legal files can shatter the illusion of “automation.” And when the system fails quietly, undetected errors propagate downstream, creating a chain reaction of bad decisions.

“In the field, perfection is a myth—robustness matters more than anything.” — Nick, AI researcher

Inside the black box: model training and data

AI isn’t magic. It runs on labeled data—meticulously annotated by humans who know what to look for. High-quality data is the linchpin. If your training set is biased, incomplete, or inconsistent, even the fanciest algorithm will miss the mark. Overfitting (where a model works perfectly on test data but fails in real life) stalks every project.

Common pitfalls? Biased labeling, under-representation of niche document types, and the mistaken belief that “more data” always means “better results.” The truth: quality > quantity. The best practitioners use feedback loops and human-in-the-loop validation to catch edge cases, fine-tune outputs, and ensure models adapt to new formats and languages as they emerge. Without this vigilance, accuracy erodes quickly.

Why document image analysis fails (and how to spot it early)

The myth of 100% accuracy

It’s a seductive myth: plug in the latest AI tool, and watch it churn out error-free data. Wrong. Every document type—invoice, legal contract, handwritten note—has a different risk profile. According to Recordsforce, 2024, error rates for complex documents remain stubbornly above 5%, even in best-case scenarios.

Real-world studies show:

| Document Type | Typical Accuracy Rate | Strengths | Weaknesses |

|---|---|---|---|

| Invoices | 95-98% | Structured layout | Handwritten edits, stamps |

| Legal documents | 90-95% | Standardized terms | Ambiguous formatting |

| Handwritten notes | 70-85% | Short, neat scripts | Messy handwriting |

| Receipts | 80-93% | Simple layouts | Faded ink, odd fonts |

Table 2: Typical accuracy rates for common document types.

Source: Original analysis based on Recordsforce, 2024 and ResearchGate, 2017

So how do you interpret these numbers? Look for confidence scores, review error distributions (not just averages), and never trust a “99%” claim without context—especially if your workflow can’t afford a single mistake.

Red flags in your workflow

- Lack of transparency: If you can’t audit what the system is doing, you can’t trust the results.

- Excessive manual review: “Automated” systems that generate constant exceptions aren’t saving time.

- Inconsistent results: If accuracy varies wildly between document types, your model isn’t generalizing.

- No feedback loop: Systems that don’t learn from mistakes stagnate fast.

- Unclear error reporting: Vague or missing logs make troubleshooting a nightmare.

- Hardcoded rules everywhere: Indicates underlying AI isn’t robust enough.

- Privacy shortcuts: Weak compliance opens the door to regulatory pain.

Running a workflow self-audit? Start by tracing rejected or error-prone documents back to their source. Map the journey: where does automation break, and where is human intervention masking systemic failures?

Case study: a multimillion dollar mistake

Imagine a mid-size bank automating loan approvals. The vendor promises “99% AI accuracy.” For months, everything seems fine—until regulators discover hundreds of misclassified contracts. The root cause? A subtle formatting inconsistency in scanned legal documents triggered AI hallucinations, rubber-stamped by overworked staff. QA checks were sparse; ambiguous cases weren’t escalated. The fallout: client lawsuits, regulatory fines, and a bruised reputation.

The alarm was finally raised when a sharp-eyed analyst spotted a pattern of approvals that didn’t fit. A post-mortem revealed missed feedback loops, overconfidence in “automated” accuracy, and a lack of contingency planning. Only a hybrid workflow—with robust human-in-the-loop checks—could have prevented the disaster.

Cutting through the hype: what document image analysis can and can’t do

The limits of automation

Here’s the inconvenient truth: some documents will always resist automation. Historical records, creative manuscripts, and heavily redacted files are just too unpredictable. Human review isn’t optional—it’s mission-critical in edge cases, regulatory tasks, or when the cost of error is exorbitant.

Manual workflows offer control and context but are slow and expensive. Semi-automated systems mix AI with strategic human oversight. Fully automated pipelines are lightning-fast but prone to silent failure. The best practitioners blend the three, tuning the workflow to the risk profile and document complexity.

Unconventional uses nobody talks about

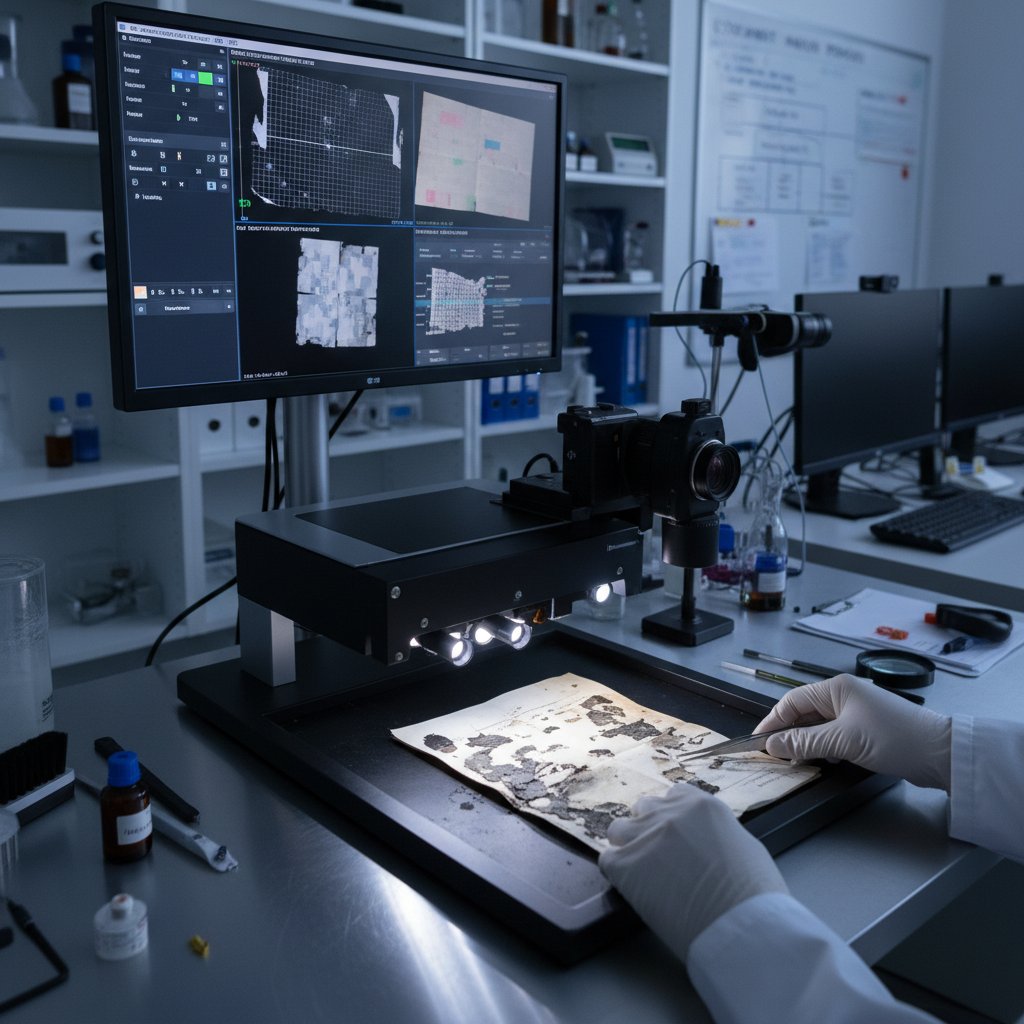

- Forensic reconstruction: Restoring shredded or water-damaged documents for court evidence, using AI to piece together fragments.

- Art restoration: Digitally reconstructing faded manuscripts or paintings, preserving historical context.

- Disaster relief: Processing damaged government records after floods or fires to restore critical services faster.

- Heritage preservation: Digitizing rare books and fragile artifacts, extracting text and imagery without physical handling.

- Journalistic investigations: Sifting through scanned archives to uncover hidden patterns or connections.

- Counterfeit detection: Identifying forged documents by analyzing micro-patterns or ink variations invisible to the naked eye.

These high-stakes scenarios rarely make the tech press, but they’re where document image analysis proves its worth in unexpected ways.

Why are these applications overlooked? They’re niche, technically challenging, and don’t fit the mass-market SaaS narrative. But for those in the know, they’re game-changers.

Debunking the top 5 myths

-

“AI document analysis is always accurate.”

Even the best models struggle with noise, layout changes, and poor scans. Expect errors. -

“Automation means no more human review.”

Every robust system uses humans for validation, especially on edge cases. -

“Handwriting is a solved problem.”

Modern OCRs are better than ever but still stumble on messy, stylized, or multilingual scripts. -

“You can digitize any document instantly.”

Preparation, cleaning, and validation take real time—sometimes as long as manual review. -

“Open-source tools are just as good as commercial.”

Open-source is powerful but often lacks the polish, support, and scalability of enterprise platforms.

These myths persist because marketing thrives on simplicity, not nuance. To stay critical, always demand evidence—not anecdotes.

“It’s not just about the tech—it’s about the context.” — Priya, data scientist

The state of the art: where are we now?

Current breakthroughs (and their real-world limits)

The biggest advances in 2025? Transformer-based models, multimodal AI, and real-time edge processing. Tools like LayoutLM, Google Document AI, and open-source platforms like Tesseract have rewritten the playbook for what’s possible. Yet, every breakthrough comes with tradeoffs: higher computational demands, ongoing data requirements, and persistent struggles with edge cases.

| Platform | Best for | Key limitation |

|---|---|---|

| Tesseract (open-source) | Simple OCR, DIY setups | Struggles with complex layouts |

| Google Document AI | Cloud-based automation | Privacy concerns, cost |

| Amazon Textract | Scale, integration | Customization limits |

| ABBYY FlexiCapture | Enterprise compliance | High price, complex setup |

| LayoutLM (research) | Structured documents | Needs fine-tuning, big data |

Table 3: Feature matrix of leading document analysis platforms.

Source: Original analysis based on Recordsforce, 2024 and ResearchGate, 2017

For most users, the biggest gains are in speed and flexibility. But unless you control your data pipeline and validation, none of these platforms are a magic bullet.

Who’s pushing the boundaries?

The field is being shaped by a mix of academic powerhouses (MIT, Stanford, Tohoku University), tech giants (Google, Microsoft, Amazon), and hungry startups that specialize in niche workflows. Open-source communities, from OCR.space to LayoutParser, offer democratized tools for tinkerers and SMEs. Meanwhile, platforms like textwall.ai are making advanced document analysis broadly accessible, bridging the gap between cutting-edge research and practical deployment in the wild.

What’s still broken (and why)?

Edge cases remain the bane of every system—think highly stylized fonts, dialect variations, or legal documents with obscure formatting. Privacy is a mounting concern, especially in regulated industries. Multilingual analysis lags far behind English-centric systems, and regulatory hurdles differ wildly across regions. The gulf between academic research (where data is clean and expectations are clear) and real-world deployment (messy, ambiguous, high-stakes) is as wide as ever.

Real-world applications and industries transformed

Finance and law: the double-edged sword

Banks and law firms are leading adopters of document image analysis, using AI to screen contracts, verify compliance, and accelerate onboarding. The upside? Faster processing, fewer bottlenecks, and enhanced regulatory monitoring. But the risks are real: a badly classified clause or a misread signature can trigger lawsuits, cause compliance failures, or wreck client trust. False positives (where a document is flagged incorrectly) and false negatives (where errors go undetected) can hit the bottom line hard.

For example, a bank misclassifying loan documents due to OCR errors might approve risky clients, while a law firm missing a critical clause could expose itself to litigation. The lesson: automate carefully and always keep human oversight in the loop.

Healthcare, logistics, and the unexpected

In healthcare, digitizing patient records is revolutionizing efficiency—but only if accuracy is rock solid. According to industry reports, administrative workload has been reduced by up to 50% through intelligent document processing, but misreads can have life-or-death implications.

In logistics, document image analysis accelerates customs clearance and inventory checks, shaving days off supply chain timelines. Meanwhile, unexpected sectors are getting in on the action:

- Insurance: Rapid claims processing by auto-extracting data from scanned forms.

- Journalism: Mining scanned archives for investigative reporting.

- Humanitarian aid: Digitizing refugee records to restore access to critical services after disasters.

These examples illustrate the breadth—and the stakes—of document image analysis across industries.

Personal productivity and small business

For freelancers and small business owners, document image analysis isn’t just convenience—it’s survival. Automating expense reports, extracting data from receipts, or digitizing contracts can transform hours of grunt work into seconds of insight. The steps to integrate document image analysis into a personal workflow are surprisingly achievable:

- Identify pain points: Focus on repetitive, manual document tasks.

- Choose a platform: Evaluate options like textwall.ai for robust, user-friendly analysis.

- Scan and upload: Use your phone or scanner for digitization.

- Preprocess documents: Clean up images, crop, and adjust contrast for best results.

- Run analysis: Let the AI extract data, summarize, or categorize.

- Validate outputs: Manually check results for errors—especially on critical documents.

- Integrate results: Export data into your preferred tools (accounting, project management, etc.).

By following these steps, small businesses can unlock savings and reduce risk—without an IT department.

Choosing the right solution: a brutal buyer’s guide

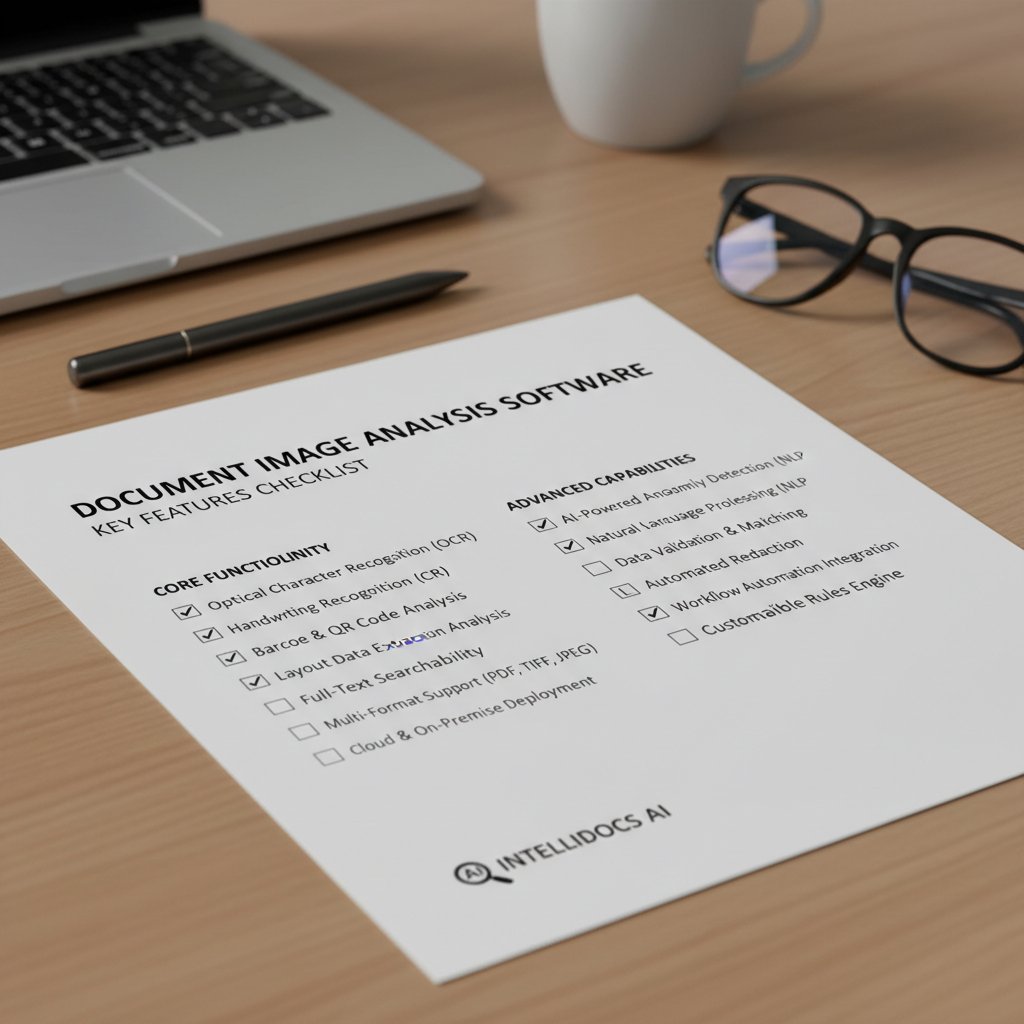

The big questions to ask before you buy

Choosing a document image analysis solution isn’t about picking the shiniest toy. It’s a high-stakes decision that shapes risk, compliance, and productivity. Ask yourself: What’s the real volume and variety of documents? How often do you need human review? What’s your regulatory exposure? Can you audit the system’s decisions, or is it a black box?

Look for vendors that offer clear support channels, detailed transparency reports, and proven scalability. If they won’t show you how the sausage is made, keep walking.

“If you can’t see inside the black box, you’re the one at risk.” — Jordan, CTO

Feature checklist for 2025

- Multilanguage support: You’ll need it sooner than you think.

- Handwriting recognition: Even occasional scribbles matter.

- Customizable workflows: Adapt to your processes, not the other way around.

- Real-time processing: For instant decision-making.

- Robust error reporting: Find and fix issues fast.

- Data privacy controls: Lock down sensitive information.

- Integration APIs: Connect with your existing tools seamlessly.

- Continuous learning: Models that improve over time.

- Transparent audit logs: For compliance and troubleshooting.

- Human-in-the-loop support: Critical for edge cases.

Prioritize features based on frequency and business impact. Don’t get dazzled—get what you need.

Cost-benefit analysis: what you’re really paying for

The sticker price of a document analysis tool is just the beginning. Factor in integration costs, staff training, ongoing annotation, and—most importantly—the cost of errors and compliance failures. Open-source tools might be “free,” but demand technical chops; commercial platforms often bundle support and scalability. Custom builds can eat budgets alive.

| Solution Type | Total Cost of Ownership | Time to Value | Best for |

|---|---|---|---|

| Open-source | Low (DIY effort) | Slow/variable | Tech-savvy, niche workflows |

| Commercial SaaS | Medium-high | Fast | Mainstream, enterprise needs |

| Custom-built | High | Slow | Unique, mission-critical use |

Table 4: Cost-benefit analysis of document image analysis solutions.

Source: Original analysis based on Recordsforce, 2024

To avoid common traps, demand a proof-of-concept, run pilot projects, and always budget for post-launch support and error correction.

Pitfalls, perils, and how to avoid disaster

Common mistakes (and how to fix them)

- Ignoring edge cases: Test with real-world messy documents, not just perfect samples.

- Over-relying on vendor defaults: Customize preprocessing and extraction rules.

- Neglecting feedback loops: Build in regular review and retraining cycles.

- Skipping human validation: Always check AI on critical documents.

- Assuming “set and forget”: Monitor outputs, update models as formats evolve.

- Weak access controls: Lock down sensitive data, audit system users.

- Poor documentation: Keep clear logs of errors and interventions.

- Chasing hype over need: Buy tools that match your workflow, not the latest trend.

These mistakes are all too common because wishful thinking is easier than systematic vigilance. A culture of continuous risk management, not blind optimism, is the only antidote.

Actionable takeaways: Create playbooks for exception handling, escalate persistent issues, and invest in ongoing user training.

Data privacy, bias, and the ethics debate

The more data you collect, the higher the stakes. Privacy risks lurk at every stage—especially when handling legal, financial, or health documents. Regulations like GDPR and HIPAA aren’t just checkboxes; they’re existential threats if ignored. According to compliance experts, the best systems employ role-based access, robust encryption, and regular external audits.

Bias is a tougher nut. AI models trained on narrow or incomplete data can reinforce inequities—misreading minority languages, for instance, or flagging certain formats as “errors.” Best practices include diverse data collection, regular bias audits, and transparent reporting.

To audit for bias and compliance: sample outputs across demographics, document formats, and languages; log error types; and involve external reviewers where possible.

When to call in the experts

Some problems outpace in-house talent. Highly regulated environments, mission-critical workflows, or persistent error spikes demand outside expertise. Consulting with external specialists, or relying on advanced platforms like textwall.ai, can save money (and face) in the long run.

Checklist for escalation:

- Consistent, unexplained errors

- Regulatory or audit failures

- Integration headaches with legacy systems

- Security or privacy incidents

- Need for custom workflow engineering

Don’t wait for disaster to strike before bringing in the cavalry.

The next frontier: generative AI, multimodal fusion, and beyond

What generative AI means for document analysis

Generative AI, the tech powering everything from deepfakes to creative writing bots, is now restructuring document analysis. Its biggest contribution? Filling in gaps—reconstructing missing, shredded, or damaged documents by learning plausible structure and content. Today’s systems can “hallucinate” missing words based on context, making sense of partial or degraded files.

Current capabilities include reconstructing water-damaged legal files, generating missing pages, and summarizing complex multi-document sets. The future possibilities are vast—but always grounded by the need for human verification.

Multimodal approaches: not just images anymore

Modern document analysis isn’t limited to scanned pages. By blending text, images, tables, and metadata, multimodal systems achieve a depth of understanding old OCR could never dream of. Example: analyzing a scientific research paper by parsing both textual content and embedded charts, or extracting context from a receipt by reading both the merchant logo and transaction data.

Challenges remain: integrating disparate data types, ensuring consistent accuracy, and managing (or mitigating) model biases. Best practices include modular pipeline design, iterative validation, and rigorous user feedback loops.

What’s next: predictions for 2025 and beyond

- AI-first compliance: Automated, real-time audit trails for every document.

- Edge processing dominance: Document analysis moves from data centers to devices, slashing privacy risk.

- True global support: AI models that handle dozens of languages and formats without retraining.

To stay ahead, you’ll need a restless mindset: embrace continuous learning, demand evidence, and never stop questioning your tools’ limits. Staying informed isn’t a luxury—it’s your only defense.

Glossary: decoding the jargon

Essential terms in document image analysis:

Converts images of text into machine-readable text—essential for all digitization.

Divides images into logical sections for targeted processing—think tables, text blocks.

Breaks down text into individual words or phrases for deeper analysis.

Simultaneous processing of text, images, and metadata.

Running AI models on local devices rather than cloud servers for speed and privacy.

Specialized OCR for cursive or stylized scripts—accuracy varies widely.

Closed system for learning from errors and improving over time.

Systematic review to uncover and fix AI bias.

Strategic human intervention to catch edge cases and errors.

Machine-created, tamper-proof logs for compliance and troubleshooting.

Common confusion points? Mixing up OCR and NLP, assuming “digitization” equals “analysis,” or underestimating the complexity of layout parsing. Pro tip: always map jargon to real workflow pain points.

Appendix: DIY checklist and quick reference

Self-assessment: is your workflow ready?

- Map your document types: List all formats (invoices, contracts, forms).

- Assess scan quality: Sample real-world docs—look for noise, skew, or damage.

- Inventory legacy systems: Note where integration will be required.

- Evaluate volume: Estimate weekly/monthly throughput.

- Define accuracy needs: What’s your tolerance for error?

- Check compliance risks: List relevant regulations (GDPR, HIPAA).

- Identify manual review points: Where are humans still essential?

- Plan for feedback loops: How will you catch and fix errors?

- Budget for ongoing support: Factor in annotation, retraining, and troubleshooting.

Score your readiness: The more “yes” answers, the smoother your rollout. Stuck? Revisit pain points and escalate where needed.

Quick reference: troubleshooting guide

- Low accuracy: Rerun with better scans, clean up input images.

- Missing data fields: Check segmentation settings.

- Inconsistent results: Retrain model with better data diversity.

- Frequent manual review: Adjust thresholds, refine AI rules.

- Slow processing: Upgrade hardware or try edge processing.

- Integration fails: Revisit API documentation, consult support.

- Compliance warnings: Audit access controls, update privacy settings.

When to escalate: Persistent errors, regulatory failures, or integration dead ends. DIY fixes work for small snags—call in experts for anything mission-critical.

Conclusion: document image analysis isn’t magic (but it’s your next power move)

The dirty secret of document image analysis? It’s not about the tech—it’s about relentless, unsentimental realism. The systems that survive aren’t the ones with the shiniest marketing, but those built on hard facts, continuous feedback, and a refusal to believe in magic bullets. Whether you’re in finance, law, healthcare, or running a solo consultancy, mastering document image analysis in 2025 means embracing brutal truths, not empty promises.

The gains are undeniable: productivity, compliance, and insight at scale. But so are the risks: hidden costs, privacy landmines, and the ever-present possibility of catastrophic error. As you navigate this landscape, let skepticism be your superpower. Demand evidence, validate every claim, and—when in doubt—lean on trusted resources like textwall.ai to chart your course. Digital transformation isn’t a destination; it’s a mindset. Stay sharp, stay critical, and let document image analysis become your next power move.

Sources

References cited in this article

- Document Image Analysis: A Primer (ResearchGate)(researchgate.net)

- Document Management Trends in 2025(document-logistix.com)

- Recordsforce: Document Digitization Trends 2024(recordsforce.com)

- Quocirca: Document Capture Trends 2024(quocirca.com)

- Historical Document Processing Survey (2020)(scitepress.org)

- Royal Historical Society Blog (2023)(blog.royalhistsoc.org)

- DocumentLLM: AI Document Analysis 2024(documentllm.com)

- Nanonets: AI Image Processing(nanonets.com)

- ScienceDirect: Document Image Overview(sciencedirect.com)

- V7 Labs: Image Processing Guide(v7labs.com)

- UBIAI: OCR Overview 2024(medium.com)

- Addepto: AI-powered OCR(addepto.com)

- CVPR 2024: Robustness Benchmark(openaccess.thecvf.com)

- IEEE Xplore: Open Problems in DIA(ieeexplore.ieee.org)

- Medium: Modern-day Challenges(medium.com)

- Docsumo: Fraud Detection Checklist(docsumo.com)

- Nature: Community-developed Checklists(nature.com)

- ICDAR 2023 Proceedings(dl.acm.org)

- Sciencefather: ICDAR 2024(computer-vision-conferences.scifat.com)

- Google Books: Document Image Analysis(books.google.com)

- CVPR 2024: Robustness Benchmark(openaccess.thecvf.com)

- ICDAR 2024(icdar2024.net)

- Rossum: Innovation Awards 2023(rossum.ai)

- Springer: Document Image Analysis Challenges(dl.acm.org)

Ready to Master Your Documents?

Join professionals who've transformed document analysis with TextWall.ai

More Articles

Discover more topics from Advanced document analysis

Document Format Conversion Risks That Quietly Break Workflows

Document format conversion isn’t just about compatibility—uncover the hidden risks, expert tactics, and essential strategies powering smarter workflows in 2026.

Document Extraction Technology Trends That Will Decide Who Wins 2026

Document extraction technology trends are rewriting the rules of data in 2026. Uncover what’s next, what’s hype, and how to stay ahead. Explore the real edge now.

Document Extraction Technology Forecast 2026: Hype Vs Reality

Discover what’s real, what’s hype, and what’s next in 2026. Unfiltered analysis, expert insights, and actionable strategy inside.

Document Extraction Technology and Who Really Gains From It

Document extraction technology is rewriting the rules in 2026. Discover the hard truths, hidden pitfalls, and real breakthroughs—plus expert tips you won’t find elsewhere.

Document Extraction Systems That Won’t Fail You in 2026

Discover the hard truths, real risks, and future-proof strategies for AI-driven document processing in 2026. Don’t get left behind.

Document Extraction Software Vendor Reviews Buyers Actually Trust

Document extraction software vendor reviews—no BS, just real data, pitfalls, and winners. Unmask the truth in 2026's AI-powered extraction landscape. Read before you buy.

Document Extraction Software Tools That Won’t Wreck Your Stack

Document extraction software tools in 2026: Discover edgy truths, expert analysis, and what no one else will tell you. Uncover real-world wins, hidden risks, and the ultimate decision checklist. Read before you choose.

Document Extraction Software Solutions That Won’t Explode in 2026

Discover insights about document extraction software solutions

Document Extraction Software Reviews That Vendors Hate to See

Document extraction software reviews that cut through hype: Unmask hidden pitfalls, compare top tools, and get real-world insights to make your smartest choice now.