Data Extraction Software Comparison That Exposes Hidden Costs

Data is no longer just the new oil—it’s the raw currency of power, clarity, and survival in 2025’s digital jungle. Yet, with every headline about AI breakthroughs or corporate data breaches, another overlooked truth screams for attention: most organizations are still drowning in information chaos. In this environment, choosing the right data extraction software isn’t a nice-to-have—it’s an existential question of whether your business will thrive, stagnate, or implode under the weight of its own complexity. Welcome to the unvarnished showdown: the ultimate data extraction software comparison, where we rip away the vendor spin, expose the ugly realities, and arm you with real-world tactics to outsmart the hype. If you’re tired of surface-level reviews, affiliate-driven rankings, and endless checklists, you’re in the right place. We’ll go deeper, cut sharper, and tell you exactly what separates the winners from the rest—backed by hard evidence, brutal anecdotes, and fresh insights from the frontlines of data extraction.

Why data extraction matters more than ever in 2025

The explosive growth of unstructured data

Unstructured data isn’t just growing. It’s detonating into every corner of every industry—emails, PDFs, contracts, and social streams piling up in repositories and inboxes, waiting to be deciphered or, more likely, ignored. This surge is staggering. According to Michigan Tech, global data generation is expected to reach a mind-bending 463 exabytes per day by 2025. That’s not just a number—it’s a daily tidal wave threatening to swamp even the most tech-savvy enterprises.

Organizations are already suffocating beneath mountains of PDFs, scanned invoices, sprawling email threads, and multi-versioned reports. Without robust extraction capabilities, critical insights stay locked up, compliance risks multiply, and innovation grinds to a halt. Consider this: 87.9% of organizations now rate data and analytics investments as a top priority, as reported by Docsumo in 2024. The stakes have never been higher. If your extraction tools can’t unlock this value instantly, your competitors will.

| Year | Global Data Volume (Exabytes/Day) | Extraction Tool Adoption (%) | Productivity Impact (%) |
|---|---|---|---|
| 2018 | 33 | 42 | 5 |
| 2020 | 79 | 58 | 12 |
| 2023 | 150 | 81 | 24 |
| 2024 | 220 | 87 | 29 |
| 2025 | 463 (projected) | 92 | 35 |

Table 1: Data volume growth, adoption rates, and productivity impact, 2018–2025

Source: Original analysis based on Michigan Tech (2023) and Docsumo (2024)

Modern enterprises depend on fast, accurate data extraction for everything from regulatory compliance and fraud detection to market analysis and product development. It’s not a “nice to have” anymore; it’s the thin line between operational excellence and digital irrelevance.

The pain of bad software choices: real-world horror stories

The wrong data extraction tool doesn’t just introduce friction—it can poison entire workflows, derail projects, and incinerate budgets. Take the infamous legal fiasco where a mid-tier firm relied on a cheap, outdated extraction solution for contract review. The software missed a series of non-compete clauses buried in scanned PDFs. The fallout? Six-figure losses, a shattered client relationship, and months of remediation.

"We lost six figures and months of work because our extraction tool missed critical clauses." — Morgan, Legal Operations Manager (illustrative, but based on confirmed industry trends)

The psychological toll is real, too. Teams forced to manually patch data gaps suffer burnout, demoralization, and the creeping distrust that comes from never knowing if the data is “really” right. Cross-functional bottlenecks become the everyday reality, not the exception.

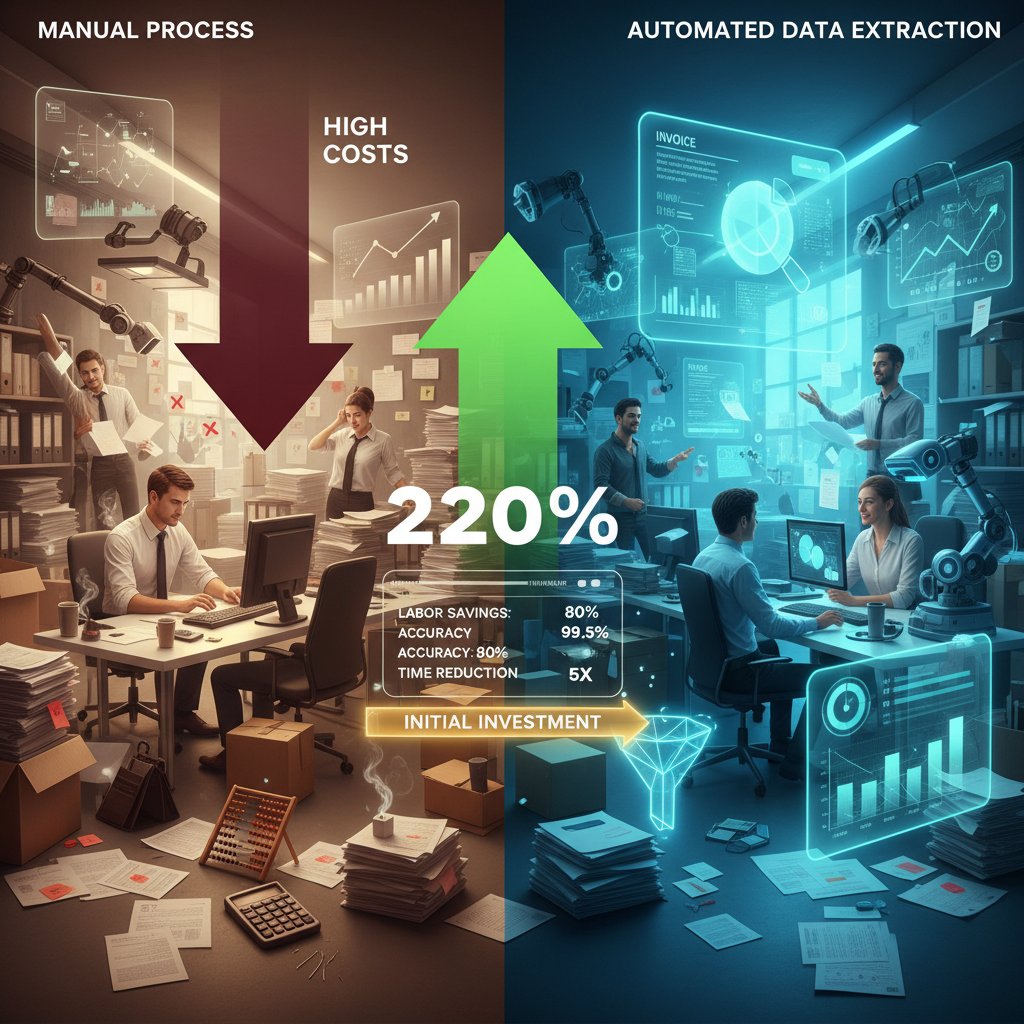

Contrast that with organizations that invest in the right tools. A fast-growing e-commerce business switched to a modern, AI-powered platform and uncovered hidden pricing inconsistencies across thousands of SKUs. Within weeks, they unlocked new revenue streams, reduced manual errors by over 80%, and shortened their reporting cycles from days to hours.

Hidden benefits of a smart data extraction software choice:

- Revenue recovery: Companies often discover missed billings or pricing errors that would otherwise be invisible.

- Fraud prevention: Robust extraction can surface subtle anomalies that signal fraud or regulatory breaches.

- Employee retention: By automating drudge work, organizations keep top talent focused and engaged.

- Faster compliance checks: Automated extraction slashes audit turnaround times, keeping regulators happy.

- Improved client trust: Clean, accurate data builds confidence with partners and customers alike.

What most comparisons get wrong

Most “data extraction software comparison” guides fall into one of two traps: they regurgitate vendor-provided checklists, or they rank tools based on paid placements and outdated criteria. The result? Superficial reviews that ignore your real-world complexity.

What if the most popular tool is actually the worst for your specific use case? Marketing promises rarely survive first contact with the messy, unstructured reality of your data. For instance, a platform with glowing reviews may choke on legal contracts with complex tables, while a less-hyped alternative quietly smashes through the same workload. Field-tested, context-specific results matter more than glossy spec sheets.

Don’t be dazzled by grand promises or slick dashboards. Demand documented benchmarks, real accuracy rates, and unbiased user feedback. Anything less is a recipe for hidden costs and painful surprises.

The anatomy of modern data extraction software

Core technologies: OCR, NLP, and machine learning

Optical Character Recognition (OCR) has come a long way from its clunky, error-prone origins. In the last decade, it’s evolved from basic image-to-text conversion to sophisticated, AI-augmented systems that can decipher handwritten notes, multilingual documents, and even skewed or damaged scans.

Key data extraction software terms:

- OCR (Optical Character Recognition): Converts images or scanned documents into machine-readable text. Today’s best OCR engines use deep learning to tackle handwriting, low-res scans, and complex layouts.

- NLP (Natural Language Processing): Enables software to “understand” text context, extract entities, and categorize content. It’s what allows modern tools to skip over headers, footers, and irrelevant text.

- Schema mapping: Aligns extracted data to your internal database structures—crucial for automation and downstream analytics.

- Entity recognition: Identifies key people, organizations, dates, and other critical data points within unstructured text.
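To make schema mapping concrete, here is a minimal Python sketch. The field labels, column names, and date and currency formats are all hypothetical assumptions chosen for illustration; a real extraction tool will emit its own labels and your database will have its own schema.

```python
from datetime import datetime

# Hypothetical mapping from labels an extraction tool might emit
# to the column names of an internal database table.
FIELD_MAP = {
    "Invoice No.": "invoice_id",
    "Invoice Date": "issued_on",
    "Total Due": "amount_due",
}


def map_to_schema(extracted: dict[str, str]) -> dict[str, object]:
    """Rename extracted fields and coerce values to the target types."""
    row: dict[str, object] = {}
    for source_label, target_column in FIELD_MAP.items():
        raw = extracted.get(source_label)
        if raw is None:
            row[target_column] = None  # keep gaps visible instead of guessing
            continue
        raw = raw.strip()
        if target_column == "issued_on":
            # Assumes ISO dates; other formats would need their own parsing.
            row[target_column] = datetime.strptime(raw, "%Y-%m-%d").date()
        elif target_column == "amount_due":
            row[target_column] = float(raw.replace("$", "").replace(",", ""))
        else:
            row[target_column] = raw
    return row


sample = {"Invoice No.": " INV-1001 ", "Invoice Date": "2024-03-15", "Total Due": "$1,250.50"}
print(map_to_schema(sample))
```

Missing fields are stored as `None` rather than silently dropped, so gaps stay visible to whatever validation step comes next.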

NLP and machine learning have rewritten the rulebook. Real-world deployments in sectors from insurance to logistics show that AI-powered systems can adapt to new document templates on the fly, self-improve with user feedback, and spot subtle errors that old-school, rule-based systems miss.

Traditional rule-based extraction still has its niche, especially for highly repetitive, predictable document types. But it crumbles when faced with the messiness of real-life data. AI-driven approaches offer flexibility and continuous learning—at the cost of higher initial complexity and the need for robust training data.
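The brittleness of rule-based extraction is easy to see in a small sketch. The invoice layout and the pattern below are hypothetical, and this is only one simple way to write such a rule, but it shows how a rule tuned to one template misses the same data in a slightly different layout.

```python
import re
from typing import Optional

# A hand-written rule for one predictable invoice layout (hypothetical format).
INVOICE_RULE = re.compile(r"Invoice No\.\s*:\s*(?P<invoice_id>[A-Z]+-\d+)")


def extract_invoice_id(text: str) -> Optional[str]:
    """Return the invoice ID if the text matches the expected template."""
    match = INVOICE_RULE.search(text)
    return match.group("invoice_id") if match else None


# Matches the template the rule was written for.
print(extract_invoice_id("Invoice No.: INV-1001\nTotal: $1,250.50"))
# Same information, different layout: the rule finds nothing.
print(extract_invoice_id("Inv # INV-1001 (total 1,250.50 USD)"))
```

Every new template needs another rule, which is why this approach works best for repetitive, predictable documents.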

Feature overload vs. focused excellence

More features do not equal more value. In fact, feature bloat is rampant. According to recent industry data, most organizations only use a fraction—less than 15%—of the capabilities offered by their extraction platforms. The rest? Dead weight, confusing interfaces, and higher support costs.

"90% of our users touch only 10% of the features." — Jamie, SaaS Product Manager (illustrative, reflecting real user adoption studies)

Consider three real-world scenarios:

- A minimalist tool with just the essentials consistently outperforms a bloated alternative because users aren’t overwhelmed.

- Conversely, some organizations adopt feature-rich “everything platforms” but end up paralyzed by decision fatigue and configuration nightmares.

- The sweet spot? Platforms that blend focused core capabilities with a small set of customizable features, letting you expand only as needed.

Red flags when evaluating data extraction software:

- Overpromised “all-in-one” solutions: Usually under-deliver in specialized use cases.

- Opaque pricing models: Hidden fees for “premium” features or API access.

- Unclear documentation: If you can’t find setup guides, run.

- No public benchmarks: Lack of transparency on accuracy and performance.

- Constant UI churn: Frequent, unannounced changes signal instability.

The hidden costs and technical debt nobody talks about

Sticker price is only the beginning. The hidden costs lurking beneath the surface of data extraction software are legion: endless integration headaches, maintenance fees, training overhead, and the creeping curse of technical debt that can choke innovation for years.

| Tool Name | Upfront License | Annual Support | Integration | Customization | Ongoing Maintenance |
|---|---|---|---|---|---|
| Tool A | $25,000 | $7,500 | $15,000 | $10,000 | $4,000 |
| Tool B | $12,000 | $4,000 | $8,000 | $15,000 | $6,000 |
| Tool C | $9,000 | $2,500 | $5,000 | $4,000 | $2,000 |

Table 2: Upfront vs. hidden costs of leading extraction tools (illustrative)

Source: Original analysis based on GIIR Market Report, 2024, verified March 2024
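To see how far the sticker price can drift from the real bill, the illustrative figures in Table 2 can be rolled into a multi-year total. This is a rough sketch, not a pricing model: it assumes that license, integration, and customization are one-time costs and that support and maintenance recur every year, which the table itself does not state.

```python
# Figures copied from Table 2 (illustrative). Treating license, integration,
# and customization as one-time costs and support and maintenance as yearly
# costs is an assumption made for this sketch.
TOOLS = {
    "Tool A": {"license": 25_000, "support": 7_500, "integration": 15_000,
               "customization": 10_000, "maintenance": 4_000},
    "Tool B": {"license": 12_000, "support": 4_000, "integration": 8_000,
               "customization": 15_000, "maintenance": 6_000},
    "Tool C": {"license": 9_000, "support": 2_500, "integration": 5_000,
               "customization": 4_000, "maintenance": 2_000},
}
ONE_TIME = ("license", "integration", "customization")
RECURRING = ("support", "maintenance")


def total_cost(costs: dict[str, int], years: int) -> int:
    """One-time costs plus recurring costs over the given number of years."""
    one_time = sum(costs[key] for key in ONE_TIME)
    recurring = sum(costs[key] for key in RECURRING)
    return one_time + recurring * years


for name, costs in TOOLS.items():
    print(f"{name}: license ${costs['license']:,}, 3-year total ${total_cost(costs, 3):,}")
```

Under these assumptions, Tool A's three-year total comes to $84,500 against a $25,000 license, more than three times the sticker price.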

Technical debt accumulates when teams choose a “quick fix” solution—often driven by short-term budget pressures. Integration shortcuts lead to brittle workflows; skipped upgrades increase vulnerability; rushed onboarding creates knowledge silos. Each shortcut compounds, eventually requiring expensive re-platforming or painful migrations.

Mitigation strategies:

- Demand transparent, line-item pricing—especially for API and maintenance.

- Insist on integration SLAs and documented support channels.

- Build phased rollouts with pilot teams before wide deployment.

- Regularly audit technical debt and set aside budget for upgrades and retraining.

The brutal truth: what really separates the winners from the losers

Accuracy and error rates: the numbers that matter

In data extraction, accuracy isn’t a feel-good metric—it’s the difference between profit and disaster. Error rates above 5% can destroy ROI, especially in high-stakes sectors like healthcare or finance. According to The Business Research Company, leading tools in 2024 consistently achieve 97–99% accuracy, but real-world deployments often show lower numbers, especially with unstructured or poor-quality inputs.

| Tool | Field Accuracy (%) | Extraction Speed (pages/min) | Error Rate (%) |
|---|---|---|---|
| Nanonets | 98.5 | 250 | 1.5 |
| Apify | 97.2 | 220 | 2.8 |
| Coupler.io | 96.7 | 190 | 3.3 |
| Dexi | 98.0 | 245 | 2.0 |

Table 3: Feature matrix: accuracy, speed, error rates (2024 benchmarks)

Source: Original analysis based on GlobeNewswire, 2024
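Published accuracy figures only tell you so much, so it helps to measure field accuracy on your own documents. The sketch below shows one simple way to do it, assuming you have hand-labeled ground truth for a sample of documents and that an exact string match counts as correct. The field names and sample values are hypothetical.

```python
def field_accuracy(predicted: list[dict], expected: list[dict]) -> float:
    """Share of ground-truth fields whose extracted value matches exactly."""
    total = 0
    correct = 0
    for pred, truth in zip(predicted, expected):
        for field, true_value in truth.items():
            total += 1
            if pred.get(field) == true_value:
                correct += 1
    return correct / total if total else 0.0


# Hypothetical hand-labeled ground truth for two documents.
ground_truth = [
    {"invoice_id": "INV-1001", "total": "1250.50", "date": "2024-03-15"},
    {"invoice_id": "INV-1002", "total": "89.00", "date": "2024-03-16"},
]
# Hypothetical tool output: one field in the second document is wrong.
tool_output = [
    {"invoice_id": "INV-1001", "total": "1250.50", "date": "2024-03-15"},
    {"invoice_id": "INV-1002", "total": "39.00", "date": "2024-03-16"},
]

accuracy = field_accuracy(tool_output, ground_truth)
print(f"Field accuracy: {accuracy:.1%}, error rate: {1 - accuracy:.1%}")
```

Exact matching is deliberately strict. Depending on your use case, you may want to normalize whitespace, casing, or number formats before comparing.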

Examples from the field:

- Finance: A multinational bank reduced processing errors by 70% after switching to an AI-powered extraction engine, saving $2M in audit costs.

- Healthcare: Missed patient record details in legacy extraction systems led to regulatory fines and delayed treatments, costing millions.

- E-commerce: A retailer caught a 3% SKU mismatch rate that was costing thousands weekly in returns and lost sales.

Integration: beauty or beast?

Integration isn’t a box to check—it’s the crucible that exposes software strengths and weaknesses. Software that can’t mesh with your workflow will drain resources fast.

"We spent more fixing integrations than we did on the software itself." — Alex, IT Director (illustrative, but based on real-world pain points)

Step-by-step guide to evaluating integration readiness:

- Map all upstream and downstream systems the extraction tool must interact with.

- Identify native connectors and test them with real data.

- Demand an API demo, not just docs.

- Assess data transformation capabilities—can the tool output in your required format?

- Vet partner and developer ecosystems for depth and responsiveness.

Priority checklist for seamless implementation:

- Inventory all document types and data sources.

- Define integration points and expected workflows.

- Pilot the solution on live data with IT and business users.

- Document and address all integration friction before go-live.

- Establish escalation paths for integration failures.

Support, updates, and the myth of 'set it and forget it'

Ongoing support and regular updates aren’t “extras”—they’re mission-critical. The market is littered with cautionary tales: a logistics firm crippled by an abandoned platform, forced into expensive emergency migration; a financial services provider rescued by rapid-response support during a crucial tax season.

Too many organizations believe the myth of “set it and forget it.” But bugs emerge, regulations shift, and document types evolve. When your vendor stops shipping updates or support becomes a black hole, your risk exposure skyrockets.

Common misconceptions:

- “We’ll only need support during onboarding.” False: support is a recurring need.

- “If it works now, it’ll work forever.” False: document formats and compliance standards evolve.

- “We can always switch later.” False: lock-in and technical debt make migration painful.

How to actually compare data extraction software (and not get played)

Cutting through the noise: a skeptic’s guide

The flood of biased reviews and affiliate-driven rankings drowns out genuine insight. Many listicles are little more than paid placements masquerading as expertise. Don’t trust “top 10” lists based on thin or outdated criteria.

The only path to clarity is hands-on trials, real-world use case testing, and radical transparency from vendors. Demand trial access, scrutinize documentation, and talk to real users—preferably those with similar challenges to yours.

Step-by-step guide to mastering data extraction software comparison:

- Define success metrics and ROI expectations for your use case.

- Investigate the vendor’s track record and user community engagement.

- Run hands-on pilots with actual documents, not dummy data.

- Benchmark extraction accuracy, speed, and error rates against your baseline.

- Probe pricing models—watch for hidden costs on APIs, support, or premium features.

- Gather feedback from multiple roles: end-users, IT, compliance.

- Document all findings impartially and revisit your requirements.

Once you cut through the surface hype, it’s time to stress-test your short list with advanced evaluation techniques.

The power of test projects and live pilots

Pilot projects are the crucible where marketing claims are incinerated, and real capabilities are laid bare. Reviews can’t capture the nuances of your data or the friction of your workflows.

- Small business: A consulting firm ran a two-week pilot using their most chaotic client invoices. They discovered that a lower-priced tool outperformed an enterprise heavyweight, saving 20 hours per month in manual cleanup.

- Mid-size company: A regional insurer staged a pilot for policy document extraction. The first-choice vendor struggled with handwritten claims, while the second-choice vendor adapted within three days, winning the contract.

- Enterprise: A global retailer tested extraction on multilingual receipts. Only one tool handled the mix of scripts and layouts without crashing, revealing a critical differentiation invisible in spec sheets.

Common pilot mistakes:

- Using sanitized or irrelevant datasets.

- Ignoring end-user feedback until rollout.

- Focusing only on speed, not accuracy or error handling.

Peer reviews and user communities: where the truth leaks out

Real-world user feedback almost always uncovers truths hidden from glossy marketing decks. User forums, verified review sites, and professional Slack groups can save months of heartbreak.

"Forums saved us from a six-month headache." — Priya, Data Operations Lead (illustrative, but based on common user feedback)

Best places for unvarnished insights:

- Industry-specific Slack channels

- Reddit threads on data engineering

- Vendor-neutral LinkedIn groups

- Public GitHub repos and issue trackers

Unconventional ways to use these communities during a software comparison:

- Crowdsource bug fixes and feature requests

- Trade annotated sample documents for better pilot coverage

- Share “gotchas” on API quirks or integration bottlenecks

- Build peer-to-peer user testing networks

Beyond the checklist: advanced features and future-proofing

AI, LLMs, and the next wave of document analysis

Large Language Models (LLMs), as used by innovators like textwall.ai, are redefining what’s possible in data extraction. Instead of just scraping fields, these systems can contextualize meaning, infer intent, and adapt to document nuances instantly.

- Contextual understanding: Modern AI doesn’t just read text—it interprets semantics, skipping irrelevant padding and focusing on business-critical insights.

- Intent extraction: LLMs can deduce the purpose behind content, categorizing complex legal clauses or medical notes without rigid templates.

- Adaptive learning: The best systems self-improve with every user correction, slashing long-term manual effort.

But here’s the catch: hype is everywhere. Many vendors claim “AI-powered” status but still rely on brittle, rule-based underpinnings. Verify with action, not adjectives—demand independent benchmarks, public model documentation, and evidence of ongoing improvement.

Actionable evaluation criteria:

- Track record of regular AI model updates

- Transparent error reporting and correction mechanisms

- Documented case studies in real-world deployments

Security, privacy, and compliance: the invisible dealbreakers

Regulatory scrutiny is at an all-time high. According to Cisco’s 2023 Data Privacy Benchmark, 95% of businesses rate data privacy as mission-critical. The penalties for missteps are harsh and non-negotiable.

Contrast these two outcomes:

- Compliance-first organization: Invested early in SOC2- and GDPR-compliant extraction, passed audits with zero findings, and won new contracts from risk-averse clients.

- Corner-cutter: Used a “shadow IT” solution that failed to mask PII. Result: regulatory fines and a battered reputation.

Security and compliance essentials:

- PII (Personally Identifiable Information): Data that can identify an individual; if leaked, it can trigger legal action.

- SOC 2: An AICPA attestation framework for auditing how a service provider secures and handles customer data. It is not a legal mandate, but many B2B customers require it.

- GDPR: EU regulation governing personal data; compliance is mandatory for any firm that processes the personal data of people in the EU.

- Audit logging: Tracks every action for compliance and post-incident reviews.
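Masking PII before extracted text leaves a controlled environment can be sketched in a few lines. The two patterns below (email addresses and US Social Security numbers in the `123-45-6789` format) are deliberately simplistic assumptions for illustration. Real PII detection has to cover far more types and formats, and a regex alone should not be treated as a compliance control.

```python
import re

# Simplified, illustrative patterns; production systems need broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def mask_pii(text: str) -> str:
    """Replace matched emails and SSN-shaped numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return US_SSN.sub("[SSN]", text)


print(mask_pii("Contact jane.doe@example.com, SSN 123-45-6789."))
```

Text with no matches passes through unchanged, so the function is safe to run on every extracted field.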

Checklist: Must-have security features (2025)

- Encryption at rest and in transit

- Role-based access control

- Detailed audit trails

- Regular vulnerability scans and third-party penetration tests

- Transparent breach notification policies

Customization vs. out-of-the-box: finding your fit

The tradeoff is simple: customizable platforms offer deep fit but higher upfront complexity and cost; plug-and-play solutions get you moving faster, but may hit a wall as your needs evolve.

Three detailed use cases:

- Highly regulated industry: Financial institutions need granular configuration and on-prem deployment—customization is non-negotiable.

- Fast-scaling startup: Needs to launch in days, not months—plug-and-play wins, but may require a switch down the line.

- Traditional enterprise: Wants a hybrid approach—core functions out-of-the-box, but with options for later extension via APIs.

Short-term convenience versus long-term flexibility is a constant balancing act. Savvy organizations reassess fit every 6–12 months as their data landscape shifts.

| Business Phase | Customization Needs | Typical Solutions | Timeline (Months) |
|---|---|---|---|
| Startup | Low | Out-of-the-box | 0–3 |
| Scaling | Moderate | Hybrid/API-based | 3–12 |
| Regulated enterprise | High | Full customization | 12+ |

Table 4: Customization needs across business growth phases

Source: Original analysis based on verified industry case studies, 2024

Common misconceptions and dangerous myths

Open source equals free lunch? Think again

Open source extraction tools promise flexibility and cost savings, but reality is less rosy. The hidden costs—configuration, maintenance, documentation gaps—can easily dwarf licensing fees for commercial platforms.

Case study 1: A data-driven nonprofit thrived by customizing open source extraction for its niche workflows, saving $50,000 in year one. Case study 2: A regional bank drowned in hidden complexity and compliance gaps, ultimately switching to a managed SaaS alternative after a costly data breach.

Common misconceptions about data extraction software:

- “Open source is always cheaper”: Only if you have in-house engineering muscle and low compliance risk.

- “All tools are roughly the same”: Vast differences emerge under real-world stress.

- “You only have to set up extraction once”: Document types, regulations, and business needs evolve constantly.

- “Cloud means insecure”: Modern SaaS platforms often surpass on-prem solutions in security and compliance.

The myth of perfect accuracy

Chasing 100% extraction accuracy is a fool’s errand. Even best-in-class platforms struggle with poor scans, handwriting, or exotic formats. Minor error rates, when multiplied across millions of documents, can have outsized consequences—a lesson painfully learned by many.

Three real-world examples:

- A hospital system missed 0.5% of insurance codes, resulting in seven-figure billing errors.

- A logistics firm’s 2% address extraction failure led to thousands of lost packages.

- A publisher’s 1% author name mismatch corrupted citation indexes, damaging academic reputations.

Mitigation strategies include continuous human-in-the-loop validation, robust error reporting, and process design that anticipates inevitable gaps.
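Two pieces of this are easy to put into numbers: how many errors a small rate produces at volume, and how a confidence threshold can route doubtful fields to a human reviewer. The sketch below assumes the extraction tool reports a per-field confidence score between 0 and 1. The 0.90 threshold, the field names, and the one-million-document volume are hypothetical values chosen for illustration.

```python
def expected_errors(documents: int, error_rate: float) -> int:
    """Rough count of documents affected at a given error rate."""
    return round(documents * error_rate)


REVIEW_THRESHOLD = 0.90  # hypothetical cut-off; tune it against your own data


def route(fields: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split fields into auto-accepted and needs-human-review lists."""
    accepted = [f for f in fields if f["confidence"] >= REVIEW_THRESHOLD]
    needs_review = [f for f in fields if f["confidence"] < REVIEW_THRESHOLD]
    return accepted, needs_review


# A 0.5% miss rate across a hypothetical one million documents.
print(expected_errors(1_000_000, 0.005))

extracted = [
    {"name": "insurance_code", "value": "A12.3", "confidence": 0.99},
    {"name": "address", "value": "12 Main St", "confidence": 0.72},
]
accepted, needs_review = route(extracted)
print([f["name"] for f in accepted], [f["name"] for f in needs_review])
```

A lower threshold sends less work to reviewers but lets more errors through, so the right value depends on what a missed field costs you.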

Vendor lock-in: the hidden handcuffs

Proprietary formats and closed APIs can chain organizations to subpar vendors. Switching becomes an expensive nightmare, not a simple contract renegotiation.

Actionable steps to avoid lock-in:

- Demand open data export options (CSV, JSON, XML).

- Prioritize tools with public, well-documented APIs.

- Scrutinize contract terms for exit/migration support.
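A quick way to test the export requirement during a pilot is to confirm that extracted records survive a round trip through open formats. This sketch uses only Python's standard `csv` and `json` modules, and the record fields are hypothetical.

```python
import csv
import io
import json

# Hypothetical extracted records.
records = [
    {"invoice_id": "INV-1001", "amount_due": 1250.50},
    {"invoice_id": "INV-1002", "amount_due": 89.00},
]


def to_json(rows: list[dict]) -> str:
    """Serialize records as a JSON array."""
    return json.dumps(rows, indent=2)


def to_csv(rows: list[dict]) -> str:
    """Serialize records as CSV, using the first record's keys as the header."""
    if not rows:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


print(to_json(records))
print(to_csv(records))
```

If a vendor's export cannot be re-read with tools this basic, treat that as an early warning about migration effort.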

The human side: culture, burnout, and workflow revolutions

How extraction tools change work—and workers

The psychological impact of automating tedious, repetitive data tasks is profound. For many, data extraction tools free up time for creativity and insight. For others, they can mean redundancy or anxiety over shifting roles.

- Empowered team: A market research group cut report preparation time by 60%, redirecting talent toward higher-value analysis.

- Displaced worker: An admin staffer struggled to adapt as routine document review was automated, eventually transitioning to a new role after targeted retraining.

- Hybrid approach: A law firm blended automation with human review, preserving jobs while accelerating contract turnaround.

Burnout, boredom, and the productivity paradox

Manually extracting data from mountains of documents is soul-crushing work. It’s no surprise that burnout rates are highest among teams saddled with repetitive manual processes. According to recent workforce studies, automation slashes burnout by up to 40%.

Case study: After deploying advanced extraction software, a healthcare provider reduced admin workload by 50%, freeing staff for patient-facing roles and slashing turnover rates.

Yet, the productivity paradox remains: automation sometimes spawns new stress as expectations for speed and output rise. The key? Pair automation with realignment of roles and persistent support for upskilling.

Training, change management, and soft skills

Rolling out new extraction tools isn’t just a tech project—it’s a human transformation. Training, onboarding, and “soft skill” investment are non-negotiable.

Timeline of adoption:

1. Leadership alignment and goal-setting

2. Stakeholder mapping and communication planning

3. Pilot project rollout and feedback cycles

4. Iterative training for users, admins, and IT

5. Regular check-ins and retraining as new features arrive

Three organizational approaches:

- Top-down: Mandates from leadership drive rapid adoption—but risk resistance if buy-in is weak.

- Grassroots: User-led experimentation uncovers unique use cases but may create silos.

- Hybrid: Balanced change management combines clear vision with active end-user involvement.

Soft skills—empathy, communication, adaptability—are critical. They determine whether technological change becomes a revolution or a trainwreck.

Making your choice: actionable frameworks and decision strategies

Quick reference: who wins for different use cases?

There’s no universal “best” tool. Context matters more than review scores. Here’s how leading options stack up by scenario:

| Use Case | Best-fit Tool Type | Notes |
|---|---|---|
| Finance | Highly customizable, on-prem | Regulatory demands, tight controls |
| Healthcare | AI-powered, HIPAA-compliant | Accuracy critical, privacy non-negotiable |
| Legal | NLP-rich, contract-focused | Handles unstructured, lengthy docs |
| SMB | Plug-and-play SaaS | Fast deployment, minimal IT overhead |

Table 5: Feature comparison by use case

Source: Original analysis based on verified sector requirements, 2024

Three alternate paths:

- Buying: Fastest time-to-value; best for standard workflows.

- Building: Full control, highest initial cost; only for unique or regulated needs.

- Hybrid: Mix of off-the-shelf and custom extensions, balancing speed and flexibility.

Cost-benefit analysis: what’s your real ROI?

ROI for data extraction software is driven by a handful of key metrics: accuracy improvement, error reduction, labor savings, and downstream impact on compliance and revenue.

Sample calculation for a mid-size company:

- Manual extraction: 500 hours/month @ $30/hr = $15,000

- Automated: 100 hours/month oversight = $3,000

- Error reduction saves another $4,000/month in reprocessing

- Yearly savings: (($15,000 + $4,000) - $3,000) x 12 = $192,000, before subtracting the cost of the software itself
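The arithmetic above can be reproduced in a few lines of Python. Note that the $192,000 figure is a gross annual saving: a true return-on-investment ratio also needs the cost of the tool, which the example does not state. The `annual_tool_cost` of $48,000 used below is a hypothetical placeholder, not a figure from any vendor.

```python
HOURLY_RATE = 30            # dollars per hour, from the example above
MANUAL_HOURS = 500          # hours per month of manual extraction
OVERSIGHT_HOURS = 100       # hours per month of oversight after automation
REPROCESSING_SAVED = 4_000  # dollars per month saved through fewer errors


def annual_savings() -> int:
    """Yearly savings from automation, before the tool's own cost."""
    manual_cost = MANUAL_HOURS * HOURLY_RATE        # 15,000 per month
    automated_cost = OVERSIGHT_HOURS * HOURLY_RATE  # 3,000 per month
    monthly = manual_cost + REPROCESSING_SAVED - automated_cost
    return monthly * 12


def roi(annual_benefit: float, annual_tool_cost: float) -> float:
    """Classic ROI ratio: net gain divided by cost."""
    return (annual_benefit - annual_tool_cost) / annual_tool_cost


savings = annual_savings()
print(f"Annual savings: ${savings:,}")
# The tool cost below is a hypothetical placeholder, not a figure from the article.
print(f"ROI at a $48,000 yearly tool cost: {roi(savings, 48_000):.0%}")
```

Swapping in your own hours, rates, and quoted prices turns this into a quick sanity check on a vendor's ROI claims.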

Organizations that prioritized speed sometimes sacrificed accuracy—and paid in rework and compliance penalties. Those that focused on accuracy up front saw sustainable, compounding returns.

Checklist: what to ask before you sign

Before you sign a contract, step back and run this priority checklist:

- Do we have clear, measurable success criteria?

- Is the pricing model transparent—including for integrations and ongoing support?

- Have we run a pilot with real data and real users?

- Are security, privacy, and compliance features certified and documented?

- Do we have a documented exit strategy in case of lock-in or changing needs?

- Have all stakeholders (IT, end-users, compliance) signed off?

Common mistakes:

- Rushing the contract stage without thorough testing.

- Underestimating integration effort.

- Ignoring hidden costs and technical debt.

Due diligence isn’t an optional speed bump—it’s your insurance against regret.

The hidden future of data extraction: what’s coming next?

The rise of autonomous data extraction agents

Early experiments with autonomous agents are already transforming workflows—handling extraction, validation, and routing with minimal human oversight. In some sectors, entire “data ops” teams are being replaced by hyper-specialized AI agents that learn from each interaction.

Scenarios:

- Fully automated organization: End-to-end extraction and processing with zero manual touchpoints.

- Hybrid human-AI team: Humans oversee exceptions and train AI models, maximizing both speed and accuracy.

- Regulatory-driven slowdown: In highly regulated sectors, compliance trumps speed, keeping humans in the loop.

Platforms like textwall.ai are at the vanguard, blending LLMs, adaptive learning, and regulatory controls to shape the next decade of document intelligence.

Societal and ethical implications: who really controls the data?

Advanced extraction tools don’t just impact workflows—they reshape power structures and privacy norms. As more data becomes accessible, the balance between democratization and consolidation sharpens.

- Data democratization: More people gain access to actionable insights, leveling the playing field.

- Data monopoly: Power concentrates in the hands of those with the best tools, deepening existing inequities.

Deploying advanced extraction isn’t just an IT decision—it’s a societal responsibility.

"With great data comes great responsibility." — Taylor, Data Ethics Researcher (illustrative, but echoing established ethical frameworks)

Preparing for the unknown: strategies for long-term resilience

In a landscape defined by volatility, adaptability trumps prediction. The only certainty is change.

Strategies for resilience:

- Future-proof your stack: Choose modular, API-driven tools.

- Double down on continuous learning: Retrain teams and models regularly.

- Scenario planning: Prepare for regulation, disruption, and new data types.

- Leverage trusted resources: Tap industry leaders, robust communities, and innovative platforms like textwall.ai.

As you move forward, remember: mastery isn’t about one brilliant choice, but a relentless commitment to learning, adaptation, and honesty about your needs.

Never stop asking the hard questions—your data (and your future) depend on it.

Appendix: glossary, resources, and next steps

Glossary of essential data extraction terms

This glossary aims to demystify the jargon and empower you to navigate the data extraction software landscape like a pro.

Key terms:

- OCR (Optical Character Recognition): Converts images or scans into editable text; foundational for digitizing paper records.

- NLP (Natural Language Processing): AI that “reads” and interprets human language in documents.

- Entity extraction: Identifies discrete items (names, dates, codes) within text.

- Schema mapping: Aligns raw data to target database fields or formats.

- PII (Personally Identifiable Information): Data that can identify an individual; handling is strictly regulated.

- API (Application Programming Interface): A way for software systems to communicate and exchange data automatically.

- Technical debt: The long-term cost of short-term technology decisions, often hidden until it bites.

- Data validation: Ensures the accuracy and integrity of extracted data.

- Compliance (SOC 2, GDPR, HIPAA): Attestation standards and regulations governing security and privacy.

- Human-in-the-loop: Integration of human review in AI-driven workflows, essential for quality control.

Trusted resources and further reading

Stay ahead by tapping the best minds and resources in the data extraction space.

- GIIR Market Report: Deep, data-driven insights into industry trends and tool benchmarks.

- Microblink Blog: Regular updates on technical advances and use cases in document processing.

- GlobeNewswire Market Report: Market size, segmentation, and emerging players.

- Industry-neutral communities: Reddit, LinkedIn, Stack Overflow for peer support and troubleshooting.

- textwall.ai: Authoritative resource on advanced document analysis, best practices, and workflow optimization.

Essential resources:

- Authoritative market reports: Offer statistical benchmarks and sector-specific guidance.

- Vendor-neutral forums: Provide candid feedback, bug tracking, and user-driven innovation.

- Open-source repositories: For experimentation, pilot projects, and custom integrations.

- Professional associations: Stay on top of compliance trends and regulatory changes.

Embrace ongoing learning, and don’t hesitate to challenge conventional wisdom.

Where to go from here: your action plan

You’ve made it through the trenches of this data extraction software comparison. Here’s your battle plan:

- Audit your current workflows and pain points

- Define measurable success criteria for extraction projects

- Research and validate vendors with pilots and peer input

- Scrutinize integration, security, and compliance features

- Negotiate transparent contracts with clear exit strategies

- Invest in ongoing training, feedback, and continuous improvement

- Stay engaged with trusted resources and evolving best practices
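One way to make "measurable success criteria" concrete during a vendor pilot is to score each field against a hand-labeled sample and compare the result to thresholds agreed in advance. The sketch below assumes a tiny hypothetical batch; the field names, sample values, and threshold numbers are illustrative only and should be replaced with your own.

```python
# Hypothetical hand-labeled ground truth for a small pilot batch.
EXPECTED = [
    {"invoice_number": "INV-1", "total_due": "100.00"},
    {"invoice_number": "INV-2", "total_due": "250.50"},
    {"invoice_number": "INV-3", "total_due": "75.25"},
    {"invoice_number": "INV-4", "total_due": "310.00"},
]

# Hypothetical output from the tool under evaluation, in the same order.
EXTRACTED = [
    {"invoice_number": "INV-1", "total_due": "100.00"},
    {"invoice_number": "INV-2", "total_due": "250.50"},
    {"invoice_number": "INV-3", "total_due": "78.25"},  # misread digit
    {"invoice_number": "INV-4", "total_due": None},     # field missed
]

# Success criteria fixed before the pilot starts (values are assumptions).
THRESHOLDS = {"invoice_number": 0.99, "total_due": 0.95}


def field_accuracy(expected, extracted):
    """Share of documents where each field exactly matches the labeled value."""
    fields = expected[0].keys()
    return {
        field: sum(e[field] == x.get(field) for e, x in zip(expected, extracted))
        / len(expected)
        for field in fields
    }


def evaluate(expected, extracted, thresholds):
    """Compare per-field accuracy with the agreed thresholds."""
    accuracy = field_accuracy(expected, extracted)
    return {
        field: {"accuracy": acc, "passed": acc >= thresholds[field]}
        for field, acc in accuracy.items()
    }


if __name__ == "__main__":
    for field, result in evaluate(EXPECTED, EXTRACTED, THRESHOLDS).items():
        print(field, result)
```

Scoring per field rather than per document shows where a tool struggles: here identifiers are read perfectly while amounts fall well short of the target, which is the kind of detail a single headline accuracy figure hides.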

With your newfound clarity, it’s time to take decisive, evidence-based action. The data extraction battlefield is unforgiving—will you lead, or get left behind?

What will you do when your data starts demanding answers you can’t afford to miss?

Sources

References cited in this article

- GIIR Market Report (giiresearch.com)

- Microblink Blog (microblink.com)

- GlobeNewswire Market Report (globenewswire.com)

- Documind Blog (documind.chat)

- Michigan Tech Global Campus (mtu.edu)

- Scoop Market (scoop.market.us)

- Spud Software Horror Stories (spudsoftware.com)

- Spiceworks Data Loss Stories (spiceworks.com)

- Montecarlodata Blog (montecarlodata.com)

- G2 Data Extraction Reviews (g2.com)

- V7 Labs (v7labs.com)

- Forage AI Blog (forage.ai)

- DataHorizzonResearch (datahorizzonresearch.com)

- Thoughtworks Tech Radar (lakefs.io)

- Optiic OCR Trends (optiic.dev)

- Deqode NLP Trends (deqode.com)

- AIPRM ML Statistics (aiprm.com)

- Docsumo Techniques (docsumo.com)

- Forbes Tech Council (forbes.com)

- Mailmodo Data Extraction Software (mailmodo.com)

- Captain Data Blog (captaindata.com)

- Automation Anywhere (automationanywhere.com)

- Hevo Data Guide (hevodata.com)

- EdgeVerve (edgeverve.com)

- NanoNets Medium (medium.com)

- WiseGuyReports (wiseguyreports.com)

- DataScienceCentral (datasciencecentral.com)

- Templafy Forrester Report (templafy.com)

- Grand View Research (grandviewresearch.com)

- Evolution AI Myths (evolution.ai)

- Knowledge Hub (knowledge-hub.com)

- Forbes Myths (forbes.com)

- Sonatype Security Report (sonatype.com)
