Data Extraction From Handwritten Documents Is Failing Quietly

Handwriting, that ancient scrawl of ink and graphite, is supposed to be dying. But here you are, staring at stacks of forms, notebooks, and yellowed ledgers, grappling with the messy reality that data extraction from handwritten documents is not just alive—it’s a battlefield. The AI revolution promised a neat, digital future. Instead, it’s smashing headlong into human quirks, medical scrawls, and courtroom exhibits. This isn’t a story about nostalgia; it’s a reckoning with the brutal, overlooked backbone of modern information management. If you think OCR cracked the code, if you trust 99% accuracy claims at face value, or if you’re betting your compliance budget on plug-and-play automation, prepare for a jolt. Here’s what analysts, lawyers, and data wranglers need to know about the real risks, messy surprises, and technological breakthroughs in extracting data from handwritten documents—right now, not in some distant AI utopia.

Why handwritten data still matters in a digital world

The hidden cost of lost handwriting

In our rush to digitize everything, we rarely pause to consider the price of what gets lost in translation. Handwritten records aren’t just remnants of a bygone era; they’re often the only source of critical, unrepeatable information. Think clinical notes scribbled during a life-or-death emergency, or hastily jotted witness statements in a criminal case. When these artifacts are misread or misplaced, the fallout is real, not theoretical. According to a 2024 review, misinterpreted handwritten data has delayed insurance settlements, led to regulatory fines, and even contributed to misdiagnoses in healthcare settings [Reddit Computer Vision, 2024].

- Lost data means lost money: Organizations spend an average of $120 per incident to manually recover or verify lost information.

- Legal exposure: Courts have tossed out evidence due to illegible or mishandled handwritten documentation.

- Historic gaps: In cultural preservation, irreversible losses occur when weathered manuscripts can’t be digitized reliably.

Ignoring handwriting in the digital gold rush isn’t just shortsighted—it’s expensive, risky, and sometimes irreversible. That’s why the next section explores why handwriting hasn’t gone extinct, and why its survival matters more than you think.

Handwriting in the age of AI: more than nostalgia

Handwriting persists not because we’re sentimental, but because it’s practical, universal, and—ironically—sometimes the only option when tech fails. Emergency responders use handwritten forms in disaster zones where connectivity is a fantasy. Doctors jot quick notes when patient lives hang in the balance. Even in 2025, field research teams, law enforcement, and humanitarian agencies rely on pen and paper when digital tools are unavailable or insecure.

The truth is, handwritten data isn’t just a relic; it’s a living part of critical workflows. As modern AI lunges for dominance, it’s forced to adapt to a stubborn, analog reality.

"Despite technological advances, handwritten records remain the backbone of data capture in volatile, resource-constrained environments." — Dr. Julian Meyers, Document Analysis Authority, MDPI, 2024

This isn’t nostalgia—it’s survival. AI-powered extraction tools are only as good as their ability to confront this messiness head-on, making the quest for robust handwritten data extraction not just relevant, but urgent.

Who’s still using handwritten documents—and why?

If you think handwritten records are a problem only for archiving dusty ledgers, think again. Industries across the spectrum still depend on them:

- Healthcare: Doctors’ notes, prescriptions, and medical history forms are often handwritten, especially in busy or under-resourced clinics.

- Legal and law enforcement: Witness statements, evidence logs, and court records regularly begin as handwritten documents.

- Education: Exam papers, student evaluations, and field notes frequently bear the mark of the pen.

- Humanitarian aid: Field surveys, beneficiary lists, and disaster relief forms are filled out wherever laptops dare not go.

- Finance: Expense reports, receipt books, and signature forms still lean heavily on handwriting for verification and compliance.

The bottom line? Handwriting pervades sectors where stakes are high and digital coverage imperfect. Dismissing its relevance is a mistake—one that could cost far more than you bargain for.

Decoding the chaos: what is data extraction from handwritten documents?

Beyond OCR: the rise (and fall) of early handwriting tech

The first wave of digitizing handwriting was powered by Optical Character Recognition (OCR)—a technology born in the mid-20th century and hyped as the solution to the world’s paper problem. But OCR, built for typefaces and clean lines, quickly crumbled under the weight of human idiosyncrasy.

Key technology definitions:

- OCR (Optical Character Recognition): Converts printed text images into machine-readable text. It struggles with cursive or messy handwriting.

- ICR (Intelligent Character Recognition): An evolution of OCR, using algorithms to recognize handwritten characters with variable success.

- HWR (Handwriting Recognition): The umbrella term for software that interprets both printed and cursive handwritten inputs.

Early systems faltered because handwriting is chaos incarnate—characters overlap, styles fluctuate, and context matters. OCR’s reliance on rigid templates meant anything outside the lines was left in the digital dust.

The result? An industry scramble, with millions wasted on failed digitization projects and a growing appetite for smarter, context-aware solutions.

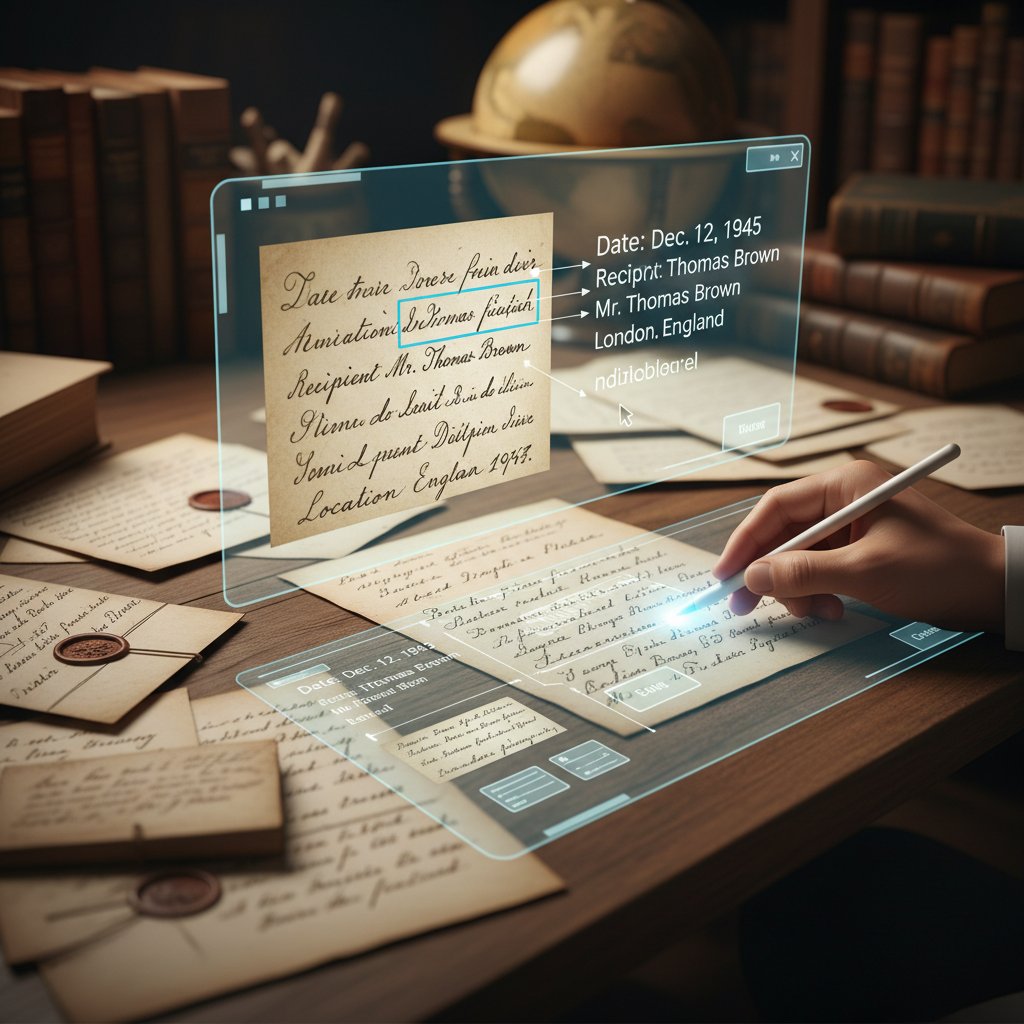

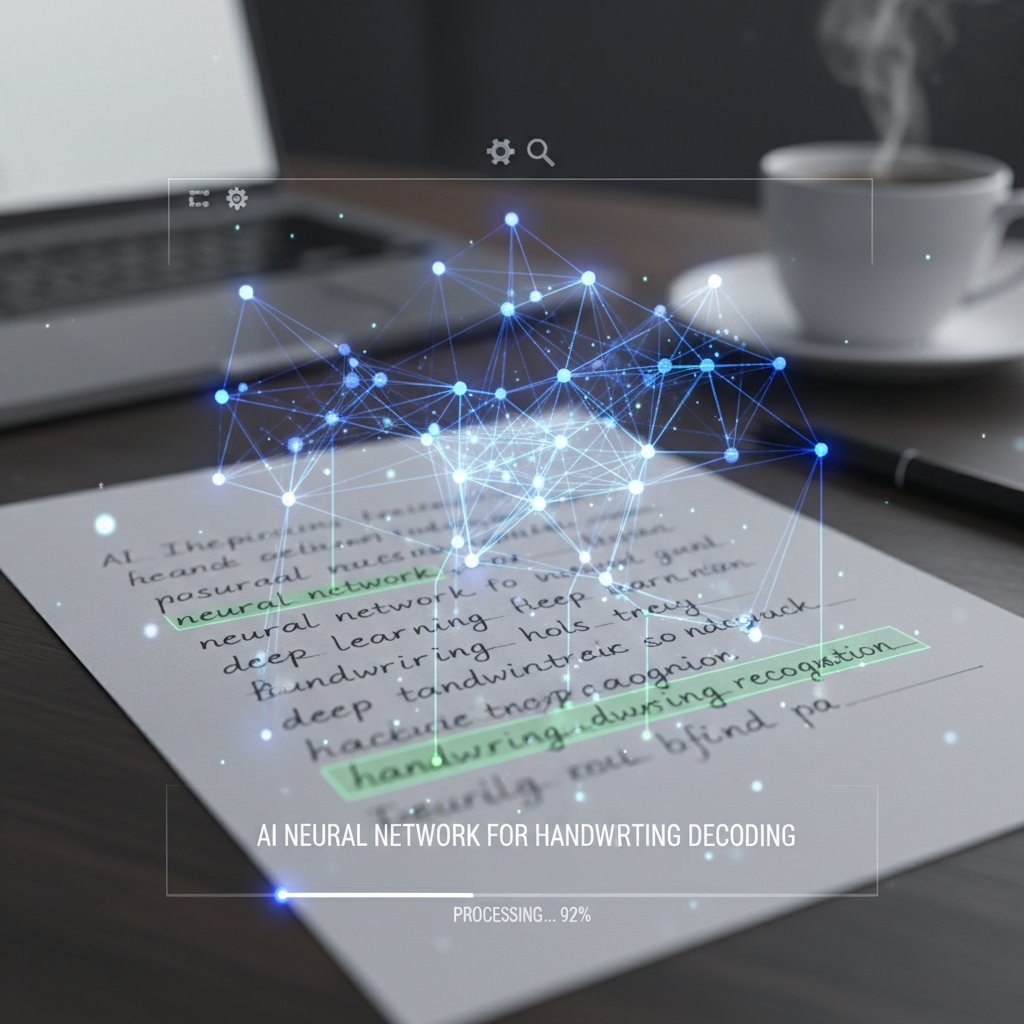

From pixels to meaning: how modern AI reads handwriting

Today, the game has changed. Modern AI doesn’t just look for shapes—it reads for meaning. Deep learning models, especially Convolutional Neural Networks (CNN), Bidirectional LSTM (BLSTM), and emerging Graph Neural Networks, analyze entire word structures, context, and even writer-specific quirks.

| Approach | Strengths | Weaknesses | Example Use Case |
|---|---|---|---|
| Classic OCR | Fast on printed text, cheap, widely available | Poor with cursive/messy handwriting | Tax form digitization |
| ICR | Handles some handwriting, better for forms | Struggles with complex scripts | Insurance claims processing |
| Deep Learning | Adaptive, context-aware, high accuracy | Data-hungry, needs large diverse sets | Historical manuscripts |
| Hybrid Methods | Blends rule-based and AI for robustness | More complex to implement | Legal records, signatures |

Table 1: Major approaches to handwriting data extraction and their strengths/weaknesses. Source: Original analysis based on Marmelab, 2023; MDPI, 2024

Despite these advances, even the most sophisticated models face drop-offs in accuracy when encountering cursive, multilingual, or low-quality scans. The difference between a misread digit and a life-saving instruction can be razor thin.

TextWall.ai and the new breed of document analysis tools

Enter platforms like TextWall.ai. Instead of treating handwriting as a leftover nuisance, these tools leverage advanced AI to analyze, summarize, and extract actionable insights from even the most unruly documents.

- Context-aware extraction: Understands not just the letter, but the meaning behind handwriting in complex contexts.

- Industry-specific models: Tailors analysis for legal, healthcare, and academic scripts, boosting accuracy where it matters.

- Workflow integration: Embeds AI summaries and data points directly into organizational ecosystems for seamless action.

No, TextWall.ai doesn’t work miracles. But by combining cutting-edge LLMs with robust document processing, it’s helping analysts dig meaning out of the handwriting mess—without pretending the problem is solved.

The brutal reality: why most handwritten data extraction fails

The myth of ‘good enough’ accuracy

Vendors love to tout near-perfect accuracy, but reality bites. According to a 2024 review, clear handwriting yields 85-90% extraction accuracy; cursive or messy samples drop to 60-70% or worse [Reddit Computer Vision, 2024].

| Document Type | Average Accuracy (Clear) | Average Accuracy (Cursive/Messy) | Sample Size |
|---|---|---|---|
| Medical Forms | 88% | 62% | 2,000 |
| Legal Records | 90% | 68% | 1,500 |
| Academic Notes | 85% | 65% | 1,200 |
| Historical Docs | 83% | 60% | 900 |

Table 2: Handwritten data extraction accuracy rates by document type. Source: Reddit Computer Vision, 2024

Here’s the kicker: “good enough” isn’t good enough when errors mean regulatory fines, medical misadventures, or destroyed reputations. There’s a canyon between demo stats and real-world results, and most organizations fall headlong into it.
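The source does not say how the "accuracy" figures in Table 2 were measured. One common way to make such a number concrete is character error rate (CER): the edit distance between the reference transcription and the extracted text, divided by the reference length. The sketch below is a minimal illustration under that assumption; the function names are hypothetical and not taken from any tool mentioned here.

```python
def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance: fewest insertions, deletions, and substitutions."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # delete a reference character
                            curr[j - 1] + 1,      # insert a hypothesis character
                            prev[j - 1] + cost))  # substitute, or match for free
        prev = curr
    return prev[-1]


def character_error_rate(ref: str, hyp: str) -> float:
    """CER = edit distance / length of the reference transcription."""
    if not ref:
        raise ValueError("reference transcription must not be empty")
    return edit_distance(ref, hyp) / len(ref)
```

For example, reading "penicillin allergy" as "penicilin alergy" drops two characters out of 18, a CER of roughly 0.11. Whether that counts as "89% accurate" or as a failed allergy field depends entirely on which metric a vendor chose to report.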

Real-world horror stories: when extraction goes wrong

Consider a major hospital that digitized 50,000 handwritten patient records using off-the-shelf OCR. The result? Nearly 20% of allergy notations were misread, leading to prescription errors and a costly public apology. In the financial sector, botched extraction of handwritten check amounts triggered six-figure reconciliation nightmares.

"We trusted automation, but manual review caught dozens of critical errors daily. It was a wake-up call." — Compliance Officer, Multinational Bank, Saxon AI, 2024

It’s not just about lost time—it’s about real-world consequences. When extraction goes wrong, the damage can be measurable in lives, dollars, and reputational capital.

Edge cases the experts don’t talk about

The headlines focus on big wins, but the edge cases—the ugly, inconvenient ones—are where most projects flounder:

- Multilingual scripts: Mixed-language forms confuse even state-of-the-art AI models.

- Non-standard layouts: Freehand notes, marginalia, and overlapping text derail extraction pipelines.

- Historical degradation: Faded ink, torn pages, and archaic spelling collapse accuracy rates.

- Sensitive contexts: Forensics and espionage work demand extraction with near-zero tolerance for error.

What gets lost in these edge cases isn’t just data—it’s the credibility of the entire extraction process. If you’re not ready for the outliers, you’re not ready for prime time.

Inside the black box: how AI really extracts handwritten data

Neural networks vs. classic OCR: what’s changed?

Classic OCR tried to recognize static patterns; neural networks learn from context, style, and the messiness of real writing. Modern AI models can be trained on millions of handwriting samples, learning to spot subtle cues—a looped ‘l’, a crossed ‘t’, or the spacing between words.

| Feature | Classic OCR | Neural Network Models |
|---|---|---|
| Training Data Needed | Limited | Extensive, diverse |
| Adaptability | Low | High |
| Accuracy (Handwriting) | 60-75% | 85-90%+ (clear samples) |
| Languages Supported | Few | Many |
| Handling Cursive | Poor | Good to excellent |
| Contextual Understanding | None | Yes |

Table 3: OCR vs modern neural network-based handwriting recognition. Source: Original analysis based on Marmelab, 2023; MDPI, 2024

Neural networks aren’t perfect—they’re data-hungry and prone to bias—but they’ve turned handwritten data extraction from a pipe dream to a practical, if still imperfect, reality.

Step-by-step: how a handwritten note becomes structured data

- Scanning: Capture a high-resolution image of the handwritten document.

- Preprocessing: Clean the image—deskew, remove background noise, enhance contrast.

- Segmentation: Identify lines, words, and characters using computer vision techniques.

- Recognition: Apply trained neural networks to interpret characters and words.

- Post-processing: Use contextual analysis (e.g., medical lexicons) to correct errors.

- Validation: Human-in-the-loop review or automated confidence scoring flags uncertain outputs.

- Data export: Structure the extracted text into a database or digital workflow.

Each step is a war against ambiguity—one missed pixel, and meaning can be lost or twisted.
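The post-processing and validation steps above can be sketched in a few lines. This is an illustration only: it assumes the recognizer returns a text and a confidence score per token, and the lexicon, the `Token` type, and the 0.85 review threshold are all hypothetical values chosen for the example. Fuzzy matching uses `difflib` from the Python standard library.

```python
import difflib
from dataclasses import dataclass

# Hypothetical domain lexicon; a real one would be built from your own data.
LEXICON = ["penicillin", "ibuprofen", "amoxicillin", "aspirin"]
REVIEW_THRESHOLD = 0.85  # assumed cutoff, to be tuned on your own documents


@dataclass
class Token:
    text: str          # raw recognizer output
    confidence: float  # recognizer's own score in [0, 1]


def correct_with_lexicon(word: str, cutoff: float = 0.8) -> str:
    """Snap a word to its closest lexicon entry, or leave it unchanged."""
    matches = difflib.get_close_matches(word.lower(), LEXICON, n=1, cutoff=cutoff)
    return matches[0] if matches else word


def postprocess(tokens):
    """Return a list of (corrected_text, needs_review) pairs."""
    results = []
    for tok in tokens:
        corrected = correct_with_lexicon(tok.text)
        # Flag low-confidence tokens and any token the lexicon changed.
        needs_review = tok.confidence < REVIEW_THRESHOLD or corrected != tok.text
        results.append((corrected, needs_review))
    return results
```

With the sample lexicon, a token read as "penicilin" is corrected to "penicillin" and still flagged for review, because a silent correction in a medical field is exactly the kind of change a human should confirm.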

What makes handwriting so hard for machines?

The devil is in the detail—and the variance.

- The meaning of a shape depends on neighboring words, page layout, and even the writer’s mood.

- Letters blend, overlap, and morph in cursive writing, confounding basic pattern recognition.

- Real-world datasets are rarely as clean or large as public MNIST-like benchmarks.

The result? Even the best AI struggles when thrown into the wild—especially with rare scripts, cross-outs, and creative flourishes.

The accuracy trap: numbers, benchmarks, and dirty secrets

Comparing AI handwriting tools: who’s really winning?

Vendors jostle for top billing, but the truth is in the fine print. Accuracy rates for general handwriting hover around 85-90% for clear samples, but drop off dramatically for complex or degraded documents.

| Tool/Platform | Printed Text Accuracy | Handwriting Accuracy (Clear) | Handwriting Accuracy (Cursive) | Supported Languages | Public Dataset Used |
|---|---|---|---|---|---|
| ABBYY FineReader | 99% | 86% | 69% | 190+ | Yes |
| Google Cloud Vision | 99% | 88% | 74% | 50+ | Yes |
| Tesseract | 98% | 83% | 60% | 100+ | Yes |
| Custom BLSTM Model | 97% | 91% | 80% | Custom | No (private) |
| TextWall.ai* | 99% | 90%+ | 78%+ | 140+ | Proprietary/Mixed |

Table 4: Handwriting recognition tool comparison. Source: Original analysis based on Reddit Computer Vision, 2024; Marmelab, 2023

The takeaway? There’s no silver bullet. Features, supported languages, and use-case fit matter as much as raw accuracy numbers.
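A quick calculation shows why headline percentages can mislead. Suppose, purely as an assumption, that a quoted figure is per-character accuracy and that errors are independent. Then the chance that an entire field comes out right shrinks exponentially with its length. The helper below is a hypothetical sketch of that arithmetic, not a description of how any listed tool reports its numbers.

```python
def field_accuracy(char_accuracy: float, field_length: int) -> float:
    """Probability that every character in a field is correct,
    assuming independent per-character errors."""
    if not 0.0 <= char_accuracy <= 1.0:
        raise ValueError("char_accuracy must be between 0 and 1")
    return char_accuracy ** field_length


for acc in (0.99, 0.90, 0.70):
    share = field_accuracy(acc, 10)
    print(f"{acc:.0%} per character -> {share:.1%} of 10-character fields fully correct")
```

Under these assumptions, 90% per-character accuracy leaves only about 35% of ten-character fields fully correct, and 70% leaves under 3%. Real errors are not independent, so treat this as an order-of-magnitude check rather than a prediction.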

Critical factors that make or break accuracy

- Dataset diversity: The best results come from large, varied training data, not public toy sets.

- Domain adaptation: Medical, legal, and historical documents require specialized AI models.

- Human-in-the-loop: Automated extraction is rarely flawless—expert review catches edge-case errors.

- Image quality: Resolution, contrast, and scan consistency all play a major role.

- Contextual correction: Language models and custom lexicons polish rough outputs into usable data.

The only guarantee is that shortcuts—like ignoring real-world variability—will come back to haunt you.

What nobody tells you about ground truth data

Ground truth—the reference standard used to train and benchmark AI—sounds objective. In reality, it’s often a hodgepodge of inconsistent, sometimes error-ridden human transcriptions.

"Benchmarks are only as good as the data behind them. Garbage in, garbage out—especially with handwriting." — Dr. Lisa Cheng, AI Researcher, MDPI, 2024

Without robust, well-annotated ground truth, accuracy claims mean little. If you want trustworthy extraction, invest in high-quality training data—or risk automating your own errors at scale.
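One inexpensive check on ground truth quality is to have two people transcribe the same sample independently and measure how often they agree. The sketch below assumes field-level transcriptions stored as parallel lists; the function name and the normalization (trimming whitespace, ignoring case) are illustrative choices, not an established standard.

```python
def annotator_agreement(transcriptions_a, transcriptions_b):
    """Share of fields where two independent transcribers wrote the same text,
    after trimming whitespace and ignoring case."""
    if len(transcriptions_a) != len(transcriptions_b):
        raise ValueError("both annotators must label the same fields")
    if not transcriptions_a:
        raise ValueError("need at least one field")
    same = sum(a.strip().lower() == b.strip().lower()
               for a, b in zip(transcriptions_a, transcriptions_b))
    return same / len(transcriptions_a)
```

If two careful humans agree on only 75% of fields, a model scored against either one of them cannot meaningfully claim 95% accuracy.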

From field notes to forensics: wild applications you never considered

Medical records: saving lives (and lawsuits)

Extracting data from handwritten medical notes isn’t just about efficiency—it’s about patient safety and legal exposure. Hospitals still receive thousands of handwritten forms daily, from intake sheets to emergency notes. AI-driven extraction tools, when properly implemented, halve manual data entry workload and help flag critical information like allergies and dosages, reducing error rates and regulatory risk [Saxon AI, 2024].

But extraction failures in this context have real consequences: missed diagnoses, medication errors, and lawsuits that cost millions. That’s why leading institutions are blending deep learning with strict human oversight, ensuring both speed and accountability.

Disaster relief, criminal justice, and other high-stakes use cases

Beyond the obvious, handwritten data extraction powers surprising, high-impact applications:

- Disaster relief: Field agents in war or disaster zones collect critical information by hand when digital systems are down.

- Criminal justice: Police logbooks, witness statements, and evidence tags are often handwritten, and accurate extraction can impact convictions.

- Forensic analysis: Identifying handwriting in ransom notes or fraud investigations demands precision beyond simple OCR.

- Education: Grading handwritten exams or analyzing survey responses at scale.

In each case, mistakes can cost lives, liberty, or critical resources. There is no margin for error—AI must be both ruthless and humble.

Extraction in these fields means confronting the limits of technology, and knowing when to bring humans back into the loop.

Art, history, and the fight to preserve culture

The battle to digitize centuries-old manuscripts is both a technical and cultural crusade. AI-powered extraction is helping museums and archives salvage fragile texts that would otherwise fade into oblivion—deciphering letters, diaries, and legal documents that anchor our collective memory.

"Every word saved from a crumbling page is a victory—not just for technology, but for culture." — Dr. Amira Feldman, Digital Humanities, Emerald Insight, 2024

Preservation isn’t a numbers game—it’s about accuracy, context, and respect for the original artifact. The fight is far from over.

Handwriting extraction in practice: lessons from the front lines

Case studies: success stories and cautionary tales

In healthcare, a mid-sized hospital implemented a hybrid neural network and manual review pipeline, slashing data entry errors by 45% and cutting administrative work by half. On the flip side, a global courier giant rolled out automated extraction for international waybills—only to discover that non-Latin scripts and poor-quality scans tanked accuracy, triggering costly manual reprocessing and customer complaints.

| Organization | Approach | Outcome | Key Learnings |
|---|---|---|---|
| Hospital X | Hybrid AI + human review | 45% fewer data entry errors, administrative work cut by half | Human review essential |
| Courier Corp | OCR Only | 30% rework, delayed shipments | Script diversity is a killer |
| Legal Firm Y | Custom ICR | 80% faster document search | Domain-specific models pay off |

Table 5: Real-world outcomes of handwriting extraction in practice. Source: Original analysis based on Saxon AI, 2024; Marquee Insights, 2024

The lesson: context is king. Choose tools and methodologies that fit your data, not the other way around, and never underestimate the chaos lurking in “real” documents.

Step-by-step: implementing AI data extraction (and not failing)

- Audit your handwritten data: Identify sources, types, and common issues (cursive, multilingual, degraded).

- Test with real samples: Avoid demo data—run extraction tests on your own worst-case scenarios.

- Select and train models: Blend off-the-shelf and custom AI, focusing on domain-specific vocabularies and layouts.

- Human-in-the-loop review: Set up workflows for expert validation and error correction.

- Iterate and benchmark: Continuously measure, adjust, and improve based on error rates and feedback.

- Integrate with workflows: Make extracted data actionable—don’t let it become digital dead weight.

- Monitor compliance: Track and document accuracy, privacy, and regulatory metrics.

Implementation isn’t about pressing “start”—it’s about designing an ecosystem that catches failure before it spirals.
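For the "test with real samples" and "iterate and benchmark" steps, a single overall accuracy number hides the slices that hurt most. A minimal sketch, assuming you have labeled test samples tagged by slice (the slice names and the tuple layout are hypothetical), reports accuracy per slice and surfaces the worst one:

```python
from collections import defaultdict


def accuracy_by_slice(samples):
    """samples: iterable of (slice_name, expected_text, extracted_text)."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for slice_name, expected, extracted in samples:
        totals[slice_name] += 1
        correct[slice_name] += int(expected == extracted)
    return {name: correct[name] / totals[name] for name in totals}


def worst_slice(samples):
    """Return (slice_name, accuracy) for the lowest-scoring slice."""
    scores = accuracy_by_slice(samples)
    return min(scores.items(), key=lambda item: item[1])
```

Tracking the worst slice over time, rather than the average, keeps attention on the cursive, multilingual, or degraded documents identified in the audit step.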

Checklist: are you really ready to automate?

- Have you assessed document diversity (languages, layouts, legibility)?

- Is your AI model trained on your actual data—not generic sets?

- Do you have human review and error correction in place?

- Are there clear escalation paths for edge-case failures?

- Have you benchmarked against industry standards and real-world scenarios?

- Is data privacy and compliance addressed at every step?

- Can you track and audit every extracted data point?

If you can’t answer “yes” to all of the above, your automation may be more liability than asset.
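The last checklist item, tracking and auditing every extracted data point, is easier when each value carries its provenance from the start. The record layout below is a hypothetical sketch: the field names, the SHA-256 fingerprint of the scan, and the JSON-lines format are assumptions for illustration, not a compliance standard.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass(frozen=True)
class ExtractionRecord:
    source_sha256: str                 # fingerprint of the scanned image
    field_name: str
    value: str
    confidence: float
    model_version: str
    reviewed_by: Optional[str] = None  # stays None until a human signs off


def fingerprint(image_bytes: bytes) -> str:
    """Hex SHA-256 digest of the raw scan, used to tie a value to its source."""
    return hashlib.sha256(image_bytes).hexdigest()


def to_audit_line(record: ExtractionRecord) -> str:
    """Serialize one record as a JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)
```

Appending one such line per extracted field gives reviewers a way to trace any value back to a specific scan and model version.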

Debunked: common myths (and one uncomfortable truth)

Myth #1: AI can’t read messy handwriting

It’s easy to claim AI is stumped by bad handwriting, but the current reality is more nuanced. Custom-trained deep learning models, particularly those tailored to a specific writer or dataset, now routinely outperform human transcribers—provided the dataset is large and well-annotated [MDPI, 2024].

"Current AI can decode handwriting once considered indecipherable, but only when fed enough data and context." — Prof. Elena Torres, AI Vision Lab, [Springer, 2023]

The myth isn’t dead, but it’s on life support—at least in high-resource settings.

Myth #2: Manual entry is safer and more accurate

The default assumption is that humans are always right. In practice, studies show manual data entry error rates ranging from 1% to 4% per field, and sometimes higher in high-stress, high-volume environments.

- Human errors are systematic—fatigue, distraction, and bias play a role.

- Manual review is expensive and slow, with costs mounting quickly at scale.

- Hybrid AI+human workflows consistently outperform manual-only approaches in both speed and error rate.

Blind trust in manual entry is the mirror image of automation bias, and it carries its own risks.

The hard truth? For critical workflows, relying solely on either humans or machines is a recipe for disaster. Combine the best of both, or prepare to accept the fallout.

The uncomfortable truth: no system is perfect

Everyone wants a simple answer. But whether you’re using AI, humans, or a Frankenstein combination, there are always trade-offs.

| Method | Best-case Accuracy | Typical Error Rate | Human Oversight Needed? | Cost/time efficiency |
|---|---|---|---|---|
| Manual Entry | 98-99% | 1-4% | N/A | Low |
| Classic OCR | 98% (print) | 10-40% (handwritten) | Yes | Medium |
| AI Extraction | 93-95% (best) | 5-10% (real-world) | Yes | High |
| Hybrid Model | 96-98% | <2% | Yes | High |

Table 6: Real-world error rates in handwritten data extraction. Source: Original analysis based on Birmingham City University, 2024

Any vendor, consultant, or white paper promising perfection is selling snake oil. The key is transparency, continuous improvement, and ruthless honesty about limitations.
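To see how a hybrid workflow might reach a lower error rate than either AI or humans alone, consider a simple, assumed model: some share of the AI's errors gets flagged for review, and reviewers miss a small share of what they see. The function and its inputs are hypothetical, and the model ignores reviewers introducing new errors into fields that were already correct.

```python
def residual_error_rate(ai_error: float, flagged_share: float, reviewer_miss: float) -> float:
    """Errors left after review under a simplified, assumed model.

    ai_error:      share of fields the AI gets wrong
    flagged_share: share of those errors that reach a human reviewer
    reviewer_miss: share of reviewed errors the human fails to fix
    """
    for name, value in (("ai_error", ai_error),
                        ("flagged_share", flagged_share),
                        ("reviewer_miss", reviewer_miss)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    unflagged = ai_error * (1.0 - flagged_share)       # errors nobody looks at
    missed = ai_error * flagged_share * reviewer_miss  # reviewed but not fixed
    return unflagged + missed
```

With an 8% AI error rate, 90% of errors flagged, and reviewers missing 2% of what they check, the residual is about 0.9%. The dominant term is the unflagged errors, which is why the quality of confidence scoring matters as much as the reviewers themselves.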

Risks, ethics, and the future of handwritten data extraction

Privacy nightmares and how to avoid them

Extracting data at scale means dealing with sensitive information—medical records, legal evidence, personal identifiers. One breach could spell disaster. To avoid privacy catastrophes:

- Use on-premise or encrypted cloud processing for sensitive documents.

- Anonymize data by default and restrict access based on need-to-know.

- Audit every extraction for compliance, and maintain detailed logs.

- Train staff and AI models to recognize and flag risky content.

- Work only with vendors who demonstrate robust security certifications and clear data handling policies.

Breach prevention isn’t a checklist—it’s an ongoing, organization-wide discipline.
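As one illustration of "anonymize by default", extracted text can be pseudonymized before it leaves the processing environment. The sketch below replaces matched identifiers with a keyed HMAC token so the same value always maps to the same placeholder. The two regular expressions are deliberately simple, hypothetical patterns; real identifiers vary by country and document type and need far more careful handling.

```python
import hashlib
import hmac
import re

# Illustrative patterns only; they will miss many real-world formats.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def pseudonymize(text: str, secret_key: bytes) -> str:
    """Replace matched identifiers with a keyed, repeatable token."""
    def make_replacer(label):
        def replace(match):
            digest = hmac.new(secret_key, match.group().encode("utf-8"),
                              hashlib.sha256).hexdigest()
            return f"[{label}:{digest[:8]}]"
        return replace

    for label, pattern in PATTERNS.items():
        text = pattern.sub(make_replacer(label), text)
    return text
```

Using a keyed hash rather than a plain one means the tokens cannot be reversed by simply hashing a list of known phone numbers, provided the key itself is kept secret.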

Bias, fairness, and the algorithmic blind spot

AI reflects the biases in its training data. If your extraction tool has never seen a certain handwriting style, script, or language, it will fail in ways that are invisible until someone gets hurt.

| Bias Type | Source of Problem | Real-world Impact |
|---|---|---|
| Dataset bias | Overrepresented scripts | Under-served communities |
| Algorithmic bias | Model optimization shortcuts | Systematic misclassification |
| Annotation bias | Inconsistent human labelling | Skewed accuracy stats |

Table 7: Common biases in handwritten data extraction. Source: Original analysis based on Emerald Insight, 2024

"Fairness isn’t optional—especially when digitizing records that affect people’s rights and welfare." — Dr. Priya Narayan, Ethics in AI, Emerald Insight, 2024

Fighting bias means continual testing, explicit attention to diversity, and openness about where your tools might fall short.
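A first, crude test for dataset bias is simply counting what the training set contains. The sketch below assumes each training sample carries a script or language label and flags any group below a chosen share; the 5% threshold and the function name are hypothetical, and a low share is a prompt for investigation, not proof of unfairness.

```python
from collections import Counter


def underrepresented(labels, min_share=0.05):
    """Return {label: share} for groups below min_share of the training set."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no training samples supplied")
    return {label: count / total
            for label, count in counts.items()
            if count / total < min_share}
```

A training set that is 90% one script and 3% another would surface the smaller group here, which is a cue to collect more samples or to report accuracy for that group separately.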

Handwriting in an AI-dominated future: will it survive?

Despite relentless digital pressure, handwriting isn’t going anywhere. Its human quirks and context-dependent utility defy easy automation. What’s changing is the expectation that every squiggle can be instantly, perfectly digitized—a myth that needs smashing for good.

Handwriting is here to stay—but so is the need for smarter, more honest extraction methods. The future isn’t about elimination; it’s about integration.

Choosing your path: what to demand from your next data extraction tool

Must-have features (and overrated gimmicks)

- Domain-adaptive AI models trained on your data, not just public benchmarks.

- End-to-end encryption and compliance with privacy standards.

- Human-in-the-loop review and error correction pipelines.

- Transparent reporting, benchmarking, and audit trails.

- Seamless integration with your workflow, not just standalone widgets.

Skip the flashy dashboards and “100% AI automation” claims—real-world extraction is about robustness, not razzle-dazzle.

If your tool can’t handle your worst-case document, it’s not worth deploying.

Red flags when evaluating vendors

- Unverified accuracy claims (especially “99%+” for handwriting).

- Lack of transparency about dataset sources and model training.

- No clear path for human review or escalation.

- Unwillingness to share real-world error metrics.

- Vague or generic privacy and security guarantees.

If a vendor dodges hard questions, run.

Checklist: making the switch with minimal pain

- Benchmark your current workflows—know your baseline error rates and costs.

- Pilot new tools on the toughest, most representative documents.

- Involve stakeholders from compliance, IT, operations, and legal early.

- Demand transparent reporting and failover mechanisms.

- Build in continuous feedback loops for improvement and incident response.

- Document every decision for compliance and future audits.

Change is hard, but deliberate planning prevents disaster down the road.

Beyond extraction: the new frontier of document intelligence

From data to action: unlocking insights with AI

Extraction is the first step. The real value comes from what happens next—using AI to categorize, summarize, and connect the dots across a sea of digitized data.

- Automated categorization for risk analysis.

- Instant summaries for dense reports and field notes.

- Prescriptive analytics that recommend actions based on extracted trends.

The goal isn’t just digitization—it’s actionable intelligence.

Integrating with the rest of your workflow

Bringing handwritten data into the digital fold means more than dumping text into a database. For true impact:

- Map extracted data to business processes and decision points.

- Connect with APIs and enterprise resource planning (ERP) systems.

- Set up notification systems for flagged anomalies or errors.

- Automate downstream actions—like pre-filling forms or alerting compliance teams.

- Monitor ROI continuously and adjust pipelines as needed.

Integration is where the payoff happens—or where projects die on the vine if neglected.
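The integration steps above amount to a routing decision for each extracted field. As a minimal sketch, assuming each field arrives with a name, value, and confidence score, a small rule function can decide what happens next. The action names, the threshold, and the allergy rule are all hypothetical placeholders for whatever your own systems expose.

```python
from dataclasses import dataclass


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float


def route(field: ExtractedField, review_threshold: float = 0.85) -> str:
    """Pick a downstream action for one extracted field (rules are illustrative)."""
    if field.confidence < review_threshold:
        return "send_to_human_review"   # uncertain values never flow on unchecked
    if field.name == "allergy" and field.value.strip():
        return "alert_clinical_team"    # high-stakes field triggers a notification
    return "prefill_record"             # routine values go straight into the form
```

Keeping these rules in one small, testable function makes it easier to audit why a given value was auto-filled instead of reviewed.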

Tomorrow’s document intelligence is about closing the loop from extraction to action, not just filling virtual filing cabinets.

What’s next: handwriting, LLMs, and the future of work

Large Language Models (LLMs) like those powering TextWall.ai are blurring the line between reading and reasoning. They don’t just extract—they interpret, contextualize, and synthesize insights from formerly unreadable documents.

But even the best LLM needs real, ground-truth data and expert oversight. The promise isn’t “no humans required”—it’s amplification of what humans do best: making judgment calls, recognizing context, and catching what algorithms inevitably miss.

Supplementary: handwriting extraction and the law

Legal challenges of digitizing handwritten documents

Turning handwritten records into digital data is a legal minefield. Key challenges include:

- Proving authenticity and chain of custody for digitized records.

- Privacy compliance, especially under laws like GDPR.

- Intellectual property issues, especially with historical or culturally sensitive documents.

- Regulatory requirements for data retention and audit trails.

- Cross-border transfer rules for sensitive extracted data.

Legal due diligence is non-negotiable—ignore at your peril.

Regional differences: what you need to know

| Region | Key Legal Considerations | Data Protection Laws |
|---|---|---|
| EU | GDPR, explicit consent, right to erasure | Strong |
| US | HIPAA (healthcare), state laws | Moderate |
| APAC | Varies: some strict, some lax | Mixed |
| Middle East | Often sector-specific | Mixed/strict |

Table 8: Regional legal differences in handwritten data extraction. Source: Original analysis based on Emerald Insight, 2024

Laws are evolving—work with legal counsel at every stage.

Supplementary: unexpected applications and future trends

Unconventional uses for handwritten data extraction

- Genealogy research, digitizing family trees from old ledgers.

- Sports analytics: extracting hand-kept game stats and playbooks.

- Creative arts: archiving and analyzing handwritten lyrics, screenplays, and sketches.

- Intelligence: monitoring handwritten communications for security analysis.

The versatility of handwritten data extraction is only limited by imagination—and sound data governance.

The environmental impact of digital handwriting analysis

Digitizing handwriting isn’t just a technical or business issue—it’s an environmental one. Scanning and processing vast archives saves paper and storage space, but training massive AI models has its own carbon footprint.

| Activity | Environmental Impact | Mitigation Strategies |
|---|---|---|
| Paper document storage | High energy, material waste | Digital archiving |
| AI model training | Significant energy consumption | Green data centers, model pruning |
| Cloud processing | Variable, depending on provider | Renewable-powered cloud services |

Table 9: Environmental considerations in handwritten data extraction. Source: Original analysis based on Birmingham City University, 2024

Sustainability is an emerging metric for document intelligence platforms.

Glossary: essential terms for understanding handwritten data extraction

- OCR (Optical Character Recognition): Converts printed text into machine-readable data. Struggles with handwriting.

- ICR (Intelligent Character Recognition): An advanced form of OCR tailored for printed and some handwritten text.

- BLSTM (Bidirectional LSTM): A type of neural network that analyzes sequences in both directions, which is crucial for understanding handwriting context.

- Ground truth: The reference standard against which extraction models are trained and evaluated. Only as good as its human annotation.

- Human-in-the-loop: Workflow where humans validate and correct AI outputs, especially in ambiguous or high-risk scenarios.

- Dataset diversity: The broad representation of scripts, languages, and handwriting styles needed for robust model training.

- Public datasets: Openly available data for AI training, rarely representative of messy, real-world handwriting.

These definitions are based on current industry usage and recent academic publications [MDPI, 2024].

Conclusion

The myth that handwriting is dead is just that—a myth. The reality is messier, more human, and more urgent than ever: data extraction from handwritten documents is the silent battlefield where compliance, efficiency, and accuracy are either won or lost. AI has made breathtaking progress, but there is no silver bullet—edge cases, legal pitfalls, privacy landmines, and old-fashioned human error still lurk in the margins. Analysts who rush in unprepared, trusting raw accuracy stats or generic tools, are playing with fire. The real winners are those who audit their data, blend AI with human oversight, and demand ruthless transparency from vendors. If you care about data integrity—whether in patient records, legal evidence, or the preservation of culture—don’t believe the hype. Scrutinize, benchmark, and challenge every claim. The payoff is more than digital convenience: it’s the difference between clarity and chaos, truth and tragedy.

For those ready to take handwritten data extraction seriously, platforms like TextWall.ai offer a path through the noise, distilling actionable insights from the most chaotic sources. But remember: trust, verify, and always keep a human eye on the machine. In the end, surviving the handwriting revolution is about balance: between old and new, speed and accuracy, automation and accountability.

Sources

References cited in this article

- Reddit Computer Vision 2024 Review (reddit.com)

- Marmelab OCR Blog (marmelab.com)

- Saxon AI IDP Trends (saxon.ai)

- MDPI Review (mdpi.com)

- Emerald Insight (emerald.com)

- Birmingham City University (libguides.bcu.ac.uk)

- The Economist (economist.com)

- Oxford Handwriting Whitepaper (oup.com.au)

- Springer AI Review (link.springer.com)

- Enzuzo Privacy Stats (enzuzo.com)

- Docsumo IDP Market (docsumo.com)

- Handwritten Notes Software Stats (llcbuddy.com)

- Handwriting Digital Pens Market (thebusinessresearchcompany.com)

- Algodocs Challenges (algodocs.com)

- KlearStack on AI for PDFs (klearstack.com)

- MDPI Survey (mdpi.com)

- AIMultiple OCR Benchmark (research.aimultiple.com)

- PMC Survey (pmc.ncbi.nlm.nih.gov)

- V7 Labs AI Extraction (v7labs.com)

- PubMed on RPA + AI (pubmed.ncbi.nlm.nih.gov)

- NanoNets AI Extraction (medium.com)

- Pixno OCR Trends (photes.io)

- AIMultiple Benchmark (research.aimultiple.com)

- AI Genealogy Insights (aigenealogyinsights.com)

- MakeSpaceForGrowth (makespaceforgrowth.com)

- Roberto Rocha (robertorocha.info)

- ExpertBeacon (expertbeacon.com)

- Docsumo Accuracy (docsumo.com)

- Marketing Scoop OCR Accuracy (marketingscoop.com)

- AlgoDocs Guide (algodocs.com)

- Nature Scientific Reports (nature.com)

- UBIAI OCR Overview (ubiai.tools)

- ScienceDirect (sciencedirect.com)

- Microsoft Syntex (marqueeinsights.com)

- AWS IDP Guide (d1.awsstatic.com)

- Handwriting Analysis Myths (handwriting.feedbucket.com)

- Evolution AI Blog (evolution.ai)
