Academic Research Document Analysis in the Age of AI and Bias

Academic research document analysis is in the middle of a seismic shift, one that rewrites the rules, shatters old assumptions, and demands a new level of ruthless honesty. If you think you know how to dissect a research paper, analyze dense reports, or trust the findings of the last meta-analysis you read, think again. Any document you review might be misleading, incomplete, or deliberately manipulative. Regulatory crackdowns, the explosion of AI-powered tools, and a spike in academic misconduct have made 2025 a pivotal year: adapt or get left behind. This guide lays out the hard truths about academic research document analysis, the advanced methods that separate the pros from the naive, and strategies for running your next review without falling victim to hidden pitfalls. Whether you’re a researcher, student, or professional, this is your map for surviving, and thriving, in the new world of research review.

Why everything you know about document analysis is outdated

The shocking failures of conventional methods

Once upon a time, academic research document analysis was a slow, painstaking ritual. You’d print out a paper, grab your highlighters, and start marking up the text. The assumption? That careful, manual reading would reveal every flaw and every insight. But according to Hogan Lovells (2024), this approach is woefully outdated and often catastrophic in today’s environment. Recent regulatory audits have revealed that over 40% of research reviews using traditional methods missed major compliance issues or failed to flag misrepresented data.

The core problem is that academic documents themselves are often incomplete, inaccurate, or outdated. Modern academic fraud is more sophisticated than ever, with researchers gaming citation metrics and hiding questionable practices in dense appendices. As Walden University notes, the traditional “read and highlight” method leaves reviewers vulnerable to confirmation bias, oversight, and fatigue-induced errors.

“Academic research integrity is facing unprecedented scrutiny, but our review methods haven’t kept pace. Too many rely on outdated habits that simply can’t catch the new wave of misconduct.” — Hogan Lovells, 2024

The cost of sticking to these legacy methods isn’t just inefficiency—it’s reputational risk, retracted publications, and wasted funding. If you’re still trusting the old playbook, you’re already falling behind.

What academia won’t admit about bias and blind spots

The myth of the “neutral reader” runs deep in academic circles. Yet research from Nature (2025) exposes a harsh reality: bias seeps into every stage of document analysis, from initial selection to final synthesis. Self-citation rates have soared, with some researchers drawing as much as 50% of their citations from themselves or close collaborators, a glaring sign of echo chambers and insularity within fields.

- Peer reviewers often unconsciously prioritize studies that confirm their own beliefs or benefit their networks.

- Journals may favor “positive” results, skewing what actually gets published and, subsequently, what gets analyzed.

- Traditional analysis rarely accounts for stakeholder feedback or integration with new data streams, leading to blind spots that AI-powered tools only partially address.

And here’s the kicker: academic institutions are often incentivized to keep these biases under wraps, fearing they’ll erode trust and funding. According to updated OMB and NIH guidelines, new disclosure and documentation standards are attempting to address these issues, but the culture of “see no evil, hear no evil” persists.

Ultimately, recognizing and addressing these biases isn’t just an ethical obligation—it’s a survival skill in the brutal landscape of 2025 research review.

How retractions and scandals exposed analysis flaws

Retractions are the academic world’s scarlet letters, and their numbers are rising at an alarming rate. According to Nature (2025), over 1,500 research articles were retracted globally in the past year alone, with AI-powered tools now uncovering previously undetected fraud and methodological errors.

| Year | Number of Retractions | Leading Causes |
|---|---|---|
| 2022 | 1,020 | Fraud, Data Manipulation |
| 2023 | 1,200 | Plagiarism, Ethics Violations |
| 2024 | 1,350 | Misconduct, Reporting Errors |
| 2025 | 1,500 | AI-Detected Fraud, Bias |

Table 1: The relentless increase in academic research retractions, 2022-2025. Source: Nature, 2025

The most notorious scandals—from fabricated cancer research to manipulated psychology studies—weren’t flagged by human reviewers but by automated systems trained to spot statistical anomalies and duplicated images. Old-school document analysis simply couldn’t scale to match the complexity and volume of modern academic output.

Retractions aren’t just academic embarrassments. They erode public trust, undermine entire fields, and cost millions in wasted grants. If your review strategy hasn’t adapted to these realities, you’re analyzing blind.

Breaking down the fundamentals: what is academic research document analysis?

Core concepts and why they matter

At its heart, academic research document analysis is the systematic process of dissecting, evaluating, and synthesizing written scholarship to extract meaning, assess reliability, and drive new insights. It’s more than just reading; it’s about interrogating the text, questioning assumptions, and uncovering what’s hidden between the lines.

Key Concepts:

- Critical appraisal: The rigorous evaluation of research validity, relevance, and methodological soundness.

- Systematic review: A structured, replicable process for identifying, vetting, and synthesizing existing research on a given topic.

- Text mining/NLP: The use of computational tools to extract patterns, trends, and themes from large bodies of text.

- Bias detection: Methods for identifying and mitigating conscious or unconscious distortions in research and analysis.

These concepts are the backbone of credible academic work in 2025, separating rigorous analysis from mere opinion or unfounded speculation.

Academic research document analysis matters because the stakes have never been higher. Policy decisions, medical treatments, and billions in funding ride on the ability to accurately interpret and critique research documents. In this context, superficial reading isn’t just lazy—it’s dangerous.

Beyond skimming: depth vs. speed in document review

The tension between depth and speed is the central dilemma of modern analysis. While AI tools promise instant summaries and rapid screening, true understanding requires lingering with the text, wrestling with its arguments, and cross-examining its claims.

| Review Approach | Speed | Depth | Risk of Missing Critical Insights |
|---|---|---|---|
| Manual Skimming | Fast | Low | High |
| AI-Assisted Review | Very Fast | Medium | Medium |
| Deep Qualitative Analysis | Slow | High | Low |
| Hybrid Workflow | Moderate | High | Low |

Table 2: Comparing document review approaches. Source: Original analysis based on Research.com, 2024; Nature, 2025

While the lure of speed is strong, the most robust analyses combine rapid AI triage with slow, critical reading by experts—a hybrid that sidesteps the pitfalls of both extremes.

In the world of academic research document analysis, shortcuts are seductive, but only depth delivers true insight.

Essential terminology you’re probably misusing

Many researchers toss around terms like “systematic review” or “meta-analysis” without fully grasping their technical meanings. This isn’t just pedantry—it can lead to fundamental errors in analysis and reporting.

Systematic review: A replicable, protocol-driven approach to identifying and synthesizing all relevant research on a specific question, minimizing bias through transparent and pre-registered methods.

Meta-analysis: A statistical technique that combines results from multiple studies to identify patterns or overall effects, contingent on the quality and comparability of the included research.

Narrative review: A less structured, more interpretive synthesis of findings, carried out with greater subjectivity than a systematic review.

Critical appraisal: The process of rigorously evaluating a study’s methodology, data quality, and relevance before incorporating it into a broader synthesis.

Making these distinctions is crucial for clear communication and credible evaluation. Misusing terms isn’t just a faux pas—it’s a red flag for reviewers and funders alike.
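To make the meta-analysis definition concrete, here is a minimal sketch of one common pooling method, the fixed-effect inverse-variance estimate. The effect sizes and standard errors are made-up illustration values, and the function name `fixed_effect_pool` is hypothetical. A real meta-analysis would also test for heterogeneity and would often use a random-effects model instead.

```python
import math

def fixed_effect_pool(effects, std_errors):
    """Pool study effect sizes using inverse-variance (fixed-effect) weights."""
    if len(effects) != len(std_errors) or not effects:
        raise ValueError("need one standard error per effect, and at least one study")
    # Precise studies (small standard error) get proportionally more weight.
    weights = [1.0 / (se ** 2) for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    # Approximate 95% confidence interval under a normal assumption.
    ci = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)
    return pooled, pooled_se, ci

# Hypothetical inputs: three studies reporting the same kind of effect size.
effects = [0.30, 0.10, 0.25]
std_errors = [0.10, 0.20, 0.10]

pooled, pooled_se, ci = fixed_effect_pool(effects, std_errors)
print(f"pooled effect = {pooled:.3f}, SE = {pooled_se:.3f}")
print(f"95% CI = ({ci[0]:.3f}, {ci[1]:.3f})")
```

Notice how the imprecise second study barely moves the pooled estimate. That is the point of weighting, and it is also why one low-quality study with a deceptively small standard error can distort the result.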

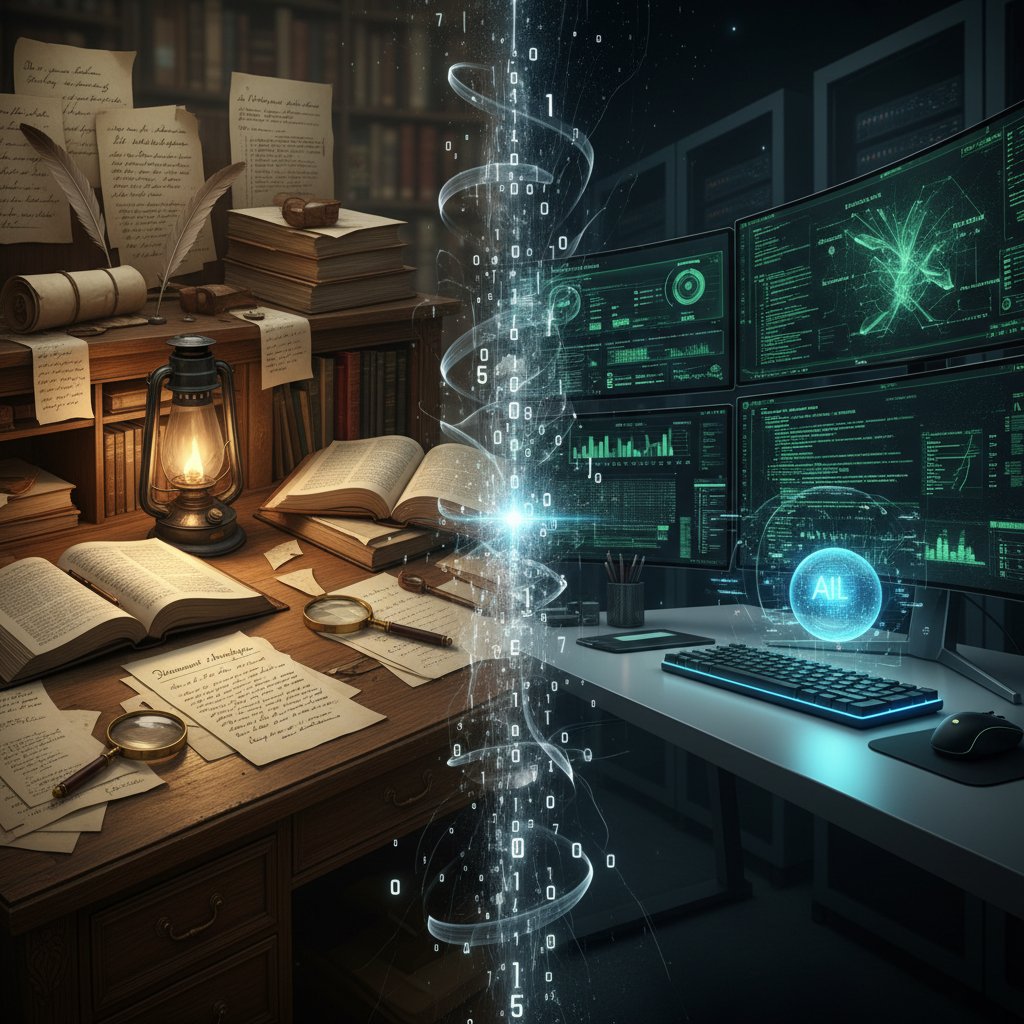

The evolution: from highlighters to LLMs and AI

A (very) brief history of document analysis

The journey from paper and pen to AI-driven platforms is a testament to the relentless drive for efficiency and accuracy. Here’s how it unfolded:

- Manual extraction: Early researchers relied on handwritten notes, margin scribbles, and color-coded highlights—a process as slow as it was error-prone.

- Spreadsheet revolution: The rise of Excel and similar tools brought some order, enabling basic sorting and pattern recognition.

- Digital PDF tools: Annotation software made collaboration easier but did little to address underlying bias or scalability.

- Automated text mining: Natural language processing (NLP) entered the scene, allowing for basic keyword extraction and frequency analysis.

- LLMs and AI platforms: Today’s tools, like textwall.ai and DistillerSR, use advanced large language models to digest, summarize, and appraise documents at lightning speed.

The result? A leap in both productivity and complexity, with new opportunities—and new dangers—at every stage.

But the paradox remains: every leap forward in automation introduces new blind spots, demanding even sharper critical thinking from human analysts.

How LLMs are rewriting the rulebook

Large language models (LLMs) and AI platforms are fundamentally changing the way we approach academic research document analysis. According to Uninist (2024), over 50% of researchers now cite “AI literacy” as a must-have skill. These tools ingest thousands of articles, flag inconsistencies, and generate detailed summaries in seconds.

| Feature | Traditional Methods | LLM/AI-Driven Methods |
|---|---|---|
| Speed | Slow | Instant |
| Scale | Limited | Massive |
| Bias Detection | Weak | Moderate |
| Citation Checking | Manual | Automated |
| Stakeholder Integration | Rare | Increasing |
| Transparency | High (if diligent) | Variable |

Table 3: The radical shift from manual to AI-powered analysis. Source: Original analysis based on Uninist, 2024; Hogan Lovells, 2024

While these advances are transformative, they also raise pressing questions: How transparent are these algorithms? Can they be gamed? Do they amplify existing biases?

The new rulebook isn’t just about mastering the tools—it’s about questioning their outputs and understanding their limits.

Where human intuition still beats the machines

Despite the hype, AI hasn’t replaced the human touch in research analysis. Machines excel at volume and speed, but they struggle with nuance, context, and ethical ambiguity. For example, LLMs can summarize findings, but they often miss the subtle manipulation of statistics or the significance of anomalous results.

“AI can flag patterns, but only experienced analysts catch the political or cultural subtexts that shape research narratives.” — Nature, 2025

Human reviewers bring skepticism, domain knowledge, and the ability to read between the lines—qualities that resist automation. The best analyses in 2025 are hybrid efforts, leveraging both machine efficiency and human insight.

The real challenge is learning when to trust the machine and when to overrule it.

Inside the machine: how AI actually analyzes research documents

Natural language processing 101 for real people

Natural language processing (NLP) is the engine behind most modern document analysis tools. At its most basic, NLP algorithms break down text into tokens (words and phrases), analyze syntax, and extract entities (names, dates, statistics).
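The tokenize-then-extract idea can be sketched with nothing more than regular expressions. This is a deliberately simple illustration, not how production NLP tools or LLM platforms work internally. The patterns, function names, and sample sentence are all assumptions made for the example.

```python
import re

# Words (allowing internal hyphens/apostrophes) or plain numbers.
TOKEN_RE = re.compile(r"[A-Za-z]+(?:[-'][A-Za-z]+)*|\d+(?:\.\d+)?")
# Reported p-values such as "p < 0.05" or "p=.003".
P_VALUE_RE = re.compile(r"\bp\s*([<>=])\s*(0?\.\d+)", re.IGNORECASE)
# Sample sizes such as "n = 1,204" or "N=87".
SAMPLE_SIZE_RE = re.compile(r"\b[nN]\s*=\s*(\d[\d,]*)")
# Four-digit years from 1900 to 2099.
YEAR_RE = re.compile(r"\b(?:19|20)\d{2}\b")

def tokenize(text):
    """Split text into lowercase word and number tokens."""
    return [t.lower() for t in TOKEN_RE.findall(text)]

def extract_entities(text):
    """Pull out a few statistic-like entities a reviewer may want to check."""
    return {
        "p_values": [(op, float(val)) for op, val in P_VALUE_RE.findall(text)],
        "sample_sizes": [int(n.replace(",", "")) for n in SAMPLE_SIZE_RE.findall(text)],
        "years": [int(y) for y in YEAR_RE.findall(text)],
    }

sample = "In 2023 we enrolled n = 1,204 patients; the effect was significant (p < 0.05)."
print(tokenize("Self-citation rates soared"))
print(extract_entities(sample))
```

Even this toy version shows where automation gets brittle: a p-value written as “P-value of five percent” or a sample size buried in a table would slip straight past these patterns.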

For academic research document analysis, advanced NLP models can:

- Identify research questions, methodologies, and key findings.

- Flag duplicate or plagiarized content.

- Track citation patterns and self-referencing.

- Surface contradictory claims across studies.

But even the most sophisticated NLP can be tripped up by technical jargon, ambiguous phrasing, or context-specific meanings. That’s why combining AI-driven triage with expert review remains the gold standard.
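Flagging duplicate content, one of the capabilities listed above, is often approximated by comparing overlapping word sequences (“shingles”) between documents. The sketch below uses Jaccard similarity on three-word shingles. The threshold, the function names, and the sample texts are illustrative assumptions, and commercial plagiarism checkers use far more elaborate matching.

```python
import re

def shingles(text, k=3):
    """Return the set of k-word sequences in the text (lowercased)."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}

def jaccard(a, b):
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def flag_near_duplicates(docs, threshold=0.5, k=3):
    """Compare every pair of documents and return pairs at or above the threshold."""
    ids = sorted(docs)
    sets = {doc_id: shingles(docs[doc_id], k) for doc_id in ids}
    flagged = []
    for i, left in enumerate(ids):
        for right in ids[i + 1:]:
            score = jaccard(sets[left], sets[right])
            if score >= threshold:
                flagged.append((left, right, round(score, 3)))
    return flagged

# Hypothetical passages from three documents.
docs = {
    "A": "The treatment reduced symptoms in the intervention group",
    "B": "The treatment reduced symptoms in the control group",
    "C": "Citation networks reveal tightly clustered author communities",
}
print(flag_near_duplicates(docs))
```

A flagged pair is a prompt for human review, not a verdict: boilerplate methods sections and properly quoted passages will also score high.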

Understanding the basics of NLP isn’t just for coders. It’s a necessary skill for anyone who wants to critically appraise digital research tools instead of blindly trusting their outputs.

Strengths, weaknesses, and blind spots of AI-powered analysis

AI-powered document analysis offers undeniable advantages—but it also comes with built-in limitations:

- Strengths
  - Processes thousands of articles in minutes, enabling comprehensive systematic reviews.
  - Flags potential inconsistencies, plagiarism, and statistical outliers with high accuracy.
  - Reduces human error and reviewer fatigue in repetitive tasks.
- Weaknesses
  - Struggles with nuanced argumentation, sarcasm, and cultural references.
  - Can perpetuate or amplify biases present in training data or input documents.
  - Offers limited transparency into how conclusions are reached (“black box” effect).
- Blind Spots
  - Misses context-specific ethical issues, stakeholder interests, and subtle manipulations.
  - Falls short in integrating qualitative feedback from diverse sources.

The bottom line: AI is a force multiplier, not a replacement for critical thinking. Over-reliance on machine outputs is the new blind spot to avoid.

In research review, skepticism isn’t cynicism—it’s your best defense.

What textwall.ai and similar tools can (and can’t) do

Platforms like textwall.ai stand at the forefront of academic research document analysis, leveraging advanced LLMs to break down complex documents, extract key insights, and automate content review faster than any human team. They’re invaluable for researchers swamped by endless PDFs and for professionals who need to cut through the noise, fast.

What they can do:

- Instantly summarize lengthy research articles, highlighting critical points and contradictions.

- Extract actionable data, trends, and stakeholder perspectives from dense texts.

- Categorize, tag, and organize content for rapid retrieval and sharing.

What they can’t do:

- Replace the nuanced judgment of a subject matter expert.

- Detect manipulation or bias that falls outside algorithmic patterns.

- Fully interpret context-specific ethical dilemmas or industry-specific standards.

Typical capabilities at a glance:

- Automated document summaries

- Trend extraction from large datasets

- Stakeholder integration (when programmed appropriately)

- Cross-document comparison (with limitations)

- Basic bias detection

Hybrid workflows, combining AI efficiency with human expertise, consistently outperform either approach used alone. That’s the edge you need in the 2025 research arena.

Step-by-step: an advanced guide to academic research document analysis

Preparing your mindset and your materials

Before diving into any document, the most important asset is your mindset. Critical appraisal isn’t just another to-do; it’s a discipline of skepticism, curiosity, and humility. Here’s how to set up for success:

- Clarify your research question. Nail down what you’re seeking to answer or understand—vague intentions lead to shallow analysis.

- Gather diverse sources. Cast a wide net, including both classic studies and recent publications.

- Check document validity. Confirm the recency, completeness, and reputation of each source.

- Organize your materials. Use digital tools (like textwall.ai) to categorize, tag, and track your documents.

- Prepare annotation tools. Whether digital or analog, have a system for marking, tagging, and noting inconsistencies.

The difference between superficial review and deep analysis is preparation. Don’t cut corners—your credibility is on the line.

How to dissect methodology, results, and conclusions

The core of academic research document analysis is the dissection of three sections: methodology, results, and conclusions. Each reveals its own set of red flags and insights.

Start with the methodology. Is the research design robust? Are inclusion/exclusion criteria transparent? Next, scrutinize the results for statistical validity—look for p-hacking, multiple comparisons, or selective reporting.

Finally, interrogate the conclusions. Are they supported by the data, or do they overreach? Cross-reference claims with the raw numbers and note any logical leaps or unsupported generalizations.
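One quick check on multiple comparisons is to re-test the reported p-values under a correction. The sketch below applies the Benjamini-Hochberg procedure, which controls the false discovery rate. The p-values are invented for illustration, and whether this particular correction suits a given study design is a judgment call for the reviewer.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return one boolean per p-value: True if it survives FDR control at alpha."""
    m = len(p_values)
    # Indices of the p-values, ordered from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k (1-based) with p_(k) <= (k / m) * alpha.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            cutoff_rank = rank
    # Every result ranked at or below the cutoff is kept.
    survives = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            survives[idx] = True
    return survives

# Hypothetical p-values reported for five outcomes in one paper.
reported = [0.001, 0.04, 0.03, 0.20, 0.049]

naive = [p < 0.05 for p in reported]
corrected = benjamini_hochberg(reported)
print("significant before correction:", sum(naive))
print("significant after correction: ", sum(corrected))
```

In this made-up case, four of five results look significant at face value and only one survives correction. A paper that reports many outcomes and never mentions a correction deserves a closer look.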

Every paragraph you read is an opportunity to expose either brilliance or blunder.

Thematic coding and synthesis like a pro

Thematic coding isn’t just for qualitative analysis—it’s at the heart of synthesizing insights from multiple documents. Here’s an advanced workflow:

| Step | Goal | Tools / Techniques |
|---|---|---|
| Initial Reading | Identify recurring themes | Manual annotation, AI suggestions |
| Code Assignment | Tag segments with relevant codes | Atlas.ti, NVivo, textwall.ai |
| Pattern Analysis | Group codes into higher-order categories | Cluster analysis, heat maps |
| Synthesis | Integrate findings across sources | Mind mapping, narrative synthesis |

Table 4: Thematic coding workflow for advanced academic document analysis. Source: Original analysis based on trustedinstitute.com, alisodigital.com

By iterating between coding, analysis, and synthesis, you build a robust map of insights that stands up to scrutiny.
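The code-assignment and pattern-analysis steps can be prototyped with a keyword codebook before you commit to a dedicated tool. The sketch below is an assumption-laden toy: the codebook, the segments, and the function names are invented, and simple substring matching is far cruder than what a human coder or a package like NVivo supports.

```python
from collections import Counter, defaultdict

# Hypothetical codebook: code name -> trigger keywords (lowercase).
CODEBOOK = {
    "bias": {"bias", "biased", "self-citation"},
    "transparency": {"protocol", "pre-registered", "disclosure"},
    "fraud": {"fabricated", "duplicated", "manipulated"},
}

def assign_codes(segment, codebook=CODEBOOK):
    """Return the sorted codes whose keywords appear in the segment."""
    text = segment.lower()
    return sorted(
        code for code, keywords in codebook.items()
        if any(keyword in text for keyword in keywords)
    )

def code_documents(documents, codebook=CODEBOOK):
    """Tag every segment, then tally codes and collect supporting excerpts."""
    counts = Counter()
    by_code = defaultdict(list)
    for doc_id, segments in documents.items():
        for segment in segments:
            for code in assign_codes(segment, codebook):
                counts[code] += 1
                by_code[code].append((doc_id, segment))
    return counts, dict(by_code)

# Hypothetical excerpts from two papers under review.
documents = {
    "paper_1": ["The protocol was pre-registered.",
                "Reviewers noted possible bias in sampling."],
    "paper_2": ["Two figures used duplicated images.",
                "No disclosure of funding was provided."],
}

counts, by_code = code_documents(documents)
for code, n in counts.most_common():
    print(code, n)
```

Treat the output as a first pass. The second excerpt from paper_2 is tagged “transparency” even though it describes a lack of it, which is exactly the kind of nuance the manual iteration is there to catch.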

Thematic synthesis is where the magic happens—where disconnected data becomes actionable knowledge.

Building your own hybrid workflow

No single approach has all the answers. The best analysts build hybrid workflows, leveraging both digital tools and old-school skepticism.

- Start with an AI-powered triage to scan and summarize large volumes.

- Manually review flagged sections for nuance and context.

- Incorporate stakeholder feedback (through interviews or surveys).

- Cross-validate findings with external datasets.

- Document every step for transparency and reproducibility.

Hybrid workflows are the new gold standard. They let you scale your review process without losing critical depth—or your sanity.
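As a rough sketch of the first, second, and last steps above, the snippet below routes AI-triaged documents either to a human review queue or to a spot-check pool and logs every decision. The risk scores, flags, threshold, and names such as `ReviewItem` and `route` are hypothetical. The scoring itself is assumed to come from whatever triage tool you use.

```python
from dataclasses import dataclass, field

@dataclass
class ReviewItem:
    doc_id: str
    ai_risk_score: float  # hypothetical 0..1 score from an upstream AI triage step
    ai_flags: list = field(default_factory=list)

def route(items, review_threshold=0.3):
    """Send risky or flagged items to humans; log each routing decision."""
    human_queue, spot_check, audit_log = [], [], []
    # Highest-risk documents go to the front of the queue.
    for item in sorted(items, key=lambda it: it.ai_risk_score, reverse=True):
        needs_human = item.ai_risk_score >= review_threshold or bool(item.ai_flags)
        target = human_queue if needs_human else spot_check
        target.append(item.doc_id)
        audit_log.append({
            "doc_id": item.doc_id,
            "score": item.ai_risk_score,
            "flags": list(item.ai_flags),
            "routed_to": "human_review" if needs_human else "spot_check",
        })
    return human_queue, spot_check, audit_log

# Hypothetical triage output for four documents.
items = [
    ReviewItem("doc-1", 0.82, ["duplicated image"]),
    ReviewItem("doc-2", 0.10),
    ReviewItem("doc-3", 0.05, ["excess self-citation"]),
    ReviewItem("doc-4", 0.45),
]

human_queue, spot_check, audit_log = route(items)
print("human review:", human_queue)
print("spot check:  ", spot_check)
```

Two design choices matter here. Any explicit flag forces human review regardless of score, and unflagged low-score documents are spot-checked rather than waved through, so the machine never has the final word.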

The future belongs to analysts who can blend machine speed with human judgment.

Real-world examples: when document analysis changed everything

Case study: how critical appraisal exposed academic fraud

In 2024, a high-profile cancer research paper was retracted after critical reviewers—augmented by AI-powered analysis—uncovered duplicated images and statistical anomalies. According to Nature (2025), the initial document review missed these issues entirely; it took machine learning algorithms, followed by human validation, to expose the fraud.

“Our AI flagged the same image being used for two different experiments. Without that, the fraud might have gone undetected.” — Senior Data Analyst, Nature, 2025

The fallout was immediate: funding pulled, reputations destroyed, and a new wave of scrutiny for all related publications.

These scandals are a wake-up call. Only a relentless, hybrid analysis—combining AI and skeptical human review—can keep bad science in check.

Meta-analysis gone wrong: lessons from the trenches

Meta-analyses are supposed to represent the pinnacle of evidence synthesis. Yet, when done poorly, they amplify errors and propagate bias.

- Inclusion of low-quality studies without proper weighting can distort outcomes.

- Over-reliance on automated tools may miss context-specific limitations.

- Failure to update included studies with new retractions or corrections poisons the dataset.

- Ignoring stakeholder feedback leads to blind spots in practical applicability.

According to Research.com, some high-impact meta-analyses had to be withdrawn after it was discovered they included retracted or fraudulent papers, thanks to insufficient document vetting.
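Screening included studies against a retraction list is easy to automate once both sides are keyed by DOI. The sketch below assumes you already have a list of retracted DOIs from a source you trust. The DOIs shown are placeholders, and the function names are hypothetical.

```python
def normalize_doi(doi):
    """Lowercase a DOI and strip common prefixes so variants compare equal."""
    doi = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
    return doi.strip()

def screen_included_studies(included, retracted_dois):
    """Split included studies into those to keep and those found retracted."""
    retracted = {normalize_doi(d) for d in retracted_dois}
    keep, drop = [], []
    for study in included:
        if normalize_doi(study["doi"]) in retracted:
            drop.append(study)
        else:
            keep.append(study)
    return keep, drop

# Placeholder study records and retraction list, for illustration only.
included = [
    {"id": "S1", "doi": "10.1234/example.001"},
    {"id": "S2", "doi": "https://doi.org/10.1234/EXAMPLE.002"},
    {"id": "S3", "doi": "doi:10.1234/example.003"},
]
retracted_dois = ["10.1234/example.002"]

keep, drop = screen_included_studies(included, retracted_dois)
print("keep:", [s["id"] for s in keep])
print("drop:", [s["id"] for s in drop])
```

Run a check like this every time the analysis is updated, not just once at the start, since retractions often arrive years after publication.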

The lesson? Automated tools are only as good as the data and judgment behind them.

Breakthroughs powered by next-gen analysis tools

The flip side: There are cases where modern document analysis has delivered breakthroughs. In the field of epidemiology, platforms like textwall.ai have enabled rapid synthesis of COVID-19 research, cutting review times by over 60% without sacrificing accuracy.

Teams using advanced AI-driven workflows have uncovered previously missed trends in market research, and legal professionals have reduced contract review times by up to 70%, according to recent industry reports.

The future of evidence-based decision-making depends on these tools—when they’re used wisely.

Common pitfalls and how to sidestep them

Mistakes even seasoned researchers still make

Even the best stumble. Here are the most common pitfalls in academic research document analysis. Each one is avoidable once you know to look for it:

- Failing to cross-validate sources, leading to reliance on outdated or incomplete documents.

- Overlooking stakeholder or real-world feedback, which introduces “ivory tower” bias.

- Blindly trusting AI outputs without critical examination.

- Misapplying statistical methods or over-interpreting weak correlations.

- Neglecting to update processes in response to new regulatory or technological changes.

The mark of expertise isn’t perfection; it’s the humility to check—and recheck—your assumptions.

How to spot red flags in your own review process

- Inconsistent citation patterns (e.g., excess self-citation)

- Lack of methodological transparency or missing protocols

- Repeated findings that are “too good to be true”

- Unexplained changes in data or sample sizes

- Failure to disclose conflicts of interest

Spotting these red flags early can save you from costly errors—and professional embarrassment.
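The first red flag, excess self-citation, can be put on a number line. The sketch below computes the share of cited works that share at least one author with the citing paper. The author names and the 30% review threshold are invented for the example, and matching on name strings is crude: real pipelines need author identifiers to handle name variants and collisions.

```python
def self_citation_rate(paper_authors, cited_works):
    """Fraction of cited works sharing at least one author with the citing paper."""
    if not cited_works:
        return 0.0
    authors = {name.strip().lower() for name in paper_authors}
    overlapping = sum(
        1 for cited_authors in cited_works
        if authors & {name.strip().lower() for name in cited_authors}
    )
    return overlapping / len(cited_works)

# Hypothetical paper with two authors and four references.
paper_authors = ["A. Rivera", "B. Chen"]
cited_works = [
    ["A. Rivera", "C. Okafor"],
    ["D. Silva"],
    ["B. Chen"],
    ["E. Novak", "F. Haddad"],
]

rate = self_citation_rate(paper_authors, cited_works)
REVIEW_THRESHOLD = 0.30  # illustrative cut-off, not an established standard
print(f"self-citation rate: {rate:.0%}")
print("needs a closer look:", rate > REVIEW_THRESHOLD)
```

A high rate is not proof of gaming. Small subfields and long-running research programs legitimately cite their own prior work, so the number is a reason to read the reference list, not a substitute for reading it.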

A robust analysis process is built on skepticism, not trust.

Checklist: is your document analysis robust?

- Have you verified the authenticity and recency of all documents?

- Did you use both AI and manual review steps?

- Have you cross-validated key findings with external datasets?

- Did you document and justify all methodological choices?

- Have you accounted for stakeholder perspectives and feedback?

- Did you check for conflicts of interest and citation bias?

- Have you updated your process based on the latest regulations and tools?

If you can’t answer “yes” to every item, your analysis isn’t as bulletproof as you think.

A checklist is your best insurance against avoidable mistakes.

The ongoing debate: objectivity, bias, and the myth of the neutral reader

Why all analysis is political (even if you disagree)

Every act of analysis is an act of interpretation. As much as academia clings to the myth of pure objectivity, cultural, political, and financial biases shape every review.

“There are no neutral readers—every analysis is colored by context, incentives, and hidden assumptions.” — Hogan Lovells, 2024

Ignoring this reality is a recipe for self-delusion. The best analysts don’t eliminate bias—they expose and account for it.

Cultural and disciplinary biases: what gets missed

- Western-centric frameworks dominate global research standards.

- Quantitative data is privileged over qualitative insight, even when context matters more.

- Innovative or disruptive studies are often sidelined in favor of safe, consensus-driven work.

- Disciplinary silos limit cross-pollination, missing interdisciplinary breakthroughs.

According to the Trusted Institute, only by consciously integrating diverse perspectives can document analysis reach its full potential.

The “neutral reader” is a comforting fiction—embrace your lens, but make it transparent.

Can technology ever be truly objective?

AI aspires to impartiality, but algorithms reproduce the biases of their creators and training data. Black-box models may amplify systemic blind spots, mistaking correlation for causation or majority opinion for truth.

The smartest analysts use technology as a tool—not as an oracle.

Objectivity isn’t a property of tools, but of process and self-awareness.

The future of academic research document analysis: what’s next?

Emerging trends: multi-modal AI and real-time review

The frontier of document analysis is multi-modal AI: tools that analyze not just text, but images, data tables, and even audio or video transcriptions. Real-time document review, driven by low-latency machine learning models, is already reshaping how research teams collaborate and validate findings.

These trends make analysis more holistic, but also more complex. Staying current isn’t an option—it’s a necessity for survival.

The race is on: adapt or become obsolete.

Risks and opportunities for tomorrow’s researchers

| Opportunity | Risk | Mitigation Strategy |
|---|---|---|
| Instantaneous synthesis | Over-reliance on automated outputs | Hybrid review workflows |
| Global collaboration | Data security and privacy concerns | Robust compliance protocols |
| Enhanced transparency | Algorithmic opacity (“black box” issue) | Open-source auditing, explainability |
| Scalable insights | Loss of nuance and context | Stakeholder integration |

Table 5: The new landscape of risks and opportunities in document analysis. Source: Original analysis based on Hogan Lovells, 2024; Nature, 2025; trustedinstitute.com

The future belongs to those who can balance speed and scrutiny, automation and awareness.

How to future-proof your workflow today

- Invest in ongoing AI literacy—don’t just use the tools, understand how they work.

- Build hybrid workflows that combine machine speed with human skepticism.

- Update compliance processes in line with new regulatory requirements.

- Integrate stakeholder feedback at every stage of analysis.

- Document everything—for transparency, reproducibility, and trust.

Tomorrow’s research landscape rewards adaptability, not tradition.

The only constant is change—embrace it or risk irrelevance.

Crossing boundaries: document analysis outside academia

Journalism, law, and corporate research: lessons from other fields

Academic research document analysis isn’t confined to universities. In journalism, legal practice, and corporate intelligence, similar challenges—and innovations—shape document review.

- Journalists use AI tools to fact-check sources and identify misinformation at scale.

- Legal professionals deploy document analysis to review contracts, flag compliance risks, and uncover hidden clauses.

- Corporate analysts rely on AI-powered synthesis to track market trends and competitor strategies.

In each field, the hybrid approach—melding machine efficiency with human insight—remains paramount.

What works outside academia often anticipates what’s next within it.

Ethical dilemmas and the real-world impact

“When algorithms decide what’s credible, whose interests are being served? Every automated analysis is a battleground for competing values.” — Research.com, 2024

Real-world impact goes beyond theoretical debates. When document analysis fails, the costs are measured in lost trust, financial penalties, or even public harm. The best analysts build ethics into every step of their process.

The analysis isn’t just about what you find, but how—and why—you find it.

Appendix: quick-reference tools, glossary, and further reading

Quick-reference guide for rapid analysis

- Clarify your research question and scope.

- Gather and verify sources for recency, validity, and reputation.

- Use AI tools for initial triage and thematic coding.

- Manually review flagged sections for nuance and bias.

- Cross-validate findings with external data and stakeholder input.

- Document every step for transparency.

- Reassess your workflow regularly to adapt to new regulations and tools.

Keep this checklist within arm’s reach whenever you dive into your next review.

Glossary: essential terms explained

AI literacy: The practical knowledge and critical understanding needed to use and evaluate AI-powered research tools responsibly.

Bias detection: Systematic methods for identifying and mitigating both intentional and unintentional distortions in data or analysis.

Critical appraisal: A structured evaluation of research validity, methodology, and relevance prior to synthesis.

Hybrid workflow: The integration of automated (AI) and manual (human) review processes for stronger, more reliable results.

Systematic review: A transparent, replicable method for identifying, evaluating, and synthesizing all relevant research on a given topic.

Meta-analysis: A statistical aggregation of data from multiple studies to determine overarching trends or effects.

Stakeholder integration: The inclusion of feedback and data from relevant parties (patients, communities, practitioners) to enrich analysis.

Understanding these terms isn’t optional—it’s essential for credible research in 2025.

Where to go next: services, communities, and advanced reading

- Nature: How AI is exposing and correcting research flaws

- Hogan Lovells: Regulatory updates for research compliance

- Research.com: Top 10 qualities of good academic research

- Trusted Institute: Advanced document analysis techniques

- Aliso Digital: How documentation updates drive business efficiency

For an AI-powered edge in document analysis, explore textwall.ai/academic-research-document-analysis.

Whether you’re a researcher, journalist, or corporate analyst, mastering academic research document analysis is your passport to the next level. The only question: Are you ready to see—and act on—the ruthless truths that define the field in 2025?
