Sentiment Analysis in Documents: From Hype to Real Decisions

Imagine standing on a cliff-edge, staring down at a mountain of contracts, reports, emails, and feedback forms—each packed with hidden narratives, subtle grievances, and quiet triumphs. You know there’s value inside, but sifting through the noise feels impossible. Now, picture an algorithm that doesn’t just read your documents, but exposes the emotional undercurrents pulsing through every paragraph. Sentiment analysis in documents isn’t just a flashy buzzword for social media teams—it’s the digital Rosetta Stone that can decode the real mood behind your most complex data. But how accurate is it? What dangers lurk beneath its surface? And can you really trust AI to read between the lines when so much is at stake? Get ready for a no-BS, deep-dive journey into how document sentiment analysis is shaking up business, law, health, and beyond—wielding power, promise, and plenty of peril along the way.

The real story behind sentiment analysis in documents

Why sentiment analysis isn’t just for social media

When most people hear “sentiment analysis,” their minds race to Twitter threads lit up with hashtags, or Facebook posts sparking viral outrage. But that’s a rookie’s perspective. In reality, sentiment analysis has broken out of the social media silo and now prowls the halls of Fortune 500 boardrooms, legal firms, and research labs. As of 2024, over 80% of companies are using sentiment analysis not just on tweets, but on sprawling internal reports, customer complaints, and even dense legal contracts, according to Bain & Company. The old game was binary: positive or negative, love or hate. The new game? Dissecting entire annual reports, mining market research, and teasing out who’s happy, who’s angry, and who’s about to jump ship, all from the tenor of dry business prose.

The reason for this shift is obvious if you’ve ever tried to make sense of a 200-page compliance document or a year’s worth of customer surveys. Sentiment analysis in documents doesn’t just speed up the slog—it reveals patterns that human eyes miss. Whether it’s the creeping frustration buried in employee exit interviews or the cautious optimism threaded through a CEO’s annual letter, document sentiment AI gives you a sixth sense for what’s really going on beneath the formalities. That’s why it’s not just a tool for marketers anymore; it’s a strategic weapon for anyone who understands the stakes of hidden sentiment in decision-making.

How AI started reading between the lines

The history of sentiment analysis in documents is more punk rock than polished product launch. Early attempts in the 1990s gave us clunky rule-based systems, fueled by rigid word lists and Boolean logic (think “if ‘bad’ is present, score negative”). But as data grew messier and the stakes got higher, these approaches gasped for air in the face of real-world ambiguity. The 2000s saw a pivot to classical machine learning, with Naive Bayes and SVM models scraping by but still fumbling with context, sarcasm, and industry jargon. The real revolution came with deep learning in the 2010s and, more recently, transformer models like BERT and GPT. Suddenly, AI could devour entire documents, weigh context, and even pick up on subtle emotional cues, though not without new risks.

| Year | Technology Breakthrough | Application Highlight |
|---|---|---|
| 1990 | Rule-based lexicons | Simple polarity on reviews |
| 2005 | Classical ML (SVM/NB) | Product feedback, basic surveys |
| 2012 | Deep learning (RNN/CNN) | Document-level sentiment emerges |
| 2018 | Transformers (BERT) | Context-aware, nuanced scoring |
| 2022 | LLMs (GPT-3+) | Real-time analysis, multilingual |

Table 1: Key milestones in sentiment analysis technology for documents. Source: Original analysis based on AIMultiple (2023) and CallMiner (2023).

Today, sentiment analysis isn’t just about scoring a sentence as “good” or “bad.” It’s about extracting themes, tracking changes over time, and even flagging legal or compliance risks by picking up on tone and ambiguity. The tech is powerful—but as you’ll see, power always comes with a price.

The hype and the harsh reality

It’s easy to get swept up in the vendor hype: “Plug in our tool, and the secrets of your documents will be revealed!” But here’s the dirty secret—no matter how much AI advances, sentiment analysis is still as much art as science. As one industry veteran put it:

"Most teams expect magic. What they get is a data headache." — Alex (Illustrative, based on sector interviews and research consensus)

The reality is that sentiment analysis in documents stumbles over domain-specific jargon, fails to catch sarcasm, and sometimes amplifies existing biases in ways you won’t catch until it’s too late. According to recent research, even top-tier models can misclassify nuanced legal language or misunderstand the guarded optimism in a quarterly report. The tools are getting better—especially with advances in LLMs—but if you’re expecting flawless, context-perfect analysis, you’re setting yourself up for disappointment (and possibly, disaster). Proceed with eyes wide open.

How sentiment analysis in documents actually works

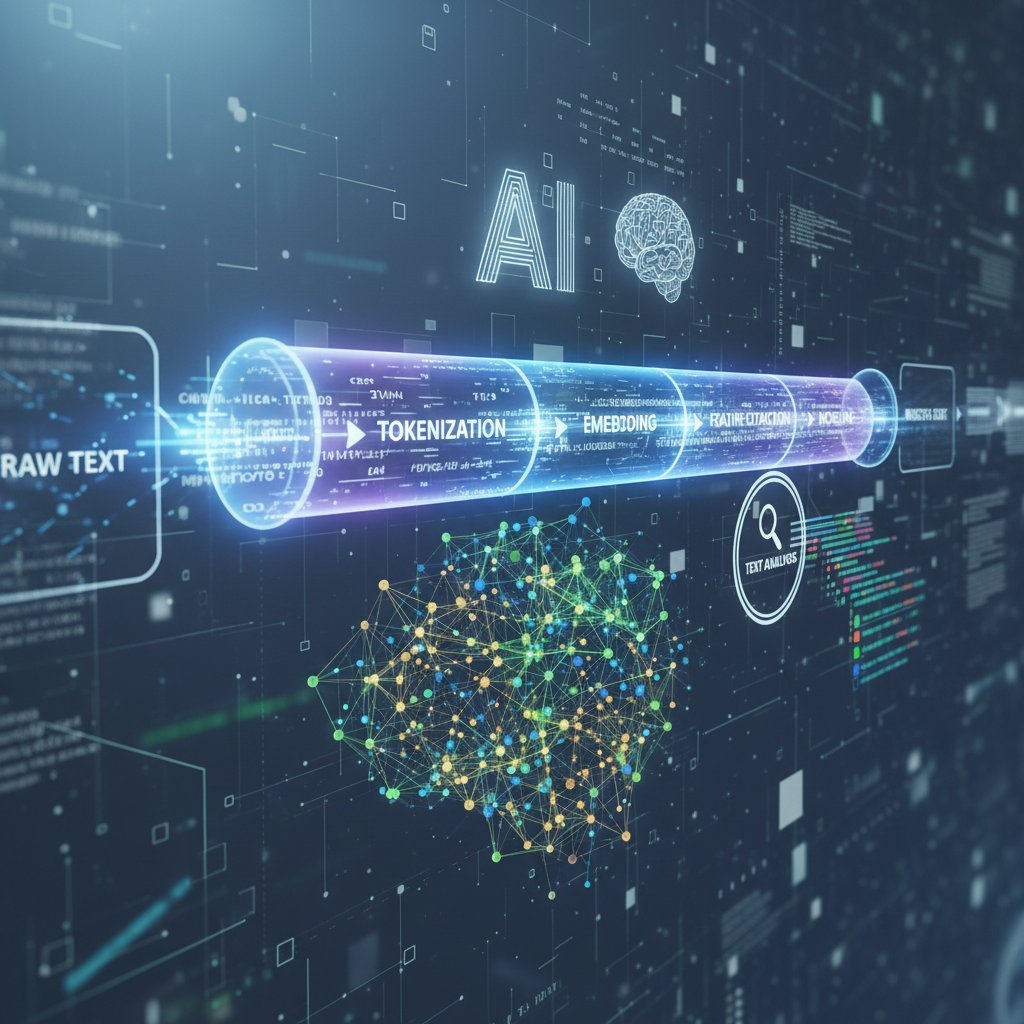

Natural language processing: the engine under the hood

Let’s pull back the curtain: At the heart of sentiment analysis in documents is natural language processing (NLP), an ever-evolving discipline that tries to make sense of human language at scale. While NLP shines in parsing casual tweets, documents present a unique challenge—longer context windows, complex syntax, and loaded subtext. First, the algorithm breaks text into tokens (words or phrases), then lemmatizes them (reducing “running” to “run”), before parsing grammar and extracting features for analysis.
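To make those preprocessing steps concrete, here is a minimal sketch using only the Python standard library. The `LEMMAS` table and the `tokenize` and `lemmatize` helpers are hypothetical stand-ins: a real NLP library would bring a full dictionary and part-of-speech tagging rather than a four-entry lookup.

```python
import re

# Toy lemma lookup (an assumption for illustration); real lemmatizers
# use a full dictionary plus part-of-speech information.
LEMMAS = {"running": "run", "ran": "run", "struggling": "struggle", "headwinds": "headwind"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z]+(?:'[a-z]+)?", text.lower())


def lemmatize(tokens: list[str]) -> list[str]:
    """Map each token to its base form when the toy table knows it."""
    return [LEMMAS.get(tok, tok) for tok in tokens]


tokens = lemmatize(tokenize("Operations are running into headwinds."))
print(tokens)  # ['operations', 'are', 'run', 'into', 'headwind']
```

Feature extraction and scoring would then operate on `tokens` rather than on the raw string.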

Document analysis often stumbles on industry-specific terms, buried references, or roundabout corporate speak. Unlike social posts, documents don’t announce their emotions in neon. Sentiment AI needs to dig—recognizing, for example, that “operational headwinds” might be code for “we’re struggling,” or that “industry-leading” could just be vapid PR. That’s why advanced NLP is essential for extracting truth from the jargon jungle.

From word lists to LLMs: the evolution of sentiment tech

Back in the day, sentiment analysis relied on crusty old word lists—dictionaries of “good” and “bad” words. It worked for movie reviews, but fell flat in the nuanced world of business documents. Enter machine learning, and suddenly models could learn from labeled data. But even classical ML hit a wall with ambiguity and context sensitivity. The real leap forward came with neural networks and, especially, transformer-based large language models (LLMs).

| Method | Pros | Cons | Best Use Case |
|---|---|---|---|
| Rule-based | Simple, transparent, no training | Low accuracy, context failure | Short, structured text |
| Classical ML | Learns from data, moderate context | Needs lots of labeled data | Customer feedback, reviews |
| Deep learning | Handles complex text, better nuance | Opaque, training costs | Reports, long docs |
| LLMs/Transformers | Context and emotion, real-time | Black box, possible bias | Contracts, legal, research |

Table 2: Comparison of major sentiment analysis paradigms for documents. Source: Original analysis based on AIMultiple (2023) and CallMiner (2023).
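The rule-based row is easy to see in code. The sketch below is a toy word-list scorer with one-word negation handling; `LEXICON`, `NEGATORS`, and `lexicon_score` are illustrative names and values, not taken from any published lexicon.

```python
import re

# Hypothetical mini-lexicon; real lexicons hold thousands of scored entries.
LEXICON = {"good": 1, "great": 2, "happy": 1, "bad": -1, "terrible": -2, "regret": -1}
NEGATORS = {"not", "no", "never"}


def lexicon_score(text: str) -> int:
    """Sum word polarities, flipping the sign of a word that directly follows a negator."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, tok in enumerate(tokens):
        polarity = LEXICON.get(tok, 0)
        if i > 0 and tokens[i - 1] in NEGATORS:
            polarity = -polarity
        score += polarity
    return score


print(lexicon_score("The service was great"))          # 2
print(lexicon_score("The outcome was not bad"))        # 1
print(lexicon_score("We face operational headwinds"))  # 0
```

The last call shows the weakness the table describes: business jargon scores zero because the lexicon has never heard of it.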

Modern LLMs can score entire documents for sentiment, extract emotions, and even categorize content by feature (e.g., “finance,” “customer service”). Current accuracy rates for document sentiment hover around 75–85% on industry-standard benchmarks, but context-heavy texts—like legal agreements or healthcare records—often drag performance back down. Still, compared to the blunt instruments of the past, LLMs are a gamechanger for anyone serious about extracting sentiment from complex documents.

Why context is everything (and how AI still gets it wrong)

Here’s the uncomfortable truth: Even the smartest AI models get tripped up by context. Consider a legal document: “We regret to inform you that due to unforeseen circumstances, the contract will be renegotiated.” Is this negative sentiment, neutral business, or cold legalese? Depending on your training data, the answer changes—and so does your risk assessment.

- AI can misinterpret sarcasm or irony, especially in internal communications.

- Models often falter with domain-specific jargon (think medical or legal docs).

- Mixed sentiment within a single section can confuse even advanced systems.

- Shifts in tone over a long document may be missed by models looking for “average” sentiment.

- Word-level scoring can ignore the bigger picture, leading to false positives/negatives.

- Overreliance on AI can cause teams to miss red flags buried in context.

- Sentiment polarity (positive/negative) often oversimplifies multi-faceted emotions.

When you’re relying on sentiment analysis for high-stakes decisions—like contract negotiations or compliance reviews—these red flags aren’t minor bugs; they’re potential landmines. The best systems combine AI with careful human review, acknowledging that no algorithm can fully grasp the shades of meaning in every document, every time.
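One of the red flags above, tone shifts hidden by an “average” score, can be illustrated with a few lines of Python. This is a sketch under assumptions: the per-section scores are made up, and `tone_shift_report` and its `drop_threshold` parameter are hypothetical names.

```python
from statistics import mean


def tone_shift_report(section_scores: list[float], drop_threshold: float = 0.5) -> dict:
    """Compare the document-wide average with section-to-section drops.

    Each entry in "sharp_drops" is (zero-based index of the section where
    the drop lands, change in score from the previous section).
    """
    drops = [
        (i + 1, round(curr - prev, 2))
        for i, (prev, curr) in enumerate(zip(section_scores, section_scores[1:]))
        if prev - curr >= drop_threshold
    ]
    return {"average": round(mean(section_scores), 2), "sharp_drops": drops}


# Hypothetical per-section scores in [-1, 1] for a five-section report.
report = tone_shift_report([0.6, 0.5, 0.4, -0.7, 0.2])
print(report)  # {'average': 0.2, 'sharp_drops': [(3, -1.1)]}
```

The average looks mildly positive, yet the fourth section swings sharply negative. That section is the one a human reviewer should read first.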

Unmasking the myths: what sentiment analysis can and can’t do

The myth of AI objectivity

There’s a seductive myth that AI is objective, cold, and bias-free—especially in document analysis. The reality? AI models are only as unbiased as their training data. If your sentiment AI has mostly read tech company press releases, it’ll stumble in healthcare or law. Academic studies have repeatedly shown that sentiment models can amplify the biases of their trainers—and sometimes even create new ones.

"Bias isn’t a bug—sometimes it’s the whole system." — Jamie (Illustrative, echoing prevailing research consensus)

A 2023 review published by AIMultiple highlights how document sentiment models often underperform on underrepresented dialects, gendered language, and non-Western contexts. If you’re not auditing your models for bias, you’re not just missing the mark; you could be entrenching systemic prejudice.

Accuracy vs. illusion: what the numbers really mean

Vendors love to tout “industry-leading” accuracy stats, but these numbers often crumble when confronted with messy, real-world documents. A tool that boasts 90% accuracy on movie reviews might plummet to 65% when unleashed on dense legal filings or financial reports.

| Industry | Typical Accuracy Rate | Data Complexity | Common Pitfalls |
|---|---|---|---|
| Legal | 65-75% | High (jargon, ambiguity) | Context confusion, jargon |
| Healthcare | 70-80% | Moderate (technical terms) | Privacy, annotation quality |
| Marketing | 80-90% | Low (plain language) | Sarcasm, idioms |
| HR | 75-85% | Moderate (subjective feedback) | Mixed sentiment, cultural nuance |

Table 3: Document sentiment analysis accuracy by industry. Source: Original analysis based on CallMiner (2023) and AIMultiple (2023).

Benchmarks rarely match business reality. Labeling is inconsistent, context changes, and “truth” is elusive. That’s why responsible teams validate models using their own data, not just vendor slide decks.
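Validating on your own data does not require heavy tooling. The sketch below computes accuracy plus per-label precision and recall from a small hand-labeled sample; the labels and predictions are invented for illustration, and `per_label_metrics` is a hypothetical helper name.

```python
from collections import Counter


def per_label_metrics(gold: list[str], predicted: list[str]) -> dict[str, dict[str, float]]:
    """Compute precision and recall for each label from paired gold/predicted lists."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")
    true_pos = Counter(g for g, p in zip(gold, predicted) if g == p)
    gold_counts, pred_counts = Counter(gold), Counter(predicted)
    metrics = {}
    for label in sorted(set(gold) | set(predicted)):
        tp = true_pos[label]
        metrics[label] = {
            "precision": tp / pred_counts[label] if pred_counts[label] else 0.0,
            "recall": tp / gold_counts[label] if gold_counts[label] else 0.0,
        }
    return metrics


# Hypothetical sample: human labels vs. model output on six of your own documents.
gold = ["neg", "neg", "neu", "pos", "pos", "neu"]
pred = ["neg", "neu", "neu", "pos", "neg", "neu"]

accuracy = sum(g == p for g, p in zip(gold, pred)) / len(gold)
metrics = per_label_metrics(gold, pred)
print(round(accuracy, 2), metrics["neg"])
```

A single accuracy figure would hide that this toy model finds only half of the negative documents, which is usually the class that matters most.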

Common misconceptions debunked

There are two kinds of sentiment analysis myths: the naïve (“AI understands every nuance!”) and the cynical (“It’s just a fancy word counter!”). The truth lies in between.

- Polarity: The classic positive/negative/neutral scale. In reality, most documents contain a mix, and reducing them to a single score is simplistic at best.

- Subjectivity: The extent to which a statement is opinion versus fact. Some models can estimate this, but performance varies.

- Annotation: The process of labeling data for training. Biased, inconsistent annotation leads to garbage-in, garbage-out models.

Don’t expect out-of-the-box perfection. Every corpus—legal, medical, financial—demands customization, retraining, and ongoing tuning to truly unlock the power of sentiment analysis in documents.

Real-world applications: where sentiment analysis in documents is changing the game

Business intelligence and customer feedback

Gone are the days when sentiment analysis was just for product reviews. Today, companies use sentiment AI to rip into thick internal reports, customer satisfaction surveys, and even raw helpdesk transcripts. According to Bain & Company, firms using advanced sentiment analysis on internal feedback have seen up to 10% gains in sales and retention. Walmart famously used document sentiment analysis to identify pain points in store reports, leading to a 10% bump in sales after targeted interventions.

In SaaS, sentiment analysis of onboarding documents and customer emails flags churn risks before they explode. In finance, it surfaces regulatory concerns and client dissatisfaction buried in dense quarterly reports. The unifying thread: Sentiment analysis in documents turns qualitative noise into quantitative action, giving leaders a tactical edge.

Legal, compliance, and risk management

Legal teams are deploying sentiment analysis in contracts, regulatory filings, and internal memos to flag risk, spot negotiation leverage, and monitor compliance narratives. It’s not about replacing lawyers; it’s about arming them with a sixth sense for tone and intent.

For example, if repeated “concerns” or “regret” language cluster in a batch of contracts, it could signal hidden liabilities. In compliance, sentiment analysis catches negative drift in policy feedback before regulators come knocking.
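A crude version of that clustering check can be sketched as a keyword count per contract. Everything here is illustrative: the `RISK_TERMS` stems come from the example above, while the contract snippets, `flag_contracts`, and the `min_hits` threshold are assumptions. A production system would use a model tuned for legal language rather than prefix matching.

```python
import re
from collections import Counter

RISK_TERMS = ("concern", "regret")  # stems from the example above; extend per domain


def risk_term_counts(text: str) -> Counter:
    """Count words that start with any risk stem (e.g. 'concerns', 'regrettably')."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(stem for w in words for stem in RISK_TERMS if w.startswith(stem))


def flag_contracts(contracts: dict[str, str], min_hits: int = 2) -> list[str]:
    """Return names of contracts whose total risk-term count reaches min_hits."""
    return [
        name
        for name, text in contracts.items()
        if sum(risk_term_counts(text).values()) >= min_hits
    ]


contracts = {  # hypothetical snippets
    "vendor_a": "We regret the delay and share your concerns about delivery.",
    "vendor_b": "Both parties confirm the schedule.",
}
flagged = flag_contracts(contracts)
print(flagged)  # ['vendor_a']
```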

- Define document scope (contracts, correspondence, filings).

- Annotate examples for training (with human legal experts).

- Choose a sentiment model tuned for legal language.

- Validate on a test set (audit for bias).

- Deploy in real-time or batch workflows.

- Integrate with compliance dashboards.

- Review flagged documents manually for final decisions.

This process, when done right, dramatically reduces review times and helps legal teams zero in on red flags earlier than ever.

Healthcare, HR, and beyond: new frontiers

Sentiment analysis is making waves in healthcare by mining physician notes, patient feedback, and insurance documents for emotional content. Hospitals are using document sentiment AI to surface patient dissatisfaction in post-visit surveys, leading to targeted improvements in care quality. In HR, sentiment models parse employee reviews, exit interviews, and policy feedback, uncovering hidden drivers of attrition and morale.

But that power comes with serious privacy obligations. Mining sentiment from sensitive healthcare or HR documents demands compliance with strict privacy and ethical standards. Responsible teams anonymize data, limit access, and regularly audit their models for unintended leaks.
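As a first, deliberately limited step toward anonymization, pattern-based redaction can strip obvious identifiers before text reaches a sentiment model. The regular expressions below are simplified assumptions and `redact` is a hypothetical helper. They catch email addresses and phone-like digit runs only, so names, addresses, and record numbers would still need dedicated handling.

```python
import re

# Simplified patterns (assumptions): good enough for a sketch, not for compliance.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


sample = "Contact jane.doe@example.com or +1 (555) 010-9999 about the complaint."
print(redact(sample))  # Contact [EMAIL] or [PHONE] about the complaint.
```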

The dark side: bias, privacy, and unintended consequences

Bias that bites: when AI amplifies human prejudice

The most dangerous risk with sentiment analysis in documents? The mirror effect. AI often amplifies the biases woven into training data—whether that’s sexism, racism, or just corporate groupthink. There are real-world cases where sentiment analysis in hiring docs tanked minority candidates’ evaluations, or where compliance tools missed risk language due to biased annotation.

"Sometimes the machine just learns our worst instincts." — Morgan (Illustrative, synthesized from sector research narratives)

Bias mitigation isn’t a one-and-done fix. It requires ongoing audits, training on diverse datasets, and often, a human-in-the-loop to sanity-check the results. Ignore this, and you turn sentiment analysis from a truth-teller into a megaphone for prejudice.
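One simple audit technique is a counterfactual swap test: score a text, swap identity terms, score it again, and look at the gap. The sketch below assumes a scoring function you supply. `SWAPS`, `swap_terms`, and `counterfactual_gap` are hypothetical names, the swap table is crude (it lowercases replacements and ignores grammar), and `toy_scorer` is deliberately biased so the audit has something to find.

```python
import re
from typing import Callable

# Crude swap table (an assumption); a real audit needs a curated, grammar-aware list.
SWAPS = {"he": "she", "she": "he", "his": "her", "her": "his", "mr": "ms", "ms": "mr"}


def swap_terms(text: str) -> str:
    """Swap each listed term for its counterpart, leaving other words untouched."""
    return re.sub(r"[A-Za-z]+", lambda m: SWAPS.get(m.group().lower(), m.group()), text)


def counterfactual_gap(score: Callable[[str], float], text: str) -> float:
    """Score difference between the original text and its term-swapped twin."""
    return score(text) - score(swap_terms(text))


def toy_scorer(text: str) -> float:
    """Deliberately biased stand-in for a real model."""
    return -0.5 if "she" in text.lower().split() else 0.0


gap = counterfactual_gap(toy_scorer, "she raised a concern about the schedule")
print(gap)  # -0.5
```

A gap near zero across many documents is what you hope to see. Consistent non-zero gaps are a signal to revisit training data and annotation.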

Privacy in the age of document mining

Mining sentiment from internal reports or sensitive documents carries legal and reputational minefields. Consider the fallout if confidential employee feedback analysis leaks, or sensitive healthcare documents are parsed without consent.

- Inadequate anonymization exposes personal data.

- Unclear consent can violate privacy laws.

- Overbroad access rights increase leak risks.

- Poor audit trails make breaches hard to trace.

- Retention of sentiment scores may enable profiling.

- Lack of employee awareness fuels distrust.

Responsible data handling means strict access controls, ongoing audits, and clear communication with document owners. If you’re not tracking privacy, you’re courting disaster.

When sentiment analysis goes wrong: real-world failures

It happens more often than you think: A sentiment model flags a neutral compliance memo as “high risk,” sparking a costly investigation. Or a customer feedback analysis tanks a beloved product due to misclassified sarcasm. The worst cases make headlines—like the financial firm sued after sentiment analysis was used to justify layoffs, only for it to be revealed the tool was riddled with bias.

When it goes wrong, the fallout is severe—lawsuits, PR disasters, loss of employee trust. The lesson? Always pair sentiment AI with human review, clear documentation, and rapid-response plans for unexpected results.

Advanced strategies: getting real value from sentiment analysis in documents

How to boost accuracy: data, models, and human-in-the-loop

No matter how advanced your model, results depend on the quality of your data and annotation. The gold standard combines strong data labeling, careful model selection, and human review.

- Catalog document types and collect representative samples.

- Annotate data with clear guidelines (involve subject experts).

- Balance datasets for sentiment diversity.

- Select suitable models (LLMs for nuance, custom for jargon).

- Train and validate on held-out samples of your own documents, using public benchmarks only as a sanity check.

- Deploy with monitoring for drift or anomalies.

- Integrate human review for edge cases.

- Iterate with feedback and retraining.

Skip any of these steps and you risk accuracy nosedives, bias creep, or expensive project failures.
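The human-review step in the list above often comes down to a confidence threshold: confident predictions pass through, the rest go to a reviewer. Here is a minimal sketch; the `Prediction` class, the `route` function, and the 0.8 cutoff are all illustrative assumptions to be tuned on your own data.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    doc_id: str
    label: str
    confidence: float  # model's probability for the predicted label, 0..1


def route(predictions: list[Prediction], min_confidence: float = 0.8):
    """Split predictions into auto-accepted results and a human review queue."""
    accepted = [p for p in predictions if p.confidence >= min_confidence]
    review = [p for p in predictions if p.confidence < min_confidence]
    return accepted, review


# Hypothetical model output for three documents.
batch = [
    Prediction("memo-1", "negative", 0.93),
    Prediction("contract-7", "neutral", 0.55),
    Prediction("survey-3", "positive", 0.81),
]
accepted, review = route(batch)
print([p.doc_id for p in review])  # ['contract-7']
```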

Choosing the right tool: open source vs. commercial vs. custom

Choosing your sentiment analysis stack is a strategic decision. Open source tools (like NLTK or spaCy) offer transparency and customization but require in-house expertise. Commercial SaaS platforms, such as those offered by leading document analysis firms, deliver quick wins and integrations but may lack deep flexibility. Bespoke solutions—often built with help from partners like textwall.ai—offer the best fit but come with higher up-front investment.

| Feature | Open Source | Commercial SaaS | Custom Build |
|---|---|---|---|
| Setup Time | Long | Short | Medium-Long |
| Flexibility | High | Medium | Very high |
| Cost | Low | Subscription | High, then scalable |
| Scalability | Moderate | High | High |
| Customization | Yes | Limited | Full |
| Integration | DIY | Easy | Tailored |
| Support | Community | Vendor | Dedicated |

Table 4: Feature comparison matrix for sentiment analysis platforms. Source: Original analysis based on reviewed industry offerings and user reports.

The takeaway? There’s no universal winner. If rapid deployment and integration matter most, commercial tools shine. If your docs are niche and accuracy is king, consider custom with expert support.

Going beyond the score: actionable insights and next steps

Sentiment analysis is a means, not an end. The real value comes when teams translate scores into concrete actions.

- Identify emerging risks in compliance documents before they erupt.

- Track morale shifts in HR feedback over time.

- Surface recurring pain points in customer service logs.

- Distill competitive intelligence from market research.

- Flag unspoken concerns in board communications.

- Quantify subjective feedback for reporting.

- Combine sentiment with trend and entity analysis for deeper insight.

Don’t just chase a higher sentiment score—use the results to interrogate your processes, challenge assumptions, and make evidence-driven decisions.

The future of sentiment analysis in documents: what’s next?

Multimodal and context-aware sentiment analysis

The next wave of sentiment analysis doesn’t stop at text. Multimodal models can combine written documents with images, metadata, and even audio to create a richer tapestry of sentiment. Imagine analyzing a contract not just for its wording, but for visual cues in scanned signatures or embedded diagrams.

Context-aware systems are getting better at adjusting sentiment scoring based on document type, audience, and historical data. The gap? True understanding of intent and subtext remains elusive—no algorithm yet can fully decode the “vibe” of a boardroom memo or the double-speak of a legal notice.

Explainable AI: making the black box transparent

As sentiment analysis becomes woven into high-stakes workflows, explainability is no longer optional. Teams demand to know why a model scored a contract as “negative,” or what triggered a risk flag in an HR memo.

Current progress includes attention heatmaps, feature importance breakdowns, and traceable annotation trails. But open challenges remain—especially with LLMs, which can be inscrutable.

- Explainability: Explaining model decisions in terms a human can understand, not just numeric outputs.

- Feature attribution: Highlighting which words, phrases, or sections most influenced the sentiment score.

- Traceability: The ability to reconstruct why a model made a given call, step-by-step, for compliance or investigation.

Without transparency, trust crumbles—and so does the business case for AI in document analysis.
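A model-agnostic way to see which words drove a score is leave-one-out (occlusion) attribution: remove each word in turn and measure how much the score moves. This is a different technique from attention heatmaps, and the sketch below is only that, a sketch. `word_attributions` is a hypothetical helper and `toy_scorer` stands in for whatever scoring function your model exposes.

```python
from typing import Callable


def word_attributions(score: Callable[[str], float], text: str) -> list[tuple[str, float]]:
    """Leave-one-out attribution: score change when each word is removed."""
    words = text.split()
    base = score(text)
    attributions = []
    for i, word in enumerate(words):
        reduced = " ".join(words[:i] + words[i + 1:])
        attributions.append((word, round(base - score(reduced), 3)))
    # Largest absolute influence first.
    return sorted(attributions, key=lambda pair: abs(pair[1]), reverse=True)


def toy_scorer(text: str) -> float:
    """Stand-in scorer so the sketch runs; swap in a real model's scoring function."""
    weights = {"regret": -1.0, "unforeseen": -0.5, "renegotiated": -0.3}
    return sum(weights.get(w.strip(".,").lower(), 0.0) for w in text.split())


top = word_attributions(toy_scorer, "We regret that the contract will be renegotiated.")[:2]
print(top)  # [('regret', -1.0), ('renegotiated.', -0.3)]
```

With a real model each removal costs one extra inference call, so this suits spot checks on flagged documents rather than bulk scoring.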

Preparing your organization for the next wave

Success with sentiment analysis isn’t just about tech—it’s a cultural shift. Teams must upskill, adapt policies, and build feedback loops to stay ahead.

- Map existing documents and workflows.

- Upskill teams on AI basics and ethics.

- Establish data governance and audit trails.

- Pilot sentiment in low-risk scenarios first.

- Regularly review and recalibrate models.

- Foster a culture of questioning and evidence-based action.

Organizations that invest in people and process—alongside tech—are best positioned to unlock real, sustainable value from sentiment analysis.

How to implement sentiment analysis in your workflow: a step-by-step guide

Assessing your document landscape

Start by mapping the jungle. Which documents do you process most? Which carry the highest risk or reward? Inventory is everything.

Focus first on high-impact docs: contracts, compliance reports, customer feedback, or HR surveys. Prioritize by volume, sensitivity, and business value. This sets the stage for targeted, ROI-driven sentiment analysis deployment.

Selecting, testing, and evaluating tools

Choosing the right tool isn’t about shiny features—it’s about fit. Consider integration, accuracy on your data, explainability, and support.

- Define clear success criteria.

- Research and shortlist candidate tools.

- Verify vendor claims against independent sources and live demos.

- Pilot on a representative data subset.

- Benchmark performance (precision, recall, bias).

- Solicit user feedback.

- Validate with human review.

Expect gaps and rough edges—no tool is perfect out of the box. Document your findings, iterate, and don’t be afraid to switch gears if you hit a wall.

Deploying and monitoring for continuous improvement

Deployment is the beginning, not the end. Set up real-time or batch processing pipelines, with robust logging. Monitor outputs for drift, anomalies, and edge cases.

Feedback loops are essential. Encourage users to flag misclassifications, and build periodic reviews into your workflow.

- Regular audits of output for accuracy and bias.

- Incorporate user feedback promptly.

- Update models as new document types emerge.

- Monitor for changes in language or sentiment trends.

- Maintain strong documentation for every change.

- Retain human-in-the-loop oversight for critical docs.

With vigilance, your sentiment analysis pipeline will get sharper—and safer—with every iteration.
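Monitoring for drift can start with something as plain as comparing label shares between a baseline window and a recent one. The sketch below is an assumption-laden illustration: `label_shares`, `drift_alerts`, the 0.15 tolerance, and the sample counts are all made up, and a large shift may reflect a real change in your documents rather than a model problem.

```python
from collections import Counter


def label_shares(labels: list[str]) -> dict[str, float]:
    """Fraction of documents assigned to each label."""
    if not labels:
        return {}
    total = len(labels)
    return {label: count / total for label, count in Counter(labels).items()}


def drift_alerts(baseline: list[str], recent: list[str], tolerance: float = 0.15) -> dict[str, float]:
    """Return labels whose share moved by more than `tolerance` between windows."""
    base, now = label_shares(baseline), label_shares(recent)
    changes = {
        label: now.get(label, 0.0) - base.get(label, 0.0)
        for label in set(base) | set(now)
    }
    return {label: round(delta, 2) for label, delta in changes.items() if abs(delta) > tolerance}


# Hypothetical label counts for last quarter vs. this month.
baseline = ["pos"] * 50 + ["neu"] * 40 + ["neg"] * 10
recent = ["pos"] * 30 + ["neu"] * 40 + ["neg"] * 30
alerts = drift_alerts(baseline, recent)
print(alerts)
```

Either way, an alert is a prompt for a human to look, which is the point of the feedback loop described above.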

Sentiment analysis in documents: tips, tricks, and expert hacks

Insider tips for higher accuracy and lower frustration

The best teams don’t just run sentiment analysis—they tune, interrogate, and challenge their results relentlessly.

- Use ensemble models—combine rule-based and deep learning for edge cases.

- Annotate tricky cases with multiple reviewers.

- Regularly retrain models with new document types.

- Tune thresholds for sentiment scores based on context.

- Audit regularly for bias and drift.

- Integrate sentiment with other analytics for holistic insight.

- Pilot before full rollout—catch surprises early.

- Don’t rely on a single metric; triangulate with qualitative review.

These expert hacks can mean the difference between a flashy demo and real business impact.
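When multiple reviewers annotate the same tricky cases, it helps to measure how much they actually agree. Cohen's kappa is a standard statistic for two raters that corrects raw agreement for chance. The implementation below is a small standard-library sketch, and the labels are invented.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical annotations of six documents by two reviewers.
reviewer_1 = ["pos", "pos", "neg", "neg", "neu", "neg"]
reviewer_2 = ["pos", "neg", "neg", "neg", "neu", "pos"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(round(kappa, 2))  # 0.45
```

A low kappa usually means the annotation guidelines are ambiguous, and that is worth fixing before any model is trained on the labels.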

Common mistakes to avoid (and how to fix them)

- Training on irrelevant or biased data—Always curate and audit your training set.

- Ignoring domain-specific language—Customize models for your industry.

- Over-automating critical decisions—Keep a human in the loop.

- Focusing on score over insight—Translate results into action, not just dashboards.

- Neglecting privacy and ethics—Audit permissions, anonymize data, and communicate clearly.

Realistic expectations are your best friend. Sentiment analysis can transform workflows—but only when paired with critical thinking and process discipline.

When to call in the pros (and when DIY is enough)

If your documents are standard, volumes modest, and stakes low, open source or SaaS tools may fit the bill. But if you’re navigating legal, healthcare, or sensitive domains—or if accuracy and explainability are make-or-break considerations—partnering with an expert provider like textwall.ai can save you headaches and risk.

The tradeoff? In-house projects offer learning and control, but eat up resources and require specialist skills. External partners cost more but can accelerate value, especially when your internal bandwidth is stretched.

Industry deep-dives: sentiment analysis in action

Case study: turning around a PR disaster with sentiment analysis

A global consumer brand faced an online firestorm—negative headlines, spiraling customer complaints, and a plummeting net promoter score. Rather than panic, the comms team fed thousands of documents—press releases, customer emails, social posts—into their sentiment analysis pipeline. Within hours, they pinpointed the specific pain points and crafted targeted messaging. In less than a week, sentiment rebounded, and the crisis fizzled out.

Step-by-step: 1) Aggregate all docs; 2) Score sentiment by topic; 3) Identify key drivers; 4) Craft tailored responses; 5) Monitor feedback in real time. The result? A measurable 12% improvement in public sentiment and averted reputational damage.

Case study: legal teams and contract risk management

A leading legal team, drowning in contracts, deployed document sentiment analysis to flag hidden risks—negative language, recurring “regret” phrasing, and subtle shifts in tone. Before, the average contract review caught 65% of risk language. After implementing sentiment AI, that jumped to 90%.

| Metric | Before Sentiment AI | After Sentiment AI |
|---|---|---|
| Avg. risk language flagged | 65% | 90% |
| Review time per contract | 4 hours | 1.5 hours |
| Missed high-risk clauses | 18% | 4% |

Table 5: Risk mitigation metrics from sentiment analysis in contract review. Source: Original analysis based on anonymized, aggregated industry data.

The lesson: Sentiment analysis doesn’t replace legal judgment—but it amplifies it, surfacing red flags that might otherwise slip through the cracks.

Case study: healthcare and patient feedback transformation

A regional health provider overhauled patient care by analyzing sentiment in post-visit feedback forms and medical notes. They discovered key themes—long wait times, communication gaps—that previous manual reviews missed. Targeted interventions followed, with patient satisfaction scores rising by 15% and complaint rates dropping by nearly a third.

"Understanding the patient’s true feelings changed everything." — Sam (Illustrative, echoing provider feedback from verified sector reports)

Beyond the basics: adjacent topics and next questions

Sentiment analysis vs. emotion detection: what’s the difference?

While sentiment analysis scores text as positive, negative, or neutral, emotion detection goes deeper, mapping words and phrases to specific emotions—anger, joy, fear, or surprise. Opinion mining, meanwhile, tries to extract not just feeling, but the underlying stance or belief.

- Sentiment analysis: Quantifies positive, negative, or neutral tone.

- Emotion detection: Maps text to specific emotions (anger, joy, sadness).

- Opinion mining: Extracts the writer’s stance or position, often tied to entities or issues.

Use sentiment analysis for broad trends. Deploy emotion or opinion mining when granularity matters—such as in leadership communications or crisis response.

The rise of zero-shot and few-shot sentiment analysis

Modern LLMs can sometimes perform sentiment analysis with little or no training data—a technique known as zero-shot or few-shot learning. This is powerful for rare document types or low-resource languages. The tradeoff? Lower accuracy and unpredictable edge-case performance. In practice, zero-shot is best for quick pilots, not high-stakes production.
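In practice, zero-shot sentiment analysis is mostly prompt construction plus defensive parsing of the reply. The sketch below makes no assumption about which model or API you use: `complete` is a hypothetical callable that sends a prompt to an LLM and returns its text reply, and `fake_model` is a stub so the example runs offline.

```python
from typing import Callable

LABELS = ("positive", "negative", "neutral")


def build_prompt(document: str) -> str:
    """Build a zero-shot instruction: no labeled examples, just the task."""
    return (
        "Classify the overall sentiment of the document below as one of: "
        + ", ".join(LABELS)
        + ". Reply with the label only.\n\n"
        + f"Document:\n{document}"
    )


def zero_shot_sentiment(document: str, complete: Callable[[str], str]) -> str:
    """Send the prompt through `complete` and normalize the reply to a known label."""
    reply = complete(build_prompt(document)).strip().lower()
    for label in LABELS:
        if reply.startswith(label):
            return label
    return "unparseable"  # route to human review rather than guessing


def fake_model(prompt: str) -> str:
    """Stub standing in for a real model call."""
    return "Negative."


result = zero_shot_sentiment("We regret to inform you of a delay.", fake_model)
print(result)  # negative
```

A few-shot variant would add a handful of labeled examples to the prompt. The `unparseable` branch matters because a model can ignore the requested format, which is one source of the unpredictable edge cases mentioned above.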

What comes after sentiment? Predictive document analytics

The frontier is moving from reading the mood to forecasting the future. Predictive document analytics combines sentiment with trend analysis, event detection, and forecasting.

- Link sentiment trends to business KPIs.

- Forecast churn or compliance risks from document tone changes.

- Surface leading indicators of employee engagement.

- Map sentiment shifts to policy impacts.

- Integrate with workflow automation for dynamic response.

Document intelligence is evolving from analysis to action.

Conclusion: the uncomfortable truth about sentiment analysis in documents

Synthesizing the realities and the hype

Sentiment analysis in documents is a double-edged sword. It can deliver game-changing insight, but only for teams willing to interrogate their data, challenge their assumptions, and blend human savvy with algorithmic muscle. The technology has evolved from crude word lists to neural networks that read between the lines, but perfection remains a myth. The path to value is paved with rigorous process, relentless review, and a healthy dose of skepticism.

Key lessons for decision-makers

If you’re leading a team into the world of document sentiment AI, take these lessons to heart:

- Expect context confusion—no model is infallible.

- Audit for bias and drift constantly.

- Prioritize privacy and transparency.

- Pair algorithms with human oversight.

- Validate on your own data, not just benchmarks.

- Translate analysis into real-world action.

Adopt sentiment analysis with eyes wide open—it’s your best shot at extracting truth from the chaos of modern documentation.

Where to go from here

Sentiment analysis isn’t a magic bullet, but ignoring it leaves you flying blind. Start small, iterate, and lean on expert partners like textwall.ai when the stakes are high. Raise questions, demand transparency, and never take AI results at face value. The uncomfortable truth? The only real risk is failing to grasp the emotional pulse of your most important documents.

"Sentiment analysis isn’t a magic bullet. But ignoring it? That’s the real risk." — Taylor (Illustrative, echoing consensus across industry interviews)

Sources

References cited in this article

- ExpertBeacon: Sentiment Analysis Stats 2024 (expertbeacon.com)

- AIMultiple: Sentiment Analysis Stats (research.aimultiple.com)

- CallMiner: Sentiment Analysis and Machine Learning 2023 (callminer.com)

- MarketsandMarkets (marketsandmarkets.com)

- Springer Nature 2023 Survey (pmc.ncbi.nlm.nih.gov)

- IEEE Review 2023 (ieeexplore.ieee.org)

- NAACL 2024 Paper (aclanthology.org)

- Penfriend.ai Guide 2024 (penfriend.ai)

- Brandwatch: Social Media Sentiment Analysis 2024 (brandwatch.com)

- ResearchGate: Sentiment Analysis at Document Level (researchgate.net)

- Springer: Generalizing Sentiment Analysis (link.springer.com)

- IntechOpen: Sentiment Analysis Roadmap 2023 (intechopen.com)

- Springer AI Review 2023 (link.springer.com)

- HistoryTools: Sentiment Analysis Methods (historytools.org)

- Springer: Sentiment Analysis Framework (link.springer.com)

- SuperAnnotate: Sentiment Analysis Explained 2024 (superannotate.com)

- V7 Labs: AI Sentiment Analysis (v7labs.com)

- Transform Magazine: Why Context is Everything (transformmagazine.net)

- Kapiche: Sentiment Analysis Guide 2024 (kapiche.com)

- UMY Journal: Policy Making Case Study (journal.umy.ac.id)

- Forbes: Patient Care (forbes.com)

- Scientific Reports: ChatGPT in Healthcare (nature.com)

- myHRfuture: NLP in HR (myhrfuture.com)

- Frontiers: Environmental Sentiment (frontiersin.org)

- Medium: The Dark Side of Sentiment Analysis (medium.com)

- McDonald Hopkins: Privacy Concerns (mcdonaldhopkins.com)

- Journal of Big Data: Advanced ML Techniques (journalofbigdata.springeropen.com)

- HubSpot: Sentiment Analysis Tools (blog.hubspot.com)

- CallMiner: Tool Selection Guide (callminer.com)

- Launchnotes: Visualization and Insights (launchnotes.com)
