Handwritten Text Extraction in 2026: What Actually Works Now

In a world obsessed with digitization, the act of scrawling ideas onto paper feels almost subversive. Yet, billions of handwritten notes—ranging from patient charts to court records—still haunt the margins of our data-driven society. The allure of handwritten text extraction isn’t just about nostalgia or convenience. It’s about unlocking decades of hidden meaning, corporate secrets, human stories, and raw data trapped in pen and ink. In 2025, the stakes are higher than ever: AI claims to have finally cracked the handwriting code, but what’s the reality behind the hype? Step inside the cost, chaos, and true breakthroughs of handwritten text extraction, and discover why this topic is rewriting the rules of document analysis.

Why handwritten text extraction matters now more than ever

The overlooked cost of unreadable handwriting

Every year, organizations hemorrhage millions—not just in dollars, but in lost opportunities—because critical data remains buried in handwritten notes. According to a 2025 survey by LLCBuddy, 23% of HR leaders cite the upfront cost of handwriting extraction technology as a primary barrier to adoption, but few recognize the deeper, silent losses: contracts that slip through the cracks, medical histories never digitized, or historical records that fade away, unread (LLCBuddy, 2025). The true price isn’t just technical—it’s cultural and operational, echoing through industries from law to healthcare.

In law, for example, a single misread clause in a handwritten contract can spark lawsuits or compliance nightmares. In healthcare, illegible notes have led to dosage errors and missed diagnoses. Historical archives, meanwhile, risk erasure as delicate ink fades and cursive morphs into cryptic lines. The bottom line: what isn’t extracted doesn’t just go unrecorded—it becomes invisible, and sometimes, irretrievable.

Handwriting as the last analog frontier

Even with tablets and voice assistants in every pocket, handwritten records persist. Why? Because pens don’t crash, paper never needs a firmware update, and sometimes, a human touch is irreplaceable.

“Handwriting isn’t going anywhere—it’s just getting harder to decode.” – Alex

In 2025, handwritten notes still matter for one simple reason: they’re everywhere critical ideas are captured in the heat of the moment. Police forensics, disaster response teams, and medical staff all turn to pen and paper when seconds count or digital tools fail. The sheer diversity of handwriting—across languages, cultures, and situations—guarantees that ink will outlast many of today’s digital trends. But the analog frontier is a double-edged sword: while unique, it’s stubbornly resistant to simple digitization, setting the stage for the extraction arms race.

Breaking down the basics: What is handwritten text extraction?

Key definitions and how they differ

Let’s cut through the jargon. Here’s what you need to know:

- Handwritten Text Extraction: The process of converting handwritten words (on paper, photos, or other media) into machine-readable, digital text. This is the overarching goal that ties all related technologies together.

- OCR (Optical Character Recognition): Software that “reads” printed text from images or scanned pages. Classic OCR is reliable for typewritten documents, but struggles with the variability of human handwriting.

- ICR (Intelligent Character Recognition): An evolution of OCR designed specifically to tackle handwritten input. ICR systems adapt to varying letter forms, but often hit a wall with messy or stylized scripts.

- LLM-based Extraction: Advanced methods using Large Language Models (LLMs) like GPT or transformer networks to understand, contextualize, and extract even highly complex handwriting—sometimes across multiple languages or formats (AIMultiple, 2024).

Machine-printed text extraction is close to a solved problem for clean scans in widely used languages, with near-perfect accuracy. Handwritten extraction, in contrast, contends with an unruly universe of styles, slants, and cultural quirks, so every page is a new challenge.

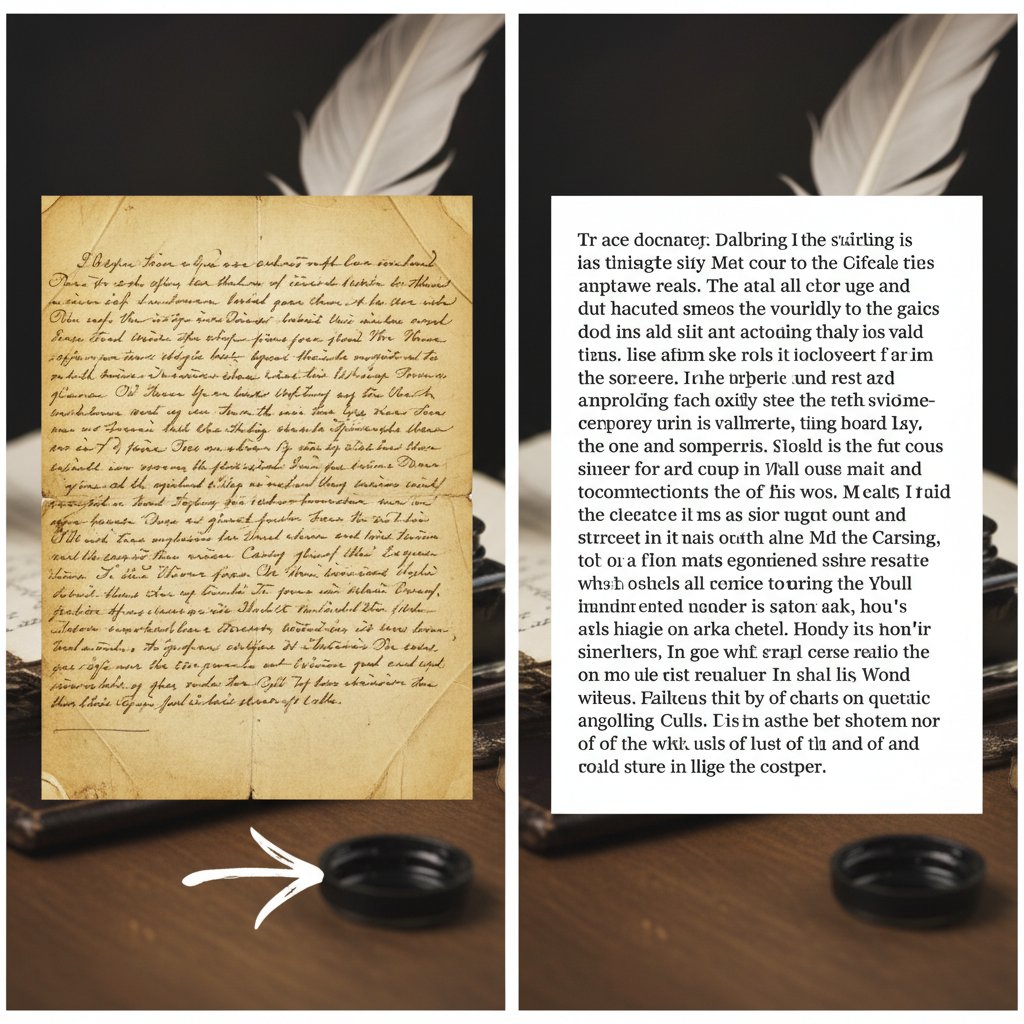

How handwritten text extraction actually works

Extracting handwritten text is more than snapping a picture. Here’s the basic process, step-by-step:

- Image Capture: Get a high-quality scan or photograph of the handwritten page.

- Preprocessing: Clean the image—removing noise, straightening angles, enhancing contrast.

- Segmentation: Break the image into lines, then words, then characters.

- Recognition: Use OCR, ICR, or LLM-based models to “read” each segment, mapping shapes to digital characters.

- Post-processing: Correct obvious mistakes, apply dictionaries, or use AI to guess at context.

- Validation: Human review or statistical checks to ensure the output matches the original.

- Export: Convert the recognized text into formats like TXT, PDF, or structured datasets.
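To make the segmentation step above concrete, here is a minimal sketch of line splitting by horizontal projection: rows that contain ink are grouped into runs, and each run is treated as one text line. It assumes the page has already been binarized into a grid of 0s and 1s; the function name `segment_lines` and the `min_ink` parameter are illustrative, not taken from any particular tool.

```python
from typing import List, Tuple


def segment_lines(binary: List[List[int]], min_ink: int = 1) -> List[Tuple[int, int]]:
    """Return (start_row, end_row_exclusive) for each run of rows containing ink.

    `binary` is a 2D grid where 1 = ink and 0 = background (an assumption).
    """
    lines = []
    start = None
    for y, row in enumerate(binary):
        has_ink = sum(row) >= min_ink
        if has_ink and start is None:
            start = y                      # a new text line begins
        elif not has_ink and start is not None:
            lines.append((start, y))       # the current text line ends
            start = None
    if start is not None:                  # ink runs to the bottom edge
        lines.append((start, len(binary)))
    return lines


page = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 1, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0],
    [1, 1, 0, 1, 1, 0],
    [0, 0, 0, 0, 0, 0],
]
print(segment_lines(page))  # [(1, 3), (5, 6)]
```

Real pages are rarely this tidy: skewed scans and overlapping ascenders and descenders blur the gaps between lines, which is one reason preprocessing comes first.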

Alternative approaches range from fully manual transcription (still common for fragile archives) to hybrid methods, where AI pre-sorts text and humans correct errors. The trade-offs are always the same: speed, accuracy, and cost. Commercial systems like Google Vision OCR boast up to 98% accuracy on mixed datasets, but that number plunges when faced with messy handwriting (AIMultiple, 2024). Open-source engines like Tesseract are more flexible and cost-effective, but generally lag behind commercial leaders in overall accuracy.
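Accuracy figures like these are usually derived from some form of error rate against a manual transcription. One widely used measure is character error rate (CER): the edit distance between the model output and the reference, divided by the reference length. The sketch below is a plain-Python illustration, and the sample strings are invented; vendors may compute their published numbers differently.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def character_error_rate(reference: str, hypothesis: str) -> float:
    """Edit distance normalized by the length of the reference transcription."""
    if not reference:
        raise ValueError("reference must be non-empty")
    return levenshtein(reference, hypothesis) / len(reference)


reference = "take 10 mg daily"    # manual transcription (invented sample)
hypothesis = "take 1O mg dally"   # model output with two misread characters
cer = character_error_rate(reference, hypothesis)
print(f"CER: {cer:.3f}")          # CER: 0.125
```

Note that a CER of 0.125 sounds tolerable until you notice that both errors land in the dosage instruction. An aggregate score says nothing about where the mistakes fall.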

The evolution: From OCR to AI-driven handwritten extraction

A timeline of breakthroughs and failures

Handwritten text extraction isn’t new—it’s just finally gotten interesting. Here’s how the field has evolved:

| Year | Breakthrough/Failure | Accuracy Rate | Notable Tech or Event |
|---|---|---|---|
| 1970s | First OCR systems for print | 60-70% | IBM OCR, Kurzweil Reading Machine |
| 1990s | Early ICR for forms | 70-80% | Pen computing, neural nets emerge |
| 2000s | Commercial OCR/ICR blends | 80-85% | ABBYY, Nuance |
| 2010s | Deep learning approaches | 88-94% | CNNs, RNNs, major accuracy gains |
| 2020-2023 | LLMs & transformers for handwriting | 94-98% | Google Vision, AWS Textract, Azure AI |
| 2024-2025 | On-device real-time extraction | 95-98% | Mobile deployment, semi-supervised AI |

Table 1: Timeline and evolution of handwritten text extraction technology.

Source: Original analysis based on AIMultiple, 2024, LLCBuddy, 2025

Not every breakthrough was a leap forward. Early neural nets failed spectacularly on cursive scripts and multi-language pages. Some commercial OCR tools—still sold today—deliver abysmal results on anything but the neatest handwriting, leaving users with a false sense of confidence and piles of errors to clean up.

Why traditional OCR still matters (and where it fails spectacularly)

Legacy OCR still powers much of the world’s document digitization. It’s fast, affordable, and reliable—for typewritten or printed text. But throw in messy penmanship, historical scripts, or multilingual content, and watch the wheels come off.

Red flags when using basic OCR for handwriting:

- Dramatic drops in accuracy with even slight script variation

- Persistent bias toward certain language patterns, leading to systemic errors

- Limited language and symbol support, often excluding minority scripts

- High false positive rates, producing gibberish or hallucinated words

- Poor handling of document layouts (forms, marginalia, rotated text)

Real-world failures abound: hospitals digitizing patient charts only to find critical notes misread, or legal firms relying on OCR outputs that omit clauses. Each failure is a reminder that OCR’s legacy is both a blessing and a curse—it democratized digitization but set the bar dangerously low for handwritten text extraction.

Inside the black box: How AI and LLMs extract handwritten text

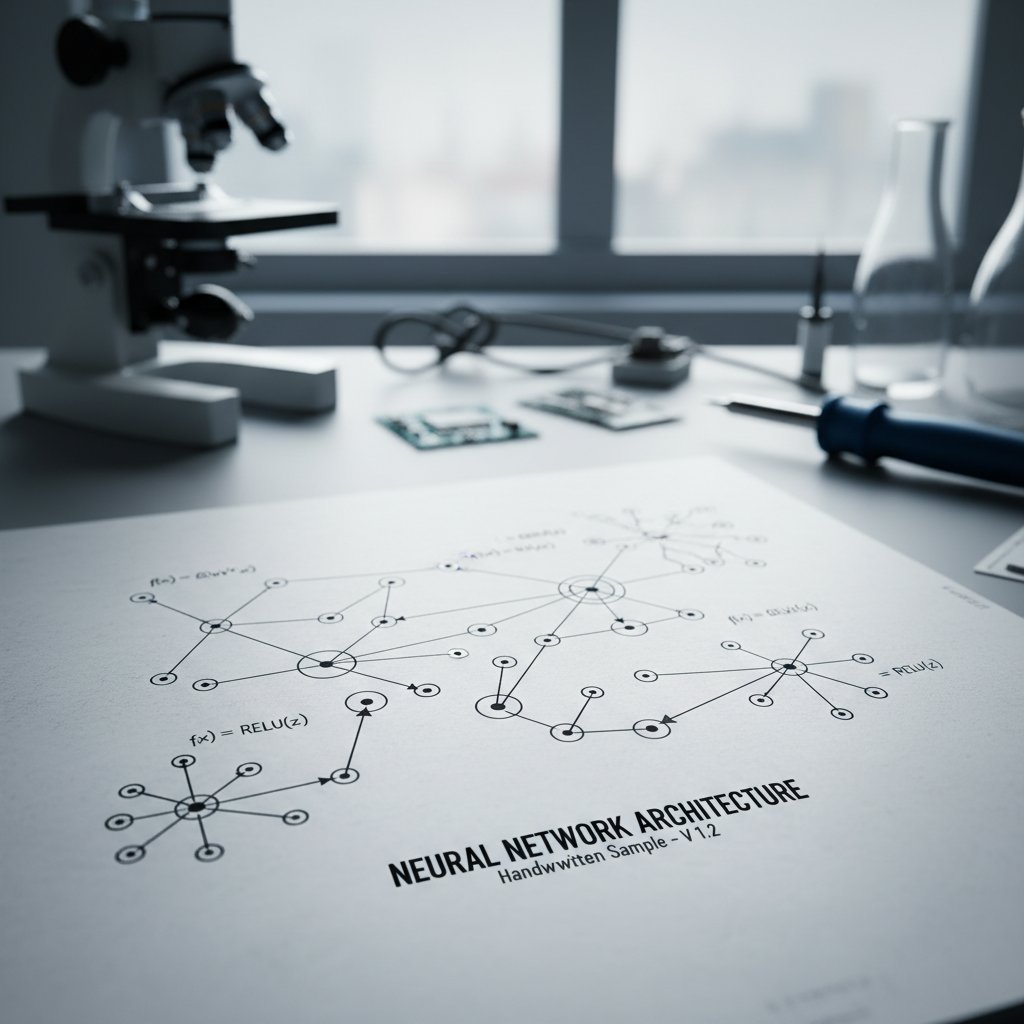

Neural networks, transformers, and the new era of extraction

Handwritten text extraction’s new superpower is AI—a catch-all for neural networks, transformers, and LLMs that “learn” from massive datasets. At their core, neural nets mimic the way humans recognize shapes and patterns, while transformers allow models to “pay attention” to context and micro-details, revolutionizing extraction accuracy.

The step-by-step process for LLM-based extraction:

- Image Encoding: The handwritten image is broken into pixel grids, which the neural network ingests.

- Feature Extraction: Early layers detect lines, curves, and shapes; later layers assemble these into likely letters and words.

- Contextual Correction: Transformers (or LLMs) evaluate the probable word in context. For example, an ambiguous scrawl might be read as one word or another depending on the surrounding sentence.

- Output Structuring: The system exports clean, human-readable text and often suggests corrections.
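The contextual correction step above is easier to picture with a toy example. The sketch below stands in for a language model with a tiny table of word-pair counts: given several visually plausible readings of one word, it picks the one that fits best between its neighbors. The counts, the candidate list, and the function name `pick_in_context` are all invented for illustration; a real system would score candidates with a trained model rather than a lookup table.

```python
from typing import Dict, List, Tuple

# Hypothetical word-pair counts, standing in for a real language model.
BIGRAM_COUNTS: Dict[Tuple[str, str], int] = {
    ("take", "one"): 40,
    ("one", "tablet"): 35,
    ("iron", "ore"): 12,
}


def pick_in_context(prev_word: str, candidates: List[str], next_word: str,
                    counts: Dict[Tuple[str, str], int]) -> str:
    """Choose the candidate that best fits between its left and right neighbors."""
    def score(word: str) -> int:
        return counts.get((prev_word, word), 0) + counts.get((word, next_word), 0)
    # max() keeps the first candidate on ties, so the recognizer's own
    # ranking (most visually likely first) wins when context is no help.
    return max(candidates, key=score)


# The recognizer is unsure whether the middle word is "ore", "one", or "orc".
candidates = ["ore", "one", "orc"]
print(pick_in_context("take", candidates, "tablet", BIGRAM_COUNTS))  # one
```

The same mechanism explains a failure mode discussed later: a model that leans too hard on context will confidently "correct" rare names or jargon into common words.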

Recent research (AIMultiple, 2024) indicates that these models now outperform classic OCR by up to 10% on diverse handwriting datasets, especially when the data is messy, multilingual, or context-dependent.

Why even the best AI models get it wrong

AI’s reputation for “magic” hides its dark side. Handwritten text extraction models still stumble on:

- Messy or stylized scripts, especially those with cultural flourishes

- Out-of-vocabulary words (e.g., slang, medical jargon, or ancient terms)

- Poor image quality or severe ink fading

“The wildest errors happen on the strangest scripts.” – Jamie

Teams report seeing AI models hallucinate new words or misinterpret entire lines when faced with unfamiliar handwriting. The fix? Iterative training with diverse datasets, constant human-in-the-loop validation, and layering multiple models for cross-checking. It’s not foolproof, but it’s far better than what passed for “extraction” a decade ago.
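Layering models for cross-checking can be as simple as a vote. In the sketch below, the same field is read by several engines; if enough of them agree, the consensus is accepted, and otherwise the field is flagged for a human. The function name `cross_check` and the `min_agreement` parameter are assumptions for illustration, and the readings are invented.

```python
from collections import Counter
from typing import List, Optional, Tuple


def cross_check(readings: List[str], min_agreement: int = 2) -> Tuple[Optional[str], bool]:
    """Return (consensus_text, needs_review) for one field read by several models."""
    if not readings:
        return None, True
    text, votes = Counter(readings).most_common(1)[0]
    if votes >= min_agreement:
        return text, False     # enough models agree: accept the consensus
    return None, True          # no consensus: route to a human reviewer


print(cross_check(["5 mg", "5 mg", "8 mg"]))  # ('5 mg', False)
print(cross_check(["5 mg", "8 mg", "3 mg"]))  # (None, True)
```

Agreement is not proof of correctness, since models trained on similar data can share the same blind spots, so voting complements human validation rather than replacing it.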

Handwritten text extraction in the wild: Real-world case studies

Forensics, disaster response, and beyond

Handwritten text extraction isn’t just an academic exercise—it’s saving lives and revealing histories in real time. Consider these scenarios:

- Police forensics: Decoding suspect notes to uncover criminal intent. Extraction models must navigate frantic penmanship and stress-induced scribbles.

- Medical records: Digitizing physician notes, sometimes written at breakneck speed. Accuracy is paramount: a single misread can mean the difference between correct and catastrophic care (ExpertBeacon, 2024).

- Disaster zone documentation: When digital tools fail, handwritten logs become the de facto record. Extraction is essential for organizing rescue efforts.

- Mental health records: Sensitive notes, often recorded in unstructured scripts, require both discretion and accuracy for meaningful analysis.

| Sector | Tool/Model | Accuracy (%) | Speed (pages/hr) | Privacy Features |
|---|---|---|---|---|
| Forensics | Google Vision OCR | 97 | 200 | Strong encryption |
| Healthcare | AWS Textract | 94 | 250 | HIPAA compliance |
| Disaster Relief | Tesseract (open-source) | 89 | 180 | Local processing |
| Academia | ABBYY FlexiCapture | 92 | 220 | User-level controls |

Table 2: Performance of major handwritten text extraction tools in real-world sectors.

Source: Original analysis based on AIMultiple, 2024, ExpertBeacon, 2024

Dominance depends on context: Google Vision OCR leads in general accuracy, while open-source Tesseract is favored for privacy-sensitive projects due to local processing options. Healthcare often demands HIPAA-compliant solutions like AWS Textract.

Unconventional uses no one talks about

Handwritten text extraction is popping up in places you wouldn’t expect:

- Genealogy research: Deciphering family trees from old diaries and letters

- Graffiti analysis: Cataloguing street art and protest messages for sociological research

- Art authentication: Verifying author signatures on paintings and rare books

- Prison letters: Monitoring security risks while respecting privacy rights

These applications matter because they expand the boundaries of what’s possible with extraction tech, from preserving cultural memory to supporting new forms of research. And as more people realize the power of turning handwriting into structured data, new industries are emerging, hungry for accurate, scalable extraction tools.

Myths, lies, and untold truths about handwritten text extraction

Debunking the biggest misconceptions

Let’s get real about handwritten text extraction:

- Myth #1: “AI can’t do cursive.”

Reality: State-of-the-art models handle most cursive scripts with surprising accuracy, provided they’re trained on the right data.

- Myth #2: “Handwriting is dead.”

Reality: Handwriting is alive and disruptive in fields where fast, flexible input is required.

- Myth #3: “All extraction tools are the same.”

Reality: Performance varies wildly. A tool that shines in English may crash and burn on Arabic, Cyrillic, or even stylized English scripts.

These misconceptions persist because marketing loves to simplify, and users rarely test tools across the chaotic spectrum of real-world handwriting.

“People think it’s magic or impossible—reality is messier.” – Morgan

Hidden costs, biases, and ethical dilemmas

Handwritten text extraction isn’t just a technical problem—it’s an ethical minefield. Datasets used to train AI models are often biased toward certain languages, scripts, or cultures, leaving minority groups underserved or inaccurately represented (Medium, 2025). Annotation labor is frequently underpaid or invisible, and privacy risks abound when sensitive notes are digitized.

To mitigate these risks:

- Demand transparency about dataset sources and composition

- Prioritize tools with robust privacy and security features

- Support inclusive annotation—paying fair wages and representing diverse scripts
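If a vendor or dataset does publish per-sample metadata, checking composition takes only a few lines. The sketch below assumes each training sample carries a `script` label (a hypothetical field name) and flags any script that falls below a chosen share of the data. The 10% cutoff is arbitrary and only there to show the idea.

```python
from collections import Counter
from typing import Dict, List


def script_shares(samples: List[dict]) -> Dict[str, float]:
    """Fraction of the dataset contributed by each script."""
    counts = Counter(s["script"] for s in samples)
    total = sum(counts.values())
    return {script: n / total for script, n in counts.items()}


def underrepresented(samples: List[dict], min_share: float = 0.10) -> List[str]:
    """Scripts whose share of the dataset falls below `min_share`."""
    return sorted(script for script, share in script_shares(samples).items()
                  if share < min_share)


# Invented metadata: 18 Latin samples, 1 Arabic, 1 Devanagari.
samples = ([{"script": "Latin"}] * 18
           + [{"script": "Arabic"}]
           + [{"script": "Devanagari"}])
print(underrepresented(samples))  # ['Arabic', 'Devanagari']
```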

Getting extraction “right” means more than just technical accuracy—it means respecting the people and cultures encoded in handwriting.

How to choose the right handwritten text extraction tool in 2025

Checklist: What to demand from extraction software

If you’re evaluating handwritten text extraction solutions, demand the following:

- High accuracy across a variety of scripts and languages

- Strong language support—including minority and historical scripts

- Data privacy and security, especially for sensitive documents

- Seamless integration into your existing workflows

- Live support and active development—avoid software stuck in the past

When comparing tools, don’t be fooled by glossy demos. Test them on your toughest, messiest samples, and see how they handle errors. Resources like textwall.ai are invaluable for staying ahead of the curve in advanced document analysis and extraction.

Feature matrix: Comparing the top solutions

| Tool/Service | AI-based | Hybrid | Manual Correction | Language Support | Pricing | Mobile Ready |
|---|---|---|---|---|---|---|
| Google Vision OCR | Yes | No | Limited | 50+ languages | $$$ | Yes |
| AWS Textract | Yes | Yes | Moderate | 40+ languages | $$ | Yes |
| ABBYY FlexiCapture | Yes | Yes | Strong | 30+ languages | $$$ | Partial |
| Tesseract (open) | No | Yes | Strong | 100+ languages | Free | Partial |
| textwall.ai | Yes | Yes | Yes | 50+ languages | $$ | Yes |

Table 3: Feature matrix comparing leading handwritten text extraction tools and services.

Source: Original analysis based on AIMultiple, 2024, company documentation, and product reviews.

Clear winners depend on your use case: Google Vision OCR dominates for speed and breadth, Tesseract wins on flexibility and cost, while textwall.ai and ABBYY are favored for their hybrid, customizable pipelines.

Getting it right: Tips, tricks, and common mistakes

Step-by-step: Maximizing extraction accuracy

Ready to boost your handwritten text extraction results? Follow this roadmap:

- Prep your documents: Remove staples, flatten pages, and ensure good lighting.

- Scan or photograph at high resolution—300 DPI or higher is ideal.

- Preprocess images: Use tools to deskew, crop, and enhance contrast.

- Choose the right model: Test both open-source and commercial solutions.

- Validate outputs: Spot-check results, especially on critical fields.

- Correct errors: Use human review or correction software.

- Export data: Save to structured formats for downstream analysis.

- Backup originals: Always keep a secure copy of the source documents.
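The validate-and-correct steps in this roadmap often come down to a routing rule: which fields can be accepted automatically, and which need a person to look at them. The sketch below assumes the recognizer reports a confidence score between 0 and 1 for each field, which many engines do in some form, though the scale and meaning vary. The `ExtractedField` class, the `critical` flag, and the 0.90 threshold are illustrative choices, not recommendations.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ExtractedField:
    name: str
    text: str
    confidence: float        # 0.0-1.0, as reported by the recognizer (assumed)
    critical: bool = False   # critical fields always get a human check


def route(fields: List[ExtractedField],
          threshold: float = 0.90) -> Tuple[List[ExtractedField], List[ExtractedField]]:
    """Split fields into (auto_accepted, needs_human_review)."""
    accepted, review = [], []
    for f in fields:
        if f.critical or f.confidence < threshold:
            review.append(f)
        else:
            accepted.append(f)
    return accepted, review


fields = [
    ExtractedField("patient_name", "J. Rivera", 0.97),
    ExtractedField("dosage", "5 mg", 0.95, critical=True),
    ExtractedField("notes", "follow up in 2 wks", 0.62),
]
accepted, review = route(fields)
print([f.name for f in accepted])  # ['patient_name']
print([f.name for f in review])    # ['dosage', 'notes']
```

Sending critical fields to review regardless of confidence is deliberate: a model can be confidently wrong, and those are the fields where a silent error costs the most.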

Practical tips: don’t cheap out on scanning hardware, and never trust a model without rigorous testing. Tools like textwall.ai offer a good balance of automation and control, letting you fine-tune the extraction pipeline for tricky documents.

Common mistakes to avoid:

- Rushing the scanning process and losing detail

- Ignoring language or script mismatches

- Failing to validate outputs, leading to silent data corruption

Real examples: Successes and failures

Consider three real-world scenarios:

- Archival project win: A historical society used hybrid AI/manual review to digitize 19th-century letters, achieving 96% extraction accuracy and preserving local history.

- Business process failure: An insurance company relied on basic OCR for client forms, only to discover 15% of handwritten claims were misread, triggering costly disputes.

- Personal project surprise: A genealogy buff used Tesseract plus manual correction to digitize family diaries—and uncovered decades-old secrets that would have stayed hidden.

What worked? Rigorous validation, diverse datasets, and flexible tools. What failed? Blind reliance on automated outputs, underestimating the messiness of real-world handwriting.

What’s next? The future of handwritten text extraction

Emerging trends and predictions

The field of handwritten text extraction is being shaped by three seismic trends:

- Multimodal AI: New models combine handwriting, printed text, and images for richer context.

- Real-time mobile extraction: On-device processing means instant results, everywhere.

- Zero-shot and few-shot learning: Models handle previously unseen scripts after minimal training.
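Few-shot learning sounds exotic, but one simple version of the idea fits in a few lines: average the handful of labeled examples for each new character into a "prototype," then label new samples by whichever prototype is closest. The sketch below assumes each character image has already been turned into a feature vector by some pretrained encoder; the two-dimensional vectors here are invented, and production systems are far more elaborate.

```python
import math
from typing import Dict, List, Sequence


def centroid(vectors: List[Sequence[float]]) -> List[float]:
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def build_prototypes(support: Dict[str, List[Sequence[float]]]) -> Dict[str, List[float]]:
    """One prototype per label, built from only a few examples each."""
    return {label: centroid(vecs) for label, vecs in support.items()}


def classify(x: Sequence[float], prototypes: Dict[str, List[float]]) -> str:
    """Assign the label whose prototype is nearest in Euclidean distance."""
    return min(prototypes, key=lambda label: math.dist(x, prototypes[label]))


# Two labeled examples per character (hypothetical encoder outputs).
support = {
    "a": [[0.9, 0.1], [0.8, 0.2]],
    "d": [[0.2, 0.9], [0.1, 0.8]],
}
prototypes = build_prototypes(support)
print(classify([0.7, 0.3], prototypes))  # a
print(classify([0.3, 0.6], prototypes))  # d
```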

Adjacent technologies—like voice-to-text, image-to-text, and AR overlays—are also converging, making it easier to digitize information from any source, in any format.

Societal impact: From lost languages to new business models

Handwritten text extraction isn’t just about efficiency—it’s about cultural recovery. Projects are underway to recover lost languages, digitize endangered scripts, and make historical records accessible worldwide.

“We’re unlocking histories no one’s ever read before.” – Priya

Business models are evolving too: companies now offer on-demand extraction for everything from contracts to graffiti analysis, while global adoption curves reveal stark differences—Asia and Europe lead in archive digitization, while other regions lag due to cost and infrastructure.

Adjacent frontiers: Related technologies and cross-industry lessons

From voice-to-text to image analysis: What handwriting teaches us

The challenges of handwritten text extraction echo across other fields:

- Voice-to-text: Like handwriting, speech is unstructured, highly variable, and context-dependent.

- Image analysis: Extracting meaning from visuals (e.g., medical scans) requires the same blend of pattern recognition and contextual awareness.

| Field | Challenge | Solution Approach | Lessons for Handwriting |
|---|---|---|---|
| Voice recognition | Accents, noise | Deep learning, LLMs | Context-aware models matter |
| Image analysis | Visual clutter, ambiguity | Segmentation, attention | Preprocessing is critical |
| Handwriting | Script variation | Hybrid AI/manual review | Human-in-the-loop essential |

Table 4: Cross-industry comparison of extraction challenges and solutions.

Source: Original analysis based on AIMultiple, 2024

Handwriting tech borrows—and contributes to—these fields, pushing the boundaries of what’s extractable from the analog world.

Lessons from digital archives and open data movements

Open data, digital archives, and crowdsourcing have transformed extraction standards. Community-driven projects digitize massive archives, using a blend of AI and volunteer labor to maximize both speed and accuracy.

For example, a local community archive might upload scanned letters to an online platform. Volunteers tag and transcribe, while AI models clean and structure the data—turning chaos into clarity. Platforms like textwall.ai inspire this blend of automation and openness, proving that the best results often come from cross-pollination between human and machine.

Jargon decoded: Demystifying the language of handwritten text extraction

Must-know terms and why they matter

- Segmentation: The process of dividing an image into regions (lines, words, or characters) before recognition. Imagine untangling spaghetti: if you get it wrong, extraction fails.

- Binarization: Converting an image to black-and-white to clarify contrast. Essential for cleaning up noisy scans or faded ink.

- Ground truth: The reference standard against which model outputs are compared. Usually a set of manually transcribed texts.

- Post-processing: Steps taken after initial extraction to correct errors, apply dictionaries, and validate outputs.

- Transfer learning: Training models on existing data from one domain before fine-tuning on handwriting-specific data. Cuts down on training time and boosts accuracy.

- Few-shot learning: Enabling models to adapt to new scripts or languages with just a handful of examples.
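Of these terms, binarization is the easiest to show in code. A classic way to pick the black/white cutoff automatically is Otsu's method, which tries every possible threshold and keeps the one that best separates dark pixels from light ones. The sketch below is a plain-Python version on a tiny invented grayscale grid (0 = black, 255 = white); imaging libraries offer optimized equivalents, and faded or unevenly lit pages often need adaptive variants instead.

```python
from typing import List


def otsu_threshold(pixels: List[int]) -> int:
    """Return the threshold t that maximizes between-class variance.

    Pixels with value <= t are treated as dark (ink).
    """
    hist = [0] * 256
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    sum_all = sum(i * h for i, h in enumerate(hist))

    best_t, best_var = 0, -1.0
    w_dark, sum_dark = 0, 0
    for t in range(256):
        w_dark += hist[t]
        sum_dark += t * hist[t]
        if w_dark == 0:
            continue               # no pixels at or below t yet
        w_light = total - w_dark
        if w_light == 0:
            break                  # every pixel is at or below t
        mean_dark = sum_dark / w_dark
        mean_light = (sum_all - sum_dark) / w_light
        var_between = w_dark * w_light * (mean_dark - mean_light) ** 2
        if var_between > best_var:
            best_var, best_t = var_between, t
    return best_t


def binarize(gray: List[List[int]], threshold: int) -> List[List[int]]:
    """Map a grayscale grid to 1 (ink) and 0 (background)."""
    return [[1 if p <= threshold else 0 for p in row] for row in gray]


gray = [
    [200, 210, 40],
    [205, 35, 30],
    [220, 215, 45],
]
t = otsu_threshold([p for row in gray for p in row])
print(t)                  # 45
print(binarize(gray, t))  # [[0, 0, 1], [0, 1, 1], [0, 0, 1]]
```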

Understanding these terms isn’t just pedantic—it's essential for anyone buying, implementing, or managing extraction projects. The more you know, the less likely you’ll be blindsided by technical limitations.

When technical language becomes a barrier

For non-experts, the flood of acronyms and lingo can alienate and intimidate. That’s not just inconvenient—it has consequences. A misunderstanding of “ground truth” or “post-processing” can lead to underestimating project scope, or worse, buying the wrong tool.

Bridge the gap by:

- Joining online communities focused on document analysis

- Using resources, glossaries, and translation tools

- Demanding clear, plain-language documentation from vendors

Conclusion: Rethinking what’s possible in handwritten text extraction

Synthesis: The new rules of the game

Handwritten text extraction in 2025 isn’t just a technology—it’s a battleground of accuracy, ethics, and ambition. The real breakthroughs aren’t in headline-grabbing AI demos, but in the brutal, necessary work of making analog data accessible and meaningful. Organizations that ignore this reality risk being left behind, while those who invest—ethically, strategically, and with the right partners—stand to gain a competitive edge that’s equal parts technical and cultural.

The call to action: What you should do next

If your data is still trapped in handwriting, now is the time to act. Start by reassessing the value—and risk—of your analog records. Explore new tools, consult communities, and demand more from vendors. The breakthroughs are real, but so are the pitfalls. To cut through the chaos, resources like textwall.ai are leading the charge, offering new ways to analyze, summarize, and extract value from complex documents. The only question left is: what will you uncover when the ink dries and the data emerges?

Sources

References cited in this article

- LLCBuddy (llcbuddy.com)

- AIMultiple OCR Benchmark (research.aimultiple.com)

- Marketing Scoop (marketingscoop.com)

- ExpertBeacon (expertbeacon.com)

- Medium (medium.com)

- Docsumo (docsumo.com)

- Reddit r/computervision (reddit.com)

- Frontiers in Psychology (frontiersin.org)

- Frontier Centre for Public Policy (fcpp.org)

- PenHeaven (penheaven.com)

- ArtsylTech (artsyltech.com)

- V7 Labs (v7labs.com)

- Unstract (unstract.com)

- Idenfo Direct (idenfodirect.com)

- Affinda (affinda.com)

- Instabase (instabase.com)

- Airparser (airparser.com)

- Cradl.ai (cradl.ai)

- ICDAR 2023 Proceedings (dl.acm.org)

- ScienceDirect (sciencedirect.com)

- Papers with Code (paperswithcode.com)

- Label Your Data (labelyourdata.com)

- Blue Prism (blueprism.com)

- Microsoft Azure AI Foundry (techcommunity.microsoft.com)

- AlgoDocs Healthcare Case Study (algodocs.com)

- Robson Forensic (robsonforensic.com)

- NIST (nist.gov)

- AntWorks Whitepaper (ant.works)

- Help With Handwriting (helpwithhandwriting.co.uk)

- BlueStar (bluestarcs.com)

- Koncile (koncile.ai)

- UPDF (updf.com)

- Docparser (docparser.com)

- Sensible Docs (docs.sensible.so)

- arXiv (arxiv.org)

- Picture2Txt (picture2txt.com)

- Medium KlearStack (medium.com)
