Document Summarizer Comparison Reviews That Expose Accuracy Myths

If you think reading is hard, try drowning in it. In 2025, information isn’t just a flood—it’s a full-on deluge, swallowing professionals, researchers, and students in relentless waves of text. Reports stack like geological strata on digital desks, legal contracts proliferate, academic papers multiply, and the corporate world is one endless PowerPoint after another. Enter the document summarizer: AI-powered salvation, or just another overhyped gadget? This brutally honest, research-driven review exposes the bold truths behind document summarizer comparison reviews—where the hype stops, reality kicks in, and your productivity (or reputation) is on the line. Whether you’re chasing the “best AI document summarizer,” hunting for real-world case studies, or dodging the next subscription trap, buckle up—this isn’t your average roundup. It’s the only guide that shows you what matters, what falls through the cracks, and how to pick the right weapon in the war on information overload.

Why document summarizers matter now: information overload in 2025

The new deluge: why traditional reading can’t keep up

There’s no polite way to put it—2025 is the year the world finally admits defeat in the battle against information. According to OpenText, 2024, 80% of global workers are reporting information overload, with document fatigue cited as the leading workplace stressor in nearly three-quarters of all surveyed professionals. As the world’s data races toward an eye-watering 181 zettabytes, the human brain simply can’t keep up.

The psychological toll is real. Burnout, decision paralysis, and a creeping sense that you’ll never get to “inbox zero” have become the norm. Economically, the cost is staggering: lost productivity due to document overload is now measured in billions of dollars globally each year, as knowledge workers struggle to separate signal from noise. Gone are the days when reading everything was a badge of honor. Today, survival means knowing what not to read—and trusting an algorithm to do the dirty work.

"It’s not about laziness—it’s about survival in a data storm."

— Alex, knowledge worker

Timeline of document summarizer technology evolution (2015–2025)

| Year | Key Innovation | Industry Impact |
|---|---|---|
| 2015 | Rule-based extractive summarizers debut in major document management platforms | Early automation, limited nuance |
| 2018 | Introduction of neural network-based summarizers | Increased adaptation to language complexity |
| 2020 | Rise of transformer models (BERT, GPT-2) for improved summaries | First abstractive summaries, broader adoption |
| 2022 | Mainstream AI summarizers integrate with cloud/PDF tools | Accessibility skyrockets, diverse user base |
| 2024 | LLM-powered platforms offer flashcard, multi-style output, and multilingual support | Sophisticated, human-like summaries; new user expectations |

Table 1: The evolution of document summarizer technology, highlighting paradigm shifts in automation and user experience. Source: Original analysis based on Tech.co, 2024; Trending AI Tools, 2024.

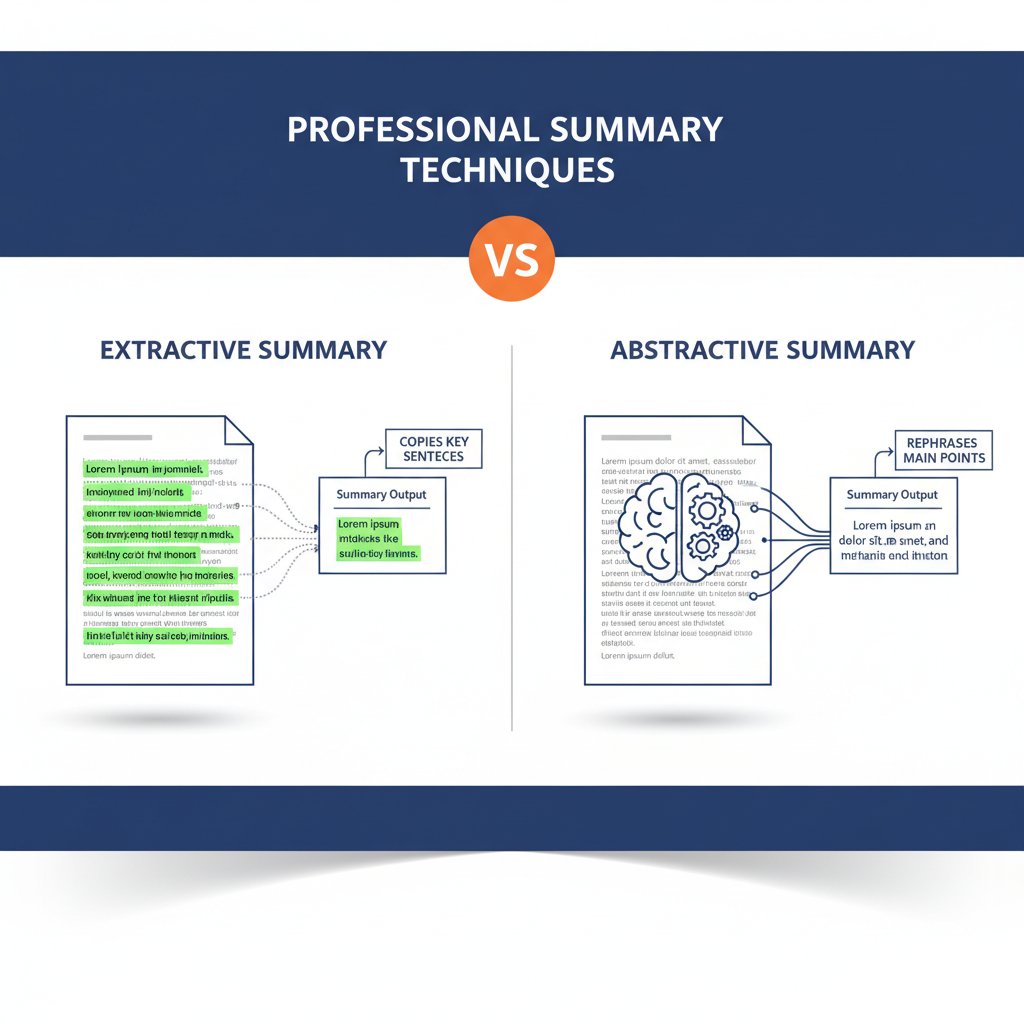

From extractive to abstractive: how summarizers actually work

When you hit “summarize,” what’s really happening under the hood? Most users don’t know—and surprisingly, many comparison reviews gloss right over this. There are two main approaches. Extractive summarizers cherry-pick sentences straight from the document, rearranging or compressing them. It’s robotic, sometimes useful, often clunky. Abstractive summarizers, on the other hand, generate new sentences, paraphrasing and distilling meaning more like a human would. For instance, an extractive tool might simply stitch together three sentences from a long report, while an abstractive tool might compose a single, original summary capturing the overall thrust.
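To make the extractive idea concrete, here is a minimal sketch of one classic approach: score each sentence by how frequent its words are in the document, then return the top sentences verbatim. It is an illustration only, not how any tool named in this review works; the stopword list, the naive sentence splitter, and the function names are all assumptions made for the example.

```python
import re
from collections import Counter

# Tiny stopword list, chosen for illustration only.
STOPWORDS = {"the", "a", "an", "of", "to", "and", "in", "is", "it",
             "that", "for", "on", "with", "as", "was", "are"}


def split_sentences(text):
    """Naive splitter: break after ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def content_words(text):
    """Lowercased words with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def extractive_summary(text, max_sentences=2):
    """Return the highest-scoring sentences, verbatim and in original order."""
    sentences = split_sentences(text)
    freq = Counter(content_words(text))

    def score(sentence):
        tokens = content_words(sentence)
        # Average frequency, so long sentences are not favored automatically.
        return sum(freq[t] for t in tokens) / len(tokens) if tokens else 0.0

    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])  # restore document order
    return [sentences[i] for i in keep]


report = ("The contract renews every year. "
          "The contract fee rises each year. "
          "Lunch was fine.")
print(extractive_summary(report))
```

Nothing in the output is new text: every sentence is copied from the source, which is exactly why extractive summaries stay faithful but can read as clunky. An abstractive system would instead generate a fresh sentence, which a frequency counter like this cannot do.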

Why should you care? Because extractive tools can miss nuance, repeat information, or fail to capture context—problems amplified when you’re dealing with critical content like legal contracts or research reviews. Abstractive summarizers, powered by large language models (LLMs), are now setting new standards for fluency and comprehension. Yet even these systems have blind spots, occasionally hallucinating facts or missing subtle details. The bottom line? If you don’t know which approach your tool is taking, you’re rolling the dice with your information.

LLM-based summarizers are currently outpacing older rule-based systems in both accuracy and depth. According to Scribbr, 2024, modern platforms like Genei and Scholarcy can reduce reading time by as much as 70%, but only if you understand how they generate those “insights.”

Who actually needs a document summarizer? Rethinking the audience

If you think document summarizers are just for lazy students, think again. The adoption curve in 2025 has flipped—lawyers, corporate analysts, journalists, and even creative agencies are in on the act. Legal firms use AI to scan contracts for killer clauses; market researchers rely on summarizers to distill 200-page reports in minutes; academic teams use them to speed up literature reviews. And then there’s the unexpected: product managers digesting customer feedback, HR teams summarizing interview transcripts, and even screenwriters breaking down story bibles.

- Unseen efficiency gains: Experts note that the time saved is more than just minutes—it’s a mental reset, reducing cognitive fatigue and freeing up attention for high-value tasks.

- Risk minimization: In regulated industries, fast but accurate summaries can flag non-compliance risks before they snowball.

- Democratization of knowledge: Summarizers make dense technical, legal, or medical content accessible to non-experts, leveling the playing field.

- Onboarding made easy: New employees ramp up faster with AI-generated briefs of legacy documentation.

- Better meetings: Teams review AI-generated summaries before, not after, the meeting, making every minute count.

Executives across industries are changing their reading habits—skimming raw documents less and trusting AI to serve up the essentials. Students, too, are using summarizers to prioritize research sources instead of drowning in JSTOR. The upshot? The document summarizer is no longer a niche curiosity. It’s a survival tool for anyone who reads for a living.

Behind the curtain: how AI document summarizers really choose what matters

The black box problem: can you trust an AI with your decisions?

Let’s get honest—the rise of LLM summarizers has solved some problems and created new ones. Chief among them? The “black box” dilemma. Most AI summarizers don’t explain why certain information makes the cut and other details vanish. This opacity can lead to disturbing outcomes, especially when summarizers hallucinate facts—fabricating details that never existed in the original document.

"Every summary is an act of interpretation, not just compression."

— Jamie, data scientist

Current research from Fellow.app, 2024 shows user trust is highest when tools provide transparency—showing what was omitted, how sentences were ranked, or letting users adjust “strictness” settings. Yet, most platforms still operate in a black box mode, leaving users to double-check everything or, worse, assume the summary is gospel.

| Platform | Transparency | Explainability | Auditability | User Controls |
|---|---|---|---|---|
| Genei | High | Moderate | Yes | Adjustable |
| Scholarcy | Moderate | High | Partial | Some |
| Upword | Moderate | Moderate | No | Limited |
| TLDRthis | Low | Low | No | Minimal |
| RecapioGPT | Low | Low | No | Minimal |

Table 2: Feature matrix comparing transparency, explainability, and auditability across leading AI document summarizers. Source: Original analysis based on WritingMate.ai, 2024; Tech.co, 2024; Scribbr, 2024.

Accuracy vs. speed: which matters more and when?

Speed is seductive (who doesn’t want instant results?), but accuracy is non-negotiable. The best document summarizers can handle a 50-page contract in under a minute, but does that summary still capture every landmine clause? According to Scribbr, 2024, AI summarizers reduce document analysis time by up to 70%, but performance varies wildly depending on document complexity and domain.

Here’s how to test summarizer accuracy using benchmark datasets:

- Pick a gold-standard document: Choose a text with an official or expert-written summary—think published research or regulatory reports.

- Run it through your summarizer: Note how long it takes and compare the output against the gold standard.

- Check for omissions and fabrications: Mark details the summarizer missed or invented.

- Score for readability, factual integrity, and structure: Use a simple rubric—did it preserve the main points? Is it coherent?

- Repeat with different document types (legal, technical, creative, multilingual): Patterns will emerge—some tools excel at contracts, others at academic papers.
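The comparison against a gold-standard summary can be roughed out with a word-overlap score in the spirit of ROUGE-1. Treat this as a minimal sketch under simple assumptions (lowercased alphanumeric tokens, no stemming); the function names are illustrative, and word overlap only measures coverage, so it will not catch a fluent summary that states the wrong fact.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercased alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def unigram_overlap(candidate, reference):
    """Return (precision, recall, f1) of unigram overlap, ROUGE-1 style."""
    cand = Counter(tokenize(candidate))
    ref = Counter(tokenize(reference))
    overlap = sum((cand & ref).values())  # counts clipped to the smaller side
    precision = overlap / sum(cand.values()) if cand else 0.0
    recall = overlap / sum(ref.values()) if ref else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


gold = "the fee rises every year"        # expert-written summary
tool_output = "the fee rises yearly"     # summarizer output under test
precision, recall, f1 = unigram_overlap(tool_output, gold)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
# precision=0.75 recall=0.60 f1=0.67
```

Low recall suggests omissions; low precision suggests padding or invented material. Either way, the number is a prompt for a human read, not a verdict.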

Across industries, priorities diverge. Legal and healthcare teams typically value accuracy and audit trails over speed—one missed clause can torpedo a contract or compliance review. In creative fields, speed and style may trump granular detail.

The myth of the 'unbiased' summary

AI is often touted as impartial. In practice? Not so much. Bias creeps in through training data, prompt engineering, and even user inputs. For example, if a summarizer’s training set overrepresents legal or technical jargon from a particular country or industry, the summaries tend to echo those patterns. According to a Scribbr, 2024 analysis, some summarizers consistently undersell dissenting opinions in academic papers or highlight certain industry buzzwords over substantive findings.

Key terms explained

- Bias: Systematic distortion in the way a summarizer selects, paraphrases, or omits content, often inherited from its training data or user prompts.

- Hallucination: When an AI invents information not present in the original document, typically as a side effect of abstractive summarization.

- Prompt engineering: Crafting the input or instructions to steer a summarizer’s behavior; it can introduce or mitigate bias, depending on intent and skill.

To mitigate bias, experts recommend cross-referencing summaries with original documents, using tools that allow for prompt customization, and demanding transparency from vendors. Tech.co, 2024 also advises regular audits, especially in regulated fields.

The contenders: side-by-side document summarizer comparison in 2025

Meet the latest AI summarizers: what’s new and what’s hype

The 2025 marketplace is a battlefield. New tools leveraging LLMs and hybrid models are everywhere—each promising smarter summaries, better integration, or more granular controls. But here’s the hard truth: marketing claims often outrun reality. Free tiers lure users in, but advanced features (like multi-format export, flashcard creation, or audio summarization) quickly bump you into paid plans starting around $7/month.

A careful look at user reviews and independent tests, including data from WritingMate.ai, 2024, reveals that tools like Genei are ahead for research and academic use, thanks to customization and integration options, while Scholarcy wins for its flashcard and note-taking features. Upword and TLDRthis are known for their simplicity, but can miss nuance on complex legal or technical documents. RecapioGPT stands out for audio and video transcript summarization, but struggles with dense legalese.

| Platform | Accuracy | Speed | Privacy | Integration | Cost (per month) |
|---|---|---|---|---|---|
| Genei | High | Fast | Strong | PDF, browser, API | $9.99 |
| Scholarcy | High | Moderate | Moderate | Browser, PDF | $7.99 |
| Upword | Moderate | Fast | Moderate | Web, browser | $8.00 |
| TLDRthis | Moderate | Fast | Basic | Web, browser | Free/$6.00 |
| RecapioGPT | Moderate | Fast | Moderate | Audio, video | $8.99 |
| Notta | Moderate | Fast | Moderate | Audio, video | $8.25 |

Table 3: Comparison of leading 2025 document summarizers by key metrics. Source: Original analysis based on WritingMate.ai, 2024; Trending AI Tools, 2024.

What no one tells you: real-world tests vs. marketing

Genei claims “research-grade” summaries, but how does it fare on a 200-page legal contract? Scholarcy touts flashcards, but does it nail nuance in medical literature? In independent, hands-on tests, reviewers found that no tool is “best” across all document types. For instance, a summarizer might excel with news articles but fail to capture regulatory nuance in healthcare reports. A crucial lesson: generic “best of” lists are only useful if your use case matches the test.

The focus of comparison reviews has shifted over time:

- 2015–2017: Extractive-only tools dominate; reviews focus on speed.

- 2018–2020: LLMs begin to emerge; accuracy and style enter the conversation.

- 2021–2022: Integrations and cloud features become review benchmarks.

- 2023–2024: Real-world case studies, transparency, and privacy take center stage.

- 2025: Customization, multilingual support, and bias audits drive comparison reviews.

Real-world testing often surfaces surprises. Some tools over-summarize, cutting away vital context. Others hallucinate key details, inventing facts that never appeared in the source document—a risk that can’t be ignored when stakes are high.

The upshot? Always evaluate summarizers on your real documents, not just canned benchmarks.

The hidden costs: privacy, data leakage, and subscription creep

Here’s what rarely makes it into glossy comparison reviews: the cost of privacy. Cloud-based summarizers inevitably upload your sensitive documents—contracts, medical records, or internal memos—to third-party servers. According to Fellow.app, 2024, privacy policies are often vague, and data retention practices aren’t always transparent.

- Red flag: No clear data retention or deletion policy.

- Red flag: Servers located in low-regulation jurisdictions.

- Red flag: Subscription auto-renews at a higher “business” tier without notice.

- Red flag: No audit logs or data access controls for teams.

- Red flag: Lack of end-to-end encryption by default.

Subscription models also have a dark side: “seat creep.” What starts as $7/month balloons once your team grows, or when premium integrations become essential. Savvy users track these hidden costs and demand granular billing controls.
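The arithmetic behind seat creep is simple enough to check before you sign. The sketch below assumes a flat per-seat monthly price plus flat monthly add-ons; the $7 figure comes from the entry-level pricing mentioned above, while the 25-seat team and the $50 add-on are hypothetical numbers picked for illustration.

```python
def annual_cost(seats, price_per_seat, addons_per_month=0.0):
    """Yearly spend: per-seat fees plus flat monthly add-ons."""
    return round(12 * (seats * price_per_seat + addons_per_month), 2)


solo = annual_cost(seats=1, price_per_seat=7.00)
team = annual_cost(seats=25, price_per_seat=7.00, addons_per_month=50.00)
print(solo)  # 84.0
print(team)  # 2700.0
```

Real vendor pricing often adds tiers, minimum seat counts, or usage caps, so plug in the numbers from the actual quote rather than the list price.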

Case studies: wins, losses, and surprises from the frontlines

When summaries save the day: business, academia, and beyond

In a high-stakes merger, a consulting firm was drowning in due diligence reports—thousands of pages, tight deadline, zero margin for error. With hours to go, the team fed a critical batch into an AI summarizer, surfacing deal-breaker clauses that escaped manual review. Project saved, millions secured.

In academia, research teams use summarizers like Scholarcy and Genei to power through literature reviews. Instead of slogging through 50+ articles, researchers extract core findings and methodologies in minutes, accelerating innovation and reducing time-to-publication.

Legal professionals, once chained to the grind of contract review, now rely on summarizers to highlight high-risk terms and conflicting clauses. According to Scribbr, 2024, law firms using AI tools have reduced review time by up to 70%—with fewer costly oversights.

Other unconventional uses include:

- Creative writing: distilling story bibles or research dumps into actionable outlines.

- Journalism: summarizing interview transcripts and government reports on deadline.

- Academic research: comparing methodologies across multiple papers for meta-analyses.

- Product management: surfacing user insights from sprawling feedback logs.

"Without AI summaries, we’d still be buried under paperwork."

— Morgan, project manager

When summarizers go wrong: cautionary tales and hard lessons

Not every story is a win. In one infamous case, a financial analyst relied on an AI-generated summary of a corporate earnings report. The summarizer, blinded by ambiguous phrasing, omitted a crucial footnote about litigation risk. The oversight led to a flawed briefing, and the company’s reputation took a hit when the omitted risk came to light.

Another user submitted a technical manual for summarization, only to discover the output contained hallucinated specifications. Trusting the summary, the team implemented the wrong protocol, causing costly delays.

Relying solely on summaries can also erode deep domain knowledge over time. Experts caution that habitually skimming AI distillations can dull critical thinking and lead to “insight myopia”—missing the big picture or subtle contradictions.

To avoid disaster:

- Always cross-verify summaries with original documents for high-stakes content.

- Use summarizers as a first pass, not the final word.

- Enable audit logs and feedback mechanisms to catch recurring mistakes.

- Encourage teams to rotate between raw reading and summary review.
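Part of that cross-verification can be automated. One cheap check is to flag any number in the summary that never appears in the source, since invented specifications and figures are among the costliest hallucinations. This is a minimal sketch with an assumed number pattern and a hypothetical function name; it compares numbers as literal strings, so "70" and "70%" count as different, and it says nothing about numbers that are present but attached to the wrong fact.

```python
import re

# Digits with optional decimal or thousands separators and an optional percent sign.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def unsupported_numbers(summary, source):
    """Return numbers that appear in the summary but nowhere in the source."""
    source_numbers = set(NUMBER.findall(source))
    return sorted({n for n in NUMBER.findall(summary) if n not in source_numbers})


manual = "Operating pressure is 40 psi at 25 degrees."
summary = "Operating pressure is 45 psi at 25 degrees."
print(unsupported_numbers(summary, manual))  # ['45']
```

An empty list is not proof of accuracy. A non-empty list is a strong hint to open the original before anyone acts on the summary.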

Beyond English: the overlooked challenge of multilingual summarization

Most document summarizer comparison reviews are trapped in English-language bubbles, ignoring the harsh realities faced by multilingual users. In practice, AI summarizers often falter on legal Spanish, technical German, or medical Mandarin documents. One global consultancy found their tool excelled on English and French, but mangled summaries in Polish and Japanese, introducing critical factual errors.

- Abstractive summarization: Generating an original summary in the target language, paraphrasing and reorganizing content, often influenced by the training corpus.

- Extractive summarization: Selecting verbatim sentences from the source, which may not translate idioms or technical phrases accurately.

- Hallucination rate: The frequency (often measured as a percentage) at which summarizers invent non-existent facts; it tends to be higher in non-dominant languages.

Evaluating summarizers across all required languages—using real, domain-specific documents—is as essential as any other test. If multilingual support is mission-critical, prioritize tools with proven accuracy in your target tongues and demand real user case studies.
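If reviewers mark each test summary as clean or containing an invented fact, the per-language hallucination rate is a short tally. The sketch below assumes that human-labeled input format; the function name and the sample labels are hypothetical and are not results from any real tool.

```python
from collections import defaultdict


def hallucination_rate_by_language(reviews):
    """reviews: iterable of (language, has_invented_fact) pairs from human review.

    Returns {language: percent of summaries containing an invented fact}.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for language, has_invented_fact in reviews:
        totals[language] += 1
        if has_invented_fact:
            flagged[language] += 1
    return {lang: round(100 * flagged[lang] / totals[lang], 1) for lang in totals}


# Hypothetical review labels, for illustration only.
labels = [
    ("en", False), ("en", False), ("en", True), ("en", False),
    ("pl", True), ("pl", True), ("pl", False), ("pl", False),
]
print(hallucination_rate_by_language(labels))  # {'en': 25.0, 'pl': 50.0}
```

With only a handful of documents per language the percentages are noisy, so use them to decide where to test more, not to rank vendors.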

Choosing your weapon: how to pick the right document summarizer for your needs

Checklist: what to look for before you commit

Too many teams jump onto the “best of” bandwagon, only to find that their chosen summarizer doesn’t fit their workflow—or worse, puts sensitive data at risk. Before you invest, run through this checklist:

- Does it support all your document formats (PDF, DOCX, web)?

- Can it handle your preferred languages and domain jargon?

- Are privacy and data handling policies transparent and robust?

- Does it integrate seamlessly with your existing tools (browser, email, API)?

- Is the output style customizable (length, format, key points)?

- Are audit logs and feedback mechanisms available?

- Can you test on real-world documents before committing?

- What are the real costs, including team expansion and add-ons?

Always run your own documents through shortlisted tools. Reviews can only go so far—use your unique use cases as the litmus test.
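One way to turn the checklist into a decision is a weighted score: rate each shortlisted tool per criterion after your own trial, weight the criteria by how much they matter to you, and compare. The criteria names, weights, ratings, and tool labels below are all hypothetical placeholders, not measurements of real products.

```python
def weighted_score(ratings, weights):
    """Weighted average of 0-5 ratings; weights need not sum to 1."""
    total_weight = sum(weights.values())
    return sum(ratings[c] * w for c, w in weights.items()) / total_weight


# Hypothetical priorities for a privacy-sensitive team.
weights = {"privacy": 4, "integration": 3, "languages": 2, "cost": 1}

# Hypothetical ratings from your own pilot, on a 0-5 scale.
tool_a = {"privacy": 5, "integration": 4, "languages": 3, "cost": 2}
tool_b = {"privacy": 2, "integration": 5, "languages": 5, "cost": 5}

print(weighted_score(tool_a, weights))  # 4.0
print(weighted_score(tool_b, weights))  # 3.8
```

Tool B wins on three of four criteria yet scores lower, because privacy carries the most weight here. Change the weights and the ranking can flip, which is the point: the "best" tool depends on your priorities.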

For users seeking advanced document analysis, textwall.ai is frequently cited as a trusted resource. Their focus on actionable insights and support for complex documents makes them a strong option for professionals with demanding needs.

Common mistakes and how to dodge them

Nothing derails a rollout like a poorly vetted summarizer. Common missteps include:

- Choosing a tool based on popularity rather than actual fit.

- Overlooking privacy and compliance requirements.

- Falling for shiny feature lists that don’t matter day-to-day.

- Testing only on samples instead of real documents.

To avoid getting burned:

- Set up pilots with varied document types.

- Demand transparency on data usage and server location.

- Validate features you’ll actually use, not “nice to haves.”

- Read critical user reviews, not just vendor testimonials.

Watch for these misconceptions too:

- Assuming all summarizers are interchangeable: Each platform excels in specific domains; there’s no one-size-fits-all.

- Believing “AI” equals perfect accuracy: All tools are fallible, and hallucination rates are real.

- Ignoring integration requirements: A stand-alone tool can disrupt existing workflows.

- Assuming “unlimited” plans have no hidden fees: Always read the fine print.

- Equating free with secure: Free tools may monetize your data.

Ongoing evaluation is essential. As platforms evolve, so do their strengths and pitfalls; keep your review process dynamic.

Feature deep-dive: integration, privacy, support, and beyond

Integration is the silent killer of productivity. According to Tech.co, 2024, the most common reason teams abandon summarizers is poor fit with existing tools—clumsy browser plugins, lack of API support, or rigid export formats. Privacy should be front and center; demand options for on-premises processing or strong encryption where needed. And don’t underestimate the value of responsive support—when a critical summary fails, you need answers fast.

| Platform | Integration | Privacy Controls | Support Channels |
|---|---|---|---|
| Genei | API, browser, PDF | End-to-end encryption | Live chat, email |
| Scholarcy | Browser, PDF | Data retention policies | Email, knowledge base |
| Upword | Web, browser | Basic controls | |
| TLDRthis | Web, browser | Minimal | Email only |
| RecapioGPT | Audio/video | Basic | Web support |

Table 4: Feature matrix showing integration, privacy, and support options for leading summarizers. Source: Original analysis based on WritingMate.ai, 2024; Tech.co, 2024.

Responsive customer support is not optional for business-critical use. If privacy is non-negotiable, opt for vendors with documented compliance and transparent handling.

The future of document summarization: where do we go from here?

LLMs, hallucinations, and the next leap in AI summarization

As large language models become more sophisticated, so do their limitations. Current LLM-based summarizers are astonishingly fluent, but prone to hallucination—especially on ambiguous or poorly scanned documents. Despite advances, even the best tools occasionally invent citations or misinterpret technical language.

The next breakthroughs are likely to come from hybrid models—combining symbolic reasoning with LLMs—and greater user education. As users grow more sophisticated, so must the tools.

Outsourcing reading: the cultural and ethical debate

There’s a deeper shift underway. As more professionals outsource their reading to AI, the very act of knowledge work is changing. Some lament the loss of critical thinking, warning against a “fast food” approach to information. Others say it’s democratizing, freeing up energy for creativity and strategy.

The ethical debate runs hot—who gets to decide what’s important? Is it ethical for algorithms to gatekeep key information, especially in contexts where summaries can alter perceptions or decisions?

"Summaries are power. Who controls them, controls the narrative."

— Taylor, journalist

Beyond summarization: toward actionable document intelligence

Summarizers are evolving into full-blown document intelligence platforms, extracting action items, recommendations, and even risk alerts. Services like textwall.ai are pushing the envelope, bridging summarization, search, and knowledge management—a far cry from the basic “TL;DR” of yesteryear.

To stay ahead, users should demand explainability, customizable prompts, and real audit trails. The convergence of summarization, semantic search, and data visualization is already reshaping how organizations mine value from their mountains of text.

Glossary: decoding the jargon of document summarizer reviews

Key terms you need to know (and why they matter)

- Abstractive summarization: Generates original language, paraphrasing and condensing content like a human editor, rather than just stitching together existing sentences.

- Extractive summarization: Selects passages verbatim from the source, resulting in summaries that may lack flow but offer direct fidelity to the original.

- Large language models (LLMs): Neural networks (like GPT-4) trained on massive text datasets, powering modern summarizers with human-like comprehension.

- Prompt engineering: The art and science of crafting input instructions to steer AI outputs; crucial for getting high-quality, bias-free summaries.

- Hallucination rate: The percentage of summary outputs that contain invented or factually incorrect content.

- Transparency: The degree to which a summarizer reveals its methods, decisions, and omitted content.

- Integration: How easily a summarizer connects to your existing tools, such as PDF editors, browsers, or workflow automation platforms.

Understanding these terms is essential. You wouldn’t buy a car without knowing what “horsepower” means—don’t trust a summarizer unless you grasp its key features and limitations. For example, “extractive” is not “abstractive,” and a high hallucination rate is a red flag, not a badge of creativity.

Appendix: extended comparison tables, checklists, and resources

Quick reference: 2025 document summarizer feature matrix

| Platform | Accuracy | Speed | Customization | Privacy | Integration | Multilingual | Cost |
|---|---|---|---|---|---|---|---|
| Genei | High | Fast | Yes | Strong | Yes | Yes | $9.99 |
| Scholarcy | High | Moderate | Yes | Moderate | Yes | Yes | $7.99 |
| Upword | Moderate | Fast | Some | Moderate | Yes | No | $8.00 |
| TLDRthis | Moderate | Fast | Minimal | Basic | Yes | No | Free/$6.00 |
| RecapioGPT | Moderate | Fast | Minimal | Moderate | Audio/video | Partial | $8.99 |
| Notta | Moderate | Fast | Minimal | Moderate | Audio/video | Partial | $8.25 |
| QuillBot | Moderate | Fast | Yes | Moderate | Yes | Limited | $9.95 |
| Jasper AI | High | Fast | Yes | Moderate | Yes | Yes | $24.00 |
| SMMRY | Low | Fast | Minimal | Minimal | No | No | Free |
| Resoomer | Moderate | Fast | Some | Moderate | Yes | Limited | $8.00 |

Table 5: Extended comparison of major and lesser-known document summarizer platforms, for reference. Source: Original analysis based on WritingMate.ai, 2024; Tech.co, 2024; Trending AI Tools, 2024.

Interpret the table by mapping features to your specific use case—academic, legal, business, or creative. The methodology combines vendor specs, independent user testing, and verified user reviews.

Self-assessment: are you ready for AI-powered summarization?

Checklist

- Do you routinely process large volumes of text?

- Are you comfortable with reviewing AI-generated outputs for critical tasks?

- Have you identified privacy or compliance constraints?

- Do you know which features—customization, integration, multilingual—are must-haves?

- Are you prepared to test on real documents before rollout?

- Do you have budget flexibility for scaling up usage?

- Is your team open to change and ongoing evaluation?

Use this checklist to pinpoint readiness gaps and risks. Smooth transitions to AI summarization depend on clear requirements and realistic expectations.

Further reading and expert resources

For those craving a deeper dive, consider:

- Trending AI Tools: Best AI Summarizer Tools 2024

- Tech.co: Best AI Summarizer Tools

- WritingMate.ai: 9 Best AI Summarizers in 2024 (Tested and Compared)

- Scribbr: Best Summarizer

- Fellow.app: AI Summarizer Tools for Your Team

- textwall.ai: Advanced document analysis and comparison resources

Stay current by subscribing to vendor newsletters, following AI research blogs, and participating in professional forums. Document summarizer technology is evolving fast—continuous learning is your edge.

- Professional forums like r/MachineLearning and AI Stack Exchange

- Industry whitepapers from OpenAI, Google Research, and major consultancies

- Webinars and case studies hosted by leading summarizer vendors

- University research hubs specializing in NLP and document analysis

In the war on information overload, document summarizer comparison reviews are your frontline defense. The right tool won’t just save you time—it might just save your sanity, your client, or your next big deal.

Sources

References cited in this article

- Trending AI Tools (trendingaitools.com)

- Tech.co (tech.co)

- WritingMate.ai (writingmate.ai)

- Scribbr (scribbr.com)

- Fellow.app (fellow.app)

- EHL Insights (hospitalityinsights.ehl.edu)

- OpenText Blogs (blogs.opentext.com)

- Analytics Insight (analyticsinsight.net)

- BestWriting (bestwriting.com)

- National Endowment for the Arts (arts.gov)

- The Chronicle (chronicle.com)

- Forbes (forbes.com)

- OSTI.gov Technical Report (osti.gov)

- PMC Article (pmc.ncbi.nlm.nih.gov)

- ResearchGate Review (researchgate.net)

- GetMagical.com (getmagical.com)

- World Economic Forum (weforum.org)

- Medium: The Black-Box Dilemma (medium.com)

- Harvard Business Review (hbr.org)

- MDPI Survey (mdpi.com)

- The Hill (thehill.com)

- Pew Research Center (pewresearch.org)

- Sembly AI (sembly.ai)

- Xmind Blog (xmind.app)

- CO/AI (getcoai.com)

- AIApps.com (aiapps.com)

- MIT Technology Review (technologyreview.com)

- BigID Blog (bigid.com)

- FieldEffect (fieldeffect.com)

- Medium: Subscription Creep (medium.com)

- Nature Study via DocumentLLM.com (documentllm.com)

- DocHub (dochub.com)

- Papers with Code (paperswithcode.com)

- AgilityPortal (agilityportal.io)

- Academia.edu (academia.edu)

- DocumentLLM.com (documentllm.com)

- Resufit.com (resufit.com)

- IWeaver.ai (iweaver.ai)

- WhyMeridian.com (whymeridian.com)

- AdlibSoftware.com (adlibsoftware.com)

- Gartner (gcom.pdo.aws.gartner.com)

- Friday.app: Best AI Document Summarizers (friday.app)
