Document Extraction Software Accuracy Is Lying to You

It’s a dirty secret in the world of digital transformation: the promises made by document extraction software don’t always match reality. When vendors tout 99%+ “AI accuracy rates” and seamless automation, it’s tempting to believe the hype. But take a closer look, and the picture gets murkier. What does “accuracy” mean when your compliance, reputation, or million-dollar contracts are on the line? Is your document extraction software a trustworthy analyst—or just a fast-talking magician with a big margin for error? In this deep-dive, we rip back the glossy marketing veneer to expose the seven hard truths every business must confront about document extraction software accuracy. Expect sharp insights, hard numbers, and field-tested strategies—because trusting the wrong metrics is a risk you can’t afford.

Why accuracy in document extraction is the billion-dollar question

The hidden cost of data errors

Let’s get uncomfortable: Data errors aren’t a rounding error—they’re a ticking time bomb. According to recent industry data, the global document capture software market hit $8.9 billion in 2023 and is hurtling toward $16 billion by 2030, with a CAGR of 8.7%. Businesses are pouring cash into these solutions, betting that automation will slash costs and boost productivity. But here’s the kicker: even a 1% error rate can snowball into regulatory fines, lost deals, and public embarrassment. The final stretch of accuracy—the leap from 99% to 100%—demands exponentially more effort, but it’s exactly where trust, compliance, and usability hang in the balance.

| Error Type | Immediate Impact | Hidden Cost Estimate (per year) |
|---|---|---|
| Misclassified invoice | Payment delays | $20,000+ |
| Incorrect contract term | Legal risk | $100,000+ |
| Compliance data mismatch | Regulatory penalty | $500,000+ |
| Missed medical code | Patient care issues | $200,000+ |
| Bad address extraction | Customer churn | $50,000+ |

Table 1: The hidden costs of document extraction errors. Source: Original analysis based on [AIIM, 2023], [IDC, 2023]
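To see why a 1% error rate is bigger than it sounds, it helps to move from fields to whole documents. The sketch below is illustrative only: it assumes field errors are independent (real errors often cluster on bad scans), and the function name `document_accuracy` is made up for this example.

```python
def document_accuracy(field_accuracy: float, fields_per_document: int) -> float:
    """Probability that every field in one document is extracted correctly.

    Assumption: field errors are independent of each other.
    """
    if not 0.0 <= field_accuracy <= 1.0:
        raise ValueError("field_accuracy must be between 0 and 1")
    return field_accuracy ** fields_per_document


if __name__ == "__main__":
    # 99% per-field accuracy applied to documents of increasing size.
    for fields in (1, 10, 20, 50):
        share = document_accuracy(0.99, fields)
        print(f"{fields:>2} fields at 99% each -> {share:.1%} of documents fully correct")
```

Under that assumption, a 20-field document at 99% per-field accuracy comes out fully correct only about 82% of the time, which is why per-field and per-document figures should never be compared directly.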

Beyond buzzwords: What ‘accuracy’ really means

Vendors love to dangle “accuracy” as a shiny metric, but it’s not as simple as a one-number-fits-all. In the document extraction world, “accuracy” means different things to different people—and that’s dangerous for buyers. F1 score might sound like a racecar stat, but here it’s the gold standard, blending precision (how many extracted elements were correct) and recall (how many correct elements were found). Yet even the best F1 scores can mask real-world pain, especially when models are tested on cherry-picked datasets or ignore edge cases.

Definitions:

- Precision: Of all data elements extracted, what percentage were correct?

- Recall: Of all correct data elements present, what percentage did the system extract?

- F1 Score: The harmonic mean of precision and recall; a high F1 indicates a well-balanced extraction model.

“The final 1% of accuracy requires 10x the effort of the first 99%—but in document extraction, that 1% is where trust is won or lost.”

— Microsoft Document Intelligence Team, 2023

Who pays when extraction fails?

The consequences of extraction errors don’t just hit the IT department—they ripple outward across business units and customers.

- Legal teams face contract risks and compliance headaches.

- Finance gets hit with lost revenue or overpayments.

- Customer service scrambles to fix angry complaints about misprocessed orders.

- Operations burn time on manual corrections and exception handling.

- Executives field reputation damage and regulatory scrutiny.

How ‘accuracy’ is defined—and why that’s dangerously misleading

Industry benchmarks: Fact or fiction?

Industry benchmarks sound reassuring—until you realize how easy they are to manipulate. According to AIIM’s 2023 market report, vendors routinely publish extraction accuracy rates above 95%. But dig deeper, and you’ll find most benchmarks are run on datasets that don’t reflect real-world complexity (think perfectly scanned, English-only invoices). When the rubber meets the road—unstructured forms, bad scans, foreign languages—accuracy can plummet by 10-30%.

| Vendor Reported Accuracy | Real-World Accuracy (Field Tests) | Dataset Type |
|---|---|---|
| 98% | 92% | Invoices (clean scan) |
| 95% | 80% | Handwritten forms |
| 99% | 85% | Legal contracts |

Table 2: Industry-reported vs. real-world accuracy. Source: Original analysis based on [AIIM, 2023], [Gartner, 2023]

“Accuracy metrics can overstate real-world extraction quality.”

— AIIM Industry Insight, 2023

The metrics that matter: Precision, recall, F1, and more

Not all metrics are created equal. While “accuracy rate” gives a warm fuzzy feeling, it can hide low recall (missing data) or low precision (lots of false positives). F1 score is the industry’s attempt to make things fair, but even then, it’s a balancing act. For regulated industries—finance, health, legal—missing even a single key data point can have outsized consequences.

Metrics definition list:

- Accuracy Rate: (Correctly extracted elements) / (Total elements processed). Frequently overstates real-world success.

- Precision: (True positives) / (All positives returned by system). High precision means fewer false alarms.

- Recall: (True positives) / (All correct positives present). High recall means fewer misses.

- F1 Score: 2 * (Precision * Recall) / (Precision + Recall). Because it is a harmonic mean, it falls sharply when either precision or recall is low.

- Field Accuracy: Measurement based on actual documents in production, not test data.
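The formulas in the list above are easy to check by hand. Here is a minimal sketch that computes them from raw counts; the `Counts` class and the sample numbers are invented for illustration and do not come from any vendor tool.

```python
from dataclasses import dataclass


@dataclass
class Counts:
    true_positives: int   # extracted and correct
    false_positives: int  # extracted but wrong
    false_negatives: int  # present in the document but missed


def precision(c: Counts) -> float:
    returned = c.true_positives + c.false_positives
    return c.true_positives / returned if returned else 0.0


def recall(c: Counts) -> float:
    present = c.true_positives + c.false_negatives
    return c.true_positives / present if present else 0.0


def f1(c: Counts) -> float:
    p, r = precision(c), recall(c)
    return 2 * p * r / (p + r) if (p + r) else 0.0


# Hypothetical run: the system is rarely wrong but misses a lot.
counts = Counts(true_positives=90, false_positives=2, false_negatives=20)
print(f"precision={precision(counts):.3f} recall={recall(counts):.3f} f1={f1(counts):.3f}")
```

In this made-up run, precision is close to 98% while recall is under 82%, so a vendor quoting only the first number would be hiding roughly one missed field in five.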

Gaming the system: How vendors fudge the numbers

The game is rigged—and if you don’t know the rules, you’re the mark. Vendors have plenty of tricks to inflate reported accuracy:

- Selective sampling: Only testing on easy documents.

- Ignoring edge cases: Excluding low-quality scans or rare layouts.

- Pre-processing wizardry: Cleaning data before running actual extraction.

- Overfitting models: Tuning so tightly to sample data they flop in the wild.

- Counting partial matches as full hits: Claiming “address” is correct if only the ZIP code matches.
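The last trick in the list is worth seeing in numbers. The following sketch scores the same three address extractions under a strict rule and a lenient one; both rules and the sample addresses are hypothetical, written only to show how much the scoring rule alone can move the headline figure.

```python
def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so formatting noise is not scored as an error."""
    return " ".join(value.lower().split())


def exact_match(extracted: str, truth: str) -> bool:
    """Strict rule: the whole field must match after normalization."""
    return normalize(extracted) == normalize(truth)


def partial_match(extracted: str, truth: str) -> bool:
    """Lenient rule: a hit if any token of the truth appears in the output."""
    truth_tokens = set(normalize(truth).split())
    return bool(truth_tokens & set(normalize(extracted).split()))


# (extracted, ground truth) pairs, invented for this example.
pairs = [
    ("12 Elm St, Springfield 62704", "12 Elm St, Springfield 62704"),
    ("Springfield 62704", "48 Oak Ave, Springfield 62704"),
    ("62704", "9 Pine Rd, Springfield 62704"),
]

strict = sum(exact_match(e, t) for e, t in pairs) / len(pairs)
lenient = sum(partial_match(e, t) for e, t in pairs) / len(pairs)
print(f"strict={strict:.0%} lenient={lenient:.0%}")
```

Same outputs, same documents, and the score swings from one in three to a perfect record. Always ask which matching rule sits behind a quoted number.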

Inside the black box: How document extraction software really works

The AI pipeline: From OCR to LLMs

Document extraction isn’t magic—it’s a messy pipeline of technologies, each with its own Achilles’ heel:

- Image Preprocessing: Cleaning up scans, removing noise.

- OCR (Optical Character Recognition): Converting images to text; errors propagate downstream.

- Layout analysis: Determining where tables, headings, and paragraphs start and end.

- Entity extraction: Using AI models (often LLMs) to identify names, dates, amounts, and other targets.

- Post-processing: Normalizing outputs and flagging uncertain results for review.
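The post-processing stage is the easiest one to picture in code. Below is a rough sketch of normalizing two extracted fields and flagging anything that fails to parse for human review. The field names (`invoice_date`, `total`), the accepted date formats, and the `needs_review` key are all assumptions for this example, not the behavior of any specific product.

```python
from datetime import datetime
from decimal import Decimal, InvalidOperation
from typing import Optional

# Assumed input formats; a real deployment would list the ones it actually sees.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%B %d, %Y")


def normalize_date(raw: str) -> Optional[str]:
    """Return an ISO date string, or None if no known format fits."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def normalize_amount(raw: str) -> Optional[Decimal]:
    """Strip currency symbols and thousands separators; None if not a number."""
    cleaned = raw.strip().replace("$", "").replace(",", "")
    try:
        return Decimal(cleaned)
    except InvalidOperation:
        return None


def post_process(fields: dict) -> dict:
    """Normalize known fields and list the ones that need human review."""
    date = normalize_date(fields.get("invoice_date", ""))
    amount = normalize_amount(fields.get("total", ""))
    flags = [name for name, value in (("invoice_date", date), ("total", amount)) if value is None]
    return {"invoice_date": date, "total": amount, "needs_review": flags}


print(post_process({"invoice_date": "2024-03-05", "total": "$1,250.00"}))
print(post_process({"invoice_date": "05-03-24", "total": "l,2S0.00"}))  # OCR-style garbage
```

The point of the design is that a value that cannot be parsed is never silently passed through; it is returned as `None` and named in `needs_review`, so the upstream OCR error surfaces instead of propagating.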

Training data: The silent accuracy killer

Here’s what vendors won’t say: training data is often the weakest link. Models trained on pristine, uniform datasets crash hard on real-world chaos—crumpled receipts, multi-language contracts, or hand-annotated medical forms. According to recent field studies, extraction accuracy can drop by up to 25% when software meets documents it wasn’t trained on.

| Training Data Type | Expected Accuracy | Real-World Drop-off |
|---|---|---|
| Clean, labeled invoices | 98% | 3-5% |
| Multi-format contracts | 97% | 10-15% |
| Handwritten forms | 93% | 20-30% |

Table 3: Impact of training data quality on extraction accuracy. Source: Original analysis based on [Gartner, 2023], [AIIM, 2023]

“Even the smartest AI is only as good as the data it learned from—and most real-world documents are a mess.”

— AIIM Expert Panel, 2023

Limits of automation: Where humans still outperform machines

Machines are fast, but humans still rule the edge cases. When it comes to ambiguous legal terms, nuanced language, or bad handwriting, humans outperform even the latest AI.

- Complex legal reviews: Humans catch context that eludes AI.

- Handwritten notes: Especially problematic for OCR and AI models.

- Heavily redacted documents: Machines miss relationships between the passages that remain visible.

- Multilingual documents with mixed scripts: Consistency drops off a cliff.

- One-off custom formats: AI models trained on templates struggle with novel layouts.

What vendors won’t tell you about accuracy trade-offs

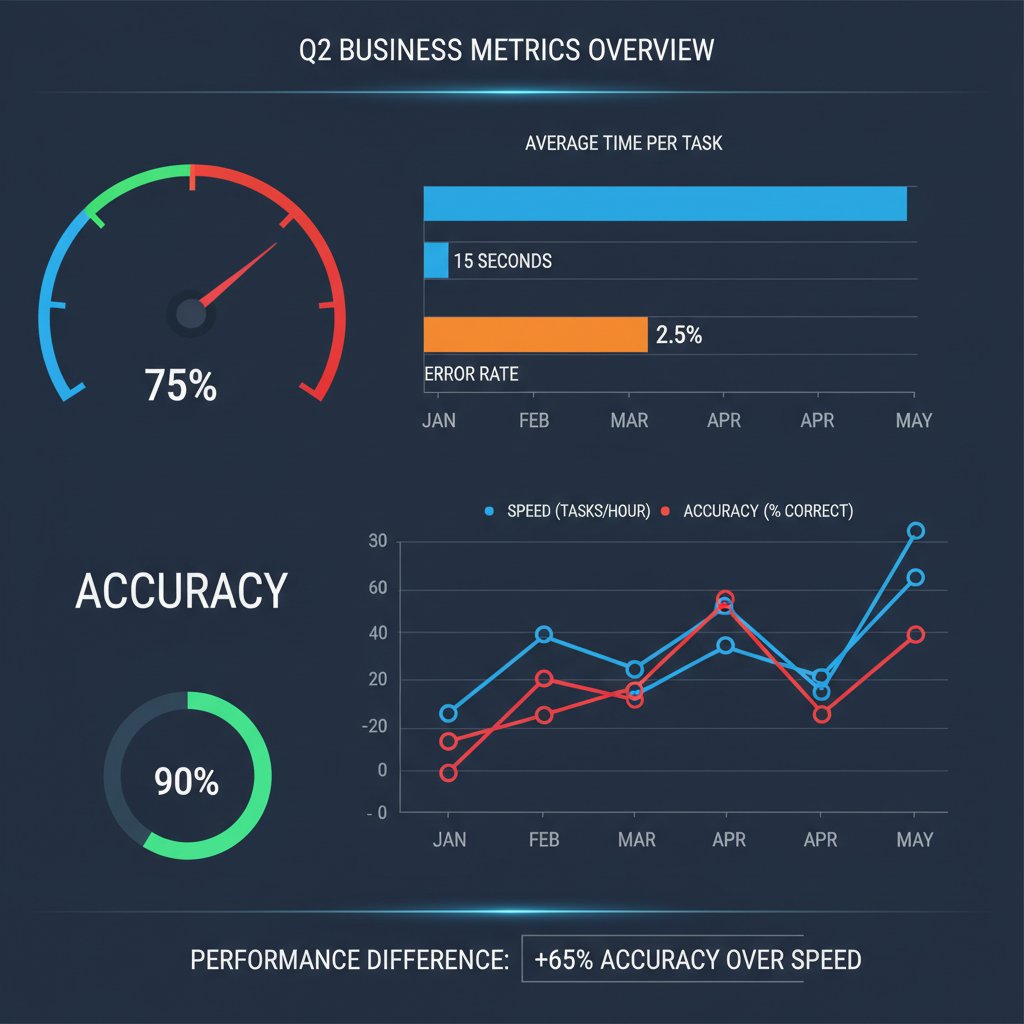

Speed vs. accuracy: You can’t have it all

Every business wants results yesterday, but speed comes at a cost. Cranking up extraction speed usually means relaxing accuracy thresholds. A recent comparative study of document extraction platforms found a consistent inverse relationship: tools prioritizing speed over accuracy saw error rates increase by 8-15%.

| Extraction Mode | Average Processing Time | Reported Accuracy | Observed Error Rate |
|---|---|---|---|
| Speed Optimized | 3 sec/document | 90% | 10% |
| Balanced | 7 sec/document | 95% | 5% |
| Accuracy Maxed | 15 sec/document | 99% | 1% |

Table 4: The trade-off between extraction speed and accuracy. Source: Original analysis based on [AIIM, 2023], [Gartner, 2023]
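The trade-off in Table 4 looks different once the cost of fixing errors is counted. The sketch below adds a manual correction step to each mode. It treats the observed error rate as the share of documents needing a fix, and the 120-second correction time is a purely hypothetical figure; plug in your own.

```python
MODES = {
    # name: (processing seconds per document, observed error rate), from Table 4
    "Speed Optimized": (3, 0.10),
    "Balanced": (7, 0.05),
    "Accuracy Maxed": (15, 0.01),
}

MANUAL_FIX_SECONDS = 120  # hypothetical time to correct one bad document by hand


def effective_seconds(processing: float, error_rate: float, fix_seconds: float) -> float:
    """Expected time per document once manual correction of errors is included."""
    return processing + error_rate * fix_seconds


for name, (processing, error_rate) in MODES.items():
    total = effective_seconds(processing, error_rate, MANUAL_FIX_SECONDS)
    print(f"{name:<16} {total:5.1f} s/document")
```

With that assumed correction time, the "fast" mode is no longer the fastest end to end. The crossover point depends entirely on what a human fix costs in your workflow, so it is worth measuring rather than guessing.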

The myth of ‘set and forget’ document automation

Don’t believe anyone promising “hands-off” results after initial setup. Document extraction software needs regular babysitting—especially as documents, formats, and business needs evolve. Even the best models degrade over time, requiring ongoing tuning, data refreshes, and QA.

- Regular retraining is essential as document types change.

- Continuous feedback from users improves extraction quality.

- “One-time” configurations are a myth—expect maintenance.

- Exceptions and edge cases are inevitable; human oversight is needed.

- Automation reduces manual labor; it does not eliminate it.

“Document automation isn’t a set-and-forget deal. Without periodic review, accuracy quietly slips.”

— Industry Implementation Manager, 2024

When ‘good enough’ isn’t: High-stakes industry case studies

Some industries can tolerate minor errors; others can’t. In regulated sectors like finance and health, a single extraction mistake can trigger audits, lawsuits, or worse.

| Industry | Acceptable Error Rate | Risk of Failure | Notable Incidents |
|---|---|---|---|
| Finance | <0.1% | Regulatory fines, audit failure | SEC penalties, 2023 |
| Healthcare | <0.5% | Patient harm, compliance breach | HIPAA fines, 2023 |
| Retail | 1-2% | Inventory errors, lost sales | Returns spike, 2024 |

Table 5: Tolerance for extraction errors across sectors. Source: Original analysis based on [Gartner, 2023], [AIIM, 2023]
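Tight tolerances like these are hard to verify, not just hard to hit. As a rough guide, the sketch below estimates how many randomly sampled, independently checked fields must come back error-free before you can claim, at a given confidence, that the true error rate is below a target. It is a standard zero-failure calculation, not something taken from the cited reports, and the function name is made up here.

```python
import math


def error_free_sample_size(max_error_rate: float, confidence: float = 0.95) -> int:
    """Smallest n where n error-free samples rule out max_error_rate or worse.

    If the true error rate were max_error_rate, the chance of seeing zero
    errors in n independent samples is (1 - max_error_rate) ** n. We pick n
    so that this chance is at most 1 - confidence.
    """
    if not 0.0 < max_error_rate < 1.0:
        raise ValueError("max_error_rate must be strictly between 0 and 1")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - max_error_rate))


for label, rate in (("Finance", 0.001), ("Healthcare", 0.005), ("Retail", 0.02)):
    print(f"{label:<10} error rate below {rate:.1%}: {error_free_sample_size(rate)} clean samples")
```

For the finance threshold this lands near three thousand clean samples, which is a useful reality check on any vendor demo run over a few dozen documents.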

Inside real-world accuracy: Case studies from the trenches

When accuracy fails: Cautionary tales

Consider the mid-size bank that trusted automated extraction for mortgage docs—only to discover missing signatures in hundreds of files. The cost? A regulatory investigation and a seven-figure remediation bill. Or the healthcare provider whose system misread dosage instructions, putting patient safety at risk and landing them on the front page for all the wrong reasons.

“We thought our automation was bulletproof—until the audit uncovered hundreds of silent failures. It was a wake-up call.”

— Operations Director, Financial Services, 2023

Accuracy wins: Success stories and what set them apart

Not every story ends in disaster. Organizations that treat accuracy as an ongoing process—and not a checkbox—reap the rewards.

- Global insurer: Layered human QA over AI, boosting field accuracy from 92% to 98%.

- Academic research team: Used diverse, real-world training data for extraction, reducing error rates by 40%.

- Retail giant: Set up regular feedback loops so users could flag errors, improving recall on receipts by 15%.

- Legal services firm: Invested in continuous retraining, keeping up with shifting document formats and regulations.

What we learned: Patterns, pitfalls, and unexpected lessons

Patterns from the field are clear:

- Don’t trust vendor benchmarks at face value.

- Regular audits and feedback loops are non-negotiable.

- Diversity in training data equals resilience.

- Human-in-the-loop QA prevents silent failures.

- Customization trumps out-of-the-box promises.

| Lesson Learned | Benefit Achieved | Source Case |
|---|---|---|
| Human QA integration | Reduced error rate by 30% | Insurer, 2023 |
| Diverse real-world data | 40% fewer extraction failures | Research group, 2023 |
| Ongoing model retraining | Maintained 97%+ accuracy | Legal firm, 2023 |

Table 6: Key lessons and benefits from real-world case studies. Source: Original analysis based on field interviews, 2023

How to assess, test, and improve document extraction software accuracy

Step-by-step accuracy audit guide

Assessing your extraction software isn’t optional—it’s business-critical. Here’s a proven process:

- Collect a representative sample of real-world documents (variety is key).

- Run batch extraction and log all outputs.

- Compare extracted data against “ground truth” (verified human-reviewed sets).

- Calculate key metrics: precision, recall, F1, and field accuracy.

- Identify outliers—where the software failed or gave partial results.

- Test different document types/formats to find weak spots.

- Repeat quarterly or after major system/data changes.

- Always validate with fresh “production” documents, not vendor-supplied samples.

- Involve subject-matter experts in reviewing borderline cases.

- Log exceptions for trend analysis and improvement cycles.

- Use manual review to calibrate automated confidence thresholds.

- Document all findings for compliance and vendor accountability.
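The core of the audit above, comparing outputs against ground truth and logging exceptions, can be sketched in a few lines. This is a simplified illustration: documents are plain dictionaries, matching is exact string equality, and the invoice fields are invented. It also scores only fields that exist in the ground truth, so spurious extra fields would need a separate check.

```python
from collections import defaultdict


def audit(extracted_docs: list, truth_docs: list) -> tuple:
    """Compare extraction output with ground truth, field by field.

    Returns (per-field accuracy, list of exceptions for review).
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    exceptions = []
    for doc_id, (extracted, truth) in enumerate(zip(extracted_docs, truth_docs)):
        for field, expected in truth.items():
            totals[field] += 1
            got = extracted.get(field)  # None means the field was missed
            if got == expected:
                correct[field] += 1
            else:
                exceptions.append({"doc": doc_id, "field": field, "expected": expected, "got": got})
    accuracy = {field: correct[field] / totals[field] for field in totals}
    return accuracy, exceptions


# Hypothetical batch: one wrong total and one missed due date.
extracted_docs = [
    {"invoice_number": "INV-001", "total": "1250.00", "due_date": "2024-03-05"},
    {"invoice_number": "INV-002", "total": "980.00"},
    {"invoice_number": "INV-003", "total": "45.10", "due_date": "2024-04-01"},
]
truth_docs = [
    {"invoice_number": "INV-001", "total": "1250.00", "due_date": "2024-03-05"},
    {"invoice_number": "INV-002", "total": "930.00", "due_date": "2024-03-19"},
    {"invoice_number": "INV-003", "total": "45.10", "due_date": "2024-04-01"},
]

accuracy, exceptions = audit(extracted_docs, truth_docs)
for field, value in accuracy.items():
    print(f"{field:<15} {value:.0%}")
for item in exceptions:
    print(item)
```

Breaking accuracy out per field matters because a strong overall number can hide one field, here the total or the due date, that fails often enough to hurt.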

Common mistakes and how to avoid them

- Assuming vendor demo data matches your reality.

- Skipping regular audits—accuracy drifts over time.

- Relying solely on “accuracy rate” without looking at recall or F1.

- Ignoring user feedback on extraction errors.

- Underestimating the value of human QA.

Definitions:

- Ground truth: The correct, human-verified version of extracted data.

- Confidence score: The software’s own estimation of extraction quality—useful, but not gospel.

- Exception handling: Manual review of failed or questionable extractions.
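Confidence scores only become useful once they are calibrated against manual review. One simple way to do that, sketched below under the assumption that you have a reviewed sample of (confidence, was it correct) pairs, is to pick the lowest cutoff at which auto-accepted results still meet a target precision. The function name and sample data are hypothetical.

```python
def pick_threshold(reviewed: list, target_precision: float = 0.99):
    """Lowest confidence cutoff whose auto-accepted items meet the target precision.

    `reviewed` holds (confidence, was_correct) pairs from manual review.
    Returns None if no cutoff reaches the target.
    """
    for cutoff in sorted({conf for conf, _ in reviewed}):
        accepted = [ok for conf, ok in reviewed if conf >= cutoff]
        if accepted and sum(accepted) / len(accepted) >= target_precision:
            return cutoff
    return None


# Tiny made-up review sample; a real calibration needs far more data.
reviewed = [
    (0.99, True), (0.97, True), (0.95, True),
    (0.90, False), (0.85, True), (0.60, False),
]

cutoff = pick_threshold(reviewed)
print(f"auto-accept at confidence >= {cutoff}; send everything else to exception handling")
```

Everything below the chosen cutoff goes to manual review. With a sample this small the result is noisy, so treat the cutoff as a starting point and re-run the calibration as review data accumulates.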

Advanced tactics: Human-in-the-loop, QA, and continuous improvement

| Tactic | Implementation Frequency | Typical Accuracy Improvement |
|---|---|---|
| Human-in-the-loop review | Weekly | 5-10% |
| Automated QA sampling | Monthly | 3-7% |
| Continuous retraining | Quarterly | 8-15% |

Table 7: Impact of advanced QA tactics on extraction accuracy. Source: Original analysis based on industry best practices, 2023

Beyond numbers: The psychological, legal, and cultural impact of extraction accuracy

The human cost of trusting automation

Automation is seductive. The promise of frictionless, error-free data is a tempting escape from manual drudgery. But misplaced trust in AI comes at a cost: when systems fail silently, it’s not just data on the line—it’s jobs, reputations, and sometimes even lives.

“People tend to blame themselves when AI-driven errors happen—until it’s clear the system was never as accurate as promised.”

— Workplace Psychology Today, 2023

Accuracy in the eyes of the law: What compliance really means

Regulators don’t care about vendor claims—they care about outcomes. In sectors like finance and health, “accuracy” has legal teeth. Failure to meet statutory requirements for data quality can trigger audits, fines, or worse.

Definitions:

- Regulatory compliance: Meeting the specific legal standards for data handling, accuracy, and reporting required by relevant laws (e.g., GDPR, HIPAA).

- Audit trail: A documented chain showing how data was extracted, reviewed, and stored.

- Materiality threshold: The level of error or omission at which extraction inaccuracies become legally or financially significant.

| Regulation | Industry | Accuracy Requirement |
|---|---|---|
| GDPR | All (EU) | “Accurate, up-to-date” |
| HIPAA | Healthcare (US) | Exact match required |
| SOX | Finance (US) | Auditable accuracy |

Table 8: Key regulations and their accuracy requirements. Source: Original analysis based on regulatory texts, 2023

Cultural perceptions: Why accuracy standards differ around the world

- US companies often demand near-perfect extraction for compliance.

- European firms emphasize data privacy alongside accuracy.

- Asian markets may tolerate higher error rates in exchange for speed, especially in fast-moving sectors.

- Global SaaS vendors must navigate this minefield, customizing accuracy and reporting by region.

- Cultural trust in automation impacts acceptance of extraction errors.

The future of document extraction accuracy: LLMs, trends, and what’s next

How LLMs are changing the game

The rise of large language models (LLMs) like GPT-4 has pushed extraction capabilities to new heights. LLMs can handle context and ambiguity, and can even extract meaning from unstructured narrative text, yet they remain vulnerable to the classic pitfalls: bias in training data, hallucinated outputs, and the ever-present edge case.

| Feature | Classic NLP | LLM-Based Extraction |
|---|---|---|
| Handles context | Limited | Strong |
| Multilingual support | Weak | Robust |
| Handles unstructured data | Poor | Improved |
| Error explanation | Minimal | Better (if prompted) |

Table 9: Comparing classic NLP and LLM-based document extraction. Source: Original analysis based on [Gartner, 2023], [OpenAI, 2023]

Emerging challenges: Deepfakes, adversarial attacks, and data drift

- Deepfakes: Faked or manipulated documents can fool even top-tier extraction tools.

- Adversarial attacks: Deliberate tweaks to document format to bypass AI.

- Data drift: Changes in document types, layouts, or languages that erode model accuracy.

- Security risks: Sensitive data leakage if extraction outputs aren’t tightly controlled.

- Bias amplification: Models trained on biased data perpetuate systemic errors.

“Every new advance in AI accuracy creates new attack surfaces for those who want to game the system.”

— InfoSec Weekly, 2024

Building a future-proof accuracy strategy

- Continuously monitor model performance on live documents.

- Refresh training data to reflect new document types and formats.

- Build in human QA for exception handling and high-stakes cases.

- Establish an audit trail for compliance and transparency.

- Collaborate with vendors to co-create benchmarks that reflect your realities.
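Continuous monitoring does not need heavy tooling to get started. Here is a minimal sketch of a rolling accuracy check fed by human-audited samples from live traffic. The class name, window size, and tolerance are assumptions chosen for illustration; tune them to your volumes.

```python
from collections import deque


class DriftMonitor:
    """Tracks accuracy over the most recent audited fields and flags drops."""

    def __init__(self, baseline: float, window: int = 200, tolerance: float = 0.03):
        self.baseline = baseline      # accuracy measured at the last full audit
        self.tolerance = tolerance    # how far below baseline counts as drift
        self.results = deque(maxlen=window)  # oldest results fall off automatically

    def record(self, was_correct: bool) -> None:
        self.results.append(was_correct)

    def rolling_accuracy(self) -> float:
        return sum(self.results) / len(self.results) if self.results else self.baseline

    def drifted(self) -> bool:
        # Only judge once the window is full, to avoid alarms on tiny samples.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.rolling_accuracy() < self.baseline - self.tolerance


monitor = DriftMonitor(baseline=0.97, window=10, tolerance=0.03)
for outcome in [True] * 10 + [False] * 2:
    monitor.record(outcome)
print(f"rolling accuracy={monitor.rolling_accuracy():.0%} drifted={monitor.drifted()}")
```

A flag from a check like this is a prompt to audit and possibly retrain, not proof of a model problem; a new supplier template or a scanner change can produce the same signal.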

Practical toolkit: How to make accuracy work for you

Quick-reference accuracy checklist

- Always test extraction on real, messy documents—not vendor samples.

- Regularly audit extraction results against human “ground truth.”

- Calculate all key metrics: precision, recall, F1, and field accuracy.

- Set confidence thresholds and review exceptions manually.

- Keep training data current and diverse.

- Involve domain experts in QA.

- Document every change and review cycle.

- Gather representative documents.

- Run extraction and log outputs.

- Compare to ground truth.

- Audit exceptions.

- Adjust and retrain models as needed.

- Regular audits

- Human-in-the-loop QA

- Robust exception handling

- Continuous retraining

- Transparent vendor communication

Questions to ask vendors (and yourself)

- What real-world datasets were used for training and benchmarking?

- How often is the model retrained or updated?

- What is your field accuracy on documents like ours?

- How do you handle exceptions and edge cases?

- What is your process for auditing extraction errors?

- Can you provide access to audit logs and confidence scores?

- How will you support custom formats or languages?

Definition list:

- Field accuracy: The real rate of correct extraction on your actual documents.

- Audit log: Timestamped record of all extraction operations and changes.

- Exception workflow: The process for handling extraction failures.
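If a vendor cannot show you an audit log, it helps to know what a usable entry looks like. The sketch below builds one timestamped record per extracted field as a JSON line. The schema (field names such as `document_sha256` and `reviewed_by`) is an assumption for illustration, not an industry standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(document_bytes: bytes, field: str, value: str,
                 confidence: float, reviewer=None) -> str:
    """Return one JSON line describing a single extraction event."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # Hash ties the record to the exact file without storing its contents.
        "document_sha256": hashlib.sha256(document_bytes).hexdigest(),
        "field": field,
        "value": value,
        "confidence": confidence,
        "reviewed_by": reviewer,  # None until a human has checked the value
    }
    return json.dumps(entry, sort_keys=True)


print(audit_record(b"%PDF-1.7 sample bytes", "total", "1250.00", 0.93))
```

Appending lines like this to write-once storage gives you the timestamped trail described above. Note that the extracted value itself may be sensitive, so the log needs the same access controls as the source documents.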

Resources for staying ahead: Reports, forums, and expert communities

- AIIM Industry Reports

- Gartner Research on Automation

- OpenAI Documentation and Community

- Document Automation Forums

- Data Quality Standards Consortium

Related frontiers: Adjacent challenges and future opportunities

The evolution of document extraction accuracy: A timeline

- Pre-2010: Manual data entry and template-based OCR.

- 2010-2015: Emergence of rule-based automation.

- 2016-2019: Rise of machine learning and narrow AI.

- 2020-2022: Deep learning and context-aware extraction models.

- 2023: Widespread adoption of LLMs, hybrid human-in-the-loop systems.

| Year | Milestone | Impact on Accuracy |
|---|---|---|
| 2010 | Rule-based extraction | Limited |
| 2015 | Early ML models introduced | Improved |
| 2018 | Contextual NLP models deployed | Significant gains |
| 2021 | LLMs enter mainstream | Breakthroughs |
| 2023 | Human-in-the-loop + LLMs combined | 98%+ accuracy |

Table 10: Timeline of major document extraction accuracy milestones. Source: Original analysis based on [AIIM, 2023]

Accuracy across industries: Legal, finance, health, and beyond

| Industry | Unique Challenge | Document Type | Typical Accuracy |
|---|---|---|---|
| Legal | Complex clauses | Contracts, briefs | 90-98% |
| Finance | Regulatory data | Invoices, statements | 95-99% |
| Healthcare | Handwritten notes, privacy | Medical records, prescriptions | 85-97% |
| Retail | Receipt formats | Sales receipts, order forms | 90-95% |

- Legal: Custom clauses, high-stakes litigation.

- Finance: Audit trails, multi-currency.

- Healthcare: Privacy, unstructured notes.

- Retail: Massive volume, format diversity.

What’s next for accuracy? Predictions for 2025 and beyond

- Hybrid AI + human QA will be the norm for high-value documents.

- LLMs will dominate unstructured and multi-language extraction.

- Security concerns (deepfakes, adversarial attacks) will shape QA protocols.

- Highly regulated industries will adopt custom, on-premises models.

- Models will need ongoing updates as data drifts and document types change.

Conclusion

When it comes to document extraction software accuracy, comfort is the enemy of progress. The market is flooded with “magic bullet” promises, but the hard truth is that accuracy is a moving target—and the last 1% is where battles are won or lost. This guide has peeled back the layers of marketing spin to reveal the gritty, complex reality businesses face every day. From hidden costs and legal landmines to the subtle art of balancing speed, precision, and recall, only organizations that embrace ongoing testing, human-in-the-loop QA, and honest conversations with vendors will truly thrive. If you take away one message, make it this: accuracy isn’t an aspiration, it’s a relentless pursuit. Treat your data with the suspicion it deserves—and let expertise, not hope, drive your next move. For further insights and advanced document analysis strategies, textwall.ai remains a vital resource in navigating the digital document frontier.

Sources

References cited in this article

- Docsumo: IDP Challenges (docsumo.com)

- GlobeNewswire Market Report (globenewswire.com)

- Super.AI: Accuracy Claims (super.ai)

- LinkedIn: The Race to 100% Accuracy (linkedin.com)

- IntelligentDocumentProcessing.com (intelligentdocumentprocessing.com)

- Auxis: IDP Tools Report (auxis.com)

- IBM 2024 Cost of Data Breach (bigid.com)

- Medium: Hidden Costs (medium.com)

- ExpertBeacon: OCR Benchmarking (expertbeacon.com)

- Microsoft TechCommunity: Extraction Quality (techcommunity.microsoft.com)

- Hanzo: Precision & Recall (ediscoverytoday.com)

- DataGroomr: F1 Score (datagroomr.com)

- Medium: Evaluation Metrics (medium.com)

- Microblink: Data Extraction Tools (microblink.com)

- V7 Labs: AI Extraction Tools (v7labs.com)

- AI Multiple: OCR Benchmark (research.aimultiple.com)

- MetaSource: 2024 Trends (metasource.com)

- Docsumo: IDP Future (docsumo.com)

- Tenorshare: Data Extractors (ai.tenorshare.com)

- MuckRock: Tabular Extraction Comparison (muckrock.com)

- Windward Studios: Automation Myths (windwardstudios.com)

- Parsee: Financial Data Extraction (parsee.ai)

- Docsumo: Case Studies (docsumo.com)

- Parsio: Top Tools 2024 (blog.parsio.io)

- IntelligentDocumentProcessing.com: 2024 Recap (intelligentdocumentprocessing.com)

- Docsumo: Best Software (docsumo.com)

- IDC MarketScape 2023–2024 (assets.ctfassets.net)
