Document Extraction Accuracy Is Lying to You — Here’s the Proof

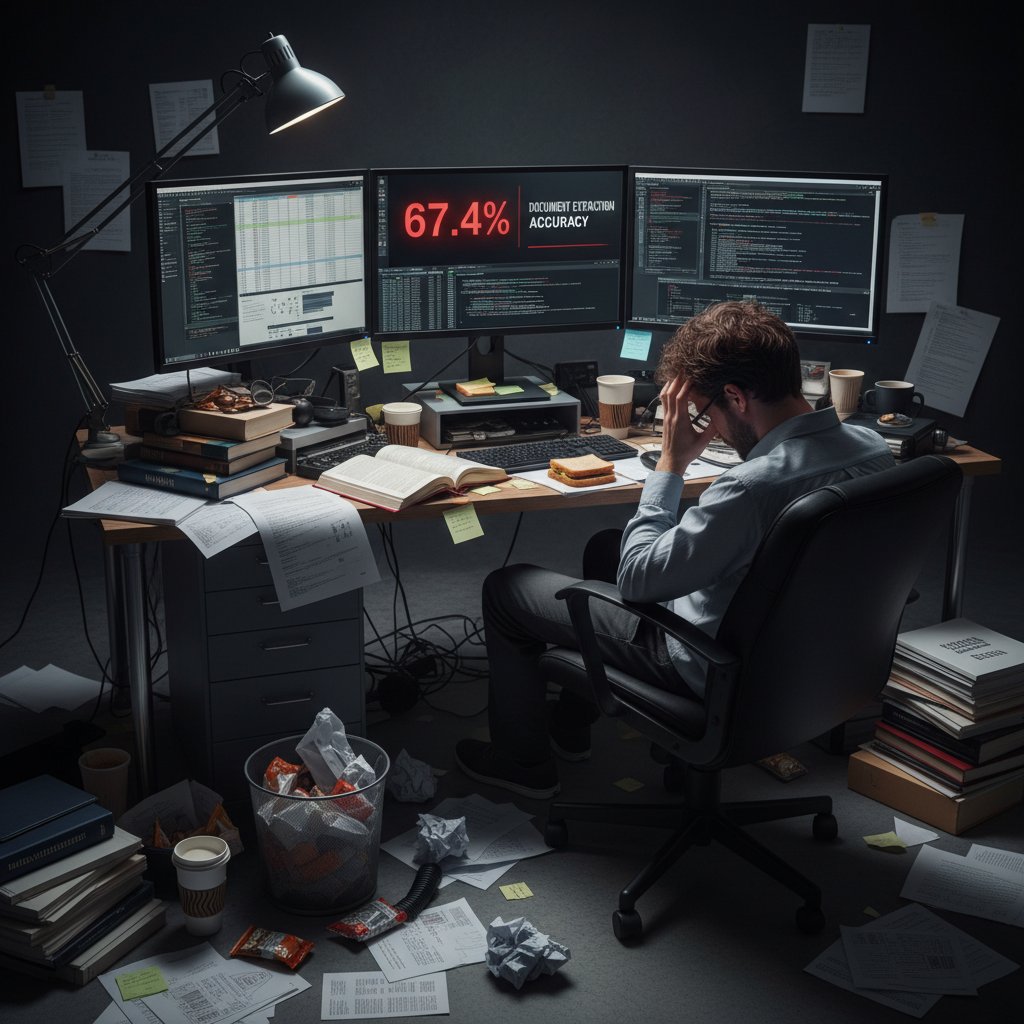

You think your document extraction is accurate? Think again. In the world of AI-powered document processing, vendors boast stratospheric accuracy rates—99%, 99.9%, some even whisper about perfection. But here’s the brutal, often-ignored truth: for every “flawless” extraction, there’s a crisis brewing somewhere—a compliance slip, a financial mishap, an entire team left cleaning up an invisible mess. Document extraction accuracy isn’t just a marketing metric; it’s the thin line between operational efficiency and outright chaos. This article cuts through the hype, exposes the industry’s best-kept secrets, and arms you with the knowledge to survive—and thrive—when the numbers lie.

Document extraction accuracy isn’t a trivial concern for data geeks—it’s the silent backbone of every high-stakes industry, from finance to healthcare to government. Inaccurate extraction doesn’t just cost time; it can mean regulatory penalties, massive financial loss, or, in the worst cases, irreversible reputational damage. Welcome to the messy, high-stakes reality of automated document analysis, where the difference between “almost perfect” and “good enough” can upend everything you thought you knew about digital transformation. Let’s rip the veil off the industry’s favorite myth.

The high-stakes world of document extraction accuracy

Why accuracy matters more than you think

The consequences of inaccurate document extraction are rarely visible until they spiral out of control. Picture this: a single misread contract clause leads to a multimillion-dollar compliance penalty, or a misclassified patient record triggers a life-threatening delay. These scenarios aren’t rare outliers—they’re everyday risks in data-driven industries. According to TechHQ, 2024, errors in AI document processing have directly contributed to financial losses and regulatory actions across multiple sectors, underlining that accuracy is not just a technical metric but a boardroom concern.

When the numbers are off, the fallout isn’t just a few extra hours of cleanup. Financial services firms face fines stretching into the millions for reporting inaccuracies. Healthcare providers risk patient safety and regulatory censure due to misfiled information. In legal environments, a single misinterpretation can undermine entire cases. The cost isn’t just in remediation; it’s in lost trust, broken processes, and, most damaging of all, shattered credibility.

“One bad extraction, and you’re cleaning up for months.” — Alex, documentation lead, MetaSource, 2024

Reputational damage lingers long after technical errors are fixed. Clients and regulators remember the organization that failed to deliver. Worse, opportunities are missed—contracts lost, insights overlooked, or analytics crippled—because decision-makers quietly started doubting their data. This is why document extraction accuracy is the ultimate high-wire act in digital transformation.

The hidden costs of getting it wrong

Behind every botched data extraction lies a trail of hidden costs. Operationally, teams get bogged down in manual corrections, reruns, and compliance firefighting. Financially, the numbers are staggering: studies compiled by Scoop.market.us, 2024 estimate that extraction errors cost global businesses billions annually, across fines, lost productivity, and missed revenue.

| Industry | Avg. Annual Loss from Extraction Errors | Source |
|---|---|---|
| Financial Services | $800M | TechHQ, 2024 |
| Healthcare | $600M | Scoop.market.us, 2024 |
| Government | $340M | TechHQ, 2024 |
| Legal | $410M | Kili Technology, 2024 |

Table 1: Estimated annual financial losses from document extraction errors, by industry.

Source: Original analysis based on TechHQ, 2024, Scoop.market.us, 2024, and Kili Technology, 2024

Beyond the ledger, there’s a deeper impact: reputational fallout. According to Kili Technology, 2024, organizations reporting major extraction errors have seen client churn rates spike by up to 30%, with long-term trust erosion that’s nearly impossible to reverse. The digital age amplifies every mistake—think headline-generating data leaks or compliance breaches that become public scandals.

Consider the infamous 2023 incident where a multinational bank’s automated extraction system misclassified hundreds of sensitive documents, triggering regulatory investigations and a client exodus. The incident reverberated for months, showing that document chaos can become front-page news overnight.

Red flags to watch out for:

- Extraction tools promising unrealistic “100% accuracy”

- Lack of transparency about error rates in real-world deployments

- Absence of human-in-the-loop processes for exceptions

- No audit trail or explainable AI for disputed outputs

- Vendor reluctance to share benchmark data from diverse industries

Document chaos: A cautionary tale

Let’s get real: document chaos is not a theoretical risk—it’s a lived reality. In one notorious case, a healthcare provider deployed a new extraction engine to process tens of thousands of patient files. Within weeks, vital information was being routed to the wrong departments, billing codes mismatched, and compliance officers were in full-blown crisis mode.

How did this unravel? The extraction model, tuned for perfect lab conditions, collapsed under the messiness of real-world documents—handwritten notes, inconsistent formats, and scanned PDFs. The human review team was cut to save costs, leaving no safety net. It took months of forensic backtracking, system re-training, and manual corrections to regain control.

The lesson is clear: document extraction accuracy isn’t just a number on a dashboard—it’s the difference between seamless operations and operational disaster. Before you trust any AI solution, you need to know not just its best day, but its worst failure.

Unpacking the 'accuracy' myth

What vendors don’t tell you

In the wild west of AI marketing, “accuracy” is more slogan than science. Vendors cherry-pick their best-case numbers—lab conditions, clean data, handpicked benchmarks—and gloss over the messiness of real deployments. The result? A serious reality gap between what’s advertised and what you’ll experience in the trenches of document processing.

Key terms demystified:

- Accuracy: The proportion of correct predictions (true positives and true negatives) in the total predictions made. In extraction, it means the share of correctly extracted data points versus all attempts.

- Precision: The percentage of correctly extracted fields among all fields the system claims to have extracted. High precision means fewer false positives.

- Recall: The percentage of relevant fields extracted among all relevant fields present in the document. High recall means fewer false negatives.

Vendors will trumpet “over 99% accuracy,” but rarely specify whether that’s precision, recall, or a vague composite. For example, one widely publicized vendor claim touted near-perfect extraction, only to reveal in the fine print that their test set excluded low-quality scans and handwritten notes—the very things that trip up real-world deployments.

“If you don’t ask the right questions, you’ll never get the right answers.” — Priya, senior data scientist, LinkedIn, 2024

Real-world versus lab results

There’s a painful truth to acknowledge: vendor benchmarks rarely survive contact with reality. Extraction engines that boast 99.9% accuracy under tightly controlled conditions can see performance plunge when faced with genuine document variability, noisy scans, and complex layouts.

| Use Case | Published Accuracy | Actual Field Accuracy (2024) | Source |
|---|---|---|---|
| Invoices (standardized) | 99.5% | 95.2% | MetaSource |
| Healthcare records | 99.0% | 92.3% | Kili Tech |
| Legal contracts | 98.7% | 89.8% | TechHQ |

Table 2: Comparison of vendor-published document extraction accuracy rates vs. actual rates across use cases.

Source: Original analysis based on MetaSource, 2024, Kili Technology, 2024, TechHQ, 2024.
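A drop from 99.5% to 95.2% can look minor until it is restated as an error rate. The following is a minimal Python sketch that does that conversion using only the figures from Table 2; the function name `error_multiple` is illustrative and does not come from any vendor tool.

```python
# (use case, published accuracy %, observed field accuracy %) copied from Table 2
rows = [
    ("Invoices (standardized)", 99.5, 95.2),
    ("Healthcare records", 99.0, 92.3),
    ("Legal contracts", 98.7, 89.8),
]


def error_multiple(published: float, observed: float) -> float:
    """Ratio of the observed error rate to the error rate implied by the published figure."""
    if published >= 100.0:
        raise ValueError("a published accuracy of 100% implies zero errors; ratio undefined")
    return (100.0 - observed) / (100.0 - published)


for use_case, published, observed in rows:
    print(
        f"{use_case}: {100 - observed:.1f}% errors observed vs "
        f"{100 - published:.1f}% advertised "
        f"({error_multiple(published, observed):.1f}x)"
    )
```

Read this way, the gap in the table amounts to roughly 8 to 10 times as many field errors as the published numbers imply, which is the figure a review team actually has to staff for.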

Sources of error are everywhere: smudged scans, handwritten annotations, field position drift, multilingual content, and images embedded in text. Consider three scenarios:

- Finance: A bank’s automated extraction tool aces structured account statements but misreads 7% of variable-format loan documents, leading to reporting headaches.

- Healthcare: An AI model accurately extracts numerical lab results but fumbles freeform doctor notes, missing critical context.

- Legal: Extraction works well for standard NDAs but falters on bespoke contracts with unusual layouts and amendments, requiring expensive human review.

The perils of 'accuracy obsession'

Ironically, chasing the highest possible extraction accuracy can backfire. Piling on ever more sophisticated models and rules can create brittle, opaque pipelines that are impossible to audit or fix when things go wrong. Sometimes, aiming for “good enough” accuracy—paired with rapid exception handling—delivers better real-world outcomes.

Hidden benefits of ‘good enough’ extraction accuracy:

- Speed: Faster processing for the majority of routine documents, with manual review reserved for true exceptions.

- Cost-efficiency: Lower development and operational costs by not over-engineering for diminishing returns.

- Flexibility: Easier to adapt pipelines as document types or regulations evolve.

- Transparency: Simpler systems are easier to audit and defend in compliance reviews.

Alternative KPIs—such as time-to-resolution, exception rate, and user satisfaction—can reveal more about extraction value than a single “accuracy” number ever will. The next logical question: how do you actually measure document extraction accuracy in a meaningful way?

How is document extraction accuracy measured?

Precision, recall, and F1 score decoded

Let’s break down the real metrics behind the marketing gloss. Accuracy alone is a blunt instrument; true performance is measured using precision, recall, and the F1 score, which is the harmonic mean of the two. Here’s how each works in practice:

- Precision: Imagine your extraction tool identifies 100 fields, but only 90 are correct. Precision = 90%.

- Recall: If the documents actually contain 120 fields to extract and your tool gets 90 of them right, recall = 90 / 120 = 75%.

- F1 Score: This balances precision and recall: F1 = 2 × (Precision × Recall) / (Precision + Recall).
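To make the arithmetic concrete, here is a small Python sketch that plugs the numbers from the bullets above (100 fields claimed, 90 correct, 120 actually present) into the three formulas. The function and parameter names are illustrative choices, not part of any particular extraction product.

```python
def precision_recall_f1(true_positives: int, claimed: int, relevant: int):
    """Return (precision, recall, f1) from raw field counts.

    true_positives: fields extracted correctly
    claimed:        fields the tool says it extracted
    relevant:       fields actually present in the documents
    """
    precision = true_positives / claimed if claimed else 0.0
    recall = true_positives / relevant if relevant else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


p, r, f1 = precision_recall_f1(true_positives=90, claimed=100, relevant=120)
print(f"precision={p:.1%} recall={r:.1%} f1={f1:.1%}")
# prints: precision=90.0% recall=75.0% f1=81.8%
```

Note that the F1 score of about 81.8% sits below the simple average of 90% and 75% (82.5%): the harmonic mean pulls toward the weaker of the two numbers.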

| Metric | What It Measures | Best Use-Case | Weaknesses |
|---|---|---|---|
| Precision | Correct extractions over all claimed extractions | Legal, regulatory data | Can hide missed fields |
| Recall | Correct extractions over all fields actually present | Healthcare, research | Can hide false positives |
| F1 Score | Balance of precision and recall | Any with lots of variability | Sensitive to data imbalance |

Table 3: Feature matrix comparing document extraction accuracy metrics and their ideal use-cases.

Source: Original analysis based on Kili Technology, 2024

Auditing your extraction pipeline isn’t rocket science—but it demands rigor. Here’s a typical process:

- Establish ground truth: Collect a representative sample of real documents, and manually annotate the correct fields for extraction.

- Run extraction: Process the sample set using your tool.

- Compare outputs: Measure which fields were correctly extracted (true positives), missed (false negatives), or incorrectly added (false positives).

- Calculate metrics: Use the formulas above to compute precision, recall, and F1.

- Iterate: Use findings to tune your model or process, then repeat.

Steps to audit extraction results for accuracy:

- Gather a statistically valid sample of documents from actual production data.

- Create meticulous human-reviewed annotations as the benchmark (ground truth).

- Run your document extraction tool on the sample set.

- Compare outputs and categorize each result (true positive, false positive, false negative).

- Calculate precision, recall, and F1 scores for each field type.

- Document findings with error breakdowns, not just averages.

- Adjust processing pipeline based on error analysis.
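The compare-and-categorize steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: documents are represented as plain dicts of field name to value, ground truth and output lists are paired in the same order, values are compared by exact string match (no normalization), and a wrong value is counted as both a false positive and a false negative. The field names and sample values are made up for the example.

```python
from collections import defaultdict


def audit(ground_truth, extracted):
    """Tally true positives, false positives, and false negatives per field name."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for truth, output in zip(ground_truth, extracted):
        for field in sorted(truth.keys() | output.keys()):
            expected, got = truth.get(field), output.get(field)
            if got is not None and got == expected:
                counts[field]["tp"] += 1
            else:
                if got is not None:
                    counts[field]["fp"] += 1  # wrong or spurious value emitted
                if expected is not None:
                    counts[field]["fn"] += 1  # correct value was not delivered
    return dict(counts)


def metrics(c):
    """Precision, recall, and F1 for one field's tallies."""
    claimed, relevant = c["tp"] + c["fp"], c["tp"] + c["fn"]
    precision = c["tp"] / claimed if claimed else 0.0
    recall = c["tp"] / relevant if relevant else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical two-document sample: human-annotated truth vs. tool output.
truth = [
    {"invoice_no": "A-17", "total": "120.00", "due_date": "2024-05-01"},
    {"invoice_no": "B-02", "total": "75.50", "due_date": "2024-06-15"},
]
output = [
    {"invoice_no": "A-17", "total": "120.00"},  # due_date missed
    {"invoice_no": "B-02", "total": "15.50", "due_date": "2024-06-15", "po_number": "999"},
]

counts = audit(truth, output)
report = {field: metrics(c) for field, c in counts.items()}
for field, m in sorted(report.items()):
    print(field, counts[field], {k: round(v, 2) for k, v in m.items()})
```

Reporting per field rather than as one blended number is the point: in this sample `invoice_no` is perfect while `total` and `due_date` each recover only half of their values, a difference a single average would hide.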

Ground truth: Defining your gold standard

Ground truth is your gold standard—the absolute, human-verified “right answer” for what should be extracted. But establishing it is anything but straightforward. Creating reliable ground truth means wrestling with ambiguities, document chaos, and human error.

Three approaches:

- Finance: Teams of analysts review a random sample of loan agreements, flagging every extractable data point, then double-reviewing for accuracy.

- Healthcare: Multi-tiered review with clinical experts validating extracted fields from complex patient histories, ensuring no vital clinical context is lost.

- Legal: Consensus-based annotation for a sample of contracts, with multiple legal professionals resolving disputes over key terms or clauses.

Human review remains the most reliable way to create ground truth, but it’s resource-intensive and subject to its own biases. Automated checks can speed up the process, but they’re only as good as the rules they’re given. Striking the right balance between human and machine is an art—and a constant battle.

Beyond numbers: Qualitative accuracy

Not all extraction errors are created equal. Sometimes, a perfectly accurate extraction on paper still fails in context—misreading subtle intent or overlooking nuances that only a seasoned expert would catch. This is where qualitative accuracy comes in.

- Semantic accuracy: The degree to which extracted data faithfully reflects the underlying meaning or intent, not just the literal text.

- Contextual accuracy: How well the extraction interprets a field based on its role or significance in the broader document context.

Overlooking qualitative errors can have outsized impacts: a contract field that’s technically correct but semantically wrong can lead to litigation; a patient note stripped of nuance can result in misdiagnosis; a regulatory field pulled out of context can mean hefty fines.

All of this sets the stage for the next leap in document extraction: the evolution from legacy OCR systems to the cutting edge of machine learning and large language models.

State of the art: From OCR to LLMs

OCR's legacy and limitations

Optical Character Recognition (OCR) has been the backbone of automated document extraction for decades. Its legacy is long—and checkered. Early OCR systems were plagued by poor accuracy, struggling with anything but the cleanest, typewritten pages.

| Year | Extraction Technology | Breakthrough/Limitations |
|---|---|---|
| 1980 | Rule-based OCR | Basic text extraction, high error rate on poor-quality scans |
| 2000 | Template-based extraction | Better for standard forms, brittle with layout changes |
| 2010 | ML-based OCR | Improved adaptability, but limited by training data |
| 2020 | AI-powered IDP with NLP | Handles unstructured data, but struggles with context and semantics |
| 2023 | Early LLM integration | Boosts semantic extraction, high resource demand |

Table 4: Timeline of document extraction technologies and their breakthroughs/limitations.

Source: Original analysis based on MetaSource, 2024

Legacy OCR comes with challenges—blurry scans, handwritten notes, and layout shifts can wreck accuracy. For example, a legal department relying on 2010s-era OCR found its system missed over 20% of key clauses in new contract templates post-merger, leading to costly rework.

Modern AI-based approaches, especially those integrating NLP and ML, deliver real gains but are not immune to real-world messiness. They bridge the gap, but context—the holy grail—remains elusive.

AI and machine learning: The new frontier

Machine learning (ML) has rewritten the accuracy playbook. Instead of rigid rules, ML models learn from data, adapting to wild document variability and extracting meaning beyond mere text. According to MetaSource, 2024, integrated ML and NLP approaches now drive the most advanced IDP systems.

Three techniques reign:

- Supervised learning: Models trained on annotated data, learning to extract precise fields from diverse formats.

- Transfer learning: Leveraging pre-trained models (like BERT) for domain adaptation, boosting performance on industry-specific documents.

- Active learning: Continuously improving extraction by prioritizing samples the model is uncertain about for human review—a feedback loop that steadily raises accuracy.

Real-world results? A financial firm cut manual review times by 60% using active learning. A healthcare provider slashed error rates in patient intake forms by integrating transfer learning models tailored to medical language.

How LLMs are rewriting the rules

Large Language Models (LLMs) like GPT-4 and their industry siblings are upending document extraction again. These models bring unprecedented semantic understanding, reading documents more like a human than a robot. Their impact? Extracting meaning from dense, unstructured text, summarizing documents, and flagging anomalies with nuance.

For instance, a market research firm using LLM-based extraction cut report analysis time in half and uncovered key insights previously buried in text walls. In legal workflows, LLMs accurately highlighted risky clauses, outperforming legacy systems by 18% in recall.

However, LLMs are not a panacea. They’re resource-intensive, sometimes hallucinate, and still require human oversight for edge cases and compliance. As the tech evolves, case studies continue to emerge—illustrating both breakthrough wins and sobering limitations.

Case files: When document extraction failed (and when it saved the day)

Epic fails: Lessons from the field

High-profile failures are the cautionary tales every data leader dreads. In 2023, a major insurer’s botched document extraction led to thousands of claim denials and a public relations nightmare. The news cycle was merciless—screenshots of garbled data became memes, and regulatory probes followed.

What went wrong? The system—optimized for speed, not edge cases—couldn’t handle a sudden influx of handwritten claims. Manual reviews had been scaled back. The fallout?

- Initial errors triggered mass denials.

- Customer outrage hit social media.

- Regulators demanded a full audit.

- Months of remediation and compensation followed.

Success stories: What worked, what didn’t

But not all stories are disasters. Three anonymized wins:

- A government agency processed 80,000 grant applications with a hybrid AI/human workflow, achieving 97% field-level accuracy and meeting tight compliance deadlines.

- A healthcare provider used NLP-powered extraction for patient records, reducing manual review by 50% and error rates by 30%.

- A global bank paired active learning with targeted human review, driving accuracy from 92% to 98% in high-risk document types.

Alternative approaches—layered validation, continuous feedback loops, and regular KPI-driven audits—proved decisive. The lesson? No tool alone guarantees success. Details matter.

“The difference is always in the details.” — Jordan, data quality architect

Industry snapshots: Healthcare, finance, government

Healthcare: Extraction accuracy is critical—one wrong field can compromise patient safety. According to Scoop.market.us, 2024, the average accuracy for structured healthcare documents is 92%, but drops to 80% for semi-structured cases.

Finance: Regulatory scrutiny means every data point counts. Missed fields can trigger audits, fines, or worse. Top banks now demand field-level accuracy above 95% for high-risk processes.

Government: Extraction empowers transparency and efficiency, but legacy systems and document diversity drag accuracy down. Continuous improvement and hybrid approaches are the norm.

| Industry | Average Field-Level Accuracy (2025) | Compliance Impact | Source |
|---|---|---|---|
| Healthcare | 88-92% | High (patient safety) | Scoop.market.us, 2024 |
| Finance | 93-97% | Very high (regulation) | MetaSource, 2024 |
| Government | 84-91% | Medium (transparency) | TechHQ, 2024 |

Table 5: Industry benchmarks for document extraction accuracy, 2025.

Source: Original analysis based on Scoop.market.us, 2024, MetaSource, 2024, TechHQ, 2024

Improving your extraction accuracy: Practical steps

Auditing your pipeline: Step-by-step

Regular audits are the lifeblood of accurate extraction. Complacency is dangerous—errors creep in through shifting document types, model drift, and unforeseen scenarios.

Detailed checklist for auditing extraction accuracy:

- Identify all document types and field variations in your workflow.

- Assemble a representative sample set for each major document group.

- Annotate ground truth with multi-person human review.

- Run extraction and collect raw outputs.

- Calculate precision, recall, and F1 metrics at field level.

- Analyze error patterns by document type and field complexity.

- Investigate root causes: model drift, OCR quality, layout anomalies.

- Document corrective actions and track improvements over time.

- Schedule quarterly re-audits with updated samples.

- Engage frontline teams for qualitative feedback on edge cases.

Common mistakes? Sampling only “easy” documents, relying on averages instead of field breakdowns, and skipping qualitative review. Avoid these to build bulletproof pipelines.
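A related trap is treating the accuracy measured on a small audit sample as exact. The sketch below uses the Wilson score interval, a standard way to put a confidence range around an observed proportion, to show how much uncertainty a given sample size leaves. The sample sizes are invented for illustration, and `z=1.96` assumes a 95% confidence level.

```python
import math


def wilson_interval(correct: int, total: int, z: float = 1.96):
    """Wilson score interval for an observed proportion correct/total."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half


# Same 95% observed field accuracy, two hypothetical audit sample sizes.
small = wilson_interval(correct=190, total=200)
large = wilson_interval(correct=1900, total=2000)
print(f"n=200:  {small[0]:.1%} to {small[1]:.1%}")
print(f"n=2000: {large[0]:.1%} to {large[1]:.1%}")
```

The larger sample yields a visibly narrower range around the same 95% figure, which is one practical argument for sizing audit samples per document group instead of pooling a handful of easy files.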

As you audit, consider when and how to integrate human-in-the-loop strategies—because the robots aren’t ready to fly solo just yet.

Human-in-the-loop: Not dead yet

Despite the AI hype, human review remains essential—even in the most advanced pipelines. Exception handling, ambiguous cases, and compliance-critical workflows all benefit from a human touch.

Three real-world examples:

- A legal firm uses junior analysts to double-check AI-extracted contract terms for high-stakes deals.

- A hospital deploys expert nurses to validate auto-extracted patient data on critical fields only.

- A logistics company leverages part-time reviewers to sample-check randomly selected shipments, catching rare but costly errors.

Unconventional uses for human reviewers:

- Training data enrichment: Humans label obscure formats to boost model coverage.

- Edge case escalation: Reviewers flag patterns the AI consistently struggles with, triggering model retraining.

- Compliance auditing: Humans document AI decisions for regulatory filings and dispute resolution.

Hybrid approaches aren’t free—there’s a cost, but it’s often dwarfed by the cost of unchecked errors. The game is to find the sweet spot where AI automates the routine, and humans catch the rest.
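One common way to find that sweet spot is a routing rule: auto-accept fields the model is confident about, and send everything else, plus any field designated as critical, to a reviewer. The Python sketch below illustrates the idea. The `ExtractedField` type, the 0.90 threshold, and the list of critical fields are all assumptions made for the example; real values would come from your own audit data and risk policy.

```python
from dataclasses import dataclass


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # model-reported score in [0, 1]; assumed to be available


CRITICAL_FIELDS = {"dosage", "allergy"}  # hypothetical: always reviewed by a human
AUTO_ACCEPT_THRESHOLD = 0.90             # hypothetical: tune against audit results


def needs_review(field: ExtractedField) -> bool:
    """Critical fields always go to a human; others only when confidence is low."""
    return field.name in CRITICAL_FIELDS or field.confidence < AUTO_ACCEPT_THRESHOLD


def route(fields):
    """Split fields into (auto_accepted, review_queue)."""
    accepted, review = [], []
    for field in fields:
        (review if needs_review(field) else accepted).append(field)
    return accepted, review


fields = [
    ExtractedField("patient_name", "J. Doe", 0.99),
    ExtractedField("dosage", "20 mg", 0.97),        # critical, reviewed regardless
    ExtractedField("visit_date", "2024-0S-12", 0.62),  # low confidence
]
accepted, review = route(fields)
print("auto-accepted:", [f.name for f in accepted])
print("human review: ", [f.name for f in review])
```

The share of fields landing in the review queue is the exception rate, so the same rule that protects accuracy also gives you a direct handle on review cost.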

Choosing the right tool (and questions to ask vendors)

Selecting an extraction tool is a minefield of bold claims and slippery metrics. Arm yourself with the right questions:

- What is the real-world extraction accuracy for MY document types? Show the evidence.

- How are accuracy numbers measured—precision, recall, F1, or something else?

- How is the solution audited and updated over time?

- What human-in-the-loop options exist for exceptions?

- Is there an explainable AI component for disputed extractions?

- Can you share benchmarks from similar industries/clients?

| Selection Criteria | Why It Matters | Typical Vendor Response | Best Practice |
|---|---|---|---|
| Real-world accuracy | Avoids cherry-picked numbers | “Up to 99.9% (lab)” | Request field-level data |
| Audit process | Ensures ongoing reliability | “Annual review” | Continuous, quarterly audits |
| Human review options | Catches edge cases | “Optional” | Built-in and customizable |
| Compliance features | Legal defensibility | “Meets GDPR/SOX” | Ask for audit documentation |
| Model retraining | Adapts to new document types | “On request” | Automated or feedback-driven |

Table 6: Decision matrix for evaluating document extraction tools.

Source: Original analysis based on vendor documentation and industry best practices.

When you’re ready to compare, treat textwall.ai as a reliable knowledge resource—a site that curates up-to-date research and real-world insights on document extraction accuracy, without the vendor whitewash.

The future of document extraction accuracy

Generative AI and LLMs: Disruption or hype?

Generative AI and LLMs have set the document extraction world ablaze, promising new levels of accuracy and semantic understanding. Their ability to “read between the lines” can, in some cases, outstrip traditional models, especially for unstructured and multilingual content.

But with power comes new risk—LLMs require massive compute resources, can hallucinate answers, and demand robust oversight. They’re not a plug-and-play fix. The wisest adopters experiment judiciously, layering LLMs atop proven pipelines and measuring with the same old-fashioned rigor as any other tool.

What to expect in the next two years:

- LLMs integrated with niche industry ontologies, improving field-level extraction in specific domains

- AI pipelines combining multiple model types for layered validation

- Expanded use of active learning and feedback loops to handle drift and data evolution

- More transparent audit trails and explainable AI to satisfy regulators

Ethics, bias, and the accuracy dilemma

Accuracy isn’t just a technical challenge—it’s an ethical one. Extraction models can bake in bias, propagating errors that disproportionately impact marginalized groups or introduce systemic risk.

Three ethical dilemmas:

- A mortgage lender’s extraction tool underperforms on non-standard forms, disproportionately impacting applicants from underrepresented communities.

- A healthcare system’s extraction misses nuanced patient history, skewing care recommendations.

- A government agency’s automated extraction mislabels immigration documents, triggering unfair denials.

Strategies for mitigating risk:

- Conduct regular bias audits using diverse, real-world data samples.

- Build transparency into every pipeline layer—document every decision and exception.

- Involve cross-functional teams (legal, compliance, DEI) in pipeline design and review.

Regulation is tightening—GDPR, CCPA, and sector-specific mandates mean the accuracy debate is now a legal, as well as a technical, imperative.

What to watch for in 2025 and beyond

As new benchmarks are set and old pipelines are overhauled, staying ahead of accuracy challenges means vigilance, learning, and relentless improvement.

Priority checklist for staying ahead:

- Audit extraction accuracy quarterly, not annually.

- Integrate human-in-the-loop at every exception point.

- Measure KPIs beyond accuracy—time-to-resolution, exception rate, user satisfaction.

- Track regulatory changes and update pipelines proactively.

- Cultivate a culture of data skepticism—trust, but verify.

Don’t just chase numbers—chase understanding. Is your extraction pipeline battle-tested, or a ticking time bomb? The answer will define your digital destiny.

Supplementary: Adjacent topics you can’t ignore

Data privacy and security in extraction pipelines

Accuracy lives next door to privacy and security. A highly accurate pipeline is worthless if it leaks sensitive data or exposes you to cyberattack. Recent headlines are full of organizations burned by careless document handling—where data was “accurately” extracted straight into a breach.

Example 1: A finance firm’s secure, encrypted pipeline kept customer data safe, even during a massive extraction update.

Example 2: A rival’s shortcut-laden pipeline saw extracted documents dumped in unencrypted cloud storage—resulting in a public breach, regulatory fines, and reputational carnage.

Security tips:

- Encrypt data at every pipeline step, in transit and at rest.

- Limit access on a need-to-know basis with robust role controls.

- Regularly audit logs for anomalous extraction or access patterns.

- Never process sensitive documents on unsecured or public infrastructure.

The role of feedback loops and active learning

Feedback loops are the secret weapon for sustained extraction accuracy. By capturing and acting on errors, exceptions, and user corrections, pipelines “learn” over time—outpacing static systems.

Active learning workflow:

- Model flags uncertain extractions for human review.

- Reviewers correct or confirm outputs.

- System retrains using corrected samples, improving future accuracy.

- Continuous integration of feedback, error tracking, and reporting.
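The first three steps of that workflow can be sketched with least-confidence sampling: rank predictions by model confidence and spend a fixed review budget on the least certain ones. This is a simplified Python illustration; the document IDs, confidence scores, and review budget are invented, the human correction is simulated, and the retraining call is left as a comment because it depends entirely on the model in use.

```python
def select_for_review(predictions, budget):
    """Return the IDs of the `budget` least-confident predictions.

    predictions: dict mapping document ID to model confidence in [0, 1].
    """
    ranked = sorted(predictions, key=predictions.get)  # lowest confidence first
    return ranked[:budget]


# Hypothetical batch of model outputs.
predictions = {"doc-001": 0.98, "doc-002": 0.41, "doc-003": 0.77, "doc-004": 0.55}

# Step 1: the model's most uncertain extractions are flagged.
queue = select_for_review(predictions, budget=2)

# Step 2: reviewers correct or confirm (simulated here with placeholder labels).
corrections = {doc_id: f"reviewed label for {doc_id}" for doc_id in queue}

# Step 3: corrected samples join the training set; retraining would follow.
training_labels = {}
training_labels.update(corrections)
# retrain(model, training_labels)  # placeholder: model-specific, not shown

print("sent to review:", queue)
print("training set size:", len(training_labels))
```

Spending the review budget on uncertain cases, instead of on a random sample, is what lets each round of human effort teach the model something it does not already know.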

Outcomes? Organizations report up to 30% faster adaptation to new document types and a marked reduction in recurring errors. These improvements have ripple effects—lower costs, higher compliance, and happier users.

Regulatory trends shaping accuracy expectations

Regulations are raising the bar for extraction accuracy across industries. From GDPR in Europe to HIPAA in healthcare and SOX in finance, compliance requirements demand rigorous documentation of accuracy metrics and audit trails.

Regional comparisons:

- EU: Stricter reporting and transparency mandates.

- US: Patchwork of sector-specific rules, but rising expectations.

- APAC: Growing focus on data localization and oversight.

Regulatory compliance checklist:

- Maintain detailed logs of extraction decisions and accuracy metrics.

- Ensure all data processing is auditable and explainable.

- Stay current with evolving regional rules and update pipelines accordingly.
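As an illustration of the first checklist item, the Python sketch below builds one audit record per extracted field and serializes it as a line of JSON. The record layout, field names, and model version string are assumptions made for the example; no regulation prescribes this exact schema, and whether the extracted value itself may be stored in a log depends on your privacy requirements.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(document_bytes: bytes, field: str, value: str, confidence: float,
                 model_version: str, reviewed_by: Optional[str] = None) -> dict:
    """Build one audit entry tying an extracted value to its source document and model."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # Hash identifies the exact input without copying the document into the log.
        "document_sha256": hashlib.sha256(document_bytes).hexdigest(),
        "field": field,
        "value": value,
        "confidence": confidence,
        "model_version": model_version,
        "reviewed_by": reviewed_by,  # None means the value was auto-accepted
    }


record = audit_record(
    document_bytes=b"%PDF-1.7 example invoice bytes",
    field="total",
    value="120.00",
    confidence=0.93,
    model_version="extractor-2024.06",  # hypothetical version label
)
line = json.dumps(record, sort_keys=True)  # one JSON object per line, append-only
print(line)
```

Recording the model version alongside each decision is what makes a disputed extraction explainable later, since it shows which system produced the value and whether a person confirmed it.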

For up-to-date insights and compliance resources, consult textwall.ai, a trusted industry knowledge hub.

Conclusion

Document extraction accuracy isn’t a vanity metric—it’s the backbone of trust, compliance, and operational excellence in the digital era. The brutal truth? No solution, however advanced, is immune to the chaos of real-world documents. Chasing perfect numbers can be a costly distraction; the winners are those who combine machine intelligence with human judgment, ruthless auditing, and relentless adaptability. As the stakes rise and the tech evolves, only the vigilant will thrive. Want to transform your extraction strategy from fragile to bulletproof? Start questioning the numbers, demand real-world evidence, and never stop learning. The next document disaster—or breakthrough—might be just a click away.

Sources

References cited in this article

- Scoop.market.us: IDP Statistics (scoop.market.us)

- Kili Technology: 2024 Guide (kili-technology.com)

- LinkedIn: Race to 100% Accuracy (linkedin.com)

- MetaSource: IDP Trends 2024 (metasource.com)

- TechHQ: The Future of Data Extraction (techhq.com)

- KPMG: Cyber Incidents 2023 (assets.kpmg.com)

- Medium: Hidden Costs of Document Extraction (medium.com)

- Evolution AI: Invoice Data Extraction (evolution.ai)

- LinkedIn: 5 Misconceptions About Document Retrieval (linkedin.com)

- Toolify: Debunking Myths of Intelligent Document Processing (toolify.ai)

- Hyperscience: Document Processing in 2023 (hyperscience.com)

- Cradl.ai: Guide to Document Data Extraction (cradl.ai)

- JMIR: Medical Text Extraction with LLMs (jmir.org)

- AIMultiple: OCR Accuracy Benchmark 2025 (research.aimultiple.com)

- ExpertBeacon: OCR Accuracy (expertbeacon.com)

- Microsoft: Evaluating AI Document Extraction (techcommunity.microsoft.com)

- Vellum.ai: LLMs vs. OCR (vellum.ai)

- Fetch-A-Set Benchmark (arxiv.org)

- UBIAI NLP: OCR Overview 2024 (medium.com)

- arXiv: LMDX Paper (arxiv.org)

- Airesearchblogs: LLMs in Document Extraction (airesearchblogs.com)

- DocumentsFlow: Document Extraction Revolution (documents-flow.com)

- Capella Solutions: Agentic AI (capellasolutions.com)

- Sirion: Financial Services Case Studies (sirion.ai)

- Auxis: Top 2024 IDP Tools (auxis.com)

- V7 Labs: Best Data Extraction Tools (v7labs.com)

- MetaSource: What Is Human-in-the-Loop? (metasource.com)

- Frontiers: Editorial on HITL (frontiersin.org)

- Indicodata.ai: Future of IDP (indicodata.ai)

- Docsumo: 2025 IDP Trends (docsumo.com)
