Document Analysis Tools for Researchers Who Refuse to Drown in PDFs

The world is drowning in data, and researchers are at the center of a storm most people don’t see coming. From clinical trial reports to centuries-old manuscripts, the deluge of documents is relentless, merciless, and absolutely unforgiving. Document analysis tools for researchers aren’t just a convenience—they’re a survival kit in an era where information overload has evolved from a nuisance to a full-blown existential threat. If you’re still skimming PDFs manually, you’re already behind. The raw truth: the difference between thriving and drowning in 2025’s research landscape has never been sharper, and the stakes have never been higher. This guide slices through the hype, exposes uncomfortable truths, and delivers 11 real strategies to reclaim your research edge—with facts, not wishful thinking.

Why document analysis matters more than ever in the age of AI

The new reality for modern researchers

Picture this: knowledge workers now spend around a third of their day just searching for information, according to recent data from TechTarget, 2024. The volume of global data is expected to hit a staggering 175 zettabytes by the end of 2025. That’s not hyperbole—it’s the ground-zero reality for modern research. Each new wave of data, every digital archive, and every scholarly dump becomes one more layer of complexity that’s impossible to navigate without the right tools. Document analysis isn’t about squeezing a few extra hours out of your week; it’s about clawing back the very possibility of meaningful research in a system designed to overwhelm.

"Most knowledge workers are suffocating under an avalanche of digital information. The right tools aren’t a luxury—they’re oxygen."

— Dr. Jane Hamilton, Information Science Specialist, TechTarget, 2024

Information overload: crisis or opportunity?

It’s tempting to see information overload as a crisis—and it is, for those unprepared. But for researchers who embrace advanced document analysis tools, it’s also a rare opportunity. Here’s why:

- Accelerated discovery: Researchers using AI summarizers publish up to 30% faster, as reported by Metapress, 2025. When you can scan, slice, and synthesize a mountain of papers in hours, not weeks, you don’t just catch up—you leap ahead.

- Selective focus: Smart platforms like textwall.ai and Docugami let you zero in on the 5% of content that actually matters, instead of drowning in irrelevant details.

- Uncovering hidden insights: Tools such as NVivo and Kapiche aren’t just counting words; they’re revealing patterns and connections that manual review would never surface.

- Collaboration at scale: Platforms like Bit.ai and ResearchFlow break down silos and let teams hammer through document piles together, boosting both speed and depth.

- Multilingual advantage: With translation and explanation features (e.g., Kami AI, UPDF AI), researchers can process global sources without hitting linguistic roadblocks.

How AI is rewriting the research rulebook

The rulebook isn’t just being edited; it’s being shredded and rewritten by AI. Traditional boundaries between qualitative and quantitative analysis blur as machine learning techniques become standard fare even for humanities research. Instead of reading 200 pages, you let an assistant like Monica’s AI Summarizer produce a first-pass summary in minutes. With document analysis tools for researchers, your role shifts from parser to strategist: less drudgery, more critical thinking.

Consider this: according to Metapress, 2025, AI-powered summarization tools allow researchers to publish up to 30% faster than those relying solely on manual methods. In the past, qualitative data coding took days—now platforms like ATLAS.ti and NVivo can categorize themes from transcripts in hours. Moreover, quantitative tools such as Kapiche crunch through survey data to extract patterns that might otherwise slip through the cracks.

| Feature | Manual Analysis | AI-Powered Tools | Impact on Workflow |
|---|---|---|---|
| Time to summarize 100 pages | 8-12 hours | 20 minutes | About 96-97% reduction |
| Error rate | 2-5% (human fatigue) | 0.5-1% (machine consistency) | Greater accuracy |
| Depth of insight | Surface-level (time-limited) | Multilayered, cross-referenced | Deeper findings |
| Collaboration efficiency | Siloed, email-heavy | Real-time, cloud-based | Seamless teamwork |

Table 1: Comparison of manual vs. AI-powered document analysis tools for researchers. Source: Original analysis based on TechTarget, 2024 and Metapress, 2025.

From manual slog to machine intelligence: the evolution of document analysis

A brief history: paper cuts to algorithms

Once, document analysis for researchers meant red pens, highlighters, and a desk buried under paper. The digital transition brought PDFs, but the task remained Sisyphean—manual searches, CTRL+F, endless scrolling. Fast-forward to the present, and the script has flipped. Algorithms now spot what the human eye misses, and AI can summarize, tag, and extract insight faster than you can say “literature review.”

- Handwritten notes and index cards: The pre-digital era’s only option for organizing findings.

- Basic digital search: The PDF revolution introduced keyword searches but little true analysis.

- Manual coding in spreadsheets: Early attempts at structure, still labor-intensive.

- Rise of text mining: NLP tools begin processing unstructured data.

- AI-powered platforms: True automation, summarization, and insight generation.

Milestones in AI-driven document analysis

Modern document analysis tools for researchers didn’t appear overnight—they’re built on decades of progress. The introduction of NLP (Natural Language Processing) enabled large-scale understanding of unstructured text. Machine learning models began to categorize and classify documents with growing accuracy. Integration of multimodal learning and explainable AI—now central in platforms like Adobe Acrobat AI—boosted transparency and trust, addressing the “black box” problem.

| Milestone | Year | Impact on Research |
|---|---|---|
| Keyword search in digital docs | 1990s | Faster, but shallow search |
| NLP integration | 2000s | Semantic understanding |
| Automated summarization | 2010s | Rapid synthesis |
| Cross-document comparison | 2020s | Holistic analysis |
| AI workflow hubs (e.g., Bit.ai) | 2020s | End-to-end collaboration |

Table 2: Key milestones in document analysis tools for researchers. Source: Original analysis based on Metapress, 2025 and TechTarget, 2024.

"AI document analysis isn’t about replacing researchers—it's about amplifying what they can do."

— Dr. Michael Lee, Data Science Lead, Metapress, 2025

What most researchers still get wrong

Despite the surge in smart tools, many researchers cling to old habits:

- Overreliance on basic search: Assuming that keyword hits equal insight is a rookie mistake. True analysis requires context, which keyword matching alone cannot supply.

- Ignoring qualitative nuances: Quantitative tools are powerful, but neglecting the “why” behind data leads to shallow, misleading conclusions.

- Manual copy-paste syndrome: Even with AI, researchers still manually transfer data between apps—wasting precious time.

- Underestimating integration: Disconnected tools mean lost productivity. Comprehensive platforms like textwall.ai streamline the process.

The lesson? Don’t treat document analysis tools as glorified search bars. Their power lies in integration, context, and automated insight—not just digital convenience.

Decoding the tech: how document analysis tools work under the hood

Natural language processing and machine learning basics

At the core of advanced document analysis tools for researchers is a blend of Natural Language Processing (NLP) and machine learning (ML). NLP is what lets a tool “understand” human language—identifying topics, extracting entities, and even detecting sentiment. ML models, meanwhile, learn from vast data sets to spot patterns, classify content, and predict relevance.

- Entity extraction: NLP algorithms identify key entities, phrases, and concepts within unstructured text, surfacing what matters most.

- Sentiment analysis: ML processes measure the emotional tone of documents, which is useful for everything from product reviews to political discourse analysis.

- Topic clustering: Advanced ML finds clusters of related ideas, allowing researchers to map discourse landscapes rather than just count keywords.
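To make the first two ideas concrete, here is a minimal sketch in plain Python. It is not how any platform named in this article works internally: the word lists, function names, and sample abstract are all hypothetical, and real tools rely on trained models rather than hand-written lexicons. Frequency counting stands in for key-term extraction, and a tiny lexicon stands in for sentiment scoring.

```python
import re
from collections import Counter

# Hypothetical, tiny word lists for illustration only; real tools use trained models.
STOPWORDS = {"the", "a", "an", "and", "of", "in", "to", "was", "were", "is", "but", "for", "with"}
POSITIVE = {"effective", "improved", "robust", "significant"}
NEGATIVE = {"adverse", "failed", "weak", "inconclusive"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def key_terms(text: str, top_n: int = 3) -> list[str]:
    """Return the most frequent non-stopword tokens, a crude stand-in for key-term extraction."""
    counts = Counter(t for t in tokenize(text) if t not in STOPWORDS)
    return [term for term, _ in counts.most_common(top_n)]


def sentiment_score(text: str) -> int:
    """Positive lexicon hits minus negative hits; above zero leans positive."""
    tokens = tokenize(text)
    return sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)


abstract = (
    "The treatment was effective and outcomes improved. "
    "The treatment showed robust outcomes, but one trial was inconclusive."
)
print(key_terms(abstract, top_n=2))
print(sentiment_score(abstract))
```

Even this toy version shows why customization matters: change the lexicons and the "insight" changes with them.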

Beyond the buzzwords: what really makes a tool smart?

A genuinely “smart” document analysis tool does more than throw buzzwords at you. It adapts, learns, and explains itself.

First, explainable AI is crucial. Researchers can’t afford to trust black-box decisions, especially in high-stakes fields like law or medicine. Tools like Adobe Acrobat AI Assistant and Docugami now offer transparent summaries and traceable logic paths, boosting trust.

Second, multimodal learning integrates text, images, and (in some cases) audio to generate richer context. This means you’re not just analyzing words, but also charts, photos, and scanned documents—expanding your analytic reach.

- Real-time feedback: The best tools show you how your choices (e.g., tagging parameters) affect results, reducing trial-and-error.

- Workflow automation: Integrations with platforms like Bit.ai and ResearchFlow mean you spend less time on logistics and more on thinking.

- Cross-document insight: Instead of analyzing documents in isolation, cutting-edge platforms compare, contrast, and synthesize across multiple sources.
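Cross-document comparison can be illustrated with a simple overlap measure. The sketch below uses Jaccard similarity on word sets, which is an assumption chosen for clarity and not the method any named vendor documents; the mini-corpus and function names are hypothetical.

```python
import re
from itertools import combinations


def term_set(text: str) -> set[str]:
    """Lowercased words of four or more letters, a rough proxy for content terms."""
    return set(re.findall(r"[a-z]{4,}", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared terms divided by all terms across both documents (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Hypothetical mini-corpus standing in for full papers.
docs = {
    "paper_a": "Sleep deprivation impairs memory consolidation in adults.",
    "paper_b": "Memory consolidation depends on sleep quality in adults.",
    "paper_c": "Regulatory filings reveal market trends.",
}

terms = {name: term_set(text) for name, text in docs.items()}

# Score every pair of documents and list the most similar pairs first.
pairs = sorted(
    ((jaccard(terms[x], terms[y]), x, y) for x, y in combinations(docs, 2)),
    reverse=True,
)
for score, x, y in pairs:
    print(f"{x} vs {y}: {score:.2f}")
```

Word overlap misses synonyms and paraphrase, so treat a low score as a prompt to look closer, not as proof that two sources are unrelated.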

The anatomy of a document analysis workflow

Here’s how a modern analysis unfolds:

- Uploading: Feed your PDFs, text files, or scanned documents into a secure cloud platform.

- Preprocessing: The system cleans, de-duplicates, and segments content, prepping it for analysis.

- Analysis: NLP and ML models extract entities, classify sections, and summarize key points.

- Insight extraction: Tools surface trends, connections, and anomalies—often with visual highlights.

- Synthesis and export: Results are delivered as concise summaries, themed reports, or exportable datasets.
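The steps above can be sketched end to end with the Python standard library. This is an illustrative toy under stated assumptions: the function names are hypothetical, the summarizer is a basic frequency-based extractive method, and the sentence splitter is deliberately naive. Commercial platforms do considerably more at each stage.

```python
import csv
import io
import re
from collections import Counter


def preprocess(raw_docs: list[str]) -> list[str]:
    """Normalize whitespace and drop exact duplicates, preserving first-seen order."""
    seen, cleaned = set(), []
    for doc in raw_docs:
        text = " ".join(doc.split())
        if text and text not in seen:
            seen.add(text)
            cleaned.append(text)
    return cleaned


def segment(text: str) -> list[str]:
    """Split after sentence-ending punctuation; a naive rule that abbreviations will break."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def summarize(text: str, max_sentences: int = 1) -> list[str]:
    """Extractive summary: keep sentences whose words are most frequent in the document."""
    sentences = segment(text)
    freq = Counter(re.findall(r"[a-z]+", text.lower()))

    def score(sentence: str) -> float:
        words = re.findall(r"[a-z]+", sentence.lower())
        return sum(freq[w] for w in words) / max(len(words), 1)

    ranked = sorted(sentences, key=score, reverse=True)[:max_sentences]
    return [s for s in sentences if s in ranked]  # restore original order


def export_csv(rows: list[tuple[int, str]]) -> str:
    """Serialize (doc_id, summary) rows to CSV text for downstream tools."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["doc_id", "summary"])
    writer.writerows(rows)
    return buffer.getvalue()


uploads = [
    "Trial results were positive.   The trial dosage was low overall. Funding came from a grant.",
    "Trial results were positive. The trial dosage was low overall. Funding came from a grant.",
]
docs = preprocess(uploads)  # the second upload is a duplicate once whitespace is normalized
rows = [(i, " ".join(summarize(d))) for i, d in enumerate(docs, start=1)]
print(export_csv(rows))
```

Keeping each stage as its own function makes it easy to swap in a stronger component later, for example a proper sentence tokenizer, without touching the rest.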

The contenders: top document analysis tools for researchers in 2025

Features that actually matter (and which don't)

Not all shiny features deliver. Here’s what separates the real contenders from the pretenders in document analysis for researchers:

Essential. You need control over what’s extracted, summarized, and categorized.

Must-have. Tools should plug into your existing workflow—no more tab overload.

Critical. See why a result was generated, not just what the result is.

Useful, but only if combined with robust error handling.

Increasingly vital for research teams working across continents.

| Feature | Must-have? | Why it matters |
|---|---|---|
| NLP Summarization | Yes | Saves hours, reduces overload |
| Custom Taxonomy | Yes | Reflects your unique project |
| API Access | Yes | Enables automation |
| On-device processing | Sometimes | For privacy, not scale |
| Flashy dashboards | No | Often distract, rarely inform |

Table 3: Features that matter in document analysis tools for researchers. Source: Original analysis based on Metapress, 2025 and ToolsForHumans.ai, 2025.

Comparing the leaders: strengths, gaps, and wildcards

Let’s get brutally honest about the current heavyweights:

| Tool Name | Strengths | Weaknesses |
|---|---|---|
| textwall.ai | Deep NLP, integration, explainability | Limited on-device privacy options |
| Docugami | Automated extraction, user-friendly | Customization less granular |
| Monica’s AI | Fast summaries, accurate coding | Lacks advanced visualization |
| ATLAS.ti | Rich qualitative coding, academic focus | Steeper learning curve |
| Kami AI | Multimodal, translation, annotation | Collaboration features basic |
| Kapiche | Quantitative, real-time, great UI | Not ideal for unstructured docs |

Table 4: Comparative analysis of leading document analysis tools for researchers. Source: Original analysis based on Metapress, 2025 and ToolsForHumans.ai, 2025.

- Wildcard features: Some platforms (Adobe Acrobat AI, PDF.ai) now support cross-document comparison and annotation, moving the needle for complex literature reviews.

- Integration gaps: Not all tools play nicely with institutional logins or cloud storage—make sure your choice won’t force a workflow overhaul.

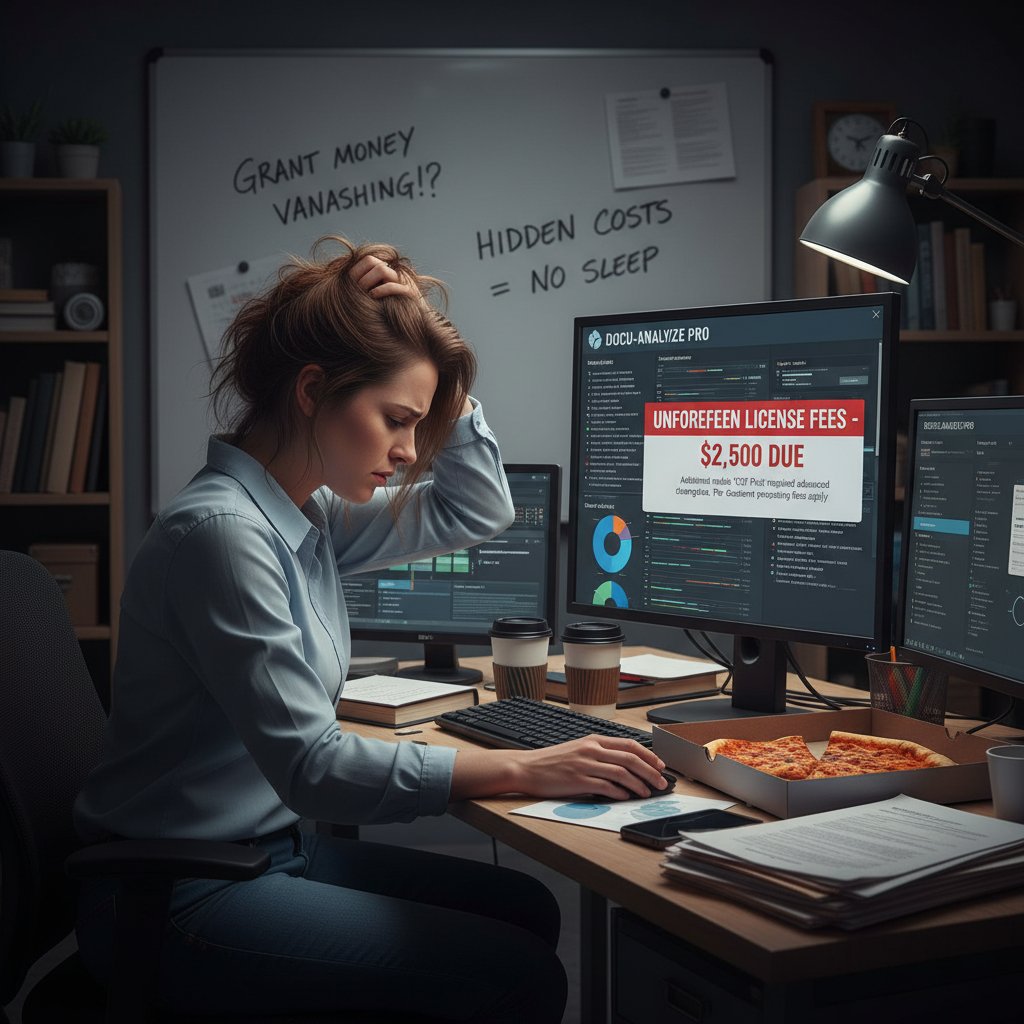

- Cost surprises: Hidden subscription fees and pay-per-use models can bite if you’re processing hundreds of files monthly.

Hidden costs and overlooked benefits

Every platform parades its strengths, but few advertise the fine print:

- Data privacy fees: On-premises or encrypted processing often costs extra.

- Training time: Even intuitive tools require onboarding—factor in the learning curve for your team.

- Export limitations: Some solutions restrict export formats, limiting downstream analysis.

- Overlooked benefits: Tools like textwall.ai continuously learn from your feedback, subtly improving relevance and accuracy with every use.

Real-world impact: case studies and unexpected applications

Academia: faster reviews, deeper insights

Academic researchers have long been buried under literature reviews. Today, AI-powered document analysis tools for researchers are slashing that burden:

- Step-change in review time: According to Metapress, 2025, AI summarizers are helping researchers publish up to 30% faster.

- Deeper thematic analysis: NVivo and ATLAS.ti uncover hidden themes and cross-cutting insights no human would spot in a single pass.

- Global accessibility: Multilingual platforms are breaking down language barriers, expanding the pool of accessible research.

Beyond academia: journalism, law, and healthcare

The impact of document analysis tools for researchers now extends far beyond the ivory tower:

- Journalists use AI tools like Kami AI to quickly mine court transcripts, legislative records, and FOIA dumps for breaking stories.

- Law firms deploy platforms such as Docugami to dig for contractual anomalies, cutting review times by over 50%.

- Healthcare administrators turn to Kapiche and ResearchFlow to process vast patient data sets, streamlining compliance and improving patient care outcomes.

But the story doesn’t always have a happy ending. Automated extraction can miss context or subtle cues—a reminder that human oversight remains essential.

"AI can read thousands of pages a minute, but if you don’t ask the right questions, you’ll still get the wrong answers."

— Dr. Fiona Clarke, Investigative Journalist, ToolsForHumans.ai, 2025

When things go wrong: failures and hard lessons

Even the best document analysis tools for researchers have blind spots. In 2023, a team using automated coding failed to catch a crucial data misclassification in a high-profile study—because no one double-checked the machine’s output. The fallout? Retracted papers, damaged reputations, and a sharp reminder: “trust but verify” is still non-negotiable.

In the words of Dr. Clarke, “AI’s speed is seductive, but it’s not infallible. The most dangerous error is assuming it is.”

Human vigilance is still the ultimate backstop. Smart researchers pair automation with critical review—using the machine to amplify, not replace, their expertise.

Myth-busting: debunking the biggest misconceptions about document analysis tools

AI vs. human: the real face-off

It’s easy to cast AI and humans as adversaries, but the truth is more nuanced. AI supercharges what researchers can do—but only when paired with real-world judgment.

| Task | Human Analyst | AI Tool | Best Use Case |
|---|---|---|---|
| Deep contextual insight | High (with experience) | Moderate (depends on training data) | Interpretive work |
| Speed of processing | Low (manual bottleneck) | High (thousands of docs/hour) | Bulk review |
| Pattern recognition | Good (with limitations) | Excellent (large datasets, subtle patterns) | Thematic analysis |
| Error detection | Inconsistent (fatigue, bias) | Consistent (but lacks context) | Data cleansing |

Table 5: AI vs. human strengths in document analysis for researchers. Source: Original analysis based on TechTarget, 2024.

"A balanced workflow leverages machines for speed and humans for meaning. Ignore either and you’ll get burned." — Dr. Michael Lee, Data Science Lead, Metapress, 2025

Automation isn’t always the answer

Despite the hype, there are hard limits to what automation can deliver.

- Subtlety loss: Automated tools struggle with irony, sarcasm, or culturally nuanced references.

- Data quality traps: Garbage in still means garbage out—no algorithm can rescue fundamentally flawed data.

- Overconfidence bias: The “AI said so” mentality can override critical skepticism, especially among new users.

- Resource imbalance: Smaller teams may lack the infrastructure to fully exploit advanced tools.

Automation is a force multiplier—but it’s not a silver bullet. The best researchers know when to trust the tool and when to double-check the output.

The bias nobody talks about

AI isn’t immune to bias—it can amplify it. Algorithms trained on skewed datasets will reproduce those distortions at terrifying speed.

- Algorithmic bias: Systematic distortion introduced by training data or model design, leading to unfair or misleading results.

- Confirmation bias: The tendency to interpret new evidence as confirmation of one’s existing beliefs, a risk AI can inadvertently magnify by surfacing only what matches your queries.

How to choose the right document analysis tool: a brutally honest guide

Checklist: what to demand (and what to avoid)

Don’t sleepwalk into a bad investment. Use this checklist to separate contenders from pretenders:

- Robust NLP capabilities: Can it handle real academic language and obscure jargon?

- Explainable results: Does it show how conclusions were reached?

- Flexible export formats: Are your data and reports truly portable?

- Privacy compliance: Is sensitive info encrypted and/or processed on device?

- Active support and updates: Is the tool evolving with new research needs?

- Pricing transparency: Are there hidden fees or usage caps?

- Integration with key platforms: Does it play nice with your reference manager, storage, and workflow tools?

Red flags in vendor claims and marketing hype

If you see any of these, run—not walk—away:

- Vague AI claims: “Powered by AI” means nothing without concrete examples.

- No clear security policy: If you can’t find details on encryption or compliance, beware.

- One-size-fits-all pitch: Research needs are diverse—tools should reflect that.

- Unverifiable testimonials: If you can’t find real users echoing the claims, be skeptical.

Choose wisely—your team’s productivity and reputation are on the line.

Insider tips from researchers who’ve seen it all

Talk to enough seasoned professionals and a few rules stand out.

"Always test drive before you buy. Upload the worst, messiest document you have—it’ll show you a tool’s real limits." — Dr. Arun Patel, Research Methodologist, ToolsForHumans.ai, 2025

The bottom line? The smartest researchers aren’t the ones with the best tools—they’re the ones who ask the hardest questions, both of their data and their software.

Step-by-step: mastering document analysis workflows for maximum insight

Getting started: setup to first results

Ready to take the plunge? Here’s how to roll out document analysis tools for researchers like a pro:

- Identify project needs: Define what insight you actually want—summary, categorization, trend analysis, or all of the above.

- Select your tool: Use the checklist above to shortlist 2-3 platforms for a free trial.

- Upload your most complex document: Challenge the tool’s limits at the outset.

- Customize extraction settings: Tailor entity recognition, summary length, or coding scheme to your research goals.

- Review and iterate: Compare automated results to manual review; adjust settings based on discrepancies.

- Collaborate: Share outputs with your team, using in-tool comments and tags for live discussion.

- Export and document: Save results in formats compatible with your broader workflow, like CSV, DOCX, or annotated PDF.
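The "review and iterate" step is easier to act on with a number attached. One common way to compare automated coding against a manual pass is Cohen’s kappa, which corrects raw agreement for chance. The sketch below is a plain-Python illustration; the labels and segment data are hypothetical, and the choice of kappa is an assumption, not something the tools above prescribe.

```python
from collections import Counter


def cohens_kappa(manual: list[str], automated: list[str]) -> float:
    """Chance-corrected agreement between two label sequences of equal length."""
    if len(manual) != len(automated) or not manual:
        raise ValueError("Label lists must be non-empty and the same length")
    n = len(manual)
    observed = sum(m == a for m, a in zip(manual, automated)) / n
    manual_counts, auto_counts = Counter(manual), Counter(automated)
    # Agreement expected if both raters labeled at random with their own label frequencies.
    expected = sum(
        (manual_counts[label] / n) * (auto_counts[label] / n)
        for label in set(manual) | set(automated)
    )
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned to the same six text segments.
manual = ["method", "result", "result", "limitation", "method", "result"]
automated = ["method", "result", "method", "limitation", "method", "result"]

disagreements = [i for i, (m, a) in enumerate(zip(manual, automated)) if m != a]
print(f"kappa = {cohens_kappa(manual, automated):.2f}")
print("segments to re-check:", disagreements)
```

The list of disagreeing segments is often more useful than the score itself, because it tells you exactly where to adjust extraction settings or the coding scheme.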

Common mistakes and how to dodge them

No tool is foolproof. Here’s how to dodge classic errors:

- Forgetting the setup: Skipping customization is a guaranteed way to get bland, irrelevant outputs.

- Blind trust in automation: Always cross-check critical findings with manual review.

- Ignoring export compatibility: Double-check that outputs fit your team’s tools and reporting formats.

- Underutilizing collaboration: Many platforms offer team features—use them to avoid knowledge silos.

- Prioritizing flashy UI over substance: A pretty dashboard is worthless if the underlying analysis is shallow.

Always remember—the tool is only as smart as the analyst at the helm.

Advanced hacks for power users

- Batch process with category rules: Group similar docs and auto-tag for fast meta-analysis.

- Integrate with citation managers: Sync outputs directly to platforms like Zotero or Mendeley.

- Leverage API access: Automate recurring tasks and connect with data visualization suites.

- Customize entity lists: Train the AI on your domain-specific terms for sharper extraction.

- Cross-compare sources: Use platforms like textwall.ai to compare findings across multiple document types.

The more you push your tools, the more you’ll reveal—and the greater your research edge will be.
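Batch tagging with category rules and custom entity lists can be prototyped before you commit to a platform. The following is a minimal rule-based sketch: the categories, term lists, file names, and function names are all hypothetical, and it uses whole-word regular expressions in place of a trained model.

```python
import re

# Hypothetical category rules: each tag maps to domain terms chosen by the researcher.
CATEGORY_RULES = {
    "clinical": ["randomized", "placebo", "cohort"],
    "legal": ["indemnity", "liability", "clause"],
}


def compile_rules(rules: dict[str, list[str]]) -> dict[str, re.Pattern]:
    """Build one case-insensitive, whole-word pattern per category."""
    return {
        tag: re.compile(r"\b(?:" + "|".join(map(re.escape, terms)) + r")\b", re.IGNORECASE)
        for tag, terms in rules.items()
    }


def tag_batch(docs: dict[str, str], rules: dict[str, list[str]]) -> dict[str, list[str]]:
    """Return the sorted tags whose terms appear in each document; untagged docs get []."""
    patterns = compile_rules(rules)
    return {
        name: sorted(tag for tag, pattern in patterns.items() if pattern.search(text))
        for name, text in docs.items()
    }


batch = {
    "trial_report.txt": "A randomized, placebo-controlled cohort study.",
    "contract.txt": "The liability clause limits indemnity.",
    "memo.txt": "Lunch is at noon.",
}
tags = tag_batch(batch, CATEGORY_RULES)
print(tags)
```

Documents that come back with an empty tag list are worth a quick look: they are either out of scope or a sign that the term lists need extending.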

The future of document analysis: where research meets revolution

Emerging trends and disruptive technologies

The pace of change in document analysis tools for researchers is unforgiving—and thrilling. Here’s what’s making waves now:

- Multimodal learning: Combining text, images, and tables for richer context.

- Real-time insight delivery: Instant feedback as you upload or annotate a document.

- Workflow automation: AI-driven scheduling, reminders, and task assignment integrated into analysis platforms.

- Explainable AI: Transparent outputs that show logic paths and data sources.

- Cross-platform integration: Seamless sync between document analysis, storage, and reporting tools.

- Real-time translation and annotation for global collaboration.

- Automated compliance and privacy checks built into analysis workflows.

- Continuous learning engines that adapt to user feedback and domain evolution.

Society, ethics, and the researcher’s new dilemma

With great power comes new responsibilities. Document analysis tools for researchers have the potential to amplify bias, compromise privacy, or accelerate misinformation if used carelessly.

- Transparency: Platforms must reveal source logic, training data, and limitations.

- Privacy: Researchers must ensure data stays within legal and ethical boundaries, especially for sensitive fields.

- Human judgment: No tool, however advanced, can replace ethical judgment and subject-matter expertise.

Researchers must balance the pull of automation with the imperative for critical, ethical scrutiny.

Will textwall.ai and its peers change everything?

The short answer: they already have—at least for those willing to adapt. Platforms like textwall.ai don’t just process data; they empower researchers to ask better questions, spot deeper trends, and move faster than was conceivable even three years ago.

"Document analysis is no longer a bottleneck. For those who embrace the right tools, it’s a launchpad." — Dr. Lisa Chen, Research Technologist, Metapress, 2025

Still, the ultimate edge lies not in the tool, but in how you wield it.

The researchers who thrive are those who combine relentless curiosity with ruthless rigor—using automation as leverage, not a crutch.

Beyond the tool: building a smarter, more resilient research practice

Human intuition vs. AI: finding the balance

No matter how advanced document analysis tools for researchers get, the human element remains irreplaceable. Pattern recognition, contextual judgment, and creative insight are the beating heart of research. The smartest approach is not choosing between intuition and automation—but blending them.

- Use AI to destroy grunt work and surface patterns.

- Apply human judgment to interpret, synthesize, and challenge those results.

- Foster a culture of skepticism even (especially) of automated outputs.

Continuous learning: staying ahead of the curve

Research is a moving target. Here’s how to stay sharp:

- Schedule regular tool audits: Revisit your platform and plugin choices every quarter.

- Attend cross-disciplinary workshops: New insights often come from neighboring fields.

- Crowdsource best practices: Share lessons learned with your community—what works, what doesn’t.

- Experiment often: Test new features and integrations before they become mainstream.

- Document processes: Ensure learning isn’t lost to team turnover or platform updates.

The only constant is change—so build learning into your workflow.

Building your own document analysis toolkit

No single platform covers everything. Assemble your stack:

- One primary analysis tool (e.g., textwall.ai, Docugami)

- A qualitative coder (e.g., NVivo, ATLAS.ti) for deep dives

- A quantitative engine (e.g., Kapiche) for survey or structured data

- Translation/annotation layer (e.g., Kami AI)

- A rock-solid citation/reference manager

The toolkit is less about brand names than about process: choose platforms that integrate, learn, and scale with you.

Supplementary: adjacent fields and future frontiers

How document analysis is transforming other industries

- Finance: AI-driven analysis spots fraud and market trends in regulatory filings.

- Journalism: Rapid mining of leaks and whistleblower documents brings stories to light faster.

- Education: Automated grading and feedback on student essays.

- Corporate compliance: Real-time audit and risk flagging in vast document sets.

- Government: Policy analysis and public feedback synthesis at scale.

Common misconceptions still holding researchers back

- “AI is only for data science.” Modern tools are built for all fields, from philosophy to pharmacology.

- “Manual review is always safer.” Human fatigue introduces bias and error—automation, when used wisely, can improve accuracy.

- “Only large teams benefit.” Even solo researchers can slash workload with the right stack.

- Algorithmic bias: Systematic distortion introduced either by a tool’s training data or its implementation; always check outputs for fairness.

- Explainability: The need for transparent, traceable logic in AI-driven analysis, now a make-or-break feature for trust.

Practical applications you’re probably overlooking

Document analysis tools for researchers aren’t just for academic papers:

- Grant application review: Auto-tagging requirements and eligibility clauses.

- Conference planning: Analyzing attendee feedback and speaker proposals.

- Intellectual property: Sifting patent databases for novelty and citation patterns.

- Public policy: Mining citizen feedback for trends in open consultations.

The potential is only limited by your willingness to experiment—and your vigilance in validating results.

Conclusion: reclaiming your edge in the era of hyper-automation

Key takeaways and next steps

The reality is stark: document analysis tools for researchers are now the difference between drowning in data and distilling it into action. The best tools cut through the noise, surface critical insights, and buy back the most precious commodity in research—time.

- Don’t wait—audit your current workflow for bottlenecks.

- Test and compare leading platforms, prioritizing explainability and integration.

- Invest in continuous learning—algorithms evolve, and so should you.

- Use AI to amplify, not replace, your expertise.

- Stay vigilant—combine automation with skepticism.

- Cherish collaboration—workflows thrive on shared insight.

- Document and share your lessons—raise the bar for your field.

A final word: research, resilience, and the human factor

Ultimately, the revolution isn’t about replacing researchers—it’s about unshackling them. The age of hyper-automation demands resilience, skepticism, and above all, relentless curiosity. The most transformative insights, as always, come from those willing to wrestle with the data, interrogate the tools, and never stop asking better questions.

"The only thing more dangerous than drowning in information is refusing to adapt. Stay sharp. Stay skeptical. Let the machines do the grunt work—your mind is for the real breakthroughs." — Dr. Jane Hamilton, Information Science Specialist, TechTarget, 2024

And if you want a platform that’s battle-tested and ready to cut through the nonsense, remember: your research edge starts with the right tools, and the resolve to use them wisely.

Sources

References cited in this article

- ToolsForHumans.ai (toolsforhumans.ai)

- Metapress (metapress.com)

- TechTarget (techtarget.com)

- DocumentLLM (documentllm.com)

- Forage.ai (forage.ai)

- SAS Blogs (blogs.sas.com)

- Editverse (editverse.com)

- DocsNow (blog.docsnow.io)

- DocuXplorer (docuxplorer.com)

- Ocrolus (ocrolus.com)

- Base64.ai (base64.ai)

- Docsumo (docsumo.com)

- Insight7 (insight7.io)

- Lumivero (lumivero.com)

- Tech Junction (techjunction.co)

- Writingmate.ai (writingmate.ai)

- AskYourPDF (askyourpdf.com)

- DigitalDefynd (digitaldefynd.com)

- RFlow (rflow.ai)

- PR Newswire (mediablog.prnewswire.com)

- Syllo (syllo.ai)

- Rossum.ai (rossum.ai)

- Mobiloitte (mobiloitte.com)

- Scholarly Kitchen (scholarlykitchen.sspnet.org)

- MDPI (mdpi.com)

- Insight7: AI in Research (insight7.io)
