Document Analysis for Improved Accuracy When 99% Isn’t Enough

When was the last time you trusted a crucial document analysis—really trusted it? If your answer is “always,” you might want to keep reading. In the age of digital overwhelm and AI-powered promises, “document analysis for improved accuracy” has become the new corporate mantra. But behind the buzzwords and dashboards, the brutal truth is that most organizations are still losing money, credibility, and even court cases to silent document errors. This isn’t about getting the right word count or ticking compliance boxes. It’s about surviving in a world where a single misread clause or skipped data point can mean disaster. This deep-dive isn’t for the faint of heart. Here, you’ll confront real failures, expose myths, and discover the actionable, research-backed tactics only the most resilient professionals dare to use. Welcome to the sharp end of document analysis.

The hidden cost of inaccuracy: why most document analysis fails

A single error, a million-dollar disaster

It starts innocently enough: a missing decimal, a misclassified invoice, a clause lost in translation. But the real price of document analysis errors doesn’t reveal itself until the damage is irreversible. Consider a case in the financial sector where a misinterpreted contract clause led to a multi-million-dollar penalty for non-compliance. According to a 2024 report by Splore, financial institutions have collectively lost over $2.4 billion in the past two years to document analysis inaccuracies that led to regulatory breaches. Legal teams aren’t spared either; one overlooked term in a merger agreement cost a top-tier law firm both its reputation and a seven-figure client.

| Year | Industry | Error Type | Consequence |
|---|---|---|---|
| 2023 | Financial | Misread compliance clause | $800M fine, regulatory scrutiny |
| 2024 | Legal | Omitted term in contract | Lost client, reputational damage |
| 2024 | Healthcare | Misfiled patient records | Patient harm, legal action |
| 2025 | Market Research | Misclassified survey data | Faulty strategy, lost revenue |

Table 1: Timeline of high-profile document analysis failures and their organizational impacts. Source: Splore, 2024

Why accuracy is more than a buzzword

Accuracy isn’t just a technical metric; it’s the difference between business as usual and front-page scandal. Too many organizations cling to a superficial sense of security, relying on reported “accuracy rates” that gloss over edge cases and context. According to Insight7 (2024), perceived accuracy often diverges sharply from real-world outcomes, especially as document complexity increases. When C-suites accept unchecked accuracy claims, they risk cascading errors that undermine the entire decision-making process.

Superficial accuracy claims—think “99% correct”—can seduce leadership into a false sense of security. But, as multiple industry experts warn, these metrics often mask systemic blind spots. What’s missed is often more damaging than what’s captured, especially in high-stakes environments where nuance and intent matter.

"You only realize the cost of inaccuracy when it’s too late." — Alex, Industry Analyst

The silent epidemic: hidden errors and their ripple effects

The most dangerous document analysis errors aren’t the ones flagged in red—they’re the subtle, unnoticed misinterpretations that propagate quietly through workflows. These hidden faults can infect databases, skew reports, and mislead teams for months before surfacing. In healthcare, for instance, a single data entry error can ripple from patient records to insurance billing, regulatory filings, and even patient care. The same dynamics play out in legal and market research contexts, where an unnoticed misclassification can set off a chain reaction of faulty strategies and compliance nightmares. As highlighted by Insight7, the longer these errors remain undetected, the higher the cost—financially, legally, and reputationally.

From manual chaos to AI order: the evolution of document analysis

A brief (and brutal) history of document analysis

Let’s be honest: document analysis began as a slog. Picture harried clerks wading through paper mountains, highlighters running dry, deadlines looming. The jump from manual to digital over the last two decades introduced new efficiencies, but also new vulnerabilities. Early optical character recognition (OCR) tech was notorious for misreading even simple text. Rules-based systems often choked on non-standard phrasing or complex layouts. Only recently have advanced AI models started to genuinely shift the balance toward accuracy.

Key terms you need to know:

- OCR (Optical Character Recognition): Converts scanned images or PDFs into machine-readable text. Crucial for digitizing legacy documents but prone to errors in poor-quality scans.

- NLP (Natural Language Processing): Uses algorithms to analyze and understand language context in documents, moving beyond mere keyword matching.

- LLMs (Large Language Models): Advanced AI trained on vast text corpora, capable of interpreting nuance, extracting meaning, and recognizing patterns missed by traditional systems.

Why automation alone isn’t enough

Pure automation—the dream of a machine that “just works”—is a myth. Automation can blitz through high volumes, but it stumbles on ambiguity, sarcasm, and domain-specific nuance. No matter how advanced, algorithms misinterpret context, especially in edge cases. According to the Insight7 2024 report, even the best AI models achieve peak accuracy only when paired with human subject matter experts who catch subtle intent and industry-specific cues.

Hidden benefits of hybrid AI + human document analysis:

- Captures contextual nuance machines miss.

- Reduces systemic bias by adding human oversight.

- Flags uncertainties for expert review, reducing unchecked errors.

- Accelerates learning: human corrections inform AI retraining.

- Ensures compliance: humans can audit and explain ambiguous decisions.

- Bolsters trust among stakeholders suspicious of black-box algorithms.
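One way to picture "flags uncertainties for expert review" is a simple confidence threshold. The sketch below is illustrative only: the `Extraction` record, the `confidence` score, and the 0.9 cutoff are all assumptions, not features of any particular platform.

```python
from dataclasses import dataclass


@dataclass
class Extraction:
    doc_id: str
    field_name: str
    value: str
    confidence: float  # hypothetical model-reported score in [0, 1]


def route(extractions, threshold=0.9):
    """Split extractions into an auto-accept queue and a human-review queue."""
    accepted, needs_review = [], []
    for item in extractions:
        if item.confidence >= threshold:
            accepted.append(item)
        else:
            needs_review.append(item)
    return accepted, needs_review


# Hypothetical extraction results for three documents.
results = [
    Extraction("inv-001", "total", "1,250.00", 0.98),
    Extraction("inv-002", "total", "12,500.00", 0.62),
    Extraction("ctr-007", "termination_clause", "30 days notice", 0.91),
]
accepted, needs_review = route(results, threshold=0.9)
```

The threshold is a policy decision rather than a technical one: lowering it saves reviewer time, raising it sends more borderline cases to a human.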

How AI models like textwall.ai change the game

Enter the new breed of AI-powered document analysis platforms. Tools like textwall.ai harness large language models (LLMs) and context-aware NLP to rip through complex, multi-source documents, surfacing actionable insights in seconds. Unlike legacy tools, LLMs can infer meaning from context, uncover trends, and spot anomalies with precision—even across sprawling, unstructured corpora. Instead of being limited to “find X word,” platforms like textwall.ai detect relationships, contradictory statements, and subtle patterns that would elude most humans on a Monday morning.

The result? Speed and scale—AI-driven tools can increase document review velocity by up to 70%, according to Splore (2024), while reducing error rates and boosting auditability. But even here, the smart organizations don’t remove humans from the loop—they empower them.

Debunking the accuracy myth: what the numbers never tell you

Precision vs. recall: the tradeoff nobody talks about

In document analysis, “precision” is the share of the system’s findings that are actually correct, while “recall” is the share of all relevant items that the system actually finds. High precision but low recall means you miss crucial data. High recall but low precision floods you with noise. The real world isn’t a lab, and striking the right balance is a never-ending battle. According to the Insight7 Best Practices Guide (2024), organizations that optimize for only one metric often get burned by the other.

| Approach | Precision | Recall | Real-world utility |
|---|---|---|---|
| Manual | High | Low | Misses volume, slow for scale |
| Rules-based | Medium | Medium | Struggles with nuance |
| AI-powered | High | High | Excels at volume and complexity |
| Hybrid | Very High | High | Best overall for accuracy & trust |

Table 2: Comparative analysis of document analysis approaches by precision, recall, and practical effectiveness. Source: Original analysis based on data from Insight7 (2024) and Splore (2024).

Choosing the right mix depends on your risk tolerance. For regulatory compliance, missing a single relevant document can be fatal—so recall is king. For market research, precision may matter more to avoid wild-goose chases.
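To make the tradeoff concrete, here is a minimal sketch that computes precision and recall from two sets of document IDs, plus the standard F-beta score, which weights recall more heavily when beta is above 1. The document IDs are invented for illustration.

```python
def precision_recall(flagged, relevant):
    """Precision and recall for a set of flagged items against the truly relevant set."""
    flagged, relevant = set(flagged), set(relevant)
    true_positives = len(flagged & relevant)
    precision = true_positives / len(flagged) if flagged else 0.0
    recall = true_positives / len(relevant) if relevant else 0.0
    return precision, recall


def f_beta(precision, recall, beta=1.0):
    """F-beta score: beta > 1 favors recall, beta < 1 favors precision."""
    if precision == 0.0 and recall == 0.0:
        return 0.0
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Hypothetical review: the system flagged 4 documents, 5 were truly relevant.
flagged = {"doc1", "doc2", "doc3", "doc4"}
relevant = {"doc2", "doc3", "doc5", "doc6", "doc7"}

p, r = precision_recall(flagged, relevant)  # 2 of 4 flagged are right; 2 of 5 relevant found
compliance_score = f_beta(p, r, beta=2.0)   # recall-weighted, e.g. for compliance review
research_score = f_beta(p, r, beta=0.5)     # precision-weighted, e.g. for market research
```

Picking beta up front forces the team to state how much a missed document costs relative to a false alarm.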

Why 99% accuracy is a dangerous illusion

Accuracy metrics are often brandished like shields, but they rarely account for the devil in the details. “99% accuracy” sounds impressive until you realize that a 1% failure rate means roughly 10,000 mishandled documents in a million-document batch. And in high-stakes industries, it’s the outliers that cause the most damage.
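The arithmetic behind a headline accuracy figure is worth running explicitly. This sketch assumes the figure is a per-document accuracy rate and counts each failing document once; real error distributions are rarely that tidy.

```python
def expected_errors(num_documents, accuracy):
    """Expected number of mishandled documents at a given per-document accuracy."""
    return round(num_documents * (1 - accuracy))


# Compare a few headline accuracy figures on a one-million-document batch.
for accuracy in (0.99, 0.999, 0.9999):
    errors = expected_errors(1_000_000, accuracy)
    print(f"{accuracy:.2%} accurate: about {errors:,} mishandled documents per million")
```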

"If you only focus on big numbers, you’ll miss the real risks." — Jamie, Document Analysis Specialist

The human factor: why context still matters

No algorithm can fully grasp office politics, client intent, or sarcasm-laden footnotes. Human oversight remains essential for interpreting ambiguous text, recognizing unusual phrasing, and catching anomalies. According to Splore (2024), hybrid teams catch up to 30% more context-driven errors than AI or humans alone. In one documented legal case, a subtle change in phrasing—detected only by a human reviewer—prevented a multi-million-dollar liability. Context still reigns supreme.

New rules for accuracy: proven strategies and smart frameworks

Checklist: Step-by-step guide to mastering document analysis accuracy

- Define clear objectives: Know what you’re trying to find or prove before analysis begins.

- Standardize input formats: Use templates and checklists to minimize data chaos.

- Cleanse data: Remove duplicates, fix formatting, and enrich where possible.

- Select the right tools: Match platform capabilities to document complexity.

- Train models on real data: Use domain-specific examples, not just generic samples.

- Implement validation steps: Cross-check findings with multiple sources or reviewers.

- Use sampling for spot-checks: Randomly audit outputs for hidden errors.

- Establish feedback loops: Feed corrections back into the system for continuous improvement.

- Document everything: Keep detailed audit trails for compliance and troubleshooting.

- Involve domain experts: Final review by someone who understands the business context.
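The "use sampling for spot-checks" step can be sketched in a few lines. The function names, the document IDs, and the `known_bad` set standing in for a human reviewer's verdicts are all hypothetical.

```python
import random


def draw_audit_sample(doc_ids, sample_size, seed=None):
    """Pick a random, non-repeating subset of documents for manual audit."""
    rng = random.Random(seed)  # a fixed seed makes the audit sample reproducible
    doc_ids = list(doc_ids)
    k = min(sample_size, len(doc_ids))
    return rng.sample(doc_ids, k)


def observed_error_rate(sample, reviewer_found_error):
    """Fraction of sampled documents in which the reviewer found an error."""
    if not sample:
        return 0.0
    errors = sum(1 for doc_id in sample if reviewer_found_error(doc_id))
    return errors / len(sample)


all_docs = [f"doc-{i:04d}" for i in range(1000)]
sample = draw_audit_sample(all_docs, sample_size=50, seed=42)

# Stand-in for human review results (hypothetical data).
known_bad = {"doc-0003", "doc-0512"}
rate = observed_error_rate(sample, lambda doc_id: doc_id in known_bad)
```

Recording the seed alongside the audit results lets a later reviewer reconstruct exactly which documents were checked.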

Red flags: What the experts warn you about

- Inconsistent data formats: Mixing PDFs, images, and scans without preprocessing invites errors.

- Overreliance on keyword searches: Misses intent, context, and sarcasm.

- Lack of sampling: Never spot-checking outputs means silent errors multiply.

- Ignoring edge cases: Focusing only on bulk stats masks high-risk outliers.

- No validation protocol: Skipping cross-checks is asking for trouble.

- Opaque algorithms: Black-box models hide the “why” behind decisions.

- Missing audit trails: When errors hit, you’ll need a forensic trail.

- Human fatigue: Manual reviewers burn out, and mistakes creep in.

Validation: The unsung hero of high-accuracy analysis

Why is validation so critical? According to Splore (2024), organizations that implement rigorous multi-layer validation cut error rates by 40% compared to those that rely on a single review. Validation can take many forms: cross-checking against reference documents, sampling and spot audits, or shadow analysis (where a parallel process verifies results). Each layer catches different error types—and together, they create a safety net that’s saved more than one company from disaster.
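Shadow analysis, as described above, comes down to comparing two independent runs and escalating wherever they disagree. A minimal sketch, assuming each run produces a flat dictionary of extracted fields (the field names and values here are made up):

```python
def compare_runs(primary, shadow):
    """Return the fields on which two independent extraction runs disagree."""
    disagreements = {}
    for key in sorted(primary.keys() | shadow.keys()):
        a, b = primary.get(key), shadow.get(key)
        if a != b:
            disagreements[key] = (a, b)  # (primary value, shadow value)
    return disagreements


# Hypothetical outputs from two separate extraction processes on one contract.
primary = {"party": "Acme Corp", "effective_date": "2024-03-01", "cap": "1,000,000"}
shadow = {
    "party": "Acme Corp",
    "effective_date": "2024-03-10",
    "term_months": "24",
    "cap": "1,000,000",
}
to_review = compare_runs(primary, shadow)
```

Agreement between the two runs is not proof of correctness, since both can share the same blind spot, which is why this layer complements sampling rather than replacing it.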

Case files: real-world document analysis gone right (and wrong)

Case study #1: Financial compliance under fire

In 2023, a major bank was facing public scrutiny after a regulatory probe uncovered compliance gaps. The stakes? Multi-million-dollar fines and board-level resignations. The institution overhauled its document analysis workflows, integrating an AI-powered platform with layers of human review, data cleansing, and continuous feedback. By combining domain expertise with LLM-based analysis, they reduced review times by 50% and caught subtle discrepancies missed in earlier audits. Not everything was fixed overnight—some legacy errors persisted—but the shift fundamentally reset their risk profile and restored stakeholder trust.

Case study #2: Academic research—fact or fiction?

A research team at a top-tier university nearly published a high-profile paper based on misinterpreted survey data. Only during post-submission peer review did human analysts discover that automated keyword extraction had misclassified several key responses. The team adopted a best-practice approach: standardized coding frameworks, multi-reviewer validation, and a hybrid AI review process. The result? Subsequent papers sailed through peer review, and the lab’s reputation for rigor soared.

Case study #3: The legal landmine

Legal disputes are often won or lost on the fine print. In a 2024 case, a law firm defending a complex IP claim realized too late that an early contract draft in their database was missing a crucial amendment. Forensic document analysis—using both AI pattern detection and expert review—uncovered the oversight. The lesson was harsh: document versioning and thorough validation are non-negotiable in legal settings. Other industries took note, adopting similar forensic workflows to avoid their own landmines.

How to choose the right approach: manual, automated, or hybrid?

The real-world decision matrix

| Approach | Cost | Speed | Accuracy | Adaptability |
|---|---|---|---|---|
| Manual | High | Slow | Medium-High | High (for nuance) |
| Automated | Low-Medium | Fast | Medium-High | Medium |
| Hybrid | Medium | Fast | Very High | Very High |

Table 3: Feature matrix comparing manual, automated, and hybrid document analysis methods across key criteria. Source: Original analysis based on industry data from Insight7 (2024).

To use this matrix: Start by assessing your document volume and risk profile. For highly nuanced, low-volume work, manual might suffice. For speed and scale, automation is tempting—but for most, hybrid models offer the best of both worlds, balancing precision, recall, and adaptability.

Why hybrid systems are winning in 2025

Hybrid workflows are no longer a luxury—they’re the winning strategy for organizations serious about accuracy. Best-in-class processes pair human reviewers with AI engines, using platforms like textwall.ai to streamline bulk analysis while routing complex or ambiguous cases for expert review. Successful teams build continuous feedback loops, retraining AI models on human corrections and updating protocols based on real-world exceptions.

Cost-benefit analysis: is accuracy worth the investment?

Short-term, automation feels like a bargain—until unresolved errors snowball into crises. The hidden costs of low accuracy include regulatory fines, lost clients, and blown deadlines. Over three years, organizations that invest in robust accuracy frameworks report up to 60% savings when factoring in avoided disasters, according to Splore (2024).

"Cutting corners on accuracy will cost you double later." — Morgan, Process Improvement Lead

Technical deep dive: What makes document analysis accurate?

Beyond OCR: The new science of text understanding

Modern document analysis goes way beyond “read the text.” Advancements in OCR have dramatically improved text extraction from even messy scans, but the real leap is in NLP and LLMs. These models can analyze context, recognize intent, and spot contradictions that would baffle any keyword-based system. Context-aware extraction and semantic analysis are now table stakes for serious accuracy.

Key technical terms:

- Entity recognition: Identifies specific names, dates, places, and organizations in unstructured text.

- Context validation: Ensures extracted information makes sense given the broader document.

- Semantic similarity: Measures how closely two pieces of text relate in meaning, catching paraphrases and subtle rewordings.
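To give a feel for how a similarity score is computed, the sketch below uses cosine similarity over plain word counts. This is a deliberate simplification: production systems typically compare learned embeddings, and a bag-of-words stand-in only rewards shared vocabulary, so it will miss true paraphrases that use different words.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Lowercased word counts; a crude stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


clause = "The supplier may terminate this agreement with 30 days notice"
reworded = "This agreement may be terminated by the supplier with 30 days notice"
unrelated = "Patient records must be stored in encrypted form"

sim_reworded = cosine_similarity(bag_of_words(clause), bag_of_words(reworded))
sim_unrelated = cosine_similarity(bag_of_words(clause), bag_of_words(unrelated))
```

The reworded clause scores far higher than the unrelated sentence, which is the behavior a similarity check relies on when matching clauses across drafts.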

Data quality: the foundation nobody talks about

No algorithm can rescue garbage input. The quality of your document data—formatting, completeness, freshness—directly determines analysis accuracy. Practical steps to improve input quality include rigorous data cleansing, enrichment with up-to-date sources, and consistent formatting.

Top 6 data quality mistakes organizations make:

- Using outdated templates with missing fields.

- Failing to standardize file formats.

- Skipping data cleansing steps before analysis.

- Ignoring metadata (dates, authors, versions).

- Accepting incomplete scans or images.

- Neglecting regular audits of input sources.
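Two of these mistakes, skipped cleansing and ignored metadata, are easy to screen for before analysis begins. The sketch below assumes each document is a dictionary with `id`, `text`, and `metadata` keys, and that `date`, `author`, and `version` are the required metadata fields; both are illustrative choices.

```python
import hashlib

REQUIRED_METADATA = ("date", "author", "version")  # assumed required fields


def normalize(text):
    """Collapse whitespace and lowercase so trivial variants compare equal."""
    return " ".join(text.split()).lower()


def content_key(text):
    """Stable fingerprint of the normalized text, used to detect duplicates."""
    return hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()


def check_batch(documents):
    """Return (duplicates, missing_metadata) for a batch of documents."""
    seen = {}
    duplicates = []        # (duplicate id, id of first occurrence)
    missing_metadata = {}  # id -> list of absent or empty fields
    for doc in documents:
        key = content_key(doc["text"])
        if key in seen:
            duplicates.append((doc["id"], seen[key]))
        else:
            seen[key] = doc["id"]
        metadata = doc.get("metadata", {})
        missing = [name for name in REQUIRED_METADATA if not metadata.get(name)]
        if missing:
            missing_metadata[doc["id"]] = missing
    return duplicates, missing_metadata


# Hypothetical batch: "b" duplicates "a" apart from spacing and case.
batch = [
    {"id": "a", "text": "Invoice  #12\nTotal: 100",
     "metadata": {"date": "2024-01-05", "author": "ops", "version": "1"}},
    {"id": "b", "text": "invoice #12 total: 100",
     "metadata": {"date": "2024-01-06", "author": "ops"}},
    {"id": "c", "text": "Contract draft", "metadata": {}},
]
duplicates, missing_metadata = check_batch(batch)
```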

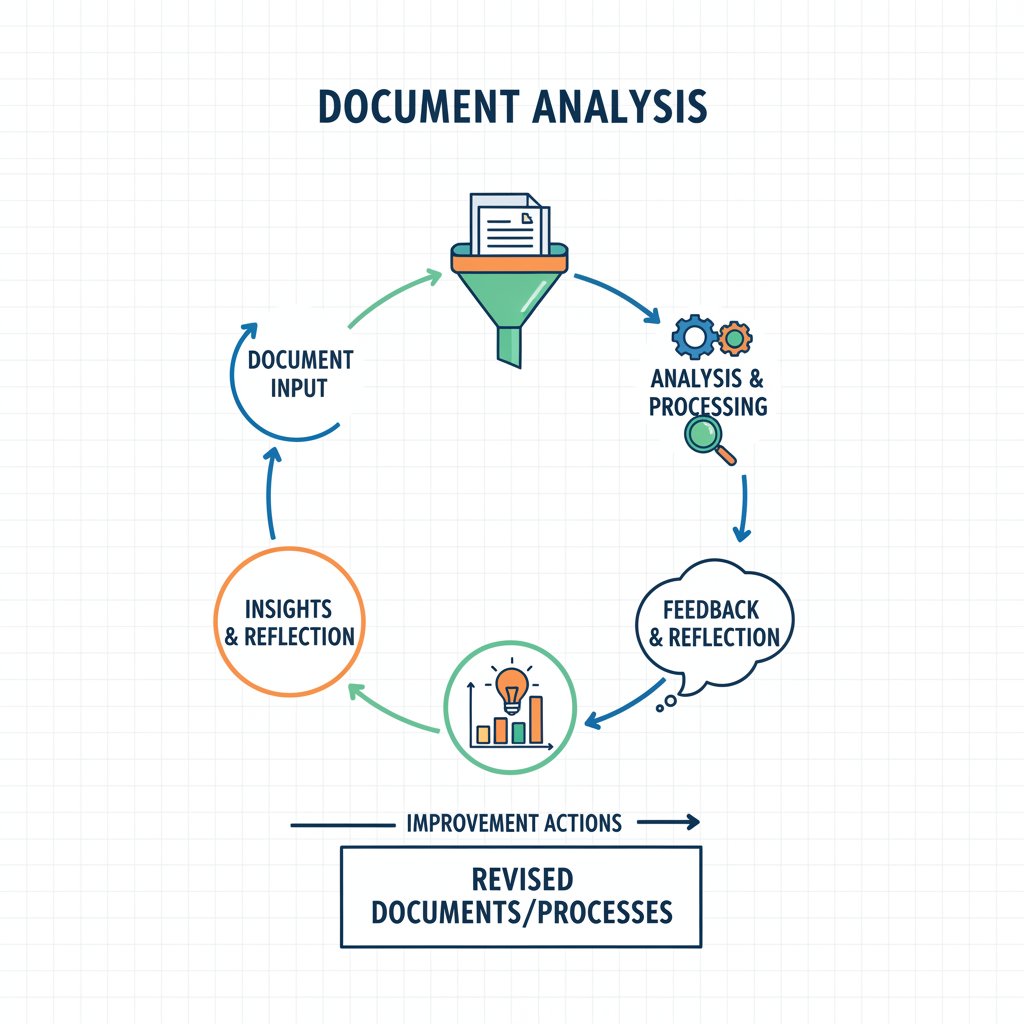

Feedback loops: turning mistakes into improvements

Iterative feedback isn’t just nice-to-have—it’s the backbone of continuous improvement. Organizations that deploy structured feedback loops, where errors spotted by humans are fed back into training sets, see compounding gains in accuracy over time. Setting up effective feedback means documenting all exceptions, retraining models regularly, and involving domain experts in the review process.
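A feedback loop needs, at minimum, a record of what the model said and what the human changed it to. The sketch below shows one possible shape for that record; the `Correction` type and field names are hypothetical, and the retraining step itself is out of scope.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Correction:
    doc_id: str
    field_name: str
    model_value: str
    human_value: str


def training_examples(corrections):
    """Keep only genuine corrections, labeled with the human-approved value."""
    return [
        (c.doc_id, c.field_name, c.human_value)
        for c in corrections
        if c.model_value != c.human_value
    ]


def error_hotspots(corrections):
    """Count corrections per field to show where the model struggles most."""
    changed = (c.field_name for c in corrections if c.model_value != c.human_value)
    return Counter(changed).most_common()


# Hypothetical review log: the second entry was confirmed, not corrected.
log = [
    Correction("ctr-001", "effective_date", "2024-01-03", "2024-03-01"),
    Correction("ctr-001", "party", "Acme Corp", "Acme Corp"),
    Correction("ctr-002", "effective_date", "2023-12-01", "2023-12-10"),
]
examples = training_examples(log)
hotspots = error_hotspots(log)
```

Tracking hotspots alongside the raw examples helps decide which fields to prioritize for domain-expert review.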

Controversies and ethical dilemmas in document analysis

Who gets to decide what’s 'accurate'?

Accuracy isn’t always objective. Different stakeholders—regulators, clients, internal teams—may interpret the same document in conflicting ways. Power dynamics, cultural biases, and organizational politics all shape what’s accepted as “correct.” Experts urge teams to establish transparent protocols for resolving ambiguity and documenting rationale.

Bias in AI: fixing more than just typos

Even advanced AI can reinforce or amplify existing biases. Algorithmic bias in document analysis has led to discriminatory outcomes, as noted by Splore (2024). Proactive bias detection and mitigation are now industry requirements.

7 steps to audit your document analysis for bias:

- Regularly review training data for representation.

- Test outputs across demographic and industry segments.

- Use explainability tools to trace decision paths.

- Solicit feedback from diverse stakeholders.

- Implement bias detection software.

- Document every correction and its context.

- Periodically update models with new, balanced data.
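The "test outputs across segments" step can start as a per-segment error rate comparison. In this sketch the segments are document languages and the records are invented; which segments matter, and how large a gap is acceptable, depend on the domain.

```python
from collections import defaultdict


def error_rate_by_segment(records):
    """records: iterable of (segment, is_correct) pairs from a reviewed sample."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for segment, is_correct in records:
        totals[segment] += 1
        if not is_correct:
            errors[segment] += 1
    return {segment: errors[segment] / totals[segment] for segment in totals}


def max_gap(rates):
    """Largest difference in error rate between any two segments."""
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


# Hypothetical reviewed sample, segmented by document language.
reviewed = [
    ("en", True), ("en", True), ("en", False), ("en", True),
    ("es", False), ("es", True),
]
rates = error_rate_by_segment(reviewed)
gap = max_gap(rates)
```

A large gap is a prompt for investigation rather than proof of bias; small segments produce noisy rates, so sample sizes should be reported alongside the numbers.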

Privacy, security, and the dark side of automation

Automated document processing raises real privacy and security risks. Unchecked access to sensitive data, unencrypted storage, and opaque algorithms can lead to catastrophic breaches. The best organizations balance accuracy and transparency with robust security protocols, encryption, and auditable systems.

The future of document analysis: predictions, promises, and hard truths

AI, LLMs, and the next wave of breakthroughs

While the industry is already seeing radical improvements in analysis accuracy thanks to AI and LLMs, organizations must remain vigilant. The next breakthroughs will revolve around explainable AI, real-time analysis, and adaptive models that learn from every interaction. But as the hype cycles persist, only those who pair new tech with sober skepticism and strong frameworks will succeed.

The jobs and skills of tomorrow

Advanced document analysis isn’t making humans obsolete—it’s making them indispensable in new ways. The most sought-after skills now include critical thinking, data literacy, AI oversight, and domain expertise. These roles are less about reading and more about interpreting, validating, and improving systems.

7 future-proof skills for document analysis professionals:

- Critical thinking in ambiguous scenarios

- Advanced data literacy

- AI tool fluency

- Process validation and auditing

- Change management

- Ethical oversight

- Cross-functional communication

What most predictions get wrong

Hype cycles have convinced too many that AI can replace human judgment altogether. Current evidence shows the opposite—human oversight, skepticism, and adaptability remain essential to prevent “black swan” errors and navigate the gray areas of document interpretation. The key is a relentless commitment to questioning, validating, and improving.

Adjacent topics: what else you need to know

Document security: protecting accuracy in a hostile world

External threats—tampering, spoofing, and data loss—can erode the foundation of any analysis. Secure workflows, encrypted storage, and rigorous access controls are must-haves. Regularly audit document chains to ensure integrity and prevent subtle sabotage.
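A basic integrity audit records a cryptographic fingerprint when a document enters the workflow and re-checks it before analysis. The sketch below uses SHA-256 from Python's standard library; where the recorded fingerprint is stored, and who can modify it, are left as assumptions.

```python
import hashlib
import hmac


def fingerprint(data: bytes) -> str:
    """SHA-256 hex digest of the document bytes."""
    return hashlib.sha256(data).hexdigest()


def verify(data: bytes, recorded: str) -> bool:
    """True if the document still matches the fingerprint recorded at intake."""
    return hmac.compare_digest(fingerprint(data), recorded)


# Hypothetical contract text captured at intake.
original = b"Clause 7: Either party may terminate with 30 days notice."
recorded = fingerprint(original)

# A single changed digit is enough to fail verification.
tampered = b"Clause 7: Either party may terminate with 90 days notice."
still_intact = verify(original, recorded)
was_altered = not verify(tampered, recorded)
```

A plain hash detects changes only if the recorded fingerprint itself is protected; anyone who can edit both the document and the record can defeat the check.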

Document analysis and digital trust

Accuracy isn’t just operational—it’s reputational. Organizations with rigorous, transparent analysis workflows build trust with partners, regulators, and customers. Open, auditable processes are now a competitive advantage.

The ripple effect: how accurate document analysis transforms organizations

True document accuracy accelerates business efficiency, compliance, and strategic certainty. The culture shifts, too: teams adopt evidence-based decision making, and the “just trust the numbers” mentality is replaced by “prove it, then act on it.”

Conclusion: the new accuracy imperative

Rethink what accuracy really means

Document analysis for improved accuracy is not about searching for perfection, but about embracing imperfection as a catalyst for vigilance, validation, and improvement. The smart move is not to trust the system blindly, but to build in layers of oversight, continuous learning, and ethical scrutiny. This isn’t just a technical exercise—it’s an organizational imperative. If you want to thrive in a world where one error can cost everything, it’s time to raise your standards, challenge your assumptions, and put accuracy at the heart of every process.

Your next steps: where to go from here

- Audit your current document analysis workflows for hidden flaws.

- Establish clear accuracy and validation benchmarks.

- Invest in quality data input and cleansing routines.

- Adopt hybrid human + AI workflows with continuous feedback.

- Regularly retrain and audit your models for bias and errors.

- Develop transparent, auditable processes for compliance and trust.

- Explore advanced analysis solutions like textwall.ai for ongoing efficiency and accuracy gains.

Ready to see where you stand? Dive deeper with resources like textwall.ai and commit to the relentless pursuit of document accuracy. Your reputation, your bottom line, and your sanity depend on it.

Sources

References cited in this article

- Insight7: Best Practices(insight7.io)

- Splore: AI Document Analysis(splore.com)

- ICG: AI Document Review(icg.co)

- Gartner Data Quality Cost(bhwiki.com)

- Doakio: Cost of Poor Documentation(doakio.com)

- Formtek: Document Management(formtek.com)

- A-Team Insight: Bad Data(a-teaminsight.com)

- Business Research Insights(businessresearchinsights.com)

- DocumentLLM: AI Document Analysis(documentllm.com)

- QueryDocs: AI Transformation(querydocs.ai)

- ScribTx: AI Document Analysis Limits(blog.scribtx.com)

- Ironclad: Legal Automation(ironcladapp.com)

- Journal of Big Data(journalofbigdata.springeropen.com)

- Texta.ai: AI Document Analysis(texta.ai)

- DISCO: Legal AI Poll(csdisco.com)

- Insight7: Data Collection(insight7.io)

- Rossum: IDP Myths(rossum.ai)

- ScienceDirect: Document Analysis(sciencedirect.com)

- ICDAR 2024 Proceedings(dl.acm.org)

- Lineal: Technology for Review(lineal.com)

- Integreon: Legal Strategies(integreon.com)

- PDF.ai: Legal Document Analysis(pdf.ai)

- Insight7: AI Software 2024(insight7.io)

- Netguru: AI Document Analysis(netguru.com)

- Retraction Watch: Fraud Red Flags(retractionwatch.com)

- ACFE Fraud Report 2024(tenintel.com)

- Lexology: Arbitration Red Flags(lexology.com)

- Kneat: Validation Goals 2024(kneat.com)

- EvalAcademy: Document Review(evalacademy.com)

- ScienceDirect: Validation Methods(sciencedirect.com)

- NEJM AI Case Studies(ai.nejm.org)

- Gartner: AI Case Studies(gartner.com)

- Mindee: JPMorgan COIN(mindee.com)

- Sago: Research Trends 2024(sago.com)

- Insight7: Qualitative Research(insight7.io)

- Scholiva: Manual vs Automated(scholiva.com)

- ProAI: Automated Analysis(proai.co.uk)

- Insight7: Policy Analysis Guide(insight7.io)

- PipDecks: Decision Matrix(pipdecks.com)

- ICDAR 2023 Workshops(dl.acm.org)

- FileCenter: Document Management Stats 2025(filecenter.com)

- Docsvault: Hybrid Trends(docsvault.com)

- Rossum: Automation Trends 2025(rossum.ai)
