Text Segmentation Software, Demystified: Power, Pitfalls, Reality

Let’s be honest: if you’re still treating text segmentation software as a “nice-to-have,” you’re already falling behind. In the age of information overload, where every click, meeting, and regulatory memo spawns another megabyte of content, the real battle isn’t just about storage—it’s about survival. "Text segmentation software" isn’t just an IT department’s shiny new toy. It’s the difference between dreading your inbox and mining it for competitive gold. But here’s the unfiltered truth: most organizations are using these tools with their eyes half-closed—blind to hidden risks, hooked on hype, and missing the real revolution happening under their noses. If you think your document analysis stack is cutting it, brace yourself. This isn’t another puff piece. We’re cutting deep—unmasking the myths, exposing the ethical minefields, and handing you the blueprint to dodge disaster while leveraging the actual power of AI-driven text segmentation in 2025.

Why text segmentation software matters more than ever

The information apocalypse: Too much data, not enough insight

The world is drowning in unstructured data. As of 2025, widely cited industry estimates put more than 80% of enterprise information in the unstructured bucket: emails, contracts, reports, research papers, and chat logs. According to The Business Research Company, the global text analysis software market is growing from $4.84 billion in 2024 to $5.85 billion in 2025, a jump of roughly 21% in a single year. The reason is blunt: data’s not just multiplying, it’s mutating, spawning in dozens of languages, formats, and tones far faster than manual methods or legacy document management systems can hope to process.

Traditional document handling? Outdated. It crumbles under the weight of today’s “document deluge”—leaving knowledge workers scrambling to find needle-sharp insights in haystacks of noise. The result? Missed opportunities, regulatory slip-ups, decision paralysis, and a whole lot of wasted time.

"Most of what matters gets lost between the lines." — Jordan, data scientist

Let that sink in. The real cost isn’t just inefficiency—it’s the critical knowledge you never even see.

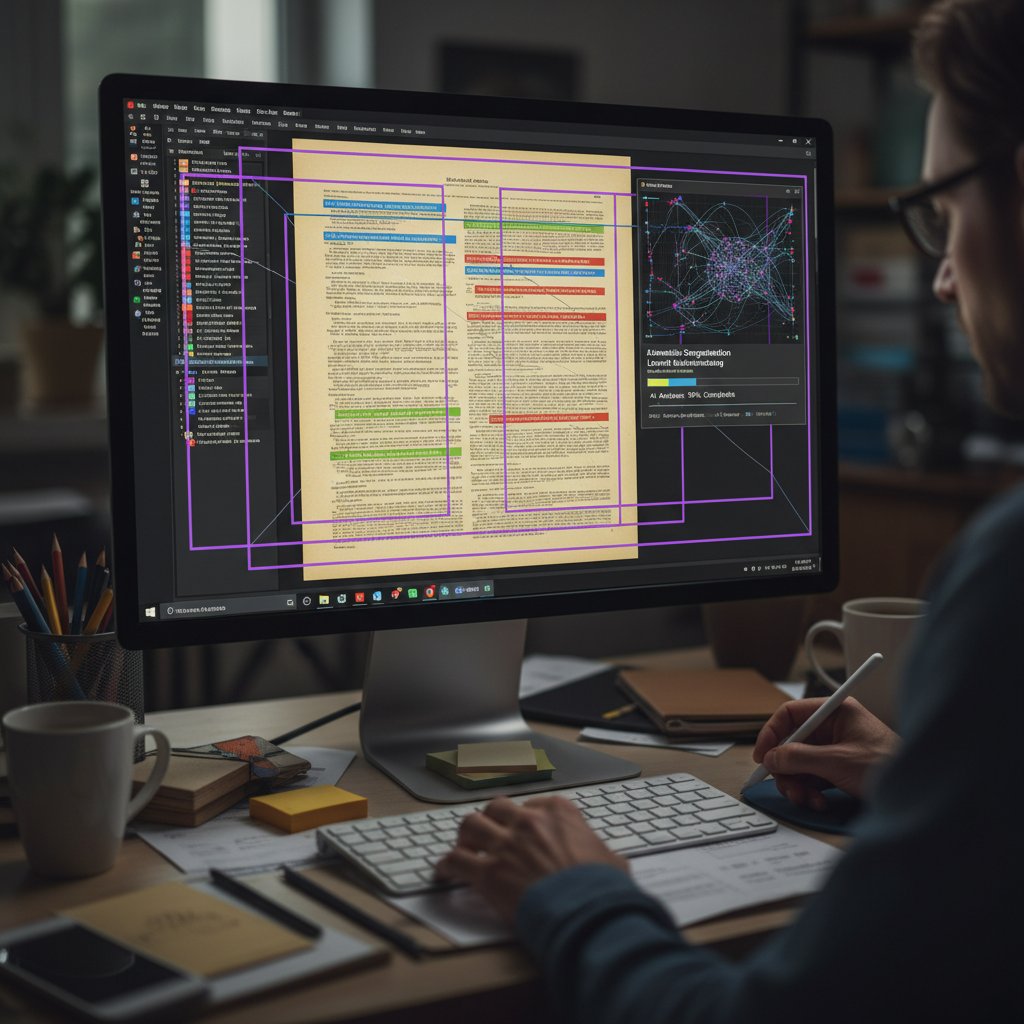

From manual chaos to automated clarity: The segmentation revolution

Text segmentation used to mean color-coding printouts, sticky notes, and hours of manual copy-paste. Now, thanks to AI, it’s about algorithmic clarity—splitting, tagging, and categorizing content at a speed and accuracy no human army can hope to match. The jump from rules and regex to transformer-based, large language models (LLMs) is as dramatic as the switch from candlelight to LEDs.

| Year | Methodology | Key Milestone | Disruptive Shift |
|---|---|---|---|
| 2005 | Manual tagging/sorting | Early enterprise DMS adoption | Introduction of basic keyword search |
| 2010 | Rule-based/NLP pipelines | First-gen text mining tools | Emergence of entity/phrase extraction |
| 2016 | Statistical ML models | Sentiment/intent classification | Rise of machine learning-based chunking |
| 2020 | Transformer/LLM architectures | Contextual, multilingual segmentation | AI/LLM models outperform human baselines |
| 2024 | Hybrid + plug-and-play AI | Real-time, explainable segmentation | End-to-end document understanding, cloud-first |

Table 1: Evolution of text segmentation software methodologies and market shifts (Source: Original analysis based on The Business Research Company, 2024; multiple industry reports)

Modern text segmentation software doesn’t just automate the grind—it rewires the DNA of information work. Instead of drowning in documents, organizations can extract critical points instantly, surface hidden risks, and act before the competition even spots the trend.

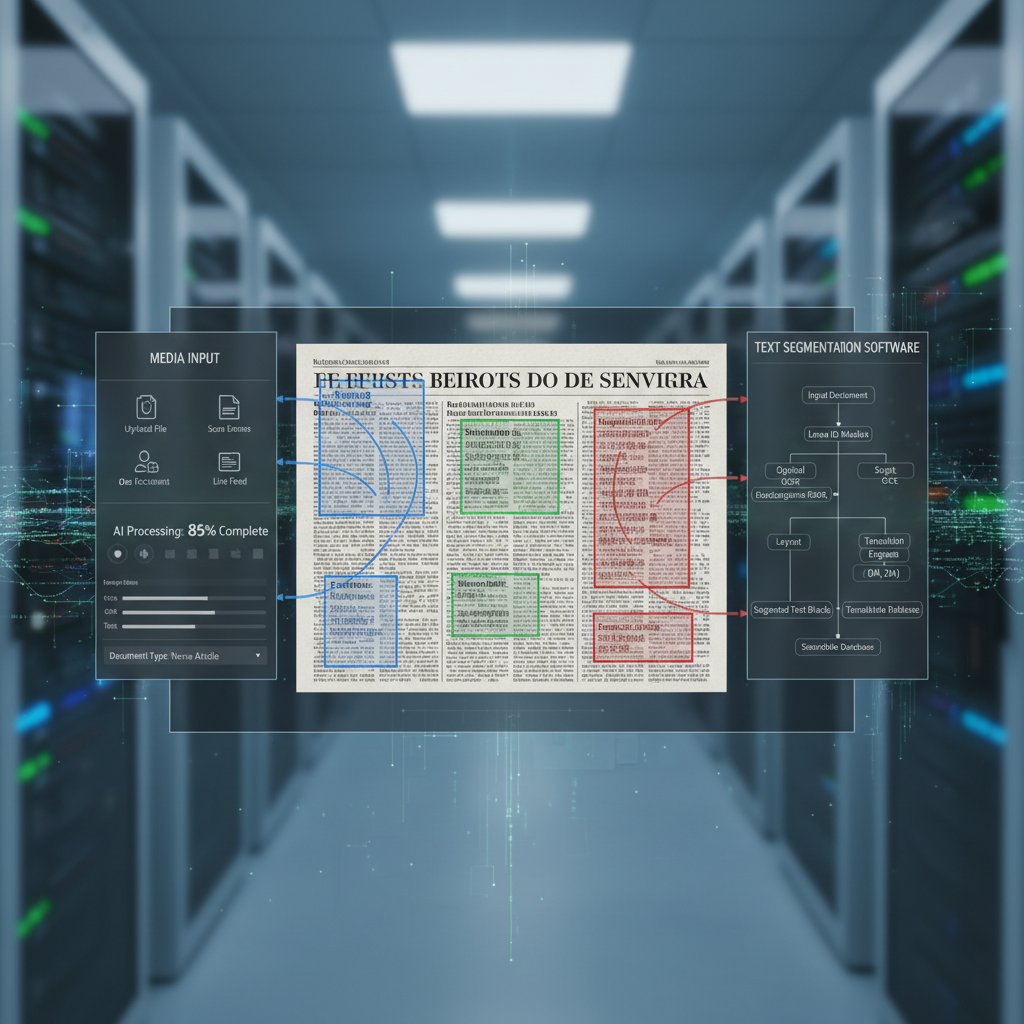

What even is text segmentation software?

Strip away the jargon, and text segmentation software is your digital scalpel. It slices and dices documents into coherent, logical “chunks”—be they sentences, paragraphs, sections, or entities—making them searchable, taggable, and actionable. Imagine turning a 70-page contract into instantly digestible components—clauses, obligations, risk factors—ready for analysis, review, or export.

Key terms

- Text segmentation: The automated process of splitting text into meaningful units (sentences, paragraphs, sections) for downstream analysis. Crucial for making unstructured data searchable and actionable.

- Chunking: Breaking text into discrete “chunks” or segments, often based on syntax, semantics, or custom rules. Underpins everything from summarization to entity extraction.

- Entity recognition: Identifying and categorizing key entities (names, dates, organizations) within text. Fuels knowledge graphs and compliance workflows.

- Summarization: Generating concise, context-rich summaries from longer documents. Enables rapid review and decision-making.

These aren’t just buzzwords—they’re the backbone of how organizations reclaim control from the chaos.
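To make those terms concrete, here is a minimal sketch of paragraph and sentence segmentation using only Python's standard library. The function names (`split_paragraphs`, `split_sentences`) and the sample text are illustrative assumptions, not any product's API, and the sentence splitter is deliberately naive.

```python
import re


def split_paragraphs(text: str) -> list[str]:
    """Split on one or more blank lines and drop empty pieces."""
    parts = re.split(r"\n\s*\n", text)
    return [p.strip() for p in parts if p.strip()]


def split_sentences(paragraph: str) -> list[str]:
    """Naive splitter: break after . ! or ? when whitespace and an
    uppercase letter or digit follow. It will mis-split abbreviations
    such as "Dr. Smith"; real tools handle those cases."""
    parts = re.split(r"(?<=[.!?])\s+(?=[A-Z0-9])", paragraph.strip())
    return [s for s in parts if s]


# Made-up sample text for illustration.
sample = (
    "The supplier shall deliver the goods by 1 March. "
    "Late delivery incurs a penalty.\n\n"
    "Either party may terminate with 30 days notice."
)

# One list of sentences per paragraph.
segments = [split_sentences(p) for p in split_paragraphs(sample)]
for paragraph in segments:
    print(paragraph)
```

Even this toy version shows the layering: paragraphs first, sentences inside them, with each level available for tagging or search.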

How text segmentation software actually works (beyond the hype)

Under the hood: Algorithms that shape your world

At the core of every segmentation tool is an engine—an algorithmic brain that decides where one idea ends and another begins. Classic tools lean on rule-based logic: regex patterns, dictionaries, and human-defined syntax. Advanced tools harness the brute force of LLMs—gigantic neural networks trained on massive, multilingual datasets.

Rule-based approaches offer predictability, but fold in the face of linguistic nuance and domain jargon. LLM-powered segmentation thrives in complexity—handling slang, legalese, or scientific notation, but at the cost of transparency and reproducibility.
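A rule-based segmenter can be sketched in a few lines. The example below assumes a hypothetical heading convention (a line starting with "1." or "2.1" followed by a capitalized title) and splits a contract into clauses on that pattern. The `Clause` type, the `HEADING` regex, and the sample contract are all assumptions for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class Clause:
    number: str
    title: str
    body: str


# Assumed heading convention: "1. Title" or "2.1 Title" at the start of a line.
HEADING = re.compile(r"^(\d+(?:\.\d+)*)\.?[ \t]+([A-Z][^\n]*)$", re.MULTILINE)


def split_clauses(contract: str) -> list[Clause]:
    """Cut the text at every heading; each clause runs to the next heading."""
    matches = list(HEADING.finditer(contract))
    clauses = []
    for i, m in enumerate(matches):
        end = matches[i + 1].start() if i + 1 < len(matches) else len(contract)
        body = contract[m.end():end].strip()
        clauses.append(Clause(m.group(1), m.group(2).strip(), body))
    return clauses


contract = """1. Definitions
"Goods" means the items listed in Schedule A.
2. Delivery
The supplier shall deliver within 30 days.
2.1 Late Delivery
A penalty of 1% per week applies.
"""

clauses = split_clauses(contract)
for clause in clauses:
    print(clause.number, clause.title, "->", clause.body)
```

The predictability is obvious, and so is the brittleness: a contract that numbers its clauses "Article I" or "(a)" slips straight past this rule.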

LLMs vs. legacy: What really sets next-gen tools apart

Here’s the unvarnished comparison: classic rule-based segmentation is fast, cheap, and deterministic. But it’s brittle—breaks with every edge case or new data source. LLM-based tools, by contrast, adapt on the fly, handle multilingual chaos, and extract context like a seasoned analyst. But they’re resource-hungry and sometimes frustratingly opaque.

| Feature | Rule-based Segmentation | Statistical ML | LLM-based Segmentation |
|---|---|---|---|
| Accuracy | Medium | High (domain-limited) | Very high (context-adaptive) |
| Adaptability | Low | Medium | High |
| Cost | Low | Medium | High |
| Transparency | High | Medium | Low |
| Multilingual support | Weak | Limited | Strong |
| Explainability | High | Medium | Low |
| Requirements (data/infra) | Minimal | Moderate | Substantial |
| Bias risk | Low (but rigid) | Medium | High (if unmonitored) |

Table 2: Feature matrix for text segmentation software approaches (Source: Original analysis based on multiple industry and academic sources)

This isn’t just theory—smart organizations are swapping brittle rules for LLM-driven insights, but only where transparency, bias mitigation, and regulatory compliance are built in from the ground up.

Inside the black box: Addressing the AI transparency problem

Let’s not sugarcoat it—LLM-powered segmentation is often a black box. Even AI experts can’t always explain why a model sliced a document a certain way. This isn’t just academic; when compliance, risk, or justice are on the line, opacity becomes a liability.

Hidden risks of black-box segmentation:

- Unintended bias amplifies existing prejudices in training data

- Critical data points can get lost or misclassified without warning

- Regulatory compliance is harder when outcomes can’t be explained

- Decision-making slows as teams “audit the AI” instead of trusting results

- Security risks rise if proprietary or sensitive data is mis-segmented

- False confidence leads to missed errors and overreliance

- Accountability gaps emerge—who’s responsible when AI slices wrong?

The industry is waking up to these risks, but as of now, transparency and explainability features are still a work in progress for most commercial and open-source segmentation solutions.
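One modest step toward transparency is to record, for every segment, where it came from and why the cut was made. The sketch below does this for a simple blank-line rule; the `Segment` fields and the `reason` labels are assumptions, and a model-based segmenter would need to supply its own rationale or confidence score in place of the rule name.

```python
import json
import re
from dataclasses import asdict, dataclass


@dataclass
class Segment:
    start: int   # character offset where the segment begins
    end: int     # character offset just past the segment
    text: str
    reason: str  # why a boundary was placed after this segment


def segment_with_provenance(text: str) -> list[Segment]:
    """Split on blank lines and keep offsets plus the rule that fired."""
    segments = []
    cursor = 0
    for m in re.finditer(r"\n\s*\n", text):
        if m.start() > cursor:
            segments.append(
                Segment(cursor, m.start(), text[cursor:m.start()], "blank_line")
            )
        cursor = m.end()
    if cursor < len(text):
        segments.append(Segment(cursor, len(text), text[cursor:], "end_of_document"))
    return segments


doc = "Scope of work.\n\nPayment terms.\n\nTermination."
audit_log = [asdict(s) for s in segment_with_provenance(doc)]
print(json.dumps(audit_log, indent=2))
```

Because every entry carries offsets into the source, a reviewer can check any segment against the original document instead of trusting the output blindly.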

Common myths and misconceptions about text segmentation

Myth #1: More data always means better results

Here’s a seductive lie: “Just throw more data at the model, and segmentation will magically improve.” In practice, piling on unvetted data adds noise, inconsistent labels, and diminishing returns. Research suggests that diverse, noisy, and multilingual data sources can tank accuracy if they aren’t carefully curated and balanced, and that carefully labeled, domain-specific datasets often outperform generic “big data” dumps.

Take a hypothetical legal AI trained on 10,000 random contracts vs. one trained on 500 expertly annotated deals from a single jurisdiction. On compliance-critical tasks within that jurisdiction, the smaller, curated model can come out ahead of the brute-force approach.

Myth #2: All text segmentation tools are the same

False. The landscape is a minefield of underpowered free tools, overpriced legacy vendors, and flashy LLM-based upstarts. Key differentiators include adaptability to new domains, ability to customize rules and output, integration depth, and—crucially—scalability for real-world enterprise workloads.

| Category | Accuracy | Customization | Scalability | Transparency | Best For |
|---|---|---|---|---|---|
| Open-source | Medium | High | Medium | High | Developers, research, prototyping |
| Proprietary | High | Medium | High | Medium | Enterprises, data-heavy organizations |
| LLM-powered | Very high | High | High | Low | Complex, multilingual, dynamic tasks |

Table 3: Comparison of segmentation software types (Source: Original analysis based on market and product reviews)

The winner depends on your use case, compliance needs, and appetite for risk.

Myth #3: Text segmentation is just for PDFs and legal docs

This myth could not be further from the truth. While PDF parsing and contract review get the spotlight, segmentation powers everything from journalism to marketing analytics, academic literature reviews, and even experimental creative writing projects.

Unconventional uses for text segmentation software:

- Automatically structuring news feeds by topic and sentiment

- Surfacing key themes in customer support transcripts

- Tagging and organizing transcripts from podcasts and webinars

- Enabling real-time content moderation for online platforms

- Accelerating literature reviews in academic research

- Extracting insights from social media or forum threads

- Slicing and dicing technical manuals for user onboarding

- Supporting digital archiving and e-discovery in historical projects

If you think text segmentation is niche, you’re missing the forest—and most of the trees.

Real-world applications: From boardrooms to back alleys

Corporate knowledge: Mining gold from internal chaos

Major enterprises are sitting atop mountains of untapped intelligence—buried in reports, emails, meeting logs, and knowledge bases. Modern text segmentation software is the pickaxe: it scans, slices, and surfaces the gold lurking in unstructured messes. A Fortune 100’s workflow might look like this:

- Upload thousands of financial reports into a secure cloud instance.

- AI segments each document into headline risks, key findings, and actionable tasks.

- Automated alerts flag anomalies for compliance review.

- Summarized key points feed into dashboards for executive decision-making.

- All outputs are archived and indexed for future search—no more lost insights.

The payoff? Reduced analysis time by 60-70%, fewer missed risks, and a leaner, smarter decision pipeline.
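The workflow above can be sketched as a tiny pipeline: segment a report, label each segment, and bucket the results for dashboards or alerts. The keyword lists and the `label_segment` and `process_report` helpers are hypothetical stand-ins; a production system would more likely use a trained classifier than substring matching.

```python
import re
from collections import defaultdict

# Hypothetical keyword lists, checked in this order; first match wins.
LABEL_KEYWORDS = {
    "risk": ("risk", "exposure", "breach", "penalty"),
    "task": ("must", "shall", "action required", "deadline"),
    "finding": ("found", "increased", "decreased", "revenue"),
}


def label_segment(segment: str) -> str:
    """Return the first label whose keywords appear in the segment."""
    lowered = segment.lower()
    for label, keywords in LABEL_KEYWORDS.items():
        if any(k in lowered for k in keywords):
            return label
    return "other"


def process_report(text: str) -> dict[str, list[str]]:
    """Split a report into paragraphs and group them by label."""
    buckets = defaultdict(list)
    for segment in re.split(r"\n\s*\n", text.strip()):
        buckets[label_segment(segment)].append(segment.strip())
    return dict(buckets)


report = """Revenue increased 4% quarter over quarter.

Currency exposure in the EU subsidiary is unhedged.

Finance must file the updated forecast by Friday."""

result = process_report(report)
for label, items in result.items():
    print(label, "->", items)
```

Anything landing in the `risk` bucket is what would trigger the compliance alert in the workflow described above.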

Legal, medical, and beyond: High-stakes segmentation

In law and healthcare, the stakes are existential: compliance missteps, patient safety, and massive financial exposure. Reported case studies suggest that segmentation-driven review of legal contracts can cut review time by up to 70% and reduce compliance errors. In healthcare, processing extensive patient records with segmentation software can cut administrative workload by roughly half and surface previously hidden risks.

These aren’t fringe cases; they’re the frontlines where AI isn’t just a buzzword—it’s a lifeline.

Creative chaos: Journalism, content creation, and the limits of automation

Segmentation software is revolutionizing newsrooms and content studios, helping journalists sort interviews, surface leads, and check facts. But there’s a hard limit: nuance, narrative, and “gut feel” still matter.

"Software can spot the structure, but it can’t always smell the story." — Alex, journalist

No tool can fully automate the art of storytelling. But for investigative teams and content strategists alike, the right segmentation stack is the difference between wading through noise and finding the thread that matters.

The dark side: Risks, biases, and ethical fault lines

When segmentation goes wrong: Real failures and fallout

As powerful as text segmentation software is, the landmines are real. Think contracts split in the wrong place, crucial obligations missed, or sensitive information leaked because of a single segmentation error.

Top 7 segmentation disasters and how to avoid them:

- Missed critical clause in contract—Mitigate by integrating human-in-the-loop review.

- False positive in compliance audit—Test on representative datasets before deployment.

- Bias amplification against minority languages—Enforce multi-lingual benchmarking.

- Mis-segmented medical data causing patient risk—Use domain-specific, validated models.

- Data leak from cloud-based processing—Deploy privacy-preserving mechanisms.

- Regulatory fines for unexplained AI decisions—Build transparency and audit logs.

- Operational downtime from legacy tool failures—Prioritize real-time, scalable solutions.

Learning from these disasters is the only way to future-proof your knowledge workflows.
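Human-in-the-loop review, the first mitigation in that list, usually comes down to a routing rule: send anything uncertain or high-stakes to a person. A minimal sketch follows, assuming the segmenter reports a confidence score between 0 and 1; the threshold and the set of high-stakes labels are placeholders you would tune yourself.

```python
from dataclasses import dataclass


@dataclass
class ScoredSegment:
    text: str
    label: str
    confidence: float  # assumed to be in [0, 1], supplied by the segmenter


REVIEW_THRESHOLD = 0.85                            # hypothetical cutoff
HIGH_STAKES_LABELS = {"indemnity", "termination"}  # hypothetical label set


def route(segments: list[ScoredSegment]):
    """Split segments into auto-accepted and human-review queues."""
    auto, review = [], []
    for seg in segments:
        needs_review = (
            seg.confidence < REVIEW_THRESHOLD or seg.label in HIGH_STAKES_LABELS
        )
        (review if needs_review else auto).append(seg)
    return auto, review


segments = [
    ScoredSegment("Fees are payable monthly.", "payment", 0.97),
    ScoredSegment("Either party may terminate on notice.", "termination", 0.99),
    ScoredSegment("Liability is capped at fees paid.", "liability", 0.62),
]

auto, review = route(segments)
print("auto:", [s.label for s in auto])
print("review:", [s.label for s in review])
```

Note that the termination clause goes to review despite its high confidence: for some clause types, the cost of a miss outweighs the model's certainty.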

Algorithmic bias: Who gets left behind?

Bias in segmentation software isn’t just a technical issue—it’s a societal risk. When models are trained on unbalanced, Western-centric, or mono-lingual data, entire communities and contexts vanish. Concrete examples abound: gendered pronouns misclassified in HR records, indigenous languages ignored in government documents, or nuanced legal terms mangled because the model “never saw them before.”

Mitigation strategies? They’re non-negotiable: curate balanced datasets, enforce cross-cultural testing, and build feedback loops that let marginalized voices flag errors in real time.
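Cross-cultural testing starts with measuring quality per language rather than in aggregate. The sketch below computes boundary F1 (exact-match precision and recall over boundary positions) and averages it per language. The sample boundary sets are made up for illustration, and exact-match F1 is only one of several reasonable metrics.

```python
from collections import defaultdict


def boundary_f1(reference: set[int], predicted: set[int]) -> float:
    """F1 over exact boundary positions (e.g. sentence indices)."""
    if not reference and not predicted:
        return 1.0
    true_positives = len(reference & predicted)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)
    recall = true_positives / len(reference)
    return 2 * precision * recall / (precision + recall)


def f1_by_language(samples) -> dict[str, float]:
    """samples: iterable of (language, reference_boundaries, predicted_boundaries).
    Returns the mean per-document F1 for each language."""
    scores = defaultdict(list)
    for language, reference, predicted in samples:
        scores[language].append(boundary_f1(reference, predicted))
    return {lang: sum(vals) / len(vals) for lang, vals in scores.items()}


# Made-up evaluation data: English and Swahili documents.
samples = [
    ("en", {3, 7, 12}, {3, 7, 12}),
    ("en", {5, 9}, {5}),
    ("sw", {4, 8}, {4, 6, 8, 10}),
]

scores = f1_by_language(samples)
for language, score in scores.items():
    print(f"{language}: {score:.2f}")
```

A single blended score would hide the gap between languages; reporting them side by side is what makes under-served languages visible.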

Privacy and compliance: The silent minefield

Automated text analysis is a privacy minefield. Regulations like GDPR, HIPAA, and CCPA mean every document, every data point, is a potential compliance risk if mismanaged.

"Every new tool is another privacy riddle." — Casey, compliance lead

Organizations serious about risk use privacy-preserving cloud solutions, audit logs, and granular access controls as non-negotiable requirements for any segmentation deployment.
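One privacy-preserving habit is to redact obvious identifiers before text ever leaves your environment. The sketch below masks email addresses and phone-like numbers with regular expressions. The patterns are simplistic assumptions and will miss plenty of real-world PII, so treat this as a first filter and not as evidence of GDPR, HIPAA, or CCPA compliance.

```python
import re

# Simplistic, assumed patterns; real PII detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with [EMAIL] / [PHONE] and count replacements."""
    counts = {}
    for name, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{name}]", text)
        counts[name] = n
    return text, counts


message = "Contact jane.doe@example.com or call +1 415 555 0100 before Friday."
clean, counts = redact(message)
print(clean)
print(counts)
```

The replacement counts double as an audit trail: they can be logged without storing the sensitive values themselves.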

How to choose the right text segmentation software (without getting burned)

Step-by-step guide: From chaos to clarity

Choosing segmentation software isn’t just a technical checklist—it’s a survival strategy. Here’s how to get it right:

Checklist for choosing text segmentation software:

- Clarify your primary use case—Are you segmenting contracts, support logs, or research articles?

- Assess data complexity—Multilingual, jargon-heavy, or highly regulated?

- Map integration requirements—Cloud, on-premises, or hybrid?

- Vet for explainability—Does the tool offer transparency or just “trust us, it works”?

- Check scalability—Will it handle a spike from 100 to 10,000 docs overnight?

- Audit privacy/compliance features—Are there built-in controls and audit trails?

- Test on real-world samples—Always pilot with your own data, not just demos.

- Look for customization—Can you tweak rules, retrain models, or just click “run”?

- Evaluate support and community—Is there real help when things break?

- Calculate true total cost of ownership (TCO)—License, compute, training, support, and update costs.

Follow these steps, and you’ll sidestep most pitfalls that sink segmentation rollouts.
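The TCO item in the checklist is simple arithmetic, but writing it down keeps vendors honest. Here is a rough sketch that adds a one-time training cost to recurring license, compute, support, and update costs over a planning horizon. The `CostProfile` type and every figure in the example are placeholders, not market data.

```python
from dataclasses import dataclass


@dataclass
class CostProfile:
    license_per_year: float
    compute_per_year: float
    support_per_year: float
    update_per_year: float
    training_one_time: float

    def tco(self, years: int) -> float:
        """One-time training cost plus recurring costs over the horizon."""
        recurring = (
            self.license_per_year
            + self.compute_per_year
            + self.support_per_year
            + self.update_per_year
        )
        return self.training_one_time + recurring * years


# Placeholder figures for illustration only.
profile = CostProfile(
    license_per_year=10_000,
    compute_per_year=5_000,
    support_per_year=2_000,
    update_per_year=1_000,
    training_one_time=4_000,
)

print(profile.tco(years=3))
```

Running the same calculation for each shortlisted tool over the same horizon makes "cheap to start, expensive to run" options easy to spot.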

Red flags and hidden costs to watch for

Beneath the glossy demos lurk dangers: opaque pricing, brittle integrations, and “black box” outputs that don’t stand up to regulatory scrutiny.

Red flags in text segmentation software:

- No access to the underlying algorithm or model details

- Vague or missing documentation for compliance features

- Poor multilingual or domain adaptation capabilities

- Overpromising on “100% accuracy” (it doesn’t exist)

- High onboarding fees or unexpected training costs

- Lock-in to proprietary formats or platforms

- Lack of ongoing updates or security patches

Spot these and run—your risk profile will thank you.

Self-audit: Are your workflows ready for automation?

Before you buy, audit your own house. Too many deployments fail because of messy data, unclear requirements, or lack of in-house expertise.

Key readiness factors:

- Data quality: Are your documents clean, consistent, and well-formatted?

- Integration capability: Can your current systems “talk” to new software via APIs or connectors?

- User expertise: Do your teams understand both the limitations and possibilities of AI-driven tools?

- Change management: Is there buy-in from decision-makers and end-users?

- Security posture: Are privacy, access control, and compliance requirements mapped out?

- Scalability: Do your current processes scale, or are they held together by manual heroics?

- Auditability: Can your systems produce logs and proof of actions taken?

Don’t skip this step—automation is only as good as the foundation you build it on.

Comparing the contenders: Open source, proprietary, and LLM-powered solutions

Open-source vs. closed: The real cost of 'free'

Open-source segmentation tools offer flexibility and control but come with steeper learning curves and less robust support. Proprietary options promise polish and scale but can lock you in—and tack on surprise fees. LLM-powered solutions like textwall.ai offer bleeding-edge accuracy but may require more compute and careful handling of privacy and compliance.

| Solution Type | TCO | Flexibility | Support | Innovation Pace | Hidden Costs |
|---|---|---|---|---|---|
| Open source | Low | High | Low/Community | Medium | High setup, training |
| Proprietary | High | Medium | High | Medium | License, lock-in |
| LLM-powered | Medium/High | Medium | Medium | High | Compute, privacy |

Table 4: Cost-benefit analysis of segmentation solutions (Source: Original analysis based on market research and user reviews)

No “free lunch”—each option demands trade-offs.

Feature matrix: What actually matters in 2025

Forget the fluff. Must-have features for segmentation software in 2025 include multilingual support, explainability, real-time processing, API integration, privacy controls, and cloud scalability. Miss one, and your workflow is stuck in 2018.

Industry examples abound: A global bank prioritized explainability and privacy, sacrificing a bit of speed, and nailed compliance audits. A creative agency opted for real-time, LLM-driven tools, boosting content throughput but managing higher costs for compute and fine-tuning.

Case studies: Winners, losers, and lessons from the field

- Success: A market research firm used LLM-based segmentation to slice 10,000 reports monthly, cutting analysis time by 80% and landing new clients who valued speed and depth.

- Failure: A law firm deployed a legacy tool without multilingual support, missing key clauses in cross-border contracts and triggering costly remediation.

- Twist: An academic group paired open-source chunking with custom entity extraction, discovering new research themes but struggling with upkeep as project complexity grew.

The lesson: There’s no silver bullet—winning is about fit, not flash.

Next-level strategies: Getting the most from your segmentation stack

Integration hacks: Making segmentation play nice with your stack

The #1 reason segmentation projects die? Botched integrations. Avoid common mistakes with a few simple strategies:

5 integration tips for seamless segmentation:

- Map all upstream/downstream data flows before rollout.

- Use APIs and webhooks for real-time sync—avoid manual exports.

- Pilot integrations with a subset of documents to catch edge cases.

- Document every mapping, transformation, and exception.

- Involve both IT and business users from the start—don’t let silos kill adoption.

Nail integration, and the rest falls into place.
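As a sketch of the API-and-webhook tip, the snippet below packages segments as JSON and prepares an HTTP POST with Python's standard library. The endpoint URL and the payload shape are assumptions; match them to whatever your downstream system actually expects. The request is built but intentionally not sent.

```python
import json
import urllib.request

# Hypothetical endpoint; replace with whatever your downstream system exposes.
WEBHOOK_URL = "https://example.invalid/hooks/segments"


def build_webhook_request(document_id: str, segments: list[str]) -> urllib.request.Request:
    """Serialize segments to JSON and wrap them in a POST request."""
    payload = {
        "document_id": document_id,
        "segments": [{"index": i, "text": s} for i, s in enumerate(segments)],
    }
    return urllib.request.Request(
        WEBHOOK_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_webhook_request("contract-001", ["Scope of work.", "Payment terms."])
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))

# Sending is left out of this sketch. In a real integration you would call
# urllib.request.urlopen(request, timeout=10) and handle errors and retries.
```

Keeping payload construction separate from sending makes the mapping easy to document and test, which is exactly what the fourth tip asks for.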

Beyond segmentation: Linking with summarization, extraction, and more

Segmentation’s real superpower emerges when linked with summarization, entity extraction, and workflow automation:

- Use segmentation to chunk reports, then run summarization to produce exec-ready overviews.

- Pair with entity recognition to extract companies, dates, and obligations for compliance indexing.

- Feed segmented content into workflow bots for automated approvals, flagging, or escalation.

Alternative approaches include plug-and-play frameworks, hybrid (rule + AI) pipelines, and connecting with broader NLP stacks for everything from translation to sentiment analysis.
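Chaining these stages can be sketched with toy stand-ins: chunk a document, pull dates out of each chunk, and keep the lead sentence as a crude extractive summary. The date pattern, the helper names, and the lead-sentence shortcut are all assumptions; in a real stack each stage would be a proper model or service.

```python
import re

MONTHS = (
    "January|February|March|April|May|June|July|"
    "August|September|October|November|December"
)
# Assumed date format: "1 March 2025".
DATE = re.compile(rf"\b\d{{1,2}} (?:{MONTHS}) \d{{4}}\b")


def chunk(text: str) -> list[str]:
    """Segmentation stage: split on blank lines."""
    return [c.strip() for c in re.split(r"\n\s*\n", text) if c.strip()]


def extract_dates(chunk_text: str) -> list[str]:
    """Entity stage: regex stand-in for a real entity recognizer."""
    return DATE.findall(chunk_text)


def lead_sentence(chunk_text: str) -> str:
    """Summary stage: first sentence as a naive extractive summary."""
    return re.split(r"(?<=[.!?])\s+", chunk_text, maxsplit=1)[0]


document = (
    "Services begin on 1 March 2025. The initial term is twelve months.\n\n"
    "Invoices are due within 30 days. "
    "Late payments accrue interest from 15 April 2025."
)

results = [
    {"summary": lead_sentence(c), "dates": extract_dates(c)} for c in chunk(document)
]
for row in results:
    print(row)
```

Because each stage takes and returns plain text or lists, any one of them can be swapped for a stronger component without touching the others.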

Continuous improvement: Auditing and optimizing your results

Segmentation isn’t “set and forget.” Top teams audit output weekly, track error rates, and retrain or tweak as new document types emerge. Dashboards with analytics and explainability metrics are your best friend for ongoing optimization.

Iterative improvement is the only way to ensure your segmentation stack stays ahead of the curve—and your competitors.
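To track error rates over time you need a number. One commonly used segmentation metric is Pk, which slides a window over the document and counts how often the reference and the system disagree about whether the two ends of the window fall in the same segment. The sketch below is a plain-Python version. It assumes each unit (say, a sentence) carries a segment id that is unique per segment, and it defaults the window to half the average reference segment length.

```python
from typing import Optional


def pk(reference: list[int], hypothesis: list[int], k: Optional[int] = None) -> float:
    """Pk error: 0.0 is a perfect match, higher is worse.

    reference / hypothesis give a segment id for every unit, in order.
    """
    if len(reference) != len(hypothesis):
        raise ValueError("reference and hypothesis must have the same length")
    n = len(reference)
    if k is None:
        # Half the average reference segment length, at least 1.
        k = max(1, round(n / len(set(reference)) / 2))
    if n <= k:
        raise ValueError("document is too short for the chosen window")
    errors = 0
    for i in range(n - k):
        same_in_reference = reference[i] == reference[i + k]
        same_in_hypothesis = hypothesis[i] == hypothesis[i + k]
        errors += same_in_reference != same_in_hypothesis
    return errors / (n - k)


# Eight sentences, two segments; the shifted system output puts the
# boundary one sentence too early.
reference = [0, 0, 0, 0, 1, 1, 1, 1]
shifted = [0, 0, 0, 1, 1, 1, 1, 1]

print(pk(reference, reference))
print(round(pk(reference, shifted), 3))
```

Logging this score on a fixed audit sample each week gives a trend line, so a drop in quality shows up before users start complaining.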

The future of text segmentation: Bold predictions and coming disruptions

From LLMs to AGI: What’s next for document intelligence

The next five years will see segmentation software moving from “smart slicing” to full-blown document understanding—linking context, extracting actionable intelligence, and integrating seamlessly with end-to-end automation. Transformer-based models with advanced multilingual and cross-domain skills are already bridging historic gaps.

Breakthroughs like few-shot and zero-shot learning are reducing the pain of data labeling, while explainable AI features are giving compliance teams the visibility they crave. Cloud-based, privacy-respecting solutions are dominating deployments, and hybrid models are replacing brittle, one-size-fits-all approaches.

Emerging use cases you haven’t considered

New frontiers for segmentation software? Think real-time news aggregation across multiple languages, plugging into voice transcript analytics, or segmenting video subtitles for accessibility and compliance.

AI is now dissecting not just text but mixed media—audio, image captions, and more—turning the chaotic data soup into structured, actionable knowledge.

How to future-proof your document analysis stack

To stay ahead, organizations must:

- Audit tools and workflows every six months for fit and performance.

- Invest in explainability—don’t trust black boxes.

- Demand privacy by design in any new solution.

- Encourage cross-functional feedback—end users spot blind spots.

- Adopt modular, API-first platforms for easy upgrades.

- Train teams on the nuances of both AI and compliance.

Follow these steps, and you’ll weather whatever disruption comes next.

Adjacent concepts: What else you need to know about document analysis

Text segmentation vs. summarization: Know the difference

While segmentation breaks documents into meaningful sections, summarization distills the core ideas into digestible overviews. Both are foundations of modern document analysis, but their roles and outputs differ.

- Use segmentation when you need granular, structured data—think compliance audits or research reviews.

- Opt for summarization when rapid understanding is key—like executive briefings or search result previews.

- Combine both in workflows needing structure and speed—e.g., segmented clauses with summary risk ratings.

Entity recognition, topic modeling, and other AI magic

Segmentation is just one ingredient in the AI document analysis stew. Entity recognition pulls out names, organizations, and key data points. Topic modeling uncovers hidden themes and relationships. Sentiment analysis gauges tone and bias—vital for PR, HR, and customer support.

Core adjacent concepts:

- Entity recognition: Tagging all mentions of people, places, organizations for structured search.

- Topic modeling: Discovering underlying themes—useful for trend analysis.

- Sentiment analysis: Assigning positive, negative, or neutral tags to sections—critical for feedback analysis.

These techniques work hand-in-hand to turn messy content into strategic intelligence.

When to call in the pros: Advanced document analysis services

Some tasks are too high-stakes, complex, or sensitive for DIY solutions. That’s where advanced document analysis services like textwall.ai come in—delivering expert-level insight, security, and compliance without the learning curve.

Signs you need advanced document analysis services:

- Regulatory audits with harsh deadlines

- Multi-lingual, multi-format document chaos

- Stakes involving intellectual property or confidential data

- In-house teams stretched thin by volume or complexity

- Legacy systems blocking integration or automation

- Frequent workflow breakdowns and lost knowledge

- Need for continuous improvement without constant manual tuning

Sometimes, outsourcing to the experts isn’t a luxury—it’s a business-critical necessity.

Section conclusions: Synthesis, key takeaways, and what’s next

Recap: Brutal truths and bold solutions

Text segmentation software is the backbone of modern knowledge work, but it’s not a panacea. The brutal truths are clear: legacy tools buckle under complexity, AI-powered models can hide dangerous biases, and most organizations still underestimate the effort required for real-world success. Yet, the bold solutions are equally real: hybrid AI, explainable models, privacy-first cloud deployments, and continuous feedback loops are transforming chaos into clarity.

This isn’t just about parsing documents—it’s about rethinking how we find, filter, and act on information in a world that won’t stop generating more.

Action steps: What to do right now

You’ve seen the risks, the opportunities, the landscape. Here’s your five-point action plan:

- Audit your current document landscape and map the pain points.

- Pilot multiple segmentation options on your real workloads; don’t trust vendor demos alone.

- Compare options feature for feature, prioritizing explainability, compliance, and scalability.

- Integrate with existing workflows and train teams for adoption so silos don’t block it.

- Schedule regular audits and upgrades, and adapt as your data and needs evolve.

Final provocation: Rethinking what’s possible with text segmentation

If you’re still treating text segmentation as a back-office IT task, you’re missing the revolution in front of your face. The right tool, in the right workflow, doesn’t just speed you up—it changes the way your organization thinks, acts, and competes. Stop thinking in terms of “processing documents”—start imagining what’s possible when every insight, every risk, every opportunity is a click away. The only question left: are you just parsing the text, or are you changing the story?

"Don’t just parse the text—change the story." — Morgan, technology strategist

Sources

References cited in this article

- Text Analysis Software Market 2025 - The Business Research Company (thebusinessresearchcompany.com)

- Critical Review of Segmentation Techniques - ResearchGate (researchgate.net)

- Insight7.io: Top Text Analytics Solutions 2025 (insight7.io)

- Opendatasoft 2025 Study (opendatasoft.com)

- Calcalistech 2025 (calcalistech.com)

- Meetanshi Big Data Statistics 2025 (meetanshi.com)

- Datanami Report (datanami.com)

- History of Text Analytics (getthematic.com)

- Wikipedia: Text Segmentation (en.wikipedia.org)

- Mailmodo: Customer Segmentation Tools 2025 (mailmodo.com)

- Displayr: Best AI Text Analysis Tools 2025 (displayr.com)

- EMNLP 2024 Survey (aclanthology.org)

- Matomo: Customer Segmentation Software 2024 (matomo.org)

- ScienceDirect: Topic Modeling Perspective (sciencedirect.com)

- AssemblyAI: Text Segmentation Approaches (assemblyai.com)

- SafeGraph: More Data Doesn’t Mean Better Data (safegraph.com)

- SERP AI: Text Segmentation Applications (serp.ai)

- Turnitin: Myths and Misconceptions (turnitin.com)

- LatentView: Real-World Applications (latentview.com)

- Vitalflux: Text Clustering Examples (vitalflux.com)

- Persado: Segment Marketing 2023-24 (persado.com)

- HT Media: First-Party Data 2024 (htmedia.in)

- Medium: AI in Content Creation (medium.com)

- ISACA Journal: Bias and Ethical Concerns (isaca.org)

- ResearchGate: Ethical Issues in Big Data (researchgate.net)

- CMU Informedia Project (cs.cmu.edu)

- arXiv: Handwritten and Printed Text Segmentation (arxiv.org)

- MDPI: Customer Review Segmentation (mdpi.com)

- Forbes: More Data Does Not Necessarily Lead to Better Outcomes (forbes.com)
