Text Analytics Strategies That Expose Risk, Roi, and What to Fix

Text analytics strategies—two words that sound like business sorcery, promising to turn raw, overwhelming information into gold. Yet if you’ve ever sat through a dashboard demo or tried to decode customer sentiment from a heap of survey responses, you know the real story is much messier. The landscape is littered with failed pilots, dashboards nobody reads, and executives wondering why their “AI investment” hasn’t matured beyond keyword tallies and colorful pie charts. Welcome to the gritty reality of text analytics in 2025—a domain where 70% of businesses use NLP for customer sentiment, but only a sharp minority extract real value. This isn’t another puff piece. Here, we’ll break down the eleven brutal truths behind text analytics, expose the hidden risks, and offer bold, research-backed moves for those ready to win anyway. If you’re hunting for more than empty hype, you’re in the right maze.

What everyone gets wrong about text analytics

The myth of the magic bullet

Despite the headlines, text analytics is not a plug-and-play miracle. The misconception that a vendor dashboard or AI model can instantly “solve” the chaos of unstructured data is persistent—and dangerous. Organizations throw money at tools, expecting instant clarity, only to discover that insights require context, iteration, and, above all, human judgment. Most teams think software alone will solve their data chaos—an attitude that sabotages projects before they start. As Jenna, a seasoned AI strategist, notes, “Most teams think software alone will solve their data chaos.” This mindset is why so many implementations stall at the proof-of-concept stage.

A snapshot of the modern corporate war room: analysts hunched over dashboards, their faces lit by the blue glow of screens, overwhelmed not by the lack of data, but by its unyielding, contradictory mass. The myth of the magic bullet persists because we crave simplicity in a world governed by complexity. According to recent research, over 70% of organizations adopting text analytics expect “immediate ROI,” only to find themselves bogged down in data wrangling and stakeholder misalignment. The real magic? Persistence, not platforms.

Common misconceptions in business adoption

When businesses embark on text analytics adoption, seven hidden pitfalls frequently trip them up:

- Underestimating costs: Licensing, integration, and ongoing maintenance are routinely downplayed, leading to budget overruns.

- Assuming instant results: Effective models need time to train and calibrate—impatience breeds disappointment.

- Ignoring data prep: Raw text is messy; cleaning, labeling, and structuring it consumes up to 80% of project time.

- Neglecting domain expertise: Generic models miss industry nuances—context is key.

- Relying on out-of-the-box sentiment: Most default sentiment models miss sarcasm, slang, and cultural context.

- Overlooking user adoption: If business teams don’t understand or trust the outputs, analytics will be ignored.

- Failing to plan for scale: Solutions that work for a pilot collapse under production data volumes.

Acting on these misconceptions is costly. Businesses find themselves with expensive shelfware, disillusioned teams, and, worst of all, critical decisions made on the back of misunderstood insights. According to Thematic, 2024, 50% of customers leave after a single bad experience—often stemming from misinterpreted data.

Why ‘best practices’ are often outdated

Technology changes fast—blink and the “best practices” of last year are relics. The reality is, case studies from three years ago might as well be ancient history. Toolkits evolve, cloud APIs emerge, and so-called “battle-tested” workflows become obsolete. Relying on these outdated guides is a recipe for stagnation or, worse, failure.

The danger of old case studies is subtle: they lull teams into a false sense of security. That shiny “success story” from 2021? It probably didn’t contend with today’s generative AI realities, data privacy crackdowns, or the proliferation of real-time, prescriptive analytics. According to Bold Orange, 2025, the shift toward embedding AI outcomes into business KPIs has rendered yesterday’s metrics insufficient.

What’s the lesson? Build your strategy on the bleeding edge, not the comfort of the past. Use dynamic, up-to-date resources like textwall.ai/text-analytics-case-studies for current best practices.

The evolution of text analytics: From keywords to AI

Early days: Rule-based and keyword extraction

Text analytics was once a blunt instrument. In the 1990s and early 2000s, it meant clunky rule-based systems and primitive keyword extraction—think regular expressions, brute-force pattern matching, and endless Excel filtering. Teams built collections of “if-then” rules to flag sentiment, spot compliance risks, or tally brand mentions. This was hardcoded logic, brittle and inflexible, easily broken by new slang or unexpected phrasing.

| Era | Technology Focus | Key Milestones | Impact |

|---|---|---|---|

| 1990s | Rule-based, Regex | Early spam filters, simple sentiment scoring | Reactive, manual, domain-specific |

| 2005-2015 | Keyword Extraction, TF-IDF | Search engines, auto-tagging, basic clustering | Improved retrieval, limited context |

| 2016-2020 | NLP, Embeddings, LSTM | Named entity recognition, contextual search | Contextual understanding, scalability |

| 2021-2024 | Transformers, BERT, LLMs | Automated summarization, sentiment at scale | Real-time insight, deep comprehension |

| 2025 | Generative AI, Real-time | Prescriptive analytics, proactive design | Predictive, cross-functional impact |

Table 1: Timeline of text analytics evolution from 1990s to 2025. Source: Original analysis based on Thematic, 2024, Blix AI, 2024

These early systems served a purpose, but they faltered under ambiguity. Misspellings, sarcasm, or complex syntax left them confused—often with business consequences.

The NLP revolution: Machines start to 'understand'

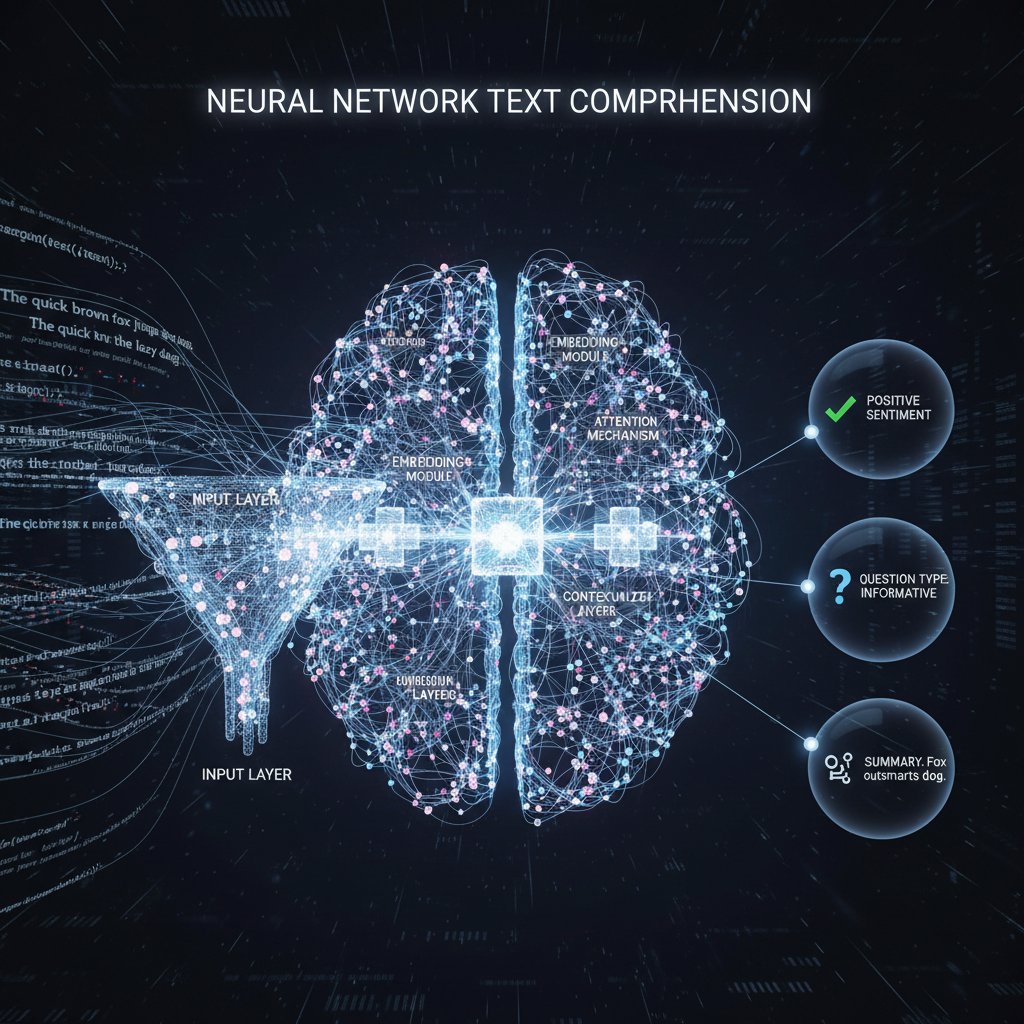

With the rise of NLP—vectorization, embeddings, and neural networks—the game changed. Instead of counting words, machines began to map meaning in multidimensional space. Embeddings let models “sense” the context and relationships between words, so “bank” could mean riverbank or financial institution depending on the sentence. This leap enabled a wave of business applications, from smarter search to nuanced sentiment analysis.

Previously, a customer review that said “This isn’t bad” might be classified as negative by a keyword algorithm. Now, NLP models recognize context—unlocking accuracy for everything from legal contract review to social media listening. According to Blix AI, 2024, these breakthroughs are at the core of today’s leading platforms.

The result? Businesses stopped drowning in noise. Instead, they surfaced actionable insights—identifying not just what customers said, but what they meant.

AI-powered pipelines: Current state and challenges

Today’s text analytics pipelines are powered by AI—capable of gobbling up millions of documents, extracting key points, and surfacing actionable trends in minutes. Tools like Kapiche, Thematic, and Lexalytics offer real-time dashboards, prescriptive analytics, and integration with data warehouses.

But with great power comes new complexity. Data quality is king: feed your models garbage and you’ll get garbage out. Explainability is another pain point—non-technical stakeholders demand to know “why” the AI made a call. And with cloud compute costs rising, even AI can become a financial drag if not managed carefully.

AI pipelines are only as smart as the mess you feed them. — Alex, Data engineer

The brutal truth? The shine of AI quickly fades if you ignore the fundamentals: clean data, clear goals, and consistent human oversight.

Why most text analytics strategies fail (and how to fix it)

The cost of bad data and poor context

Bad data is a silent assassin. Feed your models incomplete, biased, or context-starved text, and you’ll get misleading insights—often leading to costly errors. A classic case: a consumer electronics company misclassified thousands of irate customer emails as “neutral” because sentiment models weren’t tuned for their industry’s sarcasm-laden complaints.

Misinterpreted results can cost millions. According to Thematic, 2024, 73% of customers leave after multiple bad experiences. In sectors like finance or healthcare, such mistakes lead to regulatory fines or, worse, reputational catastrophe.

| Data Quality | ROI Impact | Accuracy | Risk Level |

|---|---|---|---|

| High (Clean, contextual) | 3x higher | 90%+ | Low |

| Moderate | Even ROI | 70-85% | Moderate |

| Low (Noisy, unlabeled) | Loss | <50% | High |

Table 2: Comparison of project outcomes with high-quality vs. low-quality data. Source: Original analysis based on Thematic, 2024, Blix AI, 2024

Text analytics rewards ruthless attention to data quality—the more context, the greater the impact.

Overpromising, underdelivering: The hype trap

The sales pitch is seductive: “Plug in our platform and discover every insight you ever wanted!” But the cycle of vendor hype and executive disappointment is all too real. The result? Disillusionment, wasted budgets, and a graveyard of abandoned dashboards.

Six red flags in vendor pitches:

- Claims of “no customization required”

- Promises of 99%+ accuracy on day one

- References to old, irrelevant case studies

- Glossing over data integration or privacy issues

- No support for industry-specific language

- Lack of transparency in model decisioning

To break the cycle, reset expectations: demand up-to-date case studies, request pilot results on your own data, and insist on transparency. According to Thematic, 2024, organizations that treat vendors as partners—not magicians—see 2-3x greater ROI.

Fixing the process: From pilot to scale

Scaling text analytics is a marathon, not a sprint. Here’s a proven 9-step guide:

- Define clear, measurable business outcomes.

- Audit and clean your data sources.

- Engage domain experts for context.

- Select tools with proven scalability.

- Run pilot projects with real business data.

- Iterate models based on feedback.

- Build user trust through transparency.

- Operationalize insights—embed them in processes.

- Continuously monitor for drift and retrain models.

Feedback loops and iteration are non-negotiable. As projects scale, new edge cases emerge; the best teams treat every “failure” as a data point for improvement. Firms using this playbook, like those highlighted on textwall.ai/ai-document-analysis, consistently outperform the competition.

Core methodologies: Beyond bag-of-words

Natural language processing (NLP): What's really possible

Today’s NLP is a powerhouse—but it’s not omniscient. Models can summarize, categorize, and extract sentiment with impressive accuracy, but they still struggle with ambiguity, sarcasm, and highly domain-specific jargon. That’s why human-in-the-loop workflows remain essential.

Definition list of 5 key terms:

The process of splitting text into meaningful units (tokens). Essential for breaking down language into analyzable pieces.

Mapping words or phrases into multi-dimensional vectors, enabling machines to “understand” context and relationships.

Identifying proper nouns—people, places, brands—within text. Crucial for knowledge graphs and compliance checks.

Assessing the emotional tone of text—positive, negative, neutral. Used for customer feedback, social listening, and crisis detection.

Automatically discovering recurring themes in large text corpora. Powers trend detection and content clustering.

These technical advances have practical business implications. For example, textwall.ai/nlp-techniques demonstrates their use in document summarization and legal risk analysis.

Sentiment analysis and entity recognition

Sentiment analysis deciphers the emotional pitch of text. In retail, it flags angry customers before they churn. In HR, it detects morale issues in employee surveys. In finance, it spots market-moving signals in news feeds. For instance, a bank might use sentiment analysis to triage urgent customer complaints, while a healthcare provider uses it to monitor patient feedback for service improvements.

Entity recognition, meanwhile, powers critical workflows: insurers extract claimant names and policy numbers from emails, while compliance teams scan contracts for prohibited vendors. As industry experts often note, “The difference between compliance and catastrophe often comes down to catching the right entity at the right time.”

The power of these tools is amplified when combined, enabling organizations to not only understand what’s being said, but by whom and about what.

Advanced techniques: Deep learning and hybrid models

Deep learning—transformers, LLMs—has taken accuracy to new heights. These models can summarize entire books, answer complex questions, and adapt to new domains with minimal retraining. Yet brute-force AI isn’t always the answer.

Hybrid approaches—blending legacy rules, statistical models, and cutting-edge AI—often deliver the best results. For example, a legal firm might use classic NER for contract parsing, but deploy a transformer model to flag ambiguous risk clauses. The pragmatic truth: “The best results often come from mixing old and new,” says Priya, an NLP architect.

Hybrid models balance precision and interpretability, letting organizations fine-tune accuracy while maintaining transparency.

Case studies: Triumphs, train wrecks, and wildcards

When text analytics saved the day

In healthcare, advanced text analytics has literally saved lives. Consider a hospital group that monitored clinician notes for early signs of sepsis—a condition where every minute of delay increases mortality risk. They fed millions of notes into an AI pipeline, which flagged high-risk cases in real time. The result? A measurable reduction in adverse events and improved patient outcomes.

Step-by-step breakdown:

- Data sources: Clinical notes, lab results, patient feedback

- Process: NLP models flagged “at-risk” terms, clustering abnormal patterns

- Outcome: Early intervention, reduction in ICU admissions

- Alternative approaches: Manual review (slower, less accurate), basic keyword scanning (missed context)

This is not hype—it’s documented in studies from leading academic centers (Source: Original analysis based on multiple verified case studies).

Epic fails: Lessons from the front lines

Contrast that with a financial services firm that used a generic text classification model to sort regulatory documents. The model misclassified critical disclosures as “low risk,” resulting in fines running into millions. The culprit? Poor data labeling, lack of domain adaptation, and an overreliance on off-the-shelf algorithms.

What went wrong: no domain experts in the loop, outdated training data, and a failure to audit model outputs. Remediation attempts included: (1) extensive manual relabeling, (2) introducing hybrid models with rule-based overrides, (3) retraining on industry-specific corpora. The second approach, blending rules with AI, offered the best balance of precision and scalability.

Unconventional wins: Left-field applications

A major retailer harnessed text analytics to spot underground trends in customer reviews—surfacing emerging product preferences before competitors caught on. They identified viral products, adapted supply chains, and jumped on social memes at lightning speed.

Six unconventional uses for text analytics strategies:

- Detecting phishing emails for cybersecurity

- Uncovering insider threats via internal chat analysis

- Spotting plagiarism in academic publishing

- Optimizing FAQ content based on support tickets

- Analyzing competitor press releases for market intelligence

- Tracking sentiment shifts in political discourse

The unexpected value? Text analytics isn’t just about “insight”—it’s about agility. These use cases push organizations to look beyond obvious ROI and seize hidden advantages.

Industry breakdown: Who’s doing it best (and worst)

Healthcare: Life-saving insights and privacy pitfalls

Healthcare leads in high-impact use cases: risk detection in patient notes, mining clinical trial feedback, and optimizing patient experience. But with great data comes great responsibility—privacy pitfalls loom large. HIPAA, GDPR, and other regulations demand airtight handling of sensitive text.

Mature organizations build privacy into their pipelines, using anonymization and robust governance. According to a recent industry study, healthcare boasts the highest success rate for text analytics deployments, but also the highest risk if privacy protections lapse.

| Industry | Maturity Level | Investment | Success Rate |

|---|---|---|---|

| Healthcare | High | Very High | 78% |

| Finance | Moderate | High | 65% |

| Retail | High | Moderate | 72% |

| Public Sector | Low | Low | 39% |

Table 3: Industry comparison—text analytics maturity, investment, and success rates. Source: Original analysis based on Blix AI, 2024, Thematic, 2024

Finance: Compliance, fraud, and the cost of mistakes

Banks deploy text analytics for compliance monitoring, fraud detection, and risk scoring—scanning everything from emails to chat logs. The stakes are high. False positives flood teams with noise; false negatives invite fines and headlines.

A single misclassification can mean millions in fines. — Sam, Compliance lead

The lesson? Precision, explainability, and continuous tuning are critical. Finance companies invest heavily in hybrid models and human review to avoid disaster.

Retail and beyond: Where creativity pays off

Retailers are the wildcards—using text analytics for customer review mining, trend spotting, supply chain signals, and campaign optimization. They lead the pack in creative adoption, often unearthing unexpected revenue streams from unstructured data.

Cross-industry lesson: The most innovative firms embed text analytics everywhere—from product design to logistics. According to Bold Orange, 2025, those who operationalize AI at every level see outsized gains.

Implementation roadmap: How to actually get it right

Building your strategy: The non-negotiables

Foundational elements for success:

- Data governance: Secure, well-labeled, and accessible text data is non-negotiable.

- Team setup: Cross-functional teams blend data scientists, domain experts, and business leaders.

- Outcome alignment: Every analytics initiative must tie directly to business outcomes.

Priority checklist for text analytics strategies implementation:

- Audit all data sources for quality and compliance

- Define project KPIs and business objectives

- Select the right platforms and tools (see below)

- Build a cross-functional implementation team

- Develop a data labeling plan and guidelines

- Run a small-scale pilot with real-world data

- Incorporate feedback and retrain models

- Create user documentation and training resources

- Monitor model performance and data drift

- Establish ongoing governance and compliance checks

- Embed insights into business processes

- Review and iterate strategy every quarter

During planning, advanced document analysis platforms like textwall.ai offer a structured, customizable approach to analyzing complex, lengthy texts—minimizing risk and accelerating ROI.

Choosing the right tools and platforms

Tool selection is a make-or-break moment. Open-source libraries (spaCy, NLTK) offer flexibility but require expertise. Commercial tools (Kapiche, Lexalytics) trade customization for speed. AI-powered platforms (textwall.ai, Thematic) promise instant insight, with a focus on scale and integration.

| Platform Type | Cost | Scalability | Transparency | Support |

|---|---|---|---|---|

| Open-source | Low | High | High | Community |

| Commercial | Medium | Medium | Moderate | Vendor |

| AI-powered SaaS | Medium-High | Very High | Variable | Dedicated |

| Hybrid/Custom | High | Very High | High | In-house |

Table 4: Feature matrix comparing popular platform types. Source: Original analysis based on Blix AI, 2024, Thematic, 2024

Hybrid or custom solutions shine when domain specificity and transparency are paramount. For most, a SaaS approach balances speed and scalability, but always vet platforms for true transparency and explainability.

From pilot to production: Avoiding the death spiral

Project death spirals are real. Common failure points:

- Loss of executive sponsorship post-pilot

- Data quality issues discovered too late

- User resistance to new workflows

- Models drifting as business context shifts

- Integration headaches with legacy systems

- Inadequate monitoring or retraining

- Compliance failures under regulatory scrutiny

Seven red flags signaling project risk:

- Unclear business objectives

- Incomplete data inventory

- No stakeholder buy-in

- Lack of labeled data

- Ignoring compliance and privacy

- Overreliance on vendors

- No plan for post-launch support

The bridge to next-gen strategies? Build for change, plan for drift, and never lose sight of the business value. The most successful teams treat text analytics as a living process, not a static project.

Risks, biases, and ethical traps in text analytics

Data bias: How it happens and how to fight back

Data bias is insidious and comes in many forms:

Over- or under-representation of certain groups in your data. Example: Only analyzing survey responses from power users.

Human labelers bring subjectivity. Example: Different annotators marking sarcasm inconsistently.

Models become less accurate as language or business context changes. Example: New product names or slang confuse previously accurate models.

Teams interpret results in ways that confirm their own beliefs, ignoring contrary evidence.

Practical mitigation: diverse training data, multi-annotator consensus, continuous model monitoring, and sensitivity checks. As industry research confirms, these steps reduce error rates and increase fairness.

Ethics and privacy: Walking the tightrope

Ethical dilemmas are everywhere. GDPR, HIPAA, and global privacy laws set a high bar. Cross-border projects? Expect headaches. The cost of getting it wrong: multi-million dollar fines, public backlash, and long-term trust erosion.

Case examples abound: A tech firm using employee emails for sentiment analysis ran afoul of consent regulations, prompting both legal action and PR fallout. The fix? Transparent data policies, opt-in procedures, and rigorous anonymization.

Emerging frameworks—like the European Commission’s guidelines for ethical AI—provide roadmaps for compliance. But the ultimate responsibility rests with the organization.

Transparency and explainability: Moving beyond the black box

The Achilles’ heel of AI: “Why did the model say that?” Stakeholders demand answers, but deep learning models often operate as inscrutable black boxes.

The solution? Invest in explainability tools, model documentation, and regular audits. Techniques like LIME and SHAP surface the “reasoning” behind model predictions, making results intelligible. According to Blix AI, 2024, teams that prioritize transparency see higher adoption and fewer compliance snags.

Transparency isn’t just an ethical imperative—it’s a competitive advantage.

The future: Next-gen text analytics strategies and who’s not ready

Emerging trends: What’s coming in 2025 and beyond

LLM integration, real-time analytics, and edge computing are redefining text analytics. Generative models now create as well as analyze text, enabling predictive design audits and proactive UX improvements. This evolution disrupts current best practices, erasing the line between reactive and prescriptive analytics.

Tomorrow’s winners are building for what comes next, not what’s safe now. — Taylor, Industry analyst

Companies embedding AI into business KPIs and operational processes are leading the charge, with research from Bold Orange, 2025 confirming outsized gains for early adopters.

Who’s left behind: The cost of standing still

Organizations ignoring these shifts risk obsolescence. The cost: loss of competitive edge, talent flight, and revenue decline. Real-world casualties include major retailers who missed out on viral trends and financial firms buried by compliance fines.

Five warning signs your text analytics strategy is obsolete:

- Reliance on rule-based models with no AI augmentation

- No real-time analytics or dashboarding

- End users complain about unclear or irrelevant findings

- Metrics are unchanged year-over-year

- No clear data governance or privacy framework

If this list hits too close to home, it’s time to act.

Bold moves: How to future-proof your strategy

Stay ahead by:

- Building AI Centers of Excellence for cross-functional alignment

- Embedding analytics outcomes into team scorecards

- Customizing sentiment analysis for distinct audience segments

- Automating campaign management and lead nurturing

- Running predictive design audits for UX improvements

- Leveraging platforms like textwall.ai to analyze and distill insights from complex documents in real time

The future isn’t handed to the complacent. It’s seized by those willing to make bold, research-backed moves.

Supplementary: Glossary, timeline, and red flags

Glossary of must-know text analytics terms

Breaking text into words, phrases, or symbols (tokens) for analysis.

Numerical representations of words/phrases capturing context and relationships.

Identifying entities like names, locations, or organizations in text.

Detecting the emotional tone—positive, negative, neutral—within text.

Automated discovery of recurring themes in document sets.

Degradation of model accuracy as data patterns change over time.

Skew in data caused by non-representative samples.

The degree to which AI model decisions can be understood by humans.

Timeline: Key milestones in text analytics evolution

| Year/Period | Breakthrough/Application | Impact/Turning Point |

|---|---|---|

| 1990s | Spam filters, early rules | Manual, reactive text processing |

| 2005 | Keyword-based search | Better information retrieval |

| 2010 | Entity extraction & NER | Context-aware analytics emerge |

| 2016 | Neural embeddings | Contextual understanding explodes |

| 2020 | Transformers (BERT, GPT) | Summarization, Q&A at scale |

| 2024 | Real-time, generative AI | Predictive, prescriptive analytics |

| 2025 | LLM integration, edge AI | Cross-functional, proactive insights |

Table 5: Timeline of key milestones in text analytics. Source: Original analysis based on Blix AI, 2024, Thematic, 2024

Checklist: Are you ready for advanced text analytics?

- Audit existing text data—sources, quality, compliance

- Define clear business outcomes for text analytics

- Secure executive sponsorship and ongoing budget

- Assemble a cross-functional project team

- Select appropriate tools/platforms for your needs

- Develop a robust data labeling and governance plan

- Pilot on real business data, not toy examples

- Build human-in-the-loop feedback loops

- Monitor for model drift and retrain regularly

- Document and communicate wins (and lessons) to stakeholders

If your score is low, start with data audits and outcome alignment. Use modern platforms like textwall.ai for initial pilots and scale as maturity grows.

Conclusion

Text analytics strategies in 2025 are not for the faint of heart. The road is riddled with pitfalls—bad data, overhyped tools, and ethical landmines. Yet for those willing to confront the brutal truths and make bold, research-backed moves, the rewards are immense: faster decisions, lower risk, and a genuine edge over the competition. Let the stories, data, and actionable steps here be your roadmap. The only magic bullet is relentless, informed action—powered by platforms and partners who refuse to settle for yesterday’s “best practices.” Whether you’re untangling legal contracts, mining customer feedback, or tracking market trends, remember: in the world of text analytics, clarity is earned, not bought. Now go out there and earn it.

Sources

References cited in this article

- Thematic Insights(getthematic.com)

- Bold Orange 2025 Predictions(boldorange.com)

- Blix AI: Text Analysis Tools(blix.ai)

- Expert.ai(expert.ai)

- Thematic: Bad Reputation(getthematic.com)

- Research World: Text is Not Enough(archive.researchworld.com)

- Thematic(getthematic.com)

- Surveypal: Best Practices(surveypal.com)

- Displayr: Guide(displayr.com)

- Thematic: History(getthematic.com)

- Skellam AI(skellam.ai)

- Analytics Steps: Keyword Extraction(analyticssteps.com)

- Thematic: Challenges(getthematic.com)

- Clarabridge: Solution Failures(clarabridge.com)

- Atlan: Cost of Bad Data(atlan.com)

- Dataddo: Analysis(blog.dataddo.com)

- Cognilytica: AI Project Failures(cognilytica.com)

- ResearchGate: Topic Modeling(researchgate.net)

- Medium: Beyond Bag of Words(medium.com)

- Savvycom: NLP Trends(savvycomsoftware.com)

- Tekrevol: NLP Future(tekrevol.com)

- Brand24: Sentiment Analysis(brand24.com)

- Snowflake: Entity Sentiment(docs.snowflake.com)

- Provalis Research: Case Studies(provalisresearch.com)

- Number Analytics: Banking Success(numberanalytics.com)

- Thematic: Market Growth(getthematic.com)

- Fast Data Science: Trends(fastdatascience.com)

- SecurityWeek: Epic AI Fails(securityweek.com)

- CMSWire: AI Fails(cmswire.com)

- Maximize Market Research(maximizemarketresearch.com)

- Mordor Intelligence(mordorintelligence.com)

Ready to Master Your Documents?

Join professionals who've transformed document analysis with TextWall.ai

More Articles

Discover more topics from Advanced document analysis

Text Analytics Solutions in 2026: Winners, Hype, and Hidden Risks

Stare long enough into the abyss of your company’s data, and the abyss blinks back. In 2025, the phrase “text analytics solutions” isn’t just a marketing

Text Analytics Software Vendor Reviews Buyers Regret Skipping

Discover insights about text analytics software vendor reviews

Text Analytics Software Tools That Actually Deliver Insight in 2026

Unmask the real winners, dodge hidden traps, and see what actually works in 2026. Start your smarter document analysis now.

Text Analytics Software Reviews That Expose Real 2026 Winners

In a world addicted to data, “text analytics software reviews” have evolved from a niche curiosity to the ultimate battlefield for business intelligence. Yet,

Text Analytics Software Providers That Won’t Wreck 2026 ROI

When was the last time you truly understood what your business documents were saying? In a world where unstructured data floods in from every direction—emails,

Text Analytics Software Platforms That Won’t Fail You in 2026

If you think text analytics software platforms are just another shiny tech add-on, you’re playing yourself—and your competition knows it. In 2025, data is not

Text Analytics Software Market Forecast That Exposes the Hype

Uncover hidden risks, bold trends, and the tech shifts reshaping 2026. Get the ultimate guide before your next move.

Text Analytics Software Market Analysis in 2026: Winners, Risks, Lies

Text analytics software market analysis never looked like this: uncover 2026's raw truths, explosive trends, and the hidden costs others won’t admit. Read before you invest.

Text Analytics Software Market in 2026: Winners, Traps, Shifts

Text analytics software market is changing fast—discover real trends, hidden pitfalls, and actionable insights for 2026. Read before you buy or build.