Text Analytics Software Comparison When Your Data Is Lying

Everyone loves a shiny dashboard. But in document analysis, a text analytics software comparison can mean the difference between insight and disaster. As organizations drown in unstructured data, choosing the right tool isn’t a matter of convenience; it’s survival. The 2025 landscape is a minefield of overhyped claims, misunderstood AI, and platforms that promise everything and deliver far less than the sales deck. So what’s real, what’s smoke and mirrors, and what can actually make your data work for you? Buckle up. This is the exposé the vendors won’t show you: we cut through the noise, call out the brutal truths, and give you what you need to choose the best text analytics software before your competition does.

Why your data is lying to you: the hidden crisis in text analytics

The illusion of insight: how dashboards deceive

It’s a seductive illusion—glowing dashboards, animated charts, the dopamine rush of “real-time metrics.” But if you’ve ever stared at a wall of dials only to realize you have no actionable clue what to do next, you’re not alone. According to Insight7, 2025, most text analytics platforms offer surface-level metrics that look impressive but fail to reveal actionable patterns, especially when data is messy or context is missing.

The distinction between “seeing numbers” and “understanding meaning” is critical. Vendors often tout keyword clouds and sentiment scores as revolutionary, but without context, these are just noise. Real insight comes from deep, contextual analysis: identifying not just what’s being said, but why, by whom, and with what impact.

"Most teams drown in charts but starve for answers." — Maya, Data Strategist

The psychological comfort of colorful visuals gives a false sense of control. But the harsh reality? If your analytics stop at the surface, you’re making decisions in the dark, comforted by dashboards that hide more than they reveal.

Case study: when analytics went wrong (and what it cost)

Let’s talk fallout. In 2024, a mid-sized e-commerce company—let’s call them “RetailNext”—bet big on a popular text analytics suite. Their dashboards glowed with customer sentiment charts and trending keywords. But when a wave of complaints about late deliveries hit, the analytics missed the signal. Why? The tool focused on counting keywords like “delivery” and “shipping,” missing the nuanced sarcasm and negative context in customer emails. Leadership, seeing “mostly positive” metrics, didn’t intervene—until churn spiked and social media erupted.

| Date | Event | Decision Point | Missed Warning Sign |
|---|---|---|---|
| Feb 2024 | Rollout of new analytics tool | Trusted default sentiment | Negative sarcasm ignored |
| Mar 2024 | Increase in “delivery” mentions | Viewed as positive engagement | No context on complaints |
| Apr 2024 | Churn spike, social backlash | Delayed leadership action | Lack of actionable alerts |
| May 2024 | Business loss, tool re-evaluation | Switched vendors | Realized depth of problem |

Table 1: Timeline of RetailNext’s analytics failure.

Source: Original analysis based on Insight7 and G2 user reviews.

The root cause? Shallow analytics and blind trust in vendor defaults. As the data later revealed, the missed context cost the company nearly $2 million in lost business and reputation damage.

No tool can save you if you’re asking the wrong questions. — Alex, Former Head of Customer Experience

This isn’t just a cautionary tale—it’s a recurring industry pattern. When platforms focus on demo-friendly dashboards over interpretability and depth, critical signals remain hidden. The cost? Real—and brutal.
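To make the failure mode concrete, here is a minimal sketch of the kind of keyword counting described in the case study. The word lists and the sample email are invented for illustration; they are not taken from any vendor’s product or from RetailNext’s data.

```python
import re

# Toy lexicons, invented for this example only.
POSITIVE = {"great", "love", "thanks", "fast", "amazing"}
NEGATIVE = {"late", "broken", "refund", "terrible"}

def keyword_sentiment(text: str) -> str:
    """Label text by counting lexicon hits, ignoring all context."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

# A sarcastic complaint about a three-week delivery (hypothetical).
email = ("Great, another amazing delivery. Thanks for the fast shipping, "
         "it only took three weeks.")
print(keyword_sentiment(email))
```

The counter sees four “positive” words and no “negative” ones, so it labels an obvious complaint as positive. Nothing in the scoring looks at the phrase “three weeks,” which is the whole point of the message.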

Debunking the myth: 'AI-powered' vs. real intelligence

Here’s a dirty secret: “AI-powered” is the new “organic.” It sounds impressive, but the reality is often, at best, a sprinkle of machine learning on top of old-school rules. According to TrustRadius, 2025, many vendors slap the AI label on basic keyword matching or off-the-shelf NLP. This makes for great marketing, but mediocre results.

Key terms decoded:

- AI (Artificial Intelligence): Algorithms designed to mimic human decision-making—often just pattern recognition, not true “thinking.”

- NLP (Natural Language Processing): The subset of AI focused on understanding language; quality varies wildly.

- Machine Learning: Systems that “learn” from data, ideally improving over time. In practice, many platforms use pre-trained models that rarely update with your specific context.

The limitations are stark: most tools struggle with sarcasm, domain-specific jargon, and multilingual nuance. According to Kapiche, 2025, even leading platforms routinely misclassify tone or meaning outside of their training data.

So what does true intelligence look like? Advanced solutions—such as textwall.ai/text-analytics—go beyond keywords, using context, syntax, and behavioral signals. But even the best AI can’t replace expertise or the art of asking the right questions. Transparency and explainability remain rare commodities—which should make every buyer wary.

The anatomy of text analytics software: what actually matters

Core features that separate winners from hype

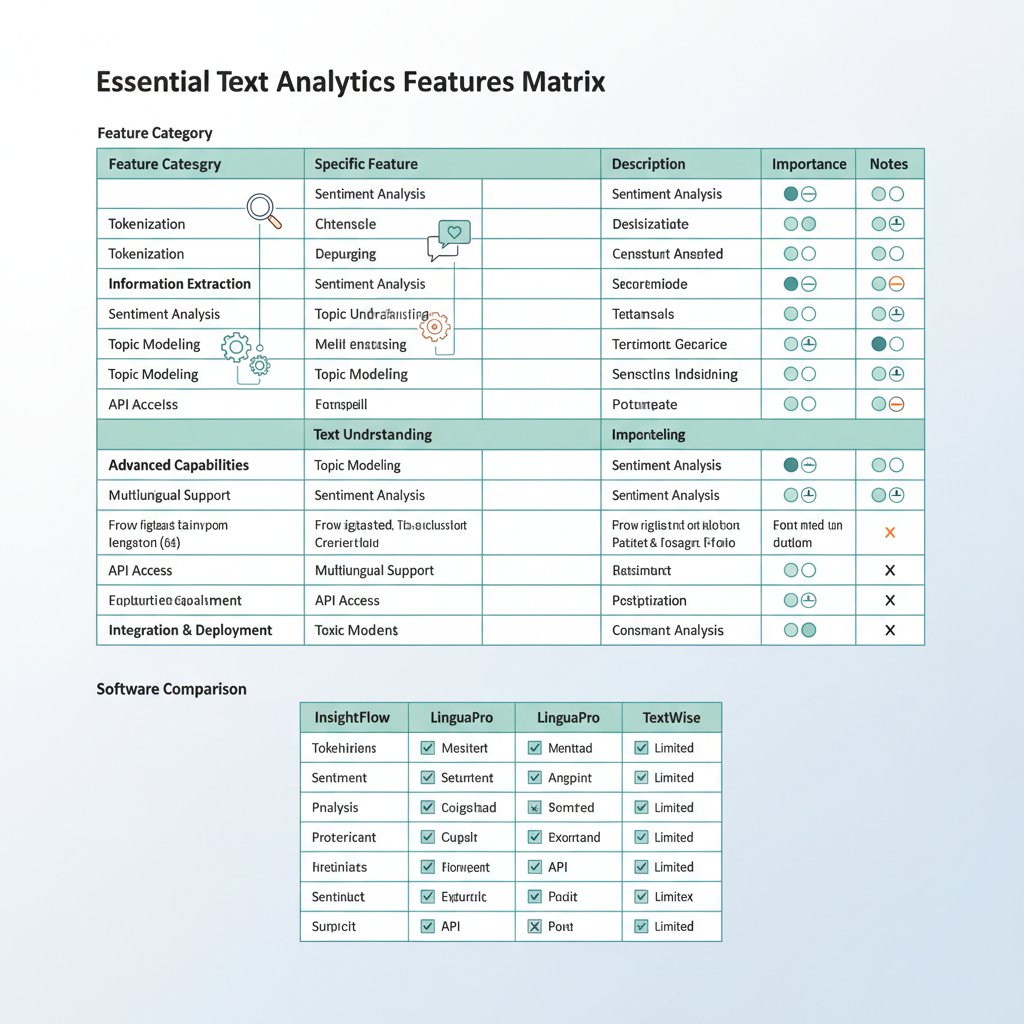

If your evaluation process involves ticking boxes on a vendor checklist, you’re already losing. The tools that come out ahead in any text analytics software comparison deliver real, actionable value, not just a laundry list of features. Critical must-haves in 2025 include:

- Deep context extraction: Don’t settle for basic sentiment—demand entity recognition, theme extraction, and context-aware analysis.

- Transparent algorithms: Insist on explainability. Can you trace how insights are derived?

- Customizable workflows: Real value comes from tailoring analysis to your actual data and needs, not preset templates.

- Real-time processing: Actionable insight means nothing if it arrives too late.

- Seamless integration: If a tool can’t plug into your daily workflow, adoption will stall.

- Data quality management: Garbage in, garbage out—top tools address bias, errors, and inconsistencies upfront.

The difference is more than academic. According to Zonka Feedback, 2025, organizations with customizable, transparent workflows saw 45% faster insight-to-action cycles than those using closed, black-box solutions.

Missing these essentials means more than minor frustration—it translates directly into lost revenue, operational inefficiency, and missed opportunities.

Beyond checkboxes: the technical deep dive

Let’s get under the hood. The best text analytics tools aren’t just plug-and-play—they’re engineered for serious, adaptable work. A mature NLP pipeline involves several key stages: text ingestion, cleaning, context extraction, and iterative machine learning. Top platforms offer modular architectures that allow organizations to mix cloud, hybrid, or on-prem deployment as dictated by compliance, performance, or privacy needs.

| Architecture | Pros | Cons | Practical Implications |
|---|---|---|---|
| Cloud | Scalable, easy updates, low upfront | Data privacy, dependency on vendor | Fast start, beware compliance traps |
| Hybrid | Flexibility, some local control | Complexity, variable support | Best for regulated industries |
| On-prem | Maximum security, custom integration | High cost, slow to scale | Ideal for sensitive data, legacy |

Table 2: Comparison of technical architectures in text analytics software.

Source: Original analysis based on G2 and Kapiche reviews.

Your tech stack determines more than IT headaches—it impacts security, flexibility, and even the range of insights you can get. For organizations juggling sensitive documents, understanding these trade-offs isn’t optional.

What vendors won’t tell you: the hidden costs and trade-offs

The sticker price is just the opening salvo. True cost drivers are buried a few layers deep:

- Data preparation: Cleaning, tagging, and reconciling fragmented sources often takes weeks or months.

- Training models: Customizing AI to your domain is labor-intensive, usually requiring ongoing expert involvement.

- Integration headaches: Connecting disparate systems (think CRM, legacy DBs, cloud storage) is where budgets and timelines go to die.

- Maintenance and updates: Algorithms drift, data changes—staying accurate requires regular tuning.

- Licensing traps: Seemingly affordable plans can balloon with seat expansion, storage, or premium features.

- User training: Adoption fails if teams aren’t fully onboarded and workflows updated.

Plug-and-play platforms sacrifice deep customization, while bespoke integrations come with hidden expenses and complexity. If a vendor promises “one-click insight” for all use cases, grab your wallet and run. Red flags include vague pricing, lack of transparency about analytics models, and hard sells on proprietary file formats.
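A quick back-of-the-envelope calculation shows how the cost drivers above can dwarf the sticker price. This is a sketch only: the function name, the cost categories chosen, and every figure are made up for illustration, so plug in your own quotes and estimates.

```python
def total_cost_of_ownership(license_per_seat_year, seats, years, one_time, annual):
    """Sum license fees, one-time costs, and recurring costs over the term."""
    license_total = license_per_seat_year * seats * years
    return license_total + sum(one_time.values()) + years * sum(annual.values())

# All figures below are invented for illustration.
one_time = {"data_preparation": 20_000, "integration": 30_000, "user_training": 8_000}
annual = {"maintenance": 10_000, "model_tuning": 15_000}

license_total = 1_200 * 10 * 3  # per-seat price x seats x years
tco = total_cost_of_ownership(1_200, seats=10, years=3,
                              one_time=one_time, annual=annual)
print(f"License fees: {license_total}, total: {tco}, "
      f"license share: {license_total / tco:.0%}")
```

With these hypothetical numbers, license fees come to roughly a fifth of the three-year total. The exact ratio will differ for every organization; the useful habit is putting the non-license lines in the same spreadsheet as the quote.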

Comparing the contenders: real-world text analytics tools in 2025

Market leaders and disruptors: who’s really innovating?

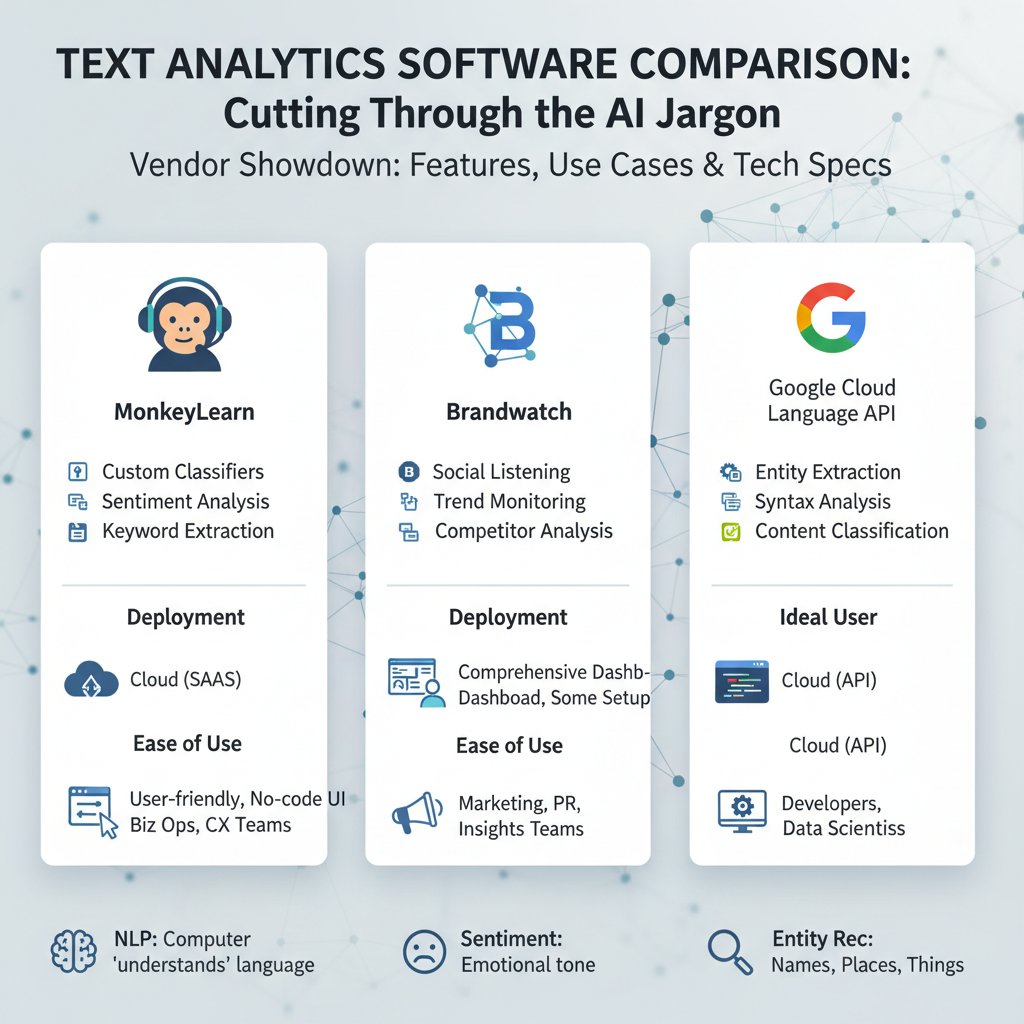

The 2025 market is a battleground—legacy giants jostling with upstart disruptors. According to G2’s Best Text Analysis Software, May 2025, platforms like MonkeyLearn, Lexalytics, and Kapiche remain strong, but new players (including several open-source dark horses) are making waves with modular, API-driven solutions.

Innovation isn’t measured by buzzwords but by actual, frequent product updates and user-driven improvements. Many so-called “AI-first” platforms still lag behind on basic context extraction or integration, while niche vendors double down on explainability and use-case focus.

| Platform Type | Market Share | Adoption Rate | User Satisfaction |
|---|---|---|---|
| Enterprise Suite | 38% | 72% | 7.1/10 |
| Niche Vendor | 22% | 55% | 8.4/10 |
| Open Source | 15% | 49% | 7.8/10 |
| API-first Platform | 17% | 38% | 8.1/10 |
| Legacy/Other | 8% | 20% | 6.5/10 |

Table 3: Statistical summary of market share and user satisfaction in text analytics (2025).

Source: Original analysis based on G2, May 2025.

Open source and commercial tools are converging in features but diverging in support and ecosystem. For some, the flexibility of open platforms is a win; for others, the managed services and support of commercial vendors outweigh the risks.

Niche vs. all-in-one: does specialization win?

The one-size-fits-all promise is dead. Niche platforms excel in targeted domains—legal tech, social listening, or scientific literature—delivering outsized value when the fit is right. For example, a healthcare provider using a clinical text analysis tool achieved 60% faster processing versus a generalist platform, according to TrustRadius, 2025. By contrast, companies lured by all-in-one suites often find themselves wrestling with irrelevant features, slower updates, and bloated costs.

Niche tools succeed when:

- The use case is highly specialized and data formats are predictable.

- Domain expertise is embedded—think medical, legal, or technical language.

- Integration is limited to a handful of systems.

All-in-one tools disappoint when:

- Custom use cases outstrip the tool’s flexibility.

- The promised “plug-and-play” becomes months of configuration.

- Costs escalate with each new feature or user.

Checklist for choosing niche vs. all-in-one:

- Does your industry have unique language or regulatory barriers?

- How diverse are your data sources and formats?

- Do you need rapid deployment or deep customization?

- Is ongoing support more important than flexibility?

Case study: how a newsroom survived a data crisis

In late 2024, a major digital newsroom faced a “fake news” storm. Their legacy analytics stack flagged trending topics but missed coordinated campaigns spreading misinformation. Editorial chaos ensued—until they switched to a hybrid analysis platform integrating advanced NLP and real-time alerting. With precise entity recognition and sentiment tracking, the team quickly identified the sources of disinformation and reclaimed narrative control.

The newsroom’s toolset included a mix of open-source NLP and a commercial platform for workflow integration. The key? Choosing software that could adapt to unpredictable, high-velocity data.

Had they stuck with the old stack, editorial trust and audience numbers would have cratered. The lesson? Flexibility and depth trump surface metrics.

"We only found the truth after ditching our old analytics stack." — Jamie, Newsroom Editor

From buzzwords to breakthroughs: making sense of AI, NLP, and machine learning

Breaking down the jargon: what each technology really does

Let’s slice through the marketing fog.

- Natural language processing (NLP): The science of teaching machines to interpret human language. Real NLP can handle grammar, context, and meaning, not just keywords.

- Sentiment analysis: Algorithms that detect emotional tone (positive, negative, neutral). Quality varies; sarcasm and ambiguity remain tough.

- Entity recognition: Automatically spotting names, places, brands, or concepts in unstructured text. Essential for disambiguating meaning.

- Machine learning: Algorithms that improve with more data. In practice, only as good as the data and feedback loops feeding it.

In the real world, these components work together to deliver meaningful, actionable insights. For instance, successful deployments in retail analyze social media streams for emerging crises, while failures often result from tools misreading slang or missing evolving topics.

How modern text analytics platforms actually work

The journey from document ingestion to insight is intense:

- Data ingestion: Raw text flows from email, surveys, or reports.

- Cleaning and normalization: Removing noise, fixing encoding, and standardizing language.

- Context and entity extraction: Advanced NLP identifies who’s speaking, about what, and in what context.

- Machine learning analysis: Models map patterns, classify sentiment, spot anomalies, and surface insights.

- Actionable output: Results flow into dashboards, alerts, or downstream systems.

Basic setups stop at surface keyword spotting. Advanced workflows, as with textwall.ai/advanced-document-analysis, incorporate iterative feedback and domain adaptation, allowing for nuanced, context-rich analysis.
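The stages listed above can be sketched as a small pipeline. This is a structural illustration under stated assumptions, not how any particular platform works: `KNOWN_ENTITIES`, `COMPLAINT_TERMS`, and all function names are hypothetical, and the entity and classification steps are deliberately the shallow, keyword-style stand-ins this article warns about. In a real system, trained models would sit in those two slots.

```python
import re
import unicodedata
from dataclasses import dataclass, field
from typing import List

# Hypothetical lookup tables; a real deployment would use trained models.
KNOWN_ENTITIES = {"retailnext", "acme"}
COMPLAINT_TERMS = {"late", "refund", "broken"}

@dataclass
class Record:
    raw: str
    clean: str = ""
    entities: List[str] = field(default_factory=list)
    label: str = ""

def clean_text(text: str) -> str:
    """Cleaning: normalize Unicode forms and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)
    return re.sub(r"\s+", " ", text).strip()

def extract_entities(text: str) -> List[str]:
    """Entity extraction stand-in: match tokens against a known list."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return sorted(set(tokens) & KNOWN_ENTITIES)

def classify(text: str) -> str:
    """Analysis stand-in: a keyword rule where a trained model would go."""
    tokens = set(re.findall(r"[a-z]+", text.lower()))
    return "complaint" if tokens & COMPLAINT_TERMS else "other"

def run_pipeline(docs: List[str]) -> List[Record]:
    """Ingest raw strings and pass each through every stage in order."""
    records = []
    for raw in docs:
        rec = Record(raw=raw)
        rec.clean = clean_text(rec.raw)
        rec.entities = extract_entities(rec.clean)
        rec.label = classify(rec.clean)
        records.append(rec)  # output stage: hand records to dashboards or alerts
    return records

for rec in run_pipeline(["  Order from  RetailNext arrived late\u00a0again "]):
    print(rec.clean, rec.entities, rec.label)
```

Keeping each stage as its own function is the design point: you can swap the stand-ins for better components one at a time and re-run the same documents to see what changed.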

Common misconceptions that could sabotage your project

Let’s explode the top myths:

- “AI solves everything.” Reality: Good data and clear objectives matter more than the algorithm.

- “Pre-built models are always accurate.” Unless trained on your data, they’re prone to glaring errors.

- “Integration is easy.” It never is—expect friction with legacy systems.

- “More data means better insights.” Quality always trumps quantity; garbage in, garbage out.

The biggest trap is skipping context and domain knowledge. No algorithm can replace a sharp analyst tuned to the unique quirks of your sector. Avoiding these pitfalls is the difference between transformative results and expensive shelfware.

The human factor: why people—not just algorithms—make or break your analytics

Training your team for success (and sanity)

Even the most advanced tool is useless if your team can’t wield it. Training isn’t a box-ticking exercise; it’s one of the strongest predictors of success in any text analytics project.

- Map workflows: Start by understanding how your team works today—then overlay analytics.

- Upskill staff: Invest in training on both the tool and the underlying concepts (NLP, data bias).

- Pilot and iterate: Test on real data, collect feedback, refine.

- Establish feedback loops: Make it easy to flag false positives or missed insights.

- Monitor adoption: Track usage and outcomes, not just logins.

Teams that invest in training see faster ROI, fewer mistakes, and higher adoption. Skimping here is a false economy.
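A feedback loop only helps if the flags get counted. One simple way to do that, sketched here with hypothetical names and made-up review data, is to turn analyst reviews into precision (how many of the tool’s alerts were real) and recall (how many real issues the tool caught).

```python
def precision_recall(reviews):
    """reviews: iterable of (tool_flagged, analyst_confirmed) booleans."""
    reviews = list(reviews)
    tp = sum(1 for tool, analyst in reviews if tool and analyst)      # true alerts
    fp = sum(1 for tool, analyst in reviews if tool and not analyst)  # false positives
    fn = sum(1 for tool, analyst in reviews if not tool and analyst)  # missed insights
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Five reviewed documents (invented for illustration).
sample = [(True, True), (True, False), (False, True), (True, True), (False, False)]
precision, recall = precision_recall(sample)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Tracking both numbers over time gives the “outcomes, not just logins” view: falling precision means analysts are wading through noise, falling recall means the tool is missing what people catch by hand.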

When expertise trumps automation: stories from the trenches

Consider the legal team that overruled a text analytics tool’s “neutral” sentiment assessment on a multi-million dollar contract—catching a subtle veiled threat the algorithm missed. Or the HR analyst who spotted a brewing culture crisis in employee feedback, despite the tool reporting “no issues.”

"Automation is only as smart as the person guiding it." — Priya, Analytics Lead

Even the best software amplifies, rather than replaces, human expertise. The most successful organizations combine domain knowledge with AI, using technology as a force multiplier—not a crutch.

Practical playbook: choosing and implementing the right solution

Step-by-step guide to buying text analytics software in 2025

- Define your goals: What decisions do you want to power with text analytics?

- Audit your data: Assess volume, formats, and quality issues.

- Map stakeholders: Involve IT, end-users, and data owners early.

- Set your requirements: Prioritize must-haves (integration, explainability) over gimmicks.

- Shortlist vendors: Use trusted reviews and peer recommendations.

- Run live trials: Test with real data—don’t trust demo datasets.

- Evaluate transparency: Insist on model explainability and audit trails.

- Scrutinize integration: Confirm API access and compatibility with existing systems.

- Pilot, don’t boil the ocean: Start small, expand on success.

- Negotiate contracts: Clarify support, SLAs, and pricing levers.

- Train and onboard: Invest in hands-on training and continuous support.

- Review and iterate: Regularly assess outcomes and tweak as needed.

Negotiating? Push for extended pilots, per-use pricing, and clear data ownership clauses. Watch for vague promises, lock-in tactics, and hidden costs. Common mistakes include skipping data audits, underestimating integration effort, and neglecting user training.
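One way to keep must-haves ahead of gimmicks when shortlisting is a weighted scoring sheet with a hard floor on the must-have criteria. The sketch below is an assumption about how you might set that up; the criteria, weights, floor, vendor names, and scores are all hypothetical.

```python
# Hypothetical criteria and weights (weights sum to 1.0); scores use a 1-5 scale.
WEIGHTS = {"integration": 0.3, "explainability": 0.3,
           "customization": 0.2, "price": 0.2}
MUST_HAVES = {"integration", "explainability"}
MIN_MUST_HAVE = 3  # a vendor scoring below this on any must-have is dropped

def score_vendor(scores):
    """Return the weighted score, or None if a must-have misses the floor."""
    if any(scores[criterion] < MIN_MUST_HAVE for criterion in MUST_HAVES):
        return None
    return sum(WEIGHTS[criterion] * scores[criterion] for criterion in WEIGHTS)

# Invented trial results for two made-up vendors.
vendors = {
    "Vendor A": {"integration": 4, "explainability": 5, "customization": 3, "price": 2},
    "Vendor B": {"integration": 5, "explainability": 2, "customization": 5, "price": 5},
}

for name, scores in vendors.items():
    result = score_vendor(scores)
    print(name, "disqualified" if result is None else round(result, 2))
```

The floor matters more than the weights. In this made-up example Vendor B wins on price and customization but fails explainability, so it never reaches the weighted comparison, no matter how good the demo looked.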

Integration headaches: what no one tells you

Integration is the graveyard of analytics projects. Top pain points:

- Legacy systems: Old databases and software often resist modern APIs.

- Data quality: Inconsistent or biased data can cripple insights.

- Access and permissions: Security bottlenecks can delay deployment.

- Fragmentation: Multiple silos mean duplicated effort and missed connections.

To overcome these, invest in robust data mapping, prioritize API compatibility, and set clear ownership for project delivery. For organizations wrestling with complex documents, textwall.ai/document-integration stands out for handling tricky integrations at scale.

Checklist: are you ready for real-world analytics?

- Data is accessible, clean, and mapped.

- Team includes both domain experts and analytics-savvy staff.

- Clear objectives and KPIs are set.

- Integration plan is mapped out.

- Budget includes training and ongoing support.

- C-suite buy-in is secured.

If you’re ticking most boxes, you’re ready for the real world. If not, pause—the cost of failure is real. Up next: the frontier of analytics, where the rules are being rewritten in real time.

The future of text analytics: where we go from here

Emerging trends and technologies shaping 2025 and beyond

The present is already wild: real-time analytics, multimodal AI (text, voice, images together), and privacy-first design are now minimum requirements. New use cases are exploding, from compliance automation in finance to crisis prediction in humanitarian work.

| Year | Breakthrough | Context/Significance |
|---|---|---|
| 2015 | NLP mainstreaming | Chatbots, customer service |
| 2018 | Sentiment analysis matures | Social listening, brand monitoring |
| 2020 | Multilingual text analytics | Global businesses, academia |
| 2023 | Explainable AI | Demands for transparency, regulated sectors |
| 2025 | Real-time, multimodal analytics | Integrating text, images, audio for context |

Table 4: Timeline of major breakthroughs in text analytics (2015–2025).

Source: Original analysis based on Zonka Feedback, 2025 and industry publications.

Ethics, privacy, and the new rules of engagement

Privacy regulations—GDPR, CCPA, and their global cousins—are forcing vendors to rethink data storage and processing. Automated analysis amplifies risks of bias and unintended consequences, especially when models are trained on skewed data.
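A basic bias check doesn’t need special tooling. As a minimal sketch, assuming you have a hand-labeled sample tagged with some grouping you care about (language, region, customer segment), you can compare error rates per group. The function name and the sample records are invented for illustration.

```python
from collections import defaultdict

def error_rate_by_group(records):
    """records: iterable of (group, predicted, actual). Returns {group: error rate}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        errors[group] += predicted != actual  # True counts as 1
    return {group: errors[group] / totals[group] for group in totals}

# Hypothetical sentiment predictions on English and Spanish feedback.
sample = [
    ("en", "pos", "pos"), ("en", "neg", "neg"), ("en", "pos", "neg"), ("en", "neg", "neg"),
    ("es", "pos", "neg"), ("es", "neg", "pos"),
]
print(error_rate_by_group(sample))
```

A large gap between groups is a prompt to investigate, not a verdict: tiny samples like this one exaggerate differences, so a real audit would use far more labeled data per group.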

Ethical guidelines for responsible text analytics:

- Always anonymize sensitive data before analysis.

- Routinely audit models for bias and fairness.

- Disclose algorithms and data sources wherever possible.

- Seek informed consent for data use—no shortcuts.

- Build transparency and explainability into every layer.

Demanding transparency isn’t just good ethics—it’s smart business. Buyers should ask vendors for detailed documentation, independent audits, and real-world impact studies before committing.
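For the first guideline, here is a minimal sketch of pattern-based redaction applied before text reaches any analytics tool. The two regular expressions are simplified assumptions that catch common email and phone formats only. They will miss names, addresses, and account numbers, so treat this as a starting point rather than a complete anonymization step.

```python
import re

# Simplified patterns (assumptions): they cover common formats, not every case.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

message = "Contact jane.doe@example.com or +1 (555) 010-9999 about order 42."
print(redact(message))
```

Short numbers such as the order reference are left alone because the phone pattern requires at least nine characters. Whether that threshold suits your data is something to verify on a real sample.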

DIY vs. enterprise platforms: which path wins?

Build or buy? The debate rages. DIY solutions offer unmatched flexibility—if you have the in-house expertise and a tolerance for risk. Enterprise platforms deliver support, regular updates, and out-of-the-box compliance.

DIY succeeds when:

- You have a specialized use case and strong data science team.

- Control and customization outweigh convenience.

- Security or regulatory needs preclude third-party vendors.

Enterprise wins when:

- Time to value is critical.

- You lack internal AI or NLP expertise.

- Integration and support trump deep customization.

"Sometimes, the tool you build is the only one you can trust." — Sam, CTO, Manufacturing

Ultimately, the smartest organizations mix both approaches, leveraging enterprise tools where speed and support matter, and custom solutions for unique, high-value needs.

Bonus: adjacent frontiers and unconventional uses

Unconventional applications of text analytics you’ve never considered

Think analytics is just for marketing or customer service? Think again. In 2025, creative industries are leveraging text analytics to curate art gallery catalogs, activists are parsing protest data for policy change, and researchers are mapping climate discourse across continents.

- Climate research: Tracking public discourse to predict policy shifts.

- Art curation: Analyzing exhibition reviews to spot emerging themes.

- Crisis response: Parsing emergency calls and reports for real-time threat detection.

- Literary studies: Digital humanities scholars unearthing patterns in centuries-old texts.

The benefits are often unexpected—discovering hidden connections, surfacing new voices, or accelerating response times in moments that count.

What to read next: resources for staying ahead

If you’re ready to take the plunge, don’t go in blind. We recommend:

- “Text Mining with R” by Julia Silge & David Robinson: The hands-on guide for practitioners.

- “Speech and Language Processing” by Daniel Jurafsky & James H. Martin: The definitive textbook on NLP and AI language tech.

- Industry reports from Kapiche and G2: Unfiltered user feedback and trends.

- Online communities:

  - r/MachineLearning – Lively discussion, deep dives.

  - DataTau – Hacker News for data nerds.

- textwall.ai/resources – Guides and real-world use cases for document analysis.

Keep pushing boundaries. The world of text analytics doesn’t stand still—and your edge depends on outthinking, not outspending, the competition. For those ready to turn complex documents into actionable insight, textwall.ai is a resource you’ll want in your toolkit.

Conclusion

The truth about text analytics software comparison in 2025 isn’t pretty, but it’s liberating. The best tools don’t just count words—they surface hidden meaning, provide actionable insight, and adapt to your domain and team. Yet, technology alone is never enough. Success depends on marrying sharp software with sharper minds, balancing automation and expertise, and refusing to settle for surface-level answers.

The brutal truths? Your data is lying until you force it to tell the whole story. Dashboards can dazzle but deceive. Integration is hard, but essential. Costs lurk where you least expect them. And above all—context is king.

Armed with this knowledge, you’re ready to challenge vendor hype, sidestep the worst traps, and claim the full power of your organization’s data. Don’t let the tools choose you—choose the tool that finally delivers the clarity and confidence every modern team demands. For those who refuse to settle, textwall.ai stands ready at the cutting edge.

Sources

References cited in this article

- Zonka Feedback: 20 Best Text Analysis Tools & Software 2025 (zonkafeedback.com)

- Insight7: Textual Analytics Solutions Review 2025 (insight7.io)

- G2: Best Text Analysis Software May 2025 (g2.com)

- Kapiche: 11 Best Text Analysis Software (kapiche.com)

- TrustRadius: Best Text Analysis Software 2025 (trustradius.com)

- BigDataWire: Four Ways Your Data is Lying to You (bigdatawire.com)

- CDOTrends: AI Trust Crisis (cdotrends.com)

- Hasura: Hidden in plain sight (hasura.io)

- Medium: When Data Lies (medium.com)

- Carlson School: Debunking AI Myths (carlsonschool.umn.edu)

- TheoSym: AI Myths (theosym.com)

- Forbes: Myths and Reality of AI (forbes.com)

- SAS: Anatomy of a Visual Text Analytics Pipeline (communities.sas.com)

- Blix.ai: 11 Best Text Analysis Tools (blix.ai)

- Displayr: 12 Best AI-Powered Tools (displayr.com)

- SG Analytics: Best Text Analytics Tools (sganalytics.com)

- GetThematic: 8 Best Text Analytics Software (getthematic.com)

- SurveySensum: Best Features & Insights (surveysensum.com)

- Mordor Intelligence: Market Report (mordorintelligence.com)

- The CTO Club: Top 22 NLP Tools (thectoclub.com)

- OpenDataScience: NYT & NASA Text Analysis (opendatascience.com)

- Wiley: Media Framing of Data Breach (onlinelibrary.wiley.com)

- Analytics Vidhya: AI & ML Trends (analyticsvidhya.com)

- Lexalytics: ML for NLP (lexalytics.com)

- Tableau: NLP Examples (tableau.com)

- GetThematic: Text Analytics Challenges (getthematic.com)

- WalkerInfo: Common Misconceptions (blog.walkerinfo.com)

- Reclaim: People Analytics Guide 2025 (reclaim.ai)

- InMoment: How to Choose the Best Text Analysis Software (inmoment.com)

- Kieran Gilmurray: Automation & Analytics Stories (kierangilmurray.com)
