Technical Document Summarization Is Now a Compliance Risk Tool

If you think the technical document summarization revolution means nobody will ever have to read a turgid 200-page manual again, buckle up. Every day, knowledge workers are buried alive in an avalanche of technical reports, compliance documents, research studies, and contractual small print. AI-powered summarizers promise salvation: a single click, and the chaos organizes itself. But here’s the untold reality—no one is coming to save you from information overload unless you own the problem, master the new tools, and outthink the system. In 2025, the fight isn’t just about having access to information; it’s about wrestling messy, relentless data into something you can act on before it buries you. This guide exposes the myths, the hard truths, and the hidden victories of technical document summarization—the kind of things nobody wants to say out loud but everyone needs to hear. Whether you’re a corporate analyst, legal eagle, or over-caffeinated researcher, welcome to the other side of the document wall.

The information apocalypse: why technical document summarization matters now

Drowning in documents: the scale of the modern problem

The rise of digital communication, regulatory rigor, and global research has unleashed a tidal wave of technical documentation. According to recent analytics, the average enterprise generates and consumes more than 10 times as much unstructured data as it did just five years ago. Reports, whitepapers, technical manuals, compliance records, and emails are each a potential pitfall for missed insights or catastrophic oversight. This deluge isn’t just a nuisance; it’s existential. Teams lose days digging for relevant insights, only to be blindsided by missed details that can tank deals or trigger compliance failures. Even the most diligent professionals can’t keep up. The overwhelming scale demands something smarter than brute force. Enter technical document summarization: the only viable lifeline in a world obsessed with more data, less time, and zero tolerance for error.

What’s really at stake: productivity, compliance, and sanity

Every minute spent lost in a document labyrinth is a minute not spent making decisions or driving results. According to a 2024 report from McKinsey & Company, knowledge workers can spend up to 20% of their time just searching for information. In regulated industries, the stakes are even higher—missed clauses or compliance gaps can trigger fines in the millions or even legal action. The truth? Productivity isn't just about speed. It’s about clarity, risk management, and sanity. When information remains hidden or misunderstood, organizations become vulnerable to costly errors, regulatory penalties, and even reputational harm.

| Impact Area | Estimated Loss (USD) | Root Cause |
|---|---|---|
| Productivity | $10,000 per employee per year | Time wasted searching and re-reading docs |
| Compliance | $4.2 million per incident | Missed regulatory obligations |
| Sanity (Turnover Cost) | $15,000 per lost worker | Burnout from information overload |

Table 1: Estimated annual cost of poor document handling in technical environments

Source: Original analysis based on McKinsey & Company, 2024, Gartner, 2024.

"If you can’t find it, you can’t fix it." — Jamie, Senior Compliance Analyst (Illustrative)

From manual grind to AI dreams: a very short history

The journey from manual slog to AI-powered summarization is as messy as the documents it tries to tame. Decades ago, technical writers and analysts were the unsung heroes, reading, annotating, and condensing dense material by hand. As document volume exploded, early software solutions offered primitive keyword extraction—clumsy, literal, and often unreliable. The introduction of natural language processing (NLP) marked a leap forward, but it wasn’t until the advent of large language models (LLMs) that context-aware summarization became possible. Today, AI summarizers can churn through thousands of pages in minutes, offering summaries that rival human editors—when tuned and overseen correctly. But the road here has been paved with both innovation and false promises.

Timeline of document summarization tech:

- Manual human summarization (pre-2000)

- Rule-based keyword extraction tools

- Early NLP (shallow parsing, 2000s)

- Machine learning-based extractive summarization (2010s)

- Hybrid statistical + NLP models

- Neural network-based abstractive summarization (mid-2010s)

- Transformer models and LLMs (2020s)

- Domain-adapted, API-driven, enterprise AI summarizers (2023–present)

How technical document summarization actually works (beyond the hype)

Breaking down the algorithms: extractive, abstractive, and hybrid

Not all summarization algorithms are created equal. At the core, technical document summarization takes three main forms—extractive, abstractive, and hybrid. Extractive summarization cherry-picks “important” sentences verbatim from the source, creating a patchwork summary that’s fast but often awkward. Abstractive summarization, the much-hyped darling powered by LLMs, generates new sentences, paraphrasing and reorganizing content for clarity and brevity. Hybrid models blend both: they select core content and then rewrite or compress it, often yielding the best balance of readability and accuracy for technical material.

| Approach | How It Works | Pros | Cons | Example Use Case |
|---|---|---|---|---|
| Extractive | Selects key sentences verbatim | Fast, preserves original wording | Can be disjointed, misses context | Quick compliance reviews |
| Abstractive | Paraphrases and synthesizes content | Readable, context-aware | Can ‘hallucinate’ or misinterpret details | Executive summaries, emails |
| Hybrid | Selects, then rewrites or combines sentences | Balances speed and clarity | Requires more tuning and validation | Technical manuals, legal docs |

Table 2: Comparative summary of technical document summarization approaches

Source: Original analysis based on Analytics Vidhya, 2024, EdenAI, 2025.

Definition list:

- Extractive summarization: Selects existing sentences or paragraphs from the document, often based on frequency of keywords, sentence position, or statistical relevance. Fast but literal, risking loss of nuance.

- Abstractive summarization: Generates entirely new sentences to convey meaning, relying on deep understanding (or simulation thereof) by AI models. More readable, but prone to introducing errors if the model misunderstands intent.

- Semantic compression: The process of condensing meaning across sentences, preserving core insights while removing repetition and redundancy. Essential for lengthy technical documents.
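
To make the extractive approach concrete, here is a minimal sketch of frequency-based sentence selection. It is an illustration under stated assumptions, not how any particular product works: the stopword list, the naive sentence splitter, and the function names are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny stopword list; real systems use larger ones.
STOPWORDS = {"a", "an", "and", "are", "at", "be", "for", "in", "is", "it",
             "must", "of", "on", "the", "to"}


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def content_words(text: str) -> list[str]:
    """Lowercased word tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z0-9']+", text.lower()) if w not in STOPWORDS]


def extractive_summary(text: str, max_sentences: int = 2) -> list[str]:
    """Score sentences by the average document frequency of their words,
    then return the top ones verbatim, in their original order."""
    sentences = split_sentences(text)
    freq = Counter(content_words(text))

    def score(sentence: str) -> float:
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])  # restore document order
    return [sentences[i] for i in keep]
```

Because the output is copied verbatim, nothing is invented, but nothing is connected either: the selected sentences can read as disjointed, which is the trade-off described in the table above.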

Can AI really understand your jargon?

Here’s the uncomfortable truth: most large language models weren’t trained on your internal technical documentation, proprietary acronyms, or nuanced regulatory code. Out-of-the-box, LLMs stumble over niche terminology, ambiguous abbreviations, and subtle distinctions that make or break legal agreements or engineering specs. Fine-tuned, domain-adapted models handle this better—but even the sharpest tool needs a skilled operator and meticulous prompt design. Without it, even the most advanced summarizer can turn a mission-critical patent review into a game of telephone.

"The devil’s in the details—and in the domain-specific vocabulary." — Priya, Senior AI Researcher (Illustrative)

What gets lost in translation: nuance, intent, and risk

Anyone who’s trusted an autopilot summary knows that the losses aren’t always obvious. Nuance gets smoothed over, intent gets muddied, and critical qualifiers can vanish without a trace. In legal documents, a missing “notwithstanding” clause can spell disaster. In product requirements, the omission of a single assumption can derail a launch. Technical document summarization, without validation, can be a double-edged sword—saving time at the cost of introducing new risks.

Hidden risks and blind spots with automated summaries:

- Skipping context-specific conditions or caveats

- Oversimplifying complex technical language

- Failing to capture implied obligations or dependencies

- Missing cross-references to related documents

- Misinterpreting ambiguous or multi-meaning terms

- Removing soft signals (e.g., doubts, warnings)

- Blindly trusting “final” summaries without review
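
One of the risks above, missing cross-references, can be partly checked by machine. The sketch below assumes references follow a simple pattern such as "Section 4.2" or "Appendix B"; the pattern and the function names are illustrative assumptions and will need adapting to your own document conventions.

```python
import re

# Assumed reference style: a label followed by a capital letter or a dotted number.
REF_PATTERN = re.compile(r"\b(Section|Appendix|Annex|Table|Clause)\s+([A-Z]|\d+(?:\.\d+)*)\b")


def find_references(text: str) -> set[str]:
    """Collect normalized references such as 'Section 4.2' or 'Appendix B'."""
    return {f"{kind} {ident}" for kind, ident in REF_PATTERN.findall(text)}


def dropped_references(source: str, summary: str) -> list[str]:
    """References that appear in the source but not in the summary."""
    return sorted(find_references(source) - find_references(summary))
```

A dropped reference is not automatically an error, since a good summary may legitimately omit some. It is a prompt for a reviewer to confirm that the omission was deliberate.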

Case studies: when technical document summarization saves the day (and when it doesn’t)

Real-world wins: legal, scientific, and engineering breakthroughs

It’s not all doom and gloom—there are tangible wins for those who get technical document summarization right. In legal services, AI-powered summarization has slashed contract review time by more than half, enabling teams to focus on negotiation instead of page-flipping. In scientific research, automated summarizers help scholars digest hundreds of papers in hours—a process that once took weeks. Engineering firms have leveraged domain-adapted tools to extract change orders and technical requirements from sprawling project documents, preventing costly miscommunication.

| Industry | ROI (%) | Time Saved | Summary |
|---|---|---|---|
| Legal | 70% | 14 hours/week | Faster contract review, fewer errors |
| Scientific Research | 40% | 20 hours/month | Accelerated literature review |
| Engineering | 60% | 8 hours/project | Rapid requirements extraction |

Table 3: ROI and productivity gains from technical document summarization across industries

Source: Original analysis based on Friday.app, 2025, Analytics Vidhya, 2024.

Disasters and cautionary tales: when summaries go wrong

For every hero story, there’s a cautionary tale. A financial firm relying on automated summaries failed to catch a single-word change buried in a 300-page contract, leading to a multi-million dollar loss. A research group published findings based on an AI-generated summary that misrepresented a key study’s negative results. In government, missed nuances in policy summaries led to public misinterpretations and policy backtracking.

"We trusted the summary—and paid the price." — Alex, Risk Manager (Illustrative)

Common mistakes leading to summary failures:

- Blindly accepting summaries without human review

- Neglecting to customize models for domain-specific language

- Failing to set clear prompt instructions

- Overlooking critical metadata or appendix content

- Skipping validation against the original document

- Assuming “more automation” means “less oversight”

What sets the winners apart: human-in-the-loop and best practices

The true leaders don’t treat summarization as a hands-off solution. They create workflows where AI is the engine, but human expertise holds the steering wheel. This means embedding review loops, clarifying ambiguous points, and refining prompts based on real-world feedback. It’s not just about speed—it’s about trust and precision.

How to integrate human oversight in document summarization:

- Define clear summary requirements for each use case

- Select or train models based on domain specificity

- Run initial summaries on sample sets for calibration

- Review AI outputs for accuracy and completeness

- Annotate errors and ambiguities for model fine-tuning

- Establish escalation protocols for high-risk summaries

- Collect feedback and continuously refine workflows
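
The escalation step in the list above could be sketched as a simple routing rule. Everything here is an assumption for illustration: the risk terms, the document types, and the three review tiers would all be set by your own compliance team.

```python
from dataclasses import dataclass

# Hypothetical word stems that signal high-risk content; tune per domain.
HIGH_RISK_TERMS = ("indemnif", "terminat", "penalt", "liabilit", "notwithstanding")


@dataclass
class SummaryJob:
    doc_id: str
    doc_type: str      # e.g. "contract", "compliance", "manual"
    source_text: str
    summary: str


def review_route(job: SummaryJob) -> str:
    """Return 'escalate', 'review', or 'spot_check' for a summary job."""
    text = job.source_text.lower()
    hits = sum(term in text for term in HIGH_RISK_TERMS)
    if job.doc_type == "contract" and hits >= 1:
        return "escalate"      # senior or legal review before release
    if hits >= 1 or job.doc_type in {"contract", "compliance"}:
        return "review"        # standard human review
    return "spot_check"        # sampled review only
```

The rule looks at the source text rather than the summary on purpose: a summary that silently dropped a termination clause would otherwise route itself to the lowest tier.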

Myth-busting: what most people get wrong about technical document summarization

Debunking the ‘AI magic’ narrative

Let’s get brutally honest: AI summarization isn’t a miracle cure. The hype machine claims you can plug in a document and trust the output blindly. Reality check—results depend on prompt design, model selection, and vigilant oversight. No tool, no matter how advanced, replaces the need for human judgment or industry expertise.

Top five myths about technical document summarization:

- “AI can summarize any document without errors.” (False—context and domain matter deeply)

- “Summaries are always accurate if the model is big enough.” (Larger isn’t always better; relevance matters)

- “Automation eliminates the need for review.” (Human validation is still essential)

- “All summarization tools are the same.” (Not even close; tuning and adaptation count)

- “Summarization equals comprehension.” (Summaries can miss intent, bias, and nuance)

The hidden costs and false economies no one talks about

While AI-powered summarization slashes manual hours, it isn’t free from hidden costs. Energy use and computational expense—often invisible—can be significant. Overreliance on automated tools can introduce security and privacy vulnerabilities, especially when documents are processed via third-party APIs. The relentless drive for efficiency can also breed a false sense of security, leading to overlooked errors or regulatory breaches that cost far more to fix than to prevent.

| Approach | Upfront Cost | Hidden Costs | Benefits | Risks |
|---|---|---|---|---|
| Manual Review | High | Burnout, slow turnaround | Contextual accuracy | Human error, scalability issues |
| Partially Automated | Moderate | Integration, training, oversight | Faster processing, review loop | Missed edge cases |
| Fully Automated | Low (per doc) | Computational energy, security | Speed, scalability | Compliance, accuracy gaps |

Table 4: Cost-benefit analysis of document summarization approaches

Source: Original analysis based on Gartner, 2024, EdenAI, 2025.

When ‘good enough’ isn’t: the compliance and accuracy trap

In regulated industries, “good enough” can be lethal. Settling for surface-level summaries or skipping validation may fly in routine cases but can trigger catastrophic failures when the stakes are high. A compliance officer must scrutinize every nuance, every exception, every footnote. AI tools are invaluable aids, but the ultimate responsibility always lands on human shoulders. The danger lies in complacency—assuming that speed and convenience outweigh diligence and accuracy.

Inside the black box: technical deep dive into modern LLM summarization

How LLMs parse, prioritize, and compress information

Large language models (LLMs) don’t read like humans; they tokenize, encode, and process text in mathematical space. The journey begins with tokenization: breaking the document into small units (tokens), typically words or word fragments, that the model understands. Then, attention mechanisms weigh the relevance of each token based on context, relationships, and prompt cues. Layers of neural networks compress, paraphrase, and reorder information, generating a summary that attempts to preserve intent while jettisoning noise. The final output? A summary shaped by billions of parameters, prior training data, and real-time prompt guidance.
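
The attention step can be illustrated with a toy version of scaled dot-product attention for a single query. This is a sketch under heavy simplification: production models learn the query, key, and value vectors and stack many attention heads and layers, while the tiny hand-written vectors here are assumptions chosen only to show the arithmetic.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query: list[float], keys: list[list[float]], values: list[list[float]]):
    """Single-query scaled dot-product attention.

    Returns (weights, output): one weight per token, and the weighted
    average of the value vectors.
    """
    dim = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in keys]
    weights = softmax(scores)
    output = [sum(w * value[i] for w, value in zip(weights, values))
              for i in range(len(values[0]))]
    return weights, output
```

Tokens whose keys align with the query receive larger weights, so their values dominate the output. That is the mechanical sense in which a model "prioritizes" some parts of a document over others.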

Edge cases: ambiguity, contradictions, and data drift

Not all documents play nice with AI summarizers. Ambiguous phrasing, internal contradictions, and model knowledge that has gone stale since training (a problem often grouped under data drift) can trip up even the best systems. For example, medical reports with conflicting results, contracts with hidden exceptions, or rapidly evolving technical standards can all lead to summaries that are misleading, incomplete, or just plain wrong.

Checklist for spotting and managing AI summary edge cases:

- Look for abrupt topic shifts or missing sections

- Check for contradictions against the original text

- Validate inclusion of critical qualifiers or exceptions

- Review for outdated terminology or references

- Scan for omitted tables, appendices, or footnotes

- Flag summaries that seem overly “confident” or absolute
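
Two items on this checklist, missing qualifiers and overly absolute language, lend themselves to a quick automated pre-check. The word lists below are hypothetical starting points, not a vetted legal vocabulary, and a keyword match is a cue for a human reviewer rather than a verdict.

```python
import re

# Assumed lists for illustration; extend them for your own domain.
QUALIFIERS = ("unless", "except", "notwithstanding", "provided that", "subject to", "only if")
ABSOLUTES = ("always", "never", "guaranteed", "all", "none")


def _contains(phrase: str, text: str) -> bool:
    """Whole-word (or whole-phrase) match, case already normalized."""
    return re.search(r"\b" + re.escape(phrase) + r"\b", text) is not None


def missing_qualifiers(source: str, summary: str) -> list[str]:
    """Qualifiers present in the source but absent from the summary."""
    src, summ = source.lower(), summary.lower()
    return [q for q in QUALIFIERS if _contains(q, src) and not _contains(q, summ)]


def absolute_terms(summary: str) -> list[str]:
    """Absolute words in the summary that may signal overconfidence."""
    words = set(re.findall(r"[a-z]+", summary.lower()))
    return sorted(words & set(ABSOLUTES))
```

A summary that loses "unless" and gains "always" has probably turned a conditional obligation into an unconditional one, which is exactly the kind of change worth a second look.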

Evaluating output quality: metrics that actually matter

Assessing summary quality isn’t just about length or speed. Industry-standard metrics like ROUGE (Recall-Oriented Understudy for Gisting Evaluation) and BLEU (Bilingual Evaluation Understudy) are used to compare machine summaries to human references—but these can miss deeper issues like relevance or actionable insight. Human-centric evaluation, such as expert review, remains the gold standard.

Key evaluation metrics:

- ROUGE: Measures overlap of n-grams, word sequences, and word pairs between model-generated and reference summaries. Good for recall and coverage.

- BLEU: Originally for translation, BLEU evaluates how closely the AI’s summary matches a reference, focusing on precision.

- Human-centric evaluation: Human experts judge summaries for accuracy, relevance, and clarity—indispensable for high-stakes documents.
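
As a rough illustration of what ROUGE measures, the sketch below computes unigram overlap (ROUGE-1) between a candidate summary and a reference. It is a simplified version: it skips the stemming and multi-reference handling that full implementations offer, and the function names are assumptions.

```python
import re
from collections import Counter


def tokens(text: str) -> list[str]:
    """Lowercased alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def rouge_1(candidate: str, reference: str) -> dict[str, float]:
    """Unigram-overlap recall, precision, and F1 between two texts."""
    cand, ref = Counter(tokens(candidate)), Counter(tokens(reference))
    overlap = sum((cand & ref).values())  # clipped count of shared words
    recall = overlap / sum(ref.values()) if ref else 0.0
    precision = overlap / sum(cand.values()) if cand else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"recall": recall, "precision": precision, "f1": f1}
```

Note what the score cannot see: a candidate that swaps "must" for "may" loses only one word of overlap while reversing the obligation, which is why expert review stays in the loop for high-stakes documents.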

Putting technical document summarization to work: practical guides and workflows

Step-by-step: building your own summarization pipeline

Launching a robust summarization workflow isn’t plug-and-play. It demands clarity around goals, iterative testing, and integration with existing systems. Whether you’re a solo researcher or an enterprise IT leader, the core steps remain the same: from document ingestion to validation, each phase should be deliberate and auditable.

Priority checklist for deploying technical document summarization:

- Identify document types and use cases

- Set clear summary objectives (compliance, insights, etc.)

- Select or customize an AI model tailored to your domain

- Preprocess documents (formatting, cleanup)

- Define prompt templates that guide summarization

- Run pilot tests on sample datasets

- Embed human-in-the-loop review process

- Integrate with document management/workflow tools

- Regularly retrain or tune the model with new feedback

- Monitor, audit, and refine the pipeline for continuous improvement
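
The middle of this checklist (preprocess, prompt template, pilot run, human review) can be sketched as a small pipeline. The `model` argument is a placeholder for whatever summarization call you actually use; the prompt wording, chunk size, and record fields are assumptions for illustration.

```python
from typing import Callable

# Hypothetical prompt template; adapt the wording per document type.
PROMPT_TEMPLATE = (
    "Summarize the following {doc_type} excerpt for {audience}. "
    "Keep every condition, exception, and numeric limit.\n\n{chunk}"
)


def chunk_paragraphs(text: str, max_chars: int = 1200) -> list[str]:
    """Group paragraphs into chunks of at most max_chars characters.
    A single paragraph longer than max_chars is kept whole."""
    chunks, current = [], ""
    for para in (p.strip() for p in text.split("\n\n") if p.strip()):
        if current and len(current) + len(para) + 2 > max_chars:
            chunks.append(current)
            current = para
        else:
            current = f"{current}\n\n{para}" if current else para
    if current:
        chunks.append(current)
    return chunks


def summarize_document(text: str, doc_type: str, audience: str,
                       model: Callable[[str], str], max_chars: int = 1200) -> list[dict]:
    """Run each chunk through the model and keep an auditable record."""
    records = []
    for index, chunk in enumerate(chunk_paragraphs(text, max_chars)):
        prompt = PROMPT_TEMPLATE.format(doc_type=doc_type, audience=audience, chunk=chunk)
        records.append({
            "chunk": index,
            "prompt": prompt,
            "summary": model(prompt),
            "reviewed": False,  # flipped only after human sign-off
        })
    return records
```

Passing the model in as a function keeps the pipeline testable with a stub, for example `summarize_document(text, "manual", "maintenance engineers", lambda prompt: "stub")`, before any real service is wired in.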

Common pitfalls and how to avoid them

Organizations stumble not because of bad intentions, but because of avoidable mistakes: vague requirements, skipping validation, or failing to consider data security. Getting technical document summarization right is about rigor and relentless attention to process.

Seven practical tips to dodge the most frequent failures:

- Always define your summary’s audience and purpose

- Preprocess documents for clean, consistent input

- Customize prompts for each document type

- Validate results against the original text, not just reference summaries

- Keep humans in the loop for critical documents

- Monitor for data drift and update models regularly

- Protect sensitive data by choosing secure, compliant platforms

Integrating with existing tools and platforms

AI summarization shines brightest when it’s woven into daily operations—feeding results into compliance dashboards, project management tools, or collaborative review platforms. Modern API-driven solutions like textwall.ai make integration frictionless, allowing teams to automate the mind-numbing parts and focus on high-value decision-making.

Unconventional uses and future frontiers of technical document summarization

Beyond the obvious: surprising applications across industries

Technical document summarization is leaking far beyond the confines of research, law, and engineering. In manufacturing, AI is condensing maintenance logs to highlight recurring faults. Insurance firms are summarizing claims files for fraud detection. Content moderation teams are using summaries to triage flagged material more quickly.

Five unconventional uses for technical document summarization:

- Manufacturing: Identifying trends in maintenance or incident reports

- Insurance: Summarizing claims and identifying risk patterns

- HR: Condensing performance reviews for talent analytics

- Journalism: Curating newswire feeds for editorial decisions

- Public sector: Summarizing citizen feedback for policy development

Real-time summarization: hype or the next big leap?

The holy grail is real-time summarization—instantly processing technical data as it arrives. The technical and organizational barriers are formidable: streaming input, latency, and the need for on-the-fly validation. While cutting-edge solutions are emerging, most organizations still wrestle with batch processing and post-hoc review.

"Summarization at the speed of thought is almost within reach—almost." — Morgan, CTO at Analytics Firm (Illustrative)

The ethical minefield: bias, privacy, and information ownership

Technical document summarization is not immune to the dark side: bias can creep in through training data, sensitive information can leak via third-party APIs, and the question of “who owns the summary” can quickly spiral into legal fights. Robust mitigation strategies—such as model audits, on-premise processing, and explicit consent protocols—are non-negotiable for responsible adoption.

| Ethical Issue | Description | Mitigation Strategy |
|---|---|---|
| Bias in summaries | Inherited from training data or prompts | Regular audits, diverse datasets |
| Privacy risks | Exposure via third-party APIs | On-premise solutions, encryption |
| Ownership ambiguity | Who controls the summary IP? | Explicit contractual terms |
| Transparency | Opaque model decisions | Explainable AI, audit logs |
| Misrepresentation | Summaries distorting original intent | Human review, dual-check process |

Table 5: Key ethical issues and mitigation strategies in technical document summarization

Source: Original analysis based on Analytics Vidhya, 2024, EdenAI, 2025.
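
One way to reduce the privacy risk listed above is to mask obvious identifiers before a document leaves your environment. The sketch below is a minimal illustration, assuming only two patterns (email addresses and phone-like numbers); the regular expressions are simplistic and would miss many real-world formats, so treat this as a starting point rather than a safeguard.

```python
import re

# Assumed, deliberately simple patterns; real redaction needs broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with a bracketed label and count what was masked."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, found = pattern.subn(f"[{label}]", text)
        counts[label] = found
    return text, counts
```

Returning the counts alongside the text gives reviewers a quick sanity check: a contract that should contain contact details but reports zero redactions suggests the patterns did not match.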

Adjacent tech: automated extraction vs. summarization

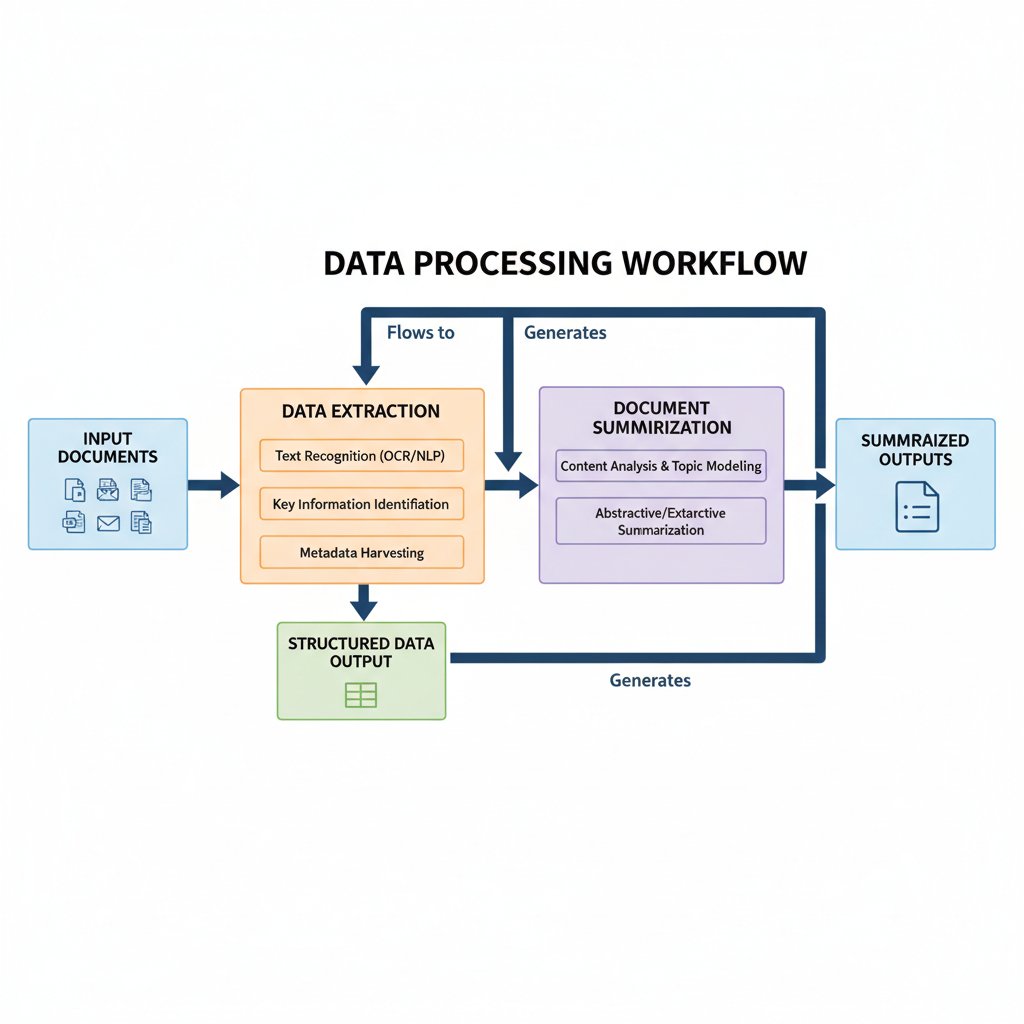

Where extraction ends and summarization begins

Data extraction is about pulling specific facts—names, dates, numbers—from documents. Summarization, on the other hand, is about distilling meaning and context across entire sections or documents. Both are critical, but they serve different masters: extraction feeds analytics, summarization feeds decision-making.

Key definitions:

- Extraction: Capturing structured data points (e.g., invoice totals, contract parties) from unstructured text.

- Summarization: Condensing content for human comprehension, preserving context and intent, not just data points.

- Abstraction: Generating high-level overviews, sometimes skipping granular details to focus on broader themes or implications.

Synergy: combining extraction and summarization for maximum impact

The real magic happens when extraction and summarization are fused in intelligent workflows. For example, extraction surfaces contract renewal dates, while summarization highlights shifts in terms or obligations—together, they offer the “what” and the “why” at a glance.
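
A combined workflow might look like the sketch below, which pairs a rule-based date extractor with a pluggable summarizer. It assumes dates are written in ISO format (YYYY-MM-DD); the `summarize` argument stands in for any summarization function, and all names are illustrative.

```python
import re
from datetime import date
from typing import Callable

# Assumes ISO-style dates; other formats would need their own patterns.
DATE_PATTERN = re.compile(r"\b(\d{4})-(\d{2})-(\d{2})\b")


def extract_dates(text: str) -> list[date]:
    """Return valid calendar dates in order of appearance."""
    found = []
    for year, month, day in DATE_PATTERN.findall(text):
        try:
            found.append(date(int(year), int(month), int(day)))
        except ValueError:
            continue  # skip impossible dates such as 2025-13-40
    return found


def contract_brief(text: str, summarize: Callable[[str], str]) -> dict:
    """Combine extracted facts (the 'what') with a summary (the 'why')."""
    return {"dates": extract_dates(text), "summary": summarize(text)}
```

Keeping the two steps separate has a practical benefit: extracted dates come straight from the source text, so they can be used to cross-check any dates that appear in the generated summary.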

Can you really trust AI summaries? The compliance and trust debate

The regulatory landscape: what’s required, what’s risky

Compliance isn’t just a box-ticking exercise; it’s a minefield of evolving standards and unforgiving penalties. Regulators expect defensible, auditable, and transparent processes. Relying blindly on AI-generated summaries—without robust validation and traceability—can expose organizations to steep fines, lawsuits, or reputational ruin.

Five regulatory red flags to watch for when relying on AI summaries:

- Lack of audit trails for summary generation

- Absence of human review in compliance workflows

- Black-box models with no explainability

- Data processed outside approved jurisdictions

- Ignoring document retention or destruction policies

Building trust: transparency, auditability, and the role of human review

Trust is earned through transparency—detailed logs, explainable outputs, and a clear chain of accountability from document ingestion to summary review. Platforms that offer audit trails, secure processing, and seamless human-in-the-loop options are leading the charge, helping organizations prove compliance and build internal confidence.
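
An audit trail can start as simply as one append-only record per summary. The sketch below is an assumption about what such a record might contain, not a regulatory standard: it stores hashes of the source, prompt, and summary so that later changes are detectable, plus the model name and the reviewer.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def sha256_hex(text: str) -> str:
    """Stable fingerprint of a text, used to detect later changes."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(doc_id: str, source: str, prompt: str, summary: str,
                 model_name: str, reviewer: Optional[str] = None) -> dict:
    """Build one audit entry; field names are illustrative."""
    return {
        "doc_id": doc_id,
        "source_sha256": sha256_hex(source),
        "prompt_sha256": sha256_hex(prompt),
        "summary_sha256": sha256_hex(summary),
        "model": model_name,
        "reviewer": reviewer,  # None until a human signs off
        "created_at": datetime.now(timezone.utc).isoformat(),
    }


def append_record(path: str, record: dict) -> None:
    """Append the record as one JSON line to a log file."""
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(json.dumps(record, sort_keys=True) + "\n")
```

Storing hashes rather than full text keeps sensitive content out of the log while still letting an auditor confirm that a given summary was produced from a given version of a document.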

"Transparency isn’t a luxury—it’s survival." — Riley, Chief Compliance Officer (Illustrative)

The future nobody wants to admit: where technical document summarization goes from here

From hype to habit: how organizations will adapt

Adopting technical document summarization isn’t a single leap; it’s a series of awkward, necessary changes. Teams must shift from treating AI as a shortcut to leveraging it as a partner: continuously refining prompts, reviewing output, and investing in education. The organizations that thrive are those that bake these workflows into their culture, not just their technology stacks.

Six predictions for how technical document summarization reshapes knowledge work:

- Document review shifts from junior staff to AI “first draft” with expert review

- Summaries become standard deliverables in project handoffs

- Prompt engineering emerges as a core professional skill

- Human oversight is formalized in compliance protocols

- Document management platforms prioritize summary integration

- Auditable, explainable AI becomes a regulatory requirement

The rise of AI-powered knowledge workers

The line between “analyst” and “AI operator” is blurring. Success now hinges on those who can not only read between the lines but train, tune, and oversee AI partners. Diverse teams leveraging platforms like textwall.ai are setting new productivity benchmarks—where the machine does the heavy lifting but the human brings judgment, creativity, and context.

What to watch for: emerging trends, pitfalls, and opportunities

The only thing more dangerous than ignoring the future is being blindsided by it. Leaders must stay vigilant—scanning for both the next breakthrough and the next disaster in the making.

Seven trends and threats to monitor in technical document summarization:

- Rise of regulatory audits focused on AI compliance

- Proliferation of “black box” summarizers with untraceable logic

- Growing demand for prompt engineering and model tuning expertise

- Expansion of real-time summarization in critical workflows

- Escalation of data privacy and security requirements

- Increasing sophistication of adversarial attacks on AI outputs

- Integration of cross-lingual summarization for global teams

Quick reference: essential resources, checklists, and guides

Self-assessment: is your organization ready?

Before diving into the deep end, organizations must confront their own readiness. The following checklist helps teams identify gaps and strengths in their technical document summarization journey.

Ten-point self-assessment for organizational readiness:

- Do you know which documents most need summarization?

- Are your summary goals clear and measurable?

- Is your data preprocessed and consistently structured?

- Have you selected (or customized) an AI model for your domain?

- Is there a dedicated review process for summary validation?

- Are integrations with existing systems mapped and tested?

- Do you have clear data security and privacy protocols?

- Is there an audit trail from document ingestion to summary output?

- Does your team have prompt engineering expertise?

- Are you prepared to monitor and refine the process over time?

Further reading and expert communities

Staying ahead in technical document summarization means tapping into the right resources and communities. Whether you’re seeking peer-reviewed research, expert forums, or the latest tools, these sources are essential for ongoing learning.

- Best AI Document Summarizers for 2025 — In-depth reviews of leading tools (Verified 2025)

- Best Text Summarization APIs 2025 — API-driven options for developers (Verified 2025)

- Analytics Vidhya: Top Summarization Tools — Comparative industry analysis (Verified 2024)

- OpenAI Community — Peer-to-peer support and prompt engineering guides

- arXiv.org: Computation and Language — Cutting-edge academic research

- KDnuggets: NLP Resources — Tutorials and toolkits for technical teams

- textwall.ai — Expert-driven insights and ongoing guides for practitioners

Conclusion

Technical document summarization isn’t just another productivity hack; it’s a survival strategy for organizations facing down the information apocalypse. The tools are more powerful than ever, but the real edge comes from those willing to own the process: blending sharp AI with sharper human oversight, demanding transparency, and never mistaking the map for the territory. As the Gartner (2024) and Analytics Vidhya (2024) analyses cited above suggest, the difference between chaos and clarity is no longer about brute force; it’s about strategic adoption, ruthless validation, and an unyielding commitment to truth over convenience. Own your summaries. Question everything. And remember: in the land of relentless documents, only the prepared, the critical, and the adaptable thrive.

Sources

References cited in this article

- Best AI Document Summarizers for 2025 (friday.app)

- Best Text Summarization APIs 2025 (edenai.co)

- Analytics Vidhya: Top Summarization Tools (analyticsvidhya.com)

- AI Document Summarization: Transforming Information Overload (documentllm.com)

- Doctopus: Information Overload (doctopus.io)

- ShareFile: AI Document Summarization Guide (sharefile.com)

- DocumentLLM: AI Document Summarization Guide (documentllm.com)

- GetMagical: AI Summarizers 2024 (getmagical.com)

- OSTI.gov: Advances in Document Summarization 2023–2024 (osti.gov)

- FluidTopics: Technical Documentation Trends 2024 (fluidtopics.com)

- Width.ai: Long Text Summarization Methods (width.ai)

- Pure AI, 2024 (pureai.com)

- Enago Academy: AI Summarization Tools (enago.com)

- DocumentLLM: Transforming Information Management (documentllm.com)

- ScienceDirect: Human-in-the-Loop Survey (sciencedirect.com)

- NIST: Human-in-the-Loop Technical Document Annotation (nist.gov)

- Oneil.com: Technical Documentation Myths (oneil.com)

- Peoples Dispatch: Hidden Costs of AI (peoplesdispatch.org)

- Width.ai: Open Source LLMs (width.ai)

- Springer: Evaluation Metrics Survey (link.springer.com)

- Neptune.ai: LLM Evaluation (neptune.ai)

- PHPKB: Writing Effective Technical Documentation 2024 (phpkb.com)

- Huggingface Tutorial (freecodecamp.org)

- Google Cloud: Generative AI Summarization Pipeline (cloud.google.com)

- ScienceDirect: Abstractive Summarization Frontiers (sciencedirect.com)

- Frontiers in AI: SATS Summarization (frontiersin.org)
