Natural Language Document Analysis Is Your Unfair Data Advantage

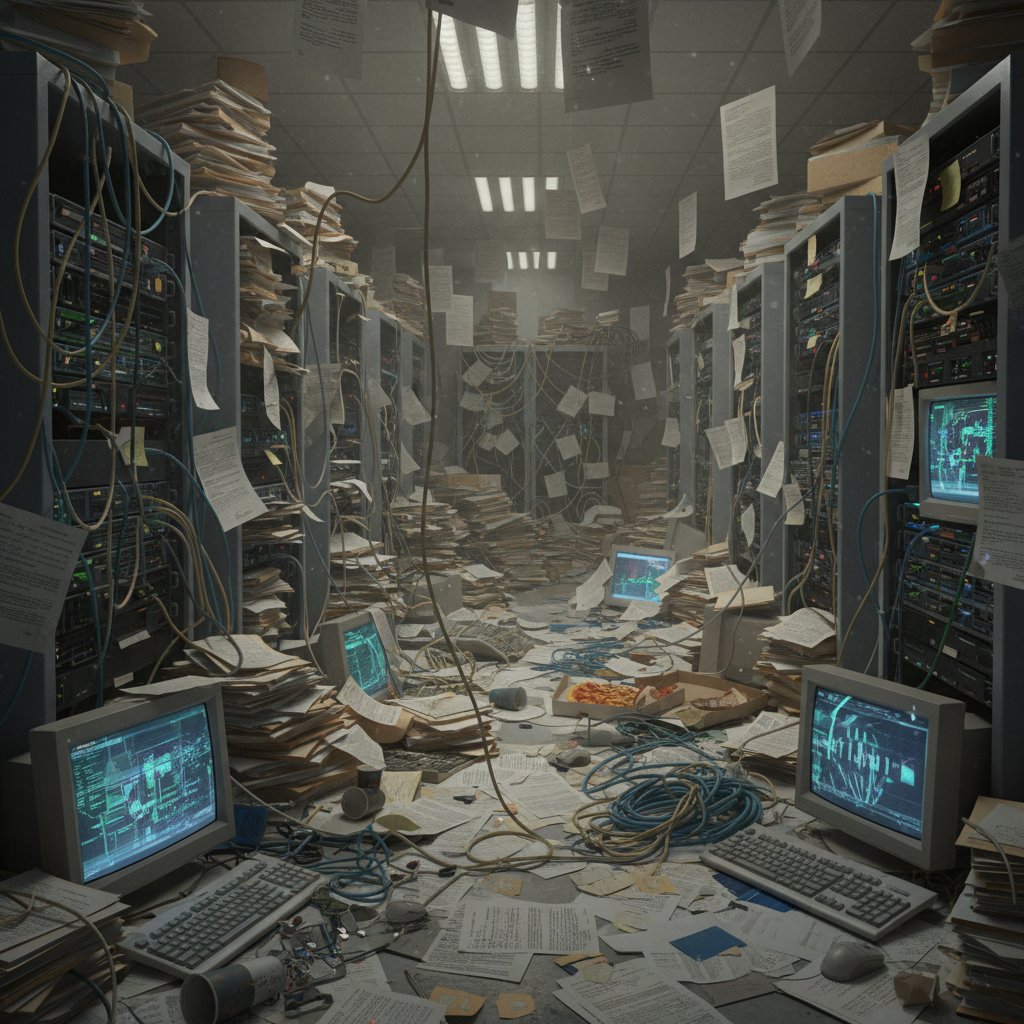

In a world drowning in words but starved for insight, natural language document analysis has become the unsung engine powering everything from whistleblower exposés to billion-dollar boardroom decisions. Blink and you’ll miss it: legal contracts, academic research, market reports, even the endless email chains that make or break corporate deals—all reduced to a digital sea of unstructured chaos. Yet, hidden in this avalanche of raw text are signals, risks, and opportunities overlooked by all but the most advanced AI document processors. The brutal truth? Most organizations are still flailing in the dark, relying on legacy systems or manual review while their competitors quietly weaponize cutting-edge AI to parse meaning from the madness.

This isn’t another hype piece. Here, you’ll uncover the nine hardest truths about natural language document analysis, the hacks that actually work, and the raw edge where machine intelligence meets human judgment. We’ll dissect the state of AI text analytics, expose myths, and show how platforms like textwall.ai are irreversibly changing the field—without ever promising magic. Forget buzzwords. It’s time for the real story.

Why natural language document analysis is the world’s most overlooked power move

The hidden cost of ignoring unstructured data

If your organization is still ignoring unstructured data—think emails, reports, contracts, or customer feedback—you’re not just missing out. You’re hemorrhaging value, and competitors are happy to pick up the slack. According to a recent study by Expert.ai (2023), 71% of NLP solutions now address multiple analytics use cases, signaling that organizations are moving beyond surface-level keyword matching to extract actionable insights.

Unstructured data accounts for over 80% of all enterprise information, yet traditional data mining tools aren’t built to handle the subtlety, ambiguity, or contextual nuance of human language. Miss a clause in a legal contract or a red-flag phrase in a patient record, and the financial—and regulatory—consequences can be savage. In fact, NLP market revenue hit $37.1 billion in 2023, with healthcare alone accounting for 20% of that spend (Scoop.market.us, 2024). This isn’t just about efficiency. It’s survival.

| Data Type | Structured (Relational DBs) | Semi-Structured (Emails, XML) | Unstructured (Reports, Contracts) |
|---|---|---|---|
| Volume in Enterprise | 10-20% | 15-25% | 60-80% |
| Analysis Readiness | High | Medium | Low |
| Risk of Oversight | Low | Medium | High |

Table 1: The distribution of data types and their risk profiles in a typical enterprise environment.

Source: Original analysis based on Expert.ai, 2023, Scoop.market.us, 2024

From spam filters to boardrooms: a brief history

Natural language document analysis didn’t burst onto the scene fully formed. Its roots run deep—from the early days of keyword-based spam filters in the late 1990s, through rule-based information retrieval, to the neural revolution of the 2010s. What started as a blunt instrument to keep junk out of your inbox now sits at the center of high-stakes corporate decisions and scientific discoveries.

- Spam Filtering (1998-2005): Primitive Bayesian classifiers flagging suspicious emails.

- Enterprise Search (2005-2012): Rule-based text mining for knowledge management.

- Semantic Analysis (2012-2017): Introduction of word embeddings and basic sentiment detection.

- Deep Learning Era (2017-2020): LSTM and transformer models enable context-aware parsing.

- LLM Explosion (2020-2024): Transformer-based large language models reshape the landscape, powering solutions like textwall.ai and driving market expansion.

| Era | Milestone Technology | Typical Application |
|---|---|---|
| 1998–2005 | Bayesian Classifiers | Spam detection, filtering |
| 2005–2012 | Rule-Based NLP | Enterprise search, compliance |
| 2012–2017 | Word Embeddings | Sentiment analysis, chatbots |
| 2017–2020 | LSTM, Transformers | Summarization, classification |
| 2020–2024 | LLMs (e.g., GPT) | Complex document analysis, insight mining |

Table 2: Evolution of document analysis technologies and their enterprise impact.

Source: Original analysis based on Gartner Hype Cycle, 2024, Scoop.market.us, 2024

The scale of the chaos: just how much data are we missing?

The numbers boggle the mind. IDC estimates that by 2025, global data volume will hit 175 zettabytes, with over 80% unstructured. Yet, according to Nature Reviews Psychology, 2024, most current AI models still struggle to grasp context, subtlety, and intent—even as they process petabytes of text. The upshot is that organizations are flying blind through a storm of information, missing critical signals.

The result? Missed risks, overlooked opportunities, and a growing gulf between organizations that harness advanced document analysis and those still wading through keyword soup.

From keyword soup to AI insight: how modern document analysis actually works

What is natural language document analysis—really?

It’s easy to get lost in the jargon. At its core, natural language document analysis refers to the process of using computational techniques—statistical, rule-based, or AI-powered—to extract structure, meaning, and actionable insights from text-heavy documents. But the devil is in the details.

- Document Parsing: Breaking down text into readable units (sentences, paragraphs, sections).

- Semantic Analysis: Interpreting meaning, context, and relationships between entities.

- Summarization: Distilling lengthy documents into concise, relevant overviews.

- Classification & Categorization: Sorting documents by topic, intent, or sentiment.

- Entity Extraction: Identifying names, dates, places, organizations, and more.

- Document Parsing: The initial breakdown of large texts into structured, analyzable units. This is foundational for downstream tasks and is often enhanced with advanced segmentation algorithms for improved accuracy (textwall.ai/document-parsing).

- Semantic Analysis: Going beyond mere keywords, this layer interprets context, nuance, and relationships, which is crucial for applications in law, healthcare, and business (Expert.ai, 2023).
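To make document parsing concrete, here is a minimal sketch in Python using only the standard library. It assumes paragraphs are separated by blank lines and sentences end in `.`, `!`, or `?`; the `parse_document` name and the sample text are illustrative, not taken from any platform mentioned here.

```python
import re

def parse_document(text: str) -> list[list[str]]:
    """Split raw text into paragraphs, then each paragraph into sentences."""
    # Paragraphs: separated by one or more blank lines (an assumption).
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    parsed = []
    for paragraph in paragraphs:
        # Collapse line wraps inside the paragraph before splitting.
        flat = " ".join(paragraph.split())
        # Naive rule: break after ., ! or ? followed by whitespace.
        # Abbreviations such as "Inc." or "e.g." would be split incorrectly.
        sentences = re.split(r"(?<=[.!?])\s+", flat)
        parsed.append([s for s in sentences if s])
    return parsed

sample = (
    "This Agreement begins on 1 March 2024. It renews annually.\n\n"
    "Either party may terminate with 30 days notice."
)
units = parse_document(sample)
for number, sentences in enumerate(units, start=1):
    print(number, sentences)
```

Production systems typically replace the regular expressions with trained segmenters, but the output shape (paragraphs containing sentences) is what downstream steps consume.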

LLMs vs classic NLP: the new battleground

For years, classic NLP systems relied on hand-crafted rules and statistical models. The rise of transformer-based large language models (LLMs) changed the equation. But are LLMs really superior—or do they introduce new headaches?

| Feature | Classic NLP | Transformer-based LLMs |
|---|---|---|
| Context Awareness | Limited | High (multi-sentence, document-level) |
| Training Data Requirement | Moderate | Massive |
| Domain Adaptability | Low | Moderate to High (with fine-tuning) |
| Explainability | High | Often poor |
| Computational Cost | Low | High |
| Hallucination Risk | Low | High |
| Current Enterprise Adoption | Medium | Rapidly growing (>50% in 2024) |

Table 3: Comparing classic NLP and LLMs in enterprise document analysis.

Source: Original analysis based on Nature Reviews Psychology, 2024

Why context matters more than keywords (and how AI gets it wrong)

For all their power, even today’s best AI models still stumble on context. According to Nature Reviews Psychology, 2024, language models often output plausible-sounding but contextually inaccurate text—a phenomenon known as “hallucination.” In high-stakes domains, this is more than an annoyance; it’s a liability.

"Current LLMs excel at generating fluent language but can falter when interpreting real-world context and pragmatic intent."

— Dr. Anna Ivanova, Cognitive Scientist, Nature Reviews Psychology, 2024

- Contextual ambiguity: Models can miss how a single word flips meaning, treating “cancel the contract” and “do not cancel the contract” as near-identical.

- Domain drift: A system trained on legal documents might misinterpret medical terminology.

- Surface-level matching: Relying on keyword frequency rather than genuine understanding can lead to false positives—and blind spots.

The solution? Human-in-the-loop models, domain-specific fine-tuning, and platforms that combine statistical muscle with expert oversight.
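The surface-level matching problem is easy to reproduce. The sketch below contrasts a plain keyword check with a crude negation-aware check; the negator list and the three-token window are arbitrary assumptions for illustration, and neither function reflects how any specific product works.

```python
NEGATORS = {"not", "never", "no"}  # deliberately tiny, illustrative list

def tokenize(text: str) -> list[str]:
    """Lowercase, drop periods, split on whitespace."""
    return text.lower().replace(".", "").split()

def keyword_hit(text: str, keyword: str) -> bool:
    """Surface-level matching: True if the keyword appears anywhere."""
    return keyword in tokenize(text)

def negation_aware_hit(text: str, keyword: str, window: int = 3) -> bool:
    """True only if the keyword occurs with no negator in the preceding tokens."""
    tokens = tokenize(text)
    for i, token in enumerate(tokens):
        if token == keyword:
            preceding = tokens[max(0, i - window):i]
            if not NEGATORS.intersection(preceding):
                return True
    return False

for sentence in ("Please cancel the contract.", "Do not cancel the contract."):
    print(sentence, keyword_hit(sentence, "cancel"), negation_aware_hit(sentence, "cancel"))
```

The keyword check flags both sentences, while the negation-aware check flags only the first. A fixed window is still a heuristic and breaks on longer or nested negations, which is part of why human review stays in the loop.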

Inside the black box: what actually happens when you upload a document

Step-by-step walkthrough: AI document analysis in action

Ever wondered what happens the moment you upload a densely packed report or legal contract to an AI platform? Here’s a blow-by-blow:

- Document Ingestion: The platform parses the file format (PDF, Word, scanned image) and converts it to machine-readable text.

- Preprocessing: Text is cleaned: punctuation standardized, irrelevant metadata removed, language detected.

- Segmentation: Sentences and paragraphs are demarcated; sections and headings are identified for context.

- Feature Extraction: Named entities (people, organizations, dates) and key phrases are highlighted.

- Semantic Analysis: The system applies transformer-based models, graph neural networks, or hybrid approaches to interpret meaning, context, and relationships. This is where LLMs or fine-tuned models shine.

- Summarization and Categorization: Insights are distilled into concise summaries or categorized by topic, sentiment, or risk.

- User Feedback Loop: Many platforms allow users to flag errors or refine results, fueling continuous learning.
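The steps above can be sketched as a chain of small functions. This is a toy pipeline under stated assumptions: ingestion has already produced plain text, feature extraction only finds ISO-style dates, and the "summary" is just the first sentence standing in for a model call. All names (`AnalysisResult`, `analyze`, and so on) are hypothetical.

```python
import re
from dataclasses import dataclass, field

@dataclass
class AnalysisResult:
    sentences: list[str] = field(default_factory=list)
    dates: list[str] = field(default_factory=list)
    summary: str = ""

def preprocess(raw: str) -> str:
    """Normalize curly quotes and collapse runs of whitespace."""
    cleaned = raw.replace("\u201c", '"').replace("\u201d", '"').replace("\u2019", "'")
    return " ".join(cleaned.split())

def segment(text: str) -> list[str]:
    """Naive sentence segmentation on terminal punctuation."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text) if s]

def extract_dates(text: str) -> list[str]:
    """Toy feature extraction: ISO-style dates only (YYYY-MM-DD)."""
    return re.findall(r"\b\d{4}-\d{2}-\d{2}\b", text)

def summarize(sentences: list[str], max_sentences: int = 1) -> str:
    """Placeholder summary: the leading sentences, not a real model."""
    return " ".join(sentences[:max_sentences])

def analyze(raw: str) -> AnalysisResult:
    """Run preprocessing, segmentation, feature extraction, and summarization."""
    text = preprocess(raw)
    sentences = segment(text)
    return AnalysisResult(sentences, extract_dates(text), summarize(sentences))

result = analyze("The  lease starts 2024-03-01.\nRent is due monthly.  It ends 2025-02-28.")
print(result.dates)
print(result.summary)
```

Keeping each stage as a separate function makes it simple to swap the placeholder for a real model later, or to log intermediate output when a result looks wrong.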

Common mistakes that sabotage your results

Missteps abound, even with the best AI at your fingertips:

- Uploading low-quality scans or non-editable PDFs, leading to OCR errors and missing data.

- Ignoring domain-specific vocabulary: standard models may not recognize sector-specific jargon.

- Relying solely on out-of-the-box summaries, which can overlook critical subtleties.

- Skipping the human-in-the-loop phase and failing to review flagged ambiguities.

- Assuming perfect accuracy from AI outputs.

- Failing to provide context for analysis (e.g., document type or industry).

- Uploading documents with mixed languages without proper preprocessing.

- Not updating models with new domain data, leading to outdated interpretations.

How textwall.ai fits into today’s landscape

Platforms like textwall.ai represent the apex of current AI document analysis. Rather than promising silver bullets, they blend advanced LLMs, customizable analysis preferences, and scalable architectures. The result? Faster, more accurate parsing of complex documents in law, research, business, and beyond. Trusted by analysts, researchers, and professionals who need more than just "good enough," textwall.ai’s AI adapts to context, surfaces critical insights, and integrates seamlessly into existing workflows.

Mythbusting: what everyone gets wrong about AI and document analysis

Debunking the ‘set and forget’ fantasy

It’s tempting to treat AI document analysis as a plug-and-play solution. The reality is fiercer. AI models are only as good as their training, the quality of your data, and the vigilance of your oversight.

“Document analysis isn’t a set-and-forget affair. Real value comes from an ongoing feedback loop between humans and machines.”

— Dr. Emily Bender, Computational Linguist, Nature Reviews Psychology, 2024

The myth of perfect accuracy (and why nuance wins)

AI is not infallible. According to Scoop.market.us, 2024, even state-of-the-art models achieve only 94% recall in domain-specific tasks like patent analysis using graph neural networks—a major leap, but not perfection.

| Task | Best AI Recall | Human Analyst Recall | Nuanced Errors (Missed context, bias) |
|---|---|---|---|
| Patent Analysis (GNN-based) | 94% | 98% | Medium |
| Legal Clause Extraction (LLM) | 87% | 96% | High |
| Sentiment in Customer Feedback | 82% | 90% | High |

Table 4: AI vs. human accuracy and where nuance makes a difference.

Source: Original analysis based on ACL Anthology, 2024, Scoop.market.us, 2024

- Subtle context switches (negation, sarcasm, legal double negatives) trip up even elite models.

- Domain knowledge gaps: General-purpose LLMs flounder in technical or industry-specific documents.

- Bias and data drift: AI is only as objective as the data it’s trained on.
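Recall figures like those in Table 4 are simple to compute once you have a labeled sample: recall is the share of truly relevant items the system actually found, TP / (TP + FN). The counts below are invented purely to show the arithmetic.

```python
def recall(true_positives: int, false_negatives: int) -> float:
    """Recall = TP / (TP + FN): the fraction of relevant items that were found."""
    relevant = true_positives + false_negatives
    if relevant == 0:
        raise ValueError("recall is undefined when there are no relevant items")
    return true_positives / relevant

# Hypothetical review set: 200 clauses a human marked as relevant,
# of which the system surfaced 174 and missed 26.
clause_recall = recall(true_positives=174, false_negatives=26)
print(f"{clause_recall:.0%}")
```

Recall says nothing about false alarms, so it is usually reported alongside precision. In this made-up sample, an 87% score still means 26 clauses nobody was shown.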

Bias, hallucination, and the limits of LLMs

While LLMs are formidable, they’re not oracles. According to Nature Reviews Psychology, 2024, model hallucination—where AI generates plausible-sounding but false text—remains a persistent challenge. This isn’t just theoretical: in legal or compliance contexts, a single error can cost millions.

Game-changers: real-world stories where document analysis turned the tide

How a global NGO uncovered fraud with AI

A leading NGO faced a classic dilemma: thousands of expense reports, hundreds of pages each, and a suspicion that something didn’t add up. Manual review would have taken months. By deploying an AI-powered document analysis platform, they identified linguistic anomalies—unusual phrasing, repeated justifications across different reports, and discrepancies in signature styles. Within days, the NGO flagged fraudulent documents linked to a coordinated scheme, saving over $2 million in potential losses.

- Data ingestion: Bulk upload of scanned reports.

- Semantic anomaly detection: AI flagged suspicious patterns.

- Human review: Experts confirmed AI findings, leading to further investigation.

- Actionable insight: Policy changes implemented based on the discovered fraud patterns.
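One way to surface repeated justifications across reports is near-duplicate detection. The sketch below uses Jaccard similarity over three-word shingles; it is a common baseline technique, not a description of what the NGO's platform actually ran, and the report IDs, texts, and 0.5 threshold are made up.

```python
from itertools import combinations

def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Set of overlapping word n-grams (shingles) from lowercased text."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}

def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of the intersection over size of the union."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def flag_repeats(reports: dict[str, str], threshold: float = 0.5) -> list[tuple[str, str]]:
    """Return pairs of report IDs whose text overlaps at or above the threshold."""
    sets = {report_id: shingles(text) for report_id, text in reports.items()}
    return [
        (a, b)
        for a, b in combinations(sorted(sets), 2)
        if jaccard(sets[a], sets[b]) >= threshold
    ]

reports = {
    "R-101": "urgent travel required for unplanned donor meeting in the region",
    "R-102": "urgent travel required for unplanned donor meeting in the capital",
    "R-103": "replacement laptop purchased after hardware failure",
}
flagged = flag_repeats(reports)
print(flagged)
```

Comparing every pair is fine for hundreds of reports; at larger volumes, techniques such as MinHash are typically used to avoid the quadratic cost.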

Three ways journalists are using AI to break stories

Investigative journalists now rely on advanced document analysis to sift through leaks and data dumps, surfacing the stories that matter:

- Pattern recognition in whistleblower leaks: AI uncovers repeated phrases and unusual metadata in thousands of files.

- Automatic topic clustering: Grouping related documents helps journalists focus on the biggest red flags first.

- Sentiment and tone analysis: Detecting shifts in language that might indicate coercion or concealment.

- Automated summarization of hours of legal testimony.

- Detection of inconsistencies in regulatory disclosures.

- Source triangulation by cross-referencing public and leaked documents.

The academic revolution: what researchers are doing with LLMs

Academic researchers increasingly leverage LLMs for literature reviews, metadata extraction, and trend analysis across thousands of publications.

"Large language models have cut literature review times by up to 40%, allowing researchers to focus on genuine discovery instead of manual search."

— Dr. Julian Becker, Research Director, ACL Anthology, 2024

The edge: advanced strategies for extracting actionable insights

Semantic search: beyond surface-level analysis

Forget simple keyword matching. Semantic search uses deep learning to connect concepts, intent, and relationships—surfacing information that keywords alone would miss.

- Semantic search: An AI technique that matches user queries to documents based on meaning, not just words, enabling discovery of relevant content that might not share obvious phrasing.

- Graph neural networks (GNNs): Networks that operate on data structured as graphs, mapping relationships between entities. According to ACL Anthology, 2024, GNNs enable up to 94% recall in patent and technical document analysis.
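Here is a stripped-down picture of semantic search: documents and queries become vectors, and ranking uses cosine similarity instead of shared words. The three-dimensional vectors below are hand-made stand-ins for embeddings a real model would produce, and the dimension meanings are invented for readability.

```python
import math

def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

# Toy "embeddings". Invented dimensions: [termination, payment, confidentiality].
doc_vectors = {
    "Either party may end this agreement with notice.": [0.9, 0.1, 0.0],
    "Invoices are payable within 30 days.": [0.0, 0.95, 0.05],
    "Recipient shall not disclose proprietary information.": [0.05, 0.0, 0.9],
}

def search(query_vector: list[float], top_k: int = 1) -> list[str]:
    """Rank documents by similarity to the query vector, best first."""
    ranked = sorted(
        doc_vectors,
        key=lambda doc: cosine(query_vector, doc_vectors[doc]),
        reverse=True,
    )
    return ranked[:top_k]

# Assumed vector for the query "How do I cancel the contract?".
query_vector = [0.85, 0.05, 0.1]
best = search(query_vector)
print(best)
```

The top result shares no words with the query, which is exactly the case keyword matching misses. In practice the vectors come from an embedding model and live in a vector index instead of a dictionary.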

Combining human intuition with machine muscle

Pure automation is a myth—AI is at its best when amplifying human insight, not replacing it.

- Active learning: Models learn fastest when users flag errors, feeding new labeled data back into the system.

- Expert-in-the-loop: Domain specialists review and correct AI outputs, dramatically improving overall system performance.

- Continuous retraining: Regular updates with new domain data keep models from drifting into irrelevance.

- Hybrid workflows: AI handles grunt work; humans make the final calls.
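A hybrid workflow often comes down to a routing rule: accept confident predictions automatically and queue the rest for an expert. The sketch below assumes the model exposes a confidence score between 0 and 1; the `Prediction` type, the labels, and the 0.8 cutoff are all illustrative choices.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    doc_id: str
    label: str
    confidence: float  # model's own score in [0, 1]; assumed to be available

def route(predictions: list[Prediction], threshold: float = 0.8):
    """Split predictions into auto-accepted items and a human review queue."""
    auto, review = [], []
    for prediction in predictions:
        (auto if prediction.confidence >= threshold else review).append(prediction)
    # Least confident first, so reviewers see the shakiest calls early.
    review.sort(key=lambda p: p.confidence)
    return auto, review

batch = [
    Prediction("doc-1", "high_risk", 0.95),
    Prediction("doc-2", "low_risk", 0.55),
    Prediction("doc-3", "high_risk", 0.72),
]
auto, review = route(batch)
print([p.doc_id for p in auto], [p.doc_id for p in review])
```

Corrections made in the review queue are the labeled examples that active learning and retraining depend on, so the threshold is also a lever on how much feedback you collect.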

Feature engineering: what separates average from elite

What’s under the hood matters. Elite document analysis systems invest heavily in feature engineering—curating the right input signals for models, from syntactic cues to topic modeling vectors.

| Feature Type | Importance in Analysis | Example Application |
|---|---|---|
| Named Entity Recognition | Critical | Contract review, risk flagging |
| Topic Modeling | High | Academic paper clustering |
| Dependency Parsing | Medium | Legal clause extraction |
| Sentiment Analysis | Medium | Customer feedback, social media |
| Custom Taxonomies | High | Regulatory compliance |

Table 5: Feature contributions to advanced document analysis.

Source: Original analysis based on Expert.ai, 2023, ACL Anthology, 2024
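As one example from Table 5, a custom taxonomy can start as nothing more than a mapping from categories to trigger terms. The categories and terms below are hypothetical, and the matching is a simple word-prefix search, far cruder than what a tuned compliance system would use.

```python
import re

# Hypothetical compliance taxonomy: category -> trigger terms.
TAXONOMY = {
    "data_protection": ["personal data", "data subject", "retention period"],
    "termination": ["terminate", "notice period"],
    "liability": ["indemnify", "limitation of liability"],
}

def tag_document(text: str, taxonomy: dict[str, list[str]] = TAXONOMY) -> dict[str, list[str]]:
    """Return each matched category with the terms that triggered it."""
    lowered = text.lower()
    tags = {}
    for category, terms in taxonomy.items():
        # \b anchors the start of the term only, so "terminate" also
        # matches "terminates" or "terminated".
        hits = [t for t in terms if re.search(r"\b" + re.escape(t), lowered)]
        if hits:
            tags[category] = hits
    return tags

clause = (
    "The Processor shall delete personal data after the retention period "
    "and may terminate on breach."
)
tags = tag_document(clause)
print(tags)
```

Returning the triggering terms alongside the category keeps the result explainable: a reviewer can see why a clause was tagged.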

Risks, red flags, and the future: what nobody wants to talk about

Data privacy nightmares and how to fight back

Processing sensitive documents—contracts, medical records, HR files—comes with real privacy risks.

- Unencrypted data transfer: Always demand end-to-end encryption for uploads and downloads.

- Cloud vs. on-premises: Know where your data lives, and choose platforms that offer private deployment options.

- Model data leakage: Avoid solutions that use your proprietary data to train public models.

- Inadequate access controls: Ensure strict user permissions and audit trails for every document action.
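To show what "strict user permissions and audit trails" can look like in code, here is a minimal sketch. The roles, actions, and in-memory log are hypothetical; a real deployment would rely on the platform's own identity system and tamper-resistant log storage.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping.
PERMISSIONS = {
    "analyst": {"read"},
    "admin": {"read", "export", "delete"},
}

audit_log: list[dict] = []

def perform(user: str, role: str, action: str, doc_id: str) -> bool:
    """Check permission and record the attempt, whether allowed or denied."""
    allowed = action in PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "doc_id": doc_id,
        "allowed": allowed,
    })
    return allowed

read_ok = perform("dana", "analyst", "read", "contract-17")
export_ok = perform("dana", "analyst", "export", "contract-17")
print(read_ok, export_ok, len(audit_log))
```

Denied attempts are logged too, since a pattern of refused exports is often the more interesting signal.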

The explainability dilemma: when you can’t trust the machine

Even the most advanced systems can deliver baffling results. When you don’t know why an AI made a decision, trust—and regulatory compliance—fracture.

“Explainable AI isn’t a luxury. In regulated industries, it’s non-negotiable.”

— Dr. Laura Chen, AI Governance Expert, Nature Reviews Psychology, 2024

The regulatory curveball: what’s coming in 2025

Regulatory landscapes are shifting. Laws like the EU AI Act and U.S. privacy regulations are forcing organizations to rethink document analysis workflows, emphasizing transparency, auditability, and data protection.

| Region | Key Regulation | Impact on Document Analysis |
|---|---|---|
| EU | AI Act, GDPR | Strict transparency, data residency |
| US | CCPA, NIST AI Risk | Disclosure, opt-out requirements |
| APAC | Local privacy laws | Varies by country |

Table 6: Regulatory factors affecting document analysis platforms in major markets.

Source: Original analysis based on Gartner Hype Cycle, 2024

How to master natural language document analysis: your action plan

Priority checklist for bulletproof analysis

Failing to plan is planning to fail. Here’s how to lock down your document analysis workflows:

- Define your objectives: Know whether you’re after compliance, insight, or automation—different goals, different toolkits.

- Vet your data: Clean, high-quality documents yield better AI results.

- Pick the right platform: Don’t settle for generic NLP—seek out tools proven in your industry.

- Customize your models: Fine-tune with domain data and human feedback loops.

- Audit outputs: Routinely review results for errors, bias, and drift.

- Protect your data: Demand transparency about storage, processing, and privacy.

- Train your team: Make sure users understand both strengths and limitations.

Red flags to watch out for in vendor promises

Don’t get blindsided by clever marketing. Be alert for:

- Claims of “100% accuracy”—AI doesn’t do perfection.

- Opaque models: If you can’t audit outputs, walk away.

- No human-in-the-loop: Pure automation is a myth.

- Lack of privacy controls: If the vendor can’t explain data handling, run.

- Slow model updates: Stale models are liability magnets.

- One-size-fits-all solutions: Your industry needs specifics, not generalities.

Insider tips for getting results fast

- Upload clean, machine-readable files for best results.

- Always review AI-suggested summaries and flag errors for retraining.

- Leverage domain-specific models and taxonomies when available.

- Use hybrid workflows—let the system surface signals, but keep final review with a human.

- Stay engaged with community forums and user groups for latest hacks.

- Set up recurring retraining schedules for your models.

- Keep feedback loops short—close the gap between user review and model update.

- Document your own best practices and share them with your team.

Beyond the hype: where document analysis goes next

Will LLMs replace human judgment—or just amplify it?

The smartest organizations treat AI as a force multiplier, not a replacement. As Dr. Emily Bender notes:

“AI amplifies expertise rather than replacing it. It’s the combination of human context and machine scale that delivers breakthrough insights.”

— Dr. Emily Bender, Computational Linguist, Nature Reviews Psychology, 2024

Emerging applications nobody’s talking about

- Real-time compliance monitoring in government procurement.

- Dynamic risk assessment for insurance claims using semantic analysis.

- Automated curation of academic literature for meta-analyses.

- Live feedback systems in customer support, flagging intent and urgency.

- Adaptive education content review for curriculum alignment.

- Misinformation detection in public policy documents.

- Deep-dive analysis for ESG (Environmental, Social, Governance) reporting.

What to watch in the next 18 months

- Rapid expansion of multilingual document analysis tools.

- Mainstreaming of privacy-preserving techniques (e.g., federated learning).

- Proliferation of explainable AI requirements in regulated industries.

- Explosion of open-source, community-driven document analysis libraries.

- Tighter integration of semantic search with business intelligence platforms.

Essential glossary: decoding the jargon

- Natural Language Processing (NLP): The field of computer science focused on enabling machines to understand, interpret, and generate human language. Modern NLP powers document analysis, chatbots, and more.

- Large Language Models (LLMs): AI models trained on massive text datasets to understand and generate natural language, with transformer-based models such as GPT dominating the field since 2020.

- Semantic Analysis: The layer of document analysis concerned with extracting meaning and context, not just word counts or frequencies.

- Transformer: An architecture for deep learning models that uses self-attention to process sequences of text, enabling greater context awareness. Transformers underpin LLMs and are central to modern AI document analysis.

- Named Entity Recognition (NER): The process of identifying and classifying key entities (such as people, organizations, and locations) in a body of text.

A well-rounded understanding of these terms arms you to parse marketing claims and recognize real innovation in a crowded field.

Natural language document analysis isn’t for the faint-hearted. But with these tools, you’re ready to outpace the competition.

Appendix: extended comparisons, case specifics, and further resources

Timeline: the evolution of document analysis

The journey from spam filters to semantic supercomputers is paved with breakthroughs—and plenty of missteps.

| Year | Milestone | Impact |
|---|---|---|
| 1998 | Spam filter launch | First NLP in enterprise |
| 2005 | Rule-based search | Shift to structured retrieval |
| 2012 | Word embeddings | Semantic similarity between words |
| 2017 | Transformers | Deep context via self-attention |
| 2020 | LLMs (e.g., GPT) | Fluent summarization at scale |
| 2024 | GNNs & hybrid AI | Up to 94% recall in technical docs |

Table 7: Key milestones in the evolution of document analysis.

Source: Original analysis based on Gartner Hype Cycle, 2024, ACL Anthology, 2024

- Early keyword-based systems.

- Semantic analysis and word vectors.

- Rise of LLMs and transformer models.

- Industry adoption in law, healthcare, and research.

- Hybrid and explainable AI for nuanced analysis.

Case study breakdowns: process, pitfalls, and outcomes

- Legal review: AI flagged 17 high-risk clauses in thousands of contracts, reducing manual review time by 70%, but missed a rare jurisdictional phrase requiring human intervention.

- Market research: Automated summarization processed 500+ reports in a week, but section headers in multiple languages caused minor misclassifications.

- Academic analysis: LLMs identified emerging trends in climate research, but struggled with new terminology in preprints.

- Unusual data formats required custom preprocessing.

- Users needed training to interpret AI outputs.

- Real-world outcomes included faster insights, more consistent compliance, and dramatic cost savings.

Where to learn more and connect with the community

- ACL Anthology: Cutting-edge research on NLP and document analysis.

- Nature Reviews Psychology: In-depth discussion of LLM limitations.

- Scoop.market.us: Latest market statistics and trends.

- Expert.ai: Enterprise NLP use cases and industry data.

- Gartner Hype Cycle: Technology adoption in document analysis.

- textwall.ai: Leading-edge solutions and thought leadership in document processing.

Conclusion

Natural language document analysis isn’t just a technical upgrade—it’s a strategic necessity in a world awash with unstructured data. As research from Nature Reviews Psychology, 2024 and Scoop.market.us, 2024 reveals, the landscape is fast-moving, complex, and loaded with both promise and peril. The organizations that thrive are those that see through the myths, embrace the brutal truths, and wield the right combination of AI insight and human judgment.

Whether you’re a legal eagle, a research powerhouse, or a business strategist, mastering natural language document analysis is about more than automating grunt work. It’s about surfacing the signals that matter, outmaneuvering risk, and making decisions at the speed of relevance. Don’t settle for good enough. Demand clarity, context, and real insight. The next move is yours.

Sources

References cited in this article

- Gartner Hype Cycle 2024 (gartner.com)

- Scoop.market.us (scoop.market.us)

- Nature Reviews Psychology (nature.com)

- ACL Anthology 2024 (aclanthology.org)

- MarketsandMarkets (marketsandmarkets.com)

- Expert.ai (aibusiness.com)

- Formtek Blog (formtek.com)

- Statista (storvix.eu)

- Gartner (montecarlodata.com)

- Komprise Report (komprise.com)

- SpotIntelligence (spotintelligence.com)

- Medium (medium.com)

- Dataversity (dataversity.net)

- Indico Data (indicodata.ai)

- Exploding Topics (explodingtopics.com)

- DocumentLLM (documentllm.com)

- Netguru (netguru.com)

- Cogninest.ai (cogninest.ai)

- Otakoyi (otakoyi.software)

- Softermii (softermii.com)

- Elastic (elastic.co)

- Loffler Blog (loffler.com)

- Expert.ai (content.expert.ai)

- PLOS ONE (2024) (journals.plos.org)

- J Med Internet Res (2024) (jmir.org)

- BMC Public Health (2023) (bmcpublichealth.biomedcentral.com)

- ResearchGate (researchgate.net)

- Merkle (merkle.com)

- Forbes (forbes.com)

- Oxford Academic (2024) (academic.oup.com)

- Intelligent Document Processing (intelligentdocumentprocessing.com)

- Siemens Thought Leadership (blogs.sw.siemens.com)

- Wikipedia (en.wikipedia.org)

- Springer AI Review (link.springer.com)

- The Qualitative Report (nsuworks.nova.edu)

- Coherent Solutions (coherentsolutions.com)

- LANSA (lansa.com)

- Sprout Social (sproutsocial.com)
