Document Summarization Technical Use When Accuracy Is Non‑negotiable

In a world where information no longer arrives in manageable waves but in relentless, suffocating tsunamis, navigating the raw, unfiltered chaos of documents has become an existential challenge. Enterprises, legal teams, academics, and analysts are drowning in reports, contracts, studies, and manuals. Technical document summarization (AI-powered, automated, and allegedly “smart”) has been pitched as salvation. But beneath the glossy marketing and feverish hype, what is the real story? Does the technology actually deliver, or are we blindly trusting black-box algorithms to make billion-dollar decisions? This is an unflinching inside look at the guts and glitches of document summarization: the hard truths, the hidden wins, and the pitfalls nobody on the sales floor wants to admit. Whether you’re a skeptic, an early adopter, or just overwhelmed by the daily avalanche, buckle up as we unmask the state of the art and the state of the problem.

Why document summarization matters more than you think

The information overload: a new era of document chaos

Step inside the modern workplace and you’ll find a digital landfill of unstructured text: ten-thousand-page legal disclosures, endless market research PDFs, sprawling technical manuals. According to recent data, the average enterprise processes well over 500,000 documents annually, with content growing at 40% per year (ShareFile, 2023). Add to that the near-instantaneous production of content by AI itself, and you’re staring down the barrel of an information apocalypse.

And it’s not just about volume. Add variety and velocity, and you have a triple threat that manual teams simply can’t keep up with. Each missed insight, every overlooked red flag, isn’t just a “learning opportunity”; it’s a liability.

- Data proliferation: As digital documents multiply, critical data is buried deeper than ever. A missed key point can mean a missed deadline, a regulatory infraction, or a strategic misstep.

- Cognitive burden: Human analysts face chronic burnout as they attempt to synthesize massive content, often at the expense of accuracy and well-being.

- Inefficient workflows: Manual review is slow and inconsistent, frequently leading to redundant work and bottlenecks that stall decision-making.

The stakes are higher than ever. Organizations that fail to tame this chaos risk falling behind, not just competitively but also legally and ethically.

Business stakes: when a summary can make or break millions

If you think a bad summary is just a minor inconvenience, think again. In legal, finance, and compliance, a single overlooked clause or misinterpreted insight can swing deals, ignite lawsuits, or trigger regulatory audits. Recent research from an AmLaw 100 law firm (2023) found that AI-based summarization reduced contract review time by up to 70%—but only when deployed with stringent oversight.

| Business Function | Impact of Document Summarization | Potential Risks |
|---|---|---|
| Legal Review | 70% faster contract analysis | Missed legal nuances, compliance errors |
| Market Research | 60% quicker insight extraction | Summary bias, information loss |
| Healthcare Admin | 50% reduced workload | Omitted clinical details, privacy risks |
| Academic Research | 40% less time per literature review | Misinterpretation of research findings |

Table 1: Business impact and risks associated with document summarization (Source: Original analysis based on ShareFile, 2023; AmLaw 100, 2023)

What this table doesn’t show is the invisible underbelly—how a mis-summarized document can snowball into catastrophic losses. As one compliance officer from a Fortune 500 company put it:

“An automated summary is only as good as what it leaves out. We’ve seen missing details cost millions in regulatory fines and lost deals.” — Compliance Officer, Fortune 500, cited in ShareFile AI Summarization Guide, 2023

In other words, the promise of efficiency can become a trap if not paired with scrutiny.

The human cost: burnout, bias, and the promise of relief

Behind every inefficiency is a person—or a team—forced to pick up the slack. Document overload isn’t just a technical headache; it’s a very human crisis. Burnout rates in compliance and legal review roles are at record highs, with research showing 62% of professionals citing “document fatigue” as a primary stressor (Jain et al., 2024).

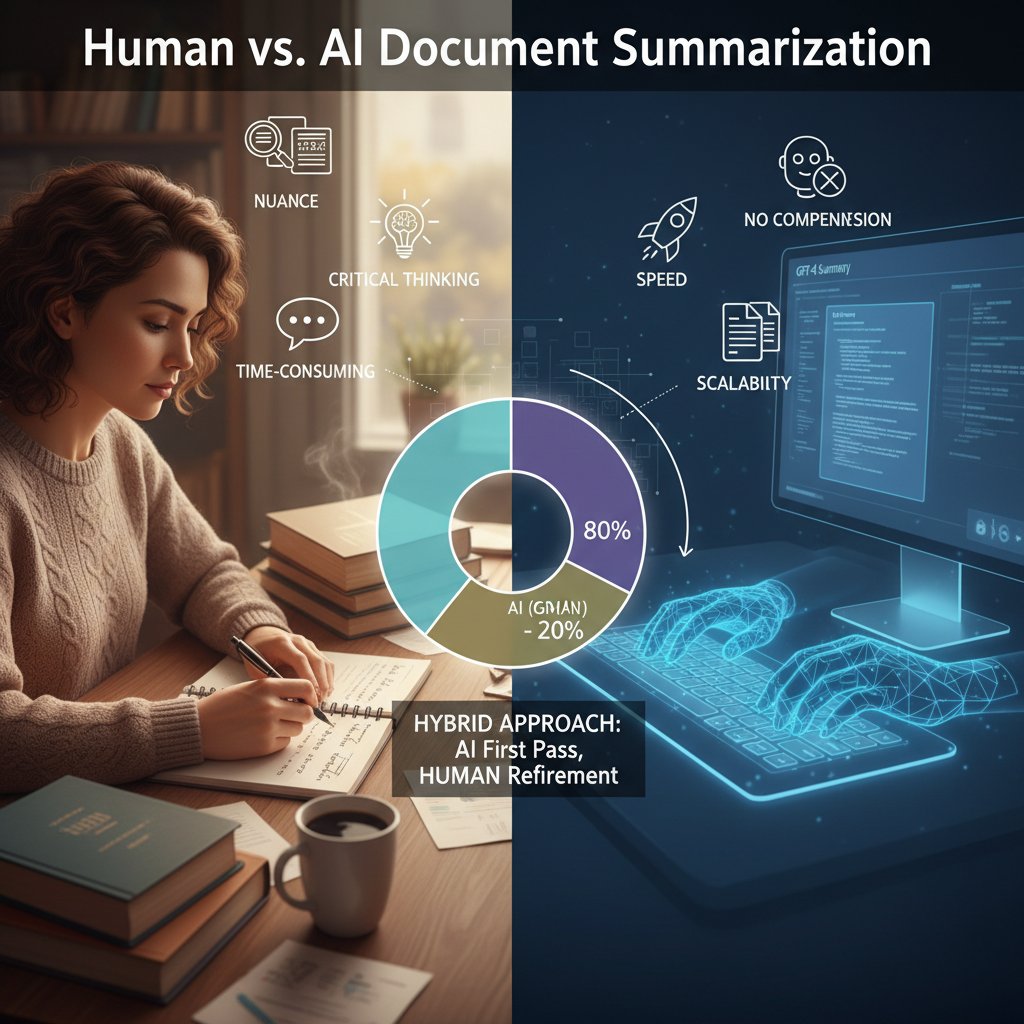

But the cost isn’t just exhaustion. Human reviewers introduce their own biases, glossing over “irrelevant” content that might, in fact, be crucial. The allure of AI-powered summarization is the promise of relief: freeing human minds from tedious grind, restoring focus to strategic, creative endeavors.

Yet, trusting algorithms means swapping one set of biases for another. The solution? Hybrid approaches that empower humans with AI, instead of replacing them. This isn’t about “saving jobs” as much as saving sanity—and decision quality.

Unpacking how document summarization actually works

From extractive to abstractive: the two faces of summarization

At its core, document summarization splits into two camps: extractive and abstractive. Understanding the difference is everything.

Extractive summarization: Selects and stitches together the most important sentences or phrases from the source document. No new language, just a greatest-hits compilation.

Abstractive summarization: Synthesizes and paraphrases information, generating new sentences that may not exist verbatim in the original. This is “human-like” summarization: harder, riskier, more powerful.

Most commercial systems (including many “AI-powered” tools) are still largely extractive under the hood, despite flashy claims.
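
To make the extractive approach concrete, here is a minimal sketch of frequency-based sentence scoring in Python. It is a toy illustration, not how any particular commercial product works; the function name `extractive_summary`, the tiny stopword list, and the scoring rule are all assumptions made for this example.

```python
import re
from collections import Counter

# Tiny illustrative stopword list (an assumption; real systems use larger ones).
STOPWORDS = {"the", "a", "an", "of", "to", "and", "in", "is", "it", "for",
             "on", "that", "this", "with", "as", "by", "be", "are", "was"}

def split_sentences(text):
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def tokenize(sentence):
    """Lowercase word tokens with stopwords removed."""
    words = re.findall(r"[a-z0-9']+", sentence.lower())
    return [w for w in words if w not in STOPWORDS]

def extractive_summary(text, max_sentences=2):
    """Return the top-scoring sentences, verbatim and in original order."""
    sentences = split_sentences(text)
    freq = Counter(w for s in sentences for w in tokenize(s))

    def score(sentence):
        tokens = tokenize(sentence)
        # Average word frequency, so long sentences are not favored automatically.
        return sum(freq[w] for w in tokens) / len(tokens) if tokens else 0.0

    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])  # restore document order
    return [sentences[i] for i in keep]
```

Because every output sentence is copied from the source, this style of summarizer cannot invent facts, but it also cannot merge or rephrase ideas, which is the trade-off described above.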

The devil is in the details. Here’s a comparison of the two approaches:

| Summarization Type | Pros | Cons |
|---|---|---|
| Extractive | High factual accuracy, low risk of “hallucination” | Can be incoherent, misses deeper meaning, often verbose |
| Abstractive | More concise, human-like, captures nuance | Prone to errors, hallucinations, requires more training data |

Table 2: Extractive vs. Abstractive Summarization (Source: Hassija et al., 2023; Wang et al., 2024)

No single method is “best”—it’s all about context and risk tolerance. Hybrid models are emerging, but they bring their own complexity (Link & Hooker, 2024).

The LLM revolution: why GPT-style models changed the game

Enter the age of the transformer: GPT and its ilk have cracked open new possibilities for technical document summarization. These models don’t just regurgitate; they generate, condense, and “reason” in ways that can resemble human cognition.

What makes LLMs (large language models) revolutionary is their ability to handle diverse topics, adapt to different genres, and incorporate external knowledge. Suddenly, summaries aren’t just shorter; they’re potentially smarter, more relevant, and hyper-customized.

But here’s the rub: these models are as opaque as they are powerful. According to Hassija et al. (2023), neural summarizers operate as black boxes—making explainability a major issue, especially in regulated industries. You might get a beautiful summary, but you’re left wondering: how, and why, did it choose those points?

Beneath the surface, limitations persist. LLMs are constrained by context windows (the amount of text they can “see” at once), and they’re only as reliable as the data they’re fed. In technical or domain-specific content, generic models routinely falter, necessitating domain adaptation (Jain et al., 2024).
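
One common workaround for context window limits is to split a long document into overlapping chunks, summarize each chunk, and then summarize the summaries. The sketch below assumes a hypothetical `summarize` callable standing in for whatever model you use; word counts serve as a rough proxy for tokens, which real tokenizers will not match exactly.

```python
from typing import Callable, List

def chunk_words(text: str, max_words: int = 200, overlap: int = 20) -> List[str]:
    """Split text into word-based chunks that share `overlap` words with neighbors."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks

def map_reduce_summary(text: str,
                       summarize: Callable[[str], str],
                       max_words: int = 200,
                       overlap: int = 20) -> str:
    """Summarize each chunk (map), then summarize the joined partials (reduce)."""
    chunks = chunk_words(text, max_words, overlap)
    if len(chunks) <= 1:
        return summarize(text)
    partials = [summarize(chunk) for chunk in chunks]
    return summarize(" ".join(partials))
```

The overlap reduces the chance that a sentence split across a chunk boundary is lost. Note that this single reduce step assumes the joined partial summaries fit in the window; very long documents may need another level of reduction.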

Technical bottlenecks: accuracy, context, and the hallucination trap

The harshest truth about technical document summarization? It’s not as “set and forget” as tech evangelists claim. Every system dances with three demons: accuracy, context, and hallucination.

| Bottleneck | Description | Impact |
|---|---|---|
| Factual Accuracy | Ensuring summaries are true to source | Misinformation, legal liability |
| Context Comprehension | Maintaining relevance and coherence | Misleading or incomplete summaries |
| Hallucination | Inventing facts or details not in source | Trust erosion, compliance risks |

Table 3: Core technical bottlenecks in document summarization (Source: Wang et al., 2024)

Even the best systems stumble over:

- Long documents: LLMs struggle past certain lengths due to context window limits, often skipping or distorting key information.

- Specialized jargon: Generic models misinterpret technical terms, especially in law, medicine, or engineering.

- Metric deception: Standard metrics (like ROUGE and BLEU) measure word overlap with a reference text, so they don’t always reflect summary usefulness or factuality.

To mitigate, leading-edge solutions employ reinforcement learning, hierarchical models, or hybrid pipelines—but complexity skyrockets, and transparency suffers.
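
A cheap first line of defense against hallucination is to check that every number in a summary also appears in the source. The sketch below is a heuristic under stated assumptions: the function name `unsupported_numbers` is invented here, the pattern treats commas as thousands separators, and the check only catches invented figures, not paraphrased or subtly distorted claims.

```python
import re

# Digits with optional '.' or ',' groups and an optional trailing percent sign.
NUMBER_PATTERN = re.compile(r"\d+(?:[.,]\d+)*%?")

def extract_numbers(text):
    """Return the set of numeric tokens, with thousands separators removed."""
    return {m.group().replace(",", "") for m in NUMBER_PATTERN.finditer(text)}

def unsupported_numbers(source, summary):
    """Numbers that appear in the summary but nowhere in the source."""
    return sorted(extract_numbers(summary) - extract_numbers(source))
```

An empty result does not prove the summary is faithful; a non-empty one is a strong hint that it deserves a human look before anyone acts on it.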

Real-world case studies: document summarization in the wild

Compliance audits: when every word is a liability

In regulated industries, a missed clause isn’t a footnote; it can be a felony. Here’s how technical document summarization is deployed in compliance.

| Organization Type | Summarization Role | Outcome | Downsides |
|---|---|---|---|
| Global Bank | AML review, KYC checks | 50% time reduction | Occasional false negatives |
| Pharma Manufacturer | Regulatory submission vetting | 60% faster error detection | Risk of missing subtle language |
| Tech Enterprise | GDPR compliance mapping | Improved audit throughput | Black-box model explanations |

Table 4: Document summarization in compliance audits (Source: Original analysis based on OSTI.gov: Technical Report 2023-2024, ShareFile, 2023)

In one high-profile case, a global bank slashed its anti-money laundering (AML) review time in half, using a mix of extractive and table summarization. However, the same system nearly missed an obscure footnote that would have triggered a reporting obligation—caught only by a manual reviewer.

Efficiency gains are real, but so are the risks. Regular human oversight isn’t just a safety net—it’s a necessity.

Healthcare triage: the stakes of missing a critical detail

Picture a hospital administrator tasked with reviewing 800 pages of patient records before morning rounds. Manual review is impossible. Enter AI summarization, which clusters key findings, medications, and anomalies.

The impact? According to recent studies, AI-driven summarization can trim administrative review time by 50% in large hospitals (ScienceDirect, 2024). But, as doctors point out, even one missed contraindication or allergy is a disaster waiting to happen.

In practice, most healthcare systems deploy summarization for triage—highlighting likely critical points for human review, not as a substitute for clinical judgment.

“Trust, but verify. AI summaries are a starting line, not the finish. Every abnormality flagged needs a second pair of human eyes.” — Dr. A. N. Researcher, ScienceDirect, 2024

Legal discovery and the myth of 'fully automated review'

AI vendors love to tout “fully automated legal document review.” Reality? The process is far messier.

- Volume vs. depth: Systems excel at surfacing key phrases across thousands of documents but buckle when nuance is required.

- Domain-specificity: Off-the-shelf models routinely misinterpret legalese unless retrained on legal corpora.

- Hybrid workflows: Most law firms now use AI to “triage” docs, but human attorneys still make all final calls.

The myth of hands-free automation is just that: a myth. As case numbers balloon, the role of summarization shifts from replacement to augmentation—scanning the haystack, not choosing which needles matter.

Ultimately, technology is the iron lung for a suffocating process, but not its brain.

The myths and realities of AI-powered summarization

Debunking 'push-button intelligence': why context still matters

The utopian vision of “push-button intelligence”—click, summarize, and move on—is seductive. But it’s a lie. Summarization is as much art as science.

It’s context—industry, purpose, risk tolerance—that determines what counts as “key.” A fact that’s vital in legal review might be trivia in a marketing report. Generic, one-size-fits-all AI often misses these nuances, leading to costly oversights.

Factual consistency: The degree to which the summary preserves the truth of the source. Even LLMs can introduce subtle distortions.

Relevance: How well the summary serves its intended business or analytical purpose. The goal is not just brevity but actionable insight.

Customizability: The ability to tailor outputs to specific needs (e.g., “highlight regulatory risk,” “extract pricing terms”).

Blind trust in automation is as dangerous as blind trust in an intern. Effective teams treat summaries as drafts for human refinement, not gospel.

Bias, privacy, and the ethical minefield

Algorithms are not neutral—especially when trained on biased or incomplete data. AI summarizers can amplify prejudices, skewing narratives or omitting minority voices. Bias lurks in model assumptions, training sets, and the very definition of “importance.”

Ethical use also means grappling with privacy. Feeding sensitive documents into cloud-based summarizers can create compliance nightmares if data leaves organizational boundaries.

- Bias amplification: Models can reinforce stereotypes or overlook atypical cases.

- Data security: Unauthorized access to summaries can expose confidential info.

- Transparency: Black-box systems make it hard to audit decisions or explain errors.

- Consent: Using personal data for model training creates legal and ethical dilemmas.

Ignoring these issues isn’t just shortsighted—it’s reckless.

When manual review beats the machine (and when it doesn't)

Let’s be clear: sometimes, flesh-and-blood still wins. Here is where manual review beats AI, and where it doesn’t:

| Scenario | Manual Review | AI Summarization |
|---|---|---|
| Highly specialized legal cases | ✔ | ✖ |
| Large-scale trend detection | ✖ | ✔ |
| Regulatory submissions | ✔ (final check) | ✔ (initial pass) |
| Email inbox triage | ✖ | ✔ |

Table 5: When manual review outperforms AI and vice versa (Source: Original analysis based on ShareFile, 2023; Jain et al., 2024)

“AI can’t do nuance. If your risk is existential, don’t delegate judgment to an algorithm.” — Legal Technology Analyst, OSTI.gov, 2024

But when scale and speed matter—like sifting market trends across thousands of sources—AI holds the upper hand.

Advanced strategies for technical document summarization

Choosing the right model: open-source vs. proprietary engines

Selecting a summarization engine isn’t just a technical choice; it’s a business gamble.

| Model Type | Pros | Cons | Use Case |
|---|---|---|---|
| Open Source (e.g., Pegasus, BART) | Flexible, customizable, cost-effective | Requires in-house expertise, less UI support | R&D, academia |
| Proprietary (e.g., GPT, cloud APIs) | Easier setup, frequent updates, robust support | Expensive, opaque, vendor lock-in | Enterprise, regulated sectors |

Table 6: Open-source vs. proprietary summarization engines (Source: Original analysis based on Jain et al., 2024; ScienceDirect, 2024)

- Open-source models shine for organizations wanting full control and transparency, but demand technical know-how.

- Proprietary tools handle scale and support, but often at the expense of explainability and cost.

- Flexibility for rare domains

- Robustness for high-volume, generic tasks

- Budget and compliance needs dictate the choice

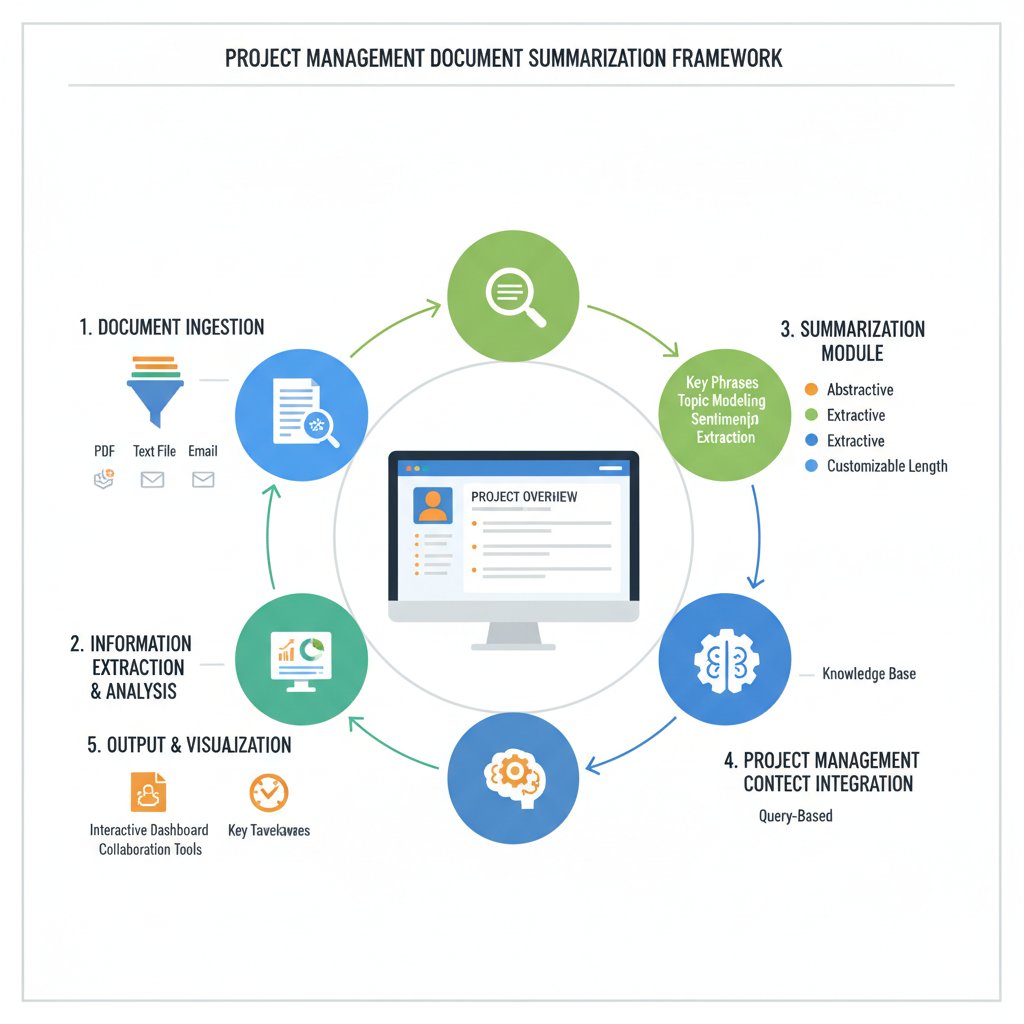

Integration best practices: embedding summarization into your workflow

Getting the most from technical document summarization means integrating it seamlessly into the tools people already use.

- Define the use case clearly: Is the goal speed, accuracy, or risk mitigation?

- Pilot and benchmark: Run side-by-side tests with human review, measuring accuracy and time savings.

- Integrate with existing tools: Use APIs to plug summarization into document management or CRM systems.

- Set up feedback mechanisms: Allow users to flag errors or suggest improvements.

- Continuously monitor and tune: Retrain or fine-tune models as new types of documents or edge cases arise.

Effective adoption isn’t just technical—it’s cultural. Users must trust the tool, understand its limits, and know when to escalate to humans.

Red flags: what the sales pitch won’t tell you

Vendors love to oversell. Watch out for:

- Promises of 100% accuracy: No model is infallible—especially in technical or regulatory documents.

- Lack of transparency: Black-box models make audits and compliance harder.

- Data residency issues: Cloud APIs may violate privacy or regulatory rules.

- No feedback loop: Tools that ignore user corrections stagnate or worsen over time.

If the demo looks too good to be true, it probably is.

Measuring success: accuracy, speed, and user trust

Key metrics every technical team should track

Quantifying summarization performance requires more than just “does it work?”

| Metric | Definition | Why it matters |
|---|---|---|
| ROUGE/BLEU scores | Overlap with reference summaries | Standardized, but may miss nuance |
| Factual consistency | Rate of true-to-source statements | Prevents costly misinformation |
| Time saved | Reduction in manual review hours | Direct impact on productivity |
| User satisfaction | End-user rating of summary usefulness | Predictor of adoption, trust |

Table 7: Key evaluation metrics for document summarization (Source: Original analysis based on Wang et al., 2024; ShareFile, 2023)

No single metric tells the whole story. Combine several for a 360° view.
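
To see why overlap metrics can miss nuance, it helps to know what they compute. Below is a simplified ROUGE-1 (unigram overlap) in plain Python; it omits the stemming and other options found in full ROUGE implementations, and the function name `rouge1` is just for this example.

```python
import re
from collections import Counter

def rouge1(reference, candidate):
    """Unigram-overlap precision, recall, and F1 between two texts."""
    ref = Counter(re.findall(r"\w+", reference.lower()))
    cand = Counter(re.findall(r"\w+", candidate.lower()))
    # Counter intersection keeps the minimum count of each shared word.
    overlap = sum((ref & cand).values())
    precision = overlap / sum(cand.values()) if cand else 0.0
    recall = overlap / sum(ref.values()) if ref else 0.0
    f1 = 2 * precision * recall / (precision + recall) if overlap else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}

scores = rouge1("the supplier may terminate the contract",
                "the supplier may not terminate the contract")
```

In this usage example the candidate inserts a single “not”, reversing the meaning, yet it still contains every reference word, so recall stays perfect and F1 stays high. That is exactly the gap a factual consistency check is meant to cover.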

Measuring isn’t just about defense—it's about iteration. Teams need actionable data to drive improvements.

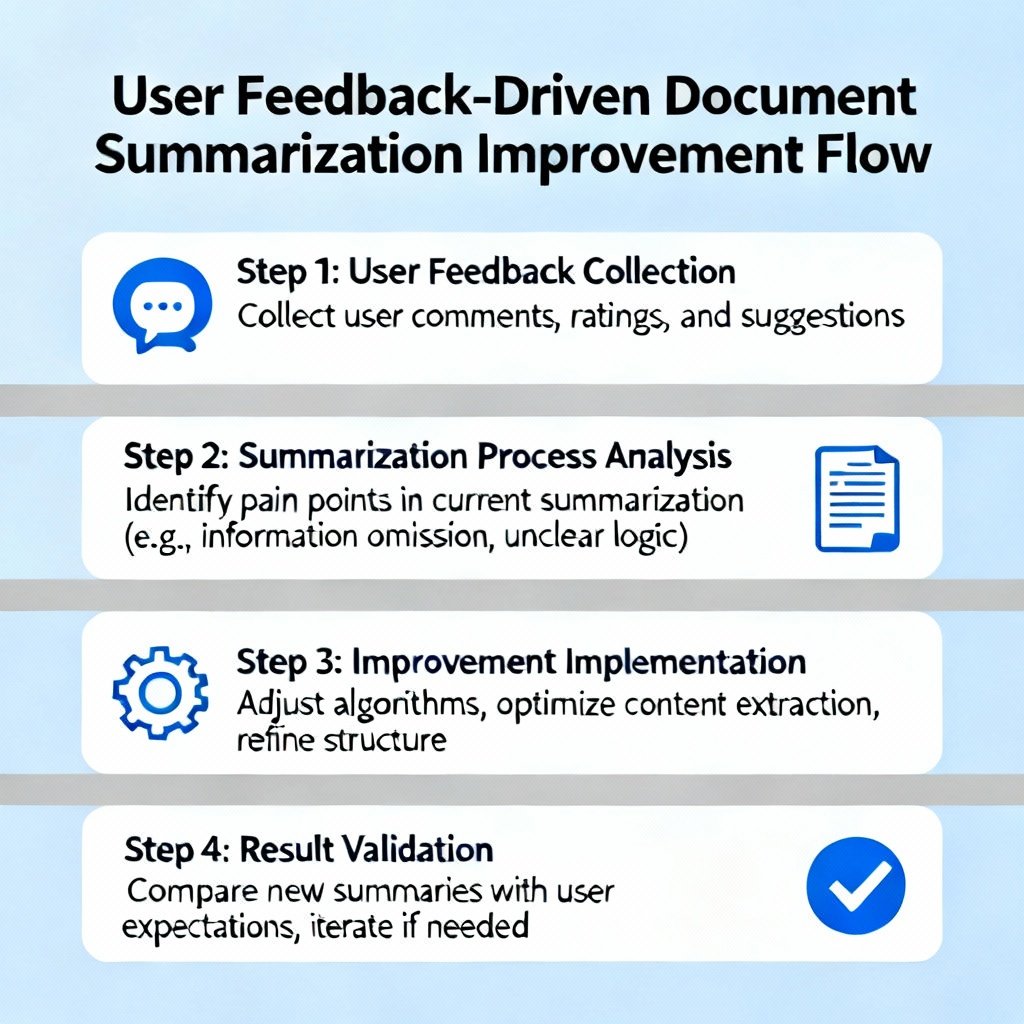

User feedback loops: the missing link in continuous improvement

Many deployments stall because user complaints don’t reach the technical team. Build robust feedback channels:

- Inline feedback on summaries: Let users rate usefulness and flag inaccuracies directly.

- Regular review sessions: Cross-team meetings to surface recurring issues and successes.

- Automated retraining triggers: When error rates spike, models are scheduled for retraining/refinement.

User feedback isn’t an afterthought—it’s an engine that powers the next round of progress.
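
An automated retraining trigger can be as simple as a rolling error rate over recent user feedback. The sketch below is one possible shape, with hypothetical names (`FeedbackMonitor`, `window`, `threshold`, `min_samples`) and illustrative default values rather than recommended ones.

```python
from collections import deque

class FeedbackMonitor:
    """Tracks the share of summaries users flagged as wrong in a sliding window."""

    def __init__(self, window=100, threshold=0.10, min_samples=20):
        self.flags = deque(maxlen=window)  # True means the user flagged an error
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, flagged_error):
        """Store one piece of user feedback; old entries fall out of the window."""
        self.flags.append(bool(flagged_error))

    def error_rate(self):
        return sum(self.flags) / len(self.flags) if self.flags else 0.0

    def needs_retraining(self):
        """True once enough feedback exists and the error rate exceeds the threshold."""
        enough = len(self.flags) >= self.min_samples
        return enough and self.error_rate() > self.threshold
```

The `min_samples` guard keeps a single early complaint from triggering a retraining job; in practice the trigger would more likely open a review ticket than retrain a model automatically.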

Cost-benefit analysis: when is document summarization worth it?

Is investing in technical document summarization justified? The answer is coldly mathematical.

| Factor | Cost Without AI | Cost With AI | Net Benefit |
|---|---|---|---|
| Manual review hours | 1,000/month | 300/month | 700 hours/month saved |
| Error correction | $10,000/year | $3,000/year | $7,000/year saved |
| Software/infra spend | $0 | $12,000/year | -$12,000/year (expense) |

Table 8: Sample cost-benefit breakdown for document summarization deployment (Source: Original analysis based on ShareFile, 2023; ScienceDirect, 2024)

Organizations must weigh direct productivity gains and error reductions against licensing and infrastructure costs. In most cases, value scales with volume and complexity.

But remember: cost savings vanish if critical errors slip through unchecked.
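
Table 8 mixes hours and dollars, so the net result depends on what an hour of review costs. The sketch below works through the arithmetic using the table’s sample figures as defaults; the hourly rate is an assumption you would supply, and the function names are illustrative.

```python
def annual_net_benefit(hourly_rate,
                       hours_saved_per_month=700,
                       error_savings_per_year=7_000,
                       software_cost_per_year=12_000):
    """Net yearly benefit in dollars for a given cost per review hour."""
    labor_savings = hours_saved_per_month * 12 * hourly_rate
    return labor_savings + error_savings_per_year - software_cost_per_year

def break_even_hourly_rate(hours_saved_per_month=700,
                           error_savings_per_year=7_000,
                           software_cost_per_year=12_000):
    """Hourly rate at which the deployment exactly pays for itself."""
    shortfall = software_cost_per_year - error_savings_per_year
    return max(shortfall, 0) / (hours_saved_per_month * 12)
```

With the sample numbers, 700 hours a month is 8,400 hours a year, and the $5,000 gap between software cost and error savings is covered at well under a dollar per hour. At an assumed $50 per review hour, `annual_net_benefit(50)` comes to $415,000. None of this accounts for the cost of a critical error that slips through, which is why the caveat above matters.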

The future of document summarization: hype vs. reality

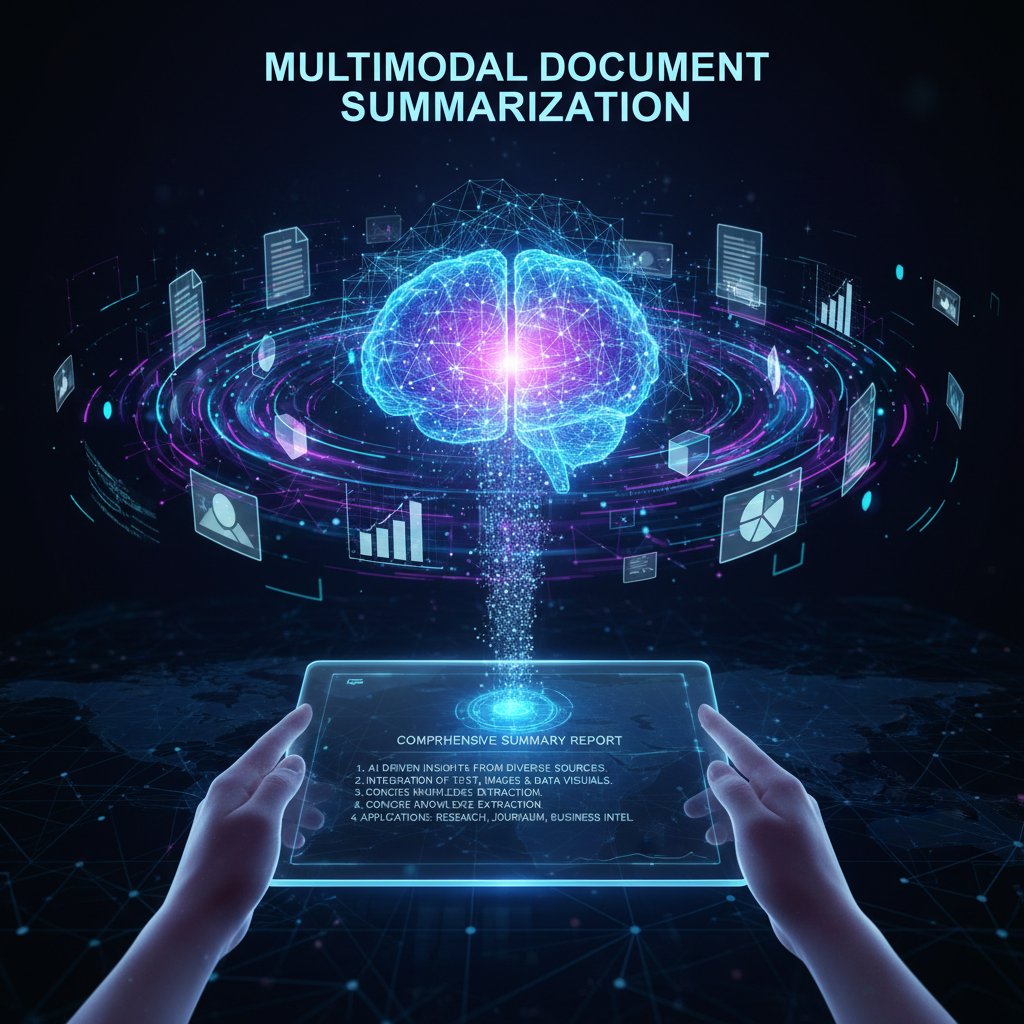

Emerging frontiers: real-time, multimodal, and domain-specific summarization

The cutting edge isn’t about summaries alone. It’s about real-time, cross-format, and deeply specialized solutions.

In 2023, cross-document graph-based methods and multimodal models (text, tables, images) began to outperform single-layer LLMs in technical and compliance settings (EACL, 2023). Domain-specific engines, like Legal Pegasus, now deliver tailored precision unthinkable just three years ago.

- Real-time summarization: Automated meeting minutes or live news digests.

- Multimodal insight extraction: Integrating tables, images, and diagrams with text.

- Domain adaptation: Custom models for law, medicine, finance, and beyond.

Each innovation sharpens the edge—but also raises the bar for oversight.

What’s next for enterprise adoption?

So, how are leading organizations integrating technical document summarization?

- Piloting in low-risk domains: Start in internal knowledge management before moving to compliance or legal.

- Investing in custom models: Training on proprietary data to boost domain relevance.

- Embedding in digital workflows: Seamless integration with content management, CRM, and analytics tools.

- Layering human review: AI as a triage tool, not judge and jury.

- Continuous measurement: Tracking ROI and error rates in real time.

“Every enterprise is now an information company. Summarization isn’t a luxury—it’s survival.” — Industry Analyst, EACL Proceedings, 2023

Will AI ever replace expert judgment?

| Task Type | AI Strength | Human Strength |
|---|---|---|
| High-volume data sifting | Scale, speed | Contextual judgment |
| Niche domain interpretation | Adaptable (if trained) | Intuition, cross-domain wisdom |
| Compliance/legal review | First-pass triage | Final decision-making |

Table 9: Comparative strengths of AI and human expertise in summarization (Source: Original analysis based on Jain et al., 2024; AmLaw 100, 2023)

Reality check: AI will never fully replace human experts where nuance, risk, and creativity matter. But for the drudgery—surfacing, sorting, and flagging—algorithms now run circles around manual teams.

It’s not “either/or.” It’s a new symbiosis.

Practical frameworks: how to get the most from document summarization

Step-by-step guide to technical implementation

How does an organization embed technical document summarization without falling flat?

- Audit your document landscape: Catalog types, volumes, and risk areas.

- Define success metrics: Decide on accuracy, time saved, user satisfaction, or compliance rates.

- Select the right model: Choose based on domain, budget, and in-house expertise.

- Pilot with a representative sample: Run A/B tests against manual review.

- Integrate with workflow tools: Use APIs/plug-ins for seamless deployment.

- Monitor and iterate: Set up error tracking and feedback channels.

A haphazard rollout guarantees headaches. A structured approach builds resilience and trust.

Priority checklist: safe and effective summarization rollouts

- Data privacy: Ensure all documents are handled within compliance boundaries.

- Model transparency: Prefer explainable models where possible; log all decisions.

- User training: Educate staff on both strengths and blind spots of AI tools.

- Redundancy: Keep manual review in place for high-risk content.

- Continuous learning: Regularly retrain on new and edge-case documents.

- Feedback integration: Close the loop between users and developers.

A checklist isn’t bureaucracy—it’s survival in a world of high-stakes automation.

Common mistakes (and how to avoid them)

- Ignoring domain adaptation: Deploying generic models in technical fields is a recipe for disaster.

- Over-automating: Removing human review completely, especially in regulated industries.

- Neglecting feedback loops: Failing to learn from mistakes or adapt to new document types.

- Chasing metrics, not outcomes: Optimizing for ROUGE scores instead of real-world usefulness.

- Assuming technology = trust: Deploying a tool doesn’t earn confidence on its own; users must see its value before they rely on it.

Avoid these and your project won’t just survive—it’ll thrive.

Beyond summarization: adjacent technologies and new frontiers

Document classification, tagging, and extraction: where do they fit?

Summarization doesn’t stand alone. It’s tightly woven with:

Document classification: Automatically organizing content into categories or topics, speeding retrieval and analysis.

Tagging: Assigning metadata labels (keywords, entities, themes) to documents for smarter search.

Extraction: Pulling out structured data (dates, names, figures) for integration with databases or analytics.

Together, these build the foundation for true document intelligence.
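
As a small illustration of the extraction step, the sketch below pulls ISO-style dates and dollar amounts out of text with regular expressions. The patterns and the function name `extract_fields` are assumptions for this example; production extraction typically relies on trained entity recognizers rather than regexes alone.

```python
import re

# Dates written as YYYY-MM-DD (format only; month and day ranges are not validated).
DATE_PATTERN = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")
# Dollar amounts such as $250, $1500, or $12,000.50.
AMOUNT_PATTERN = re.compile(r"\$\d+(?:,\d{3})*(?:\.\d+)?")

def extract_fields(text):
    """Return the dates and dollar amounts found in the text, in reading order."""
    return {
        "dates": DATE_PATTERN.findall(text),
        "amounts": AMOUNT_PATTERN.findall(text),
    }

fields = extract_fields(
    "Payment of $12,000.50 is due on 2024-03-15; "
    "a late fee of $250 applies after 2024-04-01."
)
```

Structured output like this can be stored alongside a summary, so a reviewer can compare the key figures against the source at a glance.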

Combining summarization with knowledge graphs and search

To unlock real value, leading systems combine summarization with:

- Knowledge graphs: Connecting entities, events, and relationships for deeper insights.

- Semantic search: Allowing users to find contextually relevant information, not just keywords.

- Interactive querying: Users refine summaries or filter content on demand.

- Cross-document analysis: Spotting trends and contradictions across thousands of files.

This isn’t just summarization—it’s sense-making at scale.

The role of advanced document analysis platforms

Standalone tools are fading; integrated platforms are rising. Solutions like textwall.ai are pioneering the convergence of summarization, classification, tagging, and extraction. The result? End-to-end document intelligence that transforms chaos into clarity.

“We don’t need more summaries. We need actionable insight—delivered in time to act.” — Corporate Analyst, ScienceDirect Review, 2024

Platforms that combine multiple technologies don’t just save time—they empower entirely new ways of working.

Conclusion: the blunt truth about technical document summarization

What we've learned (and what still lies ahead)

The story of technical document summarization is a paradox: a tale of remarkable gains and stubborn limitations. Today’s AI tools slash review times, surface buried insights, and free up human bandwidth for deeper work. Yet they’re haunted by accuracy gaps, context failures, and the ever-present risk of hallucination and bias.

The bottom line? The field has matured, but trust and success hinge on hybrid workflows, ruthless validation, and relentless user feedback. Automation is no panacea; it’s a power tool, demanding skill and vigilance.

Your next steps: questions every decision-maker should ask

- What are my highest-risk document types and workflows?

- Do I need domain-specific models, or will general-purpose suffice?

- How will I measure summarization accuracy and business impact?

- What safeguards ensure privacy, compliance, and explainability?

- How will human expertise remain in the loop?

Evaluate, pilot, measure, and iterate. There are no shortcuts—only smarter paths.

If you’re ready to escape the document doom loop, platforms like textwall.ai/document-analysis offer a starting point for responsible, high-impact automation.

Where to learn more and avoid the hype

- OSTI.gov: Technical Report 2023-2024

- ShareFile AI Summarization Guide

- ScienceDirect Review

- EACL 2023 Proceedings

- AI Summarization in Legal Practice (AmLaw 100, 2023)

- textwall.ai/automated-content-extraction

- textwall.ai/llm-document-summarization

- textwall.ai/business-workflow-automation

Don’t fall for buzzwords. Demand proof, push for transparency, and keep humans in the loop. In the war against information overload, technical document summarization isn’t just a weapon; it’s your best defense, if you wield it wisely.

Sources

References cited in this article

- OSTI.gov: Technical Report 2023-2024 (osti.gov)

- ShareFile AI Summarization Guide (sharefile.com)

- ScienceDirect Review (sciencedirect.com)

- Research.com (research.com)

- DocumentLLM (documentllm.com)

- Frontiers in AI (frontiersin.org)

- IEEE Xplore (ieeexplore.ieee.org)

- Analytics Vidhya (analyticsvidhya.com)

- ScienceDirect Topics (sciencedirect.com)

- Iris.ai Blog (iris.ai)

- arXiv: LLM Summarization (arxiv.org)

- Neptune.ai: LLM Evaluation (neptune.ai)

- Google Cloud Blog (cloud.google.com)

- Filevine Legal Case Study (filevine.com)

- Bridgepoint Consulting (bridgepointconsulting.com)

- Thomson Reuters 2023 Report (thomsonreuters.com)

- OMB 2024 Compliance Supplement (grantthornton.com)

- Full Stack AI (fullstackai.co)

- Northwestern CASMI (casmi.northwestern.edu)

- Euronews (euronews.com)

- IJRPR 2024 (ijrpr.com)

- ResearchGate: Ethics & Privacy (researchgate.net)

- Lexology: Privacy 2024 (lexology.com)

- Addepto (addepto.com)

- AWS ML Blog (aws.amazon.com)

- DocumentLLM Guide (documentllm.com)

- ClickUp Workflow Optimization (clickup.com)

- Kawanelite (kawanelite.com)

- BankersOnline (bankersonline.com)

- arXiv: Multi-doc Summarization Benchmark (arxiv.org)

- ClickUp Metrics Guide (clickup.com)

- Document360 KPIs (document360.com)

- ABBYY ROI Report (abbyy.com)

- iQuasar Cost-Benefit Analysis (software.iquasar.com)
