Automate Categorization of Extensive Texts Without Losing Control

Drowning in information isn’t a distant dystopia—it's your inbox, your SharePoint drive, your daily grind. The explosion of digital documentation has made the phrase “too much data” a lived reality. If you’ve ever tried to extract meaning from a 300-page contract, a tsunami of customer feedback, or never-ending academic reports, you know the stakes. The promise of AI automation—turning chaos into clarity—sounds like salvation. But behind the hype, the reality is messier, riskier, and far more transformative than you’ve been told. In this investigative deep-dive, we slice through the marketing noise to expose what it truly means to automate categorization of extensive texts. From the harsh lessons of failed projects to the surprising wins hiding in the noise, you’ll see why next-gen analysis is both revolution and reckoning. Ready to stop being flooded by words and start riding the wave? Let’s rip the lid off automated text categorization in 2025.

Why information overload became our new normal

The rise of the data tsunami

Every minute, humanity generates millions of new documents, emails, chat logs, and social media posts. In 2023 alone, global data creation hit a staggering 120 zettabytes, with projections pushing that to as much as 180 zettabytes by 2025, according to the Lausanne Movement’s global data report. This isn’t just an IT headache—it’s a societal transformation. Organizations are being forced to confront the reality that the written word, long a bastion of clarity and permanence, has metastasized into an endless digital torrent. Gartner’s 2023 survey found that 38% of employees now cite excessive communication as their top productivity killer. Rensselaer Polytechnic Institute’s 2024 study didn’t pull punches: information overload isn’t just an annoyance—it actively fuels disengagement, decision fatigue, and a spike in emotional health issues across industries.

The numbers are staggering, but their impact is deeply personal. For every headline about “big data,” there are a thousand professionals staring down inboxes they’ll never empty, sifting through noise for that one critical insight. This is the new normal: a world where manual document handling simply breaks down under the weight.

When manual categorization breaks down

Manual document sorting once made sense—when data streams were manageable, and a team of analysts could feasibly tag, group, and file every report or message. But in today’s reality, the bottlenecks are both brutal and expensive. According to Harvard Business Review, organizations relying on manual categorization struggle with accuracy, scalability, and sheer speed. The human cost? Knowledge workers spending hours, even days, on repetitive labeling—hours that could fuel actual analysis or innovation.

| Metric | Manual Categorization | Automated Categorization |
|---|---|---|
| Avg. Time per 10k Docs | 60–80 hours | 2–4 hours |
| Accuracy | 70–85% (varies with fatigue) | 90–96% (top models) |
| Scalability | Linear (needs more staff) | Horizontal (add parallel workers) |
| Cost per 10k Docs | $2,000–$4,000 | $300–$700 |
| Error Rate | 5–10% | 2–5% |

Table 1: Manual vs. Automated Categorization—efficiency, accuracy, and cost comparison

Source: Original analysis based on Gartner, 2023, Harvard Business Review, 2023

The old guard is losing ground. Manual approaches don’t just cost more—they kill momentum. Errors slip in, important signals get lost, and analysis lags behind real-world events. In an era when information is power, every hour spent on rote sorting is a competitive liability.

The hidden opportunity in chaos

But let’s flip the script: what if this chaos is more than just a threat? Some organizations don’t drown in data—they surf to the top. How? By mining the noise for actionable insights, spotting patterns competitors miss, and turning overload into strategic fuel. As Jasmine, a veteran data scientist, put it:

“Most companies drown in data, but a few surf it to the top.” – Jasmine, Senior Data Strategist

This isn’t luck or brute force. It’s the result of embracing automation, advanced analytics, and a culture that thrives on finding order in disorder. The race isn’t against more data—but against your own blind spots.

What does it really mean to automate categorization of extensive texts?

Defining automated text categorization

At its core, automated text categorization is the process of assigning labels, tags, or categories to unstructured text using algorithms and artificial intelligence. Forget the old-school “folder and file” approach—today, automation means that massive volumes of emails, contracts, reports, or social media posts are instantly organized, grouped, and made searchable by relevant themes or intent.

This isn’t just about keeping things tidy. In legal, academic, and enterprise settings, automated categorization is the backbone of compliance, content moderation, customer sentiment analysis, and knowledge management. For instance, a global law firm can instantly flag sensitive clauses in thousands of contracts, while a media organization can auto-tag breaking news stories in real time.

Key Terms Defined:

Taxonomy: A structured classification system; think of it as the “skeleton” behind automated sorting. In document analysis, a taxonomy organizes documents by themes, legal codes, or industry standards.

Large language models (LLMs): Advanced neural networks trained on billions of words, capable of understanding and categorizing text with human-like nuance. Tools like textwall.ai leverage LLMs to parse even the most complex documents.

Semantic tagging: Labeling text not just by keywords, but by meaning and context. For example, “merger” and “acquisition” get linked as related concepts, regardless of phrasing.

How AI and LLMs transform the process

The revolution in text categorization is powered by a seismic shift: moving from brittle rules-based systems to adaptable, context-aware LLMs. Just a few years ago, most automation relied on if-then rules and rigid keyword lists. These systems crumbled on ambiguous language, sarcasm, or evolving business terms. Enter LLMs, with their deep learning and contextual understanding.
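To make that brittleness concrete, here is a minimal sketch of a keyword-list categorizer of the kind described above. The category names and keyword lists are invented for illustration; they are not taken from any real system.

```python
# Hypothetical keyword lists; a real deployment would maintain far larger ones.
KEYWORD_RULES = {
    "billing": {"invoice", "refund", "charge"},
    "legal": {"contract", "clause", "liability"},
}

def categorize_by_keywords(text: str) -> str:
    """Return the first category with a keyword that appears in the text."""
    words = {w.strip(".,!?;:\"'").lower() for w in text.split()}
    for category, keywords in KEYWORD_RULES.items():
        if words & keywords:
            return category
    return "uncategorized"

print(categorize_by_keywords("Please send the invoice again."))  # billing
print(categorize_by_keywords("I was billed twice this month."))  # uncategorized
```

The second message is plainly about billing, but "billed" is not on the list, so the rule misses it. Every new phrasing means another manual rule, which is the maintenance burden context-aware models are meant to reduce.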

According to Frontiers in Computer Science (2024), neural network-powered categorization can outperform traditional methods, needing less labeled training data and achieving higher accuracy, even in noisy, real-world datasets. SmartOne.ai’s 2024 analysis found that models trained on diverse sources (news, social, domain-specific corpora) show particular strength in real-time scenarios like social media monitoring and compliance screening.

What’s different now isn’t just better algorithms—it’s scale, flexibility, and the ability to learn from mistakes. LLMs can be fine-tuned for specific use cases, adapt to new legal or technical terms, and handle multilingual content. For the first time, it’s possible to automate categorization across millions of documents without sacrificing context or nuance.

Where does human expertise still matter?

Let’s not get seduced by “set it and forget it.” Even the sharpest models have blind spots. Complex jargon, evolving regulations, and subtle context shifts can trip up even the biggest LLMs. That’s where human-in-the-loop systems come in.

“The best systems still need smart humans in the loop.” – Kyle, Senior AI Engineer

Human expertise is crucial for several reasons: defining taxonomies, reviewing edge cases, and ensuring ethical compliance. When AI stumbles—misclassifying sensitive documents, or missing cultural nuance—human reviewers catch the fallout. The most resilient organizations blend cutting-edge automation with deep subject-matter oversight, closing the gap between scale and precision.
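One common way to wire humans into the loop is confidence-based routing. The sketch below assumes the model returns a confidence score between 0 and 1; the `REVIEW_THRESHOLD` value and the `legal_hold` label are placeholders, not recommendations.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    doc_id: str
    label: str
    confidence: float  # model score in [0, 1]

REVIEW_THRESHOLD = 0.85  # assumed cutoff; tune it against your own error tolerance

def route(pred: Prediction, sensitive_labels: frozenset = frozenset({"legal_hold"})) -> str:
    """Send low-confidence or sensitive predictions to a human reviewer."""
    if pred.label in sensitive_labels or pred.confidence < REVIEW_THRESHOLD:
        return "human_review"
    return "auto_accept"

print(route(Prediction("doc-1", "invoice", 0.97)))     # auto_accept
print(route(Prediction("doc-2", "invoice", 0.60)))     # human_review
print(route(Prediction("doc-3", "legal_hold", 0.99)))  # human_review
```

Sensitive categories go to review regardless of score, because a confident mistake on those documents is the expensive kind.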

The gritty reality: why most automation projects fail

Common myths and hard truths

The AI hype cycle is relentless. Vendors promise “plug-and-play” solutions that will instantly banish chaos. The reality? Failed rollouts, wasted budgets, and a rude awakening for anyone who thinks technology alone is enough. Persistent myths trip up even the savviest teams.

Red flags to watch out for when automating text categorization:

- Assuming perfect out-of-the-box accuracy: Pre-trained models still need tuning for your data.

- Ignoring data quality: Garbage in, garbage out. Poorly structured or inconsistent documents sabotage automation.

- Overlooking integration headaches: Legacy systems don’t always play nice with new tools.

- Underestimating model drift: As business terms evolve, accuracy drops—unless you retrain regularly.

- Skipping human review: No model nails every edge case. Auditing is non-negotiable.

- Believing the “set-and-forget” myth: Continuous monitoring is a must, or errors multiply invisibly.

- Neglecting user training: If your team can’t interpret results, automation fails at the last mile.

- Ignoring compliance: Data privacy laws can derail automation projects overnight.

- Chasing hype, not use case: Don’t automate what isn’t a bottleneck.

- Failing to plan for scale: What works on a small batch may break with real-world volume.

Each bullet isn’t just a cautionary tale—it’s a trap door waiting to swallow ambitious projects.
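Of the red flags above, model drift is one of the easiest to monitor mechanically. A minimal sketch, assuming you keep a sample of human-reviewed predictions for each period: compare recent accuracy to a baseline and raise a flag when it drops by more than a tolerance. The 0.05 tolerance and the sample data are illustrative.

```python
def accuracy(pairs):
    """pairs: list of (predicted_label, reviewed_label) tuples."""
    if not pairs:
        raise ValueError("need at least one reviewed prediction")
    return sum(pred == truth for pred, truth in pairs) / len(pairs)

def drift_detected(baseline_pairs, recent_pairs, tolerance=0.05):
    """True when recent accuracy falls more than `tolerance` below baseline."""
    return accuracy(baseline_pairs) - accuracy(recent_pairs) > tolerance

baseline = [("nda", "nda")] * 19 + [("nda", "msa")]    # 19 of 20 correct
recent = [("nda", "nda")] * 16 + [("nda", "msa")] * 4  # 16 of 20 correct
print(drift_detected(baseline, recent))  # True
```

A check like this only works if someone keeps reviewing a sample, which is one more reason the human review bullet is not optional.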

Technical roadblocks and how to smash them

The technical landscape is riddled with pitfalls. Poor data quality, model drift, and integration woes can derail even well-funded efforts. Data pipelines break under load, APIs fail, and models trained on “toy” datasets can’t keep up with the ugly, messy reality of enterprise text.

The solution? Relentless focus on foundational work. Clean your data, build robust validation checks, and don’t skimp on integration testing. According to the Automate UK 2024 Industry Report, hybrid models that combine rule-based filters with LLMs help catch outliers and reduce drift. Automation isn’t a magic wand; it’s a discipline.
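A hybrid setup can be sketched in a few lines. In this illustration a deterministic rule runs first and the model is consulted only when no rule fires. The SSN pattern, the 0.5 confidence floor, and `fake_model` (a stand-in for a real LLM call) are all assumptions made for the example.

```python
import re
from typing import Callable, Tuple

# Assumed rule: text that looks like a US Social Security number is forced
# into a restricted category before the model is consulted.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

def hybrid_categorize(text: str, model: Callable[[str], Tuple[str, float]]) -> Tuple[str, str]:
    """Return (label, source). Rules take precedence over the model."""
    if SSN_PATTERN.search(text):
        return "contains_pii", "rule"
    label, confidence = model(text)
    if confidence < 0.5:  # assumed floor; below it the system refuses to guess
        return "needs_review", "fallback"
    return label, "model"

def fake_model(text: str) -> Tuple[str, float]:
    """Stand-in for a real LLM call; returns canned answers."""
    return ("contract", 0.9) if "agreement" in text.lower() else ("other", 0.3)

print(hybrid_categorize("SSN 123-45-6789 on file", fake_model))
print(hybrid_categorize("Master Services Agreement", fake_model))
```

Recording the source alongside the label ("rule", "model", or "fallback") makes later audits much easier, since you can see which layer made each decision.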

Real-world lessons from failed rollouts

Consider the infamous case of a global consulting firm that tried to automate contract categorization overnight. The plan: roll out a shiny new LLM solution to process 100,000+ legal documents. The result? Misclassified contracts, missed deadlines, and a spiraling remediation bill.

| Timeline Step | What Happened | Impact | Alternative Approach |
|---|---|---|---|
| Project kickoff | Ignored legacy data clean-up | Model trained on noisy data | Start with thorough audit |
| Week 2 | Deployed on full dataset | System crashed | Pilot with smaller sample |
| Month 1 | No human review in place | Errors went undetected | Add manual QA checkpoint |
| Month 2 | Model drifted as terms evolved | Accuracy plummeted | Schedule regular retraining |
| Month 3 | Compliance flagged issues | Project paused, legal risk | Involve compliance from start |

Table 2: Timeline of implementation missteps in failed automation rollout

Source: Original analysis based on industry reporting and Automate UK 2024 Industry Report

The firm ultimately rebooted the project—slower, but smarter—with a phased rollout, heavy QA, and regular retraining cycles. The lesson: in automation, shortcuts are expensive.

Inside the black box: how LLMs categorize text at scale

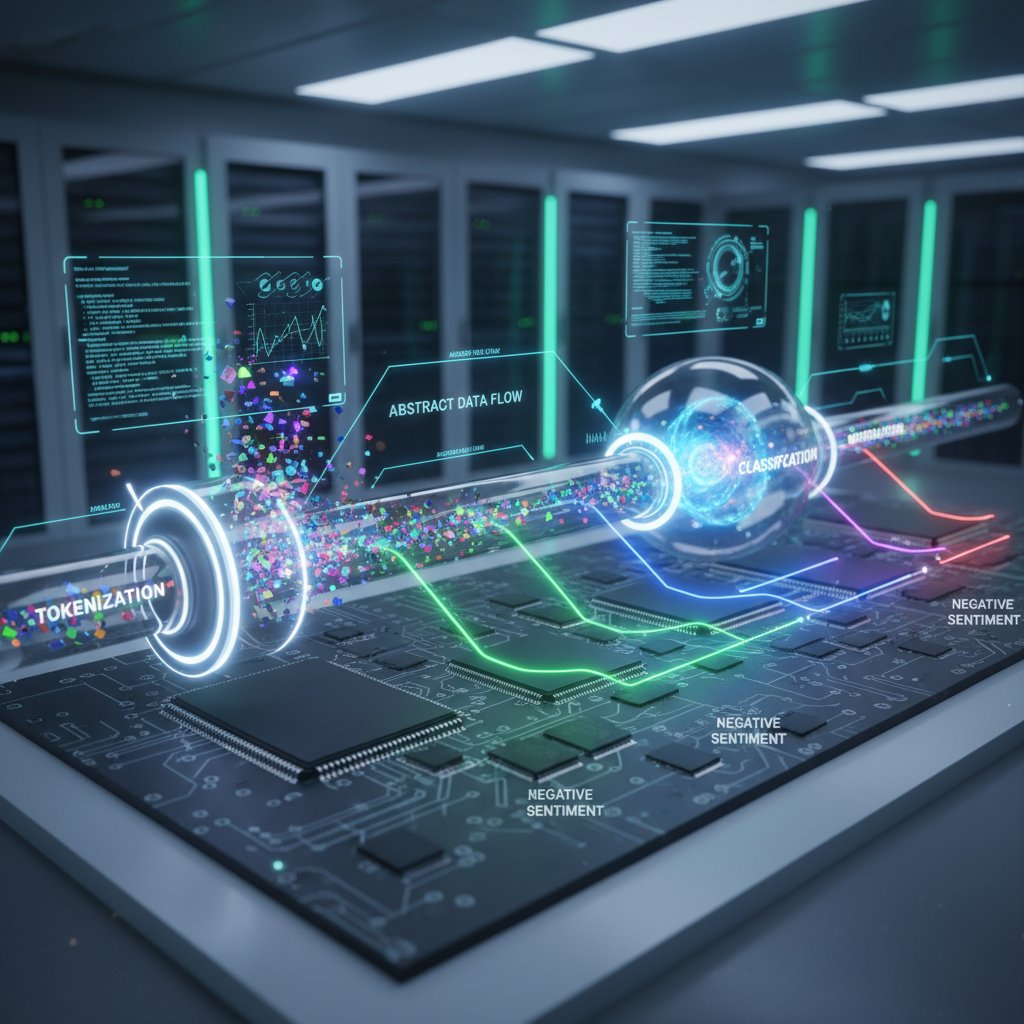

From tokens to taxonomies: a technical deep dive

Behind the scenes, large language models break text into “tokens”—bite-sized pieces, usually words or subwords. These tokens pass through layers of neural networks that extract meaning, context, and relationships. The model then maps these insights to predefined categories, using learned patterns from massive training data.
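To see what "subwords" means in practice, here is a toy greedy longest-match tokenizer. The vocabulary is invented for illustration; production models learn their own vocabularies from training data and use more elaborate schemes, so treat this as a sketch of the idea only.

```python
from typing import List

# Toy vocabulary, invented for this example. Real models learn tens of
# thousands of subword pieces from their training corpus.
VOCAB = {"categor", "ization", "ize", "token", "s", "un", "structured"}

def subword_tokenize(word: str) -> List[str]:
    """Greedy longest-match split of one lowercase word into vocabulary pieces."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        while end > start and word[start:end] not in VOCAB:
            end -= 1
        if end == start:  # no piece matches here: mark the whole word unknown
            return ["[UNK]"]
        pieces.append(word[start:end])
        start = end
    return pieces

print(subword_tokenize("categorization"))  # ['categor', 'ization']
print(subword_tokenize("tokens"))          # ['token', 's']
```

Splitting into pieces lets a model handle words it has never seen whole, as long as it knows the parts.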

This isn’t a black-and-white process. Context matters: “Apple” in a tech contract isn’t the same as “apple” in a grocery list. LLMs use attention mechanisms to weigh context, differentiating between homonyms, idioms, and technical jargon. The result? Categories that adapt to nuance, not just surface keywords.
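The weighting step at the heart of attention can be shown with plain arithmetic. The sketch below computes softmax weights over scaled dot products for a single query. The two-dimensional vectors are hand-made for illustration; a real model learns high-dimensional vectors and separate query and key projections.

```python
import math

def attention_weights(query, keys):
    """Softmax over scaled dot products of one query vector with each key."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hand-made vectors (axis 0 = "technology", axis 1 = "food"); purely illustrative.
apple = [1.0, 1.0]
context = {"contract": [0.2, 0.0], "iphone": [2.0, 0.0], "the": [0.0, 0.0]}
weights = dict(zip(context, attention_weights(apple, list(context.values()))))

for word, weight in weights.items():
    print(f"{word}: {weight:.2f}")
```

In this toy setup "iphone" receives the largest share of the weight, so the ambiguous "apple" is pulled toward its technology sense.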

Accuracy vs. speed: the tradeoff no one talks about

There’s a dirty little secret in text automation: you can’t always have it all. High-accuracy models are often slow and resource-intensive, while lightning-fast systems may miss critical nuance. Real-world deployments must balance speed, accuracy, and cost—sometimes sacrificing one for the other, depending on the use case.

| System Type | Accuracy (%) | Speed (Docs/min) | Resource Usage |
|---|---|---|---|
| Rule-based Classic | 70–80 | 10,000 | Low (CPU) |
| LLM Fine-Tuned | 92–96 | 1,200 | High (GPU, RAM) |
| Hybrid (LLM + Rules) | 90–94 | 2,500 | Moderate (CPU+GPU) |

Table 3: Performance metrics for different text categorization systems

Source: Frontiers in Computer Science, 2024, SmartOne.ai Datasets

Not all text is created equal. High-stakes applications—compliance, legal, finance—demand accuracy over speed. For social media monitoring, speed may trump perfect categorization. The trick is knowing your priorities and choosing accordingly.
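The tradeoff becomes tangible once you plug in a workload. The snippet below takes the throughput figures from Table 3 and estimates how long a 100,000-document backlog would take on a single instance of each system. It ignores queuing, retries, and parallel scaling, so read the results as rough orders of magnitude.

```python
import math

# Throughput figures copied from Table 3 (documents per minute).
DOCS_PER_MINUTE = {"rule_based": 10_000, "llm_fine_tuned": 1_200, "hybrid": 2_500}

def minutes_needed(num_docs: int, system: str) -> int:
    """Whole minutes required to process num_docs on a single instance."""
    return math.ceil(num_docs / DOCS_PER_MINUTE[system])

for system in DOCS_PER_MINUTE:
    print(system, minutes_needed(100_000, system))
# rule_based 10
# llm_fine_tuned 84
# hybrid 40
```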

When AI gets it wrong: bias, hallucination, and error cases

No system is infallible. Even best-in-class models miscategorize, hallucinate new categories, or overlook culturally sensitive context. The consequences range from mild annoyance to regulatory disaster.

“Sometimes the machine invents a category no one asked for.” – Priya, AI Ethics Lead

These errors aren’t just bugs—they’re symptoms of deeper issues: biased training data, outdated taxonomies, or lack of human oversight. The fallout? Misfiled legal documents, privacy breaches, or public embarrassments. Transparency, audit logs, and human review are your safety net.
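A cheap guard against invented categories is to check every model output against the approved taxonomy before it is stored. The category names below are examples only.

```python
ALLOWED_CATEGORIES = frozenset({"nda", "employment", "lease", "other"})  # example taxonomy

def validate_label(raw_label: str):
    """Return (label, accepted). Labels outside the taxonomy are never stored as-is."""
    label = raw_label.strip().lower()
    if label in ALLOWED_CATEGORIES:
        return label, True
    return "needs_review", False

print(validate_label(" NDA "))                   # ('nda', True)
print(validate_label("Quantum Lease Addendum"))  # ('needs_review', False)
```

Rejected labels are worth logging rather than discarding: a cluster of similar rejections can be a sign that the taxonomy itself is out of date.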

Industrial-strength strategies for automating categorization

Step-by-step guide to mastering automated categorization

Forget the “AI magic” narrative. Robust text categorization is a grind—equal parts strategy, engineering, and relentless QA. Here’s how the pros actually get it done:

- Audit your data: Map document sources, formats, and quality. Identify noise and inconsistencies.

- Define your taxonomy: Build a category structure with input from domain experts.

- Pre-process ruthlessly: Cleanse, normalize, and standardize text to reduce garbage in.

- Select your toolkit: Compare LLMs, rule-based engines, and hybrids for your needs.

- Pilot with a sample: Test on a small batch to catch issues early.

- Train and fine-tune: Use labeled data to adapt the model to your domain.

- Integrate with workflows: Hook into existing systems—don’t silo your automation.

- Validate continuously: Set up human review checkpoints and performance dashboards.

- Retrain and update: Schedule regular reviews as terms, laws, or business logic evolve.

- Document and audit: Maintain logs for compliance, troubleshooting, and improvement.

Skip a step, and you’ll pay for it.
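The pre-processing step is where a little code pays off quickly. A minimal sketch using only the Python standard library: normalize Unicode, strip control characters, collapse whitespace, and drop exact duplicates. Real pipelines usually add format-specific extraction (PDF, email, scans) ahead of this stage, which is out of scope here.

```python
import hashlib
import re
import unicodedata

def normalize(text: str) -> str:
    """Unicode-normalize, drop control characters, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)
    text = "".join(
        ch for ch in text if ch in "\n\t" or unicodedata.category(ch)[0] != "C"
    )
    return re.sub(r"\s+", " ", text).strip()

def deduplicate(docs):
    """Keep the first occurrence of each document, compared case-insensitively
    after normalization."""
    seen, unique = set(), []
    for doc in docs:
        clean = normalize(doc)
        digest = hashlib.sha256(clean.lower().encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(clean)
    return unique

print(deduplicate(["Hello  World", "hello world", "Other"]))  # ['Hello World', 'Other']
```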

Checklist: are you ready to automate?

Jumping into automation blind is a recipe for pain. Before you begin, assess your organization’s readiness on multiple fronts:

- Clear objectives: Are your pain points and goals well-defined?

- Data maturity: Is your document pool organized and consistent enough for automation?

- Stakeholder buy-in: Do leaders and end-users support the shift?

- Technical capability: Is your IT infrastructure ready for LLMs and high-volume processing?

- QA resources: Do you have a plan for ongoing validation and oversight?

- Change management: Are you prepared to retrain staff and adjust workflows?

- Compliance awareness: Have you engaged legal and data privacy teams from the start?

Each point is a potential fault line—ignore at your peril.

Avoiding the top 5 mistakes (with real examples)

The graveyard of failed automation projects is crowded and instructive:

- Skipping pilot phases: A financial firm deployed site-wide all at once, and the system crashed under unexpected data types.

- Ignoring edge cases: An academic publisher missed rare but critical document types, tanking accuracy.

- Underestimating integration: A logistics company’s new tool couldn’t talk to legacy systems, so manual rework ensued.

- Failing to retrain: As legal definitions changed, a law firm’s model drifted, leading to costly errors.

- Neglecting compliance: A media outlet neglected GDPR and incurred fines after personal data slipped through.

Mistakes aren’t just academic—they’re expensive, public, and sometimes irreversible.

Case studies: when automation changes the game

How a law firm slashed review time by 80%

One global law firm faced a mountain: 50,000 contracts up for annual review. Manual processing required an army of paralegals and months of work. By deploying a hybrid LLM system (fine-tuned with legal-specific data), they slashed review time by 80%—from six months to just five weeks. Key steps included rigorous data cleansing, phased rollout, and ongoing oversight by senior counsel. Errors dropped by 60%, and compliance confidence soared.

The real win wasn’t just speed—it was the firm’s ability to handle urgent regulatory shifts without burning out staff.

Healthcare’s quiet revolution in patient document handling

Hospitals and clinics are notorious for drowning in paperwork. One healthcare network automated patient record categorization using a custom LLM pipeline. The old process: manual tagging by clerks, with delays and frequent misfiles. The new? Near-instant categorization, with a 50% reduction in administrative workload.

| Workflow Step | Manual Process | Automated Process |
|---|---|---|
| Intake Form Review | 10 min per patient | 1 min per patient |
| Tagging Accuracy | 82% | 95% |
| Staff Involvement | 4–5 clerks | 1 supervisor |
| Compliance Incidents | 12/year | 2/year |

Table 4: Healthcare document workflow—before and after automation

Source: Original analysis based on Rensselaer Polytechnic Institute, 2024

The result? Faster admissions, fewer errors, and more time for patient-facing work.

Media, finance, and beyond: cross-industry breakthroughs

- Media: Automated tagging enables real-time news curation, surfacing breaking stories without human bottlenecks.

- Finance: AI sorts regulatory filings, flagging anomalies before compliance deadlines.

- Academic research: Universities summarize and categorize decades of papers, accelerating literature reviews from months to days.

“Every industry has its own flavor of chaos. AI just changes the recipe.” – Alex, Industry Analyst

The common thread: automation doesn’t just save time—it unlocks new capabilities previously out of reach.

The hidden costs (and unexpected benefits) of automation

What nobody tells you about ongoing costs

The sticker price of automation is only the start. Licensing, maintenance, and retraining pile up. According to industry surveys, retraining an LLM for legal compliance can run $50,000–$100,000 annually for a large enterprise. Vendor lock-in, unexpected integration work, and periodic auditing all add to the bill.

| Cost Element | One-time | Recurring (Annual) | Notes |
|---|---|---|---|
| Licensing | $25,000 | $5,000–$20,000 | Varies by volume/model |
| Setup & Integration | $15,000 | $0 | Initial IT workload |
| Data Cleansing | $10,000 | $2,000 | Ongoing QA |
| Retraining | $5,000 | $50,000–$100,000 | For LLMs, domain-specific |
| Maintenance/Support | $2,000 | $10,000 | Vendor or in-house |
| Compliance Auditing | $0 | $5,000 | Legal, privacy requirements |

Table 5: Cost breakdown for automation (one-time and annual recurring)

Source: Original analysis based on Automate UK 2024 Industry Report

Transparency about costs is non-negotiable—ask vendors for total cost of ownership, not just upfront fees.
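Total cost of ownership is simple arithmetic once the line items are on the table. The sketch below sums the Table 5 figures over a chosen horizon, treating recurring costs as (low, high) ranges. It assumes flat annual costs with no discounting or price changes, which a real budget would need to revisit.

```python
# Figures copied from Table 5; recurring costs are (low, high) USD per year.
ONE_TIME = {"licensing": 25_000, "setup": 15_000, "cleansing": 10_000,
            "retraining": 5_000, "maintenance": 2_000, "auditing": 0}
RECURRING = {"licensing": (5_000, 20_000), "setup": (0, 0),
             "cleansing": (2_000, 2_000), "retraining": (50_000, 100_000),
             "maintenance": (10_000, 10_000), "auditing": (5_000, 5_000)}

def total_cost_of_ownership(years: int):
    """Return (low, high) total cost in USD over the given number of years."""
    upfront = sum(ONE_TIME.values())
    low = sum(lo for lo, _ in RECURRING.values())
    high = sum(hi for _, hi in RECURRING.values())
    return upfront + years * low, upfront + years * high

print(total_cost_of_ownership(3))  # (273000, 468000)
```

On these numbers the $57,000 upfront spend is a small fraction of the three-year total, and retraining dominates the recurring bill.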

Unlocking hidden value: benefits beyond efficiency

Efficiency dominates the marketing pitch, but the real value of text categorization runs deeper:

- Regulatory compliance: Automated logs and audit trails make regulatory reviews painless.

- Corporate memory: Easy access to decades of knowledge boosts onboarding and innovation.

- Fraud detection: Outlier categorization flags suspicious behavior earlier.

- Content moderation: Real-time moderation at scale keeps communities safer.

- Market intelligence: Hidden trends in customer feedback emerge instantly.

- Morale boost: Less grunt work means happier, more engaged staff.

- M&A readiness: Rapid due diligence in mergers and acquisitions.

- Disaster recovery: Categorized archives speed up crisis response.

These aren’t just “nice-to-haves”—they’re strategic assets.

How to avoid vendor lock-in (and why it matters)

Vendor lock-in is the automation trap nobody talks about—until it’s too late. Switching providers, extracting your data, or integrating new models can become nightmare scenarios. To avoid this, prioritize open APIs, portable data formats, and modular system architecture.
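In code, "modular" mostly means hiding the vendor behind a small interface and keeping results in a plain format. The sketch below is one way to do that: `Categorizer` is a hypothetical interface, `KeywordCategorizer` is a trivial stand-in for a vendor client, and the output is JSON Lines (one JSON object per line).

```python
import io
import json
from typing import Iterable, Protocol, TextIO

class Categorizer(Protocol):
    """Anything with this method can be swapped in, whichever vendor backs it."""
    def categorize(self, text: str) -> str: ...

class KeywordCategorizer:
    """Trivial stand-in implementation used only for this example."""
    def categorize(self, text: str) -> str:
        return "contract" if "agreement" in text.lower() else "other"

def export_jsonl(docs: Iterable[tuple], engine: Categorizer, out: TextIO) -> int:
    """Write one JSON object per line, a plain format any later system can read."""
    count = 0
    for doc_id, text in docs:
        record = {"doc_id": doc_id, "label": engine.categorize(text)}
        out.write(json.dumps(record, ensure_ascii=False) + "\n")
        count += 1
    return count

buffer = io.StringIO()
export_jsonl([("d1", "Lease Agreement"), ("d2", "Memo")], KeywordCategorizer(), buffer)
print(buffer.getvalue())
```

Swapping providers then means writing one new class with a `categorize` method, while the stored labels stay readable without any vendor tooling.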

Control isn’t just about cost—it’s about future-proofing your organization.

The ethics and future of AI-powered text categorization

Privacy, bias, and the dark side of automation

Automated text categorization is a double-edged sword: while it accelerates insight, it also risks amplifying bias, breaching privacy, or making decisions without accountability. Regulatory scrutiny is only intensifying—as Nature Human Behaviour’s 2024 editorial frames it, information overload is increasingly seen as a form of “societal pollution,” demanding new safeguards.

“Transparency isn’t optional anymore. It’s survival.” – Morgan, AI Policy Specialist

From algorithmic transparency to explainability mandates, the field is shifting toward accountability by design. No one can afford black-box systems anymore.

What does the future hold for knowledge workers?

Automation isn’t about replacing humans—it’s about changing the work humans do. Instead of drowning in repetitive sorting, analysts and experts pivot toward interpretation, strategy, and oversight. The division of labor is shifting. As LLMs handle the grunt work, knowledge workers focus on higher-order analysis, ethical review, and creative problem-solving.

The survivors in this new landscape are those who adapt, retrain, and embrace the change—not those who resist it.

textwall.ai and the cutting edge of document analysis

Next-generation tools like textwall.ai are rewriting the rulebook. By leveraging advanced LLMs, semantic tagging, and instant summarization, they transform how organizations handle extensive texts—breaking down walls of jargon, surfacing actionable insights, and enabling smarter decisions at scale.

New Concepts Defined:

Actionable insights: Not just data, but findings immediately relevant to decisions, such as flagged risk clauses, trending themes, or regulatory red flags.

Human-in-the-loop: Human experts continuously monitor, correct, and retrain automated systems, ensuring no critical errors slip through.

Transparent taxonomy: Categorization schemes visible and editable by end-users, not just hidden in model code.

These aren’t buzzwords—they’re practical frameworks for sustained, resilient automation.

Beyond categorization: adjacent trends shaping text analysis in 2025

Semantic search and intelligent document retrieval

Automated categorization powers the next leap: semantic search. Instead of chasing keywords, users query documents by meaning (“Show me all contracts with hidden fees”). This transforms retrieval from a guessing game into a surgical tool, supercharging research, compliance, and customer support.
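Under the hood, querying by meaning usually comes down to comparing vectors. The sketch below ranks documents by cosine similarity to a query vector. The three-dimensional vectors are hand-assigned stand-ins for real embeddings, which would come from an embedding model and have hundreds of dimensions.

```python
import math

def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Hand-assigned vectors standing in for real embeddings
# (axes: fees/charges, termination, confidentiality).
DOC_VECTORS = {
    "contract_a: early exit surcharge applies": [0.9, 0.4, 0.0],
    "contract_b: mutual non-disclosure terms": [0.0, 0.1, 0.9],
    "contract_c: 30-day termination notice": [0.1, 0.9, 0.0],
}

def search(query_vector, top_k=2):
    """Return the top_k document keys ranked by similarity to the query."""
    ranked = sorted(
        DOC_VECTORS, key=lambda d: cosine(query_vector, DOC_VECTORS[d]), reverse=True
    )
    return ranked[:top_k]

hidden_fees_query = [1.0, 0.0, 0.0]  # assumed embedding of "hidden fees"
print(search(hidden_fees_query, top_k=1))
```

The top hit never uses the word "fee", which is the point: a "surcharge" is matched by meaning, not by shared keywords.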

Semantic search isn’t a luxury anymore—it’s the backbone of modern knowledge work.

Multimodal analysis: text, image, data fusion

The cutting edge isn’t just about text. Multimodal systems fuse text, images, and metadata—think medical records tagged with both notes and x-rays, or legal cases indexed by both filings and exhibits.

| Feature | Text-only Systems | Multimodal Systems |
|---|---|---|
| Data Types Supported | Text | Text, images, tables |
| Accuracy (complex docs) | 85% | 92% |
| Use Cases | Content moderation | Medical, legal, research |
| Integration Complexity | Low | Moderate to High |
| Example Tools | Legacy DMS | textwall.ai, modern LLMs |

Table 6: Feature matrix—text-only vs. multimodal automation systems

Source: Original analysis based on SmartOne.ai Datasets, 2024

This fusion breaks down silos, surfacing insights unreachable by text alone.

The rise of explainable AI in document processing

With great power comes great responsibility—and the explainable AI movement is taking center stage. Organizations now demand transparency: not just “what” a model decided, but “why.” Practical steps include:

- Choose interpretable models for high-stakes applications.

- Document decision rules and training datasets.

- Enable audit trails for every categorization.

- Implement model cards—plain-language summaries of model behavior.

- Solicit user feedback to catch recurring errors.

- Schedule regular bias audits with diverse test cases.

- Train staff in explainability—if you can’t explain it, you can’t trust it.

This isn’t just compliance theater—it’s how trust is earned.
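An audit trail does not need heavy infrastructure to get started. The sketch below builds one JSON record per categorization decision. The field names and the `model_version` identifier are illustrative, and the document is stored as a SHA-256 hash so the log itself does not duplicate sensitive text.

```python
import hashlib
import json
from datetime import datetime, timezone

def audit_record(doc_text: str, label: str, confidence: float, model_version: str) -> str:
    """Build one JSON audit line. The document is stored as a hash, not in full."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "doc_sha256": hashlib.sha256(doc_text.encode("utf-8")).hexdigest(),
        "label": label,
        "confidence": round(confidence, 4),
        "model_version": model_version,  # hypothetical identifier
    }
    return json.dumps(record, sort_keys=True)

print(audit_record("This Agreement is made between...", "nda", 0.91234, "v1"))
```

Appending these lines to write-once storage gives reviewers a way to answer "what did the system decide, when, and with which model" long after the fact.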

Conclusion: mastering the chaos—what it takes to lead in 2025

Synthesizing the new rules of document analysis

Automating the categorization of extensive texts isn’t a silver bullet—it’s a paradigm shift. The winners harness LLMs, but never forget the critical role of data quality, domain expertise, and relentless QA. From the data tsunami to the granular reality of failed rollouts, this journey is about mastering complexity, not escaping it. The new rules? Audacity, transparency, and an unflinching willingness to adapt.

The horizon isn’t a mirage—it’s where insight meets action, at scale.

Next steps: your blueprint for action

Ready to stop drowning and start surfing? Here’s your action plan:

- Map your pain points: Identify where manual sorting is killing productivity.

- Assess your data: Audit sources, structure, and quality.

- Define your taxonomy: Collaborate with domain experts.

- Pick your toolkit: Compare LLMs, hybrid approaches, and integration options.

- Pilot, don’t plunge: Start small, validate results, iterate.

- Integrate with workflows: Ensure automation fits, not fights, existing processes.

- Plan for ongoing QA: Assign human reviewers, schedule retraining.

- Document everything: From decision rules to audit logs—future-proof your work.

Each step is a shield against disappointment—and a lever for real transformation.

Why the best are never satisfied

In the world of automated document analysis, complacency is lethal. The best organizations, teams, and tools are in a constant state of restless improvement—tuning models, refining workflows, and questioning assumptions.

“In this game, standing still is falling behind.” – Riley, Automation Lead

Mastering the chaos isn’t a one-time victory—it’s a discipline. The only question: will you ride the wave, or get swept away?

Sources

References cited in this article

- Automate UK 2024 Industry Report (automate-uk.com)

- Frontiers in Computer Science, 2024 (frontiersin.org)

- SmartOne.ai Datasets (smartone.ai)

- Frontiers in Psychology, 2023 (frontiersin.org)

- ScienceDaily, 2024 (sciencedaily.com)

- Lausanne Movement (lausanne.org)

- IEEE Xplore (ieeexplore.ieee.org)

- AWS Text Classification (aws.amazon.com)

- Full Stack AI: AI Myths (fullstackai.co)

- Automate UK 2024 (themanufacturer.com)

- BotsCrew: Why AI Projects Fail (botscrew.com)

- Bain Automation Scorecard 2024 (bain.com)

- Springer Applied Network Science, 2024 (appliednetsci.springeropen.com)

- ResearchGate, 2023 (researchgate.net)

- arXiv: Smart Expert System (arxiv.org)

- MDPI Applied Sciences, 2024 (mdpi.com)

- MDPI Information, 2025 (mdpi.com)

- Expert Systems with Applications, 2024 (ouci.dntb.gov.ua)

- Wikipedia: AI Hallucination (en.wikipedia.org)

- MIT Sloan (mitsloanedtech.mit.edu)

- American Bar Association, 2024 (americanbar.org)

- IEEE Xplore, 2023 (ieeexplore.ieee.org)

- GetThematic.com (getthematic.com)

- Levity.ai (levity.ai)

- Restackio (restack.io)

- CASE 2024 (emw.ku.edu.tr)

- PubMed (pubmed.ncbi.nlm.nih.gov)

- Frontiers in AI, 2024 (frontiersin.org)

- Aidoc (aidoc.com)

- Wolters Kluwer (wolterskluwer.com)

- Graft: AI Text Classification Guide (graft.com)
