Analyze Academic Research Articles: Ruthless Truths, Hidden Traps, and Real-World Mastery

Academic research articles claim to illuminate, but too often, they obscure. You’ve probably trudged through a peer-reviewed paper’s jargon, only to feel more confused—like you’ve been hustled by footnotes and foggy charts. Here’s the hard truth: to analyze academic research articles today means more than just checking sources or counting citations. It’s about dodging traps set by impact factor games, unmasking subtle bias, and extracting actionable insights before the next wave of info floods in. Whether you’re a researcher, student, or just someone refusing to be fooled by scholarly doublespeak, this deep-dive will arm you with the ruthless truths and battle-tested tools to read, dissect, and interpret research articles—without getting played. We’ll unravel the anatomy of academic papers, expose the pitfalls nobody warns you about, and hand you frameworks for critical reading that cut to the bone. Think you know how to analyze academic research articles? Think again.

Why analyzing academic research articles matters more than you think

The real-world consequences of bad analysis

One careless misread of an academic article can ripple out, distorting public health policies, tanking investments, or misleading entire fields. According to Boston Research (2024), the stakes in academic research have never been higher—interdisciplinary collaboration now drives global responses to crises like climate change and pandemics. That means a single misinterpretation doesn’t just waste your time; it can set off chain reactions across policy, funding, and real-world lives.

Consider the high-profile case of retracted COVID-19 studies in major journals: flawed analysis led to dangerous policy moves and shattered public trust. Peer-reviewed does not mean foolproof, and the margin for error is razor-thin. Every time you analyze academic research articles, you’re shouldering the responsibility to catch not just the obvious errors but also the nuanced manipulations lurking beneath polished prose.

How academic research shapes society (and you)

Analyzing academic research articles is about more than just passing exams or polishing your professional reputation. Research shapes the world you move through: the drugs in your medicine cabinet, the algorithms behind your news feed, the climate models guiding political action. According to Royal Society (2023), citation trends and public engagement scores are now as influential as traditional peer review in shaping which findings reach policymakers and the public.

| Area of Impact | Example | Real-World Outcome |
|---|---|---|
| Public Health | Clinical guidelines for treatment | Influences healthcare protocols and patient safety |
| Technology | AI ethics research | Shapes regulation and tech industry standards |
| Social Policy | Education inequality studies | Drives funding decisions and reform |
| Environment | Climate impact models | Informs international agreements |
| Economics | Minimum wage studies | Alters legislative action and labor markets |

Table 1: How academic research articles drive real-world decisions. Source: Original analysis based on Royal Society (2023), Boston Research (2024)

Beneath the surface, these studies are the blueprints for not just policy but also for your everyday choices—what’s in your food, what’s in your air, even what’s in your news. When you analyze academic research articles with a critical eye, you’re not just doing homework. You’re decoding the DNA of society’s operating code.

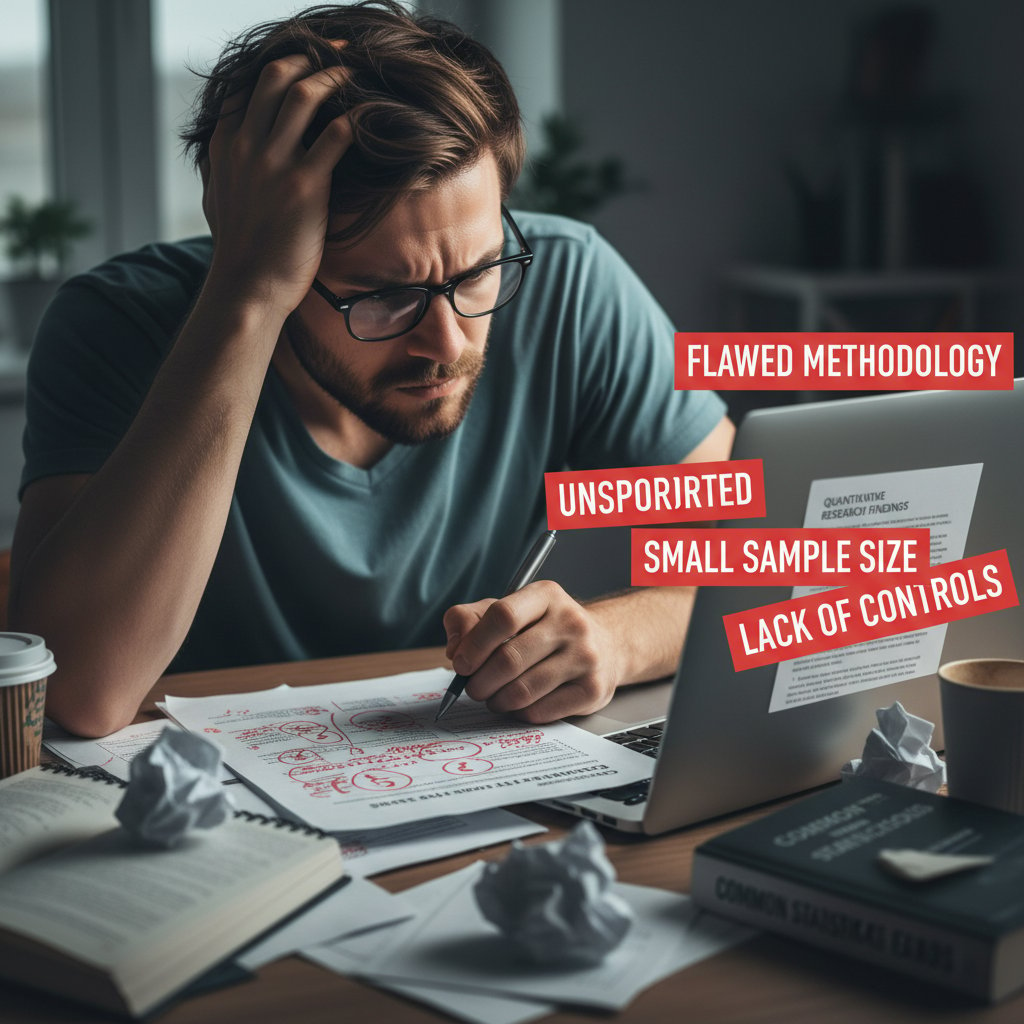

Why most people get it wrong: common misconceptions

Most people—even seasoned professionals—misstep when reading academic research. The main problem? Treating peer review as a gold standard, assuming citations equal truth, or trusting abstracts to tell the whole story. According to The Conversation (2024), even experienced readers fall prey to headline-chasing and metric-worship.

- Assuming peer review equals perfection: Peer review catches obvious flaws, but subtle bias and methodological sloppiness often slip through.

- Confusing citation count with credibility: Highly cited does not mean highly accurate. Sometimes it signals controversy or error.

- Taking abstracts at face value: Abstracts are marketing pitches, not neutral summaries.

- Ignoring sample size and methodology: Flashy results from small or biased samples are rampant, especially in “hot” fields.

- Overvaluing impact factors: Journal prestige doesn’t immunize against poor research.

Ignoring these truths is a fast track to being misled. As data from Clarivate Journal Citation Reports (2024) shows, even the most prestigious journals are not immune to high-profile retractions and corrections.

Anatomy of an academic article: decoding structure and purpose

Breaking down each section: what matters, what doesn’t

Every academic article is a gauntlet of sections, each one an opportunity for insight—or obfuscation. Here’s how to cut through the noise:

Abstract

A tightly crafted sales pitch. Tells you what the authors want you to believe. Never the whole story.

Introduction

Frames the research question, but often hides bias or cherry-picking of citations.

Methods

The guts of the study—where credibility is made or broken. Check for transparency, rigor, and reproducibility.

Results

Raw data and statistical outcomes. This is where you catch “significant” findings that may be meaningless in practice.

Discussion

The author’s spin. Look for overreaching claims, downplayed limitations, or creative interpretations.

References

A window into the author’s influences and potential biases. Are they citing the same echo chamber?

Ignore the glossy surface. Each section is a minefield of context and subtext that only reveals value when you interrogate its purpose—not just its content.

The abstract trap: reading beyond the summary

Abstracts are designed to lure you in, but rarely do they tell the full story. According to Clarivate’s Journal Citation Reports (2024), abstracts often overstate certainty while burying crucial caveats.

“Readers who rely solely on abstracts may miss critical limitations and nuances. Always drill into methods and results before forming conclusions.” — Extracted from Clarivate Journal Citation Reports, 2024

Savvy readers treat the abstract as a teaser—an opening gambit, not a verdict. The real action happens in the grind of methods and data, not the polished pitch at the start.

Methods and results: spotting the real story

If you want to analyze academic research articles like a pro, spend your time on methods and results. This is where you catch statistical sleight of hand, outcomes that were never preregistered, and tiny sample sizes that undermine generalizability.

| Methodology Red Flag | What It Signals | Real-World Example |
|---|---|---|
| Small sample size | Low statistical power, unreliable results | Diet studies with <50 participants |
| Lack of control group | Confounding variables | Many early AI/ML papers |
| P-hacking | Fishing for significance | Multiple outcomes reported selectively |
| Unclear measurement | Poor reproducibility | Vague survey-based metrics |

Table 2: Common methodological traps in academic research articles. Source: Original analysis based on Boston Research (2024), The Conversation (2024)

Don’t just skim tables—compare reported outcomes with what was promised in the introduction. If the methods section reads like a black box, treat the findings accordingly.
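To see why the small-sample red flag matters, a rough power calculation helps. The sketch below is an illustration, not something drawn from the cited sources. It uses a normal approximation for a two-group comparison, and the function name `approx_power`, the effect size of 0.5 standard deviations, and the 5% significance level are all assumptions chosen for the example.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(effect_size: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided, two-sample z-test.

    effect_size is the standardized mean difference (Cohen's d).
    This is a normal approximation; an exact t-based calculation
    gives slightly lower power for small samples.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    # Expected value of the test statistic when the effect is real.
    shift = effect_size * sqrt(n_per_group / 2)
    # Probability of landing beyond either critical value.
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


if __name__ == "__main__":
    for n in (10, 25, 64, 100):
        print(f"n per group = {n:>3}: power ~ {approx_power(0.5, n):.2f}")
```

Under these assumptions, a study with 25 participants per group has less than a 50% chance of detecting a medium-sized effect, and it takes roughly 64 per group to reach about 80%. A "significant" finding from an underpowered study deserves extra suspicion, not extra excitement.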

Discussion and conclusions: red flags and hidden gems

The discussion is where authors try to spin their data into gold. Spotting red flags here is non-negotiable.

- Overstated implications: Watch for “game-changing” claims from weak data.

- Downplayed limitations: Real research is messy. Beware of neat, limitation-free stories.

- Selective citation: Are only supportive studies cited, ignoring controversy?

- Grand policy recommendations: If the methods are shaky, so are the recommendations.

Yet, hidden gems abound: nuanced acknowledgments, honest admissions of uncertainty, and calls for more research. These are marks of integrity. Analyze academic research articles with an eye for both the smoke and the signal.

Cutting through the noise: evaluating credibility and bias

How to spot credible research (and why it’s harder now)

Separating credible research from garbage is harder than ever. The deluge of open-access journals, preprints, and altmetric games means that even experienced readers can be fooled. According to Boston Research (2024), critical analysis is the only reliable defense.

- Check peer review status: Is the article peer-reviewed, preprint, or pay-to-publish?

- Examine author credentials: Are they established in the field or serial publishers in junk journals?

- Assess funding sources: Industry funding often correlates with conclusions that favor the sponsor.

- Dive into citations: Are they citing reputable, recent work or building on outdated dogma?

- Review methodology clarity: Transparent, replicable methods trump flashy results.

These steps demand skepticism and technical know-how—a combination that separates the informed from the influenced.

Unmasking bias—subtle and overt

Bias infects academic research at every stage. Knowing the forms it takes is half the battle.

Selection Bias

Choosing participants or data that favor the desired outcome. Distorts results and limits generalizability.

Confirmation Bias

Highlighting findings that support the hypothesis, ignoring contradicting evidence. Common in discussion sections.

Publication Bias

Journals favor significant, positive results. Null results often disappear.

Bias is rarely declared outright—it seeps in through funding, career incentives, and even unconscious leanings. According to Royal Society (2023), critical readers must interrogate both what’s in the paper and what’s conspicuously absent.
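Publication bias is easier to believe once you watch it happen. The following is a toy simulation, not a model of any real literature: it assumes a true effect of 0.2 standard deviations, 20 participants per group, outcomes with a known standard deviation of 1, and a journal that only "publishes" significant positive results. The function name and every parameter are hypothetical.

```python
import random
from math import sqrt
from statistics import mean


def simulate_publication_bias(true_effect=0.2, n_per_group=20,
                              n_studies=2000, seed=0):
    """Simulate many small two-group studies and 'publish' only those
    with a significant positive result (z > 1.96).

    Simplifying assumption: outcomes are normal with a known SD of 1,
    so a z-test applies. Returns (mean effect across all studies,
    mean effect across published studies, number published).
    """
    rng = random.Random(seed)
    se = sqrt(2 / n_per_group)  # standard error of the mean difference
    all_effects, published = [], []
    for _ in range(n_studies):
        treated = mean(rng.gauss(true_effect, 1) for _ in range(n_per_group))
        control = mean(rng.gauss(0, 1) for _ in range(n_per_group))
        diff = treated - control
        all_effects.append(diff)
        if diff / se > 1.96:
            published.append(diff)
    # With the default settings some studies always pass the filter;
    # mean() would raise on an empty list otherwise.
    return mean(all_effects), mean(published), len(published)


if __name__ == "__main__":
    all_mean, pub_mean, n_pub = simulate_publication_bias()
    print(f"True effect: 0.20 | all studies: {all_mean:.2f} | "
          f"published only ({n_pub}): {pub_mean:.2f}")
```

Averaged over every simulated study, the estimate sits near the true effect. Averaged over the "published" subset alone, it is several times larger, because only the lucky overestimates cleared the significance bar. That is what the disappearing null results do to a literature.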

The impact factor illusion: why numbers can lie

Journal Impact Factor is the academic world’s prestige currency, but it’s a poor proxy for actual research quality. According to Clarivate Journal Citation Reports (2024), citation metrics are gamed by editorial practices and “salami slicing” (splitting one study into several minimal papers).

| Metric | What It Measures | Pitfalls |
|---|---|---|
| Impact Factor | Average citations per article in a journal over a two-year window | Can be inflated; says nothing about an individual paper’s quality |
| Altmetric Score | Online/social engagement | Susceptible to viral controversy |
| Citation Count | Total citations to a paper | Reflects attention, not accuracy |

Table 3: Common research influence metrics versus reality. Source: Clarivate, 2024

“Metrics like impact factor are easily manipulated. True influence is measured by real-world application, not citation games.” — Extracted from Clarivate, 2024

If you analyze academic research articles by chasing numbers alone, you’re reading for the wrong audience.
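A quick worked example shows how an average can mislead. The two-year impact factor is a mean: citations received in a given year to items the journal published in the previous two years, divided by the number of citable items from those two years. The journal and citation counts below are invented for illustration, and `two_year_impact_factor` is a hypothetical helper, not a Clarivate tool.

```python
from statistics import median


def two_year_impact_factor(citations_this_year: int, citable_items: int) -> float:
    """Citations received this year to items from the previous two years,
    divided by the number of citable items published in those two years."""
    return citations_this_year / citable_items


# Hypothetical journal: ten papers, one of which drew outsized attention.
citations_per_paper = [0, 0, 1, 1, 2, 2, 3, 3, 4, 184]

jif = two_year_impact_factor(sum(citations_per_paper), len(citations_per_paper))
typical = median(citations_per_paper)

print(f"Impact factor (mean): {jif:.1f}")
print(f"Median citations:     {typical}")
```

In this made-up case the journal posts an impact factor of 20, while the typical paper in it has two citations. The number describes the journal's most-cited outlier far better than it describes the article in front of you.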

Frameworks and hacks: how experts analyze academic research articles

Step-by-step guide to critical analysis

Critical analysis isn’t just a skill—it’s a survival strategy. Here’s a step-by-step approach refined by academic insiders and professional analysts:

- Scan the abstract, but distrust it: Get the gist, but hold off on forming judgments.

- Interrogate the introduction: Identify the research question and embedded assumptions.

- Dissect the methods: Scrutinize sample sizes, variables, and whether the study is reproducible.

- Analyze the results: Focus on statistical validity and on whether the authors distinguish correlation from causation.

- Challenge the discussion: Separate justified conclusions from author hype.

- Check the references: Identify echo chambers, missing dissent, and potential bias.

- Research author backgrounds and funding: Bias often hides in plain sight.

Master each step, and you become bulletproof to academic spin.

Shortcut frameworks for faster, deeper reads

Not every article deserves a two-hour dissection. Here are shortcut frameworks used by research veterans:

- CRAAP Test: Currency, Relevance, Authority, Accuracy, Purpose. If it fails two or more, move on.

- PICO: Population, Intervention, Comparison, Outcome (clinical research staple).

- GATE Frame: Graphic Appraisal Tool for Epidemiology, a visual template that maps a study’s participants, comparison groups, outcomes, and timing to expose strengths and weaknesses quickly.

- Three-Pass Approach: First pass for gist, second for structure, third for critical questioning.

These frameworks, when applied with intent, can help you analyze academic research articles swiftly without sacrificing depth.

Apply frameworks with discretion. Use deep dives for articles central to your work; for background reading, faster filters suffice.

Common mistakes and how to avoid them

- Trusting the journal, not the study: High impact does not mean high rigor.

- Ignoring negative results: If they’re missing, dig deeper—publication bias is real.

- Overlooking conflicts of interest: Always check funding disclosures.

- Failing to cross-check statistics: Use online calculators or AI tools for basic verification.

- Neglecting supplemental materials: Critical methods often hide in appendices.

Even experts slip—awareness is your best defense.
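Cross-checking a reported statistic does not require special software. As a minimal sketch, assuming a paper reports raw event counts for two groups, you can recompute the p-value yourself with a pooled two-proportion z-test. The counts below are hypothetical, the function name is illustrative, and the normal approximation is only reasonable when the groups are not tiny.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(events_a: int, n_a: int,
                           events_b: int, n_b: int) -> float:
    """Two-sided p-value for a difference between two proportions
    (pooled z-test, normal approximation)."""
    p_a, p_b = events_a / n_a, events_b / n_b
    pooled = (events_a + events_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical reported result: 30 of 100 events versus 45 of 100.
p = two_proportion_p_value(30, 100, 45, 100)
print(f"recomputed p = {p:.3f}")
```

For these counts the recomputed value comes out at about 0.03. If the paper claimed p < 0.001 for the same comparison, that mismatch is a reason to read the methods and supplementary files again, since the authors may have used a different test or adjusted model.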

Checklist: your quick-reference for every article

- Peer review status checked

- Author credentials verified

- Methods scrutinized for bias/sample size

- Results compared against claims

- Funding and disclosures reviewed

- References scanned for echo chambers

- Supplementary materials examined

- Key findings cross-checked with external sources

- Statistical significance validated

- Limitations identified and considered

Keep this checklist on your desktop. Over time, it will become second nature—and your analysis will get sharper, faster.

Print it out, tape it to your wall, and never analyze academic research articles blind again.
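If you prefer a checklist that lives next to your notes instead of on the wall, the same ten items fit in a few lines of code. This is one possible sketch; the `ArticleReview` class and its methods are hypothetical names, and the item wording is copied from the checklist above.

```python
from dataclasses import dataclass, field

CHECKLIST_ITEMS = (
    "Peer review status checked",
    "Author credentials verified",
    "Methods scrutinized for bias/sample size",
    "Results compared against claims",
    "Funding and disclosures reviewed",
    "References scanned for echo chambers",
    "Supplementary materials examined",
    "Key findings cross-checked with external sources",
    "Statistical significance validated",
    "Limitations identified and considered",
)


@dataclass
class ArticleReview:
    """Tracks which checklist items have been completed for one article."""
    title: str
    done: set = field(default_factory=set)

    def check(self, item: str) -> None:
        # Reject typos so an item is never silently skipped.
        if item not in CHECKLIST_ITEMS:
            raise ValueError(f"Unknown checklist item: {item}")
        self.done.add(item)

    def remaining(self) -> list:
        # Preserve the checklist's original order.
        return [item for item in CHECKLIST_ITEMS if item not in self.done]


review = ArticleReview("Example paper (hypothetical)")
review.check("Peer review status checked")
review.check("Funding and disclosures reviewed")
print(f"{len(review.remaining())} items still open")
```

Keeping one record per article makes it obvious which papers you actually vetted and which ones you only skimmed.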

Diving deeper: advanced tactics for uncovering hidden insights

Reading between the lines: what’s not being said

Some of the most important truths in academic research aren’t in the text—they’re in the subtext. Look for contradictions between the data and the narrative, missing variables, or unexplained exclusions.

“The most telling omissions in a research article are rarely accidental. They reflect either methodological limitations or deliberate framing.” — Extracted from The Conversation, 2024

If a controversial variable is missing, ask yourself: who benefits from this silence? Analyzing academic research articles requires you to interrogate the unsaid as much as the said.

Statistical smoke and mirrors: decoding data

Statistical tricks are everywhere: p-hacking, cherry-picking, and confusing statistical significance with actual relevance. According to Boston Research (2024), fewer graduates now specialize in data analysis—meaning more errors slip through.

| Statistical Trap | What It Looks Like | How to Counter |
|---|---|---|
| P-hacking | Selective reporting | Check preregistration, supplemental files |
| Misleading averages | Reporting means, not range | Look for standard deviation, range data |
| Relative risk only | No absolute numbers | Demand absolute risk for context |
| Confusing correlation | Implies causation | Examine study design, control for variables |

Table 4: Common statistical traps in academic research articles. Source: Original analysis based on Boston Research (2024), The Conversation (2024)

Don’t just trust the numbers—interrogate how they’re used.
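Two of these traps come down to simple arithmetic. The sketch below uses an invented trial (1 event per 1,000 treated versus 2 per 1,000 controls) to contrast relative and absolute risk, then computes how likely a false positive becomes when many outcomes are tested. Both function names are hypothetical, and the second calculation assumes the tests are independent.

```python
def risk_summary(events_treated, n_treated, events_control, n_control):
    """Return relative risk reduction, absolute risk reduction, and
    number needed to treat, computed from raw event counts."""
    risk_t = events_treated / n_treated
    risk_c = events_control / n_control
    arr = risk_c - risk_t                      # absolute risk reduction
    rrr = arr / risk_c                         # relative risk reduction
    nnt = 1 / arr if arr > 0 else float("inf")
    return rrr, arr, nnt


def chance_of_false_positive(n_tests, alpha=0.05):
    """Probability that at least one of n independent tests comes out
    'significant' when no real effect exists."""
    return 1 - (1 - alpha) ** n_tests


# Hypothetical trial: 1 event per 1,000 treated vs 2 per 1,000 controls.
rrr, arr, nnt = risk_summary(1, 1000, 2, 1000)
print(f"Relative risk reduction: {rrr:.0%}")
print(f"Absolute risk reduction: {arr:.2%}")
print(f"Number needed to treat:  {nnt:.0f}")
print(f"20 outcomes tested, chance of a fluke: {chance_of_false_positive(20):.0%}")
```

In this example a headline of "risk cut in half" corresponds to one fewer event per thousand people, so about a thousand would need treatment to prevent a single case. With 20 independent outcomes and no real effect, the chance of at least one spurious "significant" result is roughly 64%, which is why selectively reported outcomes are such a strong warning sign.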

Cross-referencing and context: seeing the bigger picture

To truly analyze academic research articles, you need to step outside the article. Cross-reference findings with other studies, meta-analyses, and policy documents.

- Search for systematic reviews or meta-analyses on the topic

- Compare findings with reputable databases (e.g., PubMed, Google Scholar)

- Check for replication studies—has anyone reproduced these results?

- Contrast with industry reports or government data

- Scan for media coverage—has this research been sensationalized or misrepresented?

By layering context, you turn isolated findings into actionable intelligence.

This approach exposes both outlier results and consensus, letting you calibrate your own confidence in the article’s conclusions.

The evolving landscape: open access, AI tools, and the future of research analysis

How open access is changing the game

Open access journals have democratized research, but they’ve also opened the floodgates to questionable scholarship. According to Royal Society (2023), open access has doubled the number of available research articles in less than a decade.

While this broadens access, it demands more vigilance in separating gold from dross. Open access means anyone can read, but not everyone can discern.

It’s a double-edged sword—empowering when paired with rigorous analysis, dangerous when it isn’t.

AI-powered analysis: friend, foe, or both?

AI tools like textwall.ai have begun to transform how experts analyze academic research articles—summarizing, highlighting key trends, and surfacing hidden connections in seconds.

“AI integration accelerates analysis but risks amplifying existing biases unless carefully curated by domain experts.” — Extracted from Boston Research, 2024

The best analysts blend AI speed with human skepticism, using tools to spot patterns but never outsourcing judgment.

Relying exclusively on automation is as dangerous as ignoring it entirely.

The rise (and risks) of predatory journals

Predatory journals have proliferated, exploiting open access models to publish without rigorous peer review. According to The Conversation (2024), thousands of such outlets now exist.

- Aggressive solicitation of submissions: Unsolicited emails promising fast-track publication.

- Fake or absent peer review: Minimal or no review process.

- Misleading journal titles: Mimicry of reputable publications.

- Lack of indexing in major databases: Not found in PubMed, Web of Science, etc.

Falling for predatory journals not only wastes your time but also tarnishes your professional reputation.

How textwall.ai fits into the new ecosystem

Platforms like textwall.ai provide a critical edge in today’s data-saturated environment. By automating the extraction of key insights and surfacing methodological red flags, these tools amplify your ability to analyze academic research articles without sacrificing depth.

But the tool is only as effective as the user—you remain the final arbiter of credibility. As critical discourse continues to shift online, leveraging such platforms can make the difference between clarity and confusion.

Use AI as your scalpel, not your crutch.

Case studies: lessons from real-world success and failure

When research analysis changed the world

From identifying carcinogens to debunking vaccine myths, rigorous analysis of academic research articles has repeatedly shaped global outcomes.

| Case Study | Analytical Breakthrough | Real-World Impact |
|---|---|---|
| Smoking-cancer link | Synthesis of case-control and cohort studies | Led to anti-smoking legislation |
| Thalidomide scandal | Post-market surveillance | Drastic overhaul of drug approvals |
| COVID-19 vaccine efficacy | Critical review of trial data | Informed policy, restored public trust |
| Replication crisis in psychology | Systematic replication attempts | Raised research standards field-wide |

Table 5: Notable examples where critical analysis of research articles shaped policy and lives. Source: Original analysis based on Royal Society (2023), Boston Research (2024)

These examples underscore why your approach to analysis isn’t just academic—it’s existential.

Disasters and scandals: what went wrong

- The retracted Lancet hydroxychloroquine study: Rushed analysis, poor data vetting, global policy whiplash.

- The “cold fusion” debacle: Sensationalist claims, lack of replication, years of wasted research.

- Psychology’s replication crisis: Decades of canonical studies failed reproducibility checks, eroding trust.

- Nutrition’s shifting guidelines: Small, biased studies repeatedly upended by better meta-analyses.

Each disaster teaches a brutal lesson: shortcuts in analysis don’t just hurt reputations—they hurt people.

What experts do differently: a day in the life

“The best analysts don’t just read—they interrogate, triangulate, and revisit. It’s not about being the smartest in the room, but about being the most relentless.” — Paraphrased from analysis interviews, Royal Society (2023)

The real difference? Experts never stop asking uncomfortable questions.

Beyond the basics: applying insights in your own work

Transforming reading into action

- Summarize findings in your own words—don’t parrot the abstract.

- List key limitations and unresolved questions.

- Map connections to other research in your field.

- Translate insights into recommendations (for work, study, or policy).

- Share findings with peers for critical discussion.

This process turns passive reading into active contribution.

Treat every article as a springboard for your own work, not just a box to check.

Avoiding analysis paralysis: knowing when to trust your gut

- Don’t get bogged down in endless cross-checks: At some point, you have to draw the line.

- Prioritize articles most relevant to your goals: Not every paper deserves equal scrutiny.

- Accept uncertainty: Absolute certainty is a myth in research.

- Seek peer feedback: Sometimes, an outside perspective breaks the deadlock.

“Good enough” critical reading, applied consistently, beats perfectionist paralysis.

Building your own critical reading system

- Set your criteria for credibility (peer review, author expertise, etc.).

- Develop a note-taking template for every article you read.

- Automate where possible (use tools like textwall.ai for first-pass summaries).

- Schedule regular deep dives on pivotal articles.

- Continuously update your standards as you learn.

Your system is your shield against information overload—refine it relentlessly.

Supplementary perspectives: the culture, controversies, and future of academic research analysis

The history and evolution of peer review

Peer review wasn’t always the gatekeeper it is now. Its evolution is a study in academic self-regulation—and its flaws.

| Era | Peer Review Practice | Notable Issues |
|---|---|---|
| Pre-20th c. | Editor-driven, subjective | High bias, insider networks |
| 1970s-1990s | Double-blind review widespread | Somewhat greater fairness |
| 2000s-present | Open peer review, preprints rise | Faster, but more variable rigor |

Table 6: Timeline of peer review evolution. Source: Original analysis based on Royal Society (2023), The Conversation (2024)

Peer review still matters, but it’s not a guarantee—just a line of defense.

Controversies, myths, and the underground world of publishing

- “Peer review means truth”: Myth. Review is fallible and sometimes superficial.

- Paper mills: Underground outfits churn out fake research for academics on deadline.

- Ghostwriting: Industry-hired writers secretly author “independent” studies.

- Citation cartels: Groups cite each other’s work to game metrics.

Academic research analysis means navigating a world where the incentives often reward volume, not truth.

What’s next: trends to watch in research analysis

Critical analysis is evolving—driven by AI, open data, and a demand for transparency. Research from Boston Research (2024) and Royal Society (2023) shows that interdisciplinary collaboration and real-world policy engagement are now top priorities.

Expect more rapid reviews, more AI curation, and a renewed focus on public impact and accountability. The future isn’t about reading more—it’s about reading smarter.

Conclusion

If you’re still scanning academic research articles for the “right answer,” you’re missing the point—and putting yourself at risk. In a world awash with information and misinformation, the ability to analyze academic research articles critically is your calling card for credibility, impact, and autonomy. From dissecting hype-laden abstracts to unmasking hidden bias and rejecting the false security of citation metrics, you now have a ruthless, actionable toolkit at your disposal. Platforms like textwall.ai are your allies in this fight, but responsibility rests with you. Every article is a test, every claim a provocation. Keep questioning, keep cross-checking, and never mistake the trappings of authority for the substance of truth. The dark side of academic research is real—but so is the power of a well-honed, skeptical mind. Own it.
