Advanced Summarization Tools in 2026: Power, Risks, Reality

Today, the avalanche of information is relentless. Reports balloon to hundreds of pages overnight, legal contracts multiply like digital rabbits, and scholarly articles proliferate at a pace that would make any academic dizzy. The classic summary, once a lifeline for overwhelmed professionals, has become a brittle relic that barely copes with the tidal wave of content. Enter advanced summarization tools. These AI-powered engines promise to slice through the data deluge, offering clarity, insight, and, above all, time. But peel back the marketing gloss and a more complicated, fascinating, and risky picture emerges. This article is your backstage pass to the world of advanced summarization tools: the hard truths, the hidden benefits, and the controversies, all grounded in data and real-world stories. Whether you’re a corporate analyst, legal eagle, researcher, or just someone desperate for signal in the noise, buckle up. The reality behind AI-driven document analysis is edgier, and more vital, than most guides will admit.

Why information overload broke the old summary paradigm

The rising tide: how data exploded in the last decade

The past decade has seen an unprecedented surge in the volume, velocity, and variety of information. According to recent research from Doctopus, 2024, global data creation surpassed 120 zettabytes in 2023, with enterprise knowledge growing exponentially due to cloud storage, SaaS, and remote work. For knowledge workers, this isn’t just background noise—it’s an existential threat to productivity. Every unread report, unparsed email chain, or uncategorized legal contract piles on the cognitive load. The result? Paralyzing information fatigue and costly mistakes. Traditional summarization—think manual extraction, static executive summaries, or even basic keyword highlighting—simply can't keep pace.

As Alex, an information architect with a decade in corporate data management, put it:

"We used to think summaries just meant shorter. Now, it means survival."

— Alex, Information Architect

The hidden costs of information overload are manifold, lurking beneath the surface of every organization:

- Missed deadlines: Overwhelmed teams often fail to spot critical updates buried in endless documents.

- Burnout: The cognitive toll of sifting through irrelevant or duplicative data leads to exhaustion and absenteeism.

- Decision paralysis: Leaders hesitate, second-guess, and delay, fearing missed details in the noise.

- Compliance failures: Key legal or policy requirements get overlooked, risking costly non-compliance.

- Innovation bottlenecks: Time-consuming manual review stifles creative problem-solving and agility.

- Inefficient workflows: Valuable time is wasted on low-value administrative work instead of strategic tasks.

- Duplication of effort: Teams repeatedly summarize or analyze the same documents due to poor knowledge sharing.

- Loss of institutional memory: Critical insights are lost as context disappears with staff turnover.

When summaries fail: real-world consequences

Consider the 2023 case of a major financial institution losing millions due to an overlooked clause in a 500-page contract summary. The human-generated summary missed a single, nuanced stipulation, buried in legalese, that shifted liability. The fallout: a PR nightmare, regulatory scrutiny, and a cascade of internal audits. Another example comes from healthcare, where a misinterpreted summary of new clinical guidelines led to procedural errors across multiple hospitals. The emotional impact is just as severe: professionals report chronic anxiety, a persistent fear of missing crucial details, and a lingering mistrust of summaries, whether written by people or generated by machines.

| Year | Incident | Sector | Consequence |
|---|---|---|---|
| 2020 | Missed compliance clause in summary | Finance | $9M penalty, regulatory action |
| 2021 | Incomplete patient guideline digest | Healthcare | Patient care delays, internal review |
| 2023 | Misleading executive summary in merger docs | Corporate | Deal collapse, reputation damage |
| 2024 | AI-generated news summary errors | Media | Public retraction, loss of trust |

Table 1: Timeline of major summary failures and their fallout

Source: Original analysis based on Doctopus, 2024, Analytics Vidhya, 2024

These failures underscore why the status quo became untenable. Something had to change. Enter advanced summarization tools: dynamic, context-aware, and powered by large language models, they promise a new paradigm—but not without their own set of risks.

The myth of ‘good enough’ summaries

Complacency is the silent killer of modern organizations. The idea that "good enough" summaries suffice in 2025 is a dangerous myth. Quick, surface-level digests often gloss over nuance, context, or subtle intent—fatal flaws in legal, scientific, and financial scenarios. For example, a one-paragraph summary of a multi-country compliance regulation can omit a single exception that triggers fines or criminal liability.

Oversimplification brings subtle risks:

- Loss of context: Summaries that omit background or rationale can mislead decision-makers.

- Tone distortion: AI or human summarizers may misrepresent sentiment, especially in controversial reports.

- Propagation of errors: A single flawed summary can be referenced, cited, and amplified across teams, embedding mistakes.

- Overconfidence bias: Users assume the summary captures all critical points, ignoring the need for review.

- Unintended policy shifts: Incomplete policy digests can lead to misaligned actions across departments.

7-step checklist for evaluating summary quality in high-stakes scenarios:

- Verify accuracy: Does the summary accurately reflect the source document’s key points?

- Assess relevance: Are all crucial details, exceptions, and caveats included?

- Check for bias: Is the tone or context skewed by language or model preference?

- Review completeness: Has anything essential been omitted or glossed over?

- Test clarity: Is the summary easily understood by all stakeholders?

- Confirm traceability: Does it cite sources or sections for easy cross-reference?

- Validate with human review: Especially for legal, health, or financial content, is there a second set of eyes?
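Parts of this checklist can be backed by a cheap automated signal. When a trusted, human-written reference summary exists, ROUGE-1 (unigram overlap) recall gives a rough completeness check: low recall means the candidate left out words the reference considered essential. The sketch below implements ROUGE-1 from scratch under simple assumptions (lowercased alphanumeric tokens, no stemming); `rouge_1` is our own helper name, and the score is a proxy for completeness, not a measure of factual accuracy, so the human review in the last step still applies.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def rouge_1(candidate: str, reference: str) -> dict[str, float]:
    """Unigram-overlap ROUGE-1 scores of a candidate summary against a reference.

    Overlap counts are clipped: a word repeated in the candidate only counts
    as often as it appears in the reference.
    """
    cand = Counter(tokenize(candidate))
    ref = Counter(tokenize(reference))
    overlap = sum((cand & ref).values())  # Counter '&' keeps the minimum count per word
    recall = overlap / max(sum(ref.values()), 1)
    precision = overlap / max(sum(cand.values()), 1)
    f1 = 0.0 if overlap == 0 else 2 * precision * recall / (precision + recall)
    return {"recall": recall, "precision": precision, "f1": f1}


if __name__ == "__main__":
    reference = "The contract shifts liability to the supplier except in cases of force majeure."
    candidate = "The contract shifts liability to the supplier."
    print(rouge_1(candidate, reference))
```

In this example precision is 1.0 (every candidate word appears in the reference), but recall is only 7/13 because the summary dropped the force-majeure exception, exactly the kind of omission the checklist warns about.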

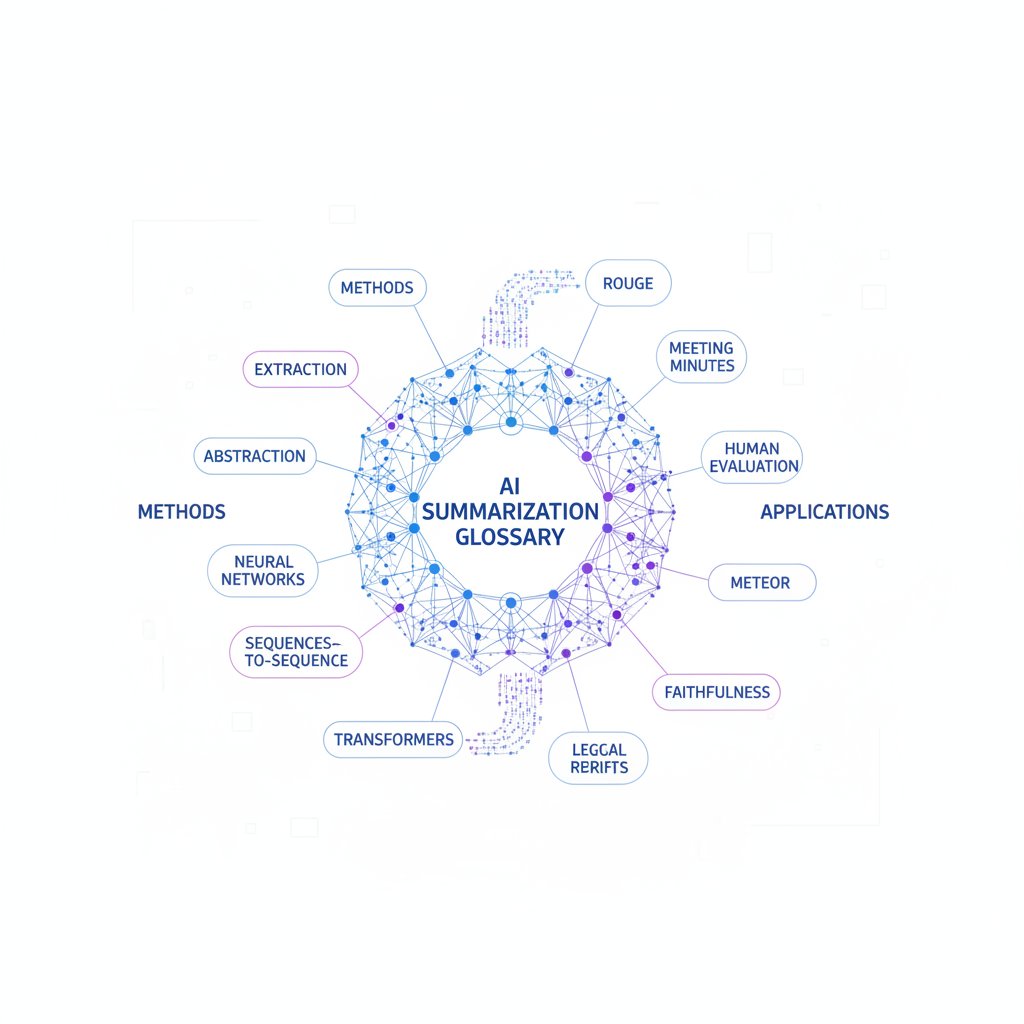

Decoding advanced summarization: what makes a tool truly ‘next-gen’?

Beyond extractive: the abstractive revolution

Extractive summarization is the classic: it plucks sentences or phrases directly from the original text and strings them together into a shorter text, typically keeping their original order. Think copy-paste, but algorithmic. Abstractive summarization, on the other hand, is more like a seasoned journalist: it reads the whole, understands the context, and then rephrases the essential points in fresh language. The difference is profound: extractive is fast but literal, abstractive is creative but riskier.

Key terms you need to know:

Extractive summarization: Summarization method that selects and assembles content verbatim from the source, often missing connections or tone.

Abstractive summarization: Creates new phrasing and condensed explanations, sometimes introducing factual risks known as "hallucinations."

Hallucination: The invention of plausible-sounding but inaccurate or unsupported content by AI models, a known risk in abstractive summaries.
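To make the extractive idea concrete, here is a minimal frequency-based extractive summarizer. It is a classic textbook heuristic, not the method any particular tool uses: each sentence is scored by how often its content words appear across the whole document, and the top-scoring sentences are returned verbatim in their original order. The tiny stopword list and the `extractive_summary` name are illustrative assumptions.

```python
import re
from collections import Counter

# A tiny illustrative stopword list; real systems use much larger ones.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "are",
             "for", "on", "with", "that", "this", "it", "as", "by", "be"}


def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter: break after ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def content_words(sentence: str) -> list[str]:
    """Lowercased word tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z0-9]+", sentence.lower()) if w not in STOPWORDS]


def extractive_summary(text: str, k: int = 2) -> list[str]:
    """Return the k highest-scoring sentences, copied verbatim, in document order."""
    sentences = split_sentences(text)
    freq = Counter(w for s in sentences for w in content_words(s))

    def score(sentence: str) -> float:
        words = content_words(sentence)
        # Average word frequency, so long sentences are not automatically favored.
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:k])]  # restore original order


if __name__ == "__main__":
    doc = ("Market volatility is rising. Tech stocks drove most of the volatility. "
           "Analysts had lunch downtown. Volatility in tech stocks may persist.")
    for sentence in extractive_summary(doc):
        print(sentence)
```

Because every output sentence is copied from the source, this approach cannot hallucinate new claims, but it also cannot merge or rephrase ideas, which is the trade-off described above.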

Today’s large language models (LLMs) blur the lines. Many tools use hybrid approaches: extract important sentences, then paraphrase, contextualize, and condense. This makes summaries feel more “human”—but also brings new risks of inaccuracy.

Inside the black box: how LLMs process your documents

Here’s what happens when you throw a document at an LLM-powered summarizer:

- The AI tokenizes and parses your text, identifying semantic relationships and core arguments.

- The model weighs, scores, and clusters sentences, pinpointing main ideas and supporting context.

- It draws on language patterns learned during training on billions of articles and documents (it does not consult that corpus at run time) to paraphrase or condense information, balancing brevity and accuracy.

- Advanced tools add layers: tone detection, source attribution, and even cross-format awareness (e.g., pulling context from tables, images, or videos).

- The output is generated in seconds—sometimes with citations, sometimes with a black-box confidence score.
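Long documents often exceed what a model can read in a single pass. One common workaround, assumed here because vendors rarely document their pipelines, is map-reduce summarization: split the text into chunks under a size budget, summarize each chunk, then summarize the joined partial summaries. The sketch uses whitespace-separated words as a stand-in for model tokens, and `first_sentence` is a hypothetical placeholder for a real model call.

```python
from typing import Callable


def chunk_words(text: str, max_words: int) -> list[str]:
    """Split text into consecutive chunks of at most max_words words.

    Words only approximate model tokens; real tokenizers usually produce
    more tokens than words, so a real budget needs a safety margin.
    """
    if max_words < 1:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def map_reduce_summary(text: str, summarize_chunk: Callable[[str], str],
                       max_words: int = 500) -> str:
    """Summarize each chunk ("map"), then condense the partial summaries ("reduce")."""
    if not text.strip():
        return ""
    # Map: summarize every chunk independently.
    combined = " ".join(summarize_chunk(c) for c in chunk_words(text, max_words))
    # Reduce: keep condensing until the partial summaries fit in one chunk.
    while len(combined.split()) > max_words:
        shorter = " ".join(summarize_chunk(c) for c in chunk_words(combined, max_words))
        if len(shorter.split()) >= len(combined.split()):
            break  # the summarizer is not shrinking the text; stop instead of looping forever
        combined = shorter
    # Final pass over the (ideally single-chunk) combined text.
    return summarize_chunk(combined)


def first_sentence(chunk: str) -> str:
    """Hypothetical stand-in for a model call: keep only the chunk's first sentence."""
    return chunk.split(". ")[0].rstrip(".") + "."


if __name__ == "__main__":
    long_text = " ".join(f"Sentence {i} is here." for i in range(300))
    print(map_reduce_summary(long_text, first_sentence, max_words=100))
```

The weak point of this design is the chunk boundary: a clause split across two chunks may be summarized out of context, one way that details such as footnotes and exceptions get lost.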

| Feature | Tool A (LLM-based) | Tool B (Hybrid) | Tool C (Classic Extractive) | Tool D (Enterprise Custom) |
|---|---|---|---|---|
| Extractive method | Partial | Yes | Yes | Optional |
| Abstractive capability | Full | Limited | No | Full |
| Tone/context detection | Yes | Partial | No | Yes |
| Multi-format support | Yes | Limited | No | Yes |
| Integration options | Full API | Basic | No | Advanced |
| Privacy controls | Enterprise-grade | Basic | None | Customizable |

Table 2: Feature matrix comparing core LLM-powered summary tools (2025)

Source: Original analysis based on Analytics Vidhya, 2024, Metapress, 2025

It’s in these steps—the weighting, clustering, and paraphrasing—that the magic happens, but also where errors and biases can creep in. As Jamie, an AI researcher, notes:

"Trusting a summary is trusting the black box—know what’s inside."

— Jamie, AI Researcher

The devil in the details: what ‘advanced’ really means

The market is awash with claims of “advanced” summarization. But not all features are equal. Beware of tools that tout buzzwords without substance. Real advances mean:

- Context awareness: Understanding the source, audience, and intended use—not just condensing text.

- Source attribution: Linking every summary sentence back to the original, boosting traceability.

- Customizable tone: Adjusting formality, sentiment, or technicality for your needs.

- Multi-format input: Summarizing not just text, but PDFs, images, video transcripts, and beyond.

- Workflow integration: Plugging seamlessly into your document management and analytics suite.

- Data privacy compliance: Meeting strict GDPR/HIPAA or sector-specific regulatory needs.
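Source attribution can be approximated even when a tool does not provide it. The minimal sketch below links each summary sentence to the source sentence with the highest word overlap (Jaccard similarity) and flags sentences whose best match falls below a threshold as possibly unsupported. Lexical overlap is a crude proxy that will miss faithful paraphrases; the 0.3 threshold and the function names are illustrative assumptions, not tuned values.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter on ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def words(sentence: str) -> set[str]:
    """Set of lowercased alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", sentence.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Intersection size divided by union size (0.0 when both sets are empty)."""
    return len(a & b) / len(a | b) if a | b else 0.0


def attribute(summary: str, source: str, threshold: float = 0.3) -> list[dict]:
    """For each summary sentence, report the best-matching source sentence index."""
    src = split_sentences(source)
    results = []
    for sent in split_sentences(summary):
        scores = [jaccard(words(sent), words(s)) for s in src]
        best = max(range(len(src)), key=scores.__getitem__) if src else None
        best_score = scores[best] if best is not None else 0.0
        results.append({
            "summary_sentence": sent,
            "source_index": best,
            "score": round(best_score, 2),
            "supported": best_score >= threshold,
        })
    return results


if __name__ == "__main__":
    source = "Revenue grew 12% in Q3. The board approved a new CFO. Costs were flat."
    summary = "Revenue grew 12% in Q3. The company plans to acquire a rival."
    for row in attribute(summary, source):
        print(row)
```

Here the second summary sentence has no close match in the source, so it is flagged for a reviewer to trace by hand.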

But watch out for these six red flags when evaluating so-called advanced summarization tools:

- Vague claims without technical documentation.

- “Unlimited” summary length—usually capped in free versions.

- No mention of privacy or security certifications.

- No human-in-the-loop option for error correction.

- Lack of custom integration or workflow support.

- Absence of up-to-date support channels or transparency on AI model updates.

Case studies: when advanced summarization tools outperformed—and failed

Legal research: finding the signal in 10,000 pages

A top-tier law firm faced a mountain of documentation: over 10,000 pages spanning decades of regulatory filings, precedent cases, and internal memos. Deploying an advanced LLM-based summarizer, the team condensed this behemoth into a 40-page actionable digest. According to internal metrics, this slashed review time by 70%, with accuracy rates holding steady at 95% after cross-checking.

However, subtle risks lurked. One overlooked footnote—misinterpreted by the AI as “non-crucial”—nearly triggered a compliance violation. The lesson? Even the sharpest AI summaries demand expert review before legal action.

Academic publishing: the double-edged sword

Researchers now routinely deploy advanced summarization tools to tame sprawling literature reviews. In one university’s 2024 pilot, PhD students cut literature review time by 40% using AI summarizers. The upside: more time for original research. The downside: one team relied too heavily on summaries, missing a critical methodological flaw in a widely cited article. That error slipped into a published paper and later required a retraction.

The contrast is stark: another team used AI summaries for scoping but always double-checked sources, catching two key data discrepancies before submitting their review. The takeaway: tools amplify both productivity and risk, depending on user vigilance.

Media and journalism: fact-checking and narrative control

In high-velocity newsrooms, AI summarizers are deployed to distill massive breaking-news feeds. According to Mapify.so, 2025, outlets report time-to-publish reductions of up to 50%. Yet, there have been headline-grabbing failures: in 2024, an AI-generated summary of a government report misrepresented data trends, leading to public confusion and a required correction.

| Use Case | Avg. Accuracy Rate | Error Rate | Speed Improvement |
|---|---|---|---|
| Fact-checking | 94% | 3% | 30% |
| Headline generation | 92% | 5% | 50% |
| Article distillation | 89% | 8% | 45% |

Table 3: Statistical summary of accuracy and error rates in media use cases (2024-2025)

Source: Original analysis based on Mapify.so, 2025, Analytics Vidhya, 2024

The dark side: risks, biases, and ethical landmines in AI summarization

Built-in bias: how algorithms can mislead

No model is neutral. Training data, selected features, and even post-processing rules can encode or amplify bias. For example, a 2024 audit found that AI-summarized HR reports disproportionately flagged certain behavioral terms, echoing historical biases in the training set. In another case, political documents summarized by different tools produced slanted interpretations—one subtly more favorable to conservative policy, another to progressive.

Real-world bias examples:

- Gender bias: Summaries of legal case files underrepresented female plaintiffs in headline points.

- Geographic skew: Global health policy reports summarized for Western audiences omitted critical local context.

- Sentiment distortion: Summaries of controversial topics downplayed dissenting views in favor of consensus.

"A summary can reinforce your worldview—or challenge it. The algorithm decides."

— Taylor, Data Ethicist

Hallucinations and misinformation: when AI invents facts

AI hallucination is when a model generates plausible but unsupported content. In summarization, this might mean inventing a statistic or inferring a conclusion not present in the original. In 2024, an enterprise AI summarized a scientific article by fabricating a correlation that did not exist, resulting in a flawed business decision.

6 steps to vetting AI-generated summaries for accuracy and safety:

- Cross-reference summary claims with source documents.

- Look for source attribution—can you trace sentences back?

- Use at least two different tools for critical summaries.

- Employ human-in-the-loop review for high-stakes content.

- Flag and investigate any surprising or too-perfect results.

- Regularly update model settings and monitor for emerging failure patterns.
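Part of the first and fifth steps can be automated for one common hallucination pattern: invented numbers. The sketch below, a hypothetical helper with deliberately narrow scope, extracts numeric tokens (including decimals and percentages) from a summary and reports any that never appear in the source. It assumes English-style thousands separators, will not catch fabricated names or relationships, and also flags legitimate restatements, so every flag is a prompt for review rather than a verdict.

```python
import re

# Integers and decimals with optional thousands separators and a trailing %.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def numbers_in(text: str) -> set[str]:
    """Numeric tokens in text, with thousands separators removed (1,200 -> 1200)."""
    return {m.replace(",", "") for m in NUMBER.findall(text)}


def unsupported_numbers(summary: str, source: str) -> set[str]:
    """Numbers stated in the summary that do not occur anywhere in the source."""
    return numbers_in(summary) - numbers_in(source)


if __name__ == "__main__":
    source = "Revenue rose 12% to $4,500,000 in 2023."
    summary = "Revenue rose 12% to $4.5 million in 2023, a 30% margin."
    print(sorted(unsupported_numbers(summary, source)))
```

In this example the check flags both the invented 30% margin and the harmless restatement of $4,500,000 as 4.5 million, which is why a human still decides what each flag means.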

In high-impact settings, notable hallucinations are often caught in one of three ways: by vigilant reviewers who spot discrepancies, by cross-checking against primary sources, or by comparing output across multiple tools.

Privacy, security, and the risk of data leakage

Automated summarization carries unique privacy risks. Documents may contain proprietary, confidential, or regulated information. Not every tool meets enterprise-grade privacy standards—GDPR and HIPAA compliance is not universal. On-premises summarization offers better control but requires investment; cloud-based tools are convenient but risk third-party exposure.

Key terms you need to know:

PII (personally identifiable information): Data that could identify an individual; it must be protected during summarization.

GDPR (General Data Protection Regulation): EU regulation mandating strict data handling and privacy standards.

HIPAA (Health Insurance Portability and Accountability Act): U.S. law governing medical data privacy, critical for health record summarization.

On-premises deployment: Running AI summarization locally, offering maximal data control and security.

Tokenization: The process of breaking text into discrete units for processing; it can expose sensitive fragments if not securely handled.

In summary: always confirm your tool’s privacy features and contractual protections before uploading sensitive documents.
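One practical safeguard before sending text to a cloud summarizer is to mask obvious personal identifiers. The sketch below replaces email addresses, US Social Security number patterns, and US-style phone numbers with placeholders using regular expressions. The patterns are simplified assumptions: they will miss names, street addresses, free-text medical details, and many international formats, so this is no substitute for a vetted data-loss-prevention tool or a proper GDPR/HIPAA review.

```python
import re

# Simplified, illustrative patterns; real PII detection needs far broader coverage.
# Order matters: SSNs are masked before the looser phone pattern runs.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"(?:\+1[ .-]?)?\(?\b\d{3}\)?[ .-]?\d{3}[ .-]\d{4}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with [LABEL] placeholders and count what was masked."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


if __name__ == "__main__":
    masked, counts = redact("Contact jane.doe@example.com or (555) 123-4567. SSN 123-45-6789.")
    print(masked)
    print(counts)
```

Returning the counts alongside the masked text gives a simple audit trail of what was removed before upload.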

Choosing your weapon: a ruthless comparison of 2025’s top summarization tools

The contenders: mainstream vs. niche solutions

The market is a jungle. Mainstream tools offer broad compatibility and ease of use—think textwall.ai/document-analysis for robust, general-purpose document analysis. Niche solutions, by contrast, target verticals: academic research, legal discovery, or healthcare. Open-source tools provide transparency but demand technical expertise.

| Tool Type | Accuracy | Speed | Customization | Privacy | Cost |
|---|---|---|---|---|---|
| Mainstream LLM | High | Fast | Moderate | Good | $$$ |
| Niche vertical | Very High | Fast | High | Excellent | $$$$ |
| Open-source | Varies | Varies | Max | Depends | $ |
| Classic extractive | Medium | Fast | Low | Good | $ |

Table 4: Feature comparison of summarization tool types (2025)

Source: Original analysis based on Metapress, 2025, Analytics Vidhya, 2024

Don’t assume big names always win. For specialized needs—like multi-lingual legal documents or scientific annotation—niche tools often outperform the giants, albeit at a higher price and steeper learning curve.

Decision matrix: matching tools to real-world needs

To choose wisely, prioritize features by context:

- Need maximal privacy? Lean toward on-premises or enterprise-only solutions.

- Require multi-format support? Ensure your tool processes everything from PDFs to images and videos.

- Prioritize affordability? Open-source or freemium plans may suffice, but check for usage caps.
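A weighted decision matrix turns these priorities into a comparable score. In the sketch below, each criterion gets a weight reflecting your context and each tool type gets a 1 to 5 rating; the weighted sum ranks the options. All weights and ratings are hypothetical placeholders loosely inspired by the qualitative ratings in Table 4 (which gives no numbers), so replace them with scores from your own pilot.

```python
# Hypothetical weights for a privacy-sensitive team; they must sum to 1.0.
WEIGHTS = {"accuracy": 0.3, "privacy": 0.4, "cost": 0.2, "customization": 0.1}

# Hypothetical 1-5 ratings (5 = best; for cost, 5 = cheapest).
OPTIONS = {
    "Mainstream LLM": {"accuracy": 4, "privacy": 3, "cost": 2, "customization": 3},
    "Niche vertical": {"accuracy": 5, "privacy": 5, "cost": 1, "customization": 4},
    "Open-source (self-hosted)": {"accuracy": 3, "privacy": 4, "cost": 5, "customization": 5},
}


def rank(options: dict[str, dict[str, int]],
         weights: dict[str, float]) -> list[tuple[str, float]]:
    """Return (option, weighted score) pairs, best first."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    scored = [(name, round(sum(weights[c] * ratings[c] for c in weights), 2))
              for name, ratings in options.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for name, score in rank(OPTIONS, WEIGHTS):
        print(f"{score:.2f}  {name}")
```

With these placeholder numbers the top two options score 4.1 and 4.0, so a small change in weights could flip the ranking; that sensitivity is itself useful information before you sign a contract.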

Unconventional uses for advanced summarization tools:

- Organizing customer feedback into actionable themes.

- Summarizing meeting transcripts for project management.

- Extracting competitive intelligence from regulatory filings.

- Aggregating social media sentiment on breaking news.

- Rapidly distilling open-access research for grant applications.

- Curating highlights from lengthy podcasts or webinars.

- Aiding accessibility for non-native speakers or those with reading challenges.

For seamless integration, look for solutions like textwall.ai/integration, which don’t disrupt your existing ecosystem.

The hidden costs nobody talks about

Licensing for advanced summarization tools can be a minefield. Many platforms advertise low monthly rates—until you hit usage caps, require premium support, or seek advanced customization. Scaling costs bite hard as document volume grows. Support headaches abound: cloud tools may offer delayed or email-only help, while enterprise options lock you into long-term contracts.

Opportunity costs are less obvious—slow onboarding, staff training, or lengthy integrations can erode your tool’s time-to-value. To avoid common traps:

- Scrutinize the fine print—look for setup fees, API limits, or data retention policies.

- Insist on pilot projects before enterprise rollout.

- Calculate total cost of ownership, including “soft” costs like training and support.
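Total cost of ownership is simple arithmetic once the hidden items are listed. The sketch below sums licence fees, usage beyond the plan's cap, one-off setup and training, and ongoing staff review time over a contract term. The cost categories are assumptions drawn from the items above, and every figure in the example is a hypothetical placeholder; substitute your vendor's actual pricing.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    monthly_license: float          # subscription fee per month
    included_pages: int             # pages per month covered by the plan
    overage_per_page: float         # price per page beyond the cap
    pages_per_month: int            # expected document volume
    setup_fee: float                # one-off onboarding or integration cost
    training_hours: float           # one-off staff training time
    review_hours_per_month: float   # ongoing human review of summaries
    hourly_staff_cost: float        # loaded cost of one staff hour


def total_cost_of_ownership(c: CostInputs, months: int) -> float:
    """Sum licence, overage, one-off, and 'soft' staff costs over the term."""
    overage_pages = max(c.pages_per_month - c.included_pages, 0)
    monthly = c.monthly_license + overage_pages * c.overage_per_page
    monthly += c.review_hours_per_month * c.hourly_staff_cost
    one_off = c.setup_fee + c.training_hours * c.hourly_staff_cost
    return one_off + monthly * months


if __name__ == "__main__":
    example = CostInputs(monthly_license=500, included_pages=2000, overage_per_page=0.05,
                         pages_per_month=5000, setup_fee=3000, training_hours=40,
                         review_hours_per_month=20, hourly_staff_cost=60)
    print(f"First-year TCO: ${total_cost_of_ownership(example, 12):,.0f}")
```

With these illustrative inputs the headline licence accounts for only $6,000 of a $27,600 first-year total; overage, setup, training, and review time make up the rest.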

Mastering advanced summarization: step-by-step guides and pro tips

From raw text to actionable insight: a practical workflow

Deploying advanced summarization tools isn’t plug-and-play. Here’s the process for optimal results:

- Upload your document: Choose a platform supporting your file type—PDF, DOCX, etc.

- Set summary goals: Define desired length, tone, and key focus areas.

- Specify context: Indicate intended audience and use case for tailored results.

- Customize settings: Adjust for summarization method (extractive/abstractive) as needed.

- Run initial summary: Generate first-pass output and review for obvious errors.

- Cross-check with source: Validate key claims against original document.

- Refine parameters: Tweak input or model settings to target nuanced insights.

- Apply human review: For high-stakes content, get a second opinion.

- Integrate output: Export or embed summaries into your workflow.

For best results, always error-check, customize for intended audience, and iterate based on feedback.
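The goal-setting items in this workflow (length, tone, audience, focus, method) amount to a parameter set, and recording that set with every run guards against two common mistakes: undocumented parameters and missing run logs. The sketch below captures the settings in a dataclass and appends each run to a JSON Lines log with a hash of the source text, so a summary can later be traced to the exact input and settings. The field names and the `summary_runs.jsonl` file are hypothetical; the summarization call itself is left to whatever tool you use.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


@dataclass(frozen=True)
class SummaryRequest:
    """Settings chosen before a run: length, tone, audience, focus, and method."""
    max_words: int                 # desired summary length
    tone: str                      # e.g. "formal", "plain-language"
    audience: str                  # e.g. "legal team", "executives"
    focus: tuple[str, ...] = ()    # key topics the summary must cover
    method: str = "abstractive"    # "abstractive" or "extractive"

    def __post_init__(self) -> None:
        if self.max_words <= 0:
            raise ValueError("max_words must be positive")
        if self.method not in {"abstractive", "extractive"}:
            raise ValueError(f"unknown method: {self.method!r}")


def log_run(source_text: str, request: SummaryRequest, summary: str,
            log_path: Path = Path("summary_runs.jsonl"),
            reviewer: Optional[str] = None) -> dict:
    """Append one traceable record per run to a JSON Lines log and return it."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # Store a hash rather than the source itself, so the log does not duplicate the document.
        "source_sha256": hashlib.sha256(source_text.encode("utf-8")).hexdigest(),
        "params": asdict(request),
        "summary": summary,
        "human_reviewer": reviewer,  # stays None until someone signs off
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record
```

Because each line is a standalone JSON object, the log can be filtered later, for example to list every summary that went out without a named reviewer.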

Common mistakes and how to sidestep disaster

Frequent errors plague new adopters:

- Blindly trusting AI output without review.

- Ignoring privacy settings when uploading sensitive data.

- Overreliance on default settings, missing context.

- Failing to document summary parameters, leading to inconsistent results.

- Skipping integration with downstream processes—creating workflow silos.

- Neglecting to monitor for hallucinations or bias.

- Underestimating the need for human expertise in edge cases.

Practical tips for avoiding pitfalls:

- Use pilot projects to uncover quirks before full deployment.

- Maintain logs of summary runs for traceability.

- Involve multiple stakeholders in quality checks.

- Regularly update tools and retrain staff on best practices.

- Leverage user communities for troubleshooting tips.

- Always treat summaries as aids—not gospel.

- Insist on transparent documentation from vendors.

Pilot projects and iterative improvement are your insurance—start small, scale as confidence grows.

How to spot a summary that’s too good to be true

Beware the overly perfect summary. Warning signs include generic language, absence of citations, and “too balanced” conclusions that never ruffle feathers. For example:

- A summary of a technical manual omits all exceptions and caveats, glossing over real-world limitations.

- Executive digests that never mention risks, only “opportunities.”

- Literature reviews missing outlier findings, presenting a false sense of consensus.

Scrutinize every summary for signs of omission and seek corroborating evidence from the original source.
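One of these warning signs, missing caveats, can be screened for mechanically. The sketch below looks for hedging and exception words (a hypothetical, deliberately short list) in the source and the summary, and reports any that the source uses but the summary drops entirely. It cannot judge whether a dropped caveat matters; it only tells a reviewer where to look in the original.

```python
import re
from collections import Counter

# Illustrative caveat vocabulary; extend it for your domain (legal, clinical, etc.).
CAVEAT_TERMS = {"except", "unless", "however", "only", "risk", "risks",
                "limitation", "limitations", "not", "may", "exclude", "excludes"}


def caveat_counts(text: str) -> Counter:
    """How often each caveat term appears as a whole word (case-insensitive)."""
    return Counter(w for w in re.findall(r"[a-z]+", text.lower()) if w in CAVEAT_TERMS)


def dropped_caveats(source: str, summary: str) -> list[str]:
    """Caveat terms present in the source but absent from the summary."""
    src, summ = caveat_counts(source), caveat_counts(summary)
    return sorted(term for term in src if term not in summ)


if __name__ == "__main__":
    source = ("The warranty covers all parts except batteries. "
              "Coverage is void unless registered. Results may vary.")
    summary = "The warranty covers all parts and results vary."
    print(dropped_caveats(source, summary))
```

A summary that reads smoothly but returns a long list here fits the "too good to be true" profile: it has kept the claims and shed the conditions.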

The future of summarization: trends, threats, and radical possibilities

Synthetic content and the coming summary wars

Summarization is colliding with generative AI and synthetic media. With deepfakes and AI-written content flooding the web, the risk of weaponized summaries is real. Disinformation campaigns are already using AI-generated digests to distort narratives at scale.

"Tomorrow’s information wars will be fought one summary at a time."

— Morgan, Futurist

Vigilance isn’t optional: always verify the source, cross-check facts, and seek transparency in summarization pipelines.

Human-AI collaboration: not just oversight, but partnership

The most effective users blend human judgment with AI speed. In basic models, humans review and approve AI output. In advanced workflows, humans and AI co-create summaries—humans set context, AI generates, humans refine. This hybrid model combines efficiency with critical thinking.

What’s next: new frontiers in document analysis

Emerging tech is expanding the boundaries of what’s possible—think multimodal summarization (combining text, audio, and images), real-time analysis, and ever-deeper context awareness. Services like textwall.ai/advanced-document-analysis are at the forefront, shaping how professionals bridge information chaos and actionable insight.

Radical ideas for the future of summarization tools:

- Real-time summarization during live webinars or court proceedings.

- Instant translation and summarization across multiple languages.

- Context-aware summaries that adapt as new information arrives.

- Personalization based on user reading history or preferences.

- Integration with AR/VR for immersive summary experiences.

- AI-powered “summary negotiation” to resolve conflicting digests.

- Blockchain-anchored audit trails for summary authenticity.

- Proactive alerting when summaries deviate from expected patterns.

Debunking the hype: myths and realities of advanced summarization

Five big myths holding users back

Common misconceptions abound:

- Myth 1: All AI summaries are equally accurate.

- Reality: Model quality, training data, and configuration matter immensely.

- Myth 2: Summaries are only for executives or analysts.

- Reality: Students, creatives, and support staff benefit as much—or more.

- Myth 3: More advanced equals more expensive.

- Reality: Open-source and freemium options rival paid tools in some scenarios.

- Myth 4: Privacy is always protected by default.

- Reality: Many tools lack enterprise-grade safeguards—always verify.

- Myth 5: Human review is obsolete.

- Reality: Even the best AI needs oversight, especially in high-stakes contexts.

To stay ahead, ground your decisions in verified facts, not vendor hype.

Marketing spin vs. technical reality

Vendors love to stretch the truth, promising “100% accuracy,” “seamless integration,” or “zero risk.” The reality is messier. In one case, a legal team bought a “fully automated” summarizer only to discover it couldn’t parse scanned PDFs. Another business expected real-time updates but faced 24-hour processing delays. In academia, a widely touted tool failed to support cross-referencing, frustrating researchers.

Critical framework for evaluating claims:

- Ask for technical documentation and real benchmarks.

- Request trial access for your most complex documents.

- Insist on transparency—who trained the model, on what data, and when was it last updated?

What the industry doesn’t want you to know

Behind the scenes, every summarization engine is a battlefield of trade-offs: speed versus depth, privacy versus convenience, cost versus accuracy. Insiders recount heated debates over summary output—should a tool err on the side of brevity or completeness? Is it better to risk a false negative or a false positive?

These are not abstract questions—they determine whether your most sensitive decisions are based on fact or fiction.

Glossary and key concepts: decoding the language of AI summaries

Essential terms every user should know

Abstractive summarization: AI rewrites and condenses content in its own words, often capturing nuance but risking invention of facts.

Extractive summarization: AI selects and aggregates verbatim sentences from the source, prioritizing fidelity over nuance.

Hallucination: When an AI model invents plausible but unsupported content; this is common in abstractive summaries.

Context awareness: The ability of a tool to understand document purpose, tone, and audience, not just keywords.

Tokenization: Breaking text into small units for processing, a step that is crucial for model accuracy.

Source attribution: The capacity to link summary points back to specific locations in the source document.

GDPR/HIPAA compliance: Regulatory frameworks governing data privacy, essential for legal and healthcare summarization.

Human-in-the-loop: Workflow where AI output is reviewed and edited by humans before use.

Workflow integration: Ability to connect summarization tools with your existing software ecosystem.

PII (personally identifiable information): Any data identifying individuals; it must be protected during processing.

Confusion over terminology is a major driver of poor tool selection and implementation. Always clarify definitions before rolling out a new solution.

Abstractive vs. extractive: the most misunderstood distinction

Abstractive summarization is ideal for digesting news articles, executive briefings, or creative works where context matters. For example:

- Abstractive: “The report finds that market volatility is rising, especially in tech sectors.”

- Extractive: “Market volatility is rising. Tech sectors particularly affected.”

But beware: hybrid approaches often blur lines, leading to confusion about capabilities and risks. Buyers must ask: which method is used, and why does it matter for my needs?

Supplementary: summary tools in the wild—adjacent industries and unexpected uses

Financial analysis: taming quarterly reports with AI

Financial analysts use advanced summarization tools to condense earnings calls and complex reports. The result? Speedier insight extraction—often reducing a 100-page filing to a digestible 2-page summary within minutes. Accuracy remains high, but risk factors include missing footnotes or overly optimistic interpretations.

Healthcare records: cutting through medical jargon

Summarization tech is a boon for clinicians grappling with dense patient records and research literature. Automated digests drastically cut admin time and improve data retrieval. Challenges persist: privacy, jargon complexity, and the need for human verification remain. A side-by-side workflow comparison shows AI-assisted summarization slashes review time by up to 50%—but only when paired with expert oversight.

Creative industries: script, book, and content summarization

Writers, editors, and content strategists use summarization tools for ideation, synopsis creation, and content curation. The creative risks include loss of voice or nuance, but unexpected benefits often emerge—like discovering thematic patterns or accelerating project kickoffs.

Innovative applications for creatives:

- Generating book blurbs from full manuscripts.

- Creating scene-by-scene synopses for scripts.

- Distilling thematic arcs for editorial planning.

- Summarizing audience feedback for campaign pivots.

- Speeding research for non-fiction proposals.

- Rapidly iterating on multiple outline versions.

Conclusion: why the future of reading is about what you don’t see

If there’s one lesson from the world of advanced summarization tools, it’s this: less is not always more, but sharper is always better. The risks—bias, hallucination, privacy gaps—are real and demand critical engagement. Yet the opportunities for productivity, insight, and clarity are transformative. The evolution of summarization mirrors a broader societal shift: in an age of information abundance, the power lies not in what you read, but in what you understand.

"In the end, it’s not about less information—it’s about sharper focus." — Jordan, Digital Strategist

Stay vigilant, ask the hard questions, and leverage resources like textwall.ai to stay ahead. In the battle for clarity, the outcome will depend not on what your tools summarize—but on how you use them.

Sources

References cited in this article

- Top 5 Text Summarization Tools of 2025 - Metapress (metapress.com)

- Best AI Article Summarizers in 2025 - mapify.so (mapify.so)

- Top 8 Text Summarization Tools in 2025 - Analytics Vidhya (analyticsvidhya.com)

- Information Overload: Why Document Summarization Matters - Doctopus (doctopus.io)

- Information Overload - Wikipedia (en.wikipedia.org)

- NoteAI - AI Summary Assistant (noteai.io)

- AI summarization - Google Cloud (cloud.google.com)

- Exploring Abstractive Summarization (2024) (jcait.biesr.org)

- Abstractive text summarization: State of the art, challenges, and improvements - ScienceDirect (sciencedirect.com)

- Leveraging Long-Context Large Language Models for Multi-Document ... (arxiv.org)

- Document Summarization - IBM (ibm.com)

- AI Summarizer - aidetectors.io (aidetectors.io)

- What is the Best AI Summarizer? - maestrolabs.com (maestrolabs.com)

- Adapted large language models can outperform medical experts - Nature Medicine (nature.com)

- LLM-based summarization: A case study - Anyscale (anyscale.com)

- Top 10 AI Legal Research Tools 2024 - Cimphony (cimphony.ai)

- AI Document Summarizers: A 2024 Revolution - DocumentLLM (documentllm.com)

- Transforming Academic Publishing: Key Trends to Watch in 2024 - Editors Cafe (editorscafe.org)

- Best AI Summarization Tools in 2024 (Compared) - Enago (enago.com)

- The ethical dilemmas of AI - USC Annenberg (annenberg.usc.edu)

- Ethical Challenges and Solutions of Generative AI - MDPI, 2024 (mdpi.com)

- Bias in News Summarization: Measures, Pitfalls and Corpora - ACL Anthology (aclanthology.org)

- Attacks against Abstractive Text Summarization Models - arXiv (arxiv.org)

- Cyberthreats 2024 - infodas (infodas.com)

- Top 14 AI Security Risks in 2024 - SentinelOne (sentinelone.com)

- Top AI Summary Generator for 2025 - disco.co (disco.co)

- Discover the Best Consensus Alternatives for 2025 - Sourcely (sourcely.net)

- Decision Matrix Overview - MasterClass (masterclass.com)

- 10 Best AI Summarizer for Fast and Accurate Insights in 2025 - Convozen (convozen.ai)
